Modular RL infrastructure platform for Unreal Engine 5 environments

Unreal Engine Deep Reinforcement Learning Library

Library and framework for streamlined development of learning agents in Unreal Engine 5

About The Project

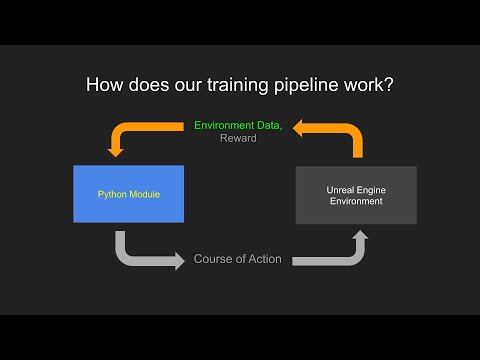

A deep reinforcement learning framework designed specifically for integration with Unreal Engine.

Made for both developers and non-programmers, the framework supports native code alongside Blueprint visual scripting, enabling users to implement learning agents within their Unreal projects regardless of their programming background.

Setup is minimal, so you can get started quickly. The design makes it straightforward to set up training environments and experiment with different reinforcement learning algorithms.

You can find more information and documentation at the GitHub repository wiki. Below is a library overview video demonstrating an implementation of the framework with a basic example.

Built With

Installation

Follow the instructions below to get started.

Prerequisites

Getting Started

Open your terminal and clone the repository to your local machine:

git clone https://github.com/kcccr123/ue-reinforcement-learning.git

The repository is split into an external Python module and an Unreal Editor project plugin.

Instead of cloning, you can download the Unreal plugin and the Python module separately from the GitHub releases.

Unreal Setup

- Copy the entire plugin folder, `UnrealPlugin`, into the Plugins directory of your Unreal project.

If the Plugins folder doesn’t exist, create one in the root of your project. The folder structure of your Unreal project should look like this:

    YourUnrealProject/
    └── Plugins/
        └── UnrealPlugin/

- Enable the plugin inside the Unreal Editor.

Open your Unreal project inside the editor. Navigate to Edit > Plugins. Locate your plugin in the list (it might be under a relevant category such as "Other" or "Installed Plugins"). Check the box to enable the plugin. Restart Unreal Engine.
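If you prefer to script the copy step, the following is a minimal sketch rather than part of the library. The function name `install_plugin` and its parameters are hypothetical; the only details taken from the steps above are the `UnrealPlugin` folder name and the `Plugins` directory at the project root.

```python
import shutil
from pathlib import Path


def install_plugin(repo_root: Path, project_root: Path,
                   plugin_name: str = "UnrealPlugin") -> Path:
    """Copy <repo_root>/<plugin_name> into <project_root>/Plugins/.

    Hypothetical helper for illustration; returns the destination path.
    """
    source = repo_root / plugin_name
    if not source.is_dir():
        raise FileNotFoundError(f"Plugin folder not found: {source}")

    # Create the Plugins folder if the project does not have one yet.
    plugins_dir = project_root / "Plugins"
    plugins_dir.mkdir(exist_ok=True)

    destination = plugins_dir / plugin_name
    # dirs_exist_ok=True (Python 3.8+) allows re-running over an earlier copy.
    shutil.copytree(source, destination, dirs_exist_ok=True)
    return destination
```

Calling `install_plugin(Path("ue-reinforcement-learning"), Path("YourUnrealProject"))` would produce the folder layout shown above, assuming both paths exist. You still need to enable the plugin in the editor afterwards.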

Python Setup

It is generally recommended that you create a virtual environment to manage dependencies, but it is not strictly required.

- In your terminal, `cd` into the `PythonEnv` directory.

- Run the command `pip install -r requirements.txt` to install the required Python dependencies.
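The optional virtual environment can also be created from Python using the standard-library `venv` module. This is a sketch under assumptions: the environment location (for example `PythonEnv/.venv`) and the helper names are illustrative and not something the project prescribes.

```python
import subprocess
import sys
import venv
from pathlib import Path


def venv_python(env_dir: Path) -> Path:
    """Return the interpreter path inside a virtual environment."""
    if sys.platform == "win32":
        return env_dir / "Scripts" / "python.exe"
    return env_dir / "bin" / "python"


def pip_install_command(env_dir: Path, requirements: Path) -> list[str]:
    """Build the pip command that installs a requirements file into env_dir."""
    return [str(venv_python(env_dir)), "-m", "pip", "install",
            "-r", str(requirements)]


def setup_environment(env_dir: Path, requirements: Path) -> None:
    """Create the environment, then install the listed dependencies."""
    venv.create(env_dir, with_pip=True)  # with_pip=True bootstraps pip
    subprocess.run(pip_install_command(env_dir, requirements), check=True)
```

Run from the repository root, `setup_environment(Path("PythonEnv/.venv"), Path("PythonEnv/requirements.txt"))` would be roughly equivalent to creating a virtual environment by hand and then running the `pip install` step above inside it.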

Contact

Feel free to contact me at:

@Kevin Chen - kevinz.chen@mail.utoronto.ca

@Gary Guo - garyz.guo@mail.utoronto.ca

File details

Details for the file uerl-0.1.0.tar.gz.

File metadata

- Download URL: uerl-0.1.0.tar.gz

- Upload date:

- Size: 4.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c34ea997d0e07d18b9cb75c176e2886139a9b01a7ab0ed562c8540f1a1ce60c8 |
| MD5 | a83eb72bb750dcf342fb7590e84061dc |
| BLAKE2b-256 | ad95c4e088dffe12cfa7f62609f239acf3bbc354dd5847efddc22f3217c1ffb2 |
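To check a downloaded copy of `uerl-0.1.0.tar.gz` against the SHA256 digest listed above, a short script along these lines should work. It is a sketch, not an official verification tool; the function names and the local file path are assumptions.

```python
import hashlib
from pathlib import Path

# SHA256 digest published for uerl-0.1.0.tar.gz in the table above.
EXPECTED_SHA256 = "c34ea997d0e07d18b9cb75c176e2886139a9b01a7ab0ed562c8540f1a1ce60c8"


def sha256_of(path: Path, chunk_size: int = 65536) -> str:
    """Return the hex SHA256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_digest(path: Path, expected: str = EXPECTED_SHA256) -> bool:
    """True if the file's SHA256 digest equals the expected hex string."""
    return sha256_of(path) == expected.lower()
```

For example, `matches_digest(Path("uerl-0.1.0.tar.gz"))` returns `True` only if the file in the current directory has the published digest.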

Provenance

The following attestation bundles were made for uerl-0.1.0.tar.gz:

Publisher: publish.yml on kcccr123/ue-reinforcement-learning

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: uerl-0.1.0.tar.gz
  - Subject digest: c34ea997d0e07d18b9cb75c176e2886139a9b01a7ab0ed562c8540f1a1ce60c8
- Sigstore transparency entry: 1392549579
- Sigstore integration time:
- Permalink: kcccr123/ue-reinforcement-learning@c49c59db847998ea20be99529690ba340077c3eb
- Branch / Tag: refs/heads/main
- Owner: https://github.com/kcccr123
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@c49c59db847998ea20be99529690ba340077c3eb
- Trigger Event: push

File details

Details for the file uerl-0.1.0-py3-none-any.whl.

File metadata

- Download URL: uerl-0.1.0-py3-none-any.whl

- Upload date:

- Size: 5.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 8e442dd786ac2f8a250a6b43838a96af5b7ea2647389b0efa590c5dc2e0a266f |
| MD5 | 9307b2b02724074446c2f7503045166f |
| BLAKE2b-256 | 5900a560a56d46a8d397d3df6b3eade48a50a56825177ed713cc1dcd68b11f41 |

Provenance

The following attestation bundles were made for uerl-0.1.0-py3-none-any.whl:

Publisher: publish.yml on kcccr123/ue-reinforcement-learning

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: uerl-0.1.0-py3-none-any.whl
  - Subject digest: 8e442dd786ac2f8a250a6b43838a96af5b7ea2647389b0efa590c5dc2e0a266f
- Sigstore transparency entry: 1392549582
- Sigstore integration time:
- Permalink: kcccr123/ue-reinforcement-learning@c49c59db847998ea20be99529690ba340077c3eb
- Branch / Tag: refs/heads/main
- Owner: https://github.com/kcccr123
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@c49c59db847998ea20be99529690ba340077c3eb
- Trigger Event: push