Extract hardcoded subtitles from videos using machine learning


videocr

Extract hardcoded (burned-in) subtitles from videos using the Tesseract OCR engine with Python.

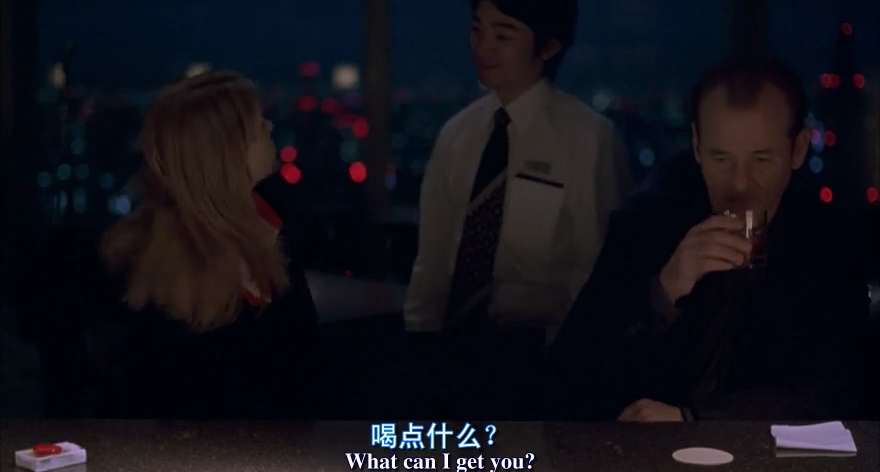

Input a video with hardcoded subtitles:

    # example.py
    from videocr import get_subtitles

    if __name__ == '__main__':  # This check is mandatory for Windows.
        print(get_subtitles('video.mp4', lang='chi_sim+eng', sim_threshold=70, conf_threshold=65))

$ python3 example.py

Output:

    0
    00:00:01,042 --> 00:00:02,877
    喝 点 什么 ?
    What can I get you?

    1
    00:00:03,044 --> 00:00:05,463
    我 不 知道
    Um, I'm not sure.

    2
    00:00:08,091 --> 00:00:10,635
    休闲 时 光 …
    For relaxing times, make it...

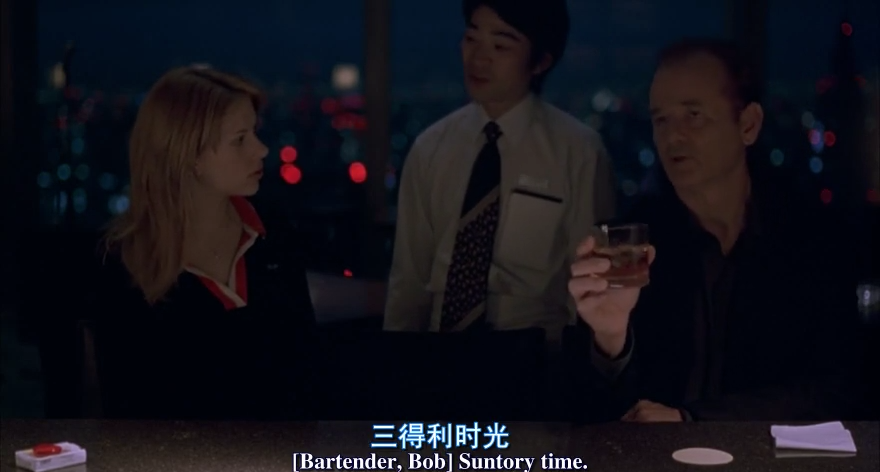

    3
    00:00:10,677 --> 00:00:12,595
    三 得 利 时 光
    Bartender, Bob Suntory time.

    4
    00:00:14,472 --> 00:00:17,142
    我 要 一 杯 伏特 加
    Un, I'll have a vodka tonic.

    5
    00:00:18,059 --> 00:00:19,019
    谢谢
    Laughs Thanks.
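If you want to work with individual cues rather than the raw string, the SRT text can be split apart with a few lines of Python. This is a minimal sketch, not part of videocr: the `Cue` type and `parse_srt` function are hypothetical names, and it assumes cues are separated by blank lines as in standard SRT.

```python
import re
from typing import List, NamedTuple


class Cue(NamedTuple):
    index: int   # cue number as printed in the SRT text
    start: str   # start timestamp, e.g. '00:00:01,042'
    end: str     # end timestamp
    text: str    # subtitle text, possibly spanning several lines


def parse_srt(srt: str) -> List[Cue]:
    """Split an SRT string into cues (hypothetical helper, not a videocr API)."""
    cues = []
    # Assumption: cues are separated by one or more blank lines.
    for block in re.split(r'\n\s*\n', srt.strip()):
        lines = block.splitlines()
        # Skip anything that does not look like "index / timing / text".
        if len(lines) < 2 or '-->' not in lines[1]:
            continue
        start, end = (part.strip() for part in lines[1].split('-->'))
        cues.append(Cue(int(lines[0]), start, end, '\n'.join(lines[2:])))
    return cues
```

Calling `parse_srt(get_subtitles('video.mp4'))` would then give a list of cues whose `text` field can be post-processed, for example to keep only one of the two languages.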

Performance

The OCR process is CPU intensive. It takes 3 minutes on my dual-core laptop to extract subtitles from a 20-second video. More CPU cores will make it faster.

Installation

- Install Tesseract and make sure it is in your `$PATH`
- `$ pip install videocr`
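To confirm the first step before running a long extraction, you can check from Python whether the Tesseract binary is reachable. This is a small sketch using only the standard library; `find_tesseract` is a hypothetical helper name, and it assumes the executable is called `tesseract`.

```python
import shutil
from typing import Optional


def find_tesseract(executable: str = "tesseract") -> Optional[str]:
    """Return the full path of the executable, or None if it is not on PATH."""
    return shutil.which(executable)
```

A return value of `None` suggests that Tesseract is either not installed or not on your `$PATH`.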

API

- Return subtitle string in SRT format: `get_subtitles(video_path: str, lang='eng', time_start='0:00', time_end='', conf_threshold=65, sim_threshold=90, use_fullframe=False)`

- Write subtitles to `file_path`: `save_subtitles_to_file(video_path: str, file_path='subtitle.srt', lang='eng', time_start='0:00', time_end='', conf_threshold=65, sim_threshold=90, use_fullframe=False)`
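As an illustration of the second function, the sketch below writes the subtitles of a single clip to a file. The wrapper name `extract_clip_subtitles` is hypothetical, and the `'0:30'` end time assumes that `time_end` accepts the same `m:ss` form as the default `time_start='0:00'`.

```python
from pathlib import Path


def extract_clip_subtitles(video_path, srt_path="subtitle.srt",
                           start="0:00", end="0:30"):
    """Write subtitles for one clip of a video to an SRT file (sketch)."""
    # Fail early with a clear message before any OCR work starts.
    if not Path(video_path).is_file():
        raise FileNotFoundError(f"No such video file: {video_path}")

    # Imported lazily so the path check above works without videocr installed.
    from videocr import save_subtitles_to_file

    save_subtitles_to_file(
        video_path,
        file_path=srt_path,
        lang="eng",
        time_start=start,
        time_end=end,  # assumption: same 'm:ss' form as the default '0:00'
        conf_threshold=65,
        sim_threshold=90,
        use_fullframe=False,
    )
    return srt_path
```

On Windows, call the wrapper from inside an `if __name__ == '__main__':` block, as in the example at the top of this page.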

Parameters

- `lang`

  The language of the subtitles. You can extract subtitles in almost any language. All Tesseract language codes (e.g. `'eng'` for English) and all Tesseract script names (e.g. `'HanS'` for simplified Chinese) are supported.

  Note that you can use more than one language, e.g. `lang='hin+eng'` for Hindi and English together.

  Language files will be automatically downloaded to your `~/tessdata`. You can read more about Tesseract language data files on their wiki page.

- `conf_threshold`

  Confidence threshold for word predictions. Words with lower confidence than this value will be discarded. The default value `65` is fine for most cases.

  Make it closer to 0 if you get too few words in each line, or make it closer to 100 if there are too many excess words in each line.

- `sim_threshold`

  Similarity threshold for subtitle lines. Subtitle lines with larger Levenshtein ratios than this threshold will be merged together. The default value `90` is fine for most cases.

  Make it closer to 0 if you get too many duplicated subtitle lines, or make it closer to 100 if you get too few subtitle lines.

- `time_start` and `time_end`

  Extract subtitles from only a clip of the video. The subtitle timestamps are still calculated according to the full video length.

- `use_fullframe`

  By default, only the bottom half of each frame is used for OCR. You can explicitly use the full frame if your subtitles are not within the bottom half of each frame.
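To build some intuition for how `conf_threshold` and `sim_threshold` behave, here is a rough sketch of the two filtering steps. It is not videocr's actual implementation: `filter_words`, `similarity` and `merge_similar` are hypothetical names, the similarity score uses `difflib.SequenceMatcher` from the standard library as a stand-in for a Levenshtein ratio, and keeping the first of two similar lines is an arbitrary choice.

```python
from difflib import SequenceMatcher


def filter_words(words, conf_threshold=65):
    """Drop words whose OCR confidence is lower than conf_threshold.

    `words` is a list of (text, confidence) pairs, confidence in 0..100.
    """
    return [text for text, conf in words if conf >= conf_threshold]


def similarity(a, b):
    """Similarity score in 0..100 (stand-in for a Levenshtein ratio)."""
    return 100 * SequenceMatcher(None, a, b).ratio()


def merge_similar(lines, sim_threshold=90):
    """Collapse consecutive lines that are more similar than sim_threshold."""
    merged = []
    for line in lines:
        if merged and similarity(merged[-1], line) > sim_threshold:
            continue  # treated as a duplicate of the previous line
        merged.append(line)
    return merged
```

With the default threshold of 90, `"What can I get you?"` and `"What can I get you"` score about 97 and collapse into one line. Raising `sim_threshold` to 99 keeps them as two separate lines, which matches the advice above: a higher value yields more subtitle lines, a lower value yields fewer duplicates.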


Download files


Source Distribution

File details

Details for the file videocr-0.1.6.tar.gz.

File metadata

- Download URL: videocr-0.1.6.tar.gz

- Upload date:

- Size: 6.5 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.1.1 pkginfo/1.5.0.1 requests/2.22.0 setuptools/41.6.0 requests-toolbelt/0.9.1 tqdm/4.40.2 CPython/3.7.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c3484f7d42b8ba7e4902300feefc0e02e2a5b19fb3bfab05576bc3fd197791b2 |
| MD5 | 396039d7e04004bd84eb24779358d6b5 |
| BLAKE2b-256 | cbc4bce8bbe0aa1cfac2f00f72f9314b7f57700c339f26d41dfc8697601d91bf |