VisionZip: Longer is Better but Not Necessary in Vision Language Models


TABLE OF CONTENTS

- News

- Highlights

- Video

- Demo

- Installation

- Quick Start

- Evaluation

- Examples

- Citation

- Acknowledgement

- License

News

- [2024.12.05] We added a Usage-Video, providing a step-by-step guide on how to use the demo.

- [2024.12.05] We added a new Demo-Chat, where users can manually select visual tokens to send to the LLM and observe how different visual tokens affect the final response. We believe this will further enhance the analysis of VLM interpretability.

- [2024.11.30] We released the paper and this GitHub repo, including code for LLaVA.

VisionZip: Longer is Better but Not Necessary in Vision Language Models [Paper]

Senqiao Yang, Yukang Chen, Zhuotao Tian, Chengyao Wang, Jingyao Li, Bei Yu, Jiaya Jia

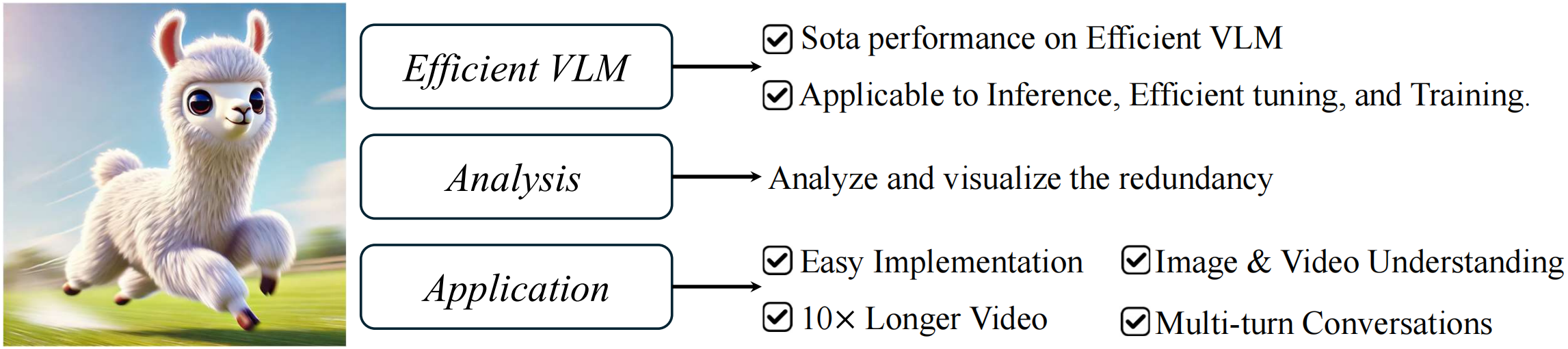

Highlights

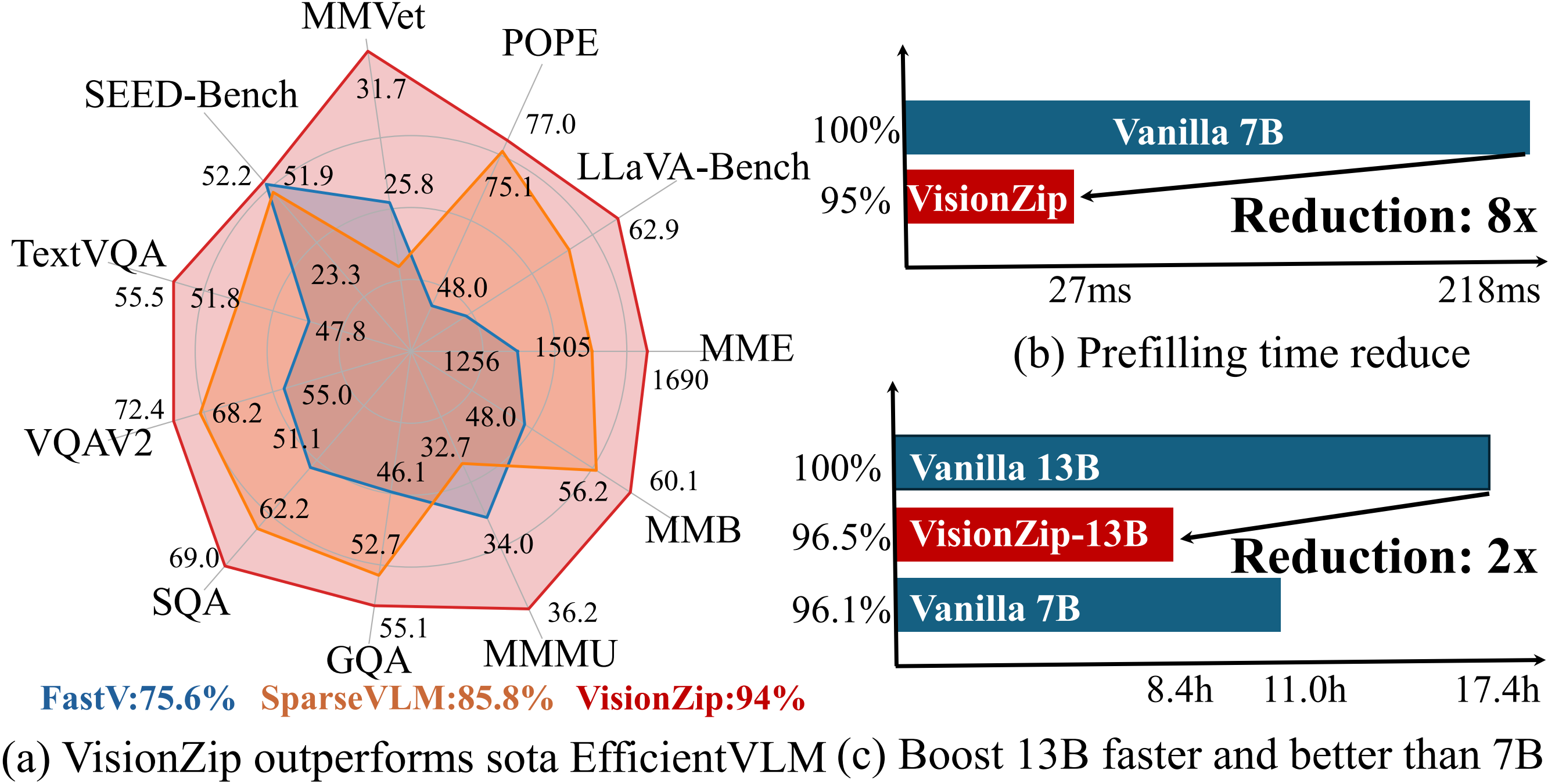

- Our VisionZip achieves state-of-the-art performance among efficient VLM methods. By retaining only 10% of visual tokens, it achieves nearly 95% of the performance in training-free mode.

- VisionZip can be applied during the inference stage (without incurring any additional training cost), the efficient tuning stage (to achieve better results), and the training stage (almost no performance degradation, saving 2× memory and 2× training time).

- VisionZip significantly reduces the prefilling time and the total inference time (with KV cache enabled).

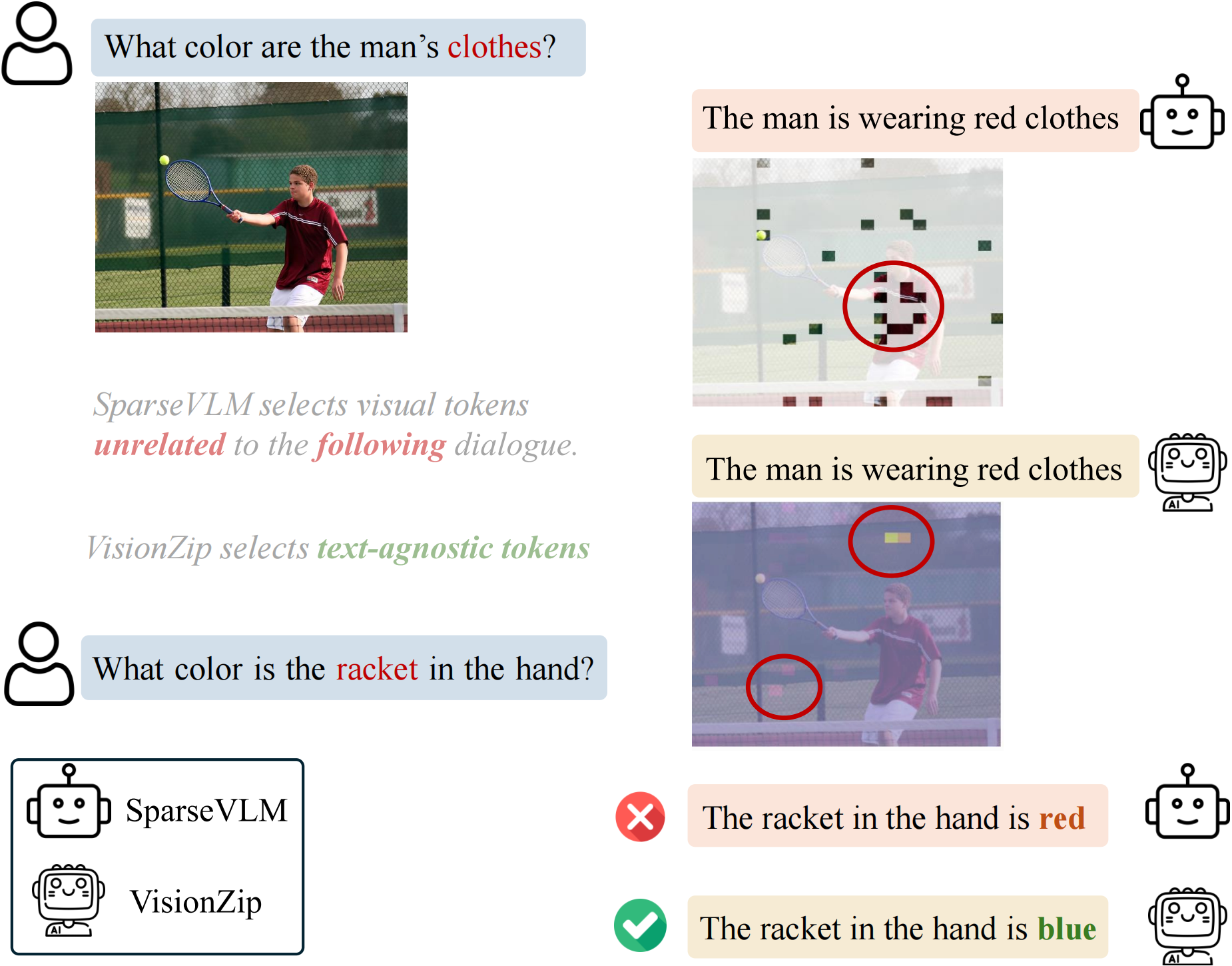

- Why does this simple, text-agnostic method outperform text-relevant methods? We conduct an in-depth analysis in the paper and provide a demo to visualize these findings.

- Since VisionZip is a text-agnostic method that reduces visual tokens before they are fed into the LLM, it is compatible with existing LLM acceleration algorithms and applies to any task that a vanilla VLM can perform, such as multi-turn conversations.
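The README does not spell out how the retained tokens are chosen, so the following is only a rough sketch of one text-agnostic scheme in the spirit of the `dominant` and `contextual` parameters used in Quick Start: keep the highest-scoring tokens, then average the remaining ones into a few merged tokens. The per-token scores, the even-stride choice of merge targets, and the dot-product similarity are all assumptions made for illustration, and `compress_tokens` is a hypothetical function; the `visionzip` package's own implementation may differ.

```python
from typing import List, Sequence

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    """Plain dot product, used here as an (assumed) similarity measure."""
    return sum(x * y for x, y in zip(a, b))


def compress_tokens(
    tokens: List[Vector],
    scores: List[float],
    dominant: int,
    contextual: int,
) -> List[Vector]:
    """Keep `dominant` top-scoring tokens and merge the rest into `contextual` tokens.

    No text prompt is consulted, so the result is text-agnostic.
    """
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    if dominant + contextual > len(tokens):
        raise ValueError("token budget exceeds the number of tokens")

    # 1. Dominant tokens: highest scores, restored to their original order.
    ranked = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)
    keep = sorted(ranked[:dominant])
    kept = [tokens[i] for i in keep]

    # 2. Contextual tokens: pick evenly spaced targets among the leftovers,
    #    assign every leftover to its most similar target, and average each group.
    keep_set = set(keep)
    rest = [tokens[i] for i in range(len(tokens)) if i not in keep_set]
    if contextual == 0 or not rest:
        return kept
    step = len(rest) / contextual
    targets = [rest[int(j * step)] for j in range(contextual)]
    groups: List[List[Vector]] = [[] for _ in targets]
    for tok in rest:
        best = max(range(contextual), key=lambda j: dot(tok, targets[j]))
        groups[best].append(tok)
    merged = [
        [sum(col) / len(group) for col in zip(*group)] if group else list(target)
        for group, target in zip(groups, targets)
    ]
    return kept + merged


# Toy input: 8 two-dimensional tokens with made-up scores.
tokens = [[float(i), 1.0] for i in range(8)]
scores = [0.1, 0.9, 0.2, 0.8, 0.3, 0.7, 0.4, 0.6]
out = compress_tokens(tokens, scores, dominant=3, contextual=2)
print(len(out), "tokens kept out of", len(tokens))
```

Because the selection never looks at the question, the same compressed tokens can be reused for any prompt about the same image.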

Video

Demo

Speed Improvement

The input video is about the Titanic, and the question is, "What’s the video talking about?"

Note that the left side shows the vanilla model, which encodes only 16 frames, while the right side shows VisionZip, which encodes 32 frames yet is still twice as fast as the vanilla model.

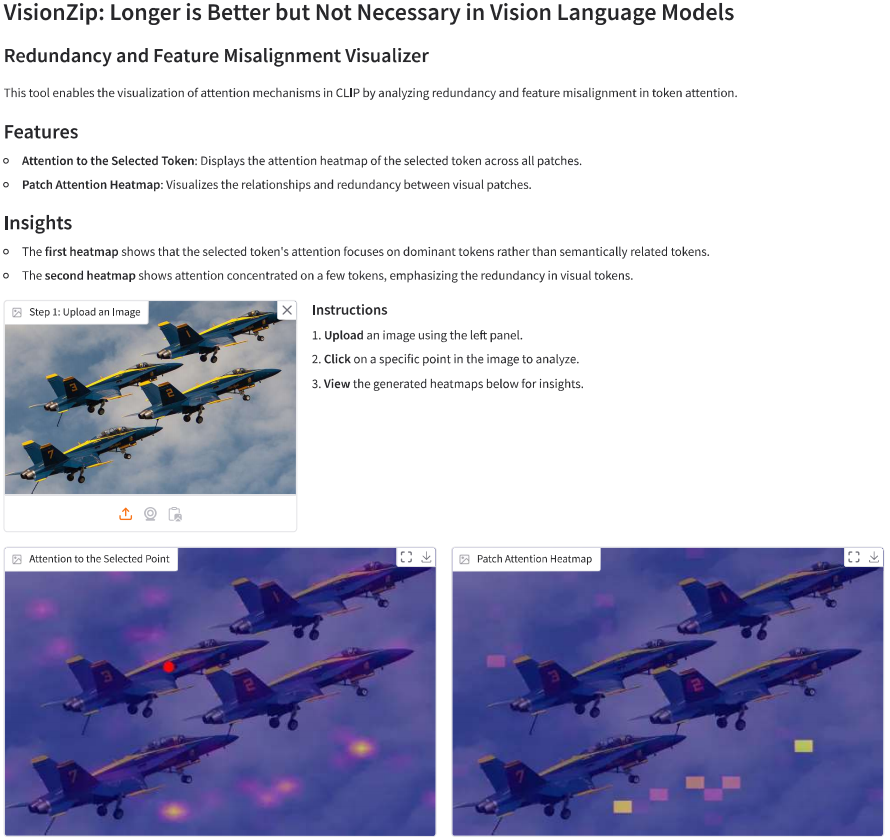

Visualize Redundancy and Misalignment

Explore the visual redundancy and feature misalignment in the above Demo. To run it locally, use the following command:

python gradio_demo.py

Observe How Different Visual Tokens Impact the Final Response

This Demo-Chat lets users manually select which visual tokens to send to the LLM and observe how different visual tokens affect the final response.

Installation

Our code is easy to use.

- Install the LLaVA environment.

- For regular use, install the package from PyPI by running the following command:

pip install visionzip

For development, install the package by cloning the repository and running the following commands:

git clone https://github.com/dvlab-research/VisionZip
cd VisionZip
pip install -e .

Quick Start

from llava.model.builder import load_pretrained_model
from llava.mm_utils import get_model_name_from_path
from llava.eval.run_llava import eval_model
from visionzip import visionzip

model_path = "liuhaotian/llava-v1.5-7b"

tokenizer, model, image_processor, context_len = load_pretrained_model(
    model_path=model_path,
    model_base=None,
    model_name=get_model_name_from_path(model_path)
)

# VisionZip retains 54 dominant tokens and 10 contextual tokens
model = visionzip(model, dominant=54, contextual=10)
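To put the `dominant=54, contextual=10` setting in perspective, the short calculation below compares it with the uncompressed visual sequence. It assumes that the LLaVA-1.5 vision encoder (CLIP ViT-L/14 at 336 px) produces a 24 × 24 grid of 576 patch tokens per image; that figure is not stated in this README, so adjust it for other backbones. `retention_ratio` is a hypothetical helper, not part of the `visionzip` package.

```python
def retention_ratio(dominant: int, contextual: int, total_tokens: int) -> float:
    """Fraction of the original visual tokens kept after compression."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    kept = dominant + contextual
    if kept > total_tokens:
        raise ValueError("cannot keep more tokens than the encoder produces")
    return kept / total_tokens


# Assumption: 336 px input with 14 px patches -> 24 x 24 = 576 patch tokens.
TOTAL_TOKENS = (336 // 14) ** 2

ratio = retention_ratio(dominant=54, contextual=10, total_tokens=TOTAL_TOKENS)
print(f"kept {54 + 10} of {TOTAL_TOKENS} tokens ({ratio:.1%})")
```

Under that assumption the setting keeps about one ninth of the visual tokens, in line with the roughly 10% quoted in Highlights.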

Evaluation

The evaluation code follows the structure of LLaVA or Lmms-Eval. After loading the model, simply add two lines as shown below:

# Load LLaVA Model (code from llava.eval.model_vqa_loader)
tokenizer, model, image_processor, context_len = load_pretrained_model(model_path, args.model_base, model_name)

# Add VisionZip
from visionzip import visionzip
model = visionzip(model, dominant=54, contextual=10)
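When comparing several token budgets in an evaluation run, it can be convenient to derive the `dominant` and `contextual` values from a single total. The helper below is a hypothetical convenience, not part of `visionzip`; it simply assumes that the 54:10 split from the snippet above is kept for other totals, which this README does not claim.

```python
from typing import Tuple


def split_budget(total: int, dominant_share: float = 54 / 64) -> Tuple[int, int]:
    """Split a total visual-token budget into (dominant, contextual) counts."""
    if total < 0:
        raise ValueError("total must be non-negative")
    if not 0.0 <= dominant_share <= 1.0:
        raise ValueError("dominant_share must lie in [0, 1]")
    dominant = round(total * dominant_share)
    return dominant, total - dominant


for total in (64, 128, 192):
    dominant, contextual = split_budget(total)
    # With a freshly loaded LLaVA model, one would then call:
    #   model = visionzip(model, dominant=dominant, contextual=contextual)
    print(total, dominant, contextual)
```

Reloading the model for each budget is the cautious choice here, since the README does not say whether `visionzip` can be applied to the same model more than once.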

Examples

Multi-turn Conversations

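Because VisionZip selects visual tokens independently of the text prompt, the same compressed tokens remain valid for every turn, which makes it better suited to multi-turn dialogue than text-dependent methods.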

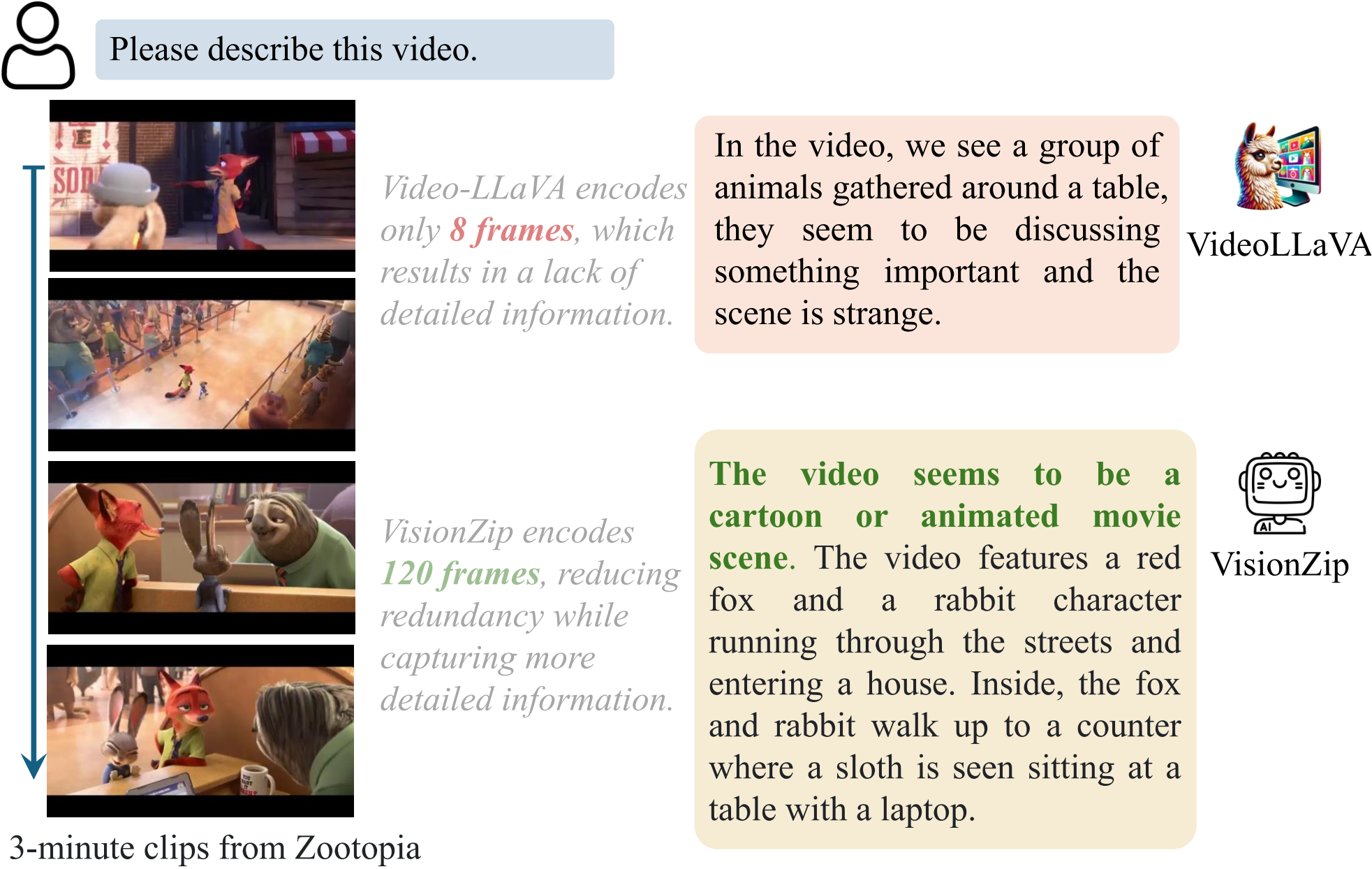

Longer Videos with More Frames

VisionZip reduces the number of visual tokens per frame, allowing more frames to be processed. This improves the model's ability to understand longer videos.
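The trade-off can be made concrete with a little arithmetic: under a fixed budget of visual tokens, fewer tokens per frame leave room for more frames. The numbers below (a 4,096-token budget, 256 tokens per uncompressed frame, 128 after compression) are purely illustrative assumptions rather than measurements from this project, and `max_frames` is a hypothetical helper.

```python
def max_frames(token_budget: int, tokens_per_frame: int) -> int:
    """Number of whole frames that fit into a fixed visual-token budget."""
    if tokens_per_frame <= 0:
        raise ValueError("tokens_per_frame must be positive")
    return token_budget // tokens_per_frame


BUDGET = 4096  # hypothetical visual-token budget
vanilla = max_frames(BUDGET, tokens_per_frame=256)      # assumed uncompressed cost
compressed = max_frames(BUDGET, tokens_per_frame=128)   # assumed compressed cost
print(f"vanilla: {vanilla} frames, compressed: {compressed} frames")
```

Halving the per-frame cost doubles the number of frames that fit, which is the effect this section describes.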

Citation

If you find this project useful in your research, please consider citing:

@article{yang2024visionzip,
  title={VisionZip: Longer is Better but Not Necessary in Vision Language Models},
  author={Yang, Senqiao and Chen, Yukang and Tian, Zhuotao and Wang, Chengyao and Li, Jingyao and Yu, Bei and Jia, Jiaya},
  journal={arXiv preprint arXiv:2412.04467},
  year={2024}
}

Acknowledgement

- This work is built upon LLaVA, mini-Gemini, Lmms-Eval, and Video-LLaVA. We thank them for their excellent open-source contributions.

- We also thank StreamingLLM, FastV, SparseVLM, and others for their contributions, which have provided valuable insights.

License

- VisionZip is licensed under the Apache License 2.0.


Download files


File details

Details for the file visionzip-0.1.3.tar.gz.

File metadata

- Download URL: visionzip-0.1.3.tar.gz

- Upload date:

- Size: 8.2 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.1 CPython/3.10.15

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1a289a849d1b589ee1c64b218eb56c067998ad3be60a991434d1b2b1ed13f36c |
| MD5 | 39b5c65a27fd09809a65fe53b0ef98e5 |
| BLAKE2b-256 | 74716980211f90cbbb8d6c6fb42d6a8fb81ecdebf636b93d8064b28d7f666224 |
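A downloaded archive can be checked against the SHA256 digest listed above with Python's standard `hashlib` module. This is a minimal sketch; the helper names `sha256_of` and `matches` and the local file path in the commented example are hypothetical.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path: Path, expected_hex: str) -> bool:
    """Compare a file's SHA256 digest with the expected hex string."""
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())


# Example (assumes the archive was saved in the current directory):
# matches(
#     Path("visionzip-0.1.3.tar.gz"),
#     "1a289a849d1b589ee1c64b218eb56c067998ad3be60a991434d1b2b1ed13f36c",
# )
```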

File details

Details for the file visionzip-0.1.3-py3-none-any.whl.

File metadata

- Download URL: visionzip-0.1.3-py3-none-any.whl

- Upload date:

- Size: 8.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.1 CPython/3.10.15

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 76e8a4ea0e3d1dd522e5f9aa5d7ede2ef6271fc0cfebee96f11fe99d2e8dbfc1 |
| MD5 | 0a7f7aaf1040ef0c7e8d54ca5ddd5bfc |
| BLAKE2b-256 | 854ff0c1a691ce2f8c7a84ebf5e7da8b29e72caf03d967d907fa0b9eedf02a6a |