Visual Element-based Saliency Toolkit for multimodal webpage saliency extraction and scoring.

Project description

V.E.S.T.

V.E.S.T.

Read Me First

Welcome to the Visual Element-based Saliency Toolkit (V.E.S.T.)

High-level summary: Built on a 2 TB corpus of official tourism websites, this toolkit allows researchers to seamlessly extract and measure attraction priorities, portfolio diversification, framing narratives (e.g., authenticity, luxury), and multimodal coherence. It assists in building quantitative communication profiles that map how states market national branding online.

Core Mission: The primary goal is to assess the branding of a webpage and programmatically identify the kinds of topics and narratives that are most prevalent on it.

About the Package

Varieties of Tourism: Soft Power and National Branding in Official Tourism Portals

This project uses a 2 TB corpus of official tourism websites from roughly 100 countries to measure how states communicate tourism policy and national branding online. We extract which attractions countries prioritize, how concentrated or diversified their promoted portfolios are, and how they frame similar attractions through recurring narratives such as authenticity, luxury, safety, modernity, spirituality, and sustainability. We also evaluate multimodal coherence by testing whether the images countries publish support the claims in surrounding text or instead rely on generic visuals that weaken or contradict the stated message. To connect branding to politics without making causal claims, we link each country’s communication profile to political context, including regime type and governing ideology, and examine how these factors correlate with differences in attraction portfolios and framing strategies. The result is a scalable, interpretable set of indices and cross country comparisons that map what countries promote, how they describe it, and how politics relates to these choices.

Key Features Highlights

- Automated Web Crawling & Archiving: Crawl domains natively from

.txtlists, preserving structures and taking high-quality full-page screenshots. - Topological Graph Generation: Automatically map the structure of crawled domains as directed edge graphs serialized into GraphML format.

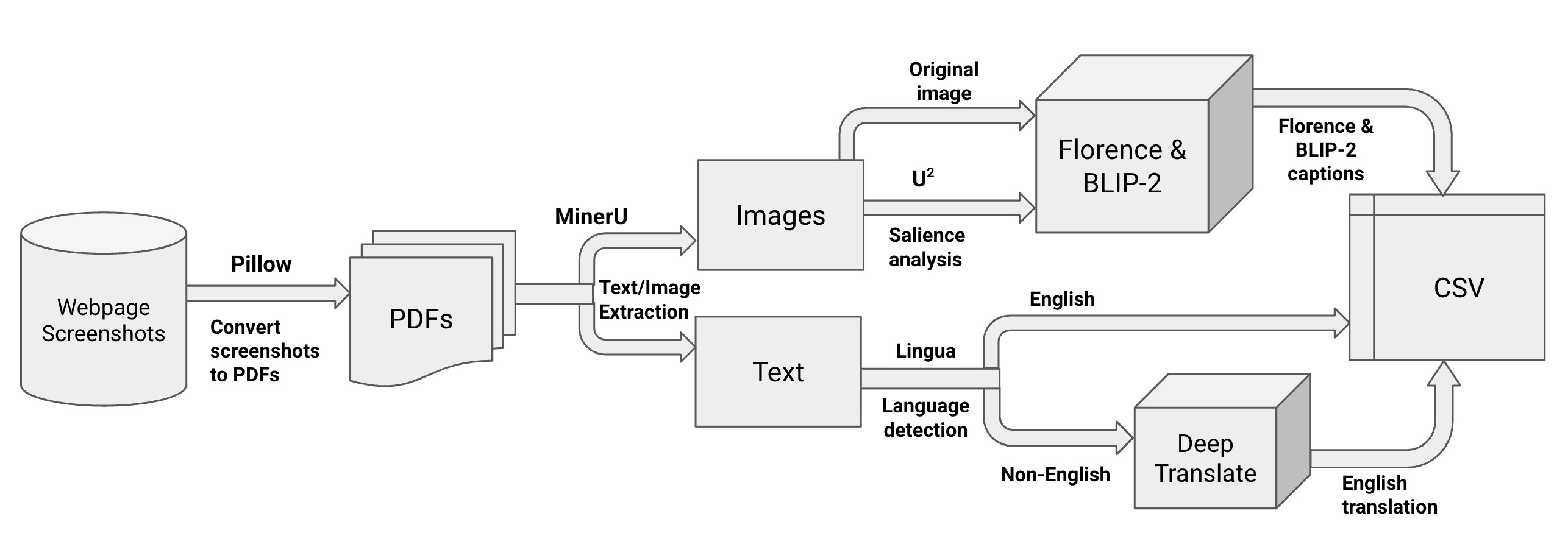

- Multimodal Content Extraction: Run a customizable, locally hosted image-to-text pipeline combining MinerU structuring, U2-Net saliency detection, and a choice of modern large vision models (e.g., FLORENCE-2, BLIP-2) to generate structured multimodal CSV datasets.

- Element Importance Scoring: Compute quantitative assessments of visual and textual elements using our bespoke VoT Formula.

Table of Contents

- Installation

- Architecture & The VoT Formula

- Quick Start Guide

- Implemented Tools & Supported Models

- Usage Notes

Installation

This project leverages deep learning for computer vision and linguistics, requiring a robust environment setup. We recommend using Conda to manage your dependencies.

Setting up your Conda Environment

We will walk through setting up a dedicated workspace (vest), modeled after the project's internal environment:

- Create the virtual environment:

conda create -n vest python=3.10 -y

- Activate the environment:

conda activate vest

- Install Required Python Dependencies (aligned with

pyproject.toml; adjusttorchinstall for your hardware):pip install torch torchvision pip install transformers pillow deep-translator lingua-language-detector beautifulsoup4 gdown networkx numpy opencv-python pandas requests mineru playwright

- Optional: Install MinerU Extra Dependencies:

Use this if you want the full MinerU extras stack in your environment.

pip install --upgrade pip pip install uv uv pip install -U "mineru[all]"

(When formally published, you will simply use pip install vest for the final module layer).

Architecture & The VoT Formula

Pipeline Architecture

The VoT Formula

Once elements are extracted, structured, captioned, and translated, they reflect specific themes and visual real estate on the host sites. To establish "what matters most" on any given parsed page, the toolkit uses the VoT Formula:

Importance = weight_1(size_of_content) + weight_2(coordinates_on_page) + weight_3(host_webpage_importance)

- size_of_content: The raw pixel area the text or image occupies on the screen.

- coordinates_on_page: Positional penalty/bonus (e.g., elements at the top coordinate space matter more).

- host_webpage_importance: A multiplier reflecting the domain graph's PageRank or explicitly defined weight of the host domain.

Quick Start Guide

1. Generate Site Graphs from a URL List

generate-site-graphs seeds.txt --output-folder site-graphs

seeds.txt should contain one website per line.

2. Run Preprocessing Independently

preprocess-folder data/raw data/interim

3. Run Webpage Element Extraction Independently

extract-webpage-elements data/interim data/interim

4. Run Captioning and Translation Independently

process-webpage-elements \

data/interim \

data/processed \

--model florence \

--hf-token "$HF_TOKEN" \

--generate-salient-image no \

--translate-to-eng yes

5. Rank Webpages (PageRank) Independently

rank-webpages site-graphs/visitqatar_com.graphml data/processed

This creates data/processed/visitqatar_com.csv with columns:

webpage_namerank

6. Score Webpages Independently

score-webpages \

0.5 0.3 0.2 \

data/processed/webpage_elements_captions.csv \

data/processed/visitqatar_com.csv \

data/processed/webpage_elements_scored.csv

7. Run the Entire Pipeline in One Command

web-saliency \

--raw-files-path data/raw \

--model florence \

--generate-salient-image no \

--translate-to-eng yes \

--output-csv-name webpage_elements_captions.csv

Implemented Tools & Supported Models

| Type | Library / Model | Purpose |

|---|---|---|

| Crawling | Playwright, Requests | Archiving and rendering JavaScript-heavy pages |

| Topology | NetworkX | Parsing links into a directed GraphML object |

| Structuring | MinerU | Bounding box generation and modality classification |

| Saliency | U2-Net | "Soft dimming" background elements prior to captioning |

| Captioning | BLIP-2, Florence-2 | Vision-Language Models to summarize visual context |

| NLP | Lingua, Google Translate | Detecting languages and providing English homogenization |

Usage Notes

- Hugging Face Token: If you plan to use gated models like BLIP-2 (or want faster downloads), you may need to export a Hugging Face API token:

export HF_TOKEN="your_token". - GPU Acceleration: MinerU, Florence-2, and BLIP-2 all highly benefit from CUDA (NVIDIA GPUs) or MPS (Apple Silicon). When available, the pipeline automatically routes tensor processing to these accelerators.

- Data Preprocessing: Place directories containing the webpages into

data/raw. Make sure to structure folders cleanly (e.g.,Country/Webpage/dimensions/image.jpg).

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file web_vest-0.1.0.tar.gz.

File metadata

- Download URL: web_vest-0.1.0.tar.gz

- Upload date:

- Size: 1.6 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

9c6bc7e344aa5c1338df73d128990c681e17e957b3e1ce3af326e5ea9506d052

|

|

| MD5 |

8effd76ef8624ceb5f66e1b1dcf4324f

|

|

| BLAKE2b-256 |

f35e170696780316b54c44be16765ff812148d7cec0ad0895b980db9db5eb14e

|

File details

Details for the file web_vest-0.1.0-py3-none-any.whl.

File metadata

- Download URL: web_vest-0.1.0-py3-none-any.whl

- Upload date:

- Size: 1.6 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

490e7e2201ad765e531bb923cf0913d3e4b2d66d8951b59c018b9b52912eff12

|

|

| MD5 |

154e40610b48d257ce77a7066ee96395

|

|

| BLAKE2b-256 |

86ef381b51913e07ab858a7bf13a494b9ee7034282a3e0efa8fcbaf64bfac547

|