Transparent, drop-in compression for Python's pickle — smaller files, same API

zpickle

zpickle adds high-performance compression to your serialized Python objects using multiple state-of-the-art algorithms without changing how you work with pickle.

    # Replace this:
    import pickle

    # With this:
    import zpickle as pickle

    # Everything else stays the same!

Features

- Drop-in replacement for the standard pickle module

- Transparent compression: everything happens automatically

- Multiple algorithms: choose zstd, brotli, zlib, or lzma (powered by compress_utils)

- Configure once, use everywhere: set global defaults for your entire app

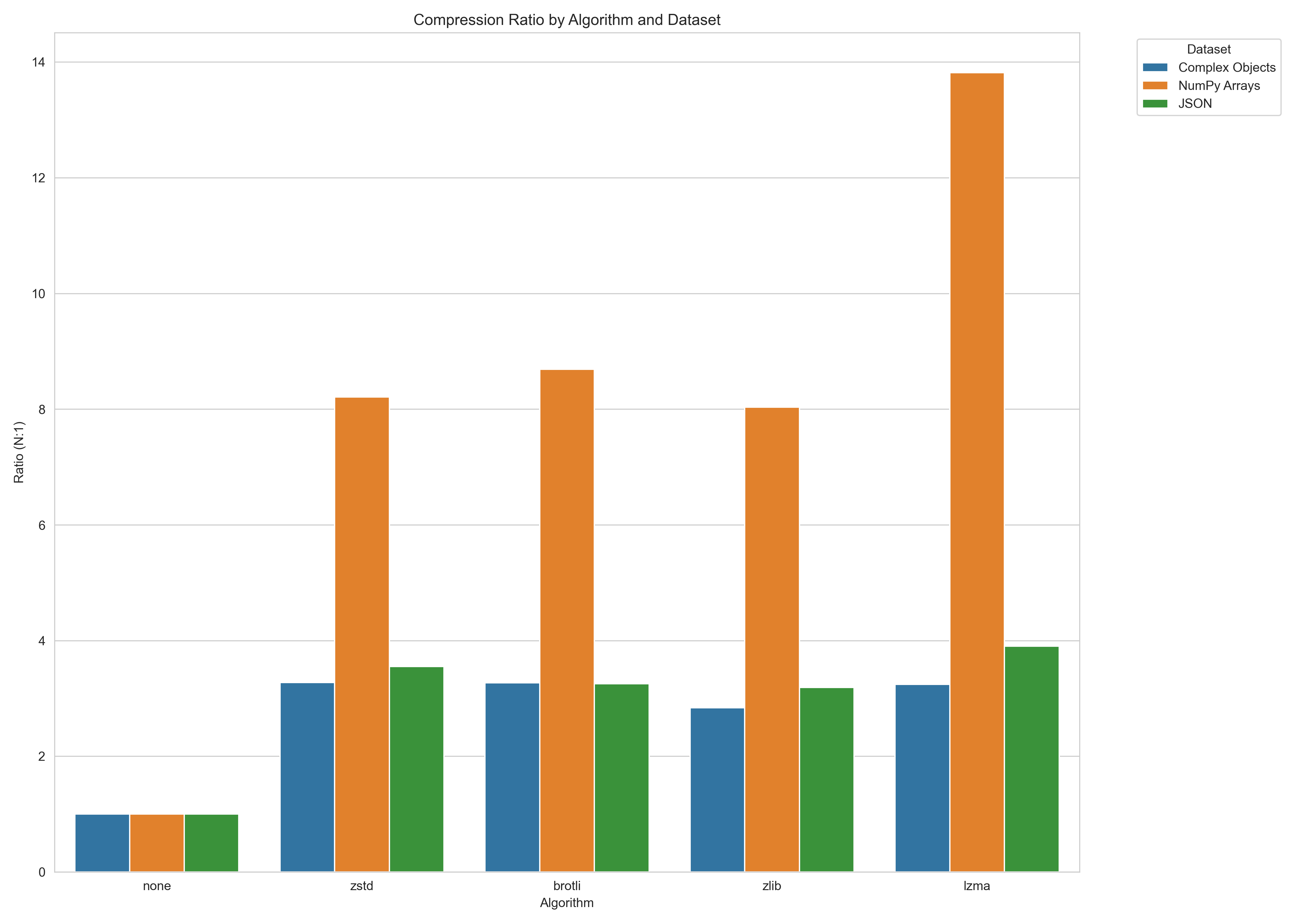

- Smaller data: serialized output is typically 2-10× smaller (depending on content and algorithm)

- Backward compatible: automatically reads both compressed and regular pickle data

- Complete API compatibility: all pickle functions work as expected

Installation

pip install zpickle

Quick Start

Basic Usage

    import zpickle as pickle

    # Serializing works exactly like pickle
    data = {"complex": ["nested", {"data": "structure"}], "with": "lots of repetition"}
    serialized = pickle.dumps(data)  # Automatically compressed!

    # Deserializing works the same way
    restored = pickle.loads(serialized)  # Automatically decompressed!

    # File operations work too
    with open("data.zpkl", "wb") as f:
        pickle.dump(data, f)

    with open("data.zpkl", "rb") as f:
        restored = pickle.load(f)

Custom Configuration

    import zpickle

    # Configure global settings
    zpickle.configure(algorithm='brotli', level=9)  # Higher compression

    # Or configure for a single operation
    data = [1, 2, 3] * 1000
    compressed = zpickle.dumps(data, algorithm='zstd', level=6)

Performance

Compression ratios versus standard pickle (higher is better):

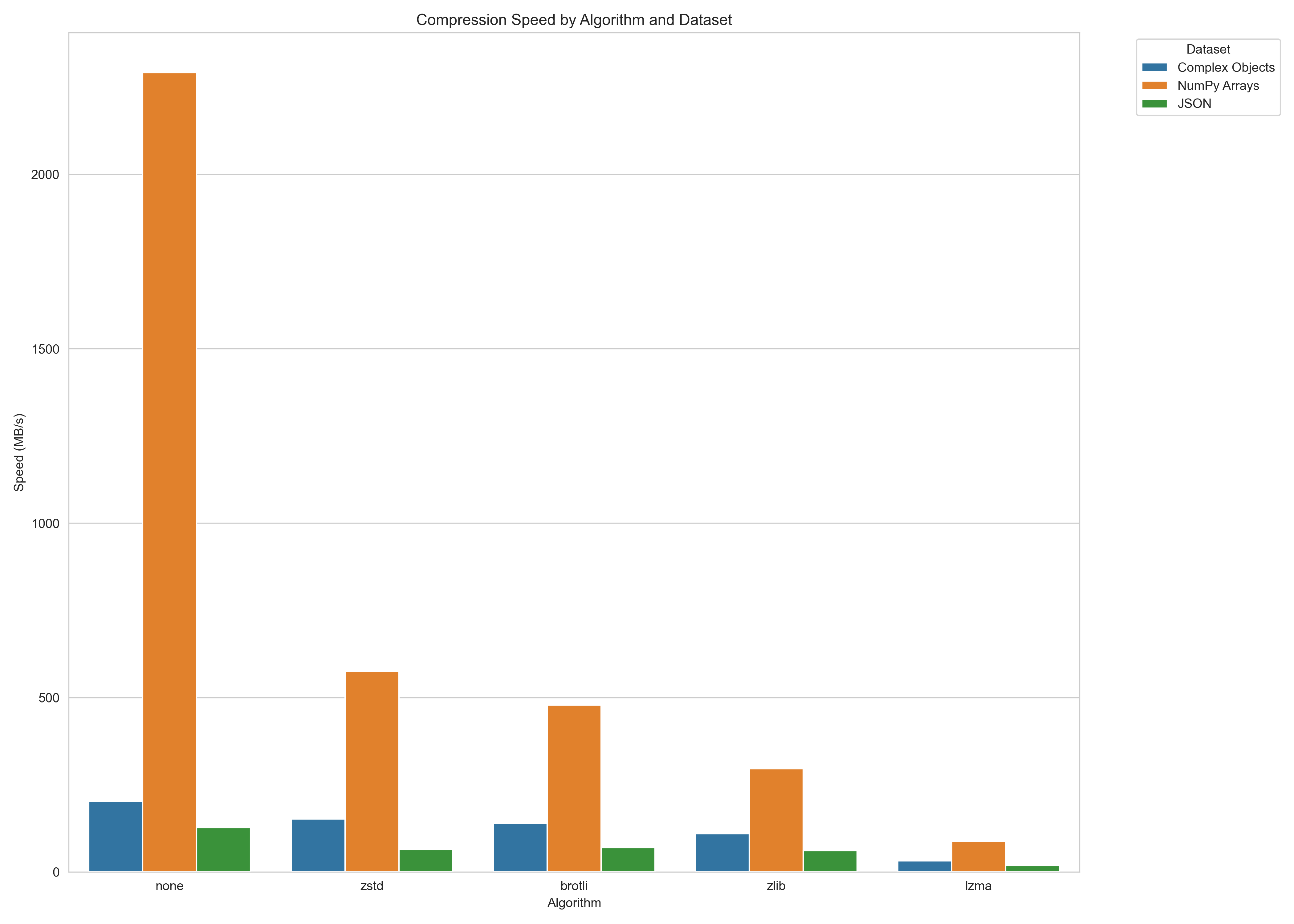

Serialization speed (MB/s, higher is better):

Note: Performance varies by data characteristics. Run benchmarks on your specific data for accurate results.

To run your own benchmarks, you can use:

python -m benchmarks.benchmark
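For a quick first impression before running the full benchmark, the sketch below measures compression ratio and throughput on pickled data using only the standard library. It does not call zpickle: `zlib` and `lzma` from the stdlib stand in for the configured algorithm, and the `measure` helper and `sample` data are hypothetical names made up for this illustration. Treat the numbers as a rough indication only.

```python
import lzma
import pickle
import time
import zlib


def measure(name, compress, payload):
    """Return (name, compression ratio, throughput in MB/s) for one codec."""
    start = time.perf_counter()
    compressed = compress(payload)
    elapsed = time.perf_counter() - start
    ratio = len(payload) / len(compressed)
    mb_per_s = len(payload) / 1e6 / elapsed if elapsed > 0 else float("inf")
    return name, ratio, mb_per_s


# Illustrative data; substitute an object that is representative of your workload.
sample = [{"id": i, "tags": ["alpha", "beta"], "score": i * 0.5} for i in range(10_000)]
payload = pickle.dumps(sample)

results = [
    measure("zlib (level 6)", lambda b: zlib.compress(b, 6), payload),
    measure("lzma (preset 6)", lambda b: lzma.compress(b, preset=6), payload),
]

for name, ratio, speed in results:
    print(f"{name}: {ratio:.1f}x smaller, {speed:.1f} MB/s")
```

Repetitive, text-heavy objects like the sample tend to compress well; already-compressed or random binary data will show ratios close to 1.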

How It Works

zpickle applies compression with minimal overhead:

- Objects are first serialized using standard pickle

- The pickle data is compressed using the selected algorithm

- A small header (8 bytes) is added to identify the format and algorithm

- When deserializing, zpickle auto-detects the format and decompresses if needed
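The steps above can be sketched with the standard library alone. This is an illustration of the idea, not zpickle's implementation: the header layout, the magic value `ZSKT`, the algorithm id, and the `sketch_dumps`/`sketch_loads` names are all assumptions, and `zlib` stands in for whichever algorithm is selected.

```python
import pickle
import struct
import zlib

# Hypothetical 8-byte header layout (not zpickle's real one):
# 4-byte magic, 1-byte format version, 1-byte algorithm id, 2 reserved bytes.
HEADER = struct.Struct("<4sBBH")
MAGIC = b"ZSKT"
ALGO_ZLIB = 1


def sketch_dumps(obj, level=6):
    """Pickle the object, compress the pickle bytes, then prepend the header."""
    raw = pickle.dumps(obj)
    body = zlib.compress(raw, level)
    return HEADER.pack(MAGIC, 1, ALGO_ZLIB, 0) + body


def sketch_loads(data):
    """Decompress if the header is present; otherwise treat data as plain pickle."""
    if len(data) >= HEADER.size and data[:4] == MAGIC:
        _magic, _version, algo, _reserved = HEADER.unpack_from(data)
        if algo != ALGO_ZLIB:
            raise ValueError(f"unknown algorithm id: {algo}")
        return pickle.loads(zlib.decompress(data[HEADER.size:]))
    return pickle.loads(data)


record = {"values": [1, 2, 3] * 1000}
blob = sketch_dumps(record)
assert sketch_loads(blob) == record                   # compressed path
assert sketch_loads(pickle.dumps(record)) == record   # plain-pickle fallback
```

Checking for a magic prefix is what makes the backward-compatible read possible in this sketch: a regular pickle stream does not begin with the magic bytes, so it falls through to the plain `pickle.loads` branch.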

API Reference

zpickle maintains complete API compatibility with the standard pickle module:

Core Functions

- dumps(obj, protocol=None, ..., algorithm=None, level=None) - Serialize and compress object

- loads(data, ...) - Deserialize and decompress object

- dump(obj, file, protocol=None, ..., algorithm=None, level=None) - Serialize to file

- load(file, ...) - Deserialize from file

Configuration

- configure(algorithm=None, level=None, min_size=None) - Set global defaults

- get_config() - Get current configuration

Classes

- Pickler(file, ...) - Subclass of pickle.Pickler with compression

- Unpickler(file, ...) - Subclass of pickle.Unpickler with decompression
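To show how a compressing subclass of `pickle.Pickler` could be structured, here is a minimal stdlib-only sketch. It is an assumption about the general approach, not zpickle's code: `SketchPickler` and `SketchUnpickler` are hypothetical names, `zlib` stands in for the configured algorithm, and the sketch skips the format header and handles one object per stream.

```python
import io
import pickle
import zlib


class SketchPickler(pickle.Pickler):
    """Pickles into a private buffer, then writes the compressed bytes to `file`."""

    def __init__(self, file, protocol=None, level=6):
        self._target = file
        self._buffer = io.BytesIO()
        self._level = level
        super().__init__(self._buffer, protocol)

    def dump(self, obj):
        # Reset the buffer and memo so every dump yields an independent payload.
        self._buffer.seek(0)
        self._buffer.truncate()
        self.clear_memo()
        super().dump(obj)
        self._target.write(zlib.compress(self._buffer.getvalue(), self._level))


class SketchUnpickler(pickle.Unpickler):
    """Reads the whole stream, decompresses it, and unpickles from memory."""

    def __init__(self, file):
        super().__init__(io.BytesIO(zlib.decompress(file.read())))


sink = io.BytesIO()
SketchPickler(sink).dump({"k": list(range(100))})
sink.seek(0)
restored = SketchUnpickler(sink).load()
```

Because both classes inherit from the standard ones, code that checks `isinstance(p, pickle.Pickler)` or overrides hooks such as `persistent_id` keeps working in this design.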

Alternatives

- Standard pickle: No compression, but native to Python

- compressed_pickle: Similar concept, but less configurable

- joblib: More focused on large NumPy arrays and parallel processing

- msgpack, protobuf: Different serialization formats (not pickle-compatible)

License

This project is distributed under the MIT License.

Links

- GitHub Repository

- PyPI Package

- Issue Tracker

- compress-utils - The underlying compression library


File details

Details for the file zpickle-1.0.1.tar.gz.

File metadata

- Download URL: zpickle-1.0.1.tar.gz

- Upload date:

- Size: 544.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 34d40bd5c0f75c79b4f425cac186c5ca79e604bb7c80bdd589e29eeb5160cb34 |
| MD5 | 4b938242e931b3f0e4ec17410b5d235a |
| BLAKE2b-256 | 32fc5bf4162377d35041b47c48f0ebcf77ec6a08417abe7e220d2912f477ad74 |

Provenance

The following attestation bundles were made for zpickle-1.0.1.tar.gz:

- Publisher: test_and_package_wheel.yml on dupontcyborg/zpickle

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: zpickle-1.0.1.tar.gz

- Subject digest: 34d40bd5c0f75c79b4f425cac186c5ca79e604bb7c80bdd589e29eeb5160cb34

- Sigstore transparency entry: 183612212

- Sigstore integration time:

- Permalink: dupontcyborg/zpickle@a76832ddc96048a851154c376867edd3d71bda9c

- Branch / Tag: refs/tags/v1.0.1

- Owner: https://github.com/dupontcyborg

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: test_and_package_wheel.yml@a76832ddc96048a851154c376867edd3d71bda9c

- Trigger Event: push

File details

Details for the file zpickle-1.0.1-py3-none-any.whl.

File metadata

- Download URL: zpickle-1.0.1-py3-none-any.whl

- Upload date:

- Size: 11.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 824481965b5c52b9966f8dd7740409d011e214b56b51856214c5df3d9bbc18e3 |
| MD5 | 58a014208cd7d31849ad9d2b42f3a878 |
| BLAKE2b-256 | 5dac116acde0010ee0042a4d366242c6d70683dbc2c7a89a3a534e0ade6b3107 |

Provenance

The following attestation bundles were made for zpickle-1.0.1-py3-none-any.whl:

- Publisher: test_and_package_wheel.yml on dupontcyborg/zpickle

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: zpickle-1.0.1-py3-none-any.whl

- Subject digest: 824481965b5c52b9966f8dd7740409d011e214b56b51856214c5df3d9bbc18e3

- Sigstore transparency entry: 183612213

- Sigstore integration time:

- Permalink: dupontcyborg/zpickle@a76832ddc96048a851154c376867edd3d71bda9c

- Branch / Tag: refs/tags/v1.0.1

- Owner: https://github.com/dupontcyborg

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: test_and_package_wheel.yml@a76832ddc96048a851154c376867edd3d71bda9c

- Trigger Event: push