A python package for clustering and summarizing graphs of texts.

Project description

Graph Clustering and Summarizing

Introduction

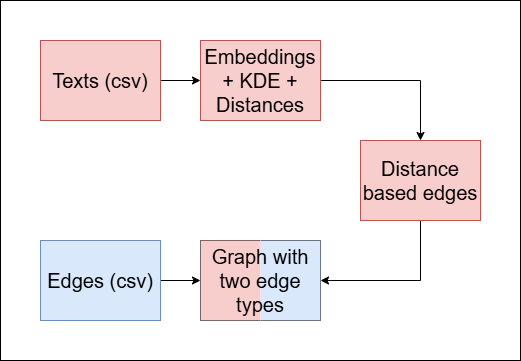

We aim to improve the quality of a multi-document summary, given the connections between the texts (via shared authors, institution, subject etc.). Our method is as follows:

- Calculate the direct distances between the texts in the graph.

- Build a new graph with two edge types:

- Blue edges: represent the original connections given.

- Red edges: represent similarity between texts.

- Cluster the multi color edged graph.

- Summarize each cluster.

- Evaluate the summarization of each cluster.

Our python package contains a full pipeline that performs the above operations (1-5), or alternatively perform specific operations individually.

Table of Contents

Installation

We do not have an official python package manager installation available yet. However, this repository is public, and one can clone the repository and open it in any IDE that accepts git.

Quick tour

To immediately use our package, you only need to run three functions (two if you wish to skip the final evaluations).

You need to have your data in one of the following formats:

- a prepared graph of texts in ''.gpickle'' format.

- two csv files to make the graph from: vertex metadata and edges.

Case 1

In this case you can skip the graph processing part, and go straight to the clustering part. The prepared graph needs to include the followings:

- vertex attribute called 'content', whose value is the textual data of the vertex.

- (optional) vertex attribute called 'shape', in order to distinguish between texts in different languages (for now, this case deals with each language individually).

Case 2

In this case, we need to create the graph first.

- The csv containing vertex data has to be named '

{dataset name}_papers.csv', and has to contain at least an ID column and 'abstract' column. The ID column values are used to identify texts, and the 'abstract' column should contain the texts. - The csv containing edges has to be named '

{dataset name}_graph.csv' and have 2 columns by default, and each of its rows has to contain at least one identifiable text that appears in the vertex data file. In case the other element is not identifiable, a new vertex will be created, with a different type and no content. - Rows with 3 or more elements will be dealt as a clique.

Secondly, calculation of distances between texts are required. For that we firstly have to convert the texts to sets of embedding vectors.

We do that in '2_embed_abstracts.py' using the sentence-transformers package, refer to each text as a set of sentence embeddings, and calculate 'energy distance' between two texts (two sets of vectors) in 'calc_energy.py'.

In order to successfully estimate the distance between two texts, we filter sentences that interrupt the procedure (in our case, we prefer to filter out the most common sentences, as well as the rarest sentences). We do that by combining all of the sentences' embeddings in the dataset into one list, order the items according to their frequencies, and filtering least_cutoff_percentage embeddings from the least common sentences. Similarly, we filter most_cutoff_percentage embeddings from the most common sentences. Both cutoff parameters are optimized for each dataset given.

After filtering out the irrelevant embeddings, we compute the distances between each pair of texts using 'energy distance':

def compute_energy_distance_matrix(ds_name, least_cutoff_percentage, most_cutoff_percentage):

Where:

ds_name: The name of the dataset.least_cutoff_percentage: The percentage of data to filter among rare sentences.most_cutoff_percentage: The percentage of data to filter among frequent sentences. We then calculate the energy distance between each pair of embeddings using the usingdcorpackage.

Returns: The energy distance matrix for the matched embedding.

You can then run the graph creating part of our pipeline in 'functions.py':

def make_graph(name, **kwargs):

Where:

name: The name of the dataset.**kwargs: A dictionary of values to manually configure the graph creation. Here is an example dictionary:

graph_kwargs = {

'size': 2000,

'color': '#1f78b4',

'K': 5,

}

where:

k: The number of neighbors to account for in the distance based edges.color: The default vertex color (needed in order to plot the final vertex partition).size: The default vertex size (needed in order to plot the final vertex partition).

Returns: Processed graph (networkx.Graph object)

Clustering

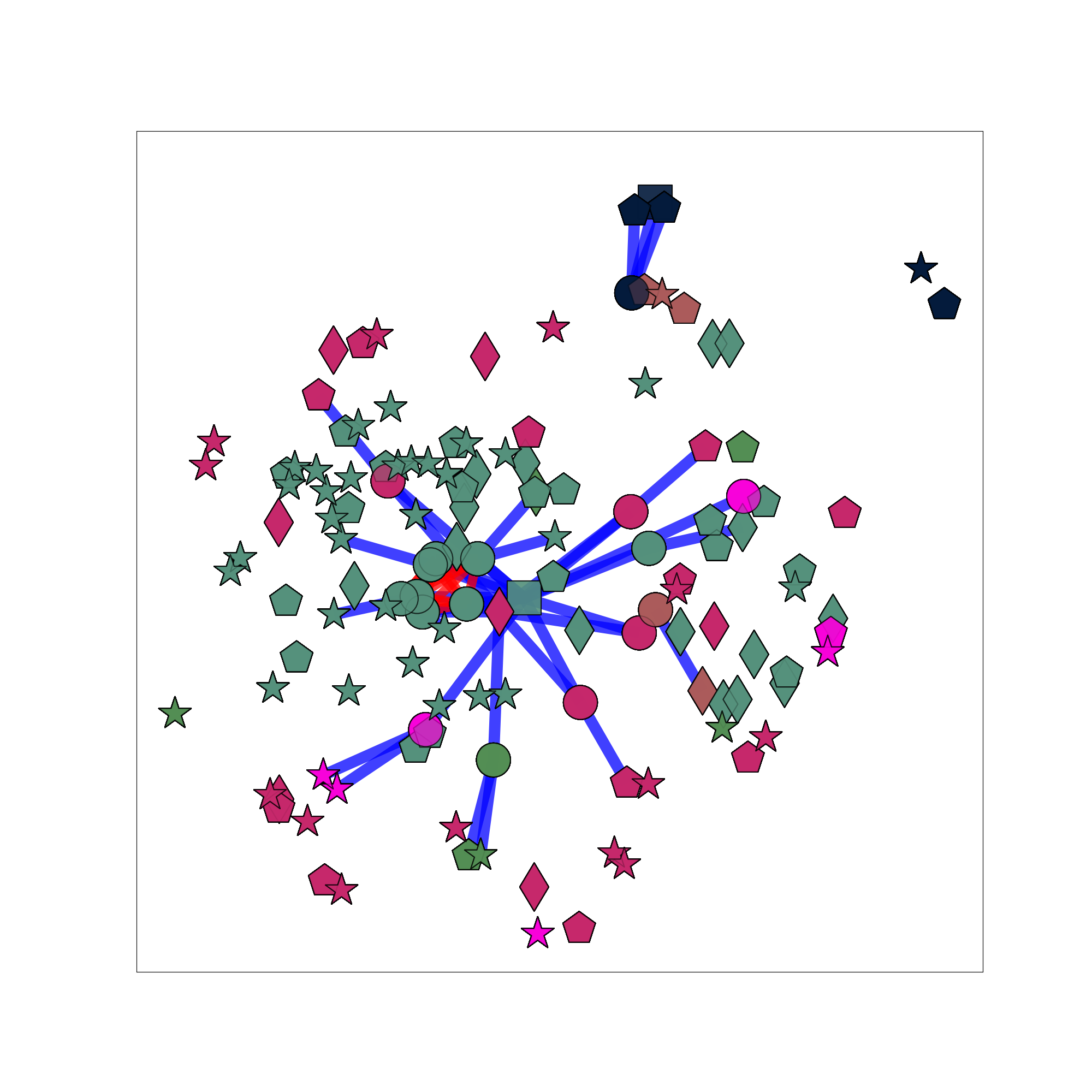

After processing/creating the graph of texts (and keywords), we cluster the graph using the Louvain method with the implementation embedded in the networkx module.

In case the graph consists of keyword vertices, and not exclusively texts, the other vertices are included in the clustering part of our pipeline, but ignored from then on.

The clustering is also done in the 'function.py' source file. In addition to return the partition, our method also assigns each vertex with a color, as a way to map the vertices to clusters later on.

def cluster_graph(G, name, **kwargs):

Where:

G: The processed/given graph.name: The name of the dataset.**kwargs: A dictionary of values to manually configure the clustering process. Here is an example dictionary:

clustering_kwargs = {

'save': True,

'resolution': res,

'weight': weight,

'K': K

}

Where:

save: A flag indicating whether to save the clustered graph as a '.gpickle' format or not.weight: The assigned weight for distance based edges.k: The number of neighbors to account for in the distance based edges.

Returns: $\mathcal{P}$ a partition of vertices from G into communities.

Example for the clustering:

Summarization

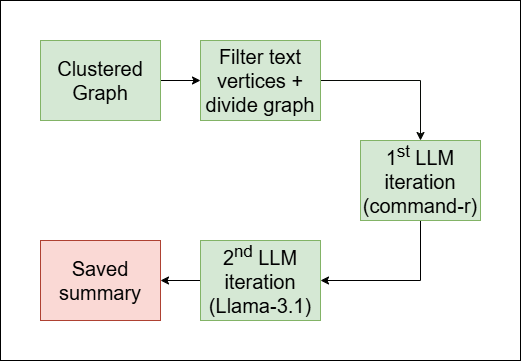

As mentioned in the introduction section, the main goal of our pipeline is to summarize clusters of vertices, rather than the full graph. To achieve that we perform the following steps:

-

Cluster the graph (see clustering).

-

Divide the graph according to the clusters (done in

filter_by_color()). -

Summarize each cluster individually. In this step, each time we are given a subgraph of only the cluster. Firstly we filter out non-textual vertices, then we access the texts and save them in a list, and then we send the list with a prompt to two iterations of LLM generation:

command-r: in this iteration, we generate a draft of the final summary.llama-3.1: in this iteration, we refine the summary and enrich its language.

And a third iteration generates a title for the summary (also made with

llama-3.1).

The full summarization process happen in summarize_per_color():

def summarize_per_color(subgraphs, name):

Where:

subgraphs: A list of subgraphs, each belong to a different cluster (see step 2).name: The name of the dataset.

The function fetches the texts from the vertices in the subgraph, send them to the above 3 iterations of LLM generation, and saves the summaries for each cluster as a '.txt' file in a folder named name.

Example of a summary:

Biomass-derived carbons (BDCs) and their composites with conductive materials, such as metals, metal sulfides, carbon nanotubes,

and reduced graphene oxide, are used to enhance the performance of supercapacitors. By combining BDCs with conductive additives,

researchers aim to improve conductivity, charge/discharge capabilities, and specific surface area, resulting in higher specific

capacitance values. This approach integrates the benefits of electrochemical double-layer capacitors (EDLCs) and pseudocapacitors,

leading to enhanced energy density. Layered double hydroxides (LDHs), synthetic two-dimensional nano-structured anionic clays, are

also explored as hosts for Azo-compounds to create nano-hybrid materials. Intercalating large anionic pigments like phenyl azobenzoic

sodium salt into Zn-Al LDH increases the interlayer spacing significantly, and the resulting nano-hybrid material is used as a filler

for polyvinyl alcohol (PVA) to form nano-composites that exhibit improved thermal stability compared to pure PVA.

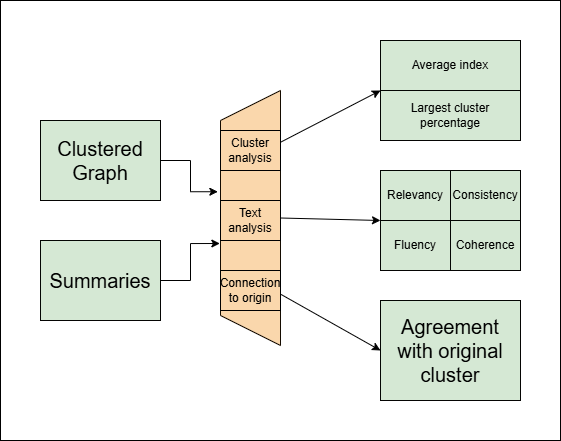

Evaluation

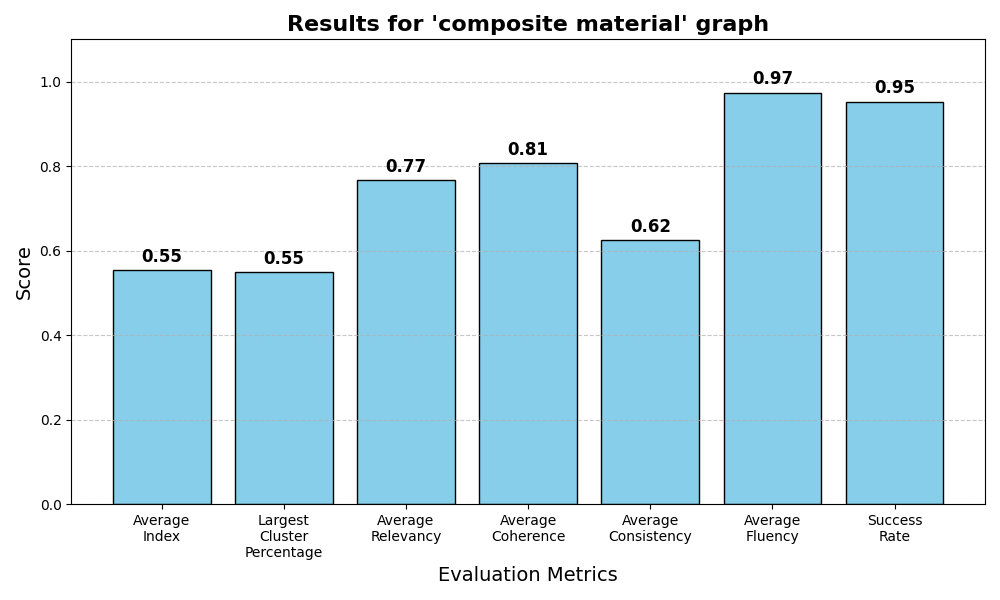

In the evaluation section, we execute a series of tests in order to assess the quality of:

- The clustering.

- The summaries (as text documents).

- The consistency between a summary and its origin.

Clustering scores

The clustering scores evaluate how good the clustering partitioned the graph. For that we used two metrics (one is irrelevant if the graph is given by the user and not created by our 'make_graph()' method). Here we computed everything by ourselves.

- 'Average index': This metric measures the proportion between the average distance between two vertices from the same cluster, compared to two random vertices. (This method is relevant only for graphs our method created)

- 'Largest cluster percentage': This metric measures the percentage of data in the largest cluster created.

Both metrics are between 0 and 1, and we would expect different optimal results:

- The optimal 'average index' should be as low as possible, but strictly positive.

- The optimal 'largest cluster percentage' shoule be around 0.5 (or 50% of the data).

Summary scores

The summary scores measure how understandable a summary is as a text. For that four scores are estimated:

- 'Fluency'

- 'Consistency'

- 'Coherence'

- 'Relevancy'

The estimation is made with an LLM judge (we used 'command-r', but other models perform similarly here).

The scripts we used for each of the four metrics are from this repository.

Consistency index

In addition to the other metrics, we also estimated how much a given summary agrees with texts from its origin compared to texts from other clusters.

In order to estimate this metric, for each cluster we sampled texts from within and from outside it, then sent them with a specially designed prompt to an LLM judge (we used 'command-r' here as well).

Results

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file graphclusterer-0.0.9.tar.gz.

File metadata

- Download URL: graphclusterer-0.0.9.tar.gz

- Upload date:

- Size: 32.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.0.1 CPython/3.12.0

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

e067fbfa7dc3a7c0db042a6f1c3ac8dc835b51809de98dc053b05eb880cd9670

|

|

| MD5 |

2986320fa548fbadd04d4bc26a7c834d

|

|

| BLAKE2b-256 |

5d22e0ae70a63d381920c9e2cec38290ee2da7e5004a578b6e3e664d23730b68

|

File details

Details for the file GraphClusterer-0.0.9-py3-none-any.whl.

File metadata

- Download URL: GraphClusterer-0.0.9-py3-none-any.whl

- Upload date:

- Size: 35.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.0.1 CPython/3.12.0

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

fd1fb028e658df92504e02c43f773b0648afc7b48ec7a23546108bbebe7a25fe

|

|

| MD5 |

edf5d6ddfcdfbeb1b47a1b300f504bec

|

|

| BLAKE2b-256 |

548c1ea15fe22a190578366ba0e3ee2e2cc253b5b6653f64d80115baf7bb5d9e

|