preprocess microbiome data and use IMIC

Project description

Preprocessing for 16S values.

The preprocessing input should contain the detailed, unnormalized OTU/feature values as a biom table, the matching taxonomy as a tsv file, and optionally a tag file with the class of each sample. The tag file is not used in the preprocessing itself; it is only used to report statistics on the relation between the features and the class, so you can also run the preprocessing without it.

input

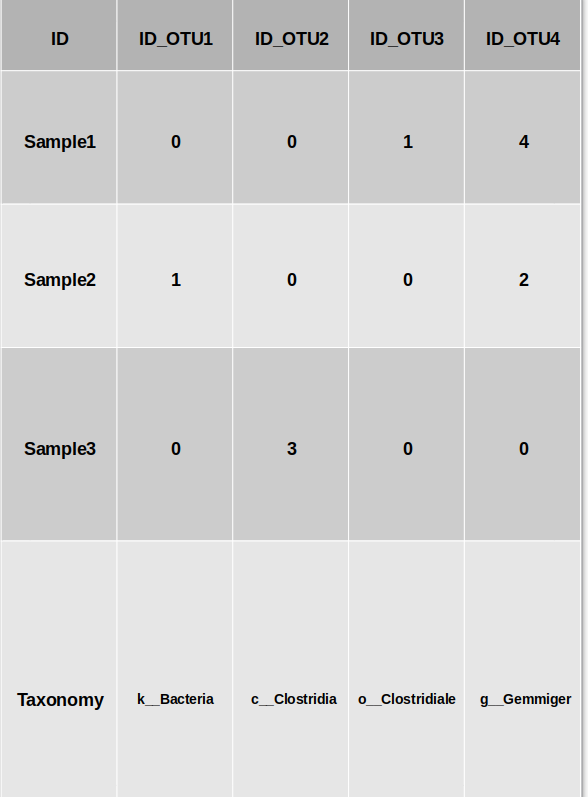

Here is an example of how the input OTU file should look: (file example)

Parameters to the preprocessing

Now you will have to select the parameters for the preprocessing.

- The taxonomy level used - taxonomy-sensitive dimension reduction by grouping the bacteria at a given taxonomy level. All features that share the same representation at the chosen taxonomy level are grouped and merged using one of three methods: Average, Sum or Merge (PCA followed by normalization).

- Normalization - after the grouping process, you can apply one of two normalization methods. The first is the log (base 10) scale. In this method,

x → log10(x + ɛ), where ɛ is a minimal value that prevents taking the log of zero.

The second method is to normalize each bacterium through its relative frequency.

If you choose the log normalization, you then have four standardization possibilities:

a) No standardization

b) Z-score each sample

c) Z-score each bacteria

d) Z-score each sample, and Z-score each bacteria (in this order)

When performing relative normalization, we either do not standardize the results or standardize only the bacteria.

- Dimension reduction - after the grouping, normalization and standardization, you can choose between two dimension reduction methods: PCA or ICA. If you choose to apply a dimension reduction method, you also have to decide how many dimensions to keep.
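The grouping, normalization and standardization steps described above can be illustrated with a small pure-Python sketch. It is not the MIPMLP implementation: the helper names (`group_by_level`, `log_normalize`, `relative_normalize`, `z_score`), the `;`-separated taxonomy strings, the choice of population standard deviation, and the reading of "relative frequency" as each value divided by its sample total are all assumptions made for illustration.

```python
import math
from collections import defaultdict
from statistics import mean, pstdev


def group_by_level(otu, level, how="mean"):
    """Merge features that share the same taxonomy prefix up to `level`.

    otu maps a taxonomy string to a list with one value per sample.
    """
    groups = defaultdict(list)
    for taxonomy, values in otu.items():
        parts = [part.strip() for part in taxonomy.split(";")]
        groups[";".join(parts[:level])].append(values)
    combine = mean if how == "mean" else sum
    # zip(*rows) iterates sample by sample over all features in the group
    return {key: [combine(col) for col in zip(*rows)] for key, rows in groups.items()}


def log_normalize(table, epsilon=0.1):
    """x -> log10(x + epsilon); epsilon avoids taking the log of zero."""
    return {k: [math.log10(v + epsilon) for v in vals] for k, vals in table.items()}


def relative_normalize(table):
    """Divide each value by the total of its sample (assumed meaning of 'relative')."""
    totals = [sum(col) for col in zip(*table.values())]
    return {k: [v / t if t else 0.0 for v, t in zip(vals, totals)]
            for k, vals in table.items()}


def z_score(values):
    """Z-score a list of values; a constant list maps to zeros."""
    mu, sigma = mean(values), pstdev(values)  # population std is an assumption
    return [(v - mu) / sigma if sigma else 0.0 for v in values]


# Three features, three samples (made-up counts)
otu = {
    "k__Bacteria; p__Firmicutes; c__Clostridia": [10, 0, 30],
    "k__Bacteria; p__Firmicutes; c__Bacilli": [30, 20, 10],
    "k__Bacteria; p__Bacteroidetes; c__Bacteroidia": [5, 5, 5],
}

grouped = group_by_level(otu, level=2, how="mean")
logged = log_normalize(grouped, epsilon=0.1)
# "Z-score each bacteria": standardize every feature across the samples
standardized = {k: z_score(vals) for k, vals in logged.items()}
relative = relative_normalize(grouped)
```

Here the two Firmicutes classes are averaged into a single phylum-level feature before the log transform and the per-bacterium z-score are applied.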

How to use

use MIPMLP.preprocess(input_df)

#### Parameters:

taxonomy_level 4-7 , default is 7

taxnomy_group : sub PCA, mean, sum, default is mean

epsilon: 0-1

z_scoring: row, col, both, No, default is No

pca: a tuple such as (0, 'PCA'); the second element is always 'PCA', the first is 0/1

normalization: log, relative, default is log

norm_after_rel: No, relative, default is No
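Because several of these parameters accept only a handful of values, it can help to check a configuration before running the preprocessing. The sketch below collects the documented options and defaults in one place. The `build_params` helper is hypothetical and not part of MIPMLP, and passing the result as keyword arguments to `MIPMLP.preprocess` is an assumption based on the parameter names listed above.

```python
# Allowed values and defaults as listed in the parameter section above.
ALLOWED = {
    "taxonomy_level": {4, 5, 6, 7},
    "taxnomy_group": {"sub PCA", "mean", "sum"},  # spelling as documented
    "z_scoring": {"row", "col", "both", "No"},
    "normalization": {"log", "relative"},
    "norm_after_rel": {"No", "relative"},
}
DEFAULTS = {
    "taxonomy_level": 7,
    "taxnomy_group": "mean",
    "z_scoring": "No",
    "pca": (0, "PCA"),
    "normalization": "log",
    "norm_after_rel": "No",
}


def build_params(**overrides):
    """Hypothetical helper: merge overrides into the defaults and validate them."""
    unknown = set(overrides) - set(DEFAULTS) - {"epsilon"}
    if unknown:
        raise ValueError(f"unknown parameters: {sorted(unknown)}")
    params = {**DEFAULTS, **overrides}
    for name, allowed in ALLOWED.items():
        if params[name] not in allowed:
            raise ValueError(f"{name}={params[name]!r} is not one of {sorted(allowed, key=str)}")
    if "epsilon" in params and not 0 <= params["epsilon"] <= 1:
        raise ValueError("epsilon must be between 0 and 1")
    return params


params = build_params(taxonomy_level=6, epsilon=0.1, z_scoring="row")

# Assumed call style (keyword arguments named as in the list above):
# processed = MIPMLP.preprocess(input_df, **params)
```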

output

The output is the processed file.

iMic

iMic is a method that combines information from different taxa and improves data representation for machine learning using microbial taxonomy. iMic translates the microbiome to images, and convolutional neural networks are then applied to the images.

micro2matrix

Translates the microbiome values and the taxonomy tree into an image. micro2matrix also saves the images that were created in a given folder.

input

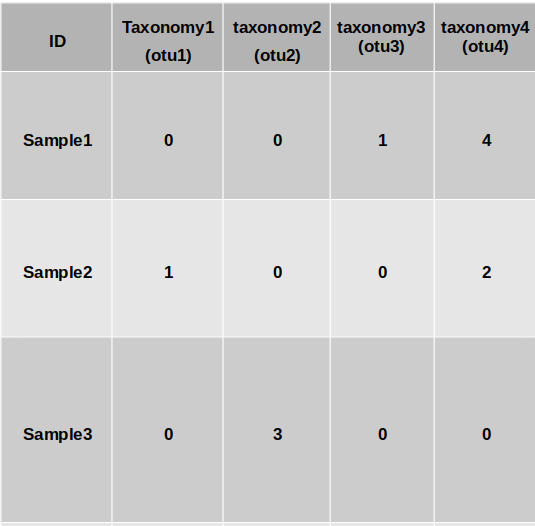

A pandas dataframe which is similar to the MIPMLP preprocessing's input.

A folder to save the new images at.

Parameters

You can also set all of the MIPMLP preprocessing parameters; otherwise the preprocessing runs with its default parameters.

How to use

import MIPMLP

import pandas as pd

df = pd.read_csv("address/ASVS_file.csv")

folder = "save_img_folder"

MIPMLP.micro2matrix(df, folder)
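To give a feel for what turning values plus a taxonomy tree into an image can mean, here is a toy sketch for a single sample. It is only one plausible layout and is not taken from the micro2matrix code: the function name `taxonomy_to_matrix`, the row-per-taxonomic-level layout, and the use of the mean over all leaves under a shared prefix are assumptions for illustration, and the actual layout produced by micro2matrix may differ.

```python
from statistics import mean


def split_taxonomy(name):
    """Turn 'k__A; p__B' into ('k__A', 'p__B')."""
    return tuple(part.strip() for part in name.split(";"))


def taxonomy_to_matrix(sample, depth):
    """Hypothetical layout: one row per taxonomic level, one column per leaf.

    sample maps a taxonomy string to the value of that taxon in one sample.
    The entry at (level, leaf) is the mean over all leaves that share the
    leaf's taxonomy prefix at that level.
    """
    # Sorting by taxonomy keeps related taxa in neighbouring columns.
    leaves = sorted(sample, key=split_taxonomy)
    paths = [split_taxonomy(name) for name in leaves]
    matrix = []
    for level in range(1, depth + 1):
        by_prefix = {}
        for path, name in zip(paths, leaves):
            by_prefix.setdefault(path[:level], []).append(sample[name])
        matrix.append([mean(by_prefix[path[:level]]) for path in paths])
    return matrix


sample = {
    "k__Bacteria; p__Firmicutes; c__Clostridia": 2.0,
    "k__Bacteria; p__Firmicutes; c__Bacilli": 4.0,
    "k__Bacteria; p__Bacteroidetes; c__Bacteroidia": 6.0,
}
image = taxonomy_to_matrix(sample, depth=3)
for row in image:
    print(row)
```

Each row of the resulting matrix is a coarser or finer view of the same sample, which is the kind of 2D structure a convolutional layer can exploit.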

CNN2 class - optional

A model of 2 convolutional layers followed by 2 fully connected layers.

#### CNN model parameters

l1 loss = the coefficient of the L1 loss

weight decay = L2 regularization

lr = learning rate

batch size = the number of samples in each training batch

activation = activation function, one of: "elu" | "relu" | "tanh"

dropout = the dropout rate (common to all the layers)

kernel_size_a = the size of the kernel of the first CNN layer (rows)

kernel_size_b = the size of the kernel of the first CNN layer (columns)

stride = the stride size of the first CNN layer

padding = the padding size of the first CNN layer

padding_2 = the padding size of the second CNN layer

kernel_size_a_2 = the size of the kernel of the second CNN layer (rows)

kernel_size_b_2 = the size of the kernel of the second CNN layer (columns)

stride_2 = the stride size of the second CNN

channels = number of channels of the first CNN layer

channels_2 = number of channels of the second CNN layer

linear_dim_divider_1 = the number to divide the original input size to get the number of neurons in the first FCN layer

linear_dim_divider_2 = the number to divide the original input size to get the number of neurons in the second FCN layer

How to use

params = {

"l1_loss": 0.1,

"weight_decay": 0.01,

"lr": 0.001,

"batch_size": 128,

"activation": "elu",

"dropout": 0.1,

"kernel_size_a": 4,

"kernel_size_b": 4,

"stride": 2,

"padding": 3,

"padding_2": 0,

"kernel_size_a_2": 2,

"kernel_size_b_2": 7,

"stride_2": 3,

"channels": 3,

"channels_2": 14,

"linear_dim_divider_1": 10,

"linear_dim_divider_2": 6

}

model = MIPMLP.CNN(params)

The model does not train itself: the user should apply their own trainer to it.
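When picking kernel, stride and padding values such as those above, it is worth checking that the image is not shrunk to nothing by the two convolutional layers. The sketch below applies the standard convolution output-size formula, floor((n + 2·padding − kernel) / stride) + 1, to both layers. It assumes plain convolutions without dilation or pooling, which may not match the actual model, and the 8 × 100 input size and the `cnn_output_shape` helper are hypothetical.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of one convolution along a single axis (no dilation)."""
    if size + 2 * padding < kernel:
        raise ValueError("kernel is larger than the padded input")
    return (size + 2 * padding - kernel) // stride + 1


def cnn_output_shape(height, width, p):
    """Shape (channels, rows, columns) after the two convolutional layers."""
    h1 = conv_out(height, p["kernel_size_a"], p["stride"], p["padding"])
    w1 = conv_out(width, p["kernel_size_b"], p["stride"], p["padding"])
    h2 = conv_out(h1, p["kernel_size_a_2"], p["stride_2"], p["padding_2"])
    w2 = conv_out(w1, p["kernel_size_b_2"], p["stride_2"], p["padding_2"])
    return p["channels_2"], h2, w2


# Convolution-related values from the example parameter dictionary above
conv_params = {
    "kernel_size_a": 4, "kernel_size_b": 4, "stride": 2, "padding": 3,
    "kernel_size_a_2": 2, "kernel_size_b_2": 7, "stride_2": 3, "padding_2": 0,
    "channels": 3, "channels_2": 14,
}

# Hypothetical image of 8 rows by 100 columns
shape = cnn_output_shape(8, 100, conv_params)
flattened = shape[0] * shape[1] * shape[2]
print(shape, flattened)  # (14, 2, 16) 448
```

If `conv_out` raises, or one of the output dimensions is very small, the kernel or stride is likely too large for the image size being used.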

Citation

Shtossel, Oshrit, et al. "Image and graph convolution networks improve microbiome-based machine learning accuracy." arXiv preprint arXiv:2205.06525 (2022).

