A Python implementation of OpenNMT


OpenNMT-py: Open-Source Neural Machine Translation

This is a PyTorch port of OpenNMT, an open-source (MIT) neural machine translation system. It is designed to be research friendly, making it easy to try out new ideas in translation, summarization, image-to-text, morphology, and many other domains. Some companies have proven the code to be production ready.

We love contributions. Please consult the Issues page for any post tagged Contributions Welcome.

Before raising an issue, make sure you have read the requirements and the documentation examples.

Unless there is a bug, please use the Forum or Gitter to ask questions.


Requirements

Install OpenNMT-py from pip:

pip install OpenNMT-py

or from the sources:

git clone https://github.com/OpenNMT/OpenNMT-py.git

cd OpenNMT-py

python setup.py install

Note: If you hit a MemoryError during installation, try running pip with --no-cache-dir.

(Optional) Some advanced features (e.g. working with audio, images, or pretrained models) require extra packages, which you can install with:

pip install -r requirements.opt.txt

Note:

- some features require Python 3.5 or later (e.g. distributed multi-GPU training, entmax)

- we currently only support PyTorch 1.2 (it should also work with 1.1)

Features

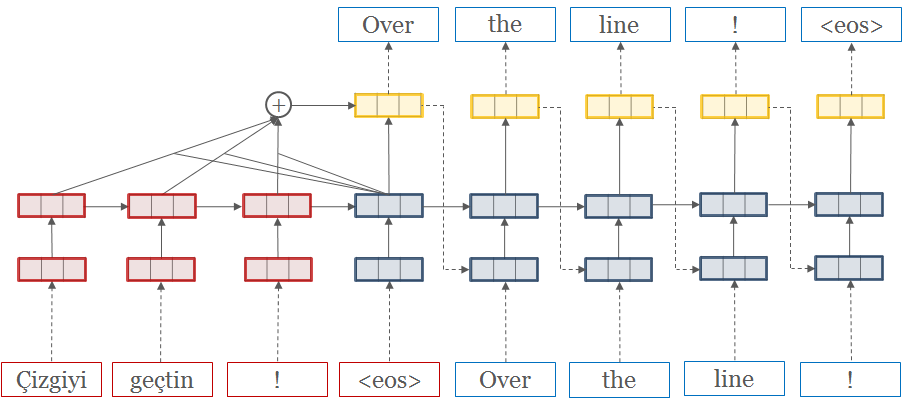

- Seq2Seq models (encoder-decoder) with multiple RNN cells (lstm/gru) and attention (dotprod/mlp) types

- Transformer models

- Copy and Coverage Attention

- Pretrained Embeddings

- Source word features

- Image-to-text processing

- Speech-to-text processing

- TensorBoard logging

- Multi-GPU training

- Data preprocessing

- Inference (translation) with batching and beam search

- Inference time loss functions

- Conv2Conv convolution model

- SRU "RNNs faster than CNN" paper

- Mixed-precision training with APEX, optimized on Tensor Cores

Quickstart

Step 1: Preprocess the data

onmt_preprocess -train_src data/src-train.txt -train_tgt data/tgt-train.txt -valid_src data/src-val.txt -valid_tgt data/tgt-val.txt -save_data data/demo

We will be working with some example data in the data/ folder.

The data consists of parallel source (src) and target (tgt) data containing one sentence per line with tokens separated by a space:

- src-train.txt

- tgt-train.txt

- src-val.txt

- tgt-val.txt

Validation files are required and are used to evaluate the convergence of the training. They usually contain no more than 5000 sentences.
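The data format described above can be checked before preprocessing. The following is a minimal sketch, not part of OpenNMT-py: the helper names `read_tokens` and `read_parallel` and the demo file names are hypothetical. It reads a source and a target file, splits each line on whitespace, and rejects pairs of files whose line counts differ.

```python
import tempfile
from pathlib import Path


def read_tokens(path):
    """Read one sentence per line and split each line on whitespace."""
    with open(path, encoding="utf-8") as handle:
        return [line.split() for line in handle]


def read_parallel(src_path, tgt_path):
    """Return (source_tokens, target_tokens) pairs for two aligned files."""
    src = read_tokens(src_path)
    tgt = read_tokens(tgt_path)
    if len(src) != len(tgt):
        raise ValueError(
            f"line count mismatch: {len(src)} source vs {len(tgt)} target"
        )
    return list(zip(src, tgt))


# Demo on two tiny throwaway files (hypothetical names and contents).
with tempfile.TemporaryDirectory() as tmp:
    src_file = Path(tmp) / "src-demo.txt"
    tgt_file = Path(tmp) / "tgt-demo.txt"
    src_file.write_text("hello world\ngood morning\n", encoding="utf-8")
    tgt_file.write_text("hallo welt\nguten morgen\n", encoding="utf-8")
    pairs = read_parallel(src_file, tgt_file)

print(pairs[0])
```

Running a check like this on the training and validation files first can catch misaligned data before it reaches onmt_preprocess.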

After running the preprocessing, the following files are generated:

- demo.train.pt: serialized PyTorch file containing training data

- demo.valid.pt: serialized PyTorch file containing validation data

- demo.vocab.pt: serialized PyTorch file containing vocabulary data

Internally, the system never touches the words themselves; it uses their indices in the vocabulary instead.
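To illustrate what mapping words to indices means, here is a small self-contained sketch. It is not OpenNMT-py's actual vocabulary code: the function names `build_vocab` and `numericalize`, the special tokens, and the ordering rule (most frequent first, ties broken alphabetically) are all assumptions made for the example.

```python
from collections import Counter


def build_vocab(sentences, specials=("<unk>", "<pad>")):
    """Build index-to-token and token-to-index tables from tokenized text."""
    counts = Counter(tok for sent in sentences for tok in sent)
    # Most frequent tokens first; ties are broken alphabetically.
    ordered = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    itos = list(specials) + [tok for tok, _ in ordered]
    stoi = {tok: idx for idx, tok in enumerate(itos)}
    return itos, stoi


def numericalize(sentence, stoi, unk="<unk>"):
    """Replace each token by its index, falling back to the unknown token."""
    return [stoi.get(tok, stoi[unk]) for tok in sentence]


sentences = [["the", "cat", "sat"], ["the", "dog", "sat"]]
itos, stoi = build_vocab(sentences)
ids = numericalize(["the", "bird", "sat"], stoi)
print(itos)  # ['<unk>', '<pad>', 'sat', 'the', 'cat', 'dog']
print(ids)   # [3, 0, 2]
```

The word "bird" never appeared in the data used to build the table, so it maps to the index of the unknown token.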

Step 2: Train the model

onmt_train -data data/demo -save_model demo-model

The main train command is quite simple. Minimally, it takes a data file and a save file. This will run the default model, which consists of a 2-layer LSTM with 500 hidden units in both the encoder and the decoder.

If you want to train on GPU, you need to set, as an example, CUDA_VISIBLE_DEVICES=1,3 in the environment and pass -world_size 2 -gpu_ranks 0 1 to use (say) GPUs 1 and 3 on this node only.
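The relationship between the two settings can be confusing, because CUDA renumbers the devices listed in CUDA_VISIBLE_DEVICES starting from 0. The sketch below only illustrates that renumbering for the single-node case, where it is assumed that the ranks passed to -gpu_ranks coincide with the local device indices. The function name `visible_to_physical` is hypothetical and is not part of OpenNMT-py.

```python
def visible_to_physical(cuda_visible_devices, gpu_ranks):
    """Map each local device index to the physical GPU id it refers to.

    Assumes a single node where the given ranks are used as local
    device indices into the CUDA_VISIBLE_DEVICES list.
    """
    visible = [int(dev) for dev in cuda_visible_devices.split(",") if dev.strip()]
    for rank in gpu_ranks:
        if rank < 0 or rank >= len(visible):
            raise ValueError(f"rank {rank} has no visible device")
    return {rank: visible[rank] for rank in gpu_ranks}


mapping = visible_to_physical("1,3", [0, 1])
print(mapping)  # {0: 1, 1: 3}
```

With the settings from the example, rank 0 runs on physical GPU 1 and rank 1 runs on physical GPU 3.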

To know more about distributed training on single or multi nodes, read the FAQ section.

Step 3: Translate

onmt_translate -model demo-model_acc_XX.XX_ppl_XXX.XX_eX.pt -src data/src-test.txt -output pred.txt -replace_unk -verbose

Now you have a model which you can use to predict on new data. We do this by running beam search. This will output predictions into pred.txt.
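For readers unfamiliar with beam search, the idea can be sketched on a toy model. This is an illustration only and not the OpenNMT-py implementation, which presumably works on batched tensors. The names `beam_search`, `toy_step`, and `TABLE`, and the probabilities in the table, are made up for the example. At each step the search keeps the `beam_size` best partial hypotheses by summed log-probability instead of only the single best one.

```python
import math


def beam_search(step_fn, bos, eos, beam_size=2, max_len=5):
    """Return the highest-scoring token sequence found by beam search.

    step_fn(prefix) must return a dict mapping next tokens to probabilities.
    """
    beams = [(0.0, [bos])]  # (summed log-probability, tokens so far)
    finished = []
    for _ in range(max_len):
        candidates = []
        for score, seq in beams:
            for tok, prob in step_fn(seq).items():
                if prob > 0:
                    candidates.append((score + math.log(prob), seq + [tok]))
        if not candidates:
            break
        # Keep only the beam_size best extensions.
        candidates.sort(key=lambda cand: cand[0], reverse=True)
        beams = []
        for score, seq in candidates[:beam_size]:
            if seq[-1] == eos:
                finished.append((score, seq))
            else:
                beams.append((score, seq))
        if not beams:
            break
    finished.extend(beams)  # hypotheses cut off by max_len still compete
    return max(finished, key=lambda cand: cand[0])[1]


# Toy next-token distributions (made-up numbers).
TABLE = {
    ("<s>",): {"a": 0.6, "b": 0.4},
    ("<s>", "a"): {"x": 0.5, "y": 0.5},
    ("<s>", "b"): {"z": 0.9, "</s>": 0.1},
}


def toy_step(prefix):
    # Any prefix not listed in the table ends the sentence.
    return TABLE.get(tuple(prefix), {"</s>": 1.0})


greedy = beam_search(toy_step, "<s>", "</s>", beam_size=1)
beam = beam_search(toy_step, "<s>", "</s>", beam_size=2)
print(greedy)  # ['<s>', 'a', 'x', '</s>']
print(beam)    # ['<s>', 'b', 'z', '</s>']
```

With a beam of 1 the search commits to "a" and ends with total probability 0.3, while a beam of 2 keeps "b" alive long enough to find the sequence with probability 0.36.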

Note: The predictions are going to be quite terrible, as the demo dataset is small. Try running on some larger datasets! For example, you can download millions of parallel sentences for translation or summarization.

Alternative: Run on FloydHub

Click this button to open a Workspace on FloydHub for training/testing your code.

Pretrained embeddings (e.g. GloVe)

Please see the FAQ: How to use GloVe pre-trained embeddings in OpenNMT-py

Pretrained Models

The following pretrained models can be downloaded and used with translate.py.

Acknowledgements

OpenNMT-py is run as a collaborative open-source project. The original code was written by Adam Lerer (NYC) to reproduce OpenNMT-Lua using PyTorch.

Major contributors are:

- Sasha Rush (Cambridge, MA)

- Vincent Nguyen (Ubiqus)

- Ben Peters (Lisbon)

- Sebastian Gehrmann (Harvard NLP)

- Yuntian Deng (Harvard NLP)

- Guillaume Klein (Systran)

- Paul Tardy (Ubiqus / Lium)

- François Hernandez (Ubiqus)

- Jianyu Zhan (Shanghai)

- [Dylan Flaute](http://github.com/flauted) (University of Dayton)

- and more!

OpenNMT-py belongs to the OpenNMT project along with OpenNMT-Lua and OpenNMT-tf.

Citation

OpenNMT: Neural Machine Translation Toolkit

@inproceedings{opennmt,
  author = {Guillaume Klein and Yoon Kim and Yuntian Deng and Jean Senellart and Alexander M. Rush},
  title = {Open{NMT}: Open-Source Toolkit for Neural Machine Translation},
  booktitle = {Proc. ACL},
  year = {2017},
  url = {https://doi.org/10.18653/v1/P17-4012},
  doi = {10.18653/v1/P17-4012}
}


Download files


File details

Details for the file OpenNMT-py-1.0.0rc1.tar.gz.

File metadata

- Download URL: OpenNMT-py-1.0.0rc1.tar.gz

- Upload date:

- Size: 143.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/1.15.0 pkginfo/1.4.2 requests/2.20.0 setuptools/41.2.0 requests-toolbelt/0.8.0 tqdm/4.30.0 CPython/3.6.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1d73c746a171364f93d60cd02f328ef1046039ff8566cbafb8344ba610c2fd4a |
| MD5 | 679b6826d7ae91aef3eed6338aa7837b |
| BLAKE2b-256 | 46656dc4449f0218e43e9f06eebad35f29aa36d04108192ecf270b93858bd6f4 |

File details

Details for the file OpenNMT_py-1.0.0rc1-py3-none-any.whl.

File metadata

- Download URL: OpenNMT_py-1.0.0rc1-py3-none-any.whl

- Upload date:

- Size: 179.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/1.15.0 pkginfo/1.4.2 requests/2.20.0 setuptools/41.2.0 requests-toolbelt/0.8.0 tqdm/4.30.0 CPython/3.6.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 31f1488c23238911da2dc0c7d31faa8c296303d9130c81d16e15b9f8a3d7d152 |
| MD5 | c5619c98d66317c03d3a5f9b2ca419ec |
| BLAKE2b-256 | d5ab0f5223cb441d33cc807fd021c60266d27054be9599d3464c1a016063381c |