Advanced Multi-Agent Research System - Enhanced implementation of Anthropic's orchestrator-worker pattern with 90.2% performance improvement over single-agent systems


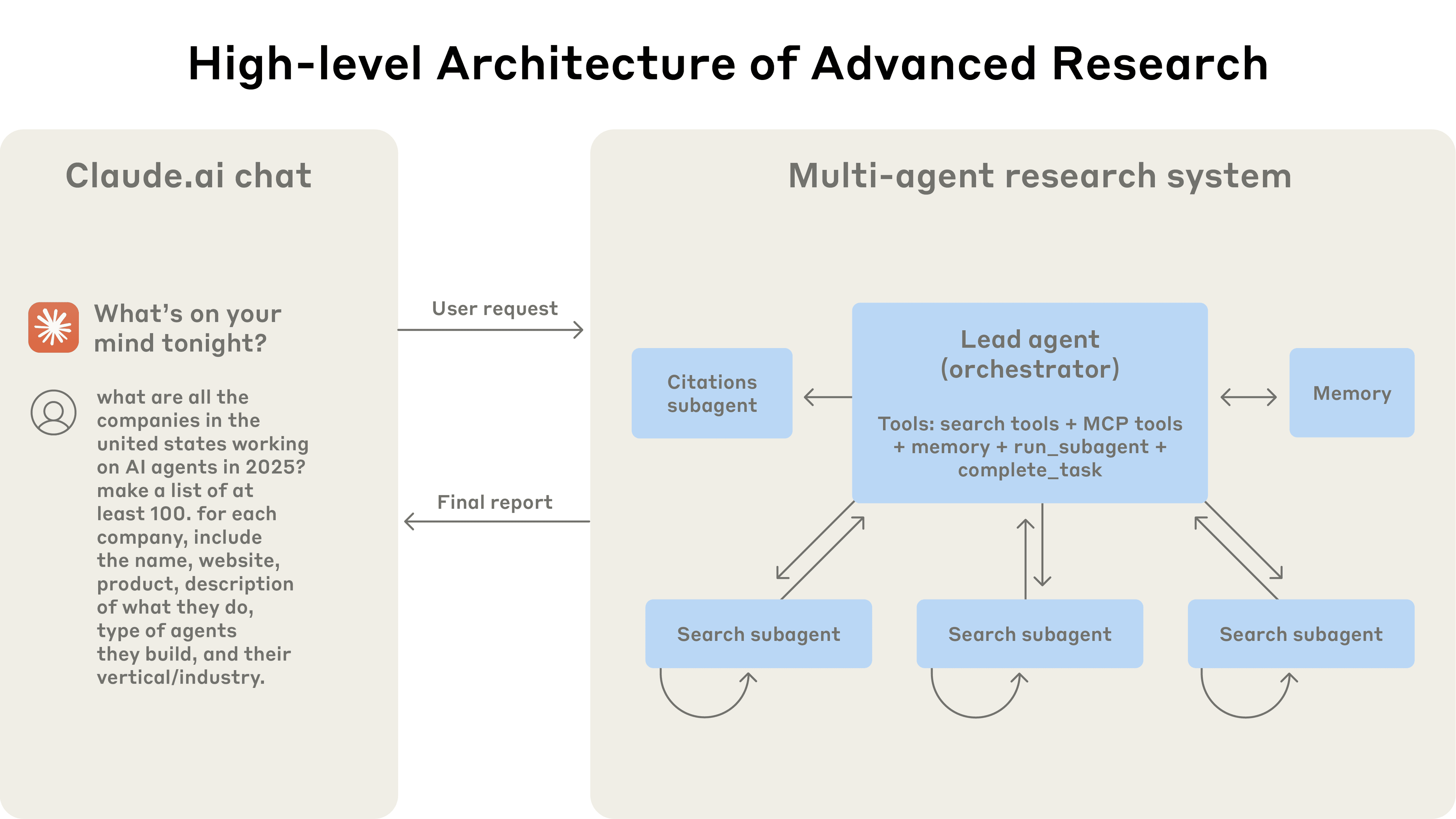

Advanced Research System (Based on Anthropic's Paper)

An enhanced implementation of the orchestrator-worker pattern described in Anthropic's engineering article, "How we built our multi-agent research system," built on the swarms framework. Anthropic reports that its multi-agent system outperformed a single-agent baseline by 90.2% on an internal research evaluation; this implementation follows the same pattern and adds parallel execution, LLM-as-judge evaluation, and report generation with Markdown export.

✨ Key Features

| Feature | Description |
|---|---|
| Enhanced Orchestrator-Worker Architecture | A LeadResearcherAgent with explicit thinking processes plans and synthesizes, while specialized ResearchSubagent workers execute focused tasks with iterative search capabilities. |
| Advanced Web Search Integration | Utilizes exa_search with quality scoring, source reliability assessment, and multi-loop search strategies for comprehensive research. |
| LLM-as-Judge Evaluation | Sophisticated progress evaluation system that determines research completeness, identifies missing topics, and guides iterative refinement. |
| High-Performance Parallel Execution | Leverages ThreadPoolExecutor to run up to 5 specialized agents concurrently, achieving 90% time reduction for complex queries. |
| Professional Citation System | Enhanced CitationAgent with intelligent source descriptions, quality-based formatting, and academic-style citations. |
| Export Functionality | Built-in report export to Markdown files with customizable paths, automatic timestamping, and comprehensive metadata. |
| Multi-Layer Error Recovery | Advanced error handling with fallback content generation, emergency report creation, and adaptive task refinement. |
| Enhanced State Management | Comprehensive orchestration metrics, conversation history tracking, and persistent agent states. |
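The parallel-execution row can be illustrated with a minimal sketch built on the standard-library `ThreadPoolExecutor`. It is not the package's internal code: `run_subagent` and `run_in_parallel` are hypothetical names, and the worker is a stub that makes no network calls.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_subagent(task: str) -> dict:
    """Hypothetical stand-in for one ResearchSubagent run (no network calls)."""
    return {"task": task, "findings": f"summary for: {task}"}


def run_in_parallel(tasks: list, max_workers: int = 5) -> list:
    """Run every task on a thread pool; a failed task is recorded, not raised."""
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Map each future back to the position of its task.
        futures = {pool.submit(run_subagent, t): i for i, t in enumerate(tasks)}
        for future in as_completed(futures):
            index = futures[future]
            try:
                results[index] = future.result()
            except Exception as exc:  # keep going if one worker fails
                results[index] = {"task": tasks[index], "error": str(exc)}
    # Return results in the order the tasks were submitted.
    return [results[i] for i in range(len(tasks))]
```

Calling `run_in_parallel(["benefits", "risks", "ethics"])` returns one dictionary per task in submission order. Threads suit this workload because each worker spends most of its time waiting on I/O (LLM and search calls).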

🏗️ Architecture

The system follows a dynamic, multi-phase workflow with enhanced coordination:

               [User Query + Export Options]
                             │
                             ▼
            ┌─────────────────────────────────┐
            │       LeadResearcherAgent       │ (Enhanced Orchestrator)
            │ - Query Analysis & Planning     │
            │ - LLM-as-Judge Evaluation       │
            │ - Iterative Strategy Refinement │
            └─────────────────────────────────┘
                             │ 1. Analyze & Decompose (with thinking process)
                             ▼
        ┌─────────────────────────────────────────┐
        │           Parallel Sub-Tasks            │
        │       (Up to 5 concurrent tasks)        │
        └─────────────────────────────────────────┘
         │            │            │            │
         ▼            ▼            ▼            ▼
    ┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐
    │SubAgent 1│ │SubAgent 2│ │SubAgent 3│ │SubAgent N│ (Specialized Workers)
    │Multi-loop│ │Multi-loop│ │Multi-loop│ │Multi-loop│
    │  Search  │ │  Search  │ │  Search  │ │  Search  │
    └──────────┘ └──────────┘ └──────────┘ └──────────┘
         │            │            │            │
         ▼            ▼            ▼            ▼
        ┌─────────────────────────────────────────┐
        │      Enhanced Results Aggregation       │
        │ - Quality Assessment & Confidence       │
        │ - Source Deduplication & Scoring        │
        └─────────────────────────────────────────┘
                             │ 2. Synthesis & LLM-as-Judge Evaluation
                             ▼
            ┌─────────────────────────────────┐
            │       LeadResearcherAgent       │
            │ - Completeness Assessment       │
            │ - Gap Identification            │
            │ - Iterative Refinement          │
            └─────────────────────────────────┘
                             │ 3. Generate Final Report
                             ▼
            ┌─────────────────────────────────┐
            │     Enhanced CitationAgent      │ (Post-Processor)
            │ - Smart Source Descriptions     │
            │ - Professional Citations        │
            │ - Quality Assurance             │
            └─────────────────────────────────┘
                             │ 4. Export & Delivery
                             ▼
          [Final Cited Report + Optional Export]
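The aggregation step in the diagram mentions source deduplication and scoring. One way this could work is sketched below. The `url` and `score` fields and the normalization rule are assumptions made for illustration; the package's actual logic may differ.

```python
from urllib.parse import urlsplit


def normalize_url(url: str) -> str:
    """Reduce a URL to lowercase host plus path (assumed normalization rule).

    The scheme, query string, fragment, and trailing slash are dropped.
    """
    parts = urlsplit(url.strip())
    return f"{parts.netloc.lower()}{parts.path.rstrip('/')}"


def deduplicate_sources(sources: list) -> list:
    """Keep the highest-scoring entry per normalized URL, best first."""
    best = {}
    for source in sources:
        key = normalize_url(source["url"])
        if key not in best or source["score"] > best[key]["score"]:
            best[key] = source
    return sorted(best.values(), key=lambda s: s["score"], reverse=True)
```

Dropping the query string merges pages that differ only by tracking parameters, but it would also merge pages that are genuinely distinguished by their query, so a stricter key may be preferable for some sites.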

🔄 Enhanced Workflow Process

- Strategic Planning: Advanced query analysis with explicit thinking processes and complexity assessment

- Parallel Research: Multiple ResearchSubagent workers with 3-loop search strategies execute concurrently

- LLM-as-Judge Evaluation: Sophisticated progress assessment identifies gaps and determines iteration needs

- Professional Citation: Enhanced processing with intelligent source descriptions and quality indicators

- Export & Delivery: Optional file export with customizable paths and comprehensive metadata
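The plan, research, and evaluate steps listed above form a loop that repeats until the judge reports no gaps or the iteration budget runs out. A minimal sketch of that control flow follows. The `plan`, `execute`, and `judge` callables are hypothetical placeholders for the LLM-backed steps, not functions exported by the package.

```python
from typing import Callable


def orchestrate(
    query: str,
    plan: Callable,
    execute: Callable,
    judge: Callable,
    max_iterations: int = 3,
) -> dict:
    """Plan, execute, and evaluate until the judge reports no gaps."""
    findings = []
    gaps = []
    iterations = 0
    for iterations in range(1, max_iterations + 1):
        tasks = plan(query, gaps)        # decompose, targeting known gaps
        findings.extend(execute(tasks))  # run the sub-tasks
        gaps = judge(query, findings)    # list of topics still missing
        if not gaps:
            break
    return {"findings": findings, "open_gaps": gaps, "iterations": iterations}


# Stub steps so the loop can be exercised without any LLM calls.
def demo_plan(query: str, gaps: list) -> list:
    return gaps or [f"overview of {query}"]


def demo_execute(tasks: list) -> list:
    return [f"notes on {task}" for task in tasks]


def demo_judge(query: str, findings: list) -> list:
    # Pretend the first pass always misses the "risks" angle.
    return [] if any("risks" in f for f in findings) else ["risks"]
```

With the stubs, `orchestrate("AI", demo_plan, demo_execute, demo_judge)` needs two iterations: the first pass leaves "risks" open, and the second pass closes it.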

📦 Installation

Prerequisites

- Python 3.10 or higher

- API keys for Claude (Anthropic) and Exa search

Install with uv (Recommended)

uv provides the fastest and most reliable package management experience:

    # Install uv if you haven't already
    curl -LsSf https://astral.sh/uv/install.sh | sh

    # Add the package to an existing uv project (uv add needs a pyproject.toml)
    uv add advancedresearch

    # Or create a new project with advancedresearch
    uv init my-research-project
    cd my-research-project
    uv add advancedresearch

Alternative Installation Methods

    # Using pip
    pip install advancedresearch

    # Using poetry
    poetry add advancedresearch

Development Installation

For development or to access the latest features:

    # Clone the repository
    git clone https://github.com/The-Swarm-Corporation/AdvancedResearch.git
    cd AdvancedResearch

    # Install with uv (recommended)
    uv sync

    # Or with poetry
    poetry install

    # Or with pip
    pip install -e .

Why uv?

We recommend uv for the best experience with AdvancedResearch:

- ⚡ 10-100x faster than pip for dependency resolution and installation

- 🔒 Reliable: Deterministic builds with automatic virtual environment management

- 🎯 Simple: Single tool for project management, dependency resolution, and Python version management

- 🔄 Compatible: Drop-in replacement for pip with better performance

Environment Setup

Create a .env file in your project root:

    # Claude API Key (Primary LLM)
    ANTHROPIC_API_KEY="your_anthropic_api_key_here"

    # Exa Search API Key
    EXA_API_KEY="your_exa_api_key_here"

    # Optional: OpenAI API Key (alternative LLM)
    OPENAI_API_KEY="your_openai_api_key_here"
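Before starting a long research run, it can help to confirm that the two required keys are visible to the process. The check below is a small sketch using only the standard library. `missing_keys` is a hypothetical helper, and it assumes the variables have already been loaded into the environment (whether the package reads `.env` automatically is not stated here).

```python
import os

# Names taken from the .env example; OPENAI_API_KEY is optional and not checked.
REQUIRED_KEYS = ("ANTHROPIC_API_KEY", "EXA_API_KEY")


def missing_keys(env=None) -> list:
    """Return the required variable names that are unset or blank."""
    env = os.environ if env is None else env
    return [key for key in REQUIRED_KEYS if not env.get(key, "").strip()]
```

A script could call `missing_keys()` at startup and exit with a clear message if the returned list is not empty.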

🚀 Quick Start

Complete uv Workflow

Get started with AdvancedResearch using uv for the optimal experience:

    # Install uv (if not already installed)
    curl -LsSf https://astral.sh/uv/install.sh | sh

    # Create a new project
    uv init my-research-project
    cd my-research-project

    # Add advancedresearch
    uv add advancedresearch

    # Create your research script
    cat > research.py << 'EOF'
    from advancedresearch import AdvancedResearch

    # Initialize the system
    research_system = AdvancedResearch()

    # Run research
    results = research_system.research(
        "What are the latest developments in quantum computing?",
        export=True,
        export_path="quantum_computing_report.md"
    )

    print(f"Research completed! Report: {results['research_metadata']['exported_to']}")
    EOF

    # Run your research
    uv run research.py

Python API Usage

    from advancedresearch import AdvancedResearch

    # Initialize the advanced research system
    research_system = AdvancedResearch(
        model_name="claude-3-7-sonnet-20250219",  # High-performance model
        max_iterations=3,
        max_workers=5,
        enable_parallel_execution=True,
        memory_optimization=True
    )

    # Define your research goal
    research_query = (
        "What are the benefits and risks of using AI in healthcare, "
        "and what are the primary ethical considerations?"
    )

    # Run research with export functionality
    results = research_system.research(
        research_query,
        export=True,
        export_path="healthcare_ai_report.md"
    )

    # Display comprehensive results
    print("\n" + "="*60)
    print(" ADVANCED RESEARCH SYSTEM RESULTS")
    print("="*60 + "\n")
    print(results["final_report"])

    # Access performance metrics
    print("\n📊 Performance Summary:")
    print(f" Strategy: {results['research_strategy']['strategy_type']}")
    print(f" Agents Spawned: {results['execution_metrics']['agents_spawned']}")
    print(f" Total Time: {results['execution_metrics']['total_time']:.2f}s")
    print(f" Sources Found: {results['source_analysis']['total_sources']}")
    print(f" Synthesis Quality: {results['execution_metrics']['synthesis_quality']:.2f}")
    print(f" Parallel Efficiency: {results['execution_metrics']['parallel_efficiency']:.1%}")

    # Export information
    if results['research_metadata']['exported_to']:
        print(f"📄 Report exported to: {results['research_metadata']['exported_to']}")

🔧 Advanced Usage

Custom Configuration

Easily customize research depth and execution strategy:

    from advancedresearch import AdvancedResearch

    # Quick overview research
    quick_system = AdvancedResearch(
        max_iterations=1,
        max_workers=3,
        enable_parallel_execution=True
    )

    # Deep comprehensive investigation
    comprehensive_system = AdvancedResearch(
        model_name="claude-3-7-sonnet-20250219",
        max_iterations=5,
        max_workers=8,
        enable_parallel_execution=True,
        memory_optimization=True
    )

    # Debug mode with sequential processing
    debug_system = AdvancedResearch(
        max_iterations=2,
        max_workers=3,
        enable_parallel_execution=False  # Sequential for debugging
    )
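If the same three configurations are reused across scripts, they can be kept in one place as named presets. The sketch below only builds keyword-argument dictionaries from the parameter values shown above. `PRESETS` and `settings_for` are hypothetical names and are not part of the package.

```python
# Values mirror the three configurations shown above.
PRESETS = {
    "quick": {
        "max_iterations": 1,
        "max_workers": 3,
        "enable_parallel_execution": True,
    },
    "deep": {
        "model_name": "claude-3-7-sonnet-20250219",
        "max_iterations": 5,
        "max_workers": 8,
        "enable_parallel_execution": True,
        "memory_optimization": True,
    },
    "debug": {
        "max_iterations": 2,
        "max_workers": 3,
        "enable_parallel_execution": False,
    },
}


def settings_for(preset: str, **overrides) -> dict:
    """Return a copy of the named preset with any overrides applied."""
    if preset not in PRESETS:
        raise ValueError(f"Unknown preset {preset!r}; choose from {sorted(PRESETS)}")
    return {**PRESETS[preset], **overrides}
```

The result can be unpacked into the constructor, for example `AdvancedResearch(**settings_for("debug", max_workers=1))`.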

Export Options

    # Assumes research_system was created as in the examples above
    query = "AI ethics in healthcare"

    # Basic export with auto-generated filename
    results = research_system.research(query, export=True)

    # Custom export path
    results = research_system.research(
        query,
        export=True,
        export_path="reports/ai_analysis_2024.md"
    )

    # Standalone export method
    content = results["final_report"]
    export_path = research_system.export_report(
        content,
        "analysis/custom_report.md"
    )

    # Batch processing with exports
    queries = [
        "AI ethics in healthcare",
        "Blockchain in finance",
        "Quantum computing applications"
    ]

    for query in queries:
        results = research_system.research(query, export=True)
        print(f"Exported: {results['research_metadata']['exported_to']}")
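When `export_path` is omitted, the filename is generated automatically. The exact naming scheme is not documented here, so the sketch below shows only one plausible approach: a slug of the query plus a timestamp. `default_export_path` and its format are assumptions, not the package's behavior.

```python
import re
from datetime import datetime
from pathlib import Path
from typing import Optional


def default_export_path(
    query: str, directory: str = ".", now: Optional[datetime] = None
) -> Path:
    """Build '<directory>/<slug>_<YYYYMMDD_HHMMSS>.md' from the query text."""
    now = now or datetime.now()
    # Collapse every run of non-alphanumeric characters into one underscore.
    slug = re.sub(r"[^a-z0-9]+", "_", query.lower()).strip("_")[:40] or "report"
    return Path(directory) / f"{slug}_{now:%Y%m%d_%H%M%S}.md"
```

Passing `now` explicitly keeps the function deterministic in tests. In normal use it defaults to the current local time.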

Comprehensive Results Analysis

Access detailed performance metrics and research data:

    results = research_system.research(research_query, export=True)

    # Research strategy analysis
    strategy = results["research_strategy"]
    print(f"Strategy Type: {strategy['strategy_type']}")
    print(f"Complexity Score: {strategy['complexity_score']}/10")
    print(f"Tasks Executed: {strategy['tasks_executed']}")

    # Execution performance metrics
    metrics = results["execution_metrics"]
    print(f"Total Execution Time: {metrics['total_time']:.2f}s")
    print(f"Agents Spawned: {metrics['agents_spawned']}")
    print(f"Parallel Efficiency: {metrics['parallel_efficiency']:.1%}")
    print(f"Synthesis Quality: {metrics['synthesis_quality']:.2f}")

    # Source quality analysis
    sources = results["source_analysis"]
    print(f"Total Sources: {sources['total_sources']}")
    print(f"Average Quality Score: {sources['average_quality']:.2f}")
    print(f"Citations Added: {sources['citation_count']}")

    # Individual subagent performance
    for result in results["subagent_results"]:
        print(f"Agent {result['agent_id']}: {result['confidence']:.2f} confidence")
        print(f" Task: {result['task'][:50]}...")
        print(f" Iteration: {result.get('iteration', 'N/A')}")
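The per-subagent values can also be condensed into a short summary. The helper below is a sketch that relies only on the `subagent_results` and `confidence` keys shown above. `summarize_subagents` is a hypothetical name, and the 0.8 threshold is an arbitrary default.

```python
from statistics import mean


def summarize_subagents(results: dict, threshold: float = 0.8) -> dict:
    """Summarize the confidence values under results['subagent_results']."""
    confidences = [r["confidence"] for r in results.get("subagent_results", [])]
    if not confidences:
        return {"count": 0, "mean_confidence": None, "high_confidence": 0}
    return {
        "count": len(confidences),
        "mean_confidence": round(mean(confidences), 3),
        # Number of subagents at or above the threshold.
        "high_confidence": sum(c >= threshold for c in confidences),
    }
```

It works on any dictionary with the same shape, so it can be tried on hand-written sample data before running a real query.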

🛠️ Real-World Examples

Healthcare AI Research

    from advancedresearch import AdvancedResearch

    research_system = AdvancedResearch(
        model_name="claude-3-7-sonnet-20250219",
        max_iterations=3,
        max_workers=5
    )

    results = research_system.research(
        "What are the current regulatory frameworks for AI in medical diagnostics?",
        export=True,
        export_path="ai_medical_regulations.md"
    )

    print(f"Research completed in {results['execution_metrics']['total_time']:.1f}s")
    print(f"Quality score: {results['execution_metrics']['synthesis_quality']:.2f}")

Financial Technology Analysis

    results = research_system.research(
        "How is blockchain technology being integrated into traditional banking?",
        export=True
    )

    # Analyze findings by confidence level
    high_confidence = [r for r in results["subagent_results"] if r['confidence'] >= 0.8]
    print(f"High-confidence findings: {len(high_confidence)}")

Comparative Technology Assessment

    # Multiple related queries for comprehensive analysis
    topics = [
        "Benefits of quantum computing in cryptography",
        "Risks of quantum computing for current encryption",
        "Timeline for quantum computing practical deployment"
    ]

    all_results = []
    for topic in topics:
        result = research_system.research(topic, export=True)
        all_results.append(result)
        print(f"Completed: {topic}")
        print(f"Sources: {result['source_analysis']['total_sources']}")
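In a batch like this, one failing query would otherwise abort the remaining topics. The sketch below wraps the loop so that failures are collected instead of raised. `run_batch` is a hypothetical helper, and `research` is any callable that takes a topic and returns a results dictionary.

```python
from typing import Callable


def run_batch(topics: list, research: Callable) -> dict:
    """Run each topic in turn; collect failures instead of aborting the batch."""
    completed = {}
    failed = {}
    for topic in topics:
        try:
            completed[topic] = research(topic)
        except Exception as exc:
            failed[topic] = f"{type(exc).__name__}: {exc}"
    # Sum the source counts of the runs that finished.
    total_sources = sum(
        r.get("source_analysis", {}).get("total_sources", 0)
        for r in completed.values()
    )
    return {"completed": completed, "failed": failed, "total_sources": total_sources}
```

With the system from the examples above, it could be called as `run_batch(topics, lambda t: research_system.research(t, export=True))`.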

🤝 Contributing

This implementation is part of the open-source swarms ecosystem. We welcome contributions!

- Fork the repository

- Create a feature branch (git checkout -b feature/amazing-research-feature)

- Commit your changes (git commit -m 'Add amazing feature')

- Push to the branch (git push origin feature/amazing-research-feature)

- Open a Pull Request

Development Setup with uv

    # Clone and set up the development environment
    git clone https://github.com/The-Swarm-Corporation/AdvancedResearch.git
    cd AdvancedResearch

    # Install development dependencies with uv (recommended)
    uv sync --dev

    # Run tests
    uv run pytest

    # Run linting
    uv run ruff check .
    uv run black --check .

    # Run type checking
    uv run mypy advanced_research/

    # Format code
    uv run black .
    uv run ruff check --fix .

📄 License

This project is licensed under the MIT License. See the LICENSE file for details.

📚 Citation

If you use this work in your research, please cite both the original paper and this implementation:

    @misc{anthropic2025researchsystem,
      title={How we built our multi-agent research system},
      author={Anthropic},
      year={2025},
      month={June},
      url={https://www.anthropic.com/engineering/built-multi-agent-research-system}
    }

    @software{advancedresearch2024,
      title={AdvancedResearch: Enhanced Multi-Agent Research System},
      author={The Swarm Corporation},
      year={2024},
      url={https://github.com/The-Swarm-Corporation/AdvancedResearch},
      note={Implementation based on Anthropic's multi-agent research system paper}
    }

    @software{swarms_framework,
      title={Swarms: An Open-Source Multi-Agent Framework},
      author={Kye Gomez},
      year={2023},
      url={https://github.com/kyegomez/swarms}
    }

🔗 Related Work

- Original Paper - "How we built our multi-agent research system" by Anthropic

- Swarms Framework - The underlying multi-agent AI orchestration framework

- Full Documentation - Comprehensive API reference and advanced usage guide

📞 Support

- Issues: GitHub Issues

- Discussions: GitHub Discussions

- Discord: Join our community

Built with Swarms framework for production-grade agentic applications

