Persistent memory for AI coding agents. Correct once, remembered forever. Every design decision validated by experiment before shipping to production.


agentmemory

Correct your AI agent once. It remembers forever.

You correct your AI agent. It says "got it." Next session, it makes the same mistake. You correct it again. And again. And again.

agentmemory makes the next correction your last. It captures what matters from your conversations (corrections, decisions, preferences), stores them locally, and injects them into every future session. Silently. Automatically. You stop repeating yourself.

pip install agentmemory-rrs

agentmemory setup

Restart Claude Code. In any project: /mem:onboard .

That's it. Three commands. Your agent now remembers permanently.

What It Actually Does

Here's a real example. You type "push the release to github". Before the agent sees your message, agentmemory's hook fires and runs a 7-layer search (layers 0 through 6) in about 50 ms:

Layer 0: Structural analysis -> task type: deployment, target: github

Layer 1: FTS5 full-text search -> 4 hits (publish script, CI checks, remote config)

Layer 2: Entity expansion -> "github" links to 3 beliefs about repo setup

Layer 3: Action-context -> "push to github" triggers activation condition

Layer 4: Supersession check -> old remote URL excluded (superseded)

Layer 5: Recent observations -> correction from 2 days ago about publish script

Layer 6: Cross-project scopes -> checks shared infra beliefs
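The layers above run as a sequence of passes that each add or filter candidates. Below is a toy sketch of three of them (keyword hits, entity expansion, supersession filtering). It is not agentmemory's code: the `Belief` class, `layered_search`, and the example beliefs are hypothetical, and a plain token-set match stands in for SQLite FTS5.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

STOPWORDS = {"a", "an", "the", "to", "of", "and"}


@dataclass
class Belief:
    id: str
    text: str
    entities: set[str] = field(default_factory=set)  # e.g. {"github"}
    superseded_by: Optional[str] = None  # id of the belief that replaced this one


def tokenize(text: str) -> set[str]:
    """Lowercased word tokens minus stopwords; a toy stand-in for FTS5."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def layered_search(prompt: str, beliefs: list[Belief]) -> list[Belief]:
    terms = tokenize(prompt)
    hits: dict[str, Belief] = {}  # insertion-ordered: earlier layers rank first

    # Keyword layer (cf. Layer 1): beliefs sharing a term with the prompt.
    for b in beliefs:
        if terms & tokenize(b.text):
            hits[b.id] = b

    # Entity-expansion layer (cf. Layer 2): beliefs linked to an entity the prompt names.
    for b in beliefs:
        if terms & b.entities:
            hits.setdefault(b.id, b)

    # Supersession layer (cf. Layer 4): drop beliefs that a newer belief replaced.
    return [b for b in hits.values() if b.superseded_by is None]


# Illustrative data modeled on the publish scenario above.
beliefs = [
    Belief("b1", "Use scripts/publish-to-github.sh to release", {"github"}),
    Belief("b2", "Remote 'github' points to the old account URL", {"github"}, superseded_by="b3"),
    Belief("b3", "Repo lives under robot-rocket-science", {"github"}),
    Belief("b4", "Prefer terse commit messages"),
]
print([b.id for b in layered_search("push the release to github", beliefs)])  # → ['b1', 'b3']
```

The stale remote belief (`b2`) matches the keyword layer but is removed by the supersession pass, which mirrors what Layer 4 does in the trace above.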

The agent receives this context injection alongside your message:

== OPERATIONAL STATE ==

[!] GitHub account renamed (changed 2d ago)

== STANDING CONSTRAINTS ==

- NEVER use git push github directly. Use scripts/publish-to-github.sh

- Pre-push hook scans for PII; direct push bypasses safety checks

- To release with tag: bash scripts/publish-to-github.sh --tag vX.Y.Z

== BACKGROUND ==

- Remote 'github' points to git@github.com:robot-rocket-science/agentmemory.git

Without agentmemory, the agent takes "push to github" literally and runs git push github main, bypassing every safety check. With it, a one-line request gets the full procedure: publish script, PII guards, pre-push hook. That procedure was never taught in a single session; it accumulated from corrections over weeks. For more, see Why this matters: from low-context to high-context.
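The injected block follows a fixed section order: operational state, standing constraints, background. A minimal sketch of a renderer for that layout, assuming retrieved beliefs have already been grouped by section; `render_injection` and `SECTIONS` are hypothetical names, not agentmemory's API.

```python
# Section order and line prefixes copied from the example injection above.
SECTIONS = [
    ("OPERATIONAL STATE", "[!] "),
    ("STANDING CONSTRAINTS", "- "),
    ("BACKGROUND", "- "),
]


def render_injection(by_section: dict[str, list[str]]) -> str:
    """Render beliefs grouped by section into a plain-text context block."""
    out: list[str] = []
    for name, prefix in SECTIONS:
        items = by_section.get(name, [])
        if not items:
            continue  # omit empty sections entirely
        out.append(f"== {name} ==")
        out.extend(prefix + item for item in items)
    return "\n".join(out)


print(render_injection({
    "STANDING CONSTRAINTS": ["NEVER use git push github directly. Use scripts/publish-to-github.sh"],
    "OPERATIONAL STATE": ["GitHub account renamed (changed 2d ago)"],
}))
```

Putting operational state first means a recent change (like the renamed account) is the first thing the agent reads, ahead of long-standing rules.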

What It Remembers

| You say | It stores |
|---|---|
| "Never commit .env files" | Permanent rule. Injected every session. |
| "The endpoint moved to /v2" | Correction. Replaces the old belief. |
| "I prefer terse commits" | Preference. Shapes behavior silently. |
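The table maps kinds of statements to kinds of beliefs. As a rough illustration only, here is a cue-phrase classifier for those three kinds. The patterns and the `classify` function are hypothetical; this README does not describe how agentmemory actually extracts beliefs, and a real extractor would need to be far more robust.

```python
import re
from typing import Optional

# Hypothetical cue phrases for each belief kind (checked in this order).
RULE_CUES = re.compile(r"\b(never|always|must|do not|don't)\b", re.IGNORECASE)
CORRECTION_CUES = re.compile(r"\b(moved to|actually|no longer|instead|renamed)\b", re.IGNORECASE)
PREFERENCE_CUES = re.compile(r"\b(i prefer|i like|i'd rather)\b", re.IGNORECASE)


def classify(utterance: str) -> Optional[str]:
    """Return 'rule', 'correction', 'preference', or None if nothing matches."""
    if RULE_CUES.search(utterance):
        return "rule"
    if CORRECTION_CUES.search(utterance):
        return "correction"
    if PREFERENCE_CUES.search(utterance):
        return "preference"
    return None


for said in ["Never commit .env files", "The endpoint moved to /v2", "I prefer terse commits"]:
    print(said, "->", classify(said))
```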

Beliefs accumulate over time. Each one carries a Bayesian confidence score that strengthens when the belief proves useful and fades when it doesn't. After a few weeks:

/mem:stats

Beliefs: 312 (18 locked, 294 learned)

Sessions: 47

Corrections surfaced this session: 3

Last locked: "never force-push to main" (4 weeks ago)
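The README describes a Bayesian confidence score that rises when a belief helps and falls when it doesn't, with locked beliefs treated as permanent. One standard way to model that is a Beta-Bernoulli counter, sketched below. The Beta model, the uniform prior, and the rule that locked beliefs ignore feedback are assumptions for illustration, not agentmemory's documented scoring.

```python
from dataclasses import dataclass


@dataclass
class Confidence:
    alpha: float = 1.0  # prior pseudo-count of "helped" (uniform Beta(1, 1) prior)
    beta: float = 1.0   # prior pseudo-count of "did not help"
    locked: bool = False

    @property
    def mean(self) -> float:
        """Posterior mean of the Beta(alpha, beta) distribution."""
        return self.alpha / (self.alpha + self.beta)

    def observe(self, helped: bool) -> None:
        if self.locked:
            return  # assumption: locked rules never decay
        if helped:
            self.alpha += 1
        else:
            self.beta += 1


c = Confidence()
for outcome in (True, True, False):
    c.observe(outcome)
print(round(c.mean, 2))  # → 0.6
```

Because each update only adds a count, a belief with a long track record moves slowly, while a new belief responds quickly to its first few outcomes.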

The Problem Is Bigger Than You Think

Most power users end up building the same workaround: a growing collection of markdown files. STATE.md for current position. ROADMAP.md for what's next. DECISIONS.md for why you stopped doing it that way. Cross-references in your config file pointing to runbooks, registries, and troubleshooting guides. Some projects have 7+ mandatory external reads the agent is supposed to follow.

Every cross-reference is a bet. You write "see docs/deploy-runbook.md for deployment steps" and hope the agent actually reads it, finds the right section, and follows it. When it doesn't (and it often doesn't), you get silent failures: re-suggested dead approaches, guessed credentials, skipped safety checks.

agentmemory replaces that entire chain with a mechanism. Relevant context is found through a 7-layer search and injected as part of your prompt before the agent sees it. The agent doesn't have to remember to check a file. It doesn't have to follow a cross-reference. The knowledge is already there, selected by relevance, every time.
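Per-prompt injection is the key difference from a config file read once per session. Here is a sketch of a prompt hook, assuming Claude Code's `UserPromptSubmit` hook, which (per Claude Code's hooks documentation) passes the prompt as JSON on stdin and adds the hook's stdout to the context on exit code 0. We don't know whether agentmemory's hook is structured this way. `retrieve` is a hypothetical stand-in for the real search.

```python
import json
import sys


def retrieve(prompt: str) -> list[str]:
    """Hypothetical stand-in for agentmemory's retrieval pipeline."""
    if "github" in prompt.lower():
        return ["- NEVER use git push github directly. Use scripts/publish-to-github.sh"]
    return []


def build_context(payload: dict) -> str:
    """Turn the hook's JSON payload into text to inject (empty if nothing matches)."""
    beliefs = retrieve(payload.get("prompt", ""))
    if not beliefs:
        return ""
    return "== STANDING CONSTRAINTS ==\n" + "\n".join(beliefs)


def main() -> None:
    payload = json.load(sys.stdin)  # hook input arrives as a JSON object
    context = build_context(payload)
    if context:
        print(context)  # stdout is added as context for this prompt
```

A hook script would call `main()`; because it runs on every prompt, the retrieved rules arrive with each message instead of relying on the agent to re-read a file.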

| Manual approach | What breaks | agentmemory |
|---|---|---|
| Rules in config files | Agent reads them, doesn't follow them | Injected per-prompt, not per-session |
| Cross-references to docs | Agent skips the reference or reads the wrong section | Relevant content extracted and injected directly |
| State files updated by hand | One missed update and the chain is broken | State tracked automatically with 100% accuracy |
| Workaround frameworks | Add structure but still rely on the agent to voluntarily read files in the right order | No voluntary reads. Mechanical injection. |

Your Data Stays Yours

- 100% local. SQLite on your machine. No network calls in the retrieval pipeline.

- No cloud, no accounts. No server, no signup, no telemetry by default.

- No GPU or vector database. Runs on any machine that runs Python.

What Happens After Install

agentmemory works in the background:

- It listens. Picks up decisions, corrections, and preferences from your conversations.

- It retrieves. Injects relevant beliefs before the agent responds to each prompt.

- It learns. Beliefs that help get stronger; beliefs that don't, fade.

To explicitly lock a permanent rule:

/mem:lock "never commit .env files"

For deeper exploration:

| Command | What it does |
|---|---|
| `/mem:search <query>` | Find specific memories |
| `/mem:lock <rule>` | Create a permanent rule |
| `/mem:wonder <topic>` | Deep parallel research across the memory graph |
| `/mem:reason <question>` | Test a hypothesis against stored evidence |
| `/mem:stats` | See what's in memory |

Full command reference: docs/COMMANDS.md.

Emergent Behavior

These weren't planned features. They emerged from persistent memory + reasoning tools.

The agent audited its own memory and found a bug

user Clean up the config file, I think agentmemory handles this now.

agent [searches memory, compares against config]

Found 2 redundant items. But also: this locked belief about

your server setup is stale. It references infrastructure

you removed. Every session is getting incorrect instructions.

user Fix it.

agent [corrects the belief, supersedes the old one]

Done. Want to lock the updated version?

The system caught its own error because it had enough accumulated context to notice the inconsistency. More examples, including a full session transcript showing wonder + reason in action, are in the Case Studies documentation.

Under the Hood

Conversations are broken into individual beliefs stored in a local SQLite database. Retrieval uses full-text search, graph traversal, and vocabulary bridging. No extra services to install, nothing to host, nothing that phones home.
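The full-text layer can be approximated with SQLite's built-in FTS5 extension, using only the Python standard library. This is a minimal sketch; the single-column `beliefs` table and the helper names are hypothetical, not agentmemory's schema. It requires an SQLite build with FTS5 enabled, which most Python distributions include.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    # FTS5 virtual table: every column is full-text indexed.
    conn.execute("CREATE VIRTUAL TABLE IF NOT EXISTS beliefs USING fts5(text)")
    return conn


def add_belief(conn: sqlite3.Connection, text: str) -> None:
    conn.execute("INSERT INTO beliefs(text) VALUES (?)", (text,))
    conn.commit()


def search(conn: sqlite3.Connection, query: str, limit: int = 5) -> list[str]:
    # `rank` is FTS5's built-in BM25 score; lower is more relevant, so sort ascending.
    rows = conn.execute(
        "SELECT text FROM beliefs WHERE beliefs MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    )
    return [row[0] for row in rows]


conn = open_store()
add_belief(conn, "Never commit .env files")
add_belief(conn, "Use scripts/publish-to-github.sh to release")
print(search(conn, "github"))  # → ['Use scripts/publish-to-github.sh to release']
```

FTS5's default tokenizer splits `publish-to-github.sh` on punctuation, so a query for `github` finds the belief without any embeddings or vector index.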

98 experiments drove every design decision. 954 tests. 5 academic benchmarks. Architecture details: docs/ARCHITECTURE.md.

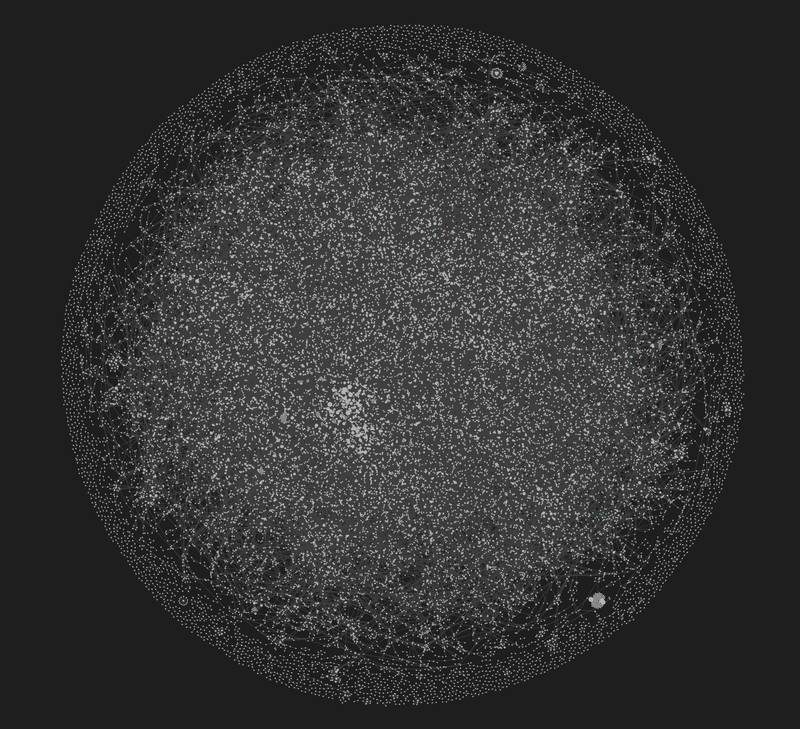

The knowledge graph after a few weeks of daily use. Each dot is a belief. Lines are relationships (supports, contradicts, supersedes).
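Supersedes edges form chains: a belief can be replaced, and its replacement replaced again. Resolving the current version means following those edges to the end. A minimal sketch, assuming a hypothetical mapping from each superseded belief id to the id that replaced it, with a guard against accidental cycles:

```python
def current_version(belief_id: str, superseded_by: dict[str, str]) -> str:
    """Follow 'superseded by' edges until reaching a belief nothing has replaced."""
    seen = {belief_id}
    while belief_id in superseded_by:
        belief_id = superseded_by[belief_id]
        if belief_id in seen:
            raise ValueError(f"supersession cycle at {belief_id!r}")
        seen.add(belief_id)
    return belief_id


edges = {"remote-v1": "remote-v2", "remote-v2": "remote-v3"}
print(current_version("remote-v1", edges))  # → remote-v3
```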

Compatibility

Currently supports Claude Code via MCP (Model Context Protocol). The architecture is agent-agnostic. Any MCP-compatible client can use agentmemory as a memory backend.

Documentation

- Getting Started: Installation | Workflow

- Reference: Commands | Obsidian Integration | Privacy

- Technical: Architecture | Benchmarks | Case Studies | Philosophy

Development

git clone https://github.com/robot-rocket-science/agentmemory.git

cd agentmemory

uv sync --all-groups

uv run pytest tests/ -x -q

Contributions welcome. See CONTRIBUTING.md.

Citation

@software{agentmemory2026,
  author  = {robotrocketscience},
  title   = {agentmemory: Persistent Memory for AI Coding Agents},
  year    = {2026},
  url     = {https://github.com/robot-rocket-science/agentmemory},
  license = {MIT}
}

License

MIT

