Generated from aind-library-template


aind-dynamic-foraging-models

AIND library for generative (RL) and descriptive (logistic regression) models of dynamic foraging tasks.

User documentation is available on readthedocs.

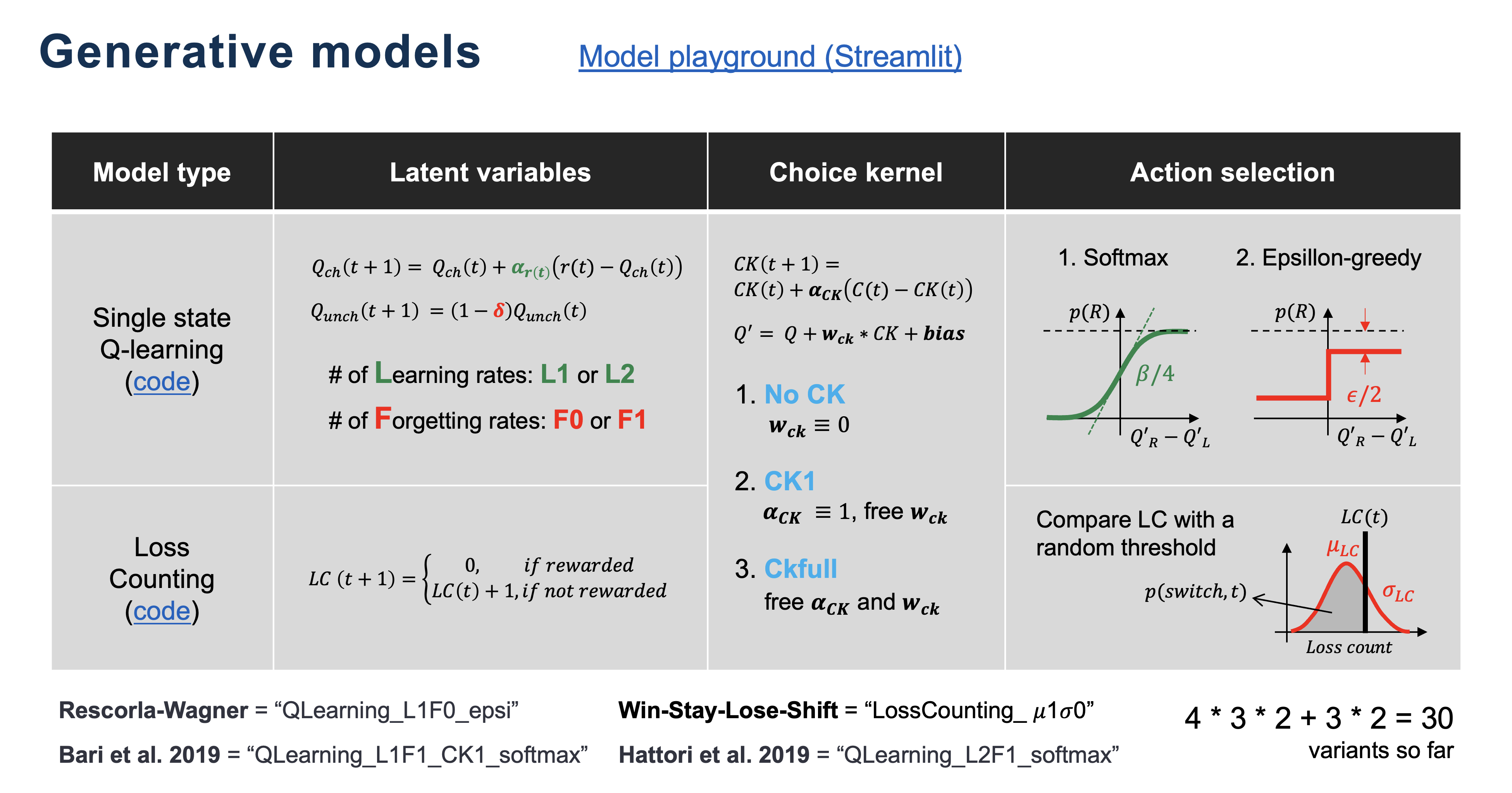

Reinforcement Learning (RL) models with Maximum Likelihood Estimation (MLE) fitting

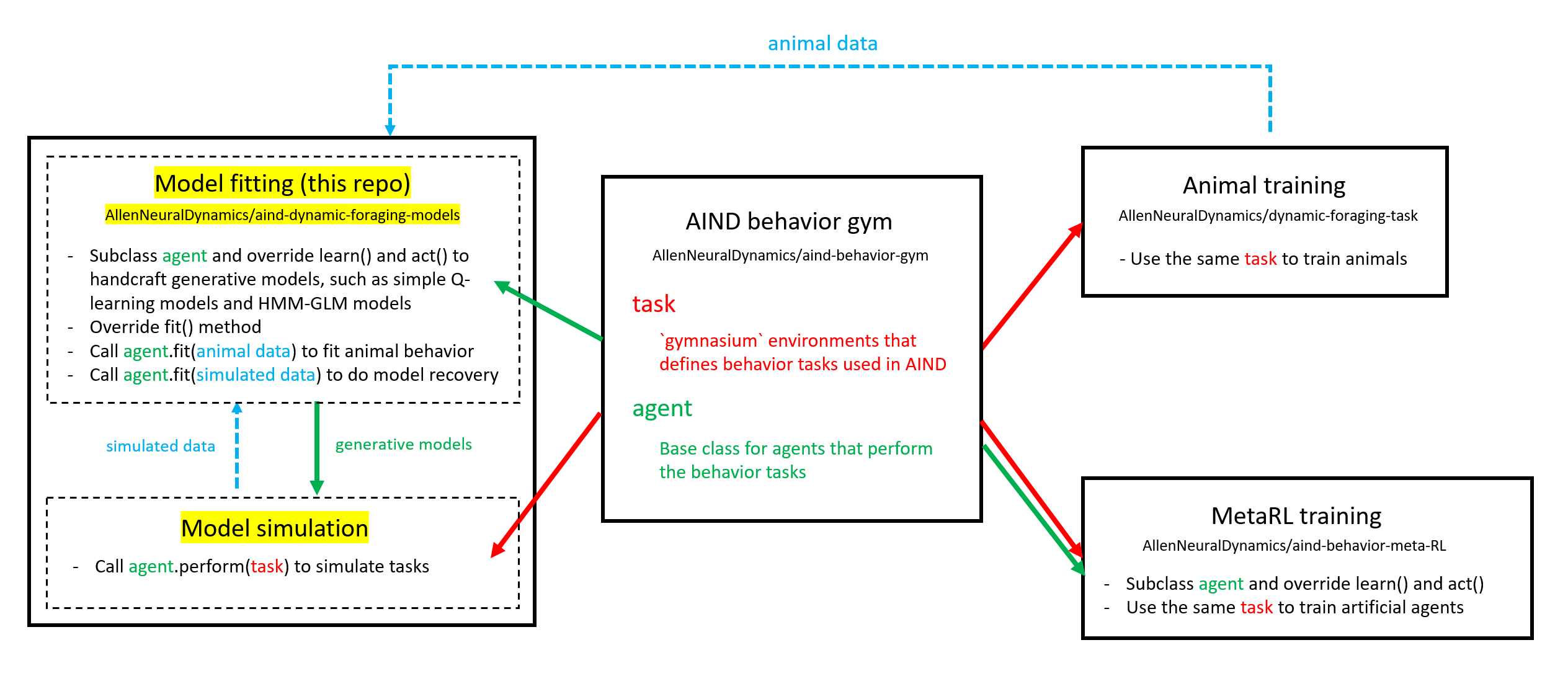

Overview

RL agents that can perform any dynamic foraging task in aind-behavior-gym and can fit behavior using MLE.

Code structure

- To add more generative models, please subclass DynamicForagingAgentMLEBase.

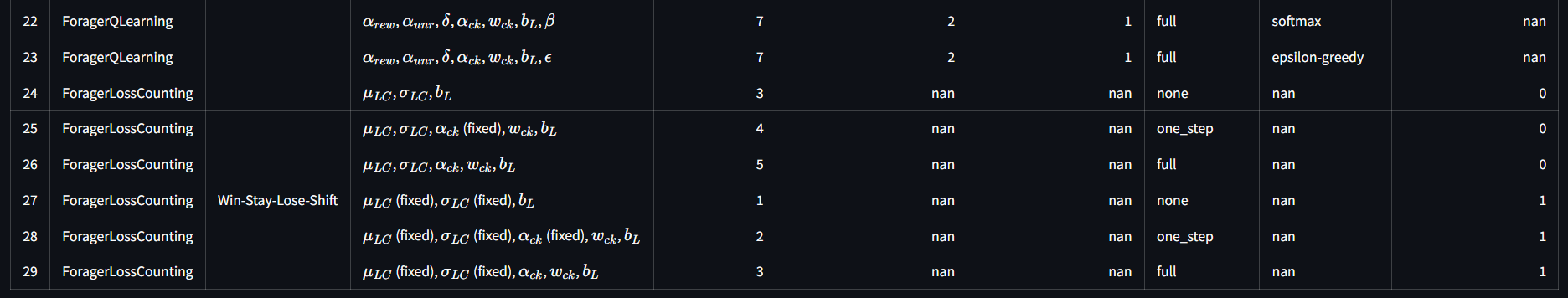

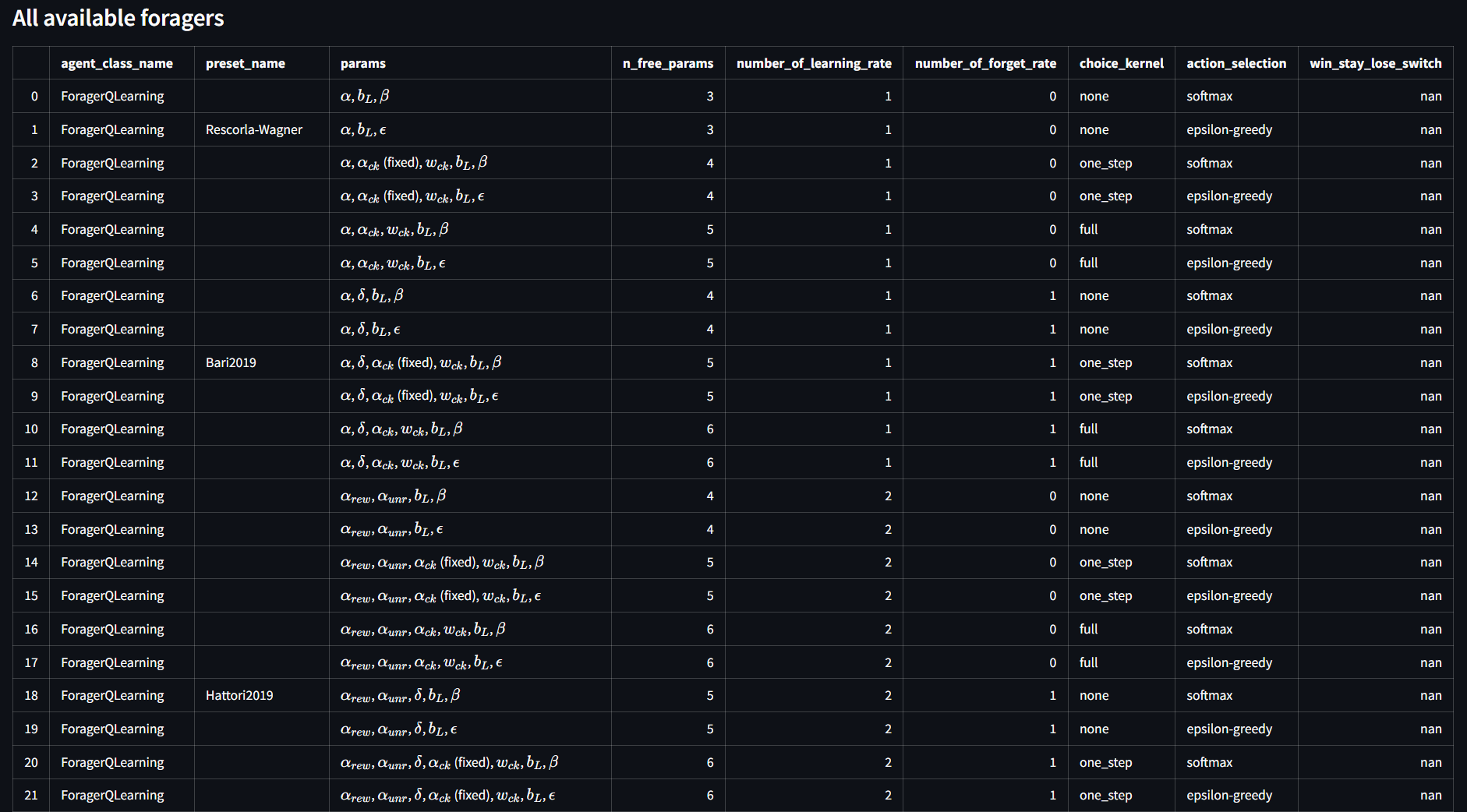

Implemented foragers

- ForagerQLearning: Simple Q-learning agents that incrementally update Q-values.
  - Available agent_kwargs:
    - number_of_learning_rate: Literal[1, 2] = 2
    - number_of_forget_rate: Literal[0, 1] = 1
    - choice_kernel: Literal["none", "one_step", "full"] = "none"
    - action_selection: Literal["softmax", "epsilon-greedy"] = "softmax"
- ForagerLossCounting: Loss counting agents with probabilistic loss_count_threshold.
  - Available agent_kwargs:
    - win_stay_lose_switch: Literal[False, True] = False
    - choice_kernel: Literal["none", "one_step", "full"] = "none"
- Action selections (readthedocs)
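To make the agent_kwargs above more concrete, here is a minimal, standalone sketch of a Q-learning forager with two learning rates (rewarded vs. unrewarded trials), one forget rate, and either softmax or epsilon-greedy action selection. It is not the package's ForagerQLearning implementation: the function names, the inverse temperature beta, and the rule that the unchosen Q-value decays toward 0 are assumptions made for illustration.

```python
import numpy as np


def softmax(q: np.ndarray, beta: float) -> np.ndarray:
    """Choice probabilities from Q-values; beta is an (assumed) inverse temperature."""
    z = beta * (q - q.max())  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def epsilon_greedy(q: np.ndarray, epsilon: float) -> np.ndarray:
    """Greedy choice with prob. 1 - epsilon (ties split evenly), uniform otherwise."""
    p = np.full(len(q), epsilon / len(q))
    best = np.flatnonzero(q == q.max())
    p[best] += (1 - epsilon) / len(best)
    return p


def q_update(q, choice, reward, lr_rew, lr_unrew, forget_rate):
    """One trial of a hypothetical 2-learning-rate, 1-forget-rate Q-learning update.

    choice: 0 = L, 1 = R; reward: 0 or 1.
    """
    q = q.astype(float)  # copy, so the caller's array is not modified
    lr = lr_rew if reward else lr_unrew  # separate rates for rewarded/unrewarded trials
    q[choice] += lr * (reward - q[choice])  # reward prediction error on the chosen side
    q[1 - choice] *= 1 - forget_rate  # unchosen side decays toward 0 (assumed rule)
    return q


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    q = np.zeros(2)
    p_reward = [0.2, 0.8]  # hypothetical reward probabilities for L and R
    for _ in range(100):
        choice = rng.choice(2, p=softmax(q, beta=5.0))
        reward = int(rng.random() < p_reward[choice])
        q = q_update(q, choice, reward, lr_rew=0.5, lr_unrew=0.1, forget_rate=0.05)
    print(q)
```

Setting lr_rew equal to lr_unrew corresponds in spirit to number_of_learning_rate=1, and forget_rate=0 to number_of_forget_rate=0.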

Here is the full list of available foragers:

Usage

- Jupyter notebook

- See also these unittest functions.

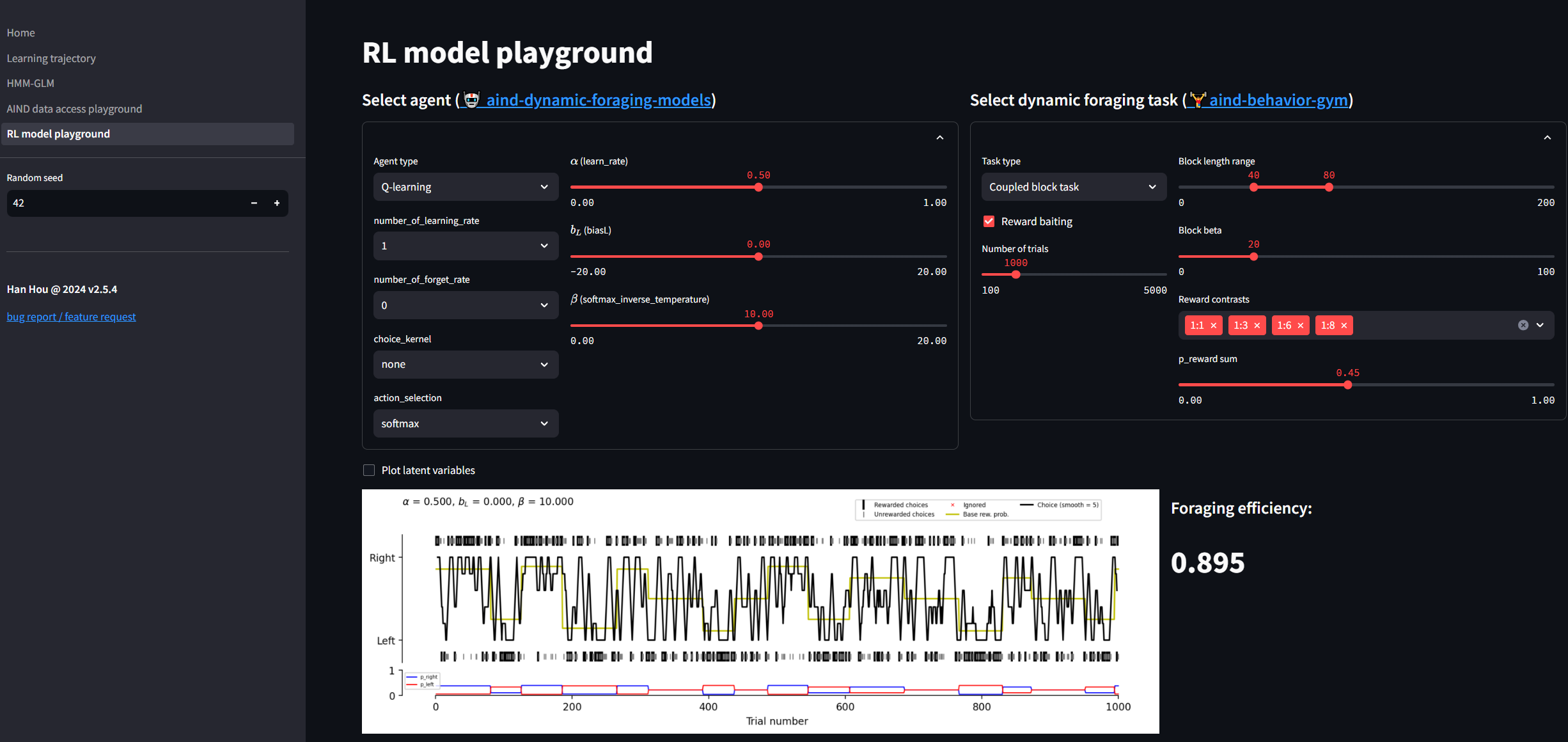

RL model playground

Play with the generative models here.

Logistic regression

Choosing logistic regression models

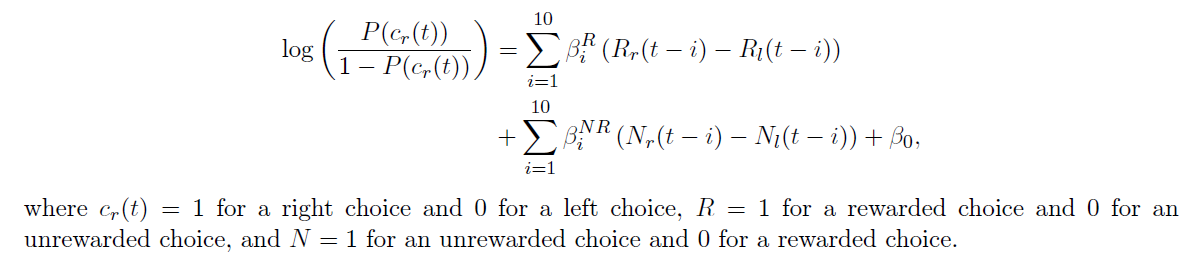

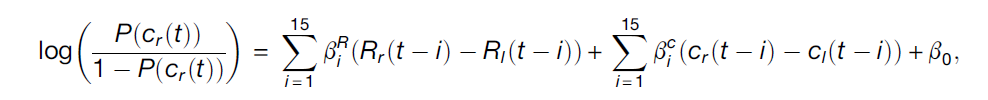

Su 2022

$$ logit(p(c_r)) \sim RewardedChoice+UnrewardedChoice $$

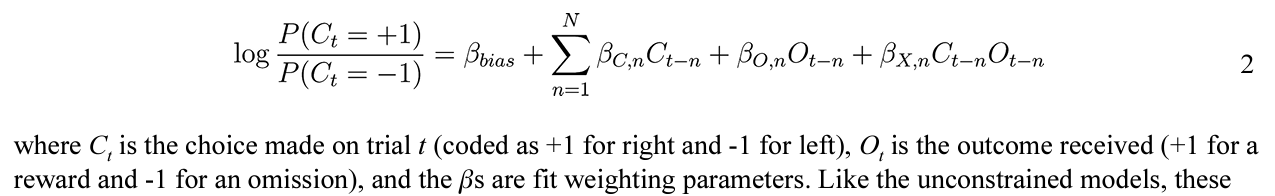

Bari 2019

$$ logit(p(c_r)) \sim RewardedChoice+Choice $$

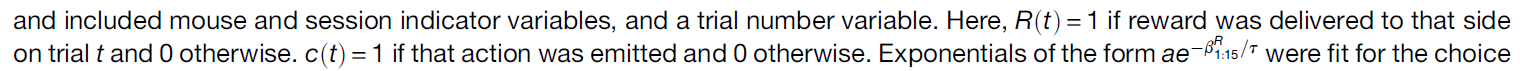

Hattori 2019

$$ logit(p(c_r)) \sim RewardedChoice+UnrewardedChoice+Choice $$

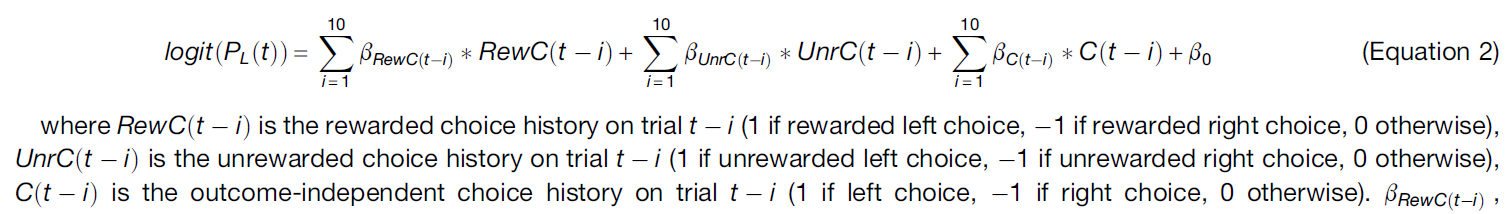

Miller 2021

$$ logit(p(c_r)) \sim Choice + Reward+ Choice*Reward $$

Encodings

- Ignored trials are removed

| choice | reward | Choice | Reward | RewardedChoice | UnrewardedChoice | Choice * Reward |
|---|---|---|---|---|---|---|
| L | yes | -1 | 1 | -1 | 0 | -1 |
| L | no | -1 | -1 | 0 | -1 | 1 |
| R | yes | 1 | 1 | 1 | 0 | 1 |
| L | yes | -1 | 1 | -1 | 0 | -1 |
| R | no | 1 | -1 | 0 | 1 | -1 |
| R | yes | 1 | 1 | 1 | 0 | 1 |
| L | no | -1 | -1 | 0 | -1 | 1 |
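The encoding in the table can be reproduced with a few NumPy operations. This is a hypothetical helper (encode is not part of the package API), assuming choices are given as "L"/"R" strings, rewards as booleans, and ignored trials have already been dropped.

```python
import numpy as np


def encode(choices: list, rewards: list) -> dict:
    """Encode L/R choices and yes/no rewards as in the table (ignored trials already removed)."""
    choice = np.where(np.array(choices) == "R", 1, -1)  # R = 1, L = -1
    reward = np.where(np.array(rewards, dtype=bool), 1, -1)  # yes = 1, no = -1
    rewarded_choice = np.where(reward == 1, choice, 0)  # choice on rewarded trials, else 0
    unrewarded_choice = np.where(reward == -1, choice, 0)  # choice on unrewarded trials, else 0
    return {
        "Choice": choice,
        "Reward": reward,
        "RewardedChoice": rewarded_choice,
        "UnrewardedChoice": unrewarded_choice,
        "Choice * Reward": choice * reward,
    }


if __name__ == "__main__":
    table = encode(["L", "L", "R"], [True, False, True])
    for name, column in table.items():
        print(name, column.tolist())
```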

Some observations:

- $RewardedChoice$ and $UnrewardedChoice$ are orthogonal

- $Choice = RewardedChoice + UnrewardedChoice$

- $Choice * Reward = RewardedChoice - UnrewardedChoice$
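These three observations can be checked numerically. The sketch below uses a hypothetical helper, check_identities, on ±1-coded arrays (R = 1, L = -1; reward = 1, no reward = -1), matching the encoding table.

```python
import numpy as np


def check_identities(choice: np.ndarray, reward: np.ndarray) -> bool:
    """Return True if all three observations hold for the given trials."""
    rewarded_choice = np.where(reward == 1, choice, 0)
    unrewarded_choice = np.where(reward == -1, choice, 0)
    # On every trial one of the two is 0, so their dot product is 0 (orthogonal).
    orthogonal = rewarded_choice @ unrewarded_choice == 0
    choice_ok = np.array_equal(choice, rewarded_choice + unrewarded_choice)
    interaction_ok = np.array_equal(choice * reward, rewarded_choice - unrewarded_choice)
    return bool(orthogonal and choice_ok and interaction_ok)


if __name__ == "__main__":
    rng = np.random.default_rng(42)
    choice = rng.choice([-1, 1], size=1000)
    reward = rng.choice([-1, 1], size=1000)
    print(check_identities(choice, reward))
```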

Comparison

| | Su 2022 | Bari 2019 | Hattori 2019 | Miller 2021 |
|---|---|---|---|---|
| Equivalent to | RewC + UnrC | RewC + (RewC + UnrC) | RewC + UnrC + (RewC + UnrC) | (RewC + UnrC) + (RewC - UnrC) + Rew |
| Severity of multicollinearity | Not at all | Medium | Severe | Slight |
| Interpretation | Like an RL model with different learning rates on rewarded and unrewarded trials. | Like an RL model that only updates on rewarded trials, plus a choice kernel (tendency to repeat previous choices). | Like an RL model that has different learning rates on rewarded and unrewarded trials, plus a choice kernel (the full RL model from the same paper). | Like an RL model that has symmetric learning rates for rewarded and unrewarded trials, plus a choice kernel. However, the $Reward$ term seems to be a strawman assumption, as it means “if I get a reward on any side, I’ll choose the right side more”, which doesn’t make much sense. |
| Conclusion | Probably the best | Okay | Not good due to the severe multicollinearity | Good |
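One way to see the multicollinearity row is to build lagged design matrices for each model and compare the number of columns with the matrix rank. The sketch assumes i.i.d. random choices and rewards and a hypothetical n_lags of 5; real behavior is autocorrelated, so the degree of correlation will differ, but the Hattori 2019 design is rank-deficient for any data, because Choice = RewardedChoice + UnrewardedChoice holds on every trial.

```python
import numpy as np


def lagged(x: np.ndarray, n_lags: int) -> np.ndarray:
    """Stack x[t - 1], ..., x[t - n_lags] as columns for t = n_lags, ..., len(x) - 1."""
    n = len(x)
    return np.column_stack([x[n_lags - k : n - k] for k in range(1, n_lags + 1)])


def design_matrices(choice: np.ndarray, reward: np.ndarray, n_lags: int = 5) -> dict:
    """Hypothetical lagged design matrices for the four models (no intercept column).

    The matching target for row i would be choice[n_lags + i], i.e. choice[n_lags:].
    """
    rewarded_choice = np.where(reward == 1, choice, 0)
    unrewarded_choice = np.where(reward == -1, choice, 0)

    def lag(x):
        return lagged(x, n_lags)

    return {
        "Su 2022": np.hstack([lag(rewarded_choice), lag(unrewarded_choice)]),
        "Bari 2019": np.hstack([lag(rewarded_choice), lag(choice)]),
        "Hattori 2019": np.hstack([lag(rewarded_choice), lag(unrewarded_choice), lag(choice)]),
        "Miller 2021": np.hstack([lag(choice), lag(reward), lag(choice * reward)]),
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    choice = rng.choice([-1, 1], size=2000)  # -1 = L, 1 = R
    reward = rng.choice([-1, 1], size=2000)  # -1 = no reward, 1 = reward
    for name, X in design_matrices(choice, reward).items():
        print(f"{name}: {X.shape[1]} columns, rank {np.linalg.matrix_rank(X)}")
```

A rank below the column count (Hattori 2019) means the lagged Choice weights cannot be separated from the RewardedChoice and UnrewardedChoice weights; Bari 2019 is full rank but its columns are correlated.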

Regularization and optimization

The choice of optimizer depends on the penalty term, as listed here.

- lbfgs: [l2, None]
- liblinear: [l1, l2]
- newton-cg: [l2, None]
- newton-cholesky: [l2, None]
- sag: [l2, None]
- saga: [elasticnet, l1, l2, None]
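This solver/penalty list matches the compatibility documented for scikit-learn's LogisticRegression, so a sketch assuming that estimator can guard against unsupported pairs. The make_model helper and SUPPORTED_PENALTIES dict are hypothetical, not part of this package; they simply check the combination against the list before constructing the estimator, and the data in the example is synthetic.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Solver -> supported penalties, transcribed from the list above (None = no penalty).
SUPPORTED_PENALTIES = {
    "lbfgs": ["l2", None],
    "liblinear": ["l1", "l2"],
    "newton-cg": ["l2", None],
    "newton-cholesky": ["l2", None],
    "sag": ["l2", None],
    "saga": ["elasticnet", "l1", "l2", None],
}


def make_model(penalty, solver, C=1.0, l1_ratio=None):
    """Build a LogisticRegression after checking the solver/penalty pair (hypothetical)."""
    if penalty not in SUPPORTED_PENALTIES.get(solver, ()):
        raise ValueError(f"solver={solver!r} does not support penalty={penalty!r}")
    # l1_ratio only matters for the elastic-net penalty, so pass it only in that case.
    extra = {"l1_ratio": l1_ratio} if penalty == "elasticnet" else {}
    return LogisticRegression(penalty=penalty, solver=solver, C=C, max_iter=1000, **extra)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(500, 4))  # synthetic regressors, for illustration only
    y = (X @ np.array([1.5, -1.0, 0.5, 0.0]) + rng.logistic(size=500) > 0).astype(int)
    model = make_model("l1", "liblinear", C=0.5).fit(X, y)
    print(model.coef_)
```

C is the inverse of the regularization strength, so smaller values mean stronger regularization.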

See also

- Foraging model simulation, model recovery, etc.: https://github.com/hanhou/Dynamic-Foraging

Installation

To install the package (logistic regression only), run

pip install aind-dynamic-foraging-models

To install the package with RL models, run

pip install aind-dynamic-foraging-models[rl]

To develop the code, clone the repo to your local machine, and run

pip install -e .[dev]

Contributing

Linters and testing

There are several libraries used to run linters, check documentation, and run tests.

- Please test your changes using the coverage library, which will run the tests and log a coverage report:

coverage run -m unittest discover && coverage report

- Use interrogate to check that modules, methods, etc. have been documented thoroughly:

interrogate .

- Use flake8 to check that code is up to standards (no unused imports, etc.):

flake8 .

- Use black to automatically format the code to PEP 8 standards:

black .

- Use isort to automatically sort import statements:

isort .

Pull requests

For internal members, please create a branch. For external members, please fork the repository and open a pull request from the fork. We'll primarily use Angular style for commit messages. Roughly, they should follow the pattern:

<type>(<scope>): <short summary>

where scope (optional) describes the packages affected by the code changes and type (mandatory) is one of:

- build: Changes that affect build tools or external dependencies (example scopes: pyproject.toml, setup.py)

- ci: Changes to our CI configuration files and scripts (examples: .github/workflows/ci.yml)

- docs: Documentation only changes

- feat: A new feature

- fix: A bugfix

- perf: A code change that improves performance

- refactor: A code change that neither fixes a bug nor adds a feature

- test: Adding missing tests or correcting existing tests
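A rough local check of the header pattern can be written as a regular expression. This is a hypothetical helper, not a hook the repository ships; it only validates the first line of the message against the types listed above and treats the scope as optional.

```python
import re

COMMIT_TYPES = ("build", "ci", "docs", "feat", "fix", "perf", "refactor", "test")

# <type>(<scope>): <short summary>; the scope is optional.
HEADER_RE = re.compile(
    r"^(?P<type>" + "|".join(COMMIT_TYPES) + r")"
    r"(?:\((?P<scope>[^()\s]+)\))?"
    r": (?P<summary>\S.*)$"
)


def parse_header(message: str):
    """Return (type, scope, summary) if the first line matches, else None."""
    header = message.splitlines()[0] if message else ""
    match = HEADER_RE.match(header)
    if match is None:
        return None
    return match.group("type"), match.group("scope"), match.group("summary")


if __name__ == "__main__":
    print(parse_header("fix(pencil): stop graphite breaking when too much pressure applied"))
    print(parse_header("Update README"))
```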

Semantic Release

The table below, from semantic release, shows which commit message gets you which release type when semantic-release runs (using the default configuration):

| Commit message | Release type |
|---|---|
| fix(pencil): stop graphite breaking when too much pressure applied | Patch (fix) release |
| feat(pencil): add 'graphiteWidth' option | Minor (feature) release |
| perf(pencil): remove graphiteWidth option, with the footer BREAKING CHANGE: The graphiteWidth option has been removed. The default graphite width of 10mm is always used for performance reasons. | Major (breaking) release (Note that the BREAKING CHANGE: token must be in the footer of the commit) |
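The mapping in the table can be summarized as a small function. This is a sketch of semantic-release's default behavior as we understand it (fix and perf give a patch release, feat gives a minor release, a BREAKING CHANGE: footer gives a major release, anything else gives no release); the function name and mapping dict are hypothetical, and this does not replace semantic-release's own commit analyzer.

```python
import re

# Assumed default mapping from commit type to release type (illustrative only).
TYPE_TO_RELEASE = {"feat": "minor", "fix": "patch", "perf": "patch"}
HEADER_RE = re.compile(r"^(?P<type>\w+)(?:\([^)]*\))?: ")
BREAKING_RE = re.compile(r"^BREAKING CHANGE:", re.MULTILINE)


def release_type(message: str):
    """Return "major", "minor", "patch", or None for a full commit message."""
    header, _, rest = message.strip().partition("\n")
    # A BREAKING CHANGE: line after the header forces a major release.
    if BREAKING_RE.search(rest):
        return "major"
    match = HEADER_RE.match(header)
    if match is None:
        return None
    return TYPE_TO_RELEASE.get(match.group("type"))


if __name__ == "__main__":
    print(release_type("feat(pencil): add 'graphiteWidth' option"))
```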

Documentation

To generate the rst source files for documentation, run

sphinx-apidoc -o doc_template/source/ src

Then to create the documentation HTML files, run

sphinx-build -b html doc_template/source/ doc_template/build/html

More info on sphinx installation can be found here.


File details

Details for the file aind_dynamic_foraging_models-0.13.1.tar.gz.

File metadata

- Download URL: aind_dynamic_foraging_models-0.13.1.tar.gz

- Upload date:

- Size: 6.6 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 260e8cacb8b55b43f12b2443f876ced4d1cde364f9938199c1b2466425cd2419 |
| MD5 | 4d9f28fa43b3f082d6b5b5db24d36e26 |
| BLAKE2b-256 | eb47a7b35997f9767cc4911ce65db39a06492192d338175487916d98b70b1640 |

File details

Details for the file aind_dynamic_foraging_models-0.13.1-py3-none-any.whl.

File metadata

- Download URL: aind_dynamic_foraging_models-0.13.1-py3-none-any.whl

- Upload date:

- Size: 50.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 34602f2ee02fa1d5b48449f05980b2ddc37c4f770608a2df3989875856571a13 |
| MD5 | 404926bbb690b70e52e7d48a493937a7 |
| BLAKE2b-256 | 6112e299389a360dae91b07b936ebed085fa1a718e210319faa50d4766054b26 |