An ultra-high-performance package for sending requests to Baseten Embedding Inference


High performance client for Baseten.co

This library provides a high-performance Python client for Baseten.co endpoints, including embeddings, reranking, and classification. It was built for massively concurrent POST requests to any URL, including URLs outside of baseten.co. PerformanceClient releases the GIL while performing requests in Rust, and supports simultaneous sync and async usage. It was benchmarked at more than 1200 requests per second per client in our blog. PerformanceClient is built on top of pyo3, reqwest, and tokio, and is MIT licensed.

Installation

pip install baseten_performance_client

Usage

import os
import asyncio

from baseten_performance_client import PerformanceClient, OpenAIEmbeddingsResponse, RerankResponse, ClassificationResponse

api_key = os.environ.get("BASETEN_API_KEY")
base_url_embed = "https://model-yqv4yjjq.api.baseten.co/environments/production/sync"
# Also works with OpenAI or Mixedbread.
# base_url_embed = "https://api.openai.com" or "https://api.mixedbread.com"

# Basic client setup
client = PerformanceClient(base_url=base_url_embed, api_key=api_key)

# Advanced setup with HTTP version selection and connection pooling
from baseten_performance_client import HttpClientWrapper

http_wrapper = HttpClientWrapper(http_version=1)  # HTTP/1.1 (default)
advanced_client = PerformanceClient(
    base_url=base_url_embed,
    api_key=api_key,
    http_version=1,  # HTTP/1.1
    client_wrapper=http_wrapper  # Share connection pool
)

Embeddings

Synchronous Embedding

from baseten_performance_client import RequestProcessingPreference

texts = ["Hello world", "Example text", "Another sample"]

preference = RequestProcessingPreference(
    batch_size=16,
    max_concurrent_requests=32,
    timeout_s=360,
    max_chars_per_request=256000,  # Character limit per request
    hedge_delay=0.5,  # Enable hedging with 0.5s delay
    total_timeout_s=360  # Total operation timeout
)

response = client.embed(
    input=texts,
    model="my_model",
    preference=preference
)

# Accessing embedding data
print(f"Model used: {response.model}")
print(f"Total tokens used: {response.usage.total_tokens}")
print(f"Total time: {response.total_time:.4f}s")
if response.individual_batch_request_times:
    for i, batch_time in enumerate(response.individual_batch_request_times):
        print(f"  Time for batch {i}: {batch_time:.4f}s")

for i, embedding_data in enumerate(response.data):
    print(f"Embedding for text {i} (original input index {embedding_data.index}):")
    # embedding_data.embedding can be List[float] or str (base64)
    if isinstance(embedding_data.embedding, list):
        print(f"  First 3 dimensions: {embedding_data.embedding[:3]}")
        print(f"  Length: {len(embedding_data.embedding)}")

# Using the numpy() method (requires numpy to be installed)
import numpy as np

numpy_array = response.numpy()
print("\nEmbeddings as NumPy array:")
print(f"  Shape: {numpy_array.shape}")
print(f"  Data type: {numpy_array.dtype}")
if numpy_array.shape[0] > 0:
    print(f"  First 3 dimensions of the first embedding: {numpy_array[0][:3]}")

Note: The embed method is versatile and can be used with any embeddings service, e.g. OpenAI API embeddings, not just for Baseten deployments.
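
The batch_size and max_chars_per_request settings shown in the example above both bound how inputs are grouped into requests. The following is a minimal sketch of that idea, assuming a simple greedy grouping. The helper name split_into_batches is hypothetical and not part of baseten_performance_client; the real client performs batching inside its Rust core, and its exact policy may differ.

```python
from typing import List


def split_into_batches(
    texts: List[str], batch_size: int, max_chars_per_request: int
) -> List[List[str]]:
    """Greedily group texts so each batch respects both limits.

    Hypothetical helper for illustration only.
    """
    if batch_size < 1 or max_chars_per_request < 1:
        raise ValueError("batch_size and max_chars_per_request must be positive")

    batches: List[List[str]] = []
    current: List[str] = []
    current_chars = 0
    for text in texts:
        too_many_items = len(current) >= batch_size
        too_many_chars = bool(current) and current_chars + len(text) > max_chars_per_request
        if too_many_items or too_many_chars:
            # Close the current batch before adding this text.
            batches.append(current)
            current, current_chars = [], 0
        # A single text longer than the character limit still gets its own batch.
        current.append(text)
        current_chars += len(text)
    if current:
        batches.append(current)
    return batches


example_batches = split_into_batches(
    ["aaaa", "bb", "cccccc", "d"], batch_size=2, max_chars_per_request=6
)
print(example_batches)  # [['aaaa', 'bb'], ['cccccc'], ['d']]
```

In this toy run the first batch closes because it reached two items, and the second closes because adding "d" would exceed six characters.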

Asynchronous Embedding

async def async_embed():
    from baseten_performance_client import RequestProcessingPreference

    texts = ["Async hello", "Async example"]

    preference = RequestProcessingPreference(
        batch_size=16,
        max_concurrent_requests=32,
        timeout_s=360,
        max_chars_per_request=256000,  # Character limit per request
        hedge_delay=0.5,  # Enable hedging with 0.5s delay
        total_timeout_s=360  # Total operation timeout
    )

    response = await client.async_embed(
        input=texts,
        model="my_model",
        preference=preference
    )
    print("Async embedding response:", response.data)

# To run:
# asyncio.run(async_embed())

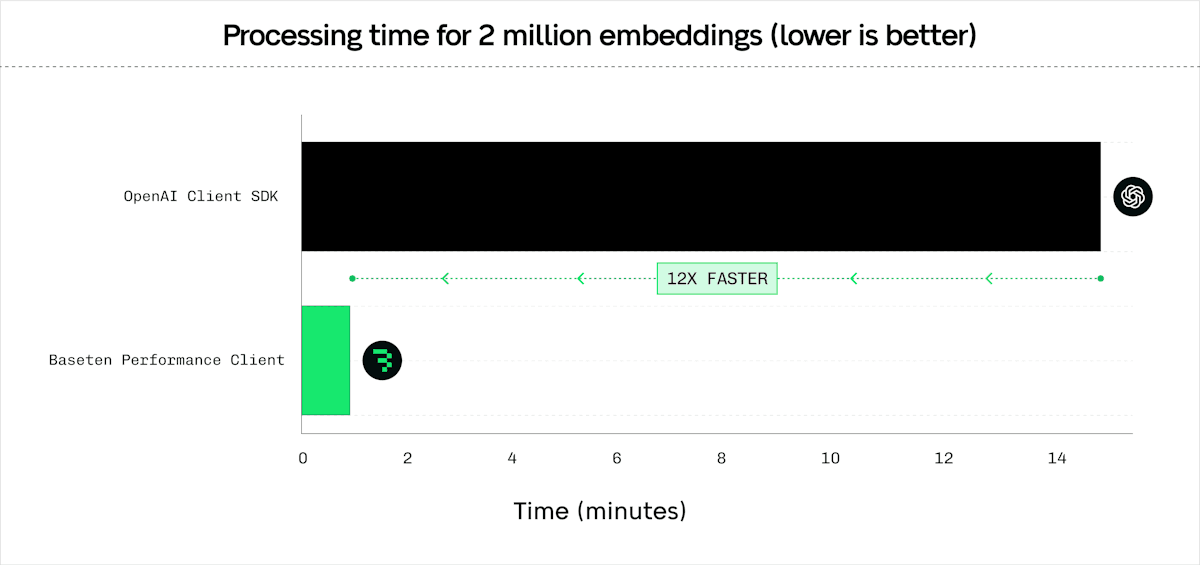

Embedding Benchmarks

Comparison against the openai Python package (pip install openai) for /v1/embeddings. Tested with ./scripts/compare_latency_openai.py using a mini_batch_size of 128 and 4 server-side replicas. Results against the OpenAI API are similar; OpenAI allows a maximum mini_batch_size of 2048.

| Number of inputs / embeddings | Number of Tasks | PerformanceClient (s) | AsyncOpenAI (s) | Speedup |
|---|---|---|---|---|
| 128 | 1 | 0.12 | 0.13 | 1.08× |
| 512 | 4 | 0.14 | 0.21 | 1.50× |
| 8 192 | 64 | 0.83 | 1.95 | 2.35× |
| 131 072 | 1 024 | 4.63 | 39.07 | 8.44× |
| 2 097 152 | 16 384 | 70.92 | 903.68 | 12.74× |
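
For readers who want to check how the derived columns relate to the measured ones, the short script below recomputes them from the timings in the table. It assumes the task count is the number of inputs divided by the mini_batch_size of 128 and that the speedup is the AsyncOpenAI time divided by the PerformanceClient time; the function name summarize is only for this illustration.

```python
# (inputs, PerformanceClient seconds, AsyncOpenAI seconds) copied from the table.
rows = [
    (128, 0.12, 0.13),
    (512, 0.14, 0.21),
    (8_192, 0.83, 1.95),
    (131_072, 4.63, 39.07),
    (2_097_152, 70.92, 903.68),
]
MINI_BATCH_SIZE = 128


def summarize(inputs, perf_s, openai_s):
    """Return (tasks, speedup rounded to 2 places, inputs per second)."""
    tasks = inputs // MINI_BATCH_SIZE
    speedup = round(openai_s / perf_s, 2)
    inputs_per_second = inputs / perf_s
    return tasks, speedup, inputs_per_second


for inputs, perf_s, openai_s in rows:
    tasks, speedup, rate = summarize(inputs, perf_s, openai_s)
    print(f"{inputs:>9} inputs, {tasks:>6} tasks: {speedup:.2f}x, {rate:,.0f} inputs/s")
```

The largest run works out to just under 30,000 inputs per second for PerformanceClient.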

General Batch POST

The batch_post method is generic. It can be used to send POST requests to any URL, not limited to Baseten endpoints. The input and output can be any JSON item.
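
Conceptually, a batch POST fans out many payloads while capping how many are in flight at once, then returns the responses in input order. The sketch below illustrates that pattern with plain asyncio and no network access. It is not the library's implementation (which runs in Rust on tokio): fake_post and bounded_batch_post are hypothetical names, and fake_post simply echoes its payload.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict, List


async def fake_post(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Stand-in for an HTTP POST; echoes the payload after a short pause."""
    await asyncio.sleep(0.01)
    return {"echo": payload}


async def bounded_batch_post(
    payloads: List[Dict[str, Any]],
    send: Callable[[Dict[str, Any]], Awaitable[Dict[str, Any]]],
    max_concurrent_requests: int,
) -> List[Dict[str, Any]]:
    """Send every payload, never running more than the cap at once."""
    semaphore = asyncio.Semaphore(max_concurrent_requests)

    async def send_one(payload: Dict[str, Any]) -> Dict[str, Any]:
        async with semaphore:
            return await send(payload)

    # gather() returns results in the order of its arguments,
    # so responses line up with the input payloads.
    return await asyncio.gather(*(send_one(p) for p in payloads))


demo_payloads = [{"id": i} for i in range(10)]
demo_results = asyncio.run(
    bounded_batch_post(demo_payloads, fake_post, max_concurrent_requests=3)
)
print(len(demo_results), demo_results[0])
```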

Synchronous Batch POST

from baseten_performance_client import RequestProcessingPreference

payload1 = {"model": "my_model", "input": ["Batch request sample 1"]}
payload2 = {"model": "my_model", "input": ["Batch request sample 2"]}

preference = RequestProcessingPreference(
    max_concurrent_requests=32,
    timeout_s=360,
    hedge_delay=0.5,  # Enable hedging with 0.5s delay
    total_timeout_s=360,  # Total operation timeout
    extra_headers={"x-custom-header": "value"}  # Custom headers
)

response_obj = client.batch_post(
    url_path="/v1/embeddings",  # Example path, adjust to your needs
    payloads=[payload1, payload2],
    preference=preference
)

print(f"Total time for batch POST: {response_obj.total_time:.4f}s")
for i, (resp_data, headers, time_taken) in enumerate(zip(response_obj.data, response_obj.response_headers, response_obj.individual_request_times)):
    print(f"Response {i+1}:")
    print(f"  Data: {resp_data}")
    print(f"  Headers: {headers}")
    print(f"  Time taken: {time_taken:.4f}s")

Asynchronous Batch POST

async def async_batch_post_example():
    from baseten_performance_client import RequestProcessingPreference

    payload1 = {"model": "my_model", "input": ["Async batch sample 1"]}
    payload2 = {"model": "my_model", "input": ["Async batch sample 2"]}

    preference = RequestProcessingPreference(
        max_concurrent_requests=32,
        timeout_s=360,
        hedge_delay=0.5,  # Enable hedging with 0.5s delay
        total_timeout_s=360,  # Total operation timeout
        extra_headers={"x-custom-header": "value"}  # Custom headers
    )

    response_obj = await client.async_batch_post(
        url_path="/v1/embeddings",
        payloads=[payload1, payload2],
        preference=preference
    )

    print(f"Async total time for batch POST: {response_obj.total_time:.4f}s")
    for i, (resp_data, headers, time_taken) in enumerate(zip(response_obj.data, response_obj.response_headers, response_obj.individual_request_times)):
        print(f"Async Response {i+1}:")
        print(f"  Data: {resp_data}")
        print(f"  Headers: {headers}")
        print(f"  Time taken: {time_taken:.4f}s")

# To run:
# asyncio.run(async_batch_post_example())

Reranking

Reranking is compatible with BEI (Baseten Embedding Inference) and text-embeddings-inference.

Synchronous Reranking

from baseten_performance_client import RequestProcessingPreference

query = "What is the best framework?"
documents = ["Doc 1 text", "Doc 2 text", "Doc 3 text"]

preference = RequestProcessingPreference(
    batch_size=16,
    max_concurrent_requests=32,
    timeout_s=360,
    max_chars_per_request=256000,  # Character limit per request
    hedge_delay=0.5,  # Enable hedging with 0.5s delay
    total_timeout_s=360  # Total operation timeout
)

rerank_response = client.rerank(
    query=query,
    texts=documents,
    model="rerank-model",  # Optional model specification
    return_text=True,
    preference=preference
)

for res in rerank_response.data:
    print(f"Index: {res.index} Score: {res.score}")

Asynchronous Reranking

async def async_rerank():
    from baseten_performance_client import RequestProcessingPreference

    query = "Async query sample"
    docs = ["Async doc1", "Async doc2"]

    preference = RequestProcessingPreference(
        batch_size=16,
        max_concurrent_requests=32,
        timeout_s=360,
        max_chars_per_request=256000,  # Character limit per request
        hedge_delay=0.5,  # Enable hedging with 0.5s delay
        total_timeout_s=360  # Total operation timeout
    )

    response = await client.async_rerank(
        query=query,
        texts=docs,
        model="rerank-model",  # Optional model specification
        return_text=True,
        preference=preference
    )

    for res in response.data:
        print(f"Async Index: {res.index} Score: {res.score}")

# To run:
# asyncio.run(async_rerank())

Classification

The predict (classification) endpoint is compatible with BEI (Baseten Embedding Inference) and text-embeddings-inference.

Synchronous Classification

from baseten_performance_client import RequestProcessingPreference

texts_to_classify = [
    "This is great!",
    "I did not like it.",
    "Neutral experience."
]

preference = RequestProcessingPreference(
    batch_size=16,
    max_concurrent_requests=32,
    timeout_s=360,
    max_chars_per_request=256000,  # Character limit per request
    hedge_delay=0.5,  # Enable hedging with 0.5s delay
    total_timeout_s=360  # Total operation timeout
)

classify_response = client.classify(
    inputs=texts_to_classify,
    model="classification-model",  # Optional model specification
    preference=preference
)

for group in classify_response.data:
    for result in group:
        print(f"Label: {result.label}, Score: {result.score}")

Asynchronous Classification

async def async_classify():
    from baseten_performance_client import RequestProcessingPreference

    texts = ["Async positive", "Async negative"]

    preference = RequestProcessingPreference(
        batch_size=16,
        max_concurrent_requests=32,
        timeout_s=360,
        max_chars_per_request=256000,  # Character limit per request
        hedge_delay=0.5,  # Enable hedging with 0.5s delay
        total_timeout_s=360  # Total operation timeout
    )

    response = await client.async_classify(
        inputs=texts,
        model="classification-model",  # Optional model specification
        preference=preference
    )

    for group in response.data:
        for res in group:
            print(f"Async Label: {res.label}, Score: {res.score}")

# To run:
# asyncio.run(async_classify())

Advanced Features

RequestProcessingPreference

The RequestProcessingPreference class provides a unified way to configure all request processing parameters. This is the recommended approach for advanced configuration as it provides better type safety and clearer intent.

from baseten_performance_client import RequestProcessingPreference

# Create a preference with custom settings
preference = RequestProcessingPreference(
    max_concurrent_requests=64,  # Parallel requests (default: 128)
    batch_size=32,  # Items per batch (default: 128)
    timeout_s=30.0,  # Per-request timeout (default: 3600.0)
    hedge_delay=0.5,  # Hedging delay (default: None)
    hedge_budget_pct=0.15,  # Hedge budget percentage (default: 0.10)
    retry_budget_pct=0.08,  # Retry budget percentage (default: 0.05)
    max_retries=5,  # Maximum HTTP retries (default: 5)
    initial_backoff_ms=250,  # Initial backoff in milliseconds (default: 125)
    total_timeout_s=300.0  # Total operation timeout (default: None)
)

# Use with any method
response = client.embed(
    input=["text1", "text2"],
    model="my_model",
    preference=preference
)

# Also works with async methods (the await must run inside an async function)
response = await client.async_embed(
    input=["text1", "text2"],
    model="my_model",
    preference=preference
)

Property-based Configuration: You can also modify preferences after creation using property setters:

# Create preference and modify properties
preference = RequestProcessingPreference()
preference.max_concurrent_requests = 64  # Set parallel requests
preference.batch_size = 32  # Set batch size
preference.timeout_s = 30.0  # Set timeout
preference.hedge_delay = 0.5  # Enable hedging
preference.hedge_budget_pct = 0.15  # Set hedge budget
preference.retry_budget_pct = 0.08  # Set retry budget
preference.max_retries = 3  # Set max retries
preference.initial_backoff_ms = 250  # Set backoff

# Use with any method
response = client.embed(
    input=["text1", "text2"],
    model="my_model",
    preference=preference
)

Budget Percentages:

- hedge_budget_pct: Percentage of total requests allocated for hedging (default: 10%)

- retry_budget_pct: Percentage of total requests allocated for retries (default: 5%)

- Maximum allowed: 300% for both budgets
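
As a worked example of what these percentages mean, the sketch below converts a budget fraction into a number of extra requests. It assumes the budget is applied to the total request count and rounded down; the function budget_allowance is hypothetical, and the client's internal accounting may differ.

```python
import math

MAX_BUDGET_PCT = 3.0  # the 300% ceiling stated for both budgets


def budget_allowance(total_requests: int, budget_pct: float) -> int:
    """Extra requests permitted by a budget given as a fraction (0.10 == 10%).

    Rounding down is an assumption made for this sketch.
    """
    if not 0.0 <= budget_pct <= MAX_BUDGET_PCT:
        raise ValueError("budget_pct must be between 0.0 and 3.0")
    return math.floor(total_requests * budget_pct)


hedges_allowed = budget_allowance(1000, 0.10)  # default hedge budget
retries_allowed = budget_allowance(1000, 0.05)  # default retry budget
print(hedges_allowed, retries_allowed)  # 100 50
```

With the defaults, an operation of 1,000 requests could therefore issue about 100 hedge requests and about 50 retries under this reading.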

Retry Configuration:

- max_retries: Maximum number of HTTP retries (default: 5, max: 6)

- initial_backoff_ms: Initial backoff duration in milliseconds (default: 125, range: 50-30000)

- Backoff uses exponential backoff with jitter
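
To illustrate exponential backoff with jitter, here is a minimal sketch that produces one sleep duration per retry. Only the defaults and ranges come from the settings above; the doubling factor, the "full jitter" strategy (a uniform draw between 0 and the current ceiling), and the name backoff_schedule_ms are assumptions for this example rather than the client's documented behavior.

```python
import random
from typing import List, Optional

MIN_BACKOFF_MS, MAX_BACKOFF_MS = 50, 30_000  # documented range
MAX_RETRIES_LIMIT = 6  # documented maximum


def backoff_schedule_ms(
    max_retries: int = 5,
    initial_backoff_ms: int = 125,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Return one sleep duration (in ms) per retry attempt.

    Assumptions: the ceiling doubles on each attempt, and full jitter
    picks a uniform value in [0, ceiling].
    """
    if not 0 <= max_retries <= MAX_RETRIES_LIMIT:
        raise ValueError("max_retries must be between 0 and 6")
    if not MIN_BACKOFF_MS <= initial_backoff_ms <= MAX_BACKOFF_MS:
        raise ValueError("initial_backoff_ms must be between 50 and 30000")
    rng = rng or random.Random()
    delays = []
    for attempt in range(max_retries):
        ceiling = initial_backoff_ms * (2 ** attempt)
        delays.append(rng.uniform(0, ceiling))
    return delays


schedule = backoff_schedule_ms(rng=random.Random(0))
print([round(d, 1) for d in schedule])
```

With the defaults, the ceilings in this sketch are 125, 250, 500, 1000, and 2000 ms; jitter spreads the actual sleeps below those values so that many clients do not retry in lockstep.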

Request Hedging

The client supports request hedging for improved latency by sending duplicate requests after a specified delay:

# Enable hedging with 0.5 second delay
preference = RequestProcessingPreference(
    hedge_delay=0.5,  # Send hedge request after 0.5s
    max_chars_per_request=256000,
    total_timeout_s=360
)

response = client.embed(
    input=texts,
    model="my_model",
    preference=preference
)
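
The hedging pattern itself can be sketched in a few lines of asyncio: start the request, and if it has not finished after hedge_delay, start a duplicate and keep whichever finishes first. This is only an illustration of the technique, not the client's Rust implementation; hedged_call and flaky_request are hypothetical names, and the hedge budget is not modeled here.

```python
import asyncio
from typing import Awaitable, Callable, List, TypeVar

T = TypeVar("T")


async def hedged_call(make_request: Callable[[], Awaitable[T]], hedge_delay: float) -> T:
    """Run make_request; if still pending after hedge_delay, race a duplicate."""
    primary = asyncio.create_task(make_request())
    done, _ = await asyncio.wait({primary}, timeout=hedge_delay)
    if done:
        return primary.result()

    hedge = asyncio.create_task(make_request())
    done, pending = await asyncio.wait(
        {primary, hedge}, return_when=asyncio.FIRST_COMPLETED
    )
    for task in pending:
        task.cancel()  # the slower duplicate is no longer needed
    return next(iter(done)).result()


call_log: List[str] = []


async def flaky_request() -> str:
    """Hypothetical request: the first call is slow, later calls are fast."""
    call_log.append("sent")
    call_number = len(call_log)
    await asyncio.sleep(0.5 if call_number == 1 else 0.01)
    return f"response from call {call_number}"


hedged_result = asyncio.run(hedged_call(flaky_request, hedge_delay=0.05))
print(hedged_result)  # response from call 2
```

The trade-off is extra load on the server in exchange for lower tail latency, which is why a budget that caps the share of hedged requests is useful.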

Custom Headers

Use custom headers with batch_post:

preference = RequestProcessingPreference(
    extra_headers={
        "x-custom-header": "value",
        "authorization": "Bearer token"
    }
)

response = client.batch_post(
    url_path="/v1/embeddings",
    payloads=payloads,
    preference=preference
)

HTTP Version Selection

Choose between HTTP/1.1 and HTTP/2:

# HTTP/1.1 (default, better for high concurrency)

client_http1 = PerformanceClient(base_url, api_key, http_version=1)

# HTTP/2 (better for single requests)

client_http2 = PerformanceClient(base_url, api_key, http_version=2)

Connection Pooling

Share connection pools across multiple clients:

from baseten_performance_client import HttpClientWrapper

# Create shared wrapper

wrapper = HttpClientWrapper(http_version=1)

# Reuse across multiple clients

client1 = PerformanceClient(base_url="https://api1.example.com", client_wrapper=wrapper)

client2 = PerformanceClient(base_url="https://api2.example.com", client_wrapper=wrapper)

HTTP Proxy Support

Route all HTTP requests through a proxy (e.g., for connection pooling with Envoy):

from baseten_performance_client import HttpClientWrapper

# Create wrapper with HTTP proxy
wrapper = HttpClientWrapper(
    http_version=1,
    proxy="http://envoy-proxy.local:8080"
)

# Share the wrapper across multiple clients
client1 = PerformanceClient(
    base_url="https://api1.example.com",
    api_key="your_key",
    client_wrapper=wrapper
)

client2 = PerformanceClient(
    base_url="https://api2.example.com",
    api_key="your_key",
    client_wrapper=wrapper
)

# Both clients will use the same connection pool and proxy

You can also specify the proxy directly when creating a client:

client = PerformanceClient(
    base_url="https://api.example.com",
    api_key="your_key",
    proxy="http://envoy-proxy.local:8080"
)

Endpoint Pool and Health Checks

Route traffic across reusable endpoints with deterministic weighted routing. Each Endpoint owns its own health worker, so the same endpoint object can be shared across many pools without duplicate probes:

from baseten_performance_client import Endpoint, EndpointPool, HttpClientWrapper, PerformanceClient

health_wrapper = HttpClientWrapper(http_version=1)

endpoint_a = Endpoint(
    base_url="https://model-AAAA.api.baseten.co/environments/production/sync",
    api_key="your_key",
    client_wrapper=health_wrapper,
    deployment_health_path="/health",
    deployment_timeout_is_no_vote=False,
)

endpoint_b = Endpoint(
    base_url="https://model-BBBB.api.baseten.co/environments/production/sync",
    api_key="your_key",
    client_wrapper=health_wrapper,
    deployment_health_path="/health",
    deployment_timeout_is_no_vote=False,
)

endpoint_pool = EndpointPool(
    endpoints=[endpoint_a, endpoint_b],
    endpoint_weights=[0.8, 0.2],  # deterministic weighted routing
)

client = PerformanceClient(
    base_url="https://model-AAAA.api.baseten.co/environments/production/sync",
    api_key="your_key",
    endpoint_pool=endpoint_pool,
)

Health semantics:

- Weights are deterministic weighted routing, not weighted round robin.

- Each configured health check is retried up to health_check_retries, and one successful retry is enough for that check.

- If an endpoint has deep_health_url configured, both the shallow deployment health path and the deep health URL are evaluated.

- health_fail_on_first=True short-circuits on the first hard failing check within an endpoint refresh cycle.
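
The retry and short-circuit rules above can be sketched in plain Python as follows. This is an interpretation for illustration only: check_passes and endpoint_is_healthy are hypothetical helpers, health_check_retries is treated as the total number of attempts per check, and the handling of timeouts (deployment_timeout_is_no_vote) is not modeled.

```python
from typing import Callable, Dict, List


def check_passes(probe: Callable[[], bool], health_check_retries: int) -> bool:
    """A check passes as soon as any one of its attempts succeeds."""
    # any() stops calling the probe after the first success.
    return any(probe() for _ in range(max(1, health_check_retries)))


def endpoint_is_healthy(
    probes: List[Callable[[], bool]],
    health_check_retries: int,
    health_fail_on_first: bool,
) -> bool:
    """Evaluate each configured probe, e.g. the shallow path and then the deep URL."""
    healthy = True
    for probe in probes:
        if not check_passes(probe, health_check_retries):
            healthy = False
            if health_fail_on_first:
                break  # skip the remaining probes in this refresh cycle
    return healthy


attempts: Dict[str, int] = {"shallow": 0, "deep": 0}


def shallow_probe() -> bool:
    attempts["shallow"] += 1
    return attempts["shallow"] >= 2  # fails once, then recovers


def deep_probe() -> bool:
    attempts["deep"] += 1
    return False  # always unhealthy


first_result = endpoint_is_healthy([shallow_probe], 3, health_fail_on_first=True)
second_result = endpoint_is_healthy([deep_probe, shallow_probe], 3, health_fail_on_first=True)
print(first_result, second_result, attempts)
```

In the second call the deep probe exhausts its three attempts, so the endpoint is marked unhealthy and the shallow probe is never consulted.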

Error Handling

The client can raise several types of errors. Here's how to handle common ones:

- requests.exceptions.HTTPError: This error is raised for HTTP issues, such as authentication failures (e.g., 403 Forbidden if the API key is wrong), server errors (e.g., 5xx), or if the endpoint is not found (404). You can inspect e.response.status_code and e.response.text (or e.response.json() if the body is JSON) for more details.

- ValueError: This error can occur due to invalid input parameters (e.g., an empty input list for embed, invalid batch_size or max_concurrent_requests values). It can also be raised by response.numpy() if embeddings are not float vectors or have inconsistent dimensions.

Here's an example demonstrating how to catch these errors for the embed method:

import requests

from baseten_performance_client import RequestProcessingPreference

# client = PerformanceClient(base_url="your_baseten_url", api_key="your_baseten_api_key")

texts_to_embed = ["Hello world", "Another text example"]

try:
    preference = RequestProcessingPreference(
        batch_size=2,
        max_concurrent_requests=4,
        timeout_s=60  # Timeout in seconds
    )
    response = client.embed(
        input=texts_to_embed,
        model="your_embedding_model",  # Replace with your actual model name
        preference=preference
    )
    # Process successful response
    print(f"Model used: {response.model}")
    print(f"Total tokens: {response.usage.total_tokens}")
    for item in response.data:
        embedding_preview = item.embedding[:3] if isinstance(item.embedding, list) else "Base64 Data"
        print(f"Index {item.index}, Embedding (first 3 dims or type): {embedding_preview}")
except requests.exceptions.HTTPError as e:
    print(f"An HTTP error occurred: {e}, code {e.args[0]}")
except ValueError as e:
    # Raised for invalid input parameters, as described above
    print(f"Invalid input: {e}")

For asynchronous methods (async_embed, async_rerank, async_classify, async_batch_post), the same exceptions will be raised by the await call and can be caught using a try...except block within an async def function.
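
The pattern for the asynchronous case looks like the sketch below. To keep it runnable without a deployment, it uses a stand-in coroutine, fake_async_embed, which is hypothetical and only mimics the documented behavior of rejecting an empty input list; with the real client you would await client.async_embed(...) in its place.

```python
import asyncio
from typing import List, Optional


async def fake_async_embed(texts: List[str]) -> List[List[float]]:
    """Stand-in for client.async_embed that rejects an empty input list."""
    if not texts:
        raise ValueError("input list must not be empty")
    await asyncio.sleep(0)
    return [[0.0, 0.0, 0.0] for _ in texts]


async def safe_embed(texts: List[str]) -> Optional[List[List[float]]]:
    try:
        # The exception surfaces at the await, just like in synchronous code.
        return await fake_async_embed(texts)
    except ValueError as e:
        print(f"Invalid input: {e}")
        return None


ok_result = asyncio.run(safe_embed(["hello"]))
failed_result = asyncio.run(safe_embed([]))
print(ok_result, failed_result)
```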

Development

# Install prerequisites

sudo apt-get install patchelf

# Install cargo if not already installed.

# Set up a Python virtual environment

python -m venv .venv

source .venv/bin/activate

# Install development dependencies

pip install maturin[patchelf] pytest requests numpy

# Build and install the Rust extension in development mode

maturin develop

cargo fmt

# Run tests

pytest tests

Contributions

Feel free to contribute to this repo; tag @michaelfeil for review.

License

MIT License

