Gradient-Efficient Knowledge Optimization - Smart training for any LLM


GEKO: Gradient-Efficient Knowledge Optimization

A plug-and-play training framework that makes LLM training more efficient by weighting each sample according to how much the model can still learn from it.

Just as LoRA changed fine-tuning, GEKO aims to change training.

Key Insight

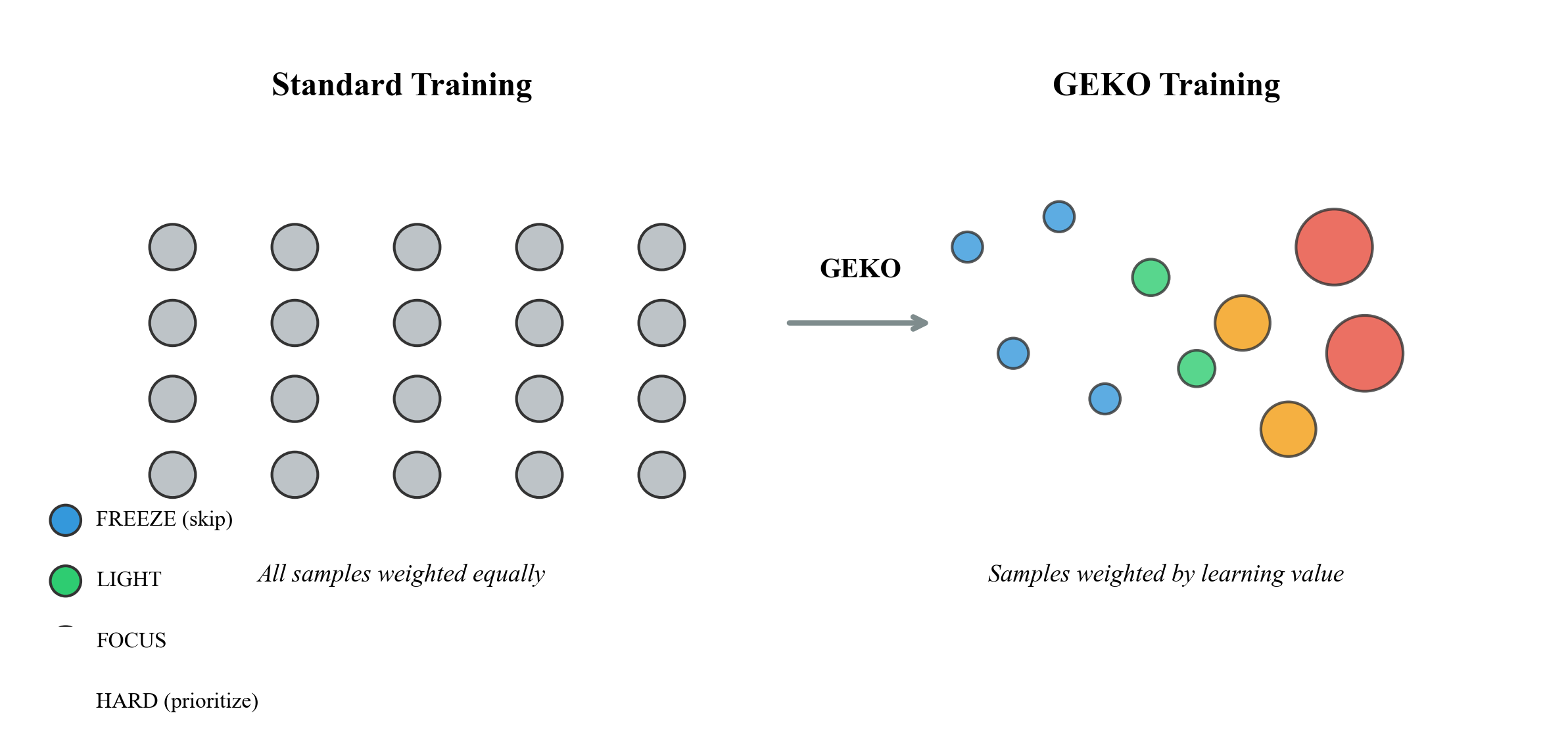

Traditional training treats all samples equally:

$$\mathcal{L}_{\text{standard}} = \frac{1}{N} \sum_{i=1}^{N} \ell(x_i, y_i)$$

GEKO weights samples by their learning value:

$$\mathcal{L}_{\text{GEKO}} = \frac{1}{N} \sum_{i=1}^{N} w_i \cdot \ell(x_i, y_i) \quad \text{where} \quad w_i = f(\text{bucket}_i)$$
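To make the difference between the two objectives concrete, here is a minimal pure-Python sketch of both formulas. The function names `standard_loss` and `geko_loss` and the sample values are illustrative assumptions, not part of the `geko` API.

```python
def standard_loss(losses):
    """Plain mean of the per-sample losses: (1/N) * sum(loss_i)."""
    return sum(losses) / len(losses)


def geko_loss(losses, weights):
    """Weighted objective: (1/N) * sum(w_i * loss_i).

    As in the formula above, the sum is divided by N,
    not by the sum of the weights.
    """
    if len(losses) != len(weights):
        raise ValueError("losses and weights must have the same length")
    return sum(w * l for w, l in zip(weights, losses)) / len(losses)


# Illustrative values only: three samples with weights 0, 1 and 3.
losses = [0.2, 1.0, 2.0]
weights = [0, 1, 3]
print(standard_loss(losses))
print(geko_loss(losses, weights))
```

A sample with weight 0 contributes nothing to the objective, which is why its backward pass can be skipped.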

Installation

    pip install gekolib

Quick Start

    from geko import GEKOTrainer, GEKOConfig
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model = AutoModelForCausalLM.from_pretrained("gpt2")
    tokenizer = AutoTokenizer.from_pretrained("gpt2")

    # your_dataset is a placeholder for your own training dataset
    trainer = GEKOTrainer(
        model=model,
        tokenizer=tokenizer,
        train_dataset=your_dataset,
    )
    trainer.train()

    print(trainer.get_efficiency_report())

The GEKO Algorithm

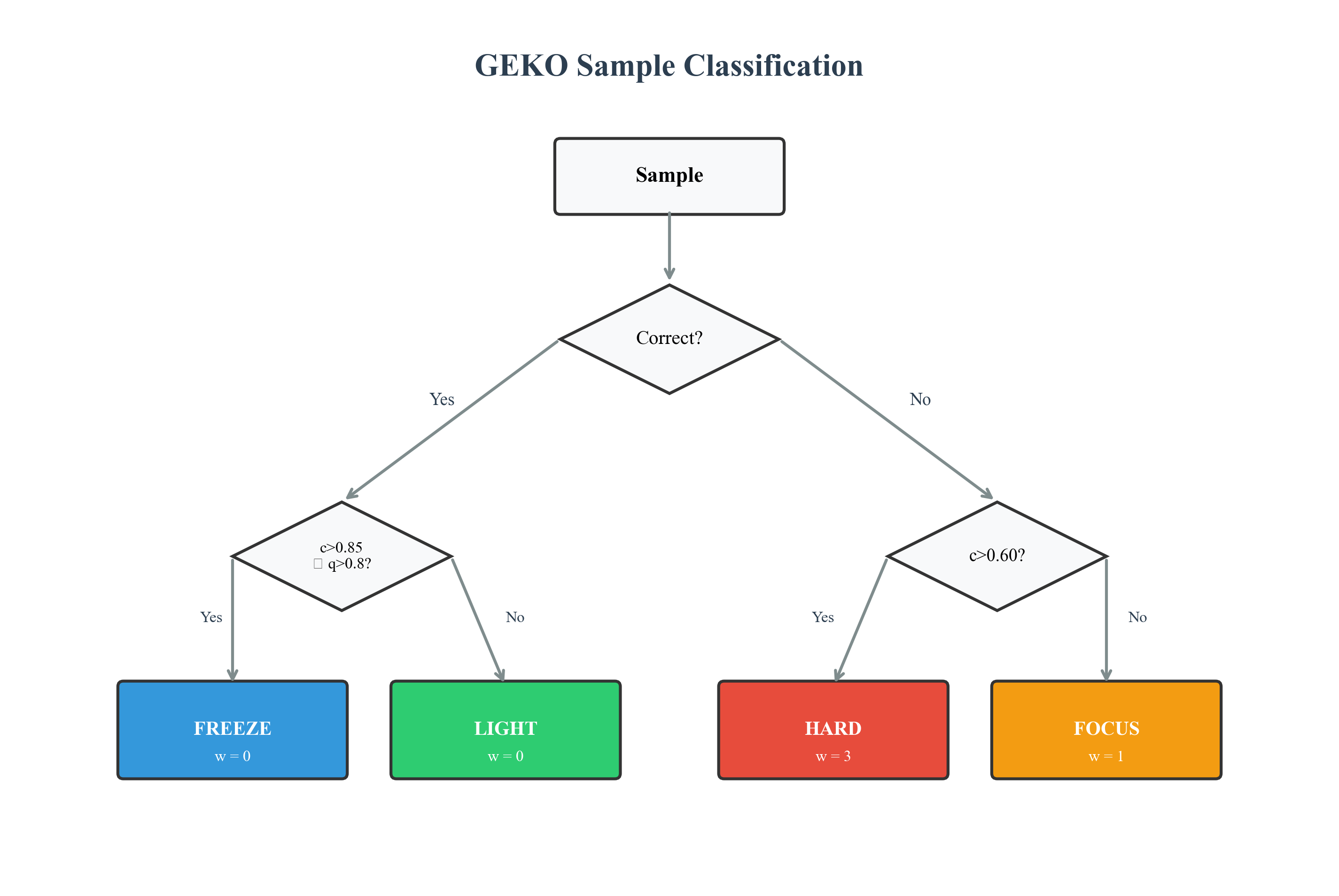

Bucket Definitions

| Bucket | Condition | Weight | Description |
|---|---|---|---|
| 🔵 FREEZE | $correct \land c > 0.85 \land q > 0.80$ | $w = 0$ | Mastered |
| 🟢 LIGHT | $correct \land (c \leq 0.85 \lor q \leq 0.80)$ | $w = 0$ | Uncertain |
| 🟠 FOCUS | $\neg correct \land c \leq 0.60$ | $w = 1$ | Wrong |
| 🔴 HARD | $\neg correct \land c > 0.60$ | $w = 3$ | Confident-wrong |
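The conditions in the table can be read as a small decision function. The sketch below assumes that $c$ is the model's confidence in its prediction and $q$ is the sample's Q-value; the table itself does not define either symbol. The names `Bucket` and `assign_bucket` are hypothetical and are not taken from the `geko` package.

```python
from enum import Enum


class Bucket(Enum):
    FREEZE = "FREEZE"
    LIGHT = "LIGHT"
    FOCUS = "FOCUS"
    HARD = "HARD"


def assign_bucket(correct, confidence, q_value,
                  c_freeze=0.85, q_freeze=0.80, c_hard=0.60):
    """Map one sample to a bucket using the thresholds from the table.

    correct    -- whether the model's prediction was right
    confidence -- assumed meaning of c in the table
    q_value    -- assumed meaning of q in the table
    """
    if correct:
        # Correct and both thresholds strictly exceeded: mastered.
        if confidence > c_freeze and q_value > q_freeze:
            return Bucket.FREEZE
        # Correct, but confidence or Q-value is still low.
        return Bucket.LIGHT
    # Wrong prediction: split on how confident the model was.
    return Bucket.HARD if confidence > c_hard else Bucket.FOCUS


print(assign_bucket(True, 0.95, 0.90))
print(assign_bucket(False, 0.75, 0.10))
```

The comparisons are strict, so a correct sample with confidence exactly 0.85 lands in LIGHT, matching the $\leq$ in the table.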

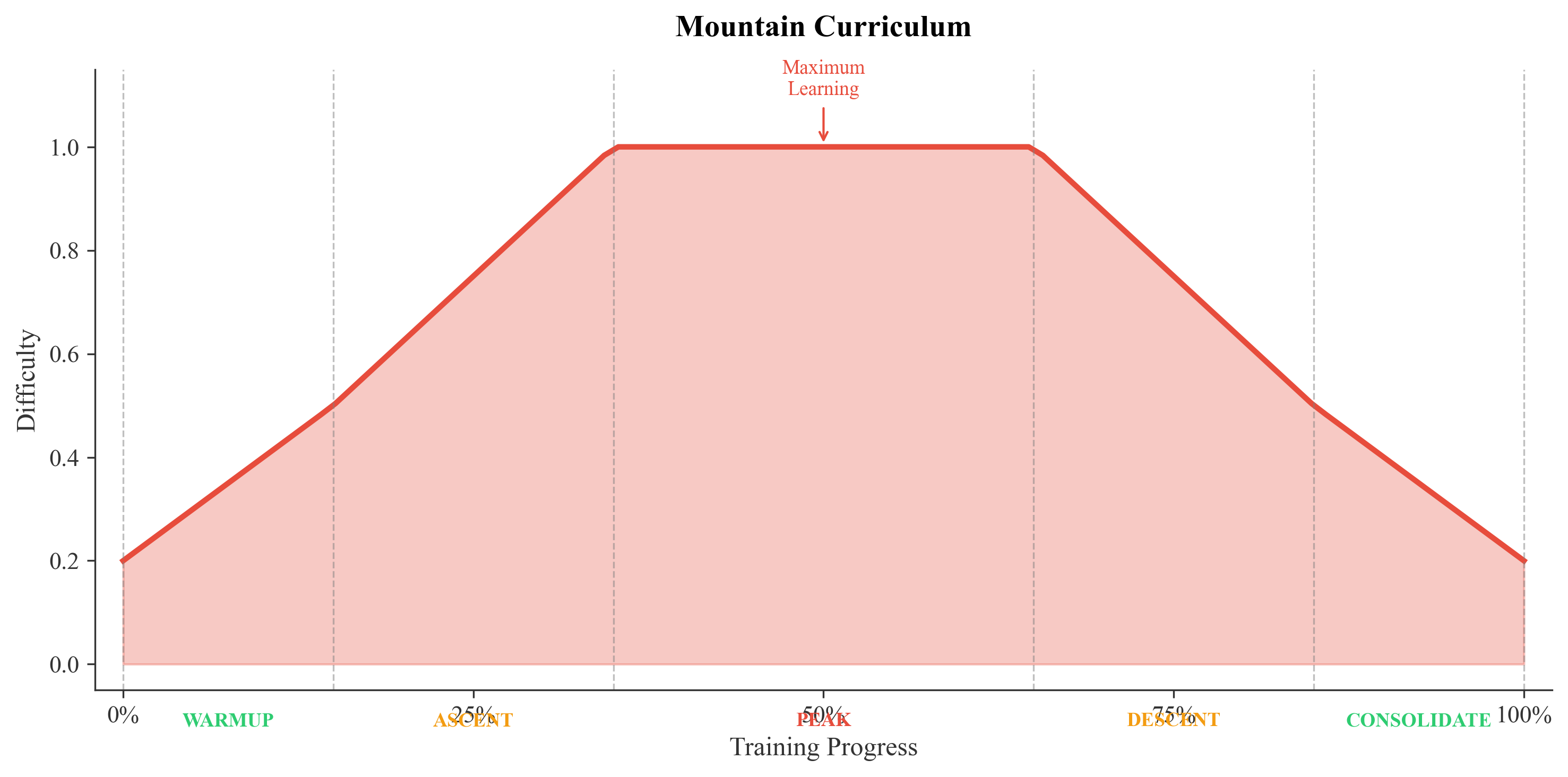

Mountain Curriculum

Five Phases

| Phase | Progress | HARD | FOCUS | LIGHT | Strategy |
|---|---|---|---|---|---|
| WARMUP | 0-15% | 1 | 2 | 3 | Build foundation |
| ASCENT | 15-35% | 2 | 3 | 1 | Increase difficulty |
| PEAK | 35-65% | 5 | 2 | 0 | Maximum learning |
| DESCENT | 65-85% | 2 | 3 | 1 | Reduce difficulty |
| CONSOLIDATE | 85-100% | 1 | 2 | 3 | Reinforce |
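As a rough illustration, the schedule above can be expressed as a lookup from training progress to per-bucket values. This sketch assumes that progress is a fraction in [0, 1] and that a boundary value such as 15% belongs to the later phase, since the table's ranges share their endpoints. `PHASES` and `phase_for` are hypothetical names, not part of the `geko` API.

```python
# (phase, upper progress bound, HARD, FOCUS, LIGHT), copied from the table.
PHASES = [
    ("WARMUP", 0.15, 1, 2, 3),
    ("ASCENT", 0.35, 2, 3, 1),
    ("PEAK", 0.65, 5, 2, 0),
    ("DESCENT", 0.85, 2, 3, 1),
    ("CONSOLIDATE", 1.00, 1, 2, 3),
]


def phase_for(progress):
    """Return (phase name, per-bucket values) for progress in [0, 1]."""
    if not 0.0 <= progress <= 1.0:
        raise ValueError("progress must be between 0 and 1")
    for name, upper, hard, focus, light in PHASES:
        # Assumption: a boundary value falls into the later phase.
        if progress < upper:
            return name, {"HARD": hard, "FOCUS": focus, "LIGHT": light}
    # progress == 1.0 falls through the loop and ends in the last phase.
    name, _, hard, focus, light = PHASES[-1]
    return name, {"HARD": hard, "FOCUS": focus, "LIGHT": light}


for p in (0.0, 0.2, 0.5, 0.7, 1.0):
    print(p, phase_for(p))
```

The values rise toward PEAK and fall again afterwards, which is the "mountain" shape the section title refers to.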

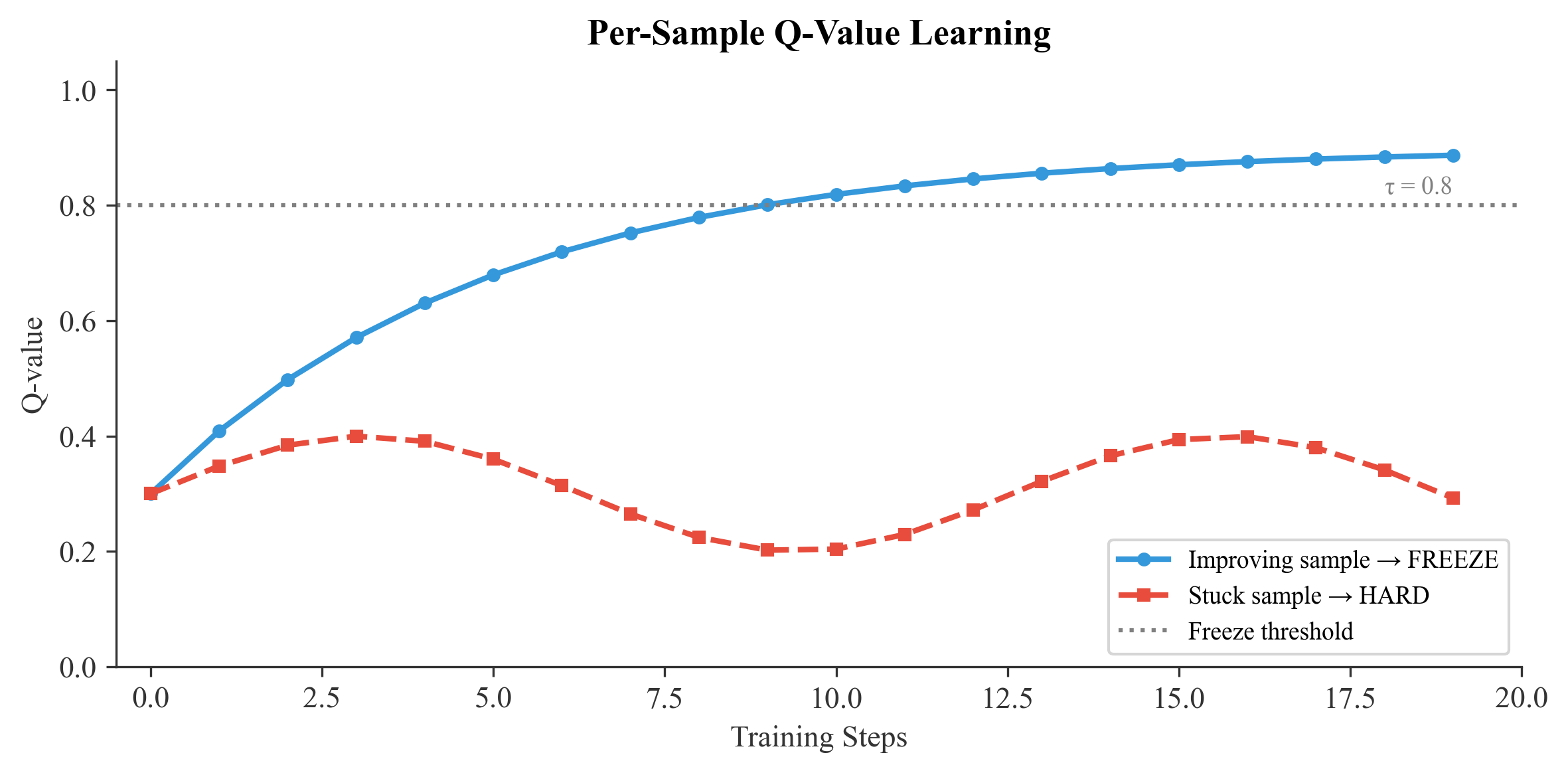

Q-Value Learning

Each sample maintains a Q-value representing "learnability":

$$Q_{t+1}(s) = (1 - \alpha) \cdot Q_t(s) + \alpha \cdot \left(1 - \frac{\ell_t(s)}{\ell_{max}}\right)$$
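The update is an exponential moving average of a normalised "how low is the loss" score. A minimal sketch follows, assuming $\alpha$ is a smoothing rate in (0, 1] and $\ell_{max}$ is a fixed positive normalisation constant. The default values and the clamping of the ratio to [0, 1] are assumptions added here so that the Q-value stays in range; neither is stated in the formula.

```python
def update_q(q, loss, alpha=0.1, loss_max=10.0):
    """One step of Q_{t+1} = (1 - alpha) * Q_t + alpha * (1 - loss / loss_max).

    alpha and loss_max defaults are illustrative, not GEKO's actual settings.
    """
    if loss_max <= 0:
        raise ValueError("loss_max must be positive")
    # Assumption: clamp the normalised loss so Q stays within [0, 1].
    normalised = min(max(loss / loss_max, 0.0), 1.0)
    return (1.0 - alpha) * q + alpha * (1.0 - normalised)


q = 0.5
for loss in (4.0, 2.0, 1.0, 0.5):
    q = update_q(q, loss)
    print(round(q, 4))
```

With a falling loss the Q-value drifts upward, and with a persistently high loss it decays toward 0.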

Efficiency Analysis

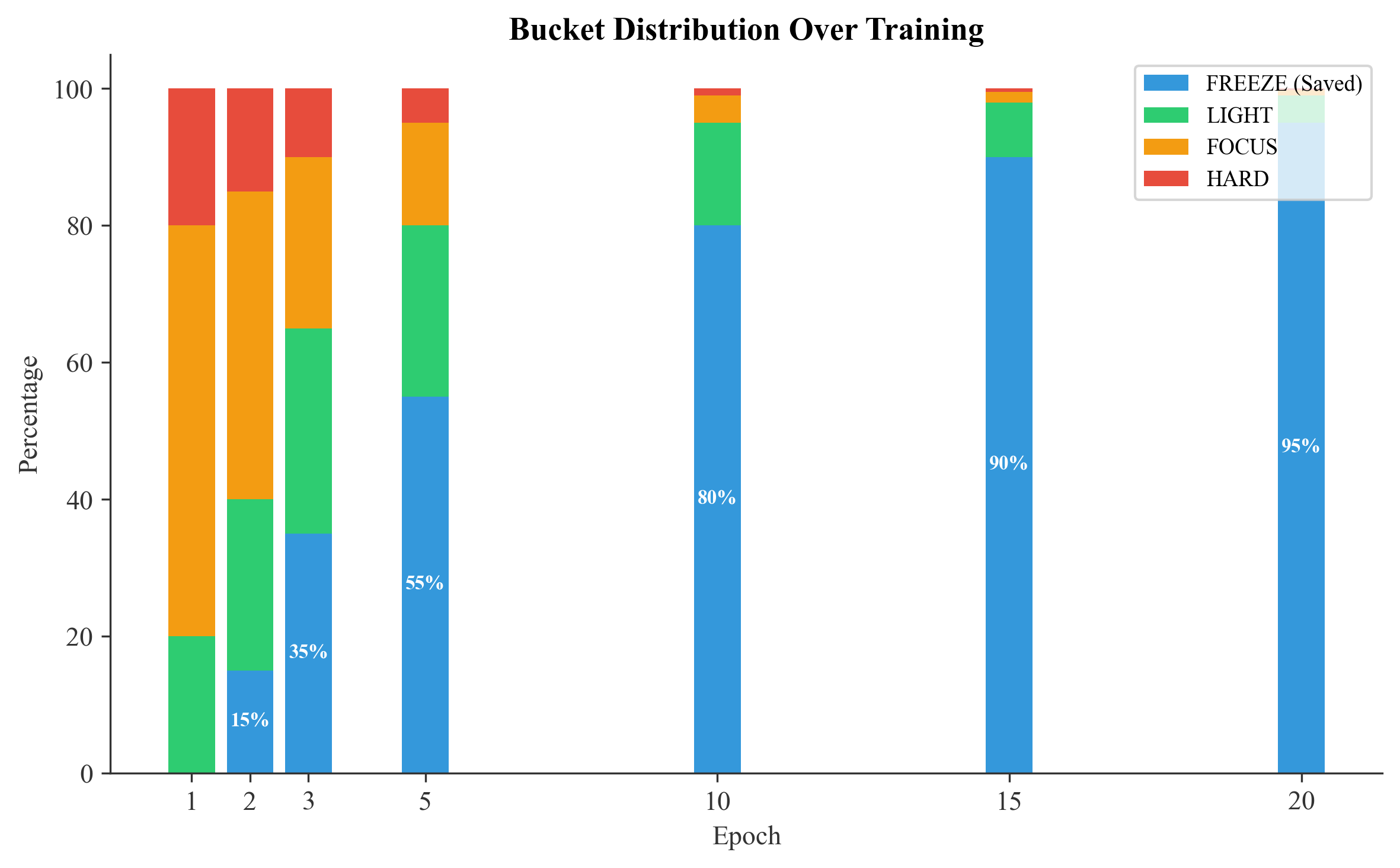

Training Progression

| Epoch | FREEZE | LIGHT | FOCUS | HARD | Compute Saved |
|---|---|---|---|---|---|
| 1 | 0% | 20% | 60% | 20% | 0% |
| 2 | 15% | 25% | 45% | 15% | 15% |
| 3 | 35% | 30% | 25% | 10% | 35% |
| 5 | 55% | 25% | 15% | 5% | 55% |
| 10 | 80% | 15% | 4% | 1% | 80% |

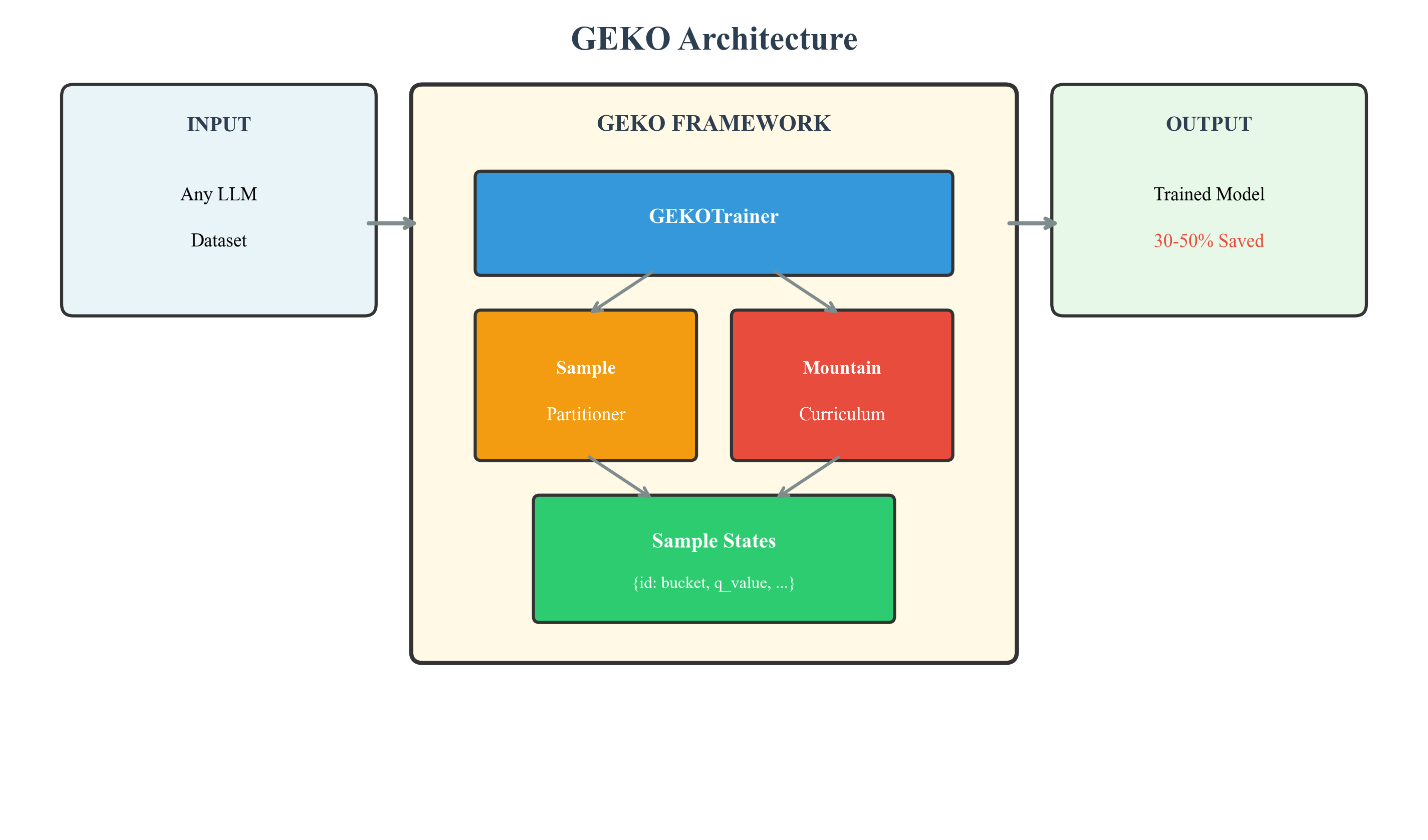

Architecture

Theoretical Guarantees

Convergence

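Under standard assumptions, GEKO converges as long as every sample outside FREEZE keeps receiving weight throughout training, so that its cumulative weight diverges: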

$$\sum_{t=1}^{\infty} w_t^{(s)} = \infty \quad \forall s \notin \text{FREEZE}$$

Efficiency Bound

$$T_{GEKO} \leq T_{standard} \cdot (1 - \mathbb{E}[F])$$

Here $\mathbb{E}[F]$ is the expected fraction of samples in the FREEZE bucket.
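As a worked example of the bound, the sketch below estimates $\mathbb{E}[F]$ as the plain average of per-epoch freeze fractions and plugs it into the inequality. The averaging step and the name `geko_time_bound` are assumptions made for illustration. The inputs are the FREEZE percentages for epochs 1 to 3 from the Training Progression table.

```python
def geko_time_bound(t_standard, freeze_fractions):
    """Upper bound T_standard * (1 - E[F]) on GEKO training time.

    E[F] is estimated here as the mean of the given per-epoch
    freeze fractions, which is an assumption of this sketch.
    """
    if not freeze_fractions:
        raise ValueError("need at least one freeze fraction")
    if any(not 0.0 <= f <= 1.0 for f in freeze_fractions):
        raise ValueError("freeze fractions must lie in [0, 1]")
    expected_freeze = sum(freeze_fractions) / len(freeze_fractions)
    return t_standard * (1.0 - expected_freeze)


# FREEZE share for epochs 1, 2 and 3 of the progression table.
print(geko_time_bound(100.0, [0.0, 0.15, 0.35]))
```

Because the freeze fraction grows over training, longer runs yield a larger average and therefore a tighter bound.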

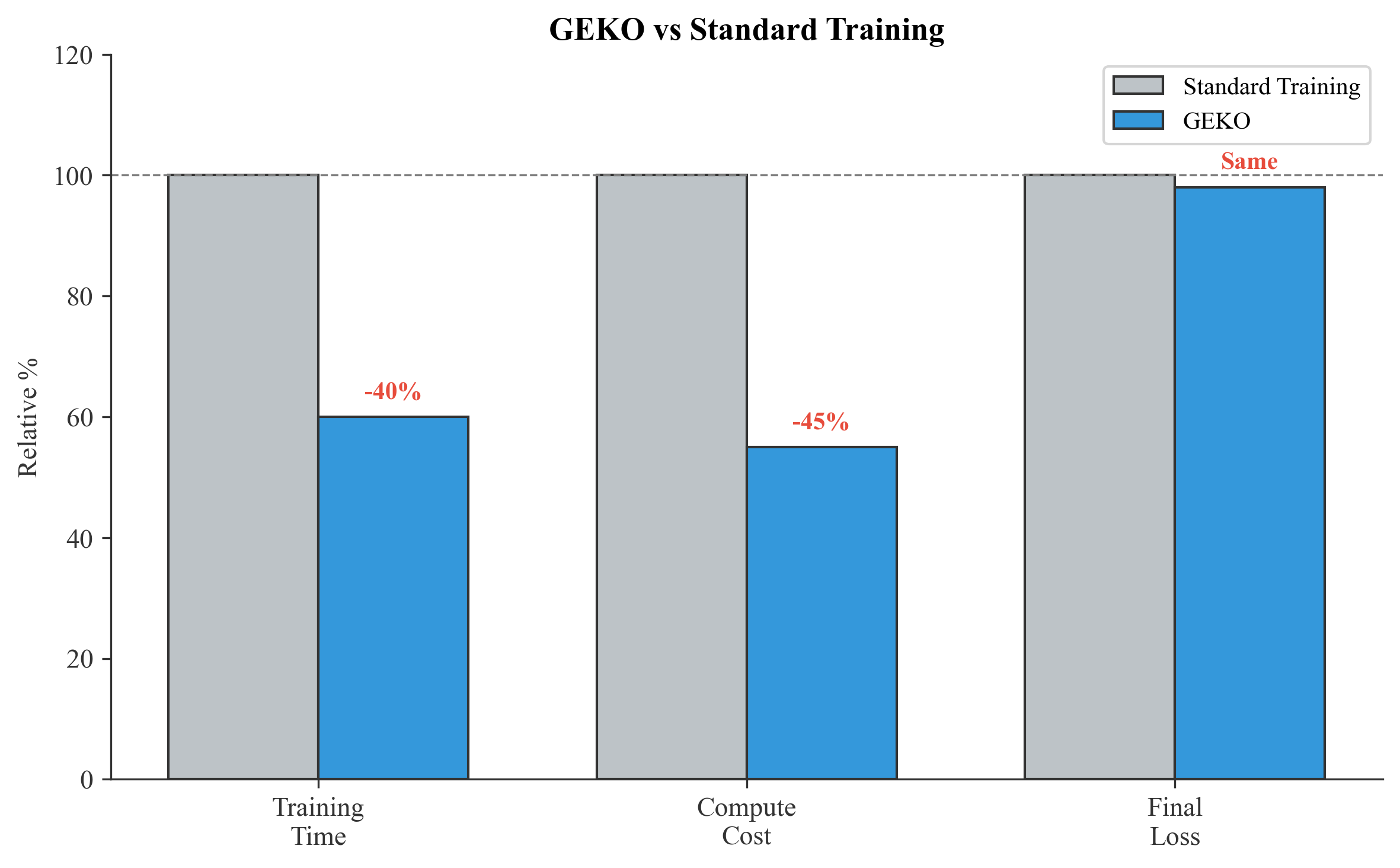

Results

| Metric | Standard | GEKO | Improvement |
|---|---|---|---|
| Training Time | 100% | 50-70% | 30-50% faster |
| Compute Cost | 100% | 50-70% | 30-50% cheaper |
| Final Loss | $\ell^*$ | $\leq \ell^*$ | Equal or better |

Citation

    @software{geko2026,
      author = {Syed Abdur Rehman},
      title = {GEKO: Gradient-Efficient Knowledge Optimization},
      year = {2026},
      url = {https://github.com/ra2157218-boop/GEKO},
      doi = {10.5281/zenodo.18177743}
    }

License

Apache 2.0

GEKO - Train smarter, not harder.


File details

Details for the file gekolib-0.1.1.tar.gz.

File metadata

- Download URL: gekolib-0.1.1.tar.gz

- Upload date:

- Size: 20.5 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 6d7c1a3957f4d43d415bd2cb00fa1172817ae7e48d9601ebb9fd4e04bf03110a |
| MD5 | b253d5e6d385269ead12542d0e60e59d |
| BLAKE2b-256 | baf647ececab14b8d6ecd25f64f3579fc53e6dfa1791a68da0e74e1be307f17d |

File details

Details for the file gekolib-0.1.1-py3-none-any.whl.

File metadata

- Download URL: gekolib-0.1.1-py3-none-any.whl

- Upload date:

- Size: 20.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 180ed86c0347e2ca1d9b91fa6259dcdf07d336a1903651e403ec1a32a027fedd |
| MD5 | 4d63bb74088f9da85809c9a1336c74ce |
| BLAKE2b-256 | 60a50e289b10aa1a3563686711f60334bfffa32a3f33f6cdc0def8a499ca4975 |