Comprehensive OpenTelemetry auto-instrumentation for LLM/GenAI applications


TraceVerde

The most comprehensive OpenTelemetry auto-instrumentation library for LLM/GenAI applications

"Trace" comes from OpenTelemetry traces. "Verde" means green, for sustainable, transparent AI observability.

Documentation | Examples | Discord | PyPI

Get Started in 30 Seconds

pip install genai-otel-instrument

import genai_otel

genai_otel.instrument()

# Your existing code works unchanged - traces, metrics, and costs are captured automatically

import openai

client = openai.OpenAI()

response = client.chat.completions.create(model="gpt-4o-mini", messages=[{"role": "user", "content": "Hello!"}])

That's it. No wrappers, no decorators, no config files. Every LLM call, database query, and agent interaction is automatically traced with full cost breakdown.

Why TraceVerde?

| Feature | TraceVerde | OpenLIT | Traceloop/OpenLLMetry | Langfuse |
|---|---|---|---|---|
| Zero-code setup | Yes | Yes | Yes | SDK required |
| LLM providers | 19+ | 25+ | 15+ | Via integrations |
| Multi-agent frameworks | 8 (CrewAI, LangGraph, ADK, AutoGen, OpenAI Agents, Pydantic AI, etc.) | Limited | Limited | Limited |
| Cost tracking | Automatic (1,050+ models) | Manual config | Manual config | Manual config |
| GPU metrics (NVIDIA + AMD) | Yes | No | No | No |
| MCP tool instrumentation | Yes (databases, caches, vector DBs, queues) | Limited | Limited | No |
| Evaluation (PII, toxicity, bias, hallucination, prompt injection) | Built-in (6 detectors) | No | No | Separate service |
| OpenTelemetry native | Yes | Yes | Yes | Partial |
| License | Apache-2.0 | Apache-2.0 | Apache-2.0 | MIT |

What Gets Instrumented?

LLM Providers (19+)

OpenAI, OpenRouter, Anthropic, Google AI, Google GenAI, AWS Bedrock, Azure OpenAI, Cohere, Mistral AI, Together AI, Groq, Ollama, Vertex AI, Replicate, HuggingFace, SambaNova, Sarvam AI, Hyperbolic, LiteLLM

See all providers with examples >>

Multi-Agent Frameworks (8)

CrewAI, LangGraph, Google ADK, AutoGen, AutoGen AgentChat, OpenAI Agents SDK, Pydantic AI, AWS Bedrock Agents

See all frameworks with examples >>

MCP Tools (20+)

Databases: PostgreSQL, MySQL, MongoDB, SQLAlchemy, TimescaleDB, OpenSearch, Elasticsearch, FalkorDB | Caching: Redis | Queues: Kafka, RabbitMQ | Storage: MinIO | Vector DBs: Pinecone, Weaviate, Qdrant, ChromaDB, Milvus, FAISS, LanceDB

Built-in Evaluation (6 Detectors)

PII Detection (GDPR/HIPAA/PCI-DSS), Toxicity Detection, Bias Detection, Prompt Injection Detection, Restricted Topics, Hallucination Detection

See all evaluation features with examples >>

Screenshots

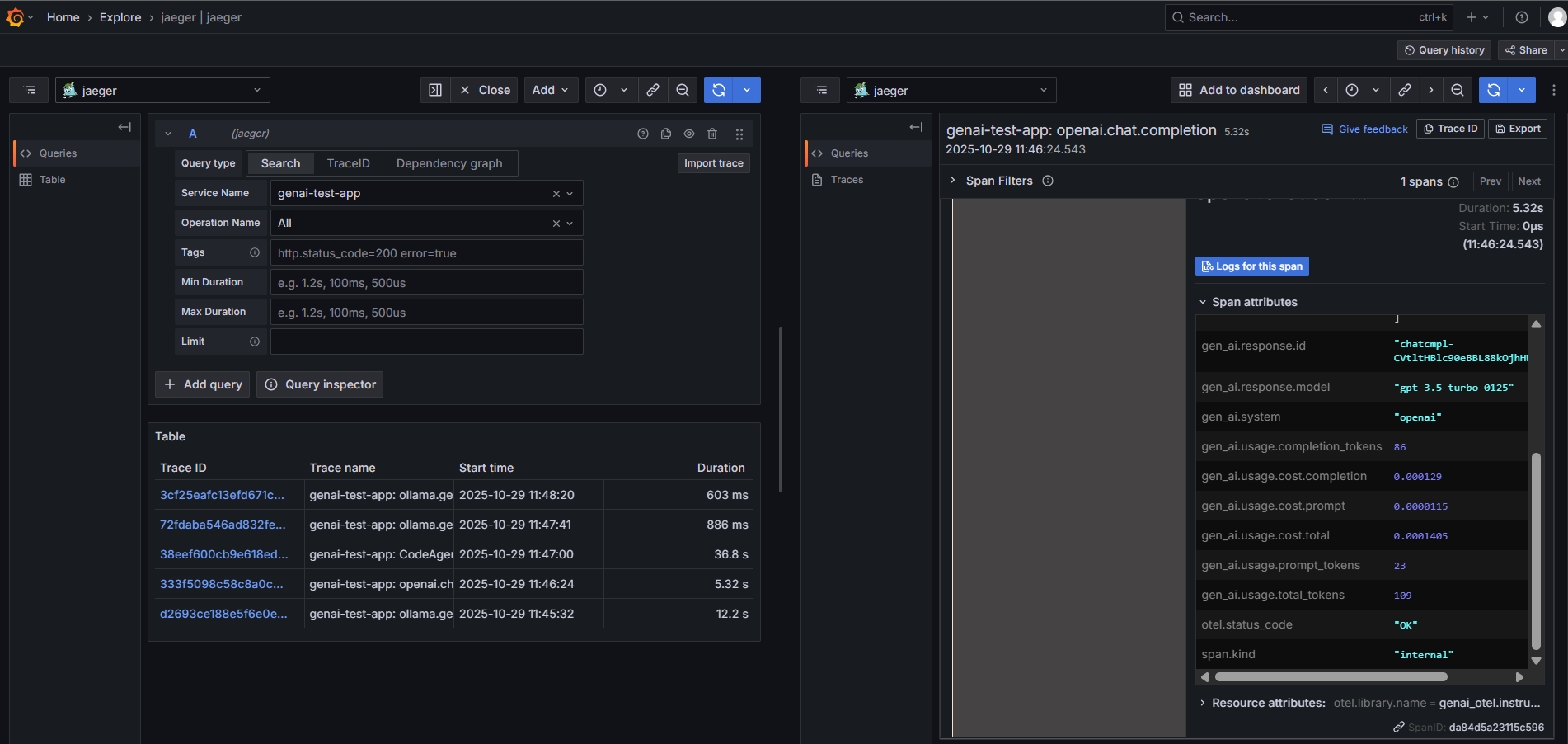

OpenAI traces with token usage, costs, and latency

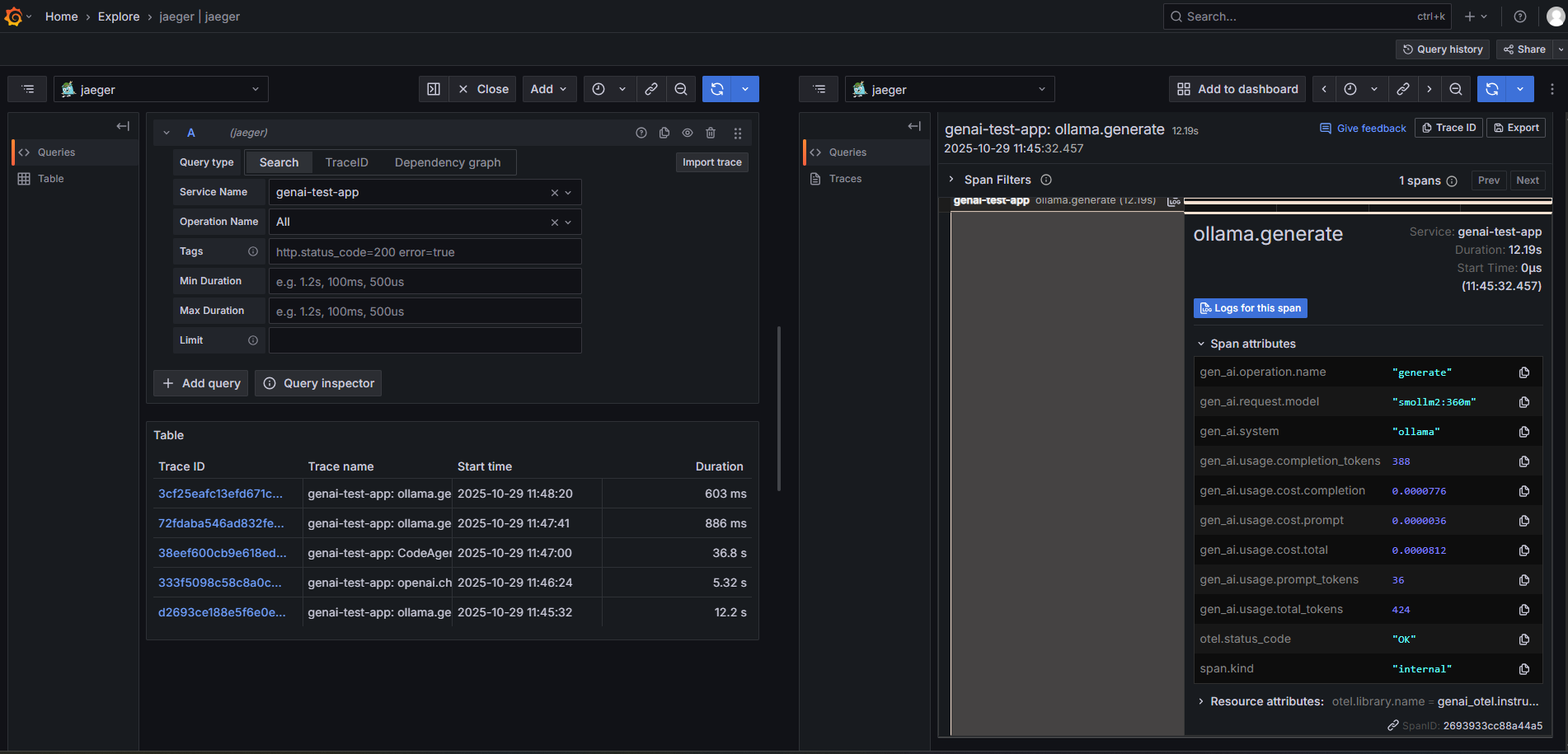

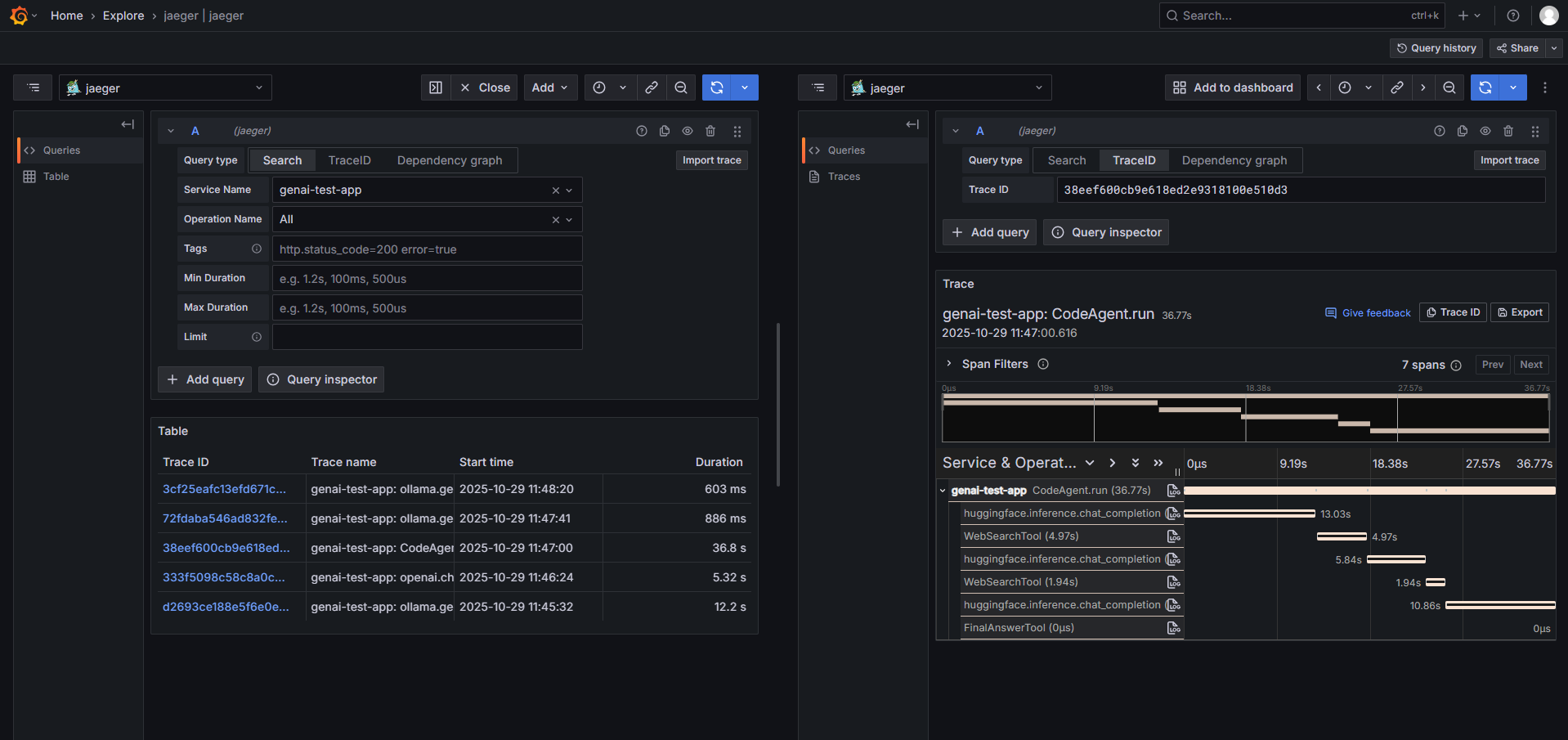

More screenshots

Ollama (Local LLM)

SmolAgents with Tool Calls

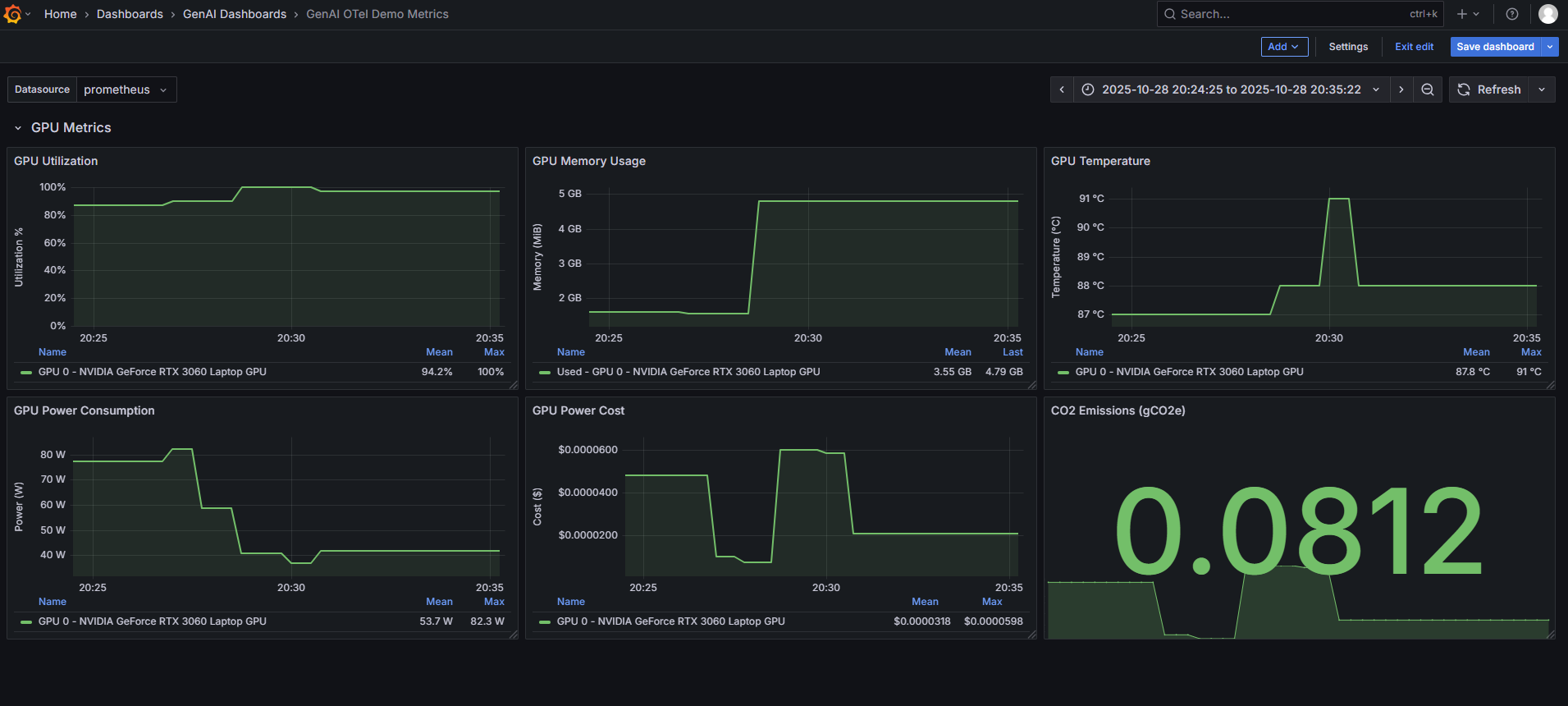

GPU Metrics

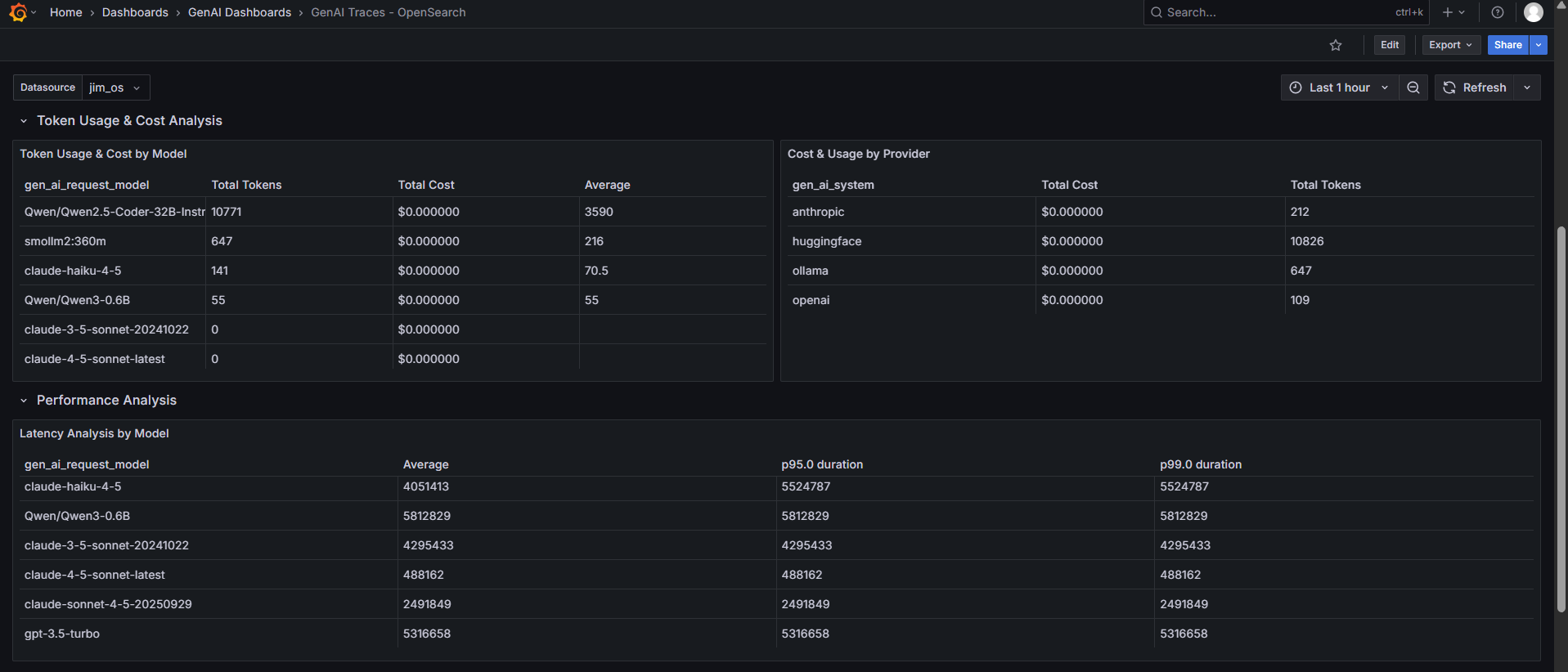

OpenSearch Dashboard

Key Features

Automatic Cost Tracking

1,050+ models across 30+ providers with per-request cost breakdown. Supports differential pricing (prompt vs completion), reasoning tokens, cache pricing, and custom model pricing.

# Cost tracking is enabled by default - just instrument and go

genai_otel.instrument()

# Or add custom pricing for proprietary models (set in the shell, before the app starts)

export GENAI_CUSTOM_PRICING_JSON='{"chat":{"my-model":{"promptPrice":0.001,"completionPrice":0.002}}}'
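As a rough illustration of differential pricing, the sketch below builds the same custom-pricing JSON from Python and estimates a request's cost from its token counts. The `estimate_cost` helper is hypothetical and not part of the library, and the assumption that prices are quoted per 1,000 tokens is ours; check the configuration reference for the actual unit.

```python
import json
import os

# Same structure as the GENAI_CUSTOM_PRICING_JSON example above.
custom_pricing = {
    "chat": {
        "my-model": {"promptPrice": 0.001, "completionPrice": 0.002},
    }
}

# Set the variable before calling genai_otel.instrument() so it is
# already present in the environment when instrumentation starts.
os.environ["GENAI_CUSTOM_PRICING_JSON"] = json.dumps(custom_pricing)


def estimate_cost(pricing, model, prompt_tokens, completion_tokens, per_tokens=1000):
    """Hypothetical helper: price prompt and completion tokens separately.

    per_tokens=1000 is an assumption about the unit the prices are quoted in.
    """
    rates = pricing["chat"][model]
    prompt_cost = prompt_tokens / per_tokens * rates["promptPrice"]
    completion_cost = completion_tokens / per_tokens * rates["completionPrice"]
    return prompt_cost + completion_cost


cost = estimate_cost(custom_pricing, "my-model", prompt_tokens=1200, completion_tokens=300)
print(f"estimated cost: ${cost:.6f}")
```

Building the JSON with `json.dumps` avoids the quoting mistakes that are easy to make when writing the value by hand in a shell.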

GPU Metrics (NVIDIA + AMD)

Real-time monitoring of utilization, memory, temperature, power, PCIe throughput, throttling, and ECC errors. Multi-GPU aggregate metrics included.

pip install "genai-otel-instrument[gpu]" # NVIDIA

pip install "genai-otel-instrument[amd-gpu]" # AMD

Multi-Agent Tracing

Complete span hierarchy for agent frameworks with automatic context propagation:

Crew Execution
+-- Agent: Senior Researcher (gpt-4)
|   +-- Task: Research OpenTelemetry
|       +-- openai.chat.completions (tokens: 1250, cost: $0.03)
+-- Agent: Technical Writer (ollama:llama2)
    +-- Task: Write blog post
        +-- ollama.chat (tokens: 890, cost: $0.00)

Multimodal Observability (v1.1.0)

First-class capture of image, audio, video, and document content parts on OpenAI, Anthropic, Google Gemini, and Groq spans. Bytes are offloaded to your configured object store (MinIO / S3 / filesystem / HTTP) and referenced from spans by URI — they never appear inline in span attributes.

# Opt in (default is off — text-only behaviour is byte-identical to 1.0.x)

export GENAI_OTEL_MEDIA_CAPTURE_MODE=full

export GENAI_OTEL_MEDIA_STORE=minio

export GENAI_OTEL_MEDIA_STORE_ENDPOINT=http://localhost:9000

export GENAI_OTEL_MEDIA_STORE_ACCESS_KEY=...

export GENAI_OTEL_MEDIA_STORE_SECRET_KEY=...

# Optional: plug in a redactor before upload

export GENAI_OTEL_MEDIA_REDACTOR=genai_otel.media.redactors.face_blur

Spans get a flat, queryable attribute namespace, gen_ai.prompt.{n}.content.{m}.{type, media_uri, media_mime_type, media_byte_size, media_source}, which is being proposed upstream to the OpenTelemetry semantic conventions (issue #3672).
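The sketch below shows how that namespace could expand for one prompt with a text part and an image part. The `flatten_media_attributes` helper, the input message shape, zero-based indices, and all sample values (URI, size, source) are assumptions made for illustration; only the attribute name pattern comes from the description above.

```python
# Field names taken from the attribute namespace described above.
MEDIA_FIELDS = ("type", "media_uri", "media_mime_type", "media_byte_size", "media_source")


def flatten_media_attributes(prompts):
    """Hypothetical helper: map prompts and their content parts to flat attributes.

    n indexes the prompt message and m indexes the content part within it.
    Zero-based indexing is an assumption.
    """
    attributes = {}
    for n, message in enumerate(prompts):
        for m, part in enumerate(message["content"]):
            for name in MEDIA_FIELDS:
                if name in part:
                    attributes[f"gen_ai.prompt.{n}.content.{m}.{name}"] = part[name]
    return attributes


# Placeholder input: one message with a text part and an offloaded image part.
prompts = [
    {
        "content": [
            {"type": "text"},
            {
                "type": "image",
                "media_uri": "s3://example-bucket/media/abc123.png",  # placeholder URI
                "media_mime_type": "image/png",
                "media_byte_size": 48213,  # placeholder size
                "media_source": "upload",  # placeholder value
            },
        ]
    }
]

attrs = flatten_media_attributes(prompts)
for key in sorted(attrs):
    print(key, "=", attrs[key])
```

Because every value sits under its own flat key, a backend can filter on a single attribute such as the MIME type of the second content part without parsing nested JSON.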

Safety & Evaluation

genai_otel.instrument(
    enable_pii_detection=True,  # GDPR/HIPAA/PCI-DSS compliance
    enable_toxicity_detection=True,  # Perspective API + Detoxify
    enable_bias_detection=True,  # 8 bias categories
    enable_prompt_injection_detection=True,
    enable_hallucination_detection=True,
    enable_restricted_topics=True,
)

Evaluation guide with 50+ examples >>

Configuration

# Required

export OTEL_SERVICE_NAME=my-llm-app

export OTEL_EXPORTER_OTLP_ENDPOINT=http://localhost:4318

# Optional

export GENAI_ENABLE_GPU_METRICS=true

export GENAI_ENABLE_COST_TRACKING=true

export GENAI_SAMPLING_RATE=0.5 # Reduce volume in production

export GENAI_ENABLED_INSTRUMENTORS=openai,crewai # Select specific instrumentors

Full configuration reference >>
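A small preflight check can catch a missing required variable before the application starts. The sketch below is a hypothetical helper, not part of the library. It assumes that `GENAI_SAMPLING_RATE` is a fraction between 0 and 1, which the `0.5` example suggests but the text above does not state.

```python
import os

# The two variables listed as required above.
REQUIRED = ("OTEL_SERVICE_NAME", "OTEL_EXPORTER_OTLP_ENDPOINT")


def check_config(env):
    """Hypothetical preflight check: return a list of configuration problems."""
    problems = [f"{name} is not set" for name in REQUIRED if not env.get(name)]

    rate = env.get("GENAI_SAMPLING_RATE")
    if rate is not None:
        try:
            value = float(rate)
        except ValueError:
            problems.append("GENAI_SAMPLING_RATE is not a number")
        else:
            # Assumption: the rate is a fraction of traces to keep.
            if not 0.0 <= value <= 1.0:
                problems.append("GENAI_SAMPLING_RATE is outside 0.0-1.0")
    return problems


for problem in check_config(os.environ):
    print("config problem:", problem)
```

Running this before `genai_otel.instrument()` gives a readable message at startup when a variable is missing.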

Backend Integration

Works with any OpenTelemetry-compatible backend:

Jaeger, Zipkin, Prometheus, Grafana, Datadog, New Relic, Honeycomb, AWS X-Ray, Google Cloud Trace, Elastic APM, Splunk, SigNoz, self-hosted OTel Collector

Pre-built Grafana dashboard templates included.

Examples

90+ ready-to-run examples covering every provider, framework, and evaluation feature:

examples/

+-- openai/ # OpenAI chat, embeddings

+-- anthropic/ # Anthropic + PII/toxicity detection

+-- ollama/ # Local models + all evaluation features

+-- crewai_example.py # Multi-agent crew orchestration

+-- langgraph_example.py # Stateful graph workflows

+-- google_adk_example.py # Google Agent Development Kit

+-- autogen_example.py # Microsoft AutoGen agents

+-- pii_detection/ # 10 PII examples (GDPR, HIPAA, PCI-DSS)

+-- toxicity_detection/ # 8 toxicity examples

+-- bias_detection/ # 8 bias examples (hiring compliance, etc.)

+-- prompt_injection/ # 6 injection defense examples

+-- hallucination/ # 4 hallucination detection examples

+-- ... # And many more

Who Uses TraceVerde?

TraceVerde is used by developers and teams building production GenAI applications. If you're using TraceVerde, we'd love to hear from you!

Add your company | Join Discord

Community

- Documentation - Full guides, API reference, and tutorials

- Discord - Chat with the community

- GitHub Issues - Bug reports and feature requests

- Contributing - How to contribute

License

Copyright 2025 Kshitij Thakkar. Licensed under the Apache License 2.0.
