gguf node for comfyui

install it via pip/pip3

pip install gguf-node

open the user menu with the command below (if the py command is not available, use python or python3 instead)

py -m gguf_node

Please select:

- download the full pack

- clone the node only

Enter your choice (1 to 2): _
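if you are not sure which launcher exists on your machine, the check can be scripted. this is only a minimal sketch: the helper names `find_python_launcher` and `menu_command` are made up for illustration and are not part of gguf-node.

```python
import shutil


def find_python_launcher(which=shutil.which):
    """Return the first launcher found on PATH (py, python, python3), or None."""
    for name in ("py", "python", "python3"):
        if which(name):
            return name
    return None


def menu_command(launcher):
    """Build the argument list that opens the gguf_node user menu."""
    return [launcher, "-m", "gguf_node"]


if __name__ == "__main__":
    launcher = find_python_launcher()
    if launcher is None:
        print("no python launcher found on PATH")
    else:
        # Print the command instead of running it, so nothing starts unasked.
        print(" ".join(menu_command(launcher)))
```

the `which` parameter is injectable only so the lookup can be tested without touching the real PATH.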

for new/all user(s)

opt 1 to download the compressed comfy pack (7z), decompress it, and run the .bat file straight away (the easiest option)

for existing user/developer(s)

opt 2 to clone the gguf repo to the current directory (either navigate to ./ComfyUI/custom_nodes first or drag and drop there after the clone)

alternatively, you could execute the git clone command to perform that task (see below):

- navigate to ./ComfyUI/custom_nodes

- clone the gguf repo to that folder by

git clone https://github.com/calcuis/gguf
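the same clone step can be driven from python. this is a rough sketch, assuming git is installed and that `comfy_root` points at your ComfyUI folder; `custom_nodes_dir` and `clone_gguf_node` are hypothetical helper names, not something the node provides.

```python
import subprocess
from pathlib import Path

REPO_URL = "https://github.com/calcuis/gguf"


def custom_nodes_dir(comfy_root):
    """Return the custom_nodes folder inside a ComfyUI installation."""
    return Path(comfy_root) / "custom_nodes"


def clone_gguf_node(comfy_root, run=subprocess.run):
    """Run `git clone` with custom_nodes as the working directory."""
    target = custom_nodes_dir(comfy_root)
    if not target.is_dir():
        raise FileNotFoundError(f"{target} not found; is this a ComfyUI folder?")
    # check=True makes a failed clone raise instead of passing silently.
    return run(["git", "clone", REPO_URL], cwd=target, check=True)
```

calling `clone_gguf_node("./ComfyUI")` should leave a `gguf` folder under `custom_nodes`, which matches the manual steps.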

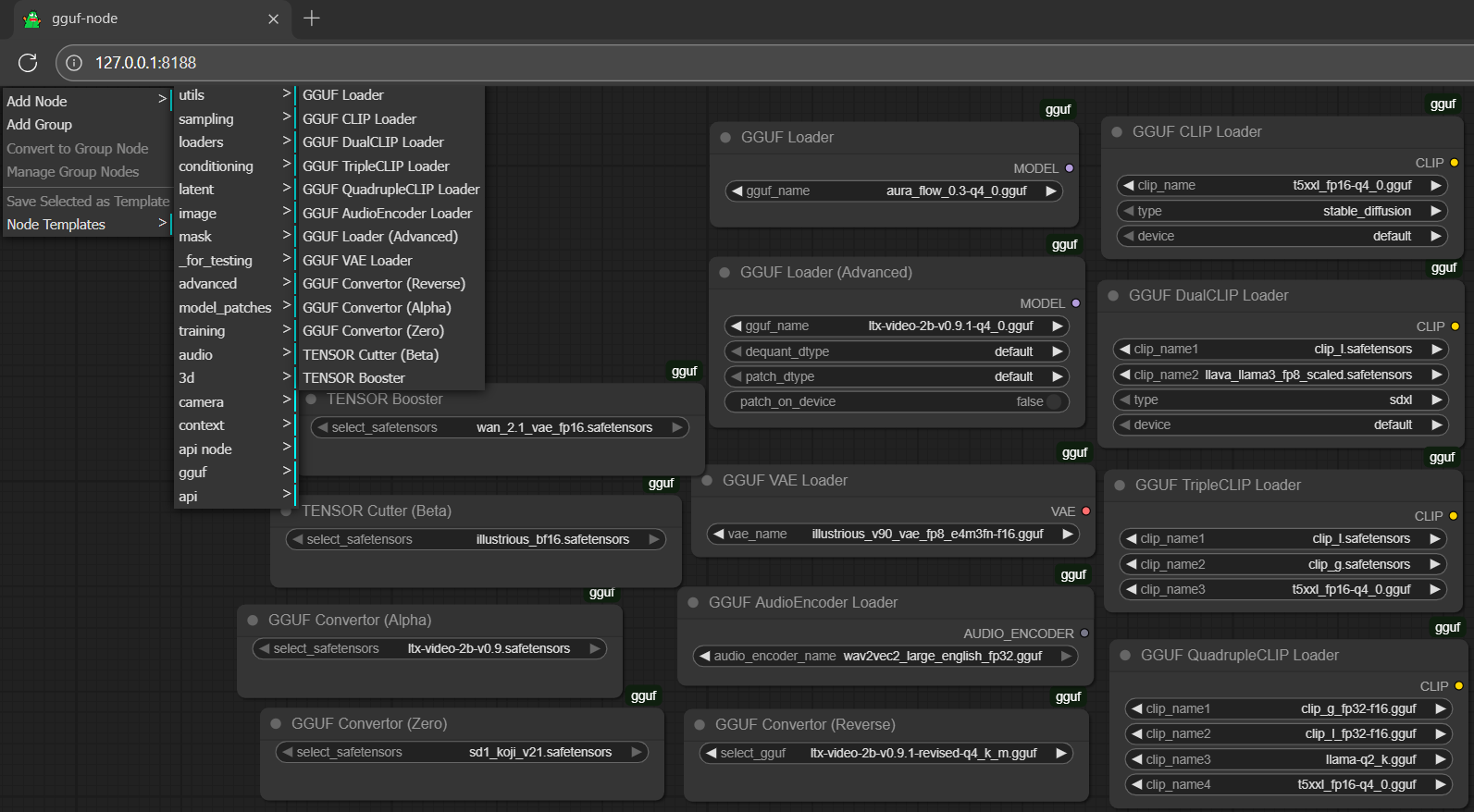

same operation for the standalone pack; then you should be able to see it under Add Node > gguf

🐷🐷📄 as of the latest update, a deployment copy of gguf-connector is attached to the node itself, so you don't need to clone it to site-packages; the default comfyui setup is sufficient, so no extra dependencies and no extra steps are needed 🙌

other(s): get it somewhere else trustworthy/reliable

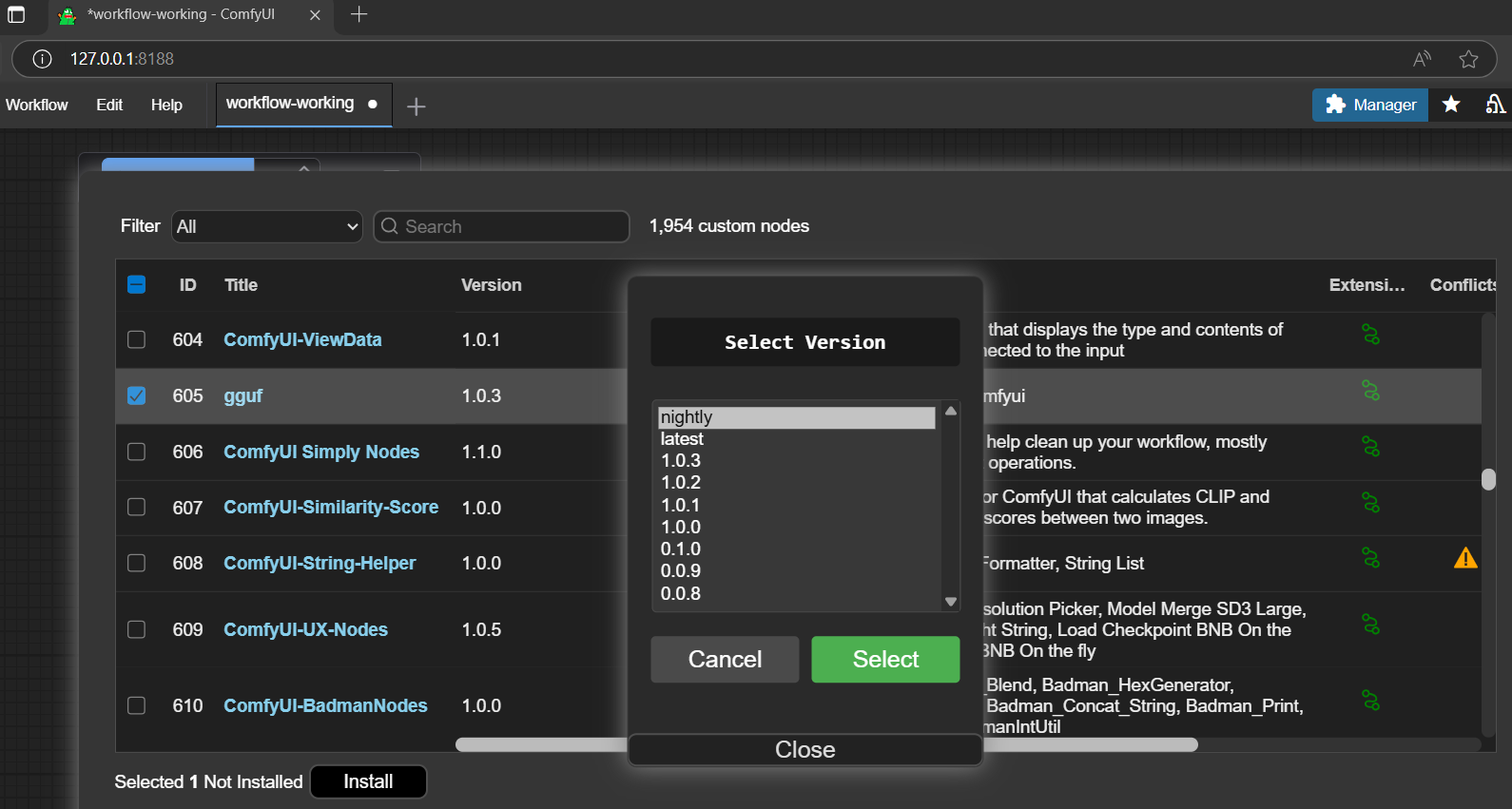

you are also welcome to get the node through other available channels, e.g., comfy-cli or comfyui-manager (search for gguf in the search bar and install it from there; see picture below)

gguf node does not conflict with the popular comfyui-gguf node; the two can coexist. this project was inspired by comfyui-gguf and is built upon its code base; we honor its developers' contribution and truly appreciate their great work. you can test both versions, mix them, or switch between them freely to suit your own needs. compared with comfyui-gguf, gguf node is more lightweight (no dependencies needed), offers more functions (e.g., built-in tensor cutter, gguf convertor, etc.), and is compatible with the latest numpy version and the other updated libraries that come with comfyui

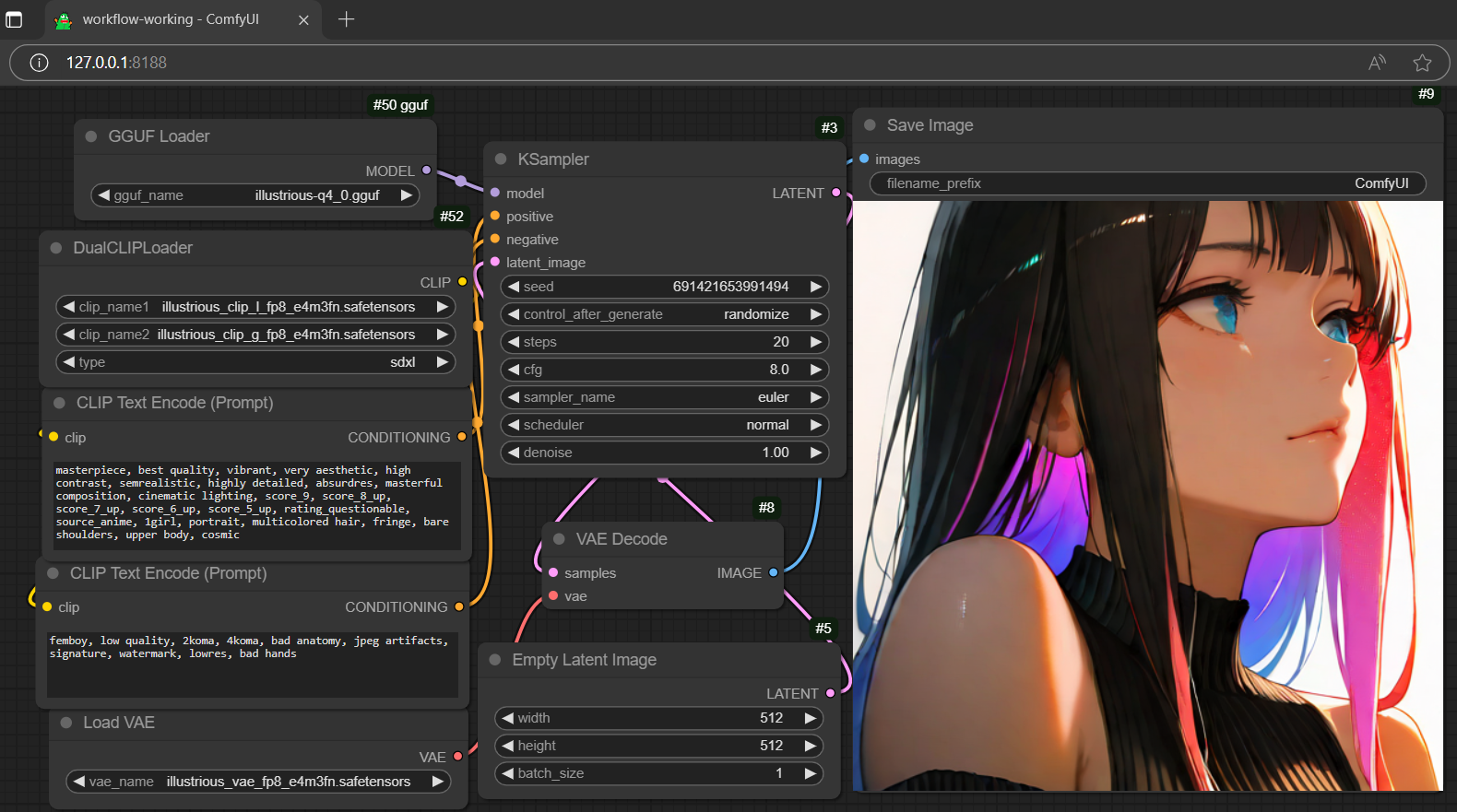

setup (in general)

- drag gguf file(s) to diffusion_models folder (./ComfyUI/models/diffusion_models)

- drag clip or encoder(s) to text_encoders folder (./ComfyUI/models/text_encoders)

- drag controlnet adapter(s), if any, to controlnet folder (./ComfyUI/models/controlnet)

- drag lora adapter(s), if any, to loras folder (./ComfyUI/models/loras)

- drag vae decoder(s) to vae folder (./ComfyUI/models/vae)
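the folder layout above can be written down as a small lookup table. the sketch below only mirrors the list; the kind labels (`"gguf"`, `"text_encoder"`, and so on) and the helpers `destination` and `place_file` are invented for illustration.

```python
import shutil
from pathlib import Path

# File kind -> subfolder of ./ComfyUI/models, copied from the setup list.
MODEL_FOLDERS = {
    "gguf": "diffusion_models",
    "text_encoder": "text_encoders",
    "controlnet": "controlnet",
    "lora": "loras",
    "vae": "vae",
}


def destination(comfy_root, kind, filename):
    """Return where a file of the given kind belongs under ComfyUI/models."""
    return Path(comfy_root) / "models" / MODEL_FOLDERS[kind] / Path(filename).name


def place_file(comfy_root, kind, source):
    """Move a downloaded file into its folder, creating the folder if needed."""
    target = destination(comfy_root, kind, source)
    target.parent.mkdir(parents=True, exist_ok=True)
    shutil.move(str(source), str(target))
    return target
```

for example, `place_file("./ComfyUI", "lora", "my_lora.safetensors")` would move the file to `./ComfyUI/models/loras/`.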

workflow

- drag the workflow json file to the active browser window (with comfyui open); or

- drag any generated output file (e.g., picture, video, etc.; which contains the workflow metadata) to the active browser window
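to see the workflow metadata that rides along with an output picture, you can read the PNG text chunks directly. this is a sketch under assumptions: it assumes the picture is a PNG and that the workflow json sits in an uncompressed `tEXt` chunk keyed `workflow`, which is how ComfyUI's default image saver is commonly observed to store it; other output types or savers may differ. `read_text_chunks` and `extract_workflow` are hypothetical helper names.

```python
import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_text_chunks(data):
    """Return {keyword: text} for every tEXt chunk in raw PNG bytes."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    chunks = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, body, 4-byte CRC.
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if ctype == b"tEXt":
            key, _, value = body.partition(b"\x00")
            chunks[key.decode("latin-1")] = value.decode("latin-1")
        if ctype == b"IEND":
            break
        pos += 12 + length
    return chunks


def extract_workflow(data):
    """Return the parsed workflow dict, or None if no such chunk exists."""
    text = read_text_chunks(data).get("workflow")
    return json.loads(text) if text is not None else None
```

usage would be along the lines of `extract_workflow(open("output.png", "rb").read())`; a `None` result just means this sketch found no matching chunk.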

simulator

- design your own prompt; or

- generate a random prompt/descriptor by the simulator (though it might not be applicable for all)

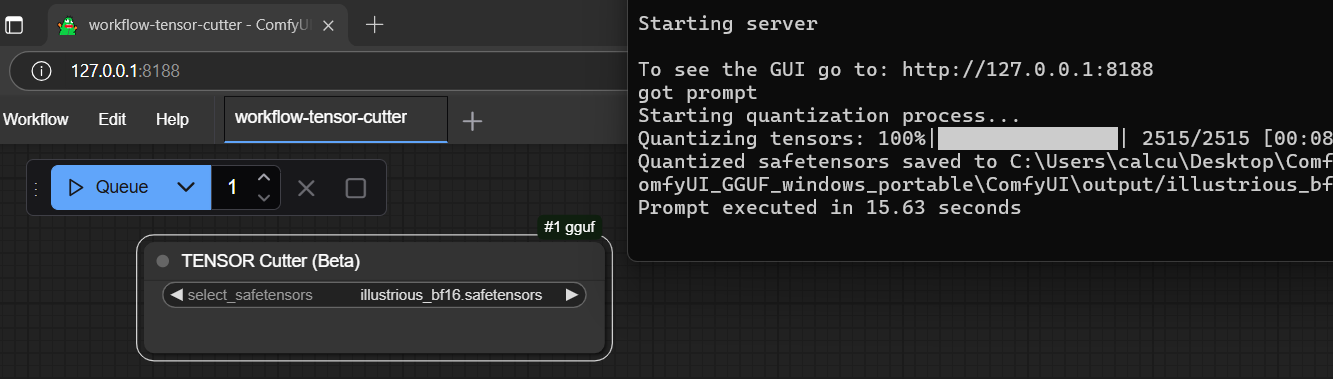

cutter (cut safetensors in half - bf16 to fp8)

- drag safetensors file(s) to diffusion_models folder (./ComfyUI/models/diffusion_models)

- choose the last option from the gguf menu: TENSOR Cutter (Beta)

- select your safetensors model inside the box; don't need to connect anything; it works independently

- click Queue (run); then you can check the processing progress from the console

- when it is done, the quantized/half-cut safetensors file will be saved to the output folder (./ComfyUI/output)

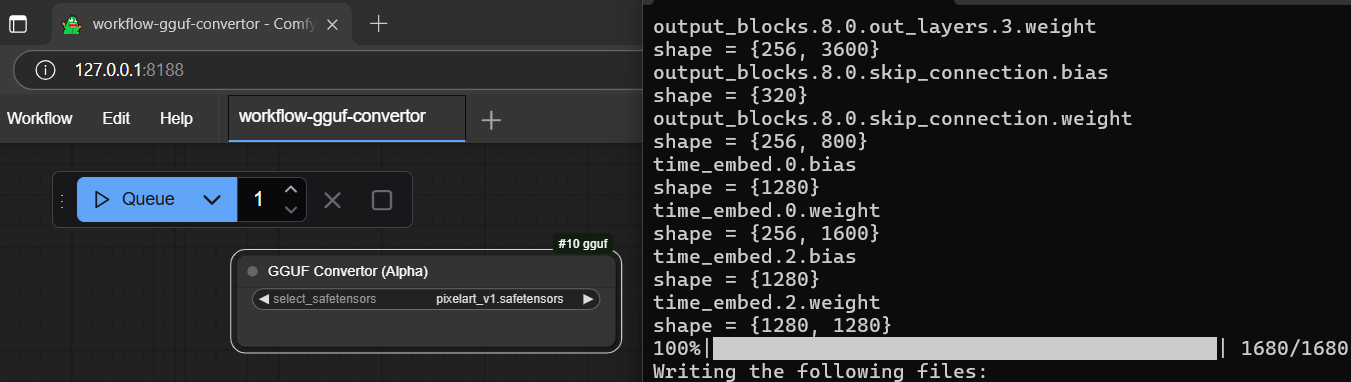
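why "in half": bf16 stores each weight in 2 bytes and fp8 in 1 byte, so the weight data shrinks to roughly half. the arithmetic below is a back-of-the-envelope sketch only; it ignores file headers and any tensors the cutter might leave untouched, and the function names are made up.

```python
# Bytes used to store one parameter in each format.
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def tensor_bytes(num_params, dtype):
    """Approximate weight-data size in bytes for a parameter count."""
    return num_params * BYTES_PER_PARAM[dtype]


def cut_ratio(src="bf16", dst="fp8"):
    """Size of the cut file relative to the source (weights only)."""
    return BYTES_PER_PARAM[dst] / BYTES_PER_PARAM[src]


if __name__ == "__main__":
    params = 12_000_000_000  # hypothetical model size, for illustration
    print(tensor_bytes(params, "bf16"), "bytes as bf16")
    print(tensor_bytes(params, "fp8"), "bytes as fp8")
    print("ratio:", cut_ratio())
```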

convertor (convert safetensors to gguf)

- drag safetensors file(s) to diffusion_models folder (./ComfyUI/models/diffusion_models)

- choose the third last option from the gguf menu: GGUF Convertor (Alpha)

- select your safetensors model inside the box; don't need to connect anything; it works independently also

- click Queue (run); then you can check the processing progress from the console

- when it is done, the converted gguf file will be saved to the output folder (./ComfyUI/output)

fast model: you could first cut the selected model (bf16) in half with the cutter, then convert the trimmed model (fp8) to gguf. the result is pretty much the same file size as the quantized output from the bf16 model but has fewer tensors inside, so it should load faster in theory; there is no guarantee, so test it yourself, and be prepared for significant quality loss

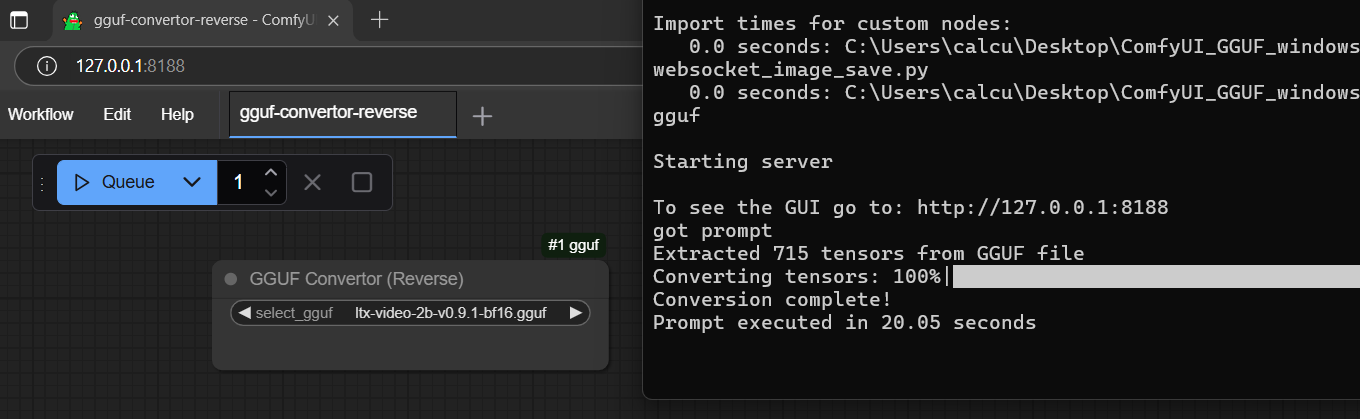
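after a conversion finishes, a quick header check can confirm that the file in ./ComfyUI/output really is a gguf file. the sketch below assumes the gguf v2+ header layout (4-byte magic `GGUF`, then a little-endian uint32 version, uint64 tensor count, and uint64 metadata key/value count); `read_gguf_header` is a hypothetical helper and not part of the node.

```python
import struct

GGUF_MAGIC = b"GGUF"
HEADER_SIZE = 24  # 4 (magic) + 4 (version) + 8 (tensors) + 8 (metadata kv)


def read_gguf_header(data):
    """Parse the fixed-size header from the first bytes of a gguf file."""
    if len(data) < HEADER_SIZE or data[:4] != GGUF_MAGIC:
        raise ValueError("not a GGUF file")
    # "<" = little-endian without padding; I = uint32, Q = uint64.
    version, tensor_count, kv_count = struct.unpack("<IQQ", data[4:HEADER_SIZE])
    return {
        "version": version,
        "tensor_count": tensor_count,
        "metadata_kv_count": kv_count,
    }


def read_gguf_header_from_file(path):
    """Read only the header bytes, so large models are not loaded."""
    with open(path, "rb") as f:
        return read_gguf_header(f.read(HEADER_SIZE))
```

comparing `tensor_count` between two converted files is one way to check the "fewer tensors inside" effect for yourself.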

reverser (reverse convert gguf to safetensors)

- drag gguf file(s) to diffusion_models folder (./ComfyUI/models/diffusion_models)

- choose the seventh option from the gguf menu: GGUF Convertor (Reverse)

- select your gguf file inside the box; don't need to connect anything; it works independently as well

- click Queue (run); then you can check the processing progress from the console

- when it is done, the converted safetensors file will be saved to the output folder (./ComfyUI/output)
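to inspect what the reverser wrote, you can list the tensors in the safetensors output without loading any weights. the sketch relies on the safetensors layout (an 8-byte little-endian header length followed by a json header); `read_safetensors_header` is a hypothetical helper name.

```python
import json
import struct


def read_safetensors_header(data):
    """Return {tensor_name: (dtype, shape)} from raw safetensors bytes."""
    if len(data) < 8:
        raise ValueError("file too short to be safetensors")
    (header_len,) = struct.unpack("<Q", data[:8])
    header = json.loads(data[8:8 + header_len].decode("utf-8"))
    # "__metadata__" is an optional free-form entry, not a tensor.
    header.pop("__metadata__", None)
    return {name: (info["dtype"], info["shape"]) for name, info in header.items()}


def read_safetensors_header_from_file(path):
    """Read only the header portion of a safetensors file on disk."""
    with open(path, "rb") as f:
        prefix = f.read(8)
        if len(prefix) < 8:
            raise ValueError("file too short to be safetensors")
        (header_len,) = struct.unpack("<Q", prefix)
        return read_safetensors_header(prefix + f.read(header_len))
```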

convertor ZERO

new flagship feature: convert safetensors to gguf without any restriction; no more unsupported models

- drag safetensors file(s) to diffusion_models folder (./ComfyUI/models/diffusion_models)

- choose the second last option from the gguf menu: GGUF Convertor (Zero)

- select your safetensors model inside the box; don't need to connect anything; it works independently!

- click Queue (run); then you can check the processing progress from the console

- when it is done, the converted gguf file will be saved to the output folder (./ComfyUI/output)

- 🐷pig architecture: not merely model conversion; it works for converting text encoders and vae as well; true! the amazing thing is that any form of safetensors can be converted! (whether the converted file actually works is another story)🐷

GGUF VAE Loader🐷

- you can't IMAGINE it works; but it really works!

- convert your safetensors vae to gguf vae using convertor zero; then try it out!

- gguf vae loader supports both gguf and safetensors, which means you don't need to switch loaders anymore
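one way a single loader could accept both formats is to peek at the first bytes of the file and branch on them. this is purely an illustrative sketch and not how the gguf vae loader is known to work; `sniff_format` is an invented name, and the checks rely only on the gguf magic bytes and on the safetensors json header starting with `{` after its 8-byte length prefix.

```python
def sniff_format(first_bytes):
    """Guess 'gguf', 'safetensors', or 'unknown' from a file's first bytes."""
    if first_bytes[:4] == b"GGUF":
        return "gguf"
    # safetensors: 8-byte length prefix, then a json object.
    if len(first_bytes) >= 9 and first_bytes[8:9] == b"{":
        return "safetensors"
    return "unknown"


def sniff_file(path):
    """Read just enough of the file to classify it."""
    with open(path, "rb") as f:
        return sniff_format(f.read(9))
```

a loader built this way would not need to trust the file extension, which can be handy when a vae was converted and renamed by hand.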

reference

comfyui confyui_vlm_nodes comfyui-gguf gguf-comfy gguf-connector testkit

parent

node is a member of family gguf
