Perfecting AI workflows with human intelligence

GoHumanLoop: A Python library empowering AI agents to dynamically request human input (approval/feedback/conversation) at critical stages. Core features:

- Human-in-the-loop control: Lets AI agent systems pause and escalate decisions, enhancing safety and trust.

- Multi-channel integration: Supports Terminal, Email, and API channels, plus frameworks such as LangGraph (CrewAI support is planned).

- Flexible workflows: Combines automated reasoning with human oversight for reliable AI operations.

Ensures responsible AI deployment by bridging autonomous agents and human judgment.

🎹 Getting Started

To get started, check out the following example or jump straight into one of the Examples:

- 🦜⛓️ LangGraph

Installation

GoHumanLoop currently supports Python.

    pip install gohumanloop

Example

The following example enhances the official LangGraph example with human-in-the-loop functionality.

💡 By default, it uses `Terminal` as the `langgraph_adapter` for human interaction.

    import os
    from typing import Annotated

    from langchain.chat_models import init_chat_model
    from langchain_tavily import TavilySearch
    from langchain_core.tools import tool
    from typing_extensions import TypedDict

    from langgraph.checkpoint.memory import MemorySaver
    from langgraph.graph import StateGraph, START, END
    from langgraph.graph.message import add_messages
    from langgraph.prebuilt import ToolNode, tools_condition

    # from langgraph.types import Command, interrupt  # Don't use langgraph, use gohumanloop instead
    from gohumanloop.adapters.langgraph_adapter import interrupt, create_resume_command

    # Please replace with your Deepseek API Key from https://platform.deepseek.com/usage
    os.environ["DEEPSEEK_API_KEY"] = "sk-xxx"
    # Please replace with your Tavily API Key from https://app.tavily.com/home
    os.environ["TAVILY_API_KEY"] = "tvly-xxx"

    llm = init_chat_model("deepseek:deepseek-chat")


    class State(TypedDict):
        messages: Annotated[list, add_messages]


    graph_builder = StateGraph(State)


    @tool
    def human_assistance(query: str) -> str:
        """Request assistance from a human."""
        human_response = interrupt({"query": query})
        return human_response


    tool = TavilySearch(max_results=2)
    tools = [tool, human_assistance]
    llm_with_tools = llm.bind_tools(tools)


    def chatbot(state: State):
        message = llm_with_tools.invoke(state["messages"])
        # Because we will be interrupting during tool execution,
        # we disable parallel tool calling to avoid repeating any
        # tool invocations when we resume.
        assert len(message.tool_calls) <= 1
        return {"messages": [message]}


    graph_builder.add_node("chatbot", chatbot)

    tool_node = ToolNode(tools=tools)
    graph_builder.add_node("tools", tool_node)

    graph_builder.add_conditional_edges(
        "chatbot",
        tools_condition,
    )
    graph_builder.add_edge("tools", "chatbot")
    graph_builder.add_edge(START, "chatbot")

    memory = MemorySaver()
    graph = graph_builder.compile(checkpointer=memory)

    user_input = "I need some expert guidance for building an AI agent. Could you request assistance for me?"
    config = {"configurable": {"thread_id": "1"}}

    events = graph.stream(
        {"messages": [{"role": "user", "content": user_input}]},
        config,
        stream_mode="values",
    )
    for event in events:
        if "messages" in event:
            event["messages"][-1].pretty_print()

    # LangGraph code:
    # human_response = (
    #     "We, the experts are here to help! We'd recommend you check out LangGraph to build your agent."
    #     "It's much more reliable and extensible than simple autonomous agents."
    # )
    # human_command = Command(resume={"data": human_response})

    # GoHumanLoop code:
    # Use this command to resume the execution, instead of the LangGraph command above.
    human_command = create_resume_command()

    events = graph.stream(human_command, config, stream_mode="values")
    for event in events:
        if "messages" in event:
            event["messages"][-1].pretty_print()

- Deployment & Test

Run the above code with the following steps:

    # 1. Initialize environment
    uv init gohumanloop-example
    cd gohumanloop-example
    uv venv .venv --python=3.10
    # Activate the virtual environment so that `python` below resolves to it
    # (on Windows: .venv\Scripts\activate)
    source .venv/bin/activate

    # 2. Copy the above code to main.py

    # 3. Deploy and test
    uv pip install langchain
    uv pip install langchain_tavily
    uv pip install langgraph
    uv pip install langchain-deepseek
    uv pip install gohumanloop

    python main.py

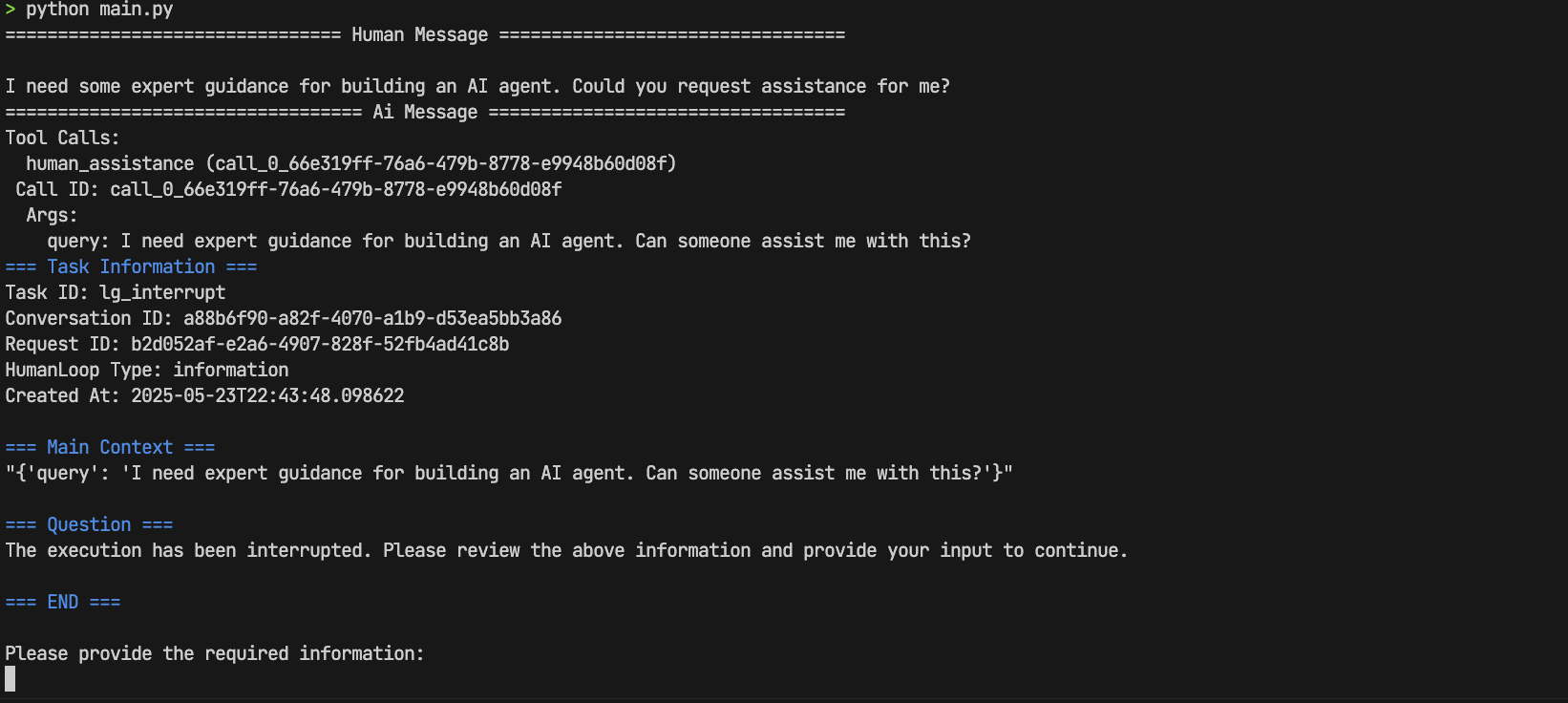

- Interaction Demo

Perform human-in-the-loop interaction by entering:

We, the experts are here to help! We'd recommend you check out LangGraph to build your agent. It's much more reliable and extensible than simple autonomous agents.

🚀🚀🚀 Completed successfully ~
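The pause-and-resume flow demonstrated above can be pictured with a small framework-free sketch. This is only an analogy under stated assumptions: it uses a Python generator as a stand-in for the paused graph, whereas LangGraph relies on its checkpointer rather than generators. The names `agent_run` and `run_with_human` are hypothetical and are not part of GoHumanLoop or LangGraph.

```python
from typing import Generator


def agent_run(task: str) -> Generator[dict, str, str]:
    """Toy agent: pauses once to ask a human, then finishes with the reply."""
    # `yield` hands the question to the caller and suspends here, playing
    # the role of interrupt({"query": ...}) in the example above.
    human_reply = yield {"query": f"Need guidance on: {task}"}
    return f"Final answer based on human input: {human_reply}"


def run_with_human(task: str, answer: str) -> tuple:
    """Drive the toy agent: run to the pause, then resume with `answer`."""
    run = agent_run(task)
    request = next(run)      # run until the first pause
    try:
        run.send(answer)     # resume with the human's reply
    except StopIteration as done:
        return request, done.value
    raise RuntimeError("agent paused more than once")
```

In this picture, `next(run)` corresponds to the first `graph.stream(...)` call that stops at the human request, and `run.send(answer)` corresponds to streaming the resume command.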

➡️ Check out more examples in the Examples Directory. We look forward to your contributions!

🎵 Why GoHumanLoop?

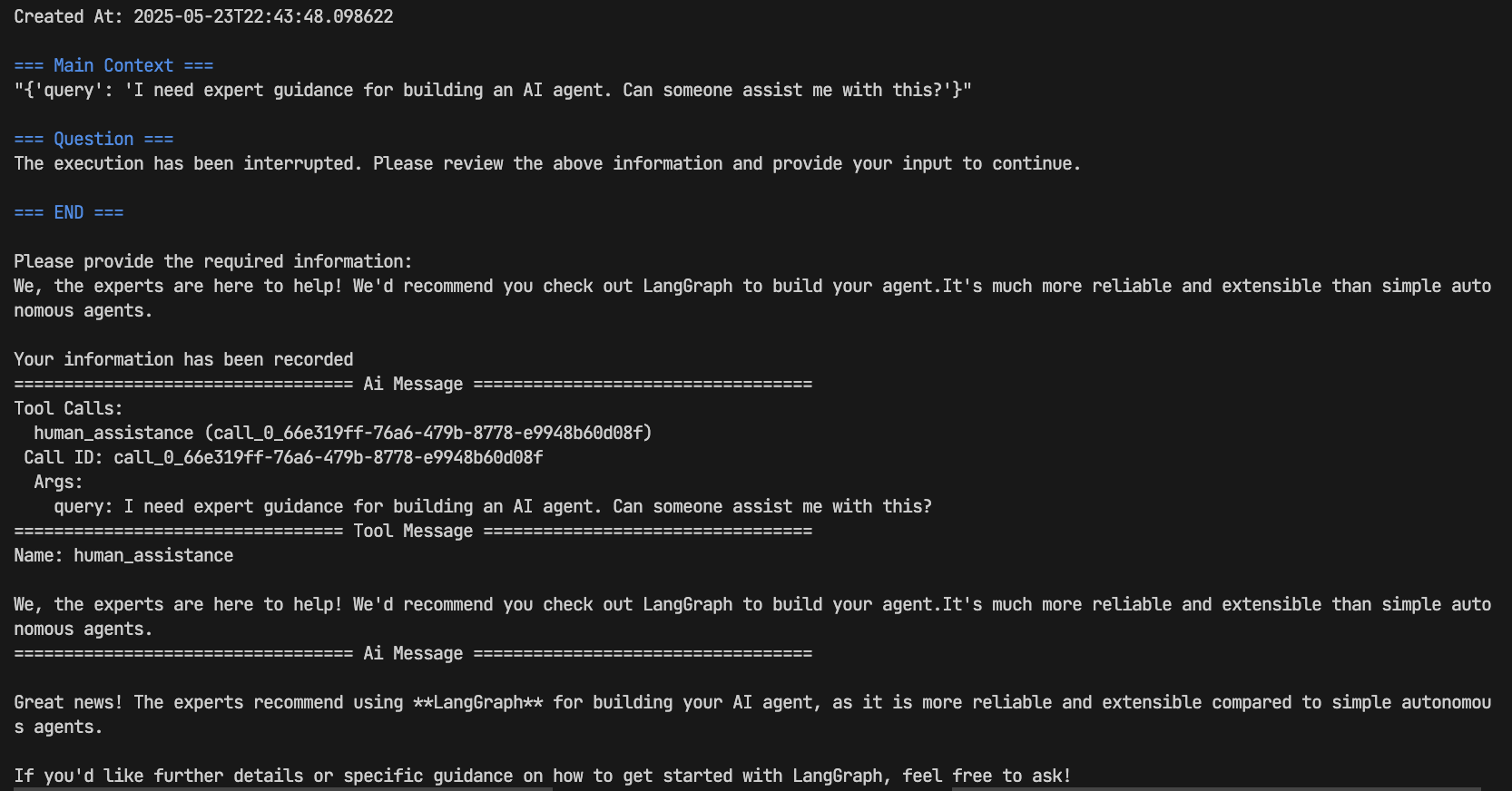

Human-in-the-loop

Even with state-of-the-art agentic reasoning and prompt routing, LLMs are not sufficiently reliable to be given access to high-stakes functions without human oversight.

Human-in-the-loop is an AI system design philosophy that integrates human judgment and supervision into AI decision-making processes. This concept is particularly important in AI Agent systems:

- Safety Assurance: Allows human intervention and review at critical decision points to prevent potentially harmful AI decisions

- Quality Control: Improves accuracy and reliability of AI outputs through expert feedback

- Continuous Learning: AI systems can learn and improve from human feedback, creating a virtuous cycle

- Clear Accountability: Maintains ultimate human control over important decisions with clear responsibility

In practice, Human-in-the-loop can take various forms - from simple decision confirmation to deep human-AI collaborative dialogues - striking a balance between agent autonomy and human oversight so that AI Agent systems can be used to their full potential.

Typical Use Cases

A human can review and edit the output from the agent before proceeding. This is particularly critical in applications where the tool calls requested may be sensitive or require human oversight.

- 🛠️ Tool Call Review: Humans can review, edit or approve tool call requests initiated by LLMs before execution

- ✅ Model Output Verification: Humans can review, edit or approve content generated by LLMs (text, decisions, etc.)

- 💡 Context Provision: Allows LLMs to actively request human input for clarification, additional details or multi-turn conversation context
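The tool call review case can be sketched without any framework. The following is a minimal illustration, not GoHumanLoop's API: `require_approval`, `make_transfer_tool`, and the `ask` callback are hypothetical names, and the reviewer is modeled as any callable that takes a prompt and returns a string (by default the built-in `input`).

```python
from functools import wraps
from typing import Callable


def require_approval(ask: Callable[[str], str] = input):
    """Hypothetical decorator: run the tool only if a human answers y/yes."""

    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            prompt = f"Approve call {func.__name__}(args={args}, kwargs={kwargs})? [y/N] "
            answer = ask(prompt).strip().lower()
            if answer not in ("y", "yes"):
                # Rejected calls never reach the wrapped function.
                return f"{func.__name__} was rejected by the reviewer"
            return func(*args, **kwargs)

        return wrapper

    return decorator


def make_transfer_tool(ask: Callable[[str], str]):
    """Build a sensitive example tool guarded by the given reviewer."""

    @require_approval(ask)
    def transfer_funds(amount: int, to: str) -> str:
        return f"transferred {amount} to {to}"

    return transfer_funds
```

Passing the reviewer in as a callable keeps the gate independent of the channel: the same decorator could be backed by a terminal prompt, an email round trip, or an API call.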

Secure and Efficient Go➡Humanloop

GoHumanLoop provides a set of tools that integrate deeply with AI Agents to keep a human in the loop at all times. It deterministically routes high-risk function calls through human review and also lets human experts give feedback, which improves the reliability and safety of the AI system and reduces the risk from LLM hallucinations.

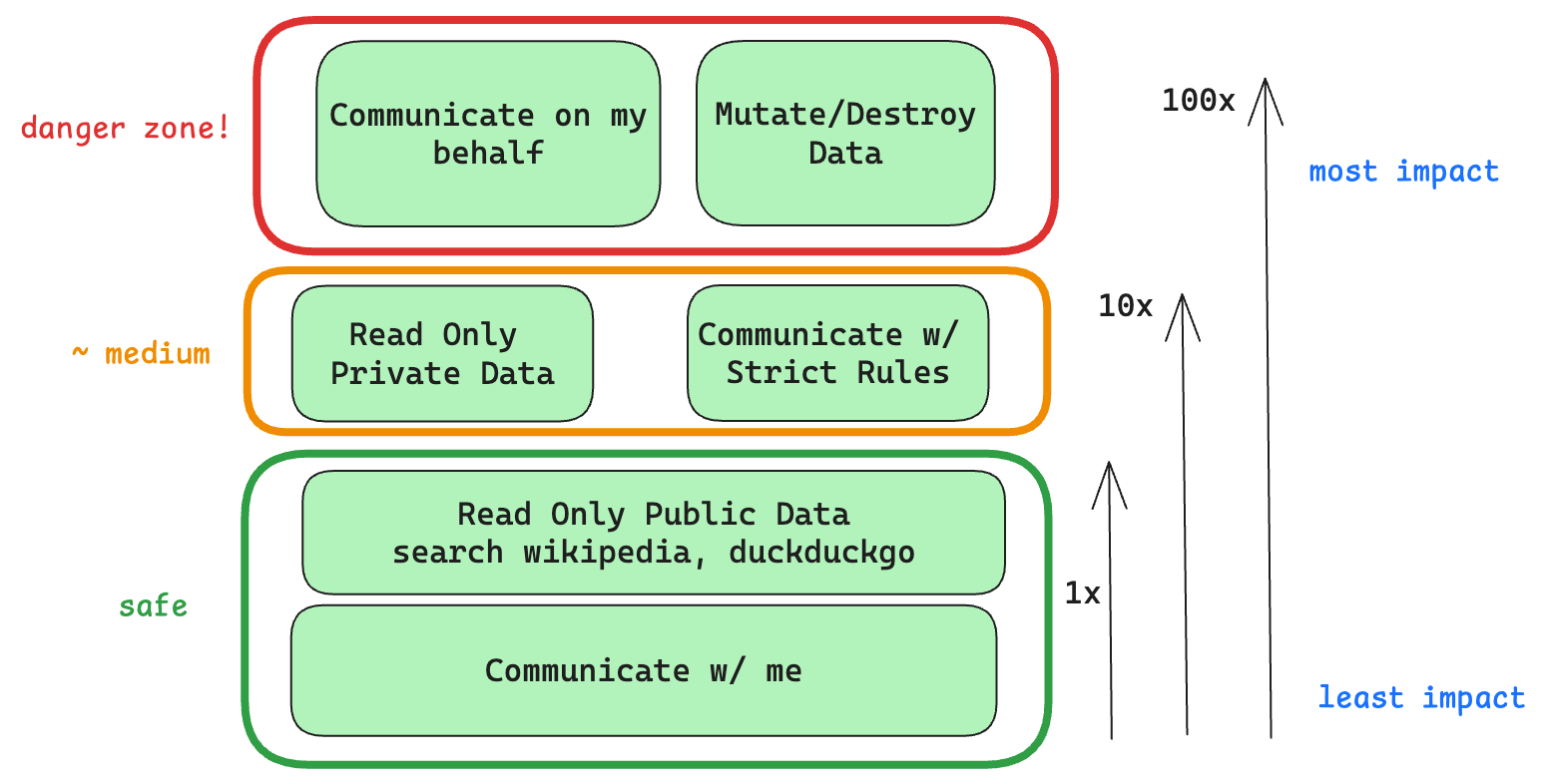

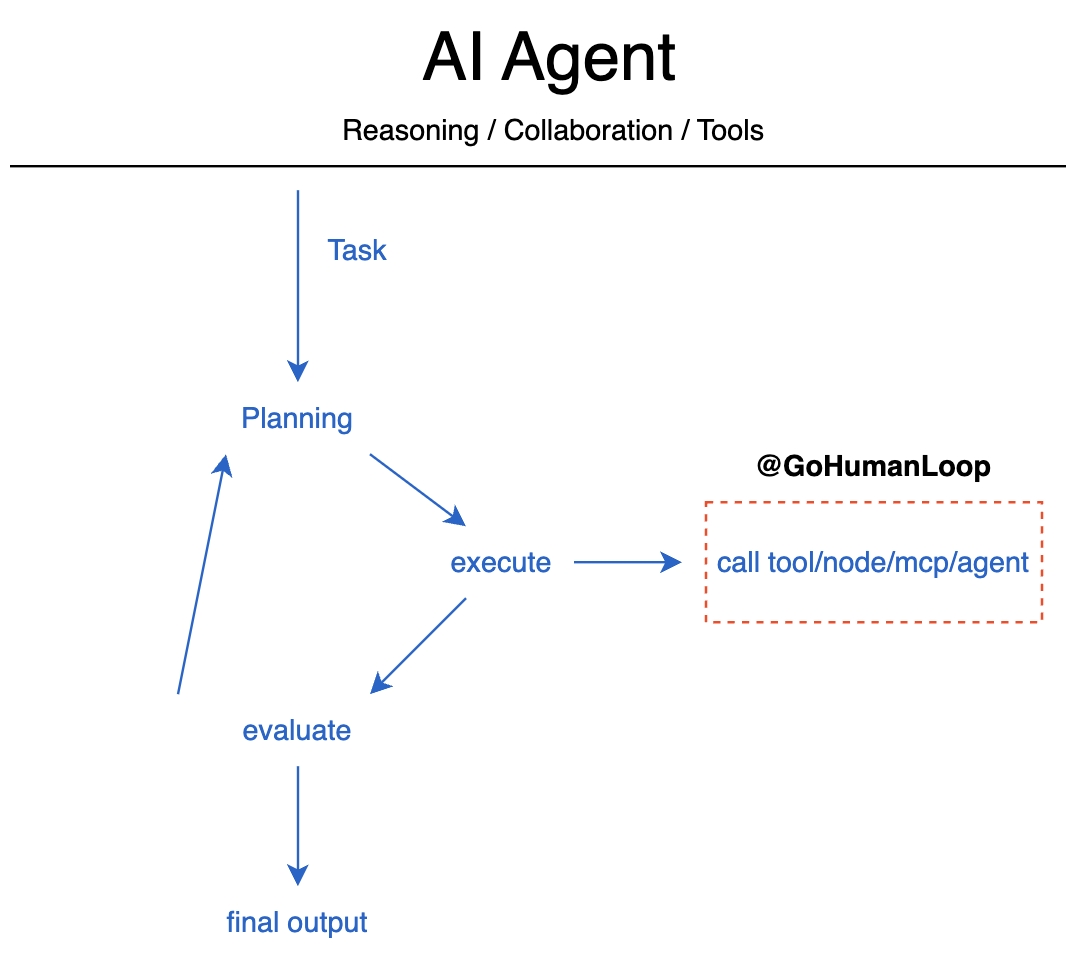

The Outer-Loop and Inversion of Control

With GoHumanLoop's encapsulation, you can add secure and efficient Human-in-the-loop control when calling tools, Agent nodes, MCP services and other Agents.

📚 Key Features

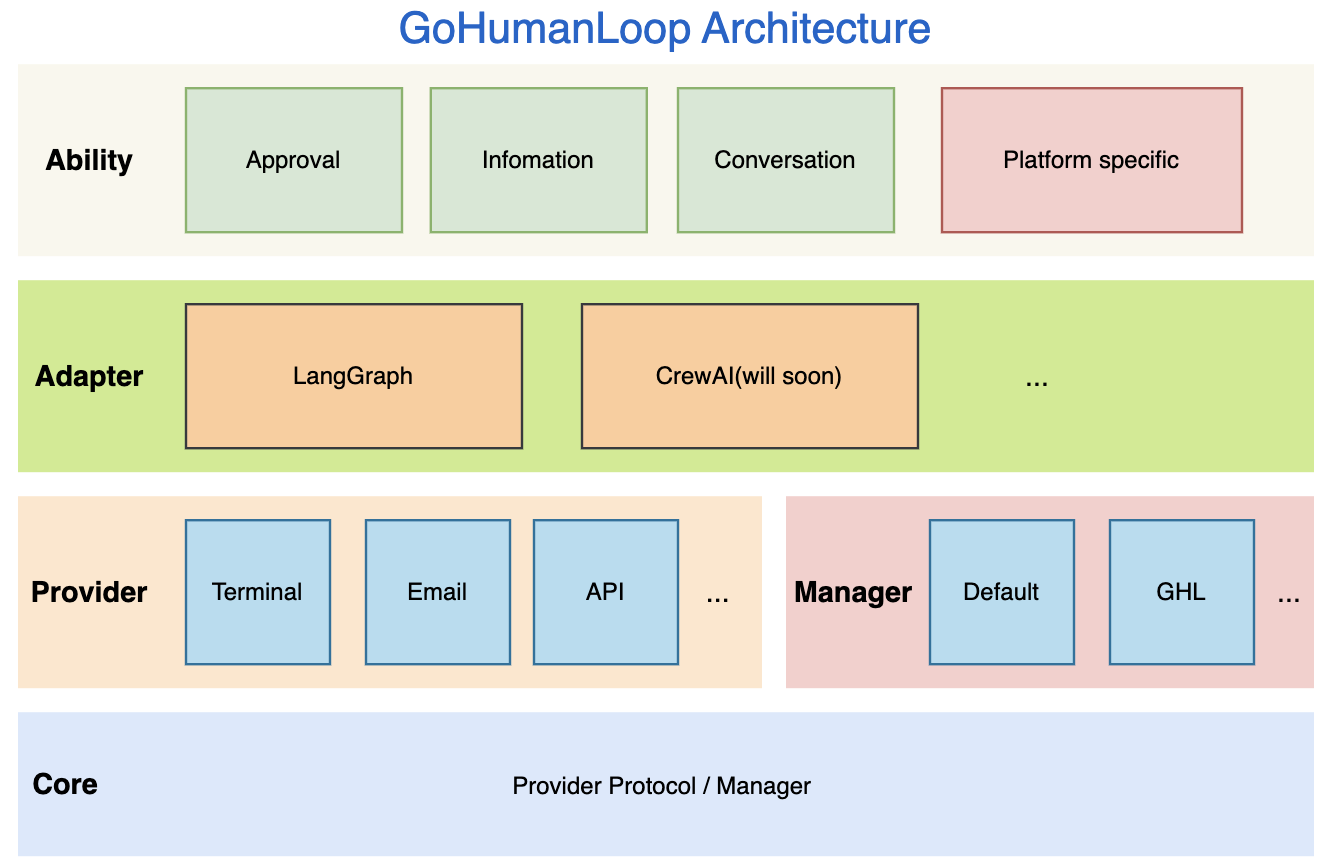

GoHumanLoop Architecture

GoHumanLoop offers the following core capabilities:

- Approval: Requests human review or approval when executing specific tool calls or Agent nodes

- Information: Obtains critical human input during task execution to reduce LLM hallucination risks

- Conversation: Enables multi-turn interactions with humans through dialogue to acquire richer contextual information

- Framework-specific Integration: Provides specialized integration methods for specific Agent frameworks, such as `interrupt` and `resume` for LangGraph
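To make the three interaction types concrete, here is a minimal sketch of how approval, information, and conversation requests might be routed to a human. It is an illustration under assumptions, not the library's actual data model: `LoopType`, `HumanRequest`, `handle`, and the `ask` callback are all hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class LoopType(Enum):
    APPROVAL = "approval"
    INFORMATION = "information"
    CONVERSATION = "conversation"


@dataclass
class HumanRequest:
    loop_type: LoopType
    question: str


def handle(request: HumanRequest, ask: Callable[[str], str]) -> dict:
    """Route one request to a human via `ask` and normalize the reply."""
    if request.loop_type is LoopType.APPROVAL:
        reply = ask(f"{request.question} [y/N] ").strip().lower()
        return {"approved": reply in ("y", "yes")}
    if request.loop_type is LoopType.INFORMATION:
        return {"answer": ask(f"{request.question} ").strip()}
    # CONVERSATION: keep asking until the human sends an empty line.
    turns: List[str] = []
    prompt = request.question
    while True:
        reply = ask(f"{prompt} ").strip()
        if not reply:
            break
        turns.append(reply)
        prompt = "Anything else?"
    return {"turns": turns}
```

For a terminal session, `ask` can simply be the built-in `input`, for example `handle(HumanRequest(LoopType.APPROVAL, "Deploy to production?"), input)`.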

📅 Roadmap

| Feature | Status |
|---|---|
| Approval | ⚙️ Beta |
| Information | ⚙️ Beta |
| Conversation | ⚙️ Beta |
| Email Provider | ⚙️ Beta |
| Terminal Provider | ⚙️ Beta |
| API Provider | ⚙️ Beta |
| Default Manager | ⚙️ Beta |
| GLH Manager | 🗓️ Planned |
| Langchain Support | ⚙️ Beta |
| CrewAI Support | 🗓️ Planned |

- 💡 GLH Manager - GoHumanLoop Manager will integrate with the upcoming GoHumanLoop Hub platform to provide users with more flexible management options.

🤝 Contributing

The GoHumanLoop SDK and documentation are open source. We welcome contributions in the form of issues, documentation and PRs. For more details, please see CONTRIBUTING.md.

📱 Contact

🎉 If you're interested in this project, feel free to scan the QR code to contact the author.

Download files

File details

Details for the file gohumanloop-0.0.8.tar.gz.

File metadata

- Download URL: gohumanloop-0.0.8.tar.gz

- Upload date:

- Size: 54.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.6.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f106e4ec60b3607a733c2cd85152e206ebfd4ce1e3ca81c3cd5eb40305272e18 |
| MD5 | ecea2247d3bc609de65b5a791dd98276 |
| BLAKE2b-256 | 8c623b2090183986d74c8be2123e923777d9132191b9e3753f748af4eecfefe5 |

File details

Details for the file gohumanloop-0.0.8-py3-none-any.whl.

File metadata

- Download URL: gohumanloop-0.0.8-py3-none-any.whl

- Upload date:

- Size: 59.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.6.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cbaa2755f9b111cf6cf21baf78b5da5ca1b3fd8a3fefca3469bc762519244f70 |
| MD5 | 90b12aef886fae7c2da7c722a2010265 |
| BLAKE2b-256 | 981c977d741a03755a7c9cc5e0287958fd3e769ea3d3f82b24f389964a5d22f8 |
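
If you download one of the files manually, you can compare it against the SHA256 digests listed above. The following is a minimal sketch using only the Python standard library; the helper names `sha256_of` and `verify` are illustrative, and the local file path is an assumption about where the download was saved.

```python
import hashlib


def sha256_of(path: str, chunk_size: int = 1 << 16) -> str:
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: str, expected_hex: str) -> bool:
    """Compare a file's digest with an expected hex string, ignoring case and surrounding whitespace."""
    return sha256_of(path) == expected_hex.strip().lower()
```

For the source distribution, a check would look like `verify("gohumanloop-0.0.8.tar.gz", "f106e4ec60b3607a733c2cd85152e206ebfd4ce1e3ca81c3cd5eb40305272e18")`, assuming the file sits in the current directory.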