Visualize HuggingFace Byte-Pair Encoding (BPE) Tokenizer encoding process

HuggingFace Byte-Pair Encoding tokenizer visualizer library

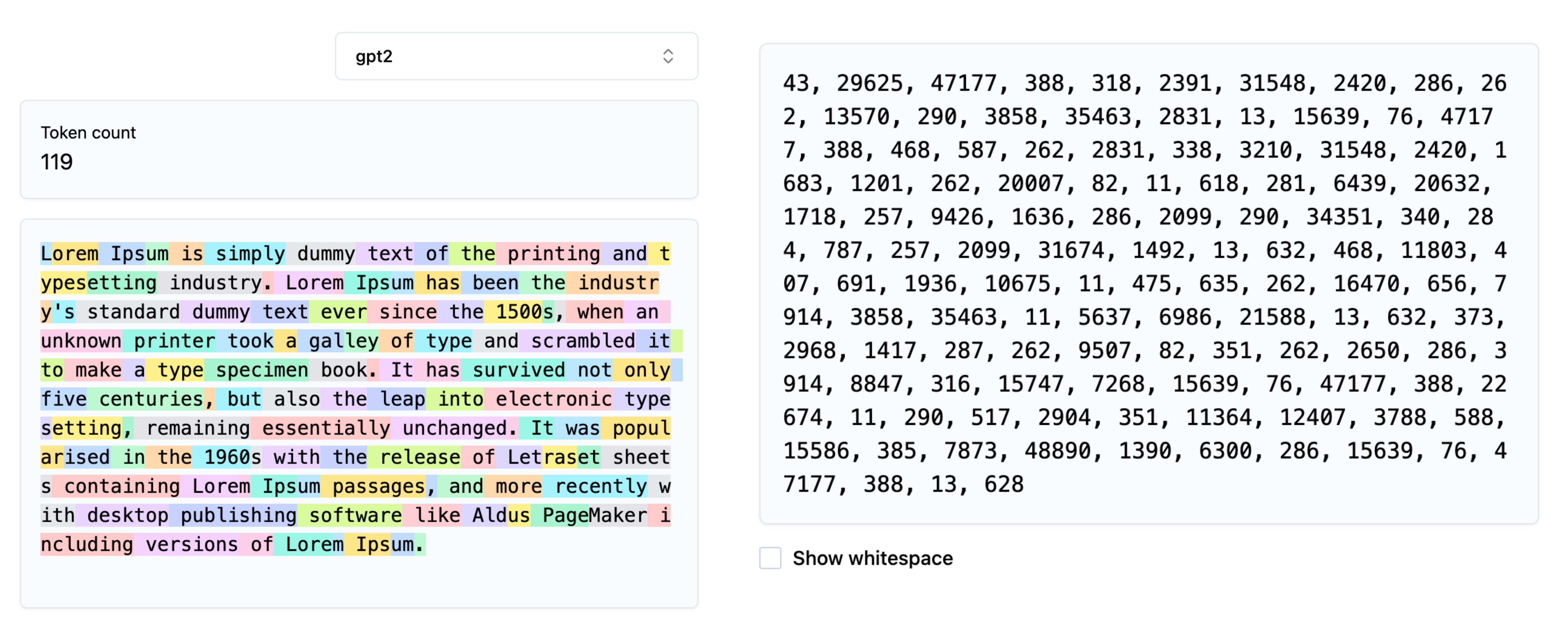

The library helps you visualize how the Byte-Pair Encoding (BPE) tokenizer algorithm encodes the text you pass to it for tokenization.

Byte-Pair Encoding (BPE) was initially developed as an algorithm to compress text, and was later used by OpenAI for tokenization when pre-training the GPT model. It is used by many Transformer models, including GPT, GPT-2, RoBERTa, BART, and DeBERTa.

Byte-Pair Encoding tokenization

BPE training starts by computing the unique set of words used in the corpus (after the normalization and pre-tokenization steps are completed), then building the vocabulary by taking all the symbols used to write those words.

More about the algorithm here
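The first training step described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the toy corpus, the whitespace pre-tokenization, and the helper names `word_frequencies` and `base_vocabulary` are hypothetical and are not part of this library. Byte-level tokenizers such as GPT-2's start from bytes rather than characters, which this sketch does not model.

```python
from collections import Counter


def word_frequencies(corpus):
    """Count words using a naive whitespace pre-tokenization (an assumption)."""
    counts = Counter()
    for text in corpus:
        counts.update(text.split())
    return counts


def base_vocabulary(word_freqs):
    """Collect every symbol needed to write the words, sorted for stable output."""
    return sorted({ch for word in word_freqs for ch in word})


# Toy corpus, made up for illustration only.
corpus = ["hug pug", "pun bun hug"]
word_freqs = word_frequencies(corpus)
vocab = base_vocabulary(word_freqs)

print(dict(word_freqs))
print(vocab)
```

The base vocabulary is the starting point; training then grows it by adding one merged symbol at a time.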

Visualizing the Tokenization process

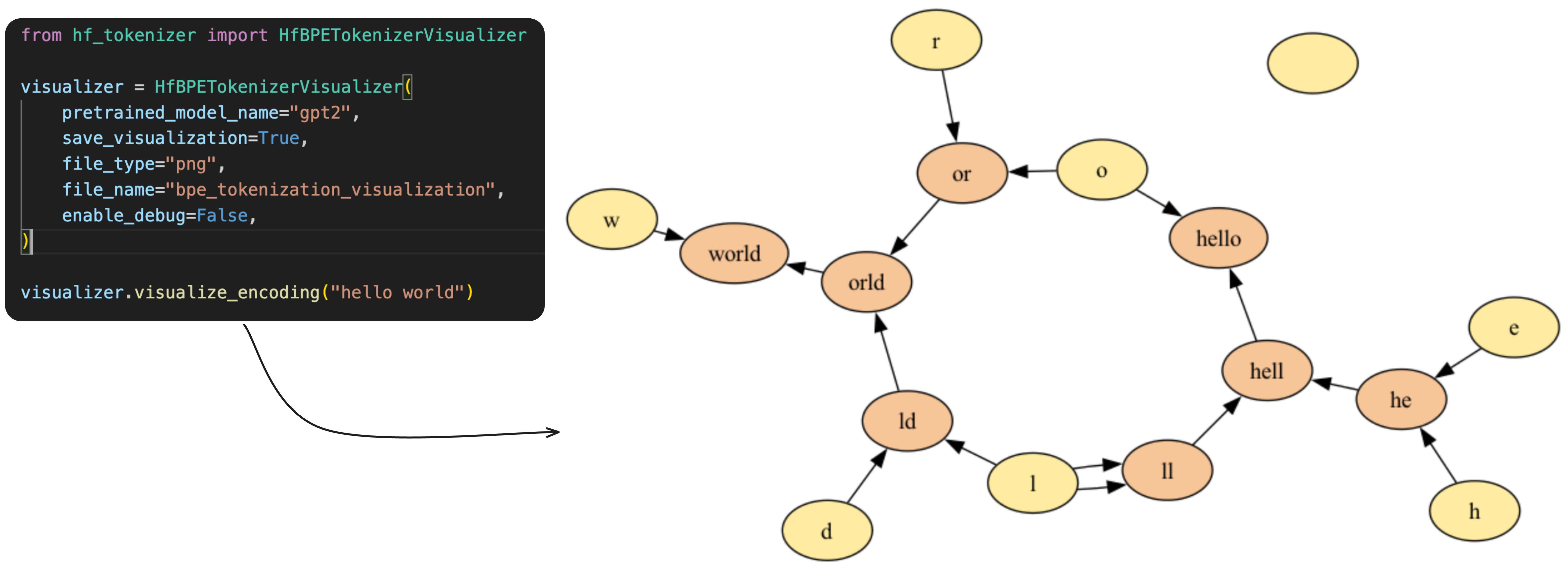

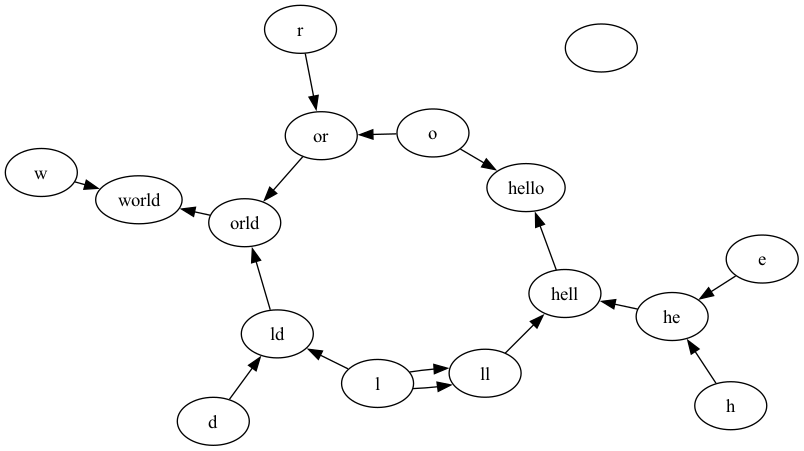

During tokenization, the input text is converted into encoded token IDs using the merges learned by the trained BPE tokenizer. During training, pairs of tokens are merged into new tokens, each with its own ID, based on how frequently the pair occurs in the training corpus.
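To make the frequency-based merging concrete, here is a minimal sketch of a BPE training loop in plain Python. The word counts are a made-up toy example, and the helper names `count_pairs`, `merge_pair`, and `train_merges` are hypothetical; this is not the code used by this library or by the HuggingFace tokenizers.

```python
from collections import Counter


def count_pairs(splits, word_freqs):
    """Count adjacent symbol pairs, weighted by how often each word occurs."""
    pairs = Counter()
    for word, freq in word_freqs.items():
        symbols = splits[word]
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` with the joined symbol."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return merged


def train_merges(word_freqs, num_merges):
    """Learn up to `num_merges` merge rules, most frequent pair first."""
    splits = {word: list(word) for word in word_freqs}
    merges = []
    for _ in range(num_merges):
        pairs = count_pairs(splits, word_freqs)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        splits = {word: merge_pair(s, best) for word, s in splits.items()}
    return merges


# Toy word counts, made up for illustration only.
word_freqs = {"hug": 10, "pug": 5, "pun": 12, "bun": 4, "hugs": 5}
merges = train_merges(word_freqs, 3)
print(merges)  # [('u', 'g'), ('u', 'n'), ('h', 'ug')]
```

Each learned merge adds one new symbol to the vocabulary, and the order of the merges is what the tokenizer later replays when encoding new text.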

This library helps visualize what the merging process looks like for a given string to be encoded. It generates a graph in which the nodes are tokens or characters; when a pair of characters is merged, the nodes are connected by directed edges.
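The graph idea can be sketched as a plain edge list. The snippet below is an assumption about what such a graph might contain, not the library's internal representation: the function `merge_graph` and the merge rules are hypothetical and are not GPT-2's real merges. Nodes are identified by their token string, so repeated characters share one node, and rules are replayed in their listed order as a simplification of rank-based encoding.

```python
def merge_graph(text, merges):
    """Apply merge rules in order and record (part, merged_token) edges."""
    symbols = list(text)
    edges = []
    for left, right in merges:
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and symbols[i] == left and symbols[i + 1] == right:
                merged = left + right
                # One directed edge from each part to the token it was merged into.
                edges.append((left, merged))
                edges.append((right, merged))
                out.append(merged)
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        symbols = out
    return symbols, edges


# Hypothetical merge rules, chosen only to make the example readable.
merges = [("l", "l"), ("h", "e"), ("he", "ll"), ("hell", "o")]
tokens, edges = merge_graph("hello", merges)

print(tokens)  # ['hello']
for source, target in edges:
    print(f"{source} -> {target}")
```

An edge list like this can be handed to any graph-drawing tool to produce a picture similar in spirit to the ones shown above.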

Installing the Library

    pip install hf-tokenizer-visualizer

Using the Library

- Save your visualization in a `PNG` or a `PDF` file.

    from hf_tokenizer_visualizer import HfBPETokenizerVisualizer

    visualizer = HfBPETokenizerVisualizer(
        pretrained_model_name="gpt2",
        save_visualization=True,
        file_type="png",
        file_name="bpe_tokenization_visualization_2",
        enable_debug=False,
    )

    visualizer.visualize_encoding('hello world')

Note: The file is saved in your current working directory.

Note: You can choose between `png` and `pdf` for `file_type`.

- Get the raw encoding

    from hf_tokenizer_visualizer import HfBPETokenizerVisualizer

    visualizer = HfBPETokenizerVisualizer(
        pretrained_model_name="gpt2",
        save_visualization=True,
        file_type="png",
        file_name="bpe_tokenization_visualization_2",
        enable_debug=False,
    )

    visualizer.encode('hello world')

Output: [31373, 995]

Output Graph generated

Building the Library and Uploading to PyPI (Owner Only)

    python -m build
    twine upload --repository pypi dist/*

Pre-requisites

The following libraries are required to run this package:
