Token-efficient MCP server for source code exploration via tree-sitter AST parsing

Project description

Quickstart - https://github.com/jgravelle/jcodemunch-mcp/blob/main/QUICKSTART.md

FREE FOR PERSONAL USE

Use it to make money, and Uncle J. gets a taste. Fair enough? details

Documentation

| Doc | What it covers |

|---|---|

| QUICKSTART.md | Zero-to-indexed in three steps |

| USER_GUIDE.md | Full tool reference, workflows, and best practices |

| AGENT_HOOKS.md | Agent hooks and prompt policies |

| ARCHITECTURE.md | Internal design, storage model, and extension points |

| LANGUAGE_SUPPORT.md | Supported languages and parsing details |

| CONTEXT_PROVIDERS.md | dbt, Git, and custom context provider docs |

| TROUBLESHOOTING.md | Common issues and fixes |

Cut code-reading token usage by 95% or more

Most AI agents explore repositories the expensive way:

open entire files → skim thousands of irrelevant lines → repeat.

That is not “a little inefficient.” That is a token incinerator.

jCodeMunch indexes a codebase once and lets agents retrieve only the exact code they need: functions, classes, methods, constants, outlines, and tightly scoped context bundles, with byte-level precision.

In retrieval-heavy workflows, that routinely cuts code-reading token usage by 95%+ because the agent stops brute-reading giant files just to find one useful implementation.

| Task | Traditional approach | With jCodeMunch |

|---|---|---|

| Find a function | Open and scan large files | Search symbol → fetch exact implementation |

| Understand a module | Read broad file regions | Pull only relevant symbols and imports |

| Explore repo structure | Traverse file after file | Query outlines, trees, and targeted bundles |

Index once. Query cheaply. Keep moving. Precision context beats brute-force context.

jCodeMunch MCP

Structured code retrieval for serious AI agents

Commercial licenses

jCodeMunch-MCP is free for non-commercial use.

Commercial use requires a paid license.

jCodeMunch-only licenses

- Builder — $79 — 1 developer

- Studio — $349 — up to 5 developers

- Platform — $1,999 — org-wide internal deployment

Want both code and docs retrieval?

Stop paying your model to read the whole damn file.

jCodeMunch turns repo exploration into structured retrieval.

Instead of forcing an agent to open giant files, wade through imports, boilerplate, comments, helpers, and unrelated code, jCodeMunch lets it navigate by what the code is and retrieve only what matters.

That means:

- 95%+ lower code-reading token usage in many retrieval-heavy workflows

- less irrelevant context polluting the prompt

- faster repo exploration

- more accurate code lookup

- less repeated file-scanning nonsense

It indexes your codebase once using tree-sitter, stores structured symbol metadata plus byte offsets into the original source, and retrieves exact implementations on demand instead of re-reading entire files over and over.

Recent releases have also made that retrieval workflow sharper and more useful in real engineering work, with BM25-based symbol search, context bundles, compact search modes, query suggestions for unfamiliar repos, dependency graphs, class hierarchy traversal, blast-radius analysis, multi-symbol bundles, live watch-based reindexing, automatic Claude Code worktree discovery (watch-claude), and benchmark reproducibility improvements.

Real-world results

Reproducible token efficiency benchmark

Measured with tiktoken cl100k_base across three public repos. Workflow: search_symbols (top 5) + get_symbol_source × 3 per query. Baseline: all source files concatenated (minimum cost for an agent that reads everything). Full methodology and harness →

| Repository | Files | Symbols | Baseline tokens | jCodeMunch tokens | Reduction |

|---|---|---|---|---|---|

| expressjs/express | 34 | 117 | 73,838 | ~1,300 avg | 98.4% |

| fastapi/fastapi | 156 | 1,359 | 214,312 | ~15,600 avg | 92.7% |

| gin-gonic/gin | 40 | 805 | 84,892 | ~1,730 avg | 98.0% |

| Grand total (15 task-runs) | 1,865,210 | 92,515 | 95.0% |

Per-query results range from 79.7% (dense FastAPI router query) to 99.8% (sparse context-bind query on Express). The 95% figure is the aggregate. Run python benchmarks/harness/run_benchmark.py to reproduce.

A/B test on production codebase

Independent 50-iteration A/B test on a real Vue 3 + Firebase production codebase — JCodeMunch vs native tools (Grep/Glob/Read), Claude Sonnet 4.6, fresh session per iteration:

| Metric | Native | JCodeMunch |

|---|---|---|

| Success rate | 72% | 80% |

| Timeout rate | 40% | 32% |

| Mean cost/iteration | $0.783 | $0.738 |

| Mean cache creation | 104,135 | 93,178 (−10.5%) |

Tool-layer savings isolated from fixed overhead: 15–25%. One finding category appeared exclusively in the JCodeMunch variant: orphaned file detection via find_importers — a structural query native tools cannot answer without scripting.

Full report: benchmarks/ab-test-naming-audit-2026-03-18.md

Why agents need this

Most agents still inspect codebases like tourists trapped in an airport gift shop:

- open entire files to find one function

- re-read the same code repeatedly

- consume imports, boilerplate, and unrelated helpers

- burn context window on material they never needed in the first place

jCodeMunch fixes that by giving them a structured way to:

- search symbols by name, kind, or language

- inspect file and repo outlines before pulling source

- retrieve exact symbol implementations only

- grab a context bundle when surrounding imports matter

- fall back to text search when structure alone is not enough

Agents do not need bigger and bigger context windows.

They need better aim.

What you get

Symbol-level retrieval

Find and fetch functions, classes, methods, constants, and more without opening entire files.

Faster repo understanding

Inspect repository structure and file outlines before asking for source.

Lower token spend

Send the model the code it needs, not 1,500 lines of collateral damage.

Structural queries native tools can't answer

find_importers tells you what imports a file. get_blast_radius tells you what breaks if you change a symbol. get_class_hierarchy traverses inheritance chains. These are not "faster grep" — they are questions grep cannot answer at all.

Better engineering workflows

Useful for onboarding, debugging, refactoring, impact analysis, and exploring unfamiliar repos without brute-force file reading.

Local-first speed

Indexes are stored locally for fast repeated access.

How it works

jCodeMunch indexes local folders or GitHub repos, parses source with tree-sitter, extracts symbols, and stores structured metadata alongside raw file content in a local index. Each symbol includes enough information to be found cheaply and retrieved precisely later.

That includes metadata like:

- signature

- kind

- qualified name

- one-line summary

- byte offsets into the original file

So when the agent wants a symbol, jCodeMunch can fetch the exact source directly instead of loading and rescanning the full file.

Start fast

1. Install it

pip install jcodemunch-mcp

2. Add it to your MCP client

If you’re using Claude Code:

claude mcp add jcodemunch uvx jcodemunch-mcp

3. Tell your agent to actually use it

This matters more than people think.

Installing jCodeMunch makes the tools available. It does not guarantee the agent will stop its bad habit of brute-reading files unless you instruct it to prefer symbol search, outlines, and targeted retrieval. The changelog specifically calls out improved onboarding around this because it is a real source of confusion for first-time users.

A simple instruction like this helps:

Use jcodemunch-mcp for code lookup whenever available. Prefer symbol search, outlines, and targeted retrieval over reading full files.

Note: For a comprehensive guide on enforcing these rules through agent hooks and prompt policies, see AGENT_HOOKS.md.

Configuration

Settings are controlled by a JSONC config file (config.jsonc) with env var fallbacks for backward compatibility. Defaults are chosen so that a fresh install works without any configuration.

Quick setup

jcodemunch-mcp config --init # create ~/.code-index/config.jsonc from template

jcodemunch-mcp config # show effective configuration

jcodemunch-mcp config --check # validate config + verify prerequisites

--check validates that your config file is well-formed, your AI provider package is installed, your index storage path is writable, and HTTP transport packages are present. Exits non-zero on any failure — useful for CI/CD or first-run scripts.

Config file locations

| Layer | Path | Purpose |

|---|---|---|

| Global | ~/.code-index/config.jsonc |

Server-wide defaults |

| Project | {project_root}/.jcodemunch.jsonc |

Per-project overrides |

Project config merges over global config — closest to the work wins.

Token-control levers (reduce schema tokens per turn)

| Config key | What it controls | Typical savings |

|---|---|---|

disabled_tools |

Remove tools from schema entirely | ~100–400 tokens/tool |

languages |

Shrink language enum + gate features | ~2–86 tokens/turn |

meta_fields |

Filter _meta response fields |

~50–150 tokens/call |

descriptions |

Control description verbosity | ~0–600 tokens/turn |

See the full template for all available keys. Run jcodemunch-mcp config --init to generate one.

Deprecated env vars (v2.0 will remove)

The following env vars still work but are deprecated. Config file values take priority:

| Variable | Config key | Default |

|---|---|---|

JCODEMUNCH_USE_AI_SUMMARIES |

use_ai_summaries |

true |

JCODEMUNCH_TRUSTED_FOLDERS |

trusted_folders |

[] |

JCODEMUNCH_MAX_FOLDER_FILES |

max_folder_files |

2000 |

JCODEMUNCH_MAX_INDEX_FILES |

max_index_files |

10000 |

JCODEMUNCH_STALENESS_DAYS |

staleness_days |

7 |

JCODEMUNCH_MAX_RESULTS |

max_results |

500 |

JCODEMUNCH_EXTRA_IGNORE_PATTERNS |

extra_ignore_patterns |

[] |

JCODEMUNCH_CONTEXT_PROVIDERS |

context_providers |

true |

JCODEMUNCH_REDACT_SOURCE_ROOT |

redact_source_root |

false |

JCODEMUNCH_STATS_FILE_INTERVAL |

stats_file_interval |

3 |

JCODEMUNCH_SHARE_SAVINGS |

share_savings |

true |

JCODEMUNCH_SUMMARIZER_CONCURRENCY |

summarizer_concurrency |

4 |

JCODEMUNCH_ALLOW_REMOTE_SUMMARIZER |

allow_remote_summarizer |

false |

JCODEMUNCH_RATE_LIMIT |

rate_limit |

0 |

JCODEMUNCH_TRANSPORT |

transport |

stdio |

JCODEMUNCH_HOST |

host |

127.0.0.1 |

JCODEMUNCH_PORT |

port |

8901 |

JCODEMUNCH_LOG_LEVEL |

log_level |

WARNING |

AI provider keys (ANTHROPIC_API_KEY, GOOGLE_API_KEY, OPENAI_API_BASE, etc.) and CODE_INDEX_PATH are always read from env vars — they are never placed in config files.

AI provider priority: Anthropic → Gemini → local LLM → signature fallback. The first key that is set wins. jcodemunch-mcp config shows which provider is active.

When does it help?

A common question: does this only help during exploration, or also when the agent is prompted to read a file before editing?

It helps most when editing a specific function. The "read before edit" constraint doesn't require reading the whole file — it requires reading the code. get_symbol_source gives you exactly the function body you're about to touch, nothing else. Instead of reading 700 lines to edit one method, you read those 30 lines.

| Scenario | Native tool | jCodemunch | Savings |

|---|---|---|---|

| Edit one function (700-line file) | Read → 700 lines |

get_symbol_source → 30 lines |

~95% |

| Understand a file's structure | Read → full content |

get_file_outline → names + signatures |

~80% |

| Find which file to edit | Grep many files |

search_symbols → exact match |

comparable |

| Edit requires whole-file context | Read → full content |

get_file_content → full content |

~0% |

| "What breaks if I change X?" | not possible | get_blast_radius |

unique capability |

The cases where it doesn't help: edits that genuinely require understanding the entire file (restructuring file-level state, reordering logic that spans hundreds of lines). For those, get_file_content is roughly equivalent to Read. The cases where it helps most are targeted edits — one function, one method, one class — which is the majority of real editing work.

Best for

- large repositories

- unfamiliar codebases

- agent-driven code exploration

- refactoring and impact analysis

- teams trying to cut AI token costs without making agents dumber

- developers who are tired of paying premium rates for glorified file scrolling

New here?

Start with QUICKSTART.md for the fastest setup path.

Then index a repo, ask your agent what it has indexed, and have it retrieve code by symbol instead of reading entire files. That is where the savings start.

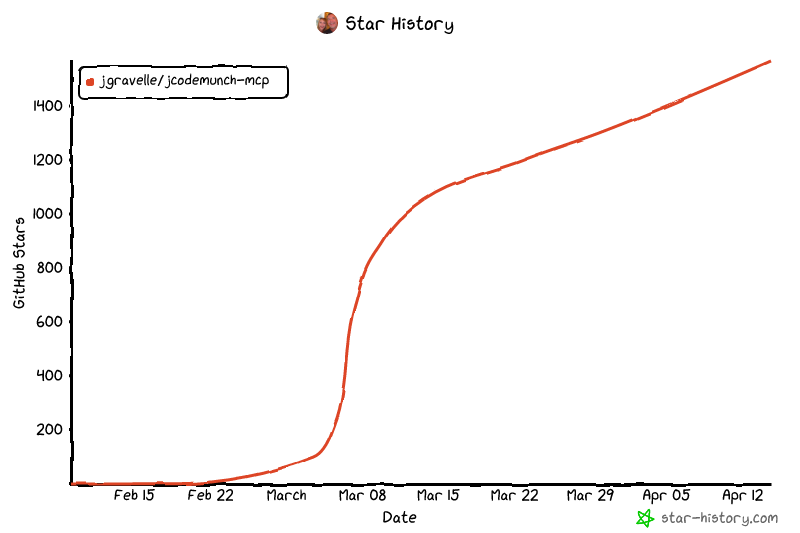

Star History

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file jcodemunch_mcp-1.11.15.tar.gz.

File metadata

- Download URL: jcodemunch_mcp-1.11.15.tar.gz

- Upload date:

- Size: 768.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.2

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

08906fd6574a53cd004790fd1d7c41d9fe066adfb60e7b8844f73ec5bd98f043

|

|

| MD5 |

d0679fa4a447b1aac2ae2cbf614ac552

|

|

| BLAKE2b-256 |

6fb140af3eda4096836042cfdfb3600f1c6683c09b0d569d5a7b4b361929a5f4

|

File details

Details for the file jcodemunch_mcp-1.11.15-py3-none-any.whl.

File metadata

- Download URL: jcodemunch_mcp-1.11.15-py3-none-any.whl

- Upload date:

- Size: 245.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.2

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

1669cbcf79658ea7b7a7f5f6c16b9aaa082205c8e8f3e1a11456adfaec9cf3e0

|

|

| MD5 |

e884d76510a7a5ecdd992015b0d528af

|

|

| BLAKE2b-256 |

8684d05e0694b2f536fd482ba480706994b3d36602c3ea975e9766612ba12877

|