GPU Cluster Health Management

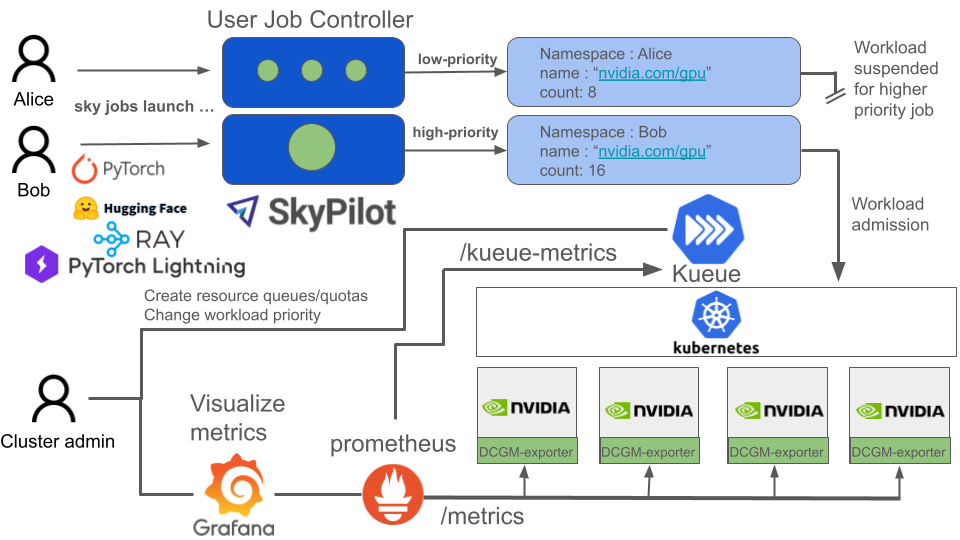

Konduktor is built on Kubernetes. It combines existing open source tools into a platform that lets ML researchers submit batch jobs and lets administrative/infra teams manage GPU clusters.

How it works

Konduktor uses a combination of open source projects. Where a tool exists under MIT, Apache, or another compatible open license, we want to use it and even contribute to it. Where we see gaps in tooling, we build our own.

Architecture

Konduktor can be self-hosted on any certified Kubernetes distribution, or managed by us. Contact us at founders@trainy.ai if you are interested in the managed version. For now we are focused on tooling for clusters with NVIDIA cards, but in the future we may expand our scope to support other accelerators.

For ML researchers

- Konduktor CLI & SDK - a user-friendly batch job framework in which users only need to specify the resource requirements of their job and a script to launch, which makes it simple to scale work across multiple nodes. It works with most ML application frameworks out of the box.

    num_nodes: 100
    resources:
      accelerators: H100:8
      cloud: kubernetes
      labels:
        kueue.x-k8s.io/queue-name: user-queue
        kueue.x-k8s.io/priority-class: low-priority
    run: |
      torchrun \
      --nproc_per_node 8 \
      --rdzv_id=1 --rdzv_endpoint=$master_addr:1234 \
      --rdzv_backend=c10d --nnodes $num_nodes \
      torch_ddp_benchmark.py --distributed-backend nccl
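To make the resource request in the task file above concrete, here is a minimal sketch that works out how many GPUs the job asks for in total. It is not part of the Konduktor SDK: the `task` dict is a hand-written mirror of the YAML, and `parse_accelerators` is a hypothetical helper that assumes the `TYPE:COUNT` form seen in `H100:8`.

```python
def parse_accelerators(spec: str) -> tuple[str, int]:
    """Split an accelerator string such as "H100:8" into (type, count per node).

    Assumption: a missing ":COUNT" suffix means one accelerator per node.
    """
    name, _, count = spec.partition(":")
    return name, int(count) if count else 1


# Hand-written mirror of the task file above (illustrative only).
task = {
    "num_nodes": 100,
    "resources": {
        "accelerators": "H100:8",
        "cloud": "kubernetes",
        "labels": {
            "kueue.x-k8s.io/queue-name": "user-queue",
            "kueue.x-k8s.io/priority-class": "low-priority",
        },
    },
}

gpu_type, gpus_per_node = parse_accelerators(task["resources"]["accelerators"])
total_gpus = task["num_nodes"] * gpus_per_node
print(f"{task['num_nodes']} nodes x {gpus_per_node} {gpu_type} = {total_gpus} GPUs")
# prints "100 nodes x 8 H100 = 800 GPUs"
```

The per-node count matches the `--nproc_per_node 8` argument in the `run` section, so `torchrun` starts one process per requested GPU on each node.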

For cluster administrators

- DCGM Exporter, GPU Operator, Network Operator - For installing the NVIDIA driver and container runtime, and for exporting node health metrics.

- Kueue - For centralized creation of job queues, gang scheduling, and resource quotas and sharing across projects.

- Prometheus - For publishing metrics about node health and workload queues.

- OpenTelemetry - For pushing logs from each node.

- Grafana, Loki - For visualizing metrics and logs.
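The gang scheduling and quota ideas mentioned for Kueue can be illustrated with a toy model. The sketch below is not Kueue's implementation or API: `Job` and `admit` are hypothetical names, the quota is a single GPU count, and the real admission order depends on how Kueue is configured. It only shows the core rule that a multi-node job is admitted with all of its GPUs or not at all.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    num_nodes: int
    gpus_per_node: int

    @property
    def gpus(self) -> int:
        # Total GPUs the job needs across all of its nodes.
        return self.num_nodes * self.gpus_per_node


def admit(queue: list[Job], quota_gpus: int) -> list[str]:
    """Admit jobs in queue order; each job is admitted whole or not at all."""
    admitted = []
    free = quota_gpus
    for job in queue:
        if job.gpus <= free:
            # Every node of the job fits, so the whole gang can start together.
            free -= job.gpus
            admitted.append(job.name)
        # Otherwise the job keeps waiting; no partial allocation is made.
    return admitted


queue = [Job("train-a", 2, 8), Job("train-b", 4, 8), Job("eval-c", 1, 8)]
print(admit(queue, quota_gpus=32))
# prints "['train-a', 'eval-c']"
```

Here `train-b` needs 32 GPUs but only 16 remain after `train-a`, so it waits rather than starting on a subset of its nodes, which would leave a distributed job stalled at rendezvous.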

Community & Support

Development Setup

Prerequisites

- Python 3.9+ (3.10+ recommended)

- Poetry for dependency management (installation guide)

- kubectl and access to a Kubernetes cluster (for integration/smoke tests)
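Before installing, the prerequisites above can be checked with a few lines of standard-library Python. This is a convenience sketch rather than a script shipped with the repository; `check_prerequisites` is a hypothetical helper, and it only verifies that the tools are on `PATH`, not that `kubectl` can reach a cluster.

```python
import shutil
import sys


def check_prerequisites(min_python=(3, 9), tools=("poetry", "kubectl")) -> dict[str, bool]:
    """Return a name -> ok mapping for the interpreter version and each CLI tool."""
    results = {"python": sys.version_info[:2] >= min_python}
    for tool in tools:
        # shutil.which returns None when the executable is not on PATH.
        results[tool] = shutil.which(tool) is not None
    return results


for name, ok in check_prerequisites().items():
    print(f"{name}: {'ok' if ok else 'missing'}")
```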

Quick Start

    # Clone the repository
    git clone https://github.com/Trainy-ai/konduktor.git
    cd konduktor

    # Install dependencies (including dev tools)
    poetry install --with dev

    # Verify installation
    poetry run konduktor --help

Running Tests

    # Run unit tests
    poetry run pytest tests/unit_tests/ -v

    # Run smoke tests (requires Kubernetes cluster)
    poetry run pytest tests/smoke_tests/ -v

Code Formatting

All code must pass linting before being merged. Run the format script to auto-fix issues:

    bash format.sh

This runs:

- ruff - Python linter and formatter

- mypy - Static type checking

Local Kubernetes Cluster (Optional)

For running smoke tests locally, you can set up a kind cluster:

    # Install kind and set up a local cluster with JobSet and Kueue
    bash tests/kind_install.sh

File details

Details for the file konduktor_nightly-0.1.0.dev20260207105559.tar.gz.

File metadata

- Download URL: konduktor_nightly-0.1.0.dev20260207105559.tar.gz

- Upload date:

- Size: 253.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.1.1 CPython/3.10.19 Linux/6.14.0-1017-azure

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 8f508d341ac44f8dc9b7f1b20579aef6bc7f6c1d04161090297cb6afb0fa9bc4 |
| MD5 | ff5e362b19334ab91d40f911894a15c5 |
| BLAKE2b-256 | 9d90cbaa64cd8af409c473b4b4e3619732912f6f6acb77df5e5fe1b63394fa07 |

File details

Details for the file konduktor_nightly-0.1.0.dev20260207105559-py3-none-any.whl.

File metadata

- Download URL: konduktor_nightly-0.1.0.dev20260207105559-py3-none-any.whl

- Upload date:

- Size: 300.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.1.1 CPython/3.10.19 Linux/6.14.0-1017-azure

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3747920cb11f1cf887c482e26b2c79e47ed8a5d78020208d24138af121e25339 |
| MD5 | 30e47c28a3dffc845977dd9148c6c799 |
| BLAKE2b-256 | b10e2cfbe8997d76fac86a3064f260498afbfe3a38e81b6d7b9ed18d55acc09a |