Automatic performance capture and analysis for production applications in Python using a custom columnar database written in Rust.

Project description

Automatic performance capture and analytics for production applications in Python using a custom columnar database written in Rust.

View Kronicler on PyPi.org, Crates.io and GitHub.

[!IMPORTANT] Kronicler is still early in development.

Benefits of using Kronicler

- Automatic performance capturing

- Lightweight and concurrent*

- One Python dependency

- Works out-of-the-box without configuration

* concurrency is in development but not fully implemented as of version 0.1.1. Track concurrency in issue #41.

Why use Kronicler?

If you want to monitor the performance of a production application, kronicler offers efficient and lightweight logging with a single library. Kronicler lets you view runtime statistics for functions like mean and median as well as statistics for different percentiles.

A use-case for these statistics is to find functions that occasionally operate much slower than they do on average. By looking at the "slowest" speed, the standard error, and the mean, you can find functions that occasionally run much slower than expected. Sometimes it's hard to find and replicate these issues in a test environment, so keeping logs in your production application can improve your ability to find these issues.

What really is Kronicler?

| Name | Description | Link |

|---|---|---|

| Kronicler (Python) | A Python library that provides the @kronicler.capture decorator to save performance logs to a database. |

More about Python |

| Kronicler (Database) | Custom columnar database designed for log capturing and performance analytics. | More about Database |

| Kronicler (Rust) | A Rust library for accessing the columnar database and capture functions for Rust-based log capturing tasks. | More about Rust |

| Kronicler (CLI) | Rust-based CLI tool for analyzing performance logs captured with the capture methods. | More about CLI |

| Kronicler (Web) | Prototype web portal for remotely viewing performance logs. | More about Web |

Install (Python)

Install with Pip for Python

pip install kronicler

You may need to use a virtual environment.

Usage (Python)

Kronicler provides a Python decorator called capture that will calculate the time it takes to run the function.

import kronicler

@kronicler.capture()

def my_function():

pass

Architecture

Simplified version of the package and database architecture. The data is passed from the Python decorator called capture to the database's queue. It then consumes that queue to insert each field into its respective column. The column uses the bufferpool to operate on pages.

This does not include details on:

- How the

bufferpoolmanagespages. - How

pagesoperate. capture,index,row, or saving and loading withmetadata.

The Database

The columnar database is somewhat inspired by my previous database called RedoxQL. A lot of the structure and architecture is different as well as how data is stored.

The Bufferpool

The bufferpool is based on my bufferpool project. I had to modify it to work with the rest of this database.

Future Languages

Install and Usage for Rust is coming soon...

I plan to implement the Rust version as an attribute to be used like the following:

#[capture]

fn foo() { todo!() }

Examples

Using Kronicler (Basic Example)

Here is the most basic usage of Kronicler. Use it to capture the runtime of functions by adding the @kronicler.capture decorator.

import kronicler

@kronicler.capture

def foo():

print("Foo")

Using Kronicler with FastAPI

With just two lines of code, you can add Kronicler to your FastAPI server.

from fastapi import FastAPI

import uvicorn

import kronicler

app = FastAPI()

# Used only for optionally returning logs

DB = kronicler.Database(sync_consume=True)

# You need to wrap helper functions

@kronicler.capture

def foo():

return {"Hello": "World"}

# You cannot wrap routes right now

@app.get("/")

def read_root():

return foo()

# Return the logs to the user (optional)

@app.get("/logs")

def read_logs():

return DB.fetch_all_as_dict()

if __name__ == "__main__":

uvicorn.run(app, host="0.0.0.0", port=8000)

Code from tests/fastapi-test/main.py.

Using Kronicler manually

It's recommended to first use Kronicler's build-in capture decorator. However, if you want to write your own capture statements and fetch data yourself, you can use the functions in that come with the Python library.

import kronicler

DB = kronicler.Database(sync_consume=True)

def foo():

DB.capture("String Value", [], 100, 200)

# Call the function

foo()

fetched = DB.fetch(0)

print(fetched)

More functionality will be added to the Python library in future releases.

Using Kronicler's database directly

If you're interested in using Kronicler's database directly in Rust to add custom logging functions (or just to use a columnar database), the library is published to crates.io.

Install with Cargo for Rust

cargo install kronicler

Add as a dependency in your Cargo.toml.

[dependencies]

kronicler = "0.1.1"

To get a good idea of how to use Kronicler's internal Rust database, I'd recommended looking at some of the tests in the Rust files. You can also look at the source code for the kr binary in main.rs.

Here is an example of a function that fetches data based on index. It creates a Database from the new_reader trait.

use kronicler::database::Database;

fn fetch_one(index: usize) {

let mut db = Database::new_reader();

let row = db.fetch(index);

if let Some(r) = row {

println!("{}", r.to_string());

}

}

fn main() {

fetch_one(0);

fetch_one(1);

}

You can also make a database that writes new capture data with the new trait.

use kronicler::database::Database;

fn main() {

let mut db = Database::new();

db.capture("Name".to_string(), vec![], 100, 200);

}

Performance

Kronicler is designed to be as lightweight as possible. By adding logs to a queue concurrently*, Kronicler doesn't affect performance by much [PR #73, PR #76].

For accessing logs and running calculations, Kronicler uses a columnar database design to optimize file operations when looking at lots of data from only a few columns typical of analytics tasks.

* concurrency is in development but not fully implemented as of version 0.1.1. Track concurrency in issue #123.

Kronicler DB vs. SQLite

I implemented a test version of Kronicler logging that uses SQLite under the hood to benchmark against.

Experiment 1. Insert 1000 captures

Here is the function that we capture with kronicler_sqlite.capture defined by our test package that uses SQLite.

@kronicler_sqlite.capture

def foo_1():

val = 9

for x in range(4):

val += val + x

return val

Here is where we call the function CAPTURE_COUNT times. Which in this case is 1000.

def test_sqlite():

## Test for kronicler_sqlite

# Warmup

for _ in range(WARMUP_COUNT):

foo_1()

# Test

for _ in range(CAPTURE_COUNT):

foo_1()

We do the same for the Kronicler DB version with kronicler.capture.

We then run each of those functions 50 times and log the results:

if __name__ == "__main__":

insert_times_data = []

avg_times_data = []

for x in tqdm.tqdm(range(REPEATS)):

# TEST sqlite inserts

start = time.time_ns()

test_sqlite()

end = time.time_ns()

print(f"{test_sqlite.__name__} took {end - start}ns")

insert_times_data.append((test_sqlite.__name__, end - start))

# TEST sqlite avg

start = time.time_ns()

avg_val = avg_sqlite()

end = time.time_ns()

print(f"{avg_sqlite.__name__} = {avg_val} and took {end - start}ns")

avg_times_data.append((avg_sqlite.__name__, end - start))

# Wait for any cleanup to happen between SQLite and Columnar

time.sleep(2)

# TEST columnar inserts

start = time.time_ns()

test_columnar()

end = time.time_ns()

print(f"{test_columnar.__name__} took {end - start}ns")

insert_times_data.append((test_columnar.__name__, end - start))

# TEST columnar avg

start = time.time_ns()

avg_val = avg_columnar()

end = time.time_ns()

print(f"{avg_columnar.__name__} = {avg_val} and took {end - start}ns")

avg_times_data.append((avg_columnar.__name__, end - start))

# Wait for any cleanup to happen between Columnar and No log

time.sleep(2)

# TEST no log inserts

start = time.time_ns()

test_no_logging()

end = time.time_ns()

print(f"{test_no_logging.__name__} took {end - start}ns")

insert_times_data.append((test_no_logging.__name__, end - start))

# TEST no logging avg

start = time.time_ns()

avg_val = avg_no_logging()

end = time.time_ns()

print(f"{avg_no_logging.__name__} = {avg_val} and took {end - start}ns")

avg_times_data.append((avg_no_logging.__name__, end - start))

with open("sync_insert_data.json", "w") as file:

json.dump(insert_times_data, file)

with open("sync_avg_data.json", "w") as file:

json.dump(avg_times_data, file)

This test is called simple_sync.py. Note: concurrent mode is turned off as of v0.1.1, so those tests will not run. The concurrent version of the test is simple_concurrent.py and will exit with an error message explaining the lack of support in v0.1.1.

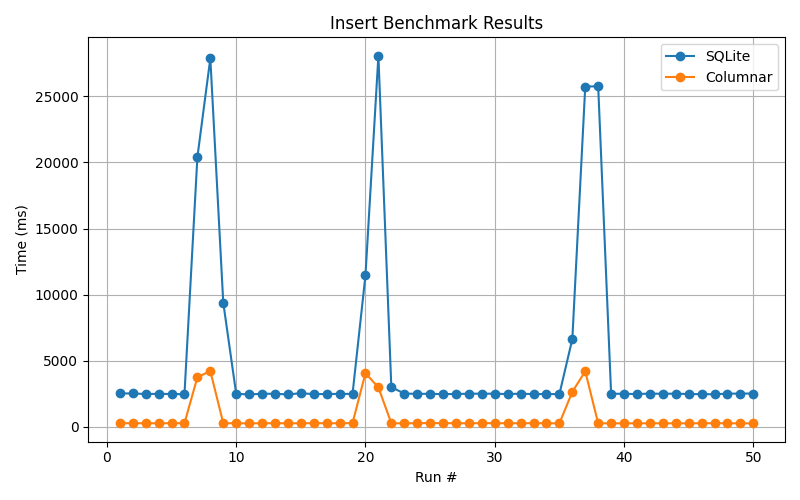

Insert (writing data to disk):

The data can be found at sync_insert_data.json.

Inserting is 7.71x faster than SQLite.

It's still unknown why SQLite has large latency spikes, but information about that will be mentioned in issue #101 if found.

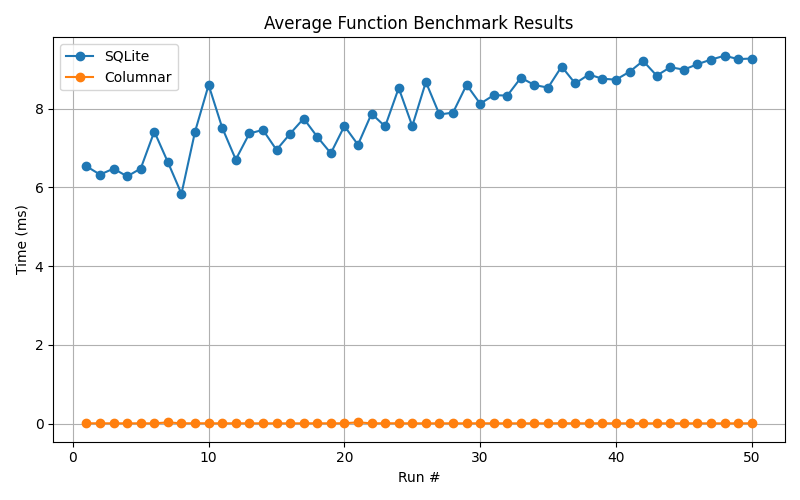

Average (calculating the mean):

The data can be found at sync_avg_data.json.

Kronicler uses amortized constant calculations to keep an average runtime for functions. This causes the mean calculation to be 837.24x faster than the average function in SQLite.

Here is how it's done in SQLite:

SELECT AVG(delta) FROM function_calls WHERE function_name = ?

Kronicler keeps an updated mean on insert. This same approach can be done with SQLite by having your own index on function names and a table to keep track of the mean function time the same way Kronicler does it, however that's not the default and you'd have to build that into your implementation.

Kronicler Mean with Large Data Test

Correctness for average (mean) calculation for large data

The test script

rm -rf .kronicler_data

sleep 2

python3 ./large_average.py

echo -e "Finished running large_average.\n\n"

rm -rf .kronicler_data

sleep 2

python3 ./large_average_no_in_mem_comp.py

echo -e "Finished running large_average_no_in_mem_comp.\n\n"

echo "Note: large_average has a higher insert time because it needs to store ground truth values in memory. The ground truth average will also be quicker because it uses only in-memory values."

This script is called run_large_avg.sh.

Importantly, we test that the average is correct using a "ground truth" calculation.

start = time.time()

kr_avg = DB.average("jake")

kr_avg_time = time.time() - start

print("Kronicler average: ", kr_avg, f"ran in {kr_avg_time}.")

start = time.time()

py_avg = mean(ground_truth_data)

py_avg_time = time.time() - start

print("Ground truth average: ", py_avg, f"ran in {py_avg_time}.")

assert kr_avg - py_avg < 0.0001

This is from large_average.py.

Results

Running insert took 76.3846685886383

First row: Row(id=0, fields=["jake", Epoch(169), Epoch(367), Epoch(198)])

Fetched all 100000 rows.

Kronicler average: 200.19449000000037 ran in 2.1696090698242188e-05.

Ground truth average: 200.19449 ran in 0.0007288455963134766.

Finished running large_average.

Running insert took 77.20267271995544

First row: Row(id=0, fields=["jake", Epoch(191), Epoch(372), Epoch(181)])

Fetched all 100000 rows.

Kronicler average: 199.92816000000082 ran in 2.3126602172851562e-05.

Finished running large_average_no_in_mem_comp.

As you can see, in the first test the kronicler_data amortized const average

took 2.1696090698242188e-05 seconds but the ground truth written in Python

0.0007288455963134766 seconds. The Rust amortized constant is faster.

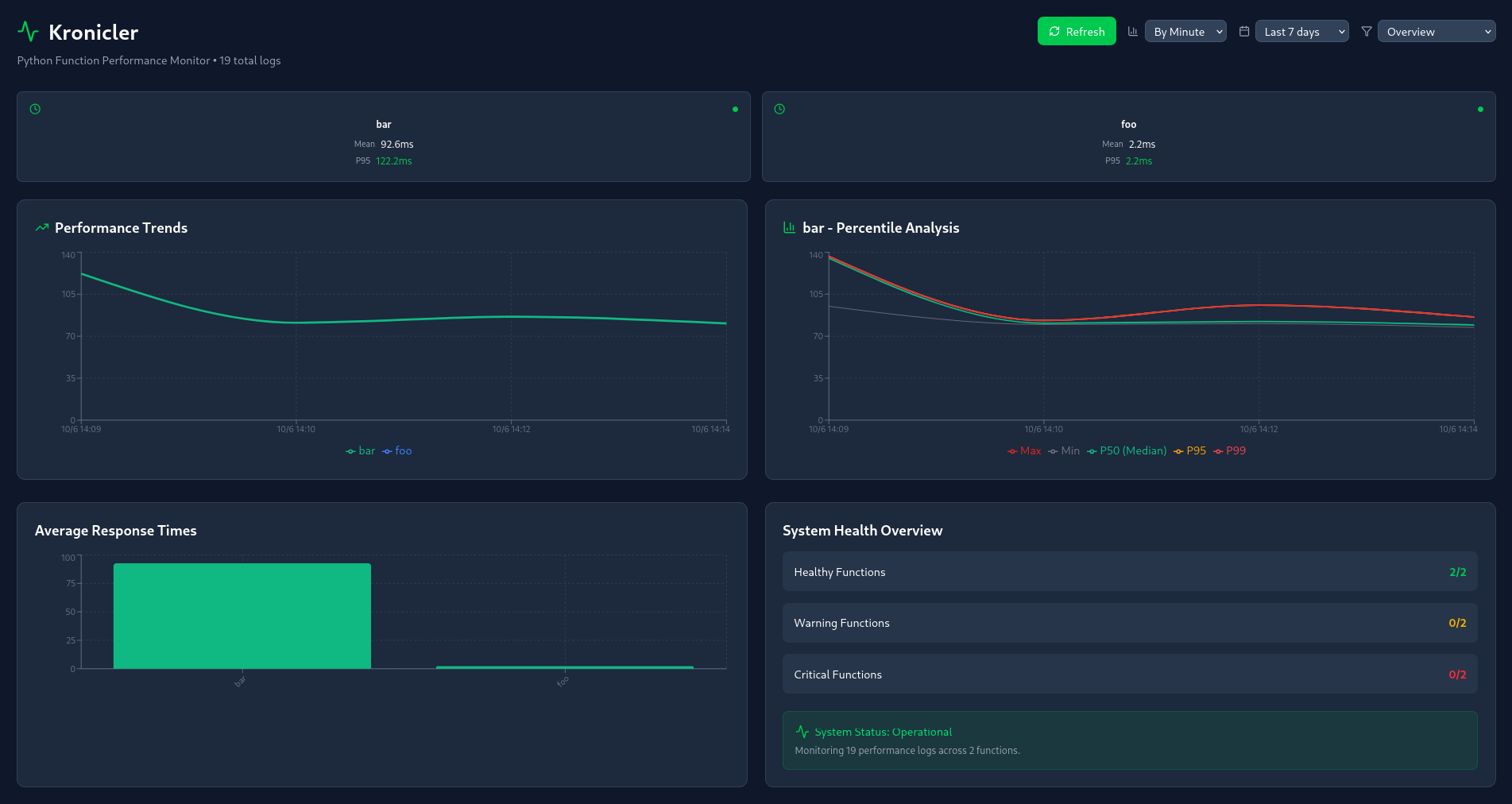

Analytics Web Dashboard

The Analytics Web Dashboard is still under construction. This feature will let you remotely view the logs collected from Kronicler.

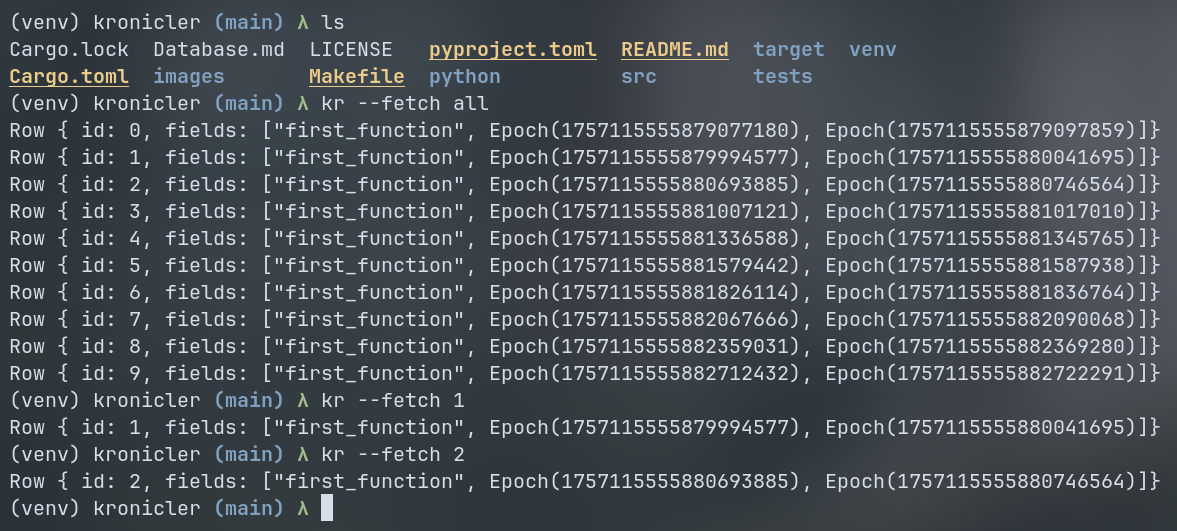

Analytics CLI

Install the Analytics CLI

cargo install kronicler

You can view all of your data by running kr in the directory of your data:

kr --fetch all

kr --fetch <index>

You should see the data collected:

In the future, there will be many options for sorting, filtering, and viewing specific statistics.

Logging

By adding the capture decorator to your code (as seen below), Kronicler will automatically test the runtime of your function when it gets called. The results of the test get added to the database. This data can later be viewed in the Analytics Web Dashboard or the Analytics CLI.

import kronicler

@kronicler.capture()

def my_function():

pass

Development

Building the Python package

1. Build the package

maturin build

You will need maturin installed, see Install Maturin.

2. Install the package

pip install --force-reinstall target/wheels/kronicler-*

Testing Python scrips

You can run the scripts in tests/ to test out the functionality of Kronicler.

python3 tests/many_test.py

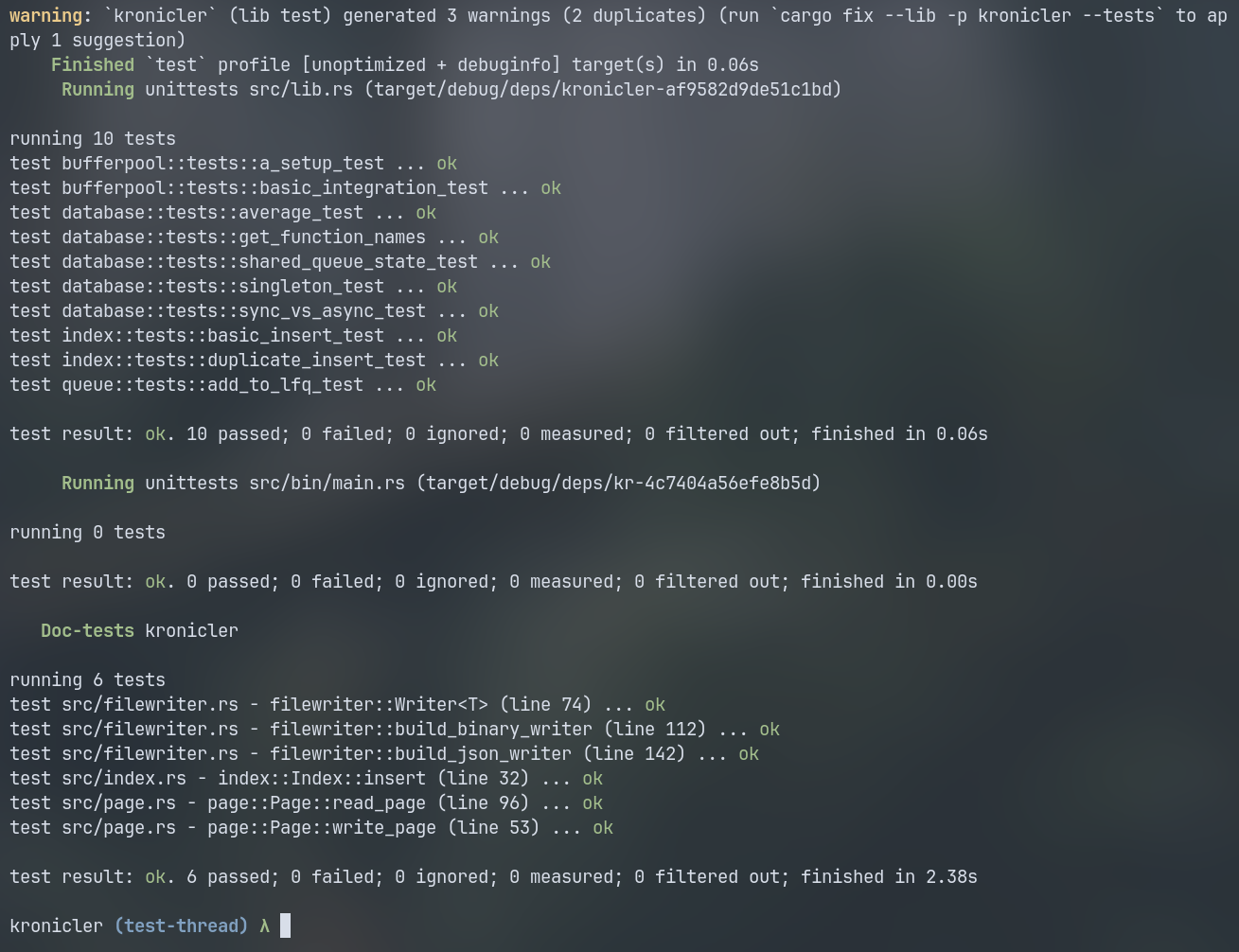

Testing Rust

You can run the testing suite with the following command:

cargo t

We use cargo t as an alias to run the tests in one thread.

The tests should all succeed

Publishing

Publishing to Crates.io

1. Increment the version everywhere

2. Run the publish dry-run

cargo publish --dry-run

3. Check package list

cargo package --list

Check that only the needed Rust files are included.

4. Publish!

cargo publish

Publishing to PyPi.org

Use maturin to publish the package

maturin publish --username __token__ --password <API Token>

You may need to get a new API token from PyPi.org.

Misc Docs

Python Virtual Environment

Create the virtual environment

python3 -m venv .venv

Enter the virtual environment

source .venv/bin/activate

I usually call my virtual environment either .venv or more frequently just venv

Install Maturin

pip install maturin

For more info, see the maturin docs.

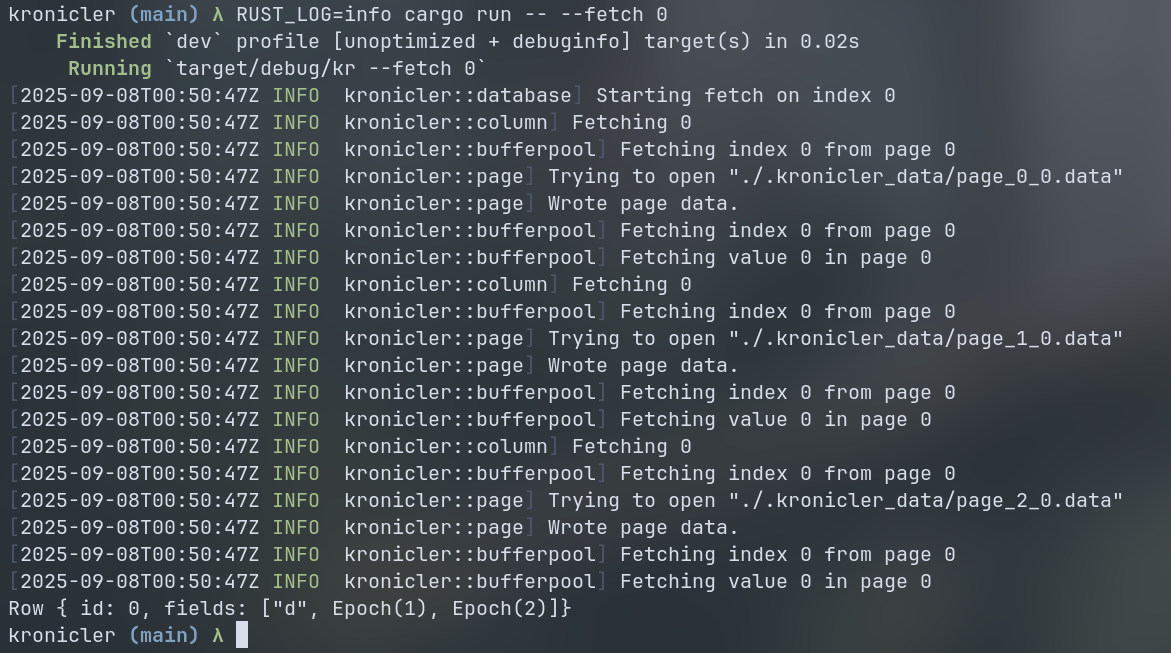

Rust Logging

Kronicler uses env_logger to log internals. To view these logs, you add the RUST_LOG env var.

For instance, you can include the logger for cargo run with the fetch argument.

RUST_LOG=info cargo run -- --fetch 0

This will print the logs:

Adding a log

You can add logs with the info! macro. There are also debug!, warn!, trace!, and error! variants.

use log::info;

fn main() {

let _ = env_logger::try_init();

info!("Some info here");

}

You only need to call try_init once, and that's already done in lib.rs and main.rs.

Formatting for Python

Kronicler uses Ruff for formatting.

You can format all Python files with:

ruff format *.py

You can also check that they have the right format with:

ruff check *.py

Formatting for Rust

Kronicler uses cargo fmt

Project details

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file kronicler-0.1.1.tar.gz.

File metadata

- Download URL: kronicler-0.1.1.tar.gz

- Upload date:

- Size: 35.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.9.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

a4f98568d6f42d160709700bbca5a148eb5bfca5dfcff1c4f4196a1b9ba3e994

|

|

| MD5 |

6dcfde0033fb2c98cbe9b4774720912d

|

|

| BLAKE2b-256 |

762a047a6abc0322ee5374365d140ad3b43a291141eb53e08742a899a8a0dd19

|

File details

Details for the file kronicler-0.1.1-cp313-cp313-manylinux_2_34_x86_64.whl.

File metadata

- Download URL: kronicler-0.1.1-cp313-cp313-manylinux_2_34_x86_64.whl

- Upload date:

- Size: 826.4 kB

- Tags: CPython 3.13, manylinux: glibc 2.34+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.9.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

45e2d3d140e688108f7ea0a898b5d634158620508c78269b4a95f2b52d7d60c9

|

|

| MD5 |

0f3256f24f13353ab34152c86a4067e6

|

|

| BLAKE2b-256 |

cf200f3a93dad0724bbb02fa6e77f9d8325586c13c5ac8bf7dcc480b9699b745

|