Hyperparameter search for image classifiers using Ray Tune + SkyPilot


krunic

Automated hyperparameter search for image classifiers - from dataset to tuned model with one command. Distributed across GPUs and across hosts, locally and on the cloud (AWS).

krunic uses off-the-shelf models and packages, so you won't get SOTA performance. That said, it can get surprisingly close with almost zero effort. It is useful as a baseline or for experimenting with architectures, GPU types, and so on.

Built on Ray Tune, Optuna, timm, and SkyPilot. NOTE: Requires Python ≥ 3.12. Ubuntu 22.04 users need to install a newer Python via the deadsnakes PPA or upgrade their OS.

Install (Mac and Linux)

$ pipx install krunic

This installs three commands: tunic (local training), krunic (cloud launcher), and tunic-plotter (results visualizer). Installation takes a couple of minutes.

Install (Windows)

Note: 1. This is untested because I don't have access to a Windows machine, though I expect it to work. 2. SkyPilot's Windows support is poor, so only tunic (local classifier tuning) is likely to work.

winget install Python.Python.3.12 # if Python 3.12+ is not already installed

py -m pip install --user pipx

py -m pipx ensurepath # restart terminal after this

pipx install krunic

Quick start

Local:

$ tunic --data /path/to/dataset --model resnet50 --n_trials 30 --epochs 30 --output results.json

Cloud (AWS):

This requires an AWS account. The image data must be copied to S3 before the run, for example:

$ aws s3 sync ~/image_data/tin s3://image.data/tin

$ krunic \
  --cluster skya \
  --s3-path tin \
  --model resnet50 \
  --accelerator T4:4 \
  --num-nodes 4 \
  --n-trials 48 \
  --n-epochs 50 \
  --prefix kaws

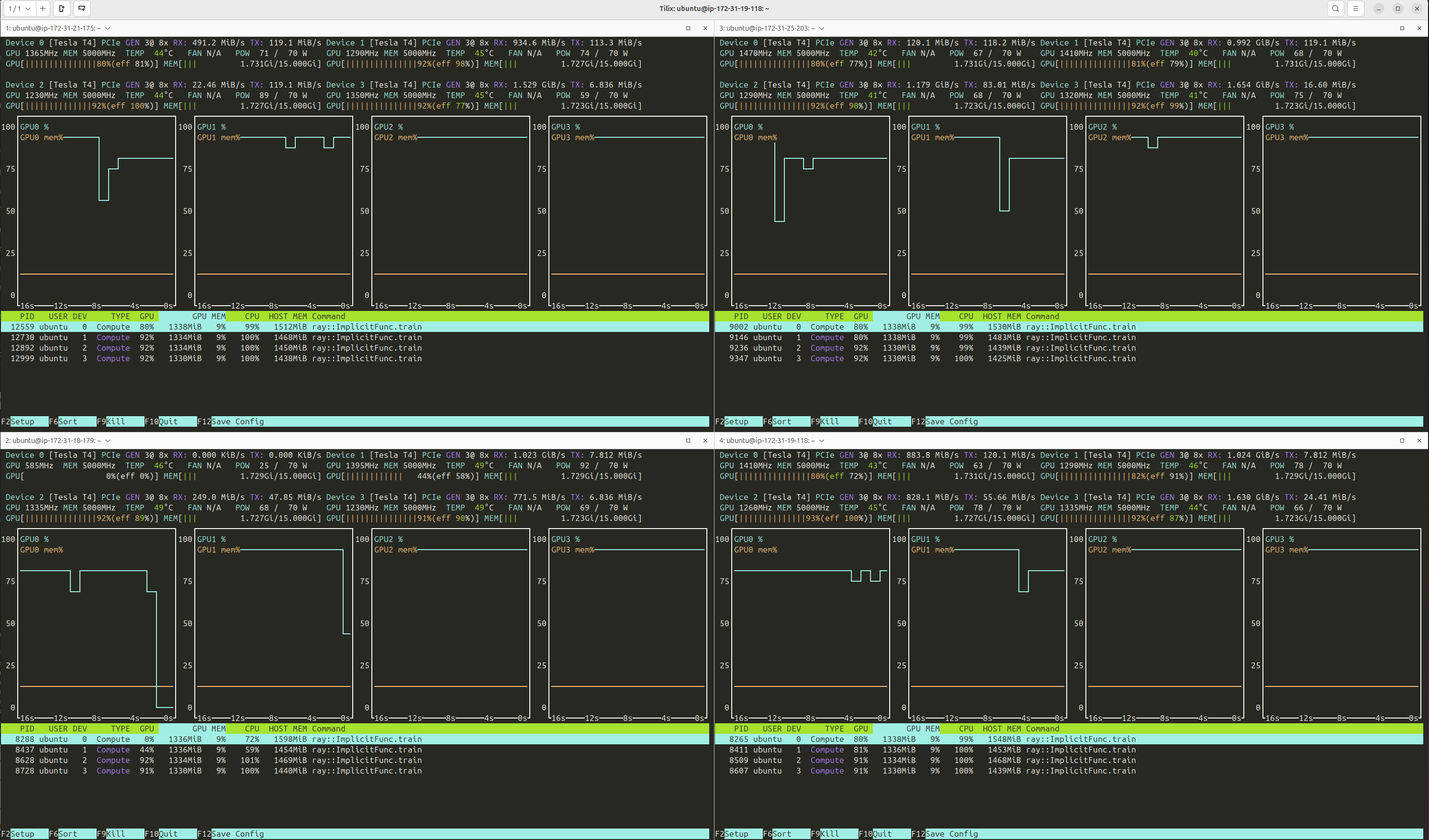

SkyPilot creates the cluster, Ray distributes the load across the GPUs. In my experiments, it achieves near-perfect utilization:

Upon completion, get the best model hyperparameters:

$ aws s3 cp s3://image.data/ray-results/kaws/kaws_results.json .

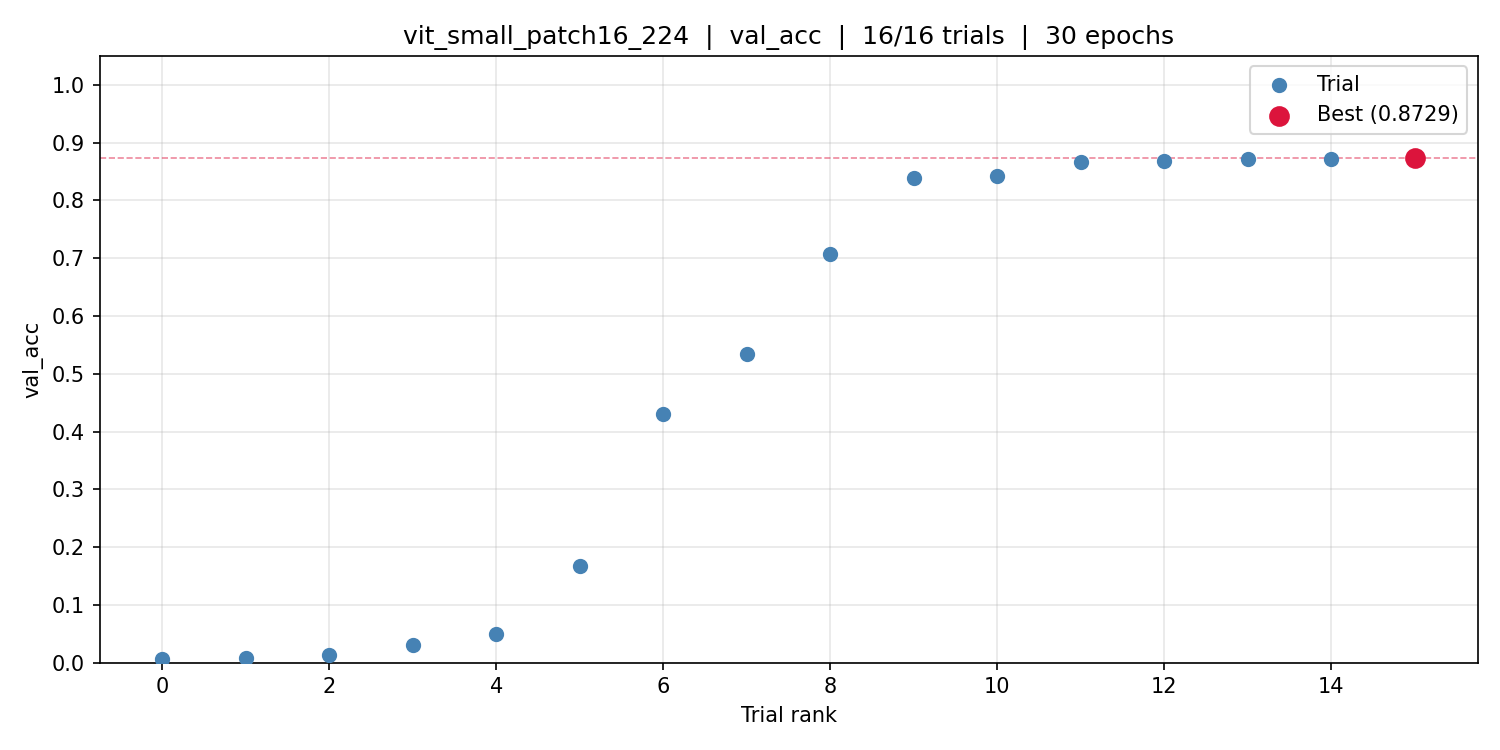

Plot metric per trial:

$ tunic-plotter kaws_results.json

Remember to take down the cluster after downloading the results.

$ yes | sky down skya

Train final model from tuning results (locally):

$ tunic --final kaws_results.json --data /path/to/dataset --epochs 50 --amp

Results on common benchmarks

| Dataset | Model | Metric | Validation | Test | SOTA |
|---|---|---|---|---|---|
| PCam | ResNet18 | AUROC | 0.96 | 0.96 | 0.96 |
| TinyImageNet | ViT-Small | Accuracy | 0.87 | 0.91 | |
| ChestMNIST | ResNet18 | AUROC | 0.75 | 0.75 | 0.77 |
| TissueMNIST | ResNet18 | AUROC | 0.92 | 0.94 | 0.93 |

All runs use generic off-the-shelf models with no domain-specific modifications.

Search space

| Parameter | Range |
|---|---|
| Optimizer | AdamW, SGD |
| Learning rate | 1e-5 – 1e-1 (log) |
| Weight decay | 1e-6 – 1e-1 (log) |
| Label smoothing | 0 – 0.3 |
| Dropout rate | 0 – 0.5 |
| RandAugment magnitude | 1 – 15 |
| RandAugment num ops | 1 – 4 |
| Mixup alpha | 0 – 0.5 |
| CutMix alpha | 0 – 1.0 |

Override any part with a YAML file via --search-space.
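
To make the ranges above concrete, here is a minimal sketch that draws one random configuration from the default search space using only the Python standard library. It is not krunic's implementation (krunic uses Optuna for the search). The keys `optimizer`, `lr`, `weight_decay`, `label_smoothing`, and `drop_rate` follow the output format shown later in this README; the remaining key names are hypothetical, and sampling the RandAugment values as integers is an assumption.

```python
import math
import random


def log_uniform(rng: random.Random, low: float, high: float) -> float:
    """Sample uniformly in log space, matching the "(log)" ranges in the table."""
    return math.exp(rng.uniform(math.log(low), math.log(high)))


def sample_config(rng: random.Random) -> dict:
    """Draw one configuration from the documented default ranges."""
    return {
        "optimizer": rng.choice(["AdamW", "SGD"]),
        "lr": log_uniform(rng, 1e-5, 1e-1),
        "weight_decay": log_uniform(rng, 1e-6, 1e-1),
        "label_smoothing": rng.uniform(0.0, 0.3),
        "drop_rate": rng.uniform(0.0, 0.5),
        # Hypothetical key names; integer sampling is an assumption.
        "randaugment_magnitude": rng.randint(1, 15),
        "randaugment_num_ops": rng.randint(1, 4),
        "mixup_alpha": rng.uniform(0.0, 0.5),
        "cutmix_alpha": rng.uniform(0.0, 1.0),
    }


if __name__ == "__main__":
    config = sample_config(random.Random(0))
    for name, value in config.items():
        print(f"{name}: {value}")
```

Sampling the learning rate and weight decay in log space gives each order of magnitude the same chance of being picked, which is why those two rows are marked "(log)".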

tunic - local hyperparameter search

tunic --data PATH --model MODEL [options]

| Flag | Default | Description |
|---|---|---|
| --data | required | Dataset root (ImageFolder or WebDataset) |
| --model | required | Any timm model name |
| --n_trials | 80 | Number of Optuna trials |
| --epochs | 30 | Training epochs per trial (also used for --final) |
| --tune-metric | val_auroc | Metric for trial selection and pruning |
| --training_fraction | 1.0 | Fraction of training data (val always uses 1.0) |
| --batch-size | 32 | Batch size per trial |
| --amp | | Enable automatic mixed precision |
| --resume | | Warm-start from a previous experiment directory |
| --final | | Skip tuning; train final model from results JSON |
| --combine | | Train final model on train+val combined |
| --final-model | tunic_final.pt | Output path for final model weights |
| --final-stats | | Output path for final model stats (JSON) |
| --device | auto | auto, cuda, mps, or cpu |
| --smoke-test | | Quick end-to-end test with synthetic data |

krunic - cloud launcher

krunic generates a SkyPilot YAML and launches the job. The dataset is S3-mounted (or copied); results are uploaded to S3 when the job completes.

Prerequisites

1. AWS credentials

aws configure

Prompts for your Access Key ID, Secret Access Key, and region (e.g. us-east-1). Your IAM user needs EC2 and S3 permissions. SkyPilot uses these credentials directly - no separate SkyPilot account or configuration needed.

2. Verify SkyPilot sees AWS

sky check

Should show AWS: enabled.

3. Dataset in S3

aws s3 sync ~/image_data/my-dataset s3://my-bucket/my-dataset

Monitor and tear down

krunic launches the cluster and streams logs. Once the job completes, download results and tear down:

sky status # check cluster state

sky logs my-cluster 1 # stream logs (job ID increments with each run)

aws s3 cp s3://my-bucket/ray-results/prefix/prefix_results.json .

yes | sky down my-cluster # terminate cluster

--workdir defaults to the installed package directory (contains tunic.py and requirements.txt). Override it only if you are developing from a local source checkout and want to test unpublished changes.

krunic --cluster NAME --workdir DIR --s3-path PATH --model MODEL [options]

| Flag | Default | Description |
|---|---|---|
| --cluster | required | SkyPilot cluster name |
| --workdir | package dir | Local directory synced to the cluster. Used for development |
| --s3-path | required | Dataset path within the S3 bucket |
| --model | required | Any timm model name |
| --accelerator | T4:4 | GPU spec (e.g. T4:4, A10G:1, A100:8) |
| --num-nodes | 1 | Number of cluster nodes |
| --n-trials | 30 | Number of Optuna trials |
| --n-epochs | 30 | Training epochs per trial |
| --batch-size | 32 | Batch size per trial |
| --training-fraction | 1.0 | Fraction of training data per trial |
| --tune-metric | val_auroc | Metric for trial selection and pruning |
| --bucket | image.data | S3 bucket name |
| --prefix | tunic | Prefix for output files and S3 paths |
| --spot | - | Use spot instances (with retry-until-up) |
| --copy | - | Copy data from S3 to local disk instead of mounting |
| --idle-minutes | 60 | Auto-stop cluster after N idle minutes |
| --no-autostop | - | Disable auto-stop |

Results are uploaded to s3://<bucket>/ray-results/<prefix>/<prefix>_results.json.
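
If you script the download step, the pattern above can be built from the same --bucket and --prefix values you passed to krunic. A small sketch follows; the function name `results_uri` is hypothetical and not part of the package, and the defaults are taken from the flag table.

```python
def results_uri(bucket: str = "image.data", prefix: str = "tunic") -> str:
    """Build the S3 URI of the results file following the documented pattern."""
    return f"s3://{bucket}/ray-results/{prefix}/{prefix}_results.json"


if __name__ == "__main__":
    print(results_uri())               # defaults from the flag table
    print(results_uri(prefix="kaws"))  # prefix used in the quick start
```

The printed URI can be passed directly to aws s3 cp.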

tunic-plotter - visualize results

tunic-plotter results.json # plots val_auroc and val_acc

tunic-plotter results.json --metric val_acc # single metric

tunic-plotter results.json --trial_sort # keep original trial order, show running best

Saves PNG files alongside the results JSON.

Dataset format

tunic auto-detects the dataset format:

- ImageFolder - standard split/class/image.ext layout

- WebDataset - sharded TAR files; detected when wds/dataset_info.json exists
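
The detection rule above can be sketched in a few lines. This is an illustration under assumptions, not tunic's actual code: the function name `detect_format` is hypothetical, the wds/ directory is assumed to sit directly under the dataset root, and anything without the marker file is treated as ImageFolder.

```python
import tempfile
from pathlib import Path


def detect_format(root: str) -> str:
    """Return "webdataset" if wds/dataset_info.json exists under root, else "imagefolder"."""
    if (Path(root) / "wds" / "dataset_info.json").is_file():
        return "webdataset"
    return "imagefolder"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # ImageFolder-style layout: split/class/image.ext
        (Path(tmp) / "train" / "cat").mkdir(parents=True)
        print(detect_format(tmp))

        # Adding the marker file switches detection to WebDataset.
        (Path(tmp) / "wds").mkdir()
        (Path(tmp) / "wds" / "dataset_info.json").write_text("{}")
        print(detect_format(tmp))
```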

Scaling

Concurrent trials = total GPUs: --num-nodes 4 --accelerator T4:4 --> 16 concurrent trials.

Optuna's TPE needs ~20 trials before it outperforms random search. 32–64 trials is a practical range for most problems.
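
The arithmetic behind "concurrent trials = total GPUs" is easy to check before launching a cluster. The sketch below parses an accelerator spec such as T4:4 and estimates how many rounds of trials a run needs. Both function names are hypothetical, a spec without a count is assumed to mean one GPU, and the round estimate assumes every trial runs to completion, so pruning will usually make real runs shorter.

```python
import math


def total_gpus(accelerator: str, num_nodes: int) -> int:
    """Parse a spec such as "T4:4" and multiply the per-node GPU count by the node count."""
    _, _, count = accelerator.partition(":")
    gpus_per_node = int(count) if count else 1  # assumption: a bare name means one GPU
    return gpus_per_node * num_nodes


def scheduling_rounds(n_trials: int, concurrent: int) -> int:
    """Rounds needed if every trial runs to completion (ignores pruning)."""
    return math.ceil(n_trials / concurrent)


if __name__ == "__main__":
    concurrent = total_gpus("T4:4", num_nodes=4)
    print(concurrent)                         # 16
    print(scheduling_rounds(48, concurrent))  # 3
```

With the quick-start settings (4 nodes of T4:4 and 48 trials), the search runs as roughly three rounds of 16 trials each.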

Output format

{
  "model": "resnet18",
  "best_val_auroc": 0.963,
  "best_val_acc": 0.891,
  "best_params": {
    "optimizer": "AdamW",
    "lr": 0.0028,
    "weight_decay": 3.6e-06,
    "label_smoothing": 0.058,
    "drop_rate": 0.183
  },
  "n_trials": 48,
  "completed_trials": 48,
  "epochs": 50,
  "all_trials": [...]
}
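
Because the results file is plain JSON, it is straightforward to post-process. The sketch below summarizes the headline fields and best hyperparameters. The `summarize` helper and the inline `SAMPLE` string are illustrative only; `SAMPLE` mirrors the fields shown above with an empty `all_trials` list, since the structure of its entries is not documented here. A real script would load the downloaded file with `json.load` instead.

```python
import json

# Inline sample mirroring the documented fields; all_trials is left empty.
SAMPLE = """
{
  "model": "resnet18",
  "best_val_auroc": 0.963,
  "best_val_acc": 0.891,
  "best_params": {"optimizer": "AdamW", "lr": 0.0028, "weight_decay": 3.6e-06,
                  "label_smoothing": 0.058, "drop_rate": 0.183},
  "n_trials": 48,
  "completed_trials": 48,
  "epochs": 50,
  "all_trials": []
}
"""


def summarize(results: dict) -> str:
    """Format the headline numbers and best hyperparameters from a results dict."""
    lines = [
        f"model: {results['model']}",
        f"best val_auroc: {results['best_val_auroc']:.3f}",
        f"trials completed: {results['completed_trials']}/{results['n_trials']}",
    ]
    for name, value in sorted(results["best_params"].items()):
        lines.append(f"  {name} = {value}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(summarize(json.loads(SAMPLE)))
```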
