An LLM serving engine extension to reduce TTFT and increase throughput, especially in long-context scenarios.


| Blog | Documentation | Join Slack | Interest Form | Roadmap

Summary

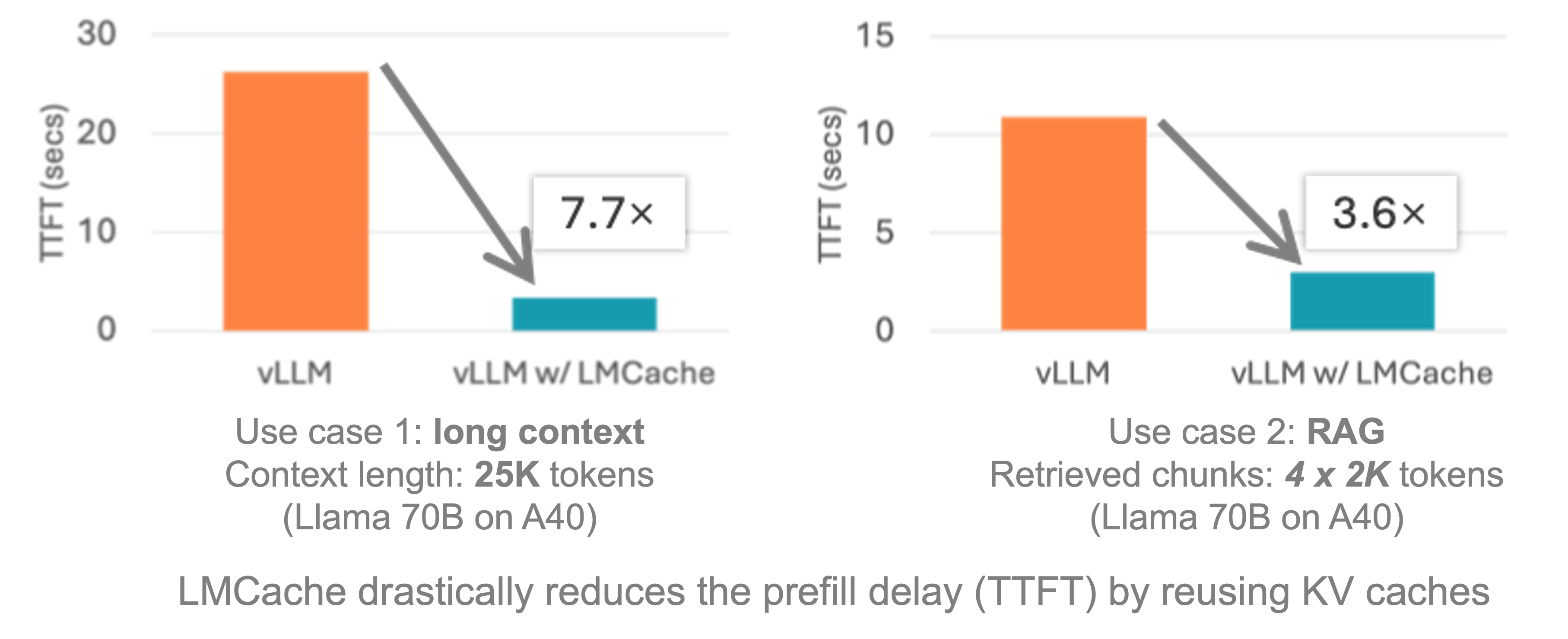

LMCache is an LLM serving engine extension that reduces TTFT and increases throughput, especially in long-context scenarios. It stores the KV caches of reusable text across the datacenter (GPU, CPU, disk, and even S3) and uses a range of acceleration techniques (zero CPU copy, NIXL, GDS, and more). LMCache then reuses the KV cache of any repeated text (not necessarily a prefix) in any serving engine instance. As a result, LMCache saves precious GPU cycles and reduces user response delay.
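To make the idea of tiered KV cache reuse concrete, here is a minimal, purely illustrative sketch. It is not LMCache's implementation: the `TieredKVStore` class, the `chunk_keys` helper, the chunk size, and the chained hashing scheme are all assumptions made for this example, and plain byte strings stand in for KV tensors. It only covers reuse of leading chunks; reusing non-prefix text needs extra machinery that this sketch does not attempt.

```python
import hashlib
from typing import Dict, List, Optional, Tuple

CHUNK_SIZE = 4  # hypothetical number of tokens per cached chunk


def chunk_keys(tokens: List[int], chunk_size: int = CHUNK_SIZE) -> List[str]:
    """Return one key per full chunk; each key covers the chunk and every token before it."""
    keys = []
    running = hashlib.sha256()
    for start in range(0, len(tokens) - chunk_size + 1, chunk_size):
        chunk = tokens[start:start + chunk_size]
        running.update(",".join(map(str, chunk)).encode() + b";")
        keys.append(running.hexdigest())
    return keys


class TieredKVStore:
    """Toy store whose tiers are searched from fastest to slowest."""

    def __init__(self, tier_names: List[str]) -> None:
        self.tiers: Dict[str, Dict[str, bytes]] = {name: {} for name in tier_names}

    def put(self, tier: str, key: str, kv_blob: bytes) -> None:
        self.tiers[tier][key] = kv_blob

    def get(self, key: str) -> Optional[Tuple[str, bytes]]:
        for name, store in self.tiers.items():
            if key in store:
                return name, store[key]
        return None

    def lookup_prefix(self, tokens: List[int]) -> List[Tuple[str, bytes]]:
        """Return cached chunks for the longest run of leading chunks that are all cached."""
        hits = []
        for key in chunk_keys(tokens):
            found = self.get(key)
            if found is None:
                break
            hits.append(found)
        return hits


store = TieredKVStore(["gpu", "cpu", "disk"])
doc = [1, 2, 3, 4, 5, 6, 7, 8]
k0, k1 = chunk_keys(doc)
store.put("cpu", k0, b"kv-chunk-0")
store.put("disk", k1, b"kv-chunk-1")

# A new request shares the first 8 tokens and then diverges.
hits = store.lookup_prefix(doc + [9, 10, 11, 12])
reused_tokens = len(hits) * CHUNK_SIZE
print([tier for tier, _ in hits], reused_tokens)
```

In this toy setup, the tokens covered by cache hits would not need to be prefilled again; only the remaining tokens would go through the model.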

By combining LMCache with vLLM, developers achieve 3-10x delay savings and GPU cycle reduction in many LLM use cases, including multi-round QA and RAG.

LMCache is used, integrated, or referenced across a growing ecosystem of LLM serving platforms, infrastructure providers, and open-source projects:

- Initiated and officially supported by: Tensormesh

- Adopted by inference providers: GMI Cloud (blog post), Google Cloud (blog post), CoreWeave (blog post) and more

- Integrated with data and storage infrastructure providers: Redis (blog post), Weka (blog post), PliOps (blog post) and more

- Used by open-source projects and platforms: vLLM, SGLang, vLLM Production Stack, llm-d, NVIDIA Dynamo, KServe and more.

For more details, please check our Ray Summit talk and technical report.

Features

- 🔥 Integration with vLLM v1 with the following features:
  - High performance CPU KVCache offloading
  - Disaggregated prefill
  - P2P KVCache sharing
- Integration with SGLang for KV cache offloading
- Storage support as follows:
  - CPU
  - Disk
  - NIXL
- Installation support through pip and latest vLLM
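As a rough illustration of the vLLM v1 integration with CPU offloading, the sketch below assembles a `vllm serve` command line using only the standard library. The connector name `LMCacheConnectorV1`, the `kv_both` role, and the `LMCACHE_*` environment variables are assumptions based on commonly shown quickstart settings; they may differ between releases, so check them against the documentation for your installed versions. The model name is a placeholder, and the numeric values are illustrative only.

```python
import json
import shlex

# Assumed connector settings; confirm them in the LMCache and vLLM docs.
kv_transfer_config = {
    "kv_connector": "LMCacheConnectorV1",
    "kv_role": "kv_both",
}

# Assumed LMCache environment settings for CPU offloading.
env = {
    "LMCACHE_LOCAL_CPU": "True",
    "LMCACHE_MAX_LOCAL_CPU_SIZE": "5",  # assumed to be in GB; illustrative value
    "LMCACHE_CHUNK_SIZE": "256",  # tokens per chunk; illustrative value
}

model = "your-org/your-model"  # placeholder, not a real model id

command = [
    "vllm",
    "serve",
    model,
    "--kv-transfer-config",
    json.dumps(kv_transfer_config),
]

# Render a shell-safe line that can be reviewed before running it.
env_prefix = " ".join(f"{key}={shlex.quote(value)}" for key, value in env.items())
print(env_prefix, shlex.join(command))
```

Building the command as a list and quoting it with `shlex` keeps the JSON argument intact when the line is pasted into a shell.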

Installation

To use LMCache, simply install lmcache from your package manager, e.g. pip:

pip install lmcache

LMCache works on Linux with NVIDIA GPUs.

More detailed installation instructions are available in the docs, particularly if you are not using the latest stable version of vLLM or are using another serving engine with different dependencies. The documentation also explains how to resolve "undefined symbol" errors and torch version mismatches.
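When chasing a version mismatch, it can help to first see which versions are actually installed. The following is a small sketch that uses only the Python standard library; the `installed_version` helper is a name introduced here for illustration, and the script makes no claim about which version combinations are compatible.

```python
import platform
from importlib.metadata import PackageNotFoundError, version


def installed_version(package: str) -> str:
    """Return the installed version of a distribution, or a marker if it is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return "not installed"


# Collect the versions most relevant to an LMCache setup.
report = {name: installed_version(name) for name in ("lmcache", "vllm", "torch")}
report["platform"] = platform.system()

for name, value in report.items():
    print(f"{name}: {value}")
```

Including this output when asking for help makes it easier for others to spot a mismatch.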

Getting started

The best way to get started is to check out the Quickstart Examples in the docs.

Documentation

Check out the LMCache documentation which is available online.

We also post regularly on the LMCache blog.

Examples

Go hands-on with our examples, demonstrating how to address different use cases with LMCache.

Interested in Connecting?

Fill out the interest form, sign up for our newsletter, join the LMCache Slack, or send us an email, and our team will reach out to you!

Community meeting

The LMCache community meeting is held bi-weekly on Zoom (Zoom Link). All are welcome to join!

Meetings are held bi-weekly on: Tuesdays at 9:00 AM PT – Add to Google Calendar

We keep notes from each meeting in this document, with summaries of standups, discussions, and action items.

Recordings of meetings are available on the YouTube LMCache channel.

Contributing

We welcome and value all contributions and collaborations. Please check out the Contributing Guide to learn how to contribute.

We continually update "[Onboarding] Welcoming contributors with good first issues!"

Citation

If you use LMCache for your research, please cite our papers:

@inproceedings{liu2024cachegen,
  title={Cachegen: Kv cache compression and streaming for fast large language model serving},
  author={Liu, Yuhan and Li, Hanchen and Cheng, Yihua and Ray, Siddhant and Huang, Yuyang and Zhang, Qizheng and Du, Kuntai and Yao, Jiayi and Lu, Shan and Ananthanarayanan, Ganesh and others},
  booktitle={Proceedings of the ACM SIGCOMM 2024 Conference},
  pages={38--56},
  year={2024}
}

@article{cheng2024large,
  title={Do Large Language Models Need a Content Delivery Network?},
  author={Cheng, Yihua and Du, Kuntai and Yao, Jiayi and Jiang, Junchen},
  journal={arXiv preprint arXiv:2409.13761},
  year={2024}
}

@inproceedings{10.1145/3689031.3696098,
  author = {Yao, Jiayi and Li, Hanchen and Liu, Yuhan and Ray, Siddhant and Cheng, Yihua and Zhang, Qizheng and Du, Kuntai and Lu, Shan and Jiang, Junchen},
  title = {CacheBlend: Fast Large Language Model Serving for RAG with Cached Knowledge Fusion},
  year = {2025},
  url = {https://doi.org/10.1145/3689031.3696098},
  doi = {10.1145/3689031.3696098},
  booktitle = {Proceedings of the Twentieth European Conference on Computer Systems},
  pages = {94--109},
}

@article{cheng2025lmcache,
  title={LMCache: An Efficient KV Cache Layer for Enterprise-Scale LLM Inference},
  author={Cheng, Yihua and Liu, Yuhan and Yao, Jiayi and An, Yuwei and Chen, Xiaokun and Feng, Shaoting and Huang, Yuyang and Shen, Samuel and Du, Kuntai and Jiang, Junchen},
  journal={arXiv preprint arXiv:2510.09665},
  year={2025}
}


License

The LMCache codebase is licensed under Apache License 2.0. See the LICENSE file for details.


Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distributions


File details

Details for the file lmcache-0.4.1.tar.gz.

File metadata

- Download URL: lmcache-0.4.1.tar.gz

- Upload date:

- Size: 2.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | baaa503095d1d37b841930b824ba3c7787b7348c467d799c665eb08849099f36 |
| MD5 | e044bff3e89d3a52286de7874ad8c68d |
| BLAKE2b-256 | 2229e5217174baf209f958343319817952fc9bfd844eabc33419872f34a07a12 |
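A published digest is only useful if it is compared against the file that was actually downloaded. The sketch below shows one way to do that with Python's standard `hashlib`; the `sha256_of` and `verify` helper names and the local file path are assumptions made for this example, while the expected digest is the SHA256 value listed for lmcache-0.4.1.tar.gz.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, block_size: int = 1 << 20) -> str:
    """Hash a file in blocks so large archives are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for block in iter(lambda: handle.read(block_size), b""):
            digest.update(block)
    return digest.hexdigest()


def verify(path: Path, expected_hex: str) -> bool:
    """Compare the file's SHA256 digest with the published hex digest."""
    return sha256_of(path) == expected_hex.strip().lower()


EXPECTED = "baaa503095d1d37b841930b824ba3c7787b7348c467d799c665eb08849099f36"
sdist = Path("lmcache-0.4.1.tar.gz")  # assumed local download location

if sdist.exists():
    print("match" if verify(sdist, EXPECTED) else "MISMATCH")
else:
    print(f"{sdist} not found; download it first")
```

The same helpers work for the wheel files if the expected digest and the path are swapped for the matching values.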

Provenance

The following attestation bundles were made for lmcache-0.4.1.tar.gz:

Publisher: publish.yml on LMCache/LMCache

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: lmcache-0.4.1.tar.gz
  - Subject digest: baaa503095d1d37b841930b824ba3c7787b7348c467d799c665eb08849099f36
  - Sigstore transparency entry: 1092167896
  - Sigstore integration time:
- Permalink: LMCache/LMCache@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Branch / Tag: refs/tags/v0.4.1
- Owner: https://github.com/LMCache
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Trigger Event: release

File details

Details for the file lmcache-0.4.1-cp313-cp313-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: lmcache-0.4.1-cp313-cp313-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 8.0 MB

- Tags: CPython 3.13, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7
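The tags listed above are encoded directly in the wheel file name, which follows the pattern `name-version[-build]-python-abi-platform.whl`, with `.` separating multiple tags in one field. The sketch below splits a file name into those fields; `parse_wheel_filename` is a helper written for this example and not part of any packaging library.

```python
from typing import Dict, List, Union

WheelInfo = Dict[str, Union[str, List[str]]]


def parse_wheel_filename(filename: str) -> WheelInfo:
    """Split a wheel file name into its name, version, and tag fields."""
    suffix = ".whl"
    if not filename.endswith(suffix):
        raise ValueError(f"not a wheel file name: {filename}")
    parts = filename[: -len(suffix)].split("-")
    if len(parts) == 5:
        name, ver, py_tag, abi_tag, plat_tag = parts
    elif len(parts) == 6:
        # The optional build tag sits between the version and the Python tag.
        name, ver, _build, py_tag, abi_tag, plat_tag = parts
    else:
        raise ValueError(f"unexpected number of fields in: {filename}")
    return {
        "name": name,
        "version": ver,
        "python": py_tag.split("."),
        "abi": abi_tag.split("."),
        "platforms": plat_tag.split("."),
    }


info = parse_wheel_filename(
    "lmcache-0.4.1-cp313-cp313-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl"
)
for field, value in info.items():
    print(f"{field}: {value}")
```

For this file, the two platform entries correspond to the glibc 2.27+ and glibc 2.28+ x86-64 tags shown in the metadata.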

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 98fcb1dfb42e54f8e5947b67bfc6d3887edf9fdfc3de625f7b34ae5b81d17ffd |
| MD5 | dcc671298a69627ea2c6d294675a2db8 |
| BLAKE2b-256 | e931aeb6750827de0a9a92f6c3756051a7aeb831531afcdeae2d06a7998a2477 |

Provenance

The following attestation bundles were made for lmcache-0.4.1-cp313-cp313-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl:

Publisher: publish.yml on LMCache/LMCache

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: lmcache-0.4.1-cp313-cp313-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl
  - Subject digest: 98fcb1dfb42e54f8e5947b67bfc6d3887edf9fdfc3de625f7b34ae5b81d17ffd
  - Sigstore transparency entry: 1092167900
  - Sigstore integration time:
- Permalink: LMCache/LMCache@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Branch / Tag: refs/tags/v0.4.1
- Owner: https://github.com/LMCache
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Trigger Event: release

File details

Details for the file lmcache-0.4.1-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: lmcache-0.4.1-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 8.0 MB

- Tags: CPython 3.12, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | adb9a61da0724af0b1558b09aa3b477e291a5cdaf1f5ccd611ab8d8a65a771d2 |
| MD5 | 566b041c13cd5d56724b8817af431cc8 |
| BLAKE2b-256 | 3f0455a9beb5919672ba9866f39731dde0cece69929beb23a3b9c3348e99963b |

Provenance

The following attestation bundles were made for lmcache-0.4.1-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl:

Publisher: publish.yml on LMCache/LMCache

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: lmcache-0.4.1-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl
  - Subject digest: adb9a61da0724af0b1558b09aa3b477e291a5cdaf1f5ccd611ab8d8a65a771d2
  - Sigstore transparency entry: 1092167910
  - Sigstore integration time:
- Permalink: LMCache/LMCache@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Branch / Tag: refs/tags/v0.4.1
- Owner: https://github.com/LMCache
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Trigger Event: release

File details

Details for the file lmcache-0.4.1-cp311-cp311-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: lmcache-0.4.1-cp311-cp311-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 8.0 MB

- Tags: CPython 3.11, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d84f22ee9bbc2526ed21d13e5be232a167c722b4bbea2ed318e5e998e321bc77 |
| MD5 | 8f05cfc26e1bdaf57cd72313672cb419 |
| BLAKE2b-256 | f6584784a027ce27833a5ef0fa88eb822daccc836a6df1ea44daa94ae4dfe523 |

Provenance

The following attestation bundles were made for lmcache-0.4.1-cp311-cp311-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl:

Publisher: publish.yml on LMCache/LMCache

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: lmcache-0.4.1-cp311-cp311-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl
  - Subject digest: d84f22ee9bbc2526ed21d13e5be232a167c722b4bbea2ed318e5e998e321bc77
  - Sigstore transparency entry: 1092167905
  - Sigstore integration time:
- Permalink: LMCache/LMCache@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Branch / Tag: refs/tags/v0.4.1
- Owner: https://github.com/LMCache
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Trigger Event: release

File details

Details for the file lmcache-0.4.1-cp310-cp310-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: lmcache-0.4.1-cp310-cp310-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 7.9 MB

- Tags: CPython 3.10, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 85984fdddfc13f4a5efed092d7c3af80460153164f57ea185b608a85e911c19f |
| MD5 | d82e2eb962acc4135429c6b49d1db29d |
| BLAKE2b-256 | 993ee4aac1c1774c0571d549edbd49581ef413c5425a54d8855c3e69538ef999 |

Provenance

The following attestation bundles were made for lmcache-0.4.1-cp310-cp310-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl:

Publisher: publish.yml on LMCache/LMCache

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: lmcache-0.4.1-cp310-cp310-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl
  - Subject digest: 85984fdddfc13f4a5efed092d7c3af80460153164f57ea185b608a85e911c19f
  - Sigstore transparency entry: 1092167917
  - Sigstore integration time:
- Permalink: LMCache/LMCache@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Branch / Tag: refs/tags/v0.4.1
- Owner: https://github.com/LMCache
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@dfc914cba5e16fa814221a96e0de6709d4152dc4
- Trigger Event: release