Interactive CLI tool for multi-format log analysis, filtering and export.


Logan-iq: Multi-Log Analysis Tool

An interactive command-line tool for parsing, filtering, summarizing, and exporting log files. Supports multiple log formats with flexible regex patterns and fully customizable user preferences.

⚠️ Installation Notice

Version 1.0.1 contains unintended scripts and should not be used.

Please install v1.0.2 or later for proper functionality and stability.

Features

- Parse logs using default or custom regex patterns

- Interactive, user-friendly command-line interface

- Filter logs by level, date range, keyword or result limit

- Generate summary tables with counts per level or per day

- Export logs in CSV or JSON formats

- Clean, colorful, and easy-to-read terminal output

Install

The package is available on PyPI:

pip install logan-iq

logan-iq interactive

The first command installs the package and makes the logan-iq command available in your terminal. The second starts interactive mode.

🖥️ Startup

How it Works

Core flow:

- Load Config or Defaults:

Loads user preferences from the config file (.logan-iq_config.json) if it exists. CLI arguments always override config values. If no file is provided and no config exists, the app prompts the user to specify a file.

- Parse Log File:

Each log line is converted into a structured dictionary with fields such as datetime, level, message, and, depending on the format, ip or other fields.

- Filter (Optional):

Narrow results by log level, date range, or keyword search, or limit the number of entries displayed.

NOTE: For JSON logs, keyword search covers the message, method, path, and status/status_code fields, if present.

- Analyze or Summarize:

Display logs in a terminal table or generate summary reports.

- Export (Optional):

Export filtered data to CSV or JSON for further analysis.
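The parse, filter, and summarize steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not logan-iq's actual code: SIMPLE_RE, parse_lines, filter_entries, and summarize are hypothetical names, timestamps are assumed to start with an ISO YYYY-MM-DD date (as in the simple and json examples), and the case-insensitive keyword match is an assumption.

```python
import re
from collections import Counter

# Hypothetical regex for the "simple" format (datetime, level, message);
# logan-iq's real built-in pattern may differ.
SIMPLE_RE = re.compile(
    r"^(?P<datetime>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) "
    r"\[(?P<level>\w+)\] (?P<message>.*)$"
)

# Fields covered by keyword search, per the NOTE above (JSON logs may carry the extra ones).
SEARCH_FIELDS = ("message", "method", "path", "status", "status_code")


def parse_lines(lines, pattern=SIMPLE_RE):
    """Parse step: turn each matching line into a dict of its named groups."""
    entries = []
    for line in lines:
        match = pattern.match(line.rstrip("\n"))
        if match:  # non-matching lines are skipped in this sketch
            entries.append(match.groupdict())
    return entries


def filter_entries(entries, level=None, start=None, end=None, search=None, limit=None):
    """Filter step: level, inclusive YYYY-MM-DD date range, keyword, then limit."""
    result = []
    for entry in entries:
        day = entry["datetime"][:10]  # ISO dates compare correctly as strings
        if level and entry["level"].upper() != level.upper():
            continue
        if start and day < start:
            continue
        if end and day > end:
            continue
        if search and not any(
            search.lower() in str(entry[field]).lower()
            for field in SEARCH_FIELDS
            if field in entry
        ):
            continue
        result.append(entry)
    return result if limit is None else result[:limit]


def summarize(entries, by="level"):
    """Summarize step: count entries per level, or per day with by="day"."""
    if by == "day":
        return Counter(entry["datetime"][:10] for entry in entries)
    return Counter(entry["level"] for entry in entries)


sample = [
    "2025-11-01 09:00:00 [INFO] Server started: Listening on port 8080",
    "2025-11-02 10:15:00 [ERROR] 500 Server Error on /api/v1/users",
    "2025-11-09 08:00:00 [ERROR] 500 Server Error on /health",
]
entries = parse_lines(sample)
errors = filter_entries(entries, level="ERROR", start="2025-11-01",
                        end="2025-11-08", search="500 Server Error")
print(errors)  # only the 2025-11-02 entry passes both the level and date filters
print(summarize(entries))
```

The filters are applied in sequence and limit is applied last, so a limit counts only entries that already passed the other filters.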

Available Formats

You can supply your own regex directly via the CLI (--regex) or through the custom_regex key in the saved config.

Or use one of the built-in formats:

- simple → generic logs with datetime, level and message

2025-08-28 12:34:56 [INFO] Server started: Listening on port 8080

- apache → Apache access logs (common format)

192.200.2.2 - - [28/Aug/2025:12:34:56 +0000] "GET /index.html HTTP/1.1" 200 512

- nginx → Nginx access logs (combined format, includes referrer & user-agent)

192.100.1.1 - - [28/Aug/2025:12:34:56 +0000] "GET /index.html HTTP/1.1" 200 1024 "http://example.com" "Mozilla/5.0"

- json → JSON-formatted logs (one object per line)

{"datetime": "2025-08-28 12:34:56", "level": "INFO", "message": "Server started", "method": "GET", "status": 200, "path": "/api/v1/users"}

- custom → any user-defined regex. Example (inline via CLI):

logan-iq analyze --file logs/app.log --format custom --regex "^(?P<ts>\S+) (?P<level>\w+) (?P<msg>.*)$"

Or define it in the saved config:

{

"default_file": "logs/custom_app.log",

"format": "custom",

"custom_regex": "^(?P<ts>\\S+) (?P<level>\\w+) (?P<msg>.*)$"

}
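Each format ultimately maps a line to a dictionary: regex formats through named groups such as (?P<ts>...), and the json format by decoding one object per line. The sketch below illustrates that idea. APACHE_RE, PATTERNS, and parse_line are hypothetical and the Apache pattern is an assumption, not logan-iq's built-in regex; only the custom regex is taken from the example above.

```python
import json
import re

# Hypothetical pattern for the Apache common format; logan-iq's built-in regex may differ.
APACHE_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<datetime>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)$'
)
PATTERNS = {"apache": APACHE_RE}


def parse_line(line, fmt, custom_regex=None):
    """Return one log line as a dict, or None when the regex does not match."""
    line = line.rstrip("\n")
    if fmt == "json":
        return json.loads(line)  # one JSON object per line
    pattern = re.compile(custom_regex) if fmt == "custom" else PATTERNS[fmt]
    match = pattern.match(line)
    # Named groups such as (?P<ts>...) become the dictionary keys.
    return match.groupdict() if match else None


print(parse_line(
    '192.200.2.2 - - [28/Aug/2025:12:34:56 +0000] "GET /index.html HTTP/1.1" 200 512',
    "apache",
))
print(parse_line(
    "2025-08-28T12:34:56 INFO Server started",
    "custom",
    custom_regex=r"^(?P<ts>\S+) (?P<level>\w+) (?P<msg>.*)$",
))
```

Note that regex groups always yield strings (status "200"), while decoded JSON keeps native types (status 200), so downstream code in this sketch would need to compare them accordingly.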

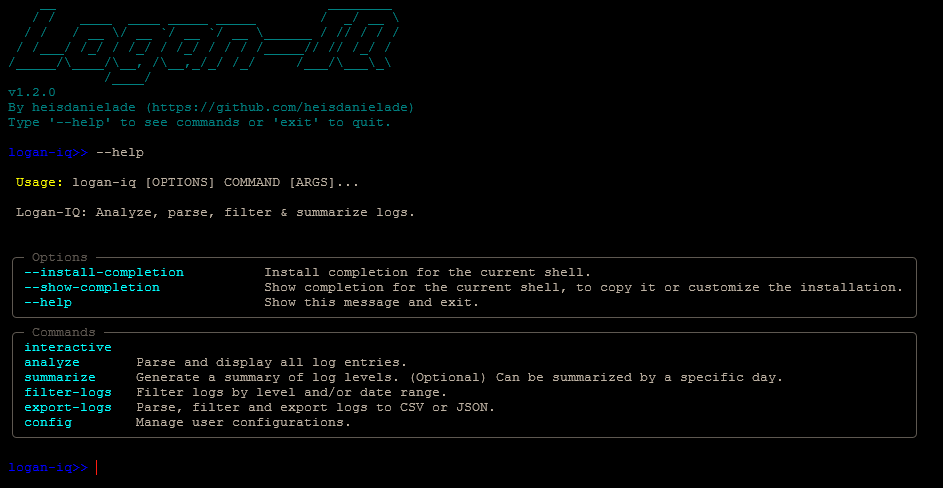

Running the CLI

Once installed, commands can be run directly via logan-iq:

- Help

logan-iq --help # General help

# Command-specific help

logan-iq analyze --help

logan-iq summarize --help

logan-iq filter-logs --help

logan-iq export-logs --help

logan-iq config --help

logan-iq config set --help

logan-iq config show --help

logan-iq config delete --help

- Interactive Mode

logan-iq interactive

logan-iq>> analyze --file app.log

logan-iq>> summarize --file app.log --format simple

logan-iq>> filter-logs --search "500 Server Error"

logan-iq>> filter-logs --level ERROR --start 2025-11-01 --end 2025-11-08

logan-iq>> export-logs csv --file app.log --output exported_logs/output.csv

logan-iq>> export-logs json --file app.log --output exported_logs/output.json

logan-iq>> export-logs json --search "404 Client Error" --file app.log --output exported_logs/output.json

logan-iq>> config set --default-file app.log --format simple

logan-iq>> config show

logan-iq>> config delete --key default_file

logan-iq>> exit

- Analyze Logs

logan-iq analyze --file path/to/logfile.log --format apache

- Custom Regex

logan-iq analyze --file app.log --format custom --regex "^(?P<ts>\\S+) (?P<msg>.*)$"

- Summarize Log Levels

logan-iq summarize --file path/to/logfile.log

- Filter Logs

logan-iq filter-logs --file app.log --level ERROR --search "500 Server Error" --start 2025-11-01 --end 2025-11-08

- Export Logs

logan-iq export-logs csv --file path/to/logfile.log --output exported_logs/output.csv

- Shorthand flags

You can also use shorthand flags like:

-f # --file

-df # --default-file

-r # --regex

-cr # --custom-regex (for configs)

-l # --level

-s # --search

-lm # --limit

-st # --start

-e # --end

-d # --day

-o # --output

-k # --key
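The export-logs command writes entries to CSV or JSON at the --output path. A minimal standard-library sketch of that step follows; export_entries is a hypothetical helper, and building the CSV header from the union of all keys is an assumption meant to accommodate JSON logs whose optional fields vary per line.

```python
import csv
import json
from pathlib import Path


def export_entries(entries, fmt, output):
    """Write parsed log entries (a list of dicts) to CSV or JSON at the given path."""
    path = Path(output)
    path.parent.mkdir(parents=True, exist_ok=True)  # e.g. create exported_logs/
    if fmt == "json":
        path.write_text(json.dumps(entries, indent=2), encoding="utf-8")
    elif fmt == "csv":
        # Union of keys in first-seen order; missing values are written as "".
        fieldnames = list(dict.fromkeys(key for entry in entries for key in entry))
        with path.open("w", newline="", encoding="utf-8") as handle:
            writer = csv.DictWriter(handle, fieldnames=fieldnames)
            writer.writeheader()
            writer.writerows(entries)
    else:
        raise ValueError(f"unsupported export format: {fmt!r}")
    return path
```

For example, export_entries(rows, "csv", "exported_logs/output.csv") mirrors the CSV export shown in the interactive examples.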

Configuration

On installation, a .logan-iq_config.json file is created in the root directory of the user's system.

- To show the current configuration:

logan-iq interactive

logan-iq>> config show

- To modify the configuration:

logan-iq>> config set --default-file logs/access.log --format nginx

logan-iq>> config set --format custom --custom-regex "^(?P<ts>\\S+) (?P<msg>.*)$"

Example with built-in format:

{

"default_file": "logs/server_logs.log",

"format": "nginx"

}

Example with custom format:

{

"default_file": "logs/app.log",

"format": "custom",

"custom_regex": "^(?P<ts>\\S+) (?P<msg>.*)$"

}

- CLI args always override config values.

- If neither CLI args nor a config exist, the app prompts for a file.
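The precedence rules above (CLI args first, then config values, then a prompt) can be sketched as a small merge. load_config and resolve_settings are hypothetical names. The config path is passed in explicitly rather than guessed, and mapping default_file onto the --file setting is an assumption based on the config examples.

```python
import json
from pathlib import Path


def load_config(path):
    """Return saved preferences from a JSON config file, or {} if it does not exist."""
    path = Path(path)
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


def resolve_settings(cli_args, config, prompt=input):
    """Apply the precedence rules: CLI args > config values > interactive prompt."""
    # The config stores the log path under "default_file"; the CLI flag is --file.
    settings = {
        "file": config.get("default_file"),
        "format": config.get("format"),
        "custom_regex": config.get("custom_regex"),
    }
    # Any CLI value that was actually supplied (not None) wins over the config.
    settings.update({key: value for key, value in cli_args.items() if value is not None})
    if not settings["file"]:
        settings["file"] = prompt("Path to log file: ")
    return settings


config = {"default_file": "logs/access.log", "format": "nginx"}
print(resolve_settings({"file": "app.log", "format": None}, config))
```

Passing prompt as a parameter keeps the fallback testable without a terminal.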

Deleting configuration entries

You can delete configuration entries using the config delete command.

- Delete a single key:

logan-iq config delete --key default_file

- Delete all keys (requires confirmation):

logan-iq config delete --all

If you run the command without either --key or --all, you'll receive a message explaining the available options.
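The delete semantics (remove one key, or clear everything only after confirmation) can be sketched against a JSON file. delete_config and its parameters are hypothetical stand-ins for the --key and --all options. Treating a missing key as a no-op and raising an error when neither option is given are assumptions.

```python
import json
from pathlib import Path


def delete_config(path, key=None, delete_all=False, confirm=input):
    """Remove one key, or every key after confirmation, from a JSON config file."""
    path = Path(path)
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    if delete_all:
        if confirm("Delete all configuration entries? [y/N] ").strip().lower() != "y":
            return config  # user declined; the file is left untouched
        config = {}
    elif key is not None:
        config.pop(key, None)  # deleting a key that is not set is a no-op here
    else:
        raise ValueError("pass key=... (like --key) or delete_all=True (like --all)")
    path.write_text(json.dumps(config, indent=2), encoding="utf-8")
    return config
```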

Dependencies

- CLI built with Typer

- Pretty tables via Tabulate

- Colored output via PyFiglet

- Unit testing via Pytest

Contributing

Contributions are welcome!

NOTE: Codebase documentation (docstrings, comments, files, etc.) must be in English.

- Fork the repo and clone it locally.

- Create a virtual environment and install dependencies: pip install -e .

- Run tests: pytest

- Create a branch from main: git checkout -b feat/name or git checkout -b fix/name

- Make changes, write tests, and follow PEP8.

- Commit with clear messages and push your branch.

- Open a Pull Request for review.

- Report bugs via Issues with clear steps and logs.

- By contributing, you agree to the repo’s license and Code of Conduct.

- Be creative!


Download files


Source Distribution

Built Distribution


File details

Details for the file logan_iq-1.2.3.tar.gz.

File metadata

- Download URL: logan_iq-1.2.3.tar.gz

- Upload date:

- Size: 20.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b629ea6b28708d2bf65b7a844a758bbf88068ddb19b34e28a55daec03b247dec |
| MD5 | 291d0e5d3b92c3baceffe390dd1db999 |
| BLAKE2b-256 | 00c4b203b55b53a7187756fc8bbfa0342db7cbe79b1fcf896f1c2403e9b75df6 |

File details

Details for the file logan_iq-1.2.3-py3-none-any.whl.

File metadata

- Download URL: logan_iq-1.2.3-py3-none-any.whl

- Upload date:

- Size: 22.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0a0eeb05ac342633b7fcca038ff7cae324c55b2fee3577dfde77629bff0dc6ff |
| MD5 | 00cf9f8dac2e0101f22d40d799359b80 |
| BLAKE2b-256 | a9e883eccc5542de7dae16f1fefe9e10d0466a65e046294312e319ec0be96374 |