A CLI tool for automatically translating manga pages from Japanese to English. Detects speech bubbles, extracts Japanese text using OCR, translates to English, and renders the translated text back onto images with proper alignment.


Manga Translation CLI

Fully automated and offline manga translation pipeline. Intelligently detects speech bubbles, extracts Japanese text with OCR, translates to English, and seamlessly renders translated text back onto pages with proper alignment and customizable fonts. Supports both single images and batch folder processing with GPU acceleration.

Showcase

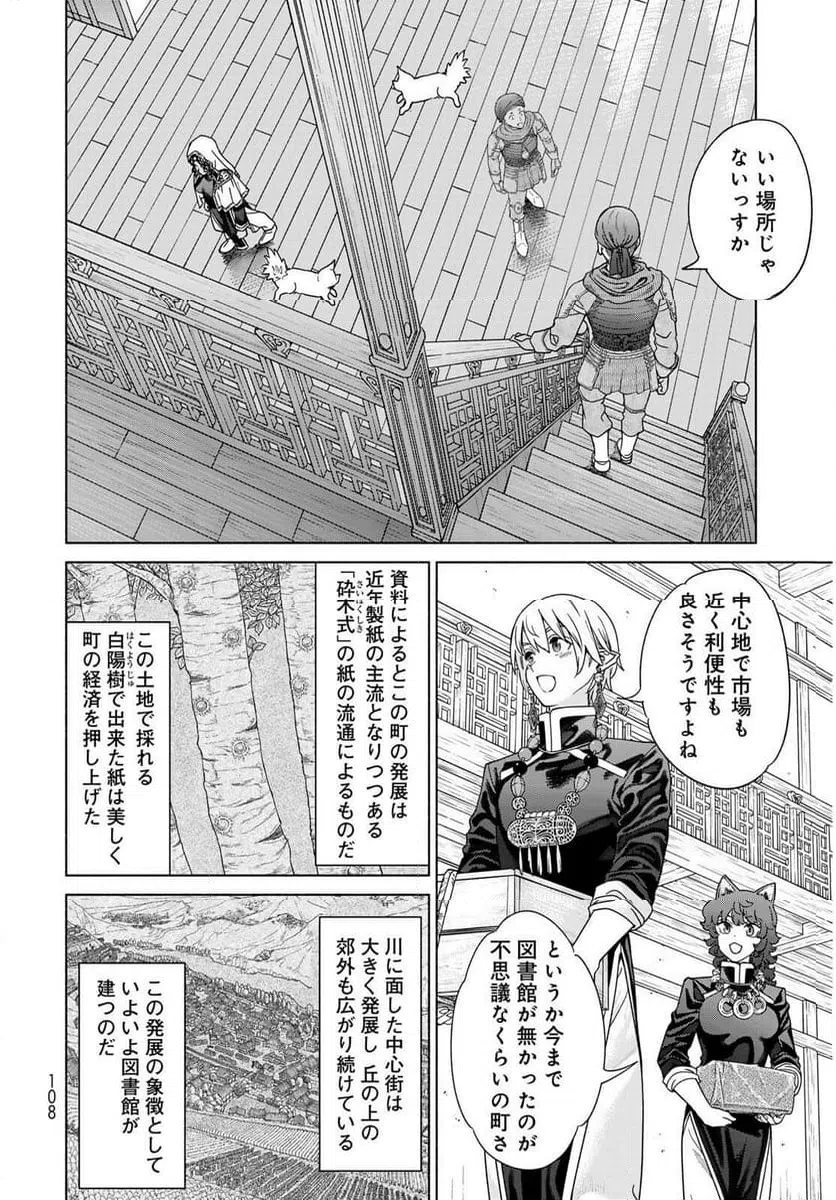

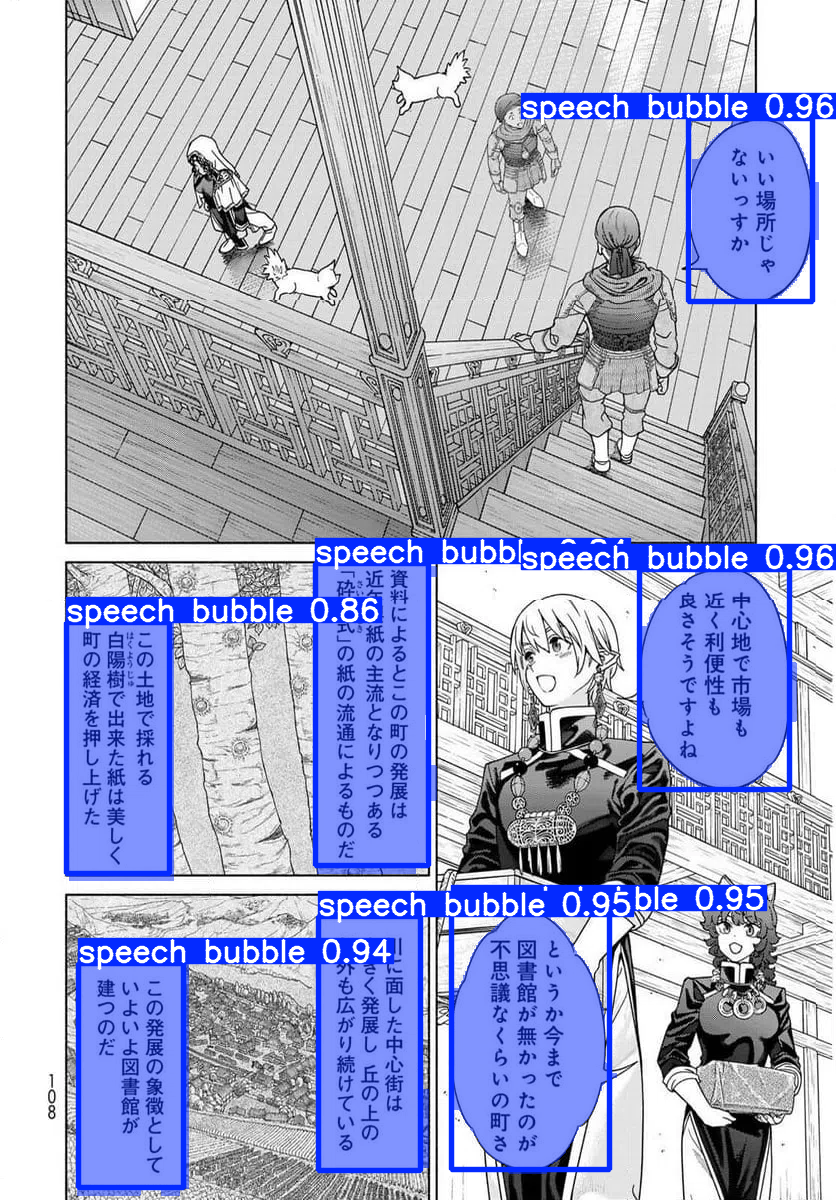

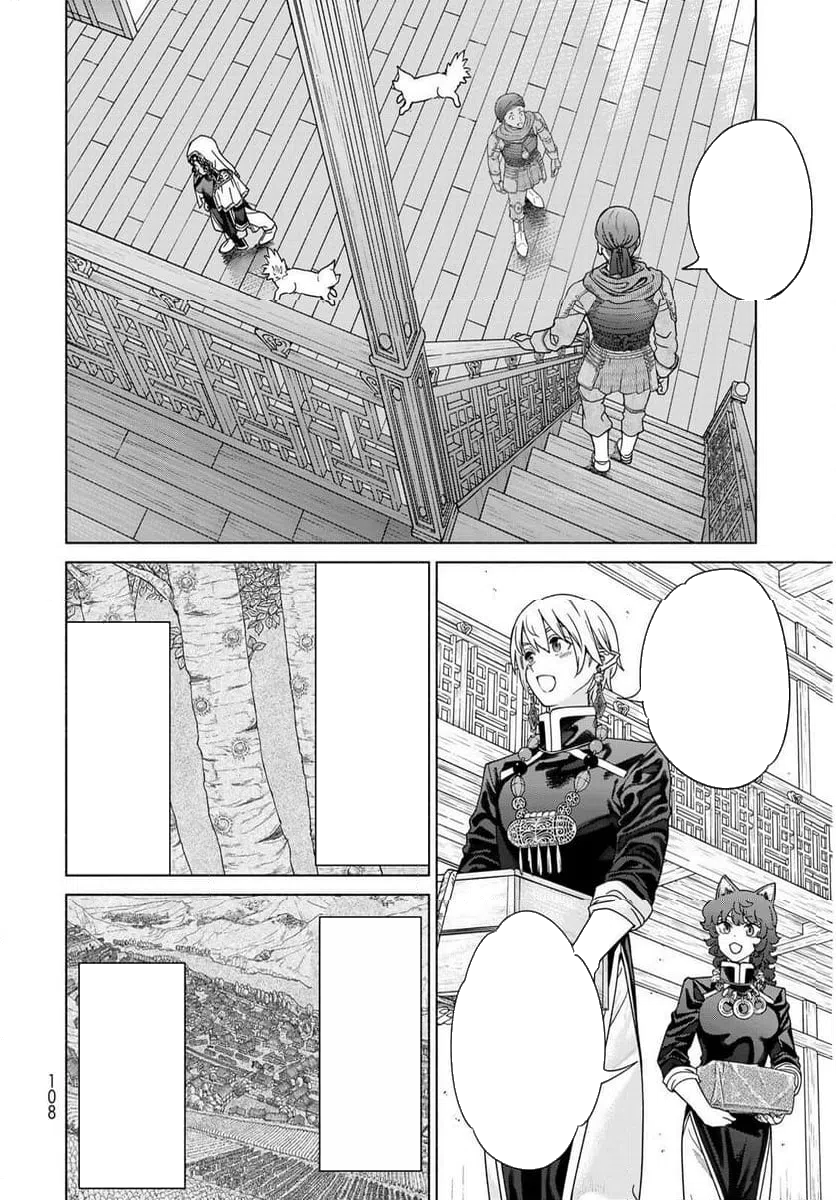

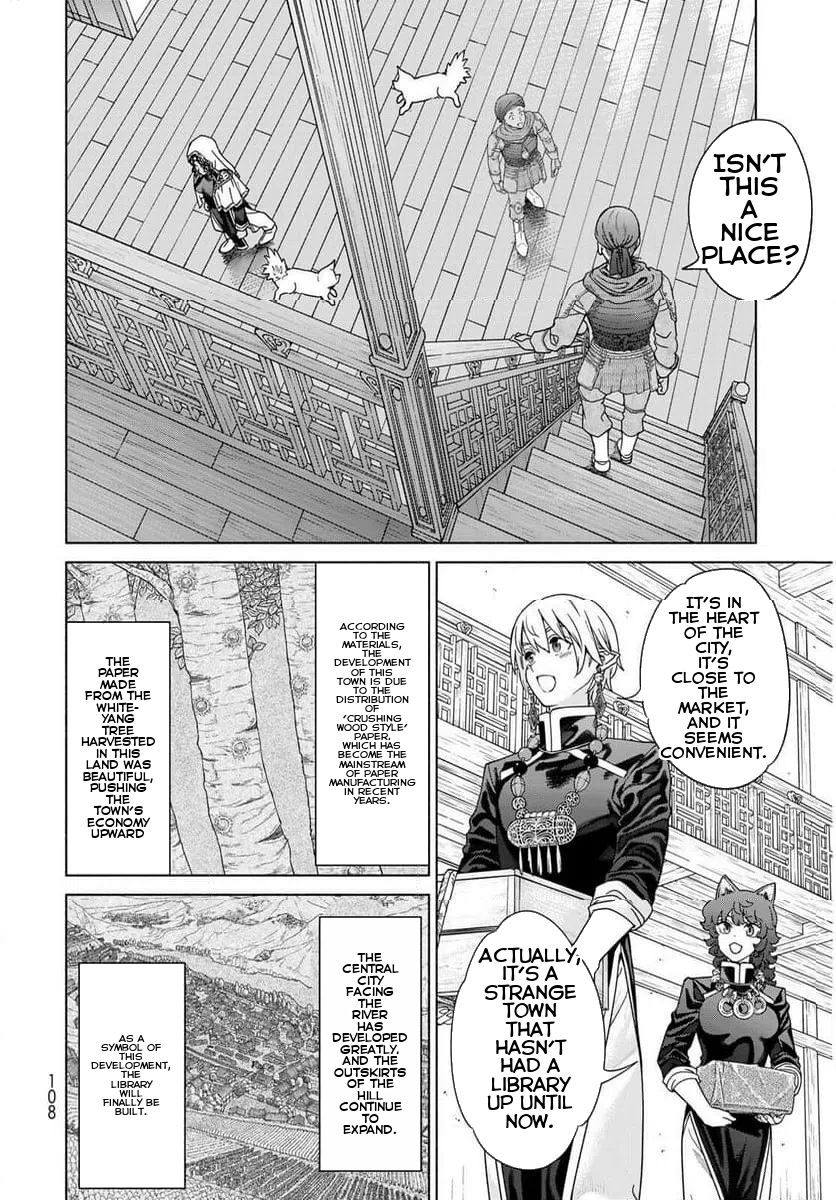

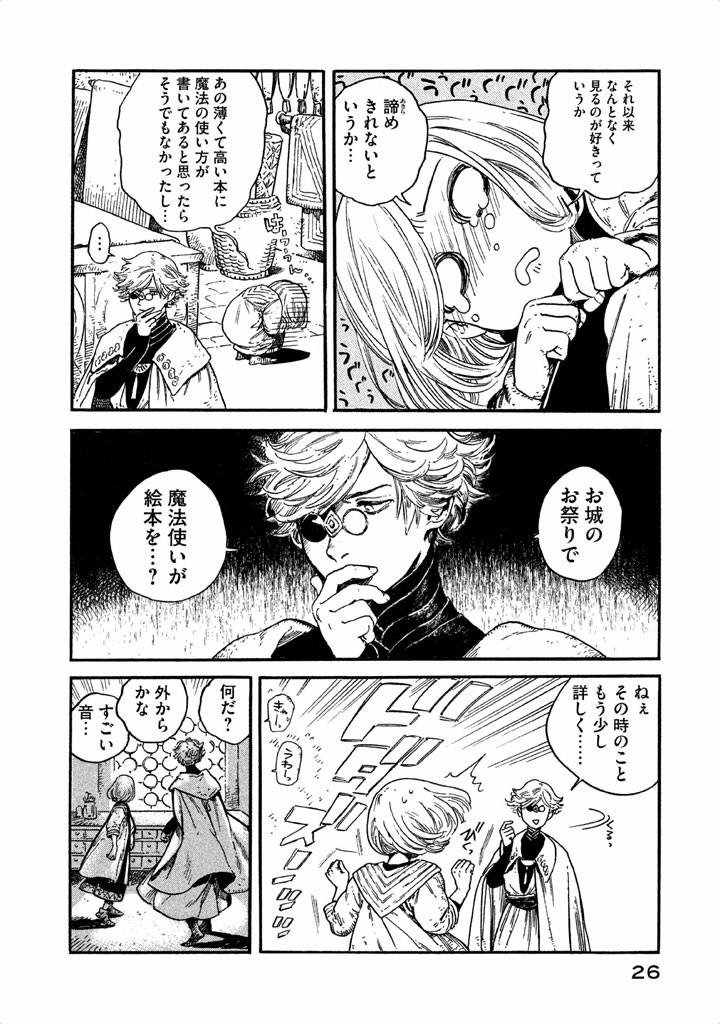

Example 1: Complete Pipeline

| Original | Detection | Cleaned | Translated |
|---|---|---|---|

Source: Magus of the Library

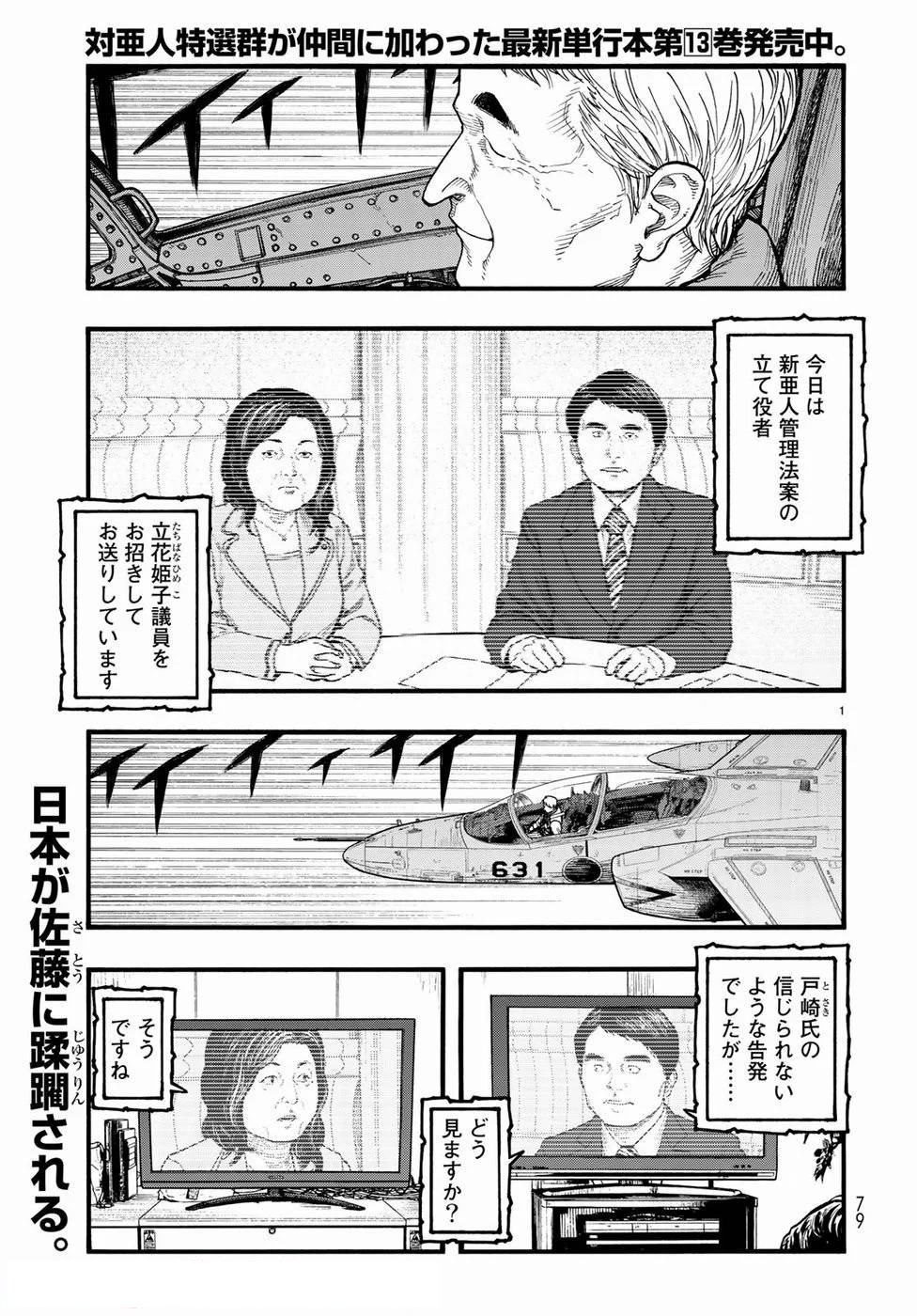

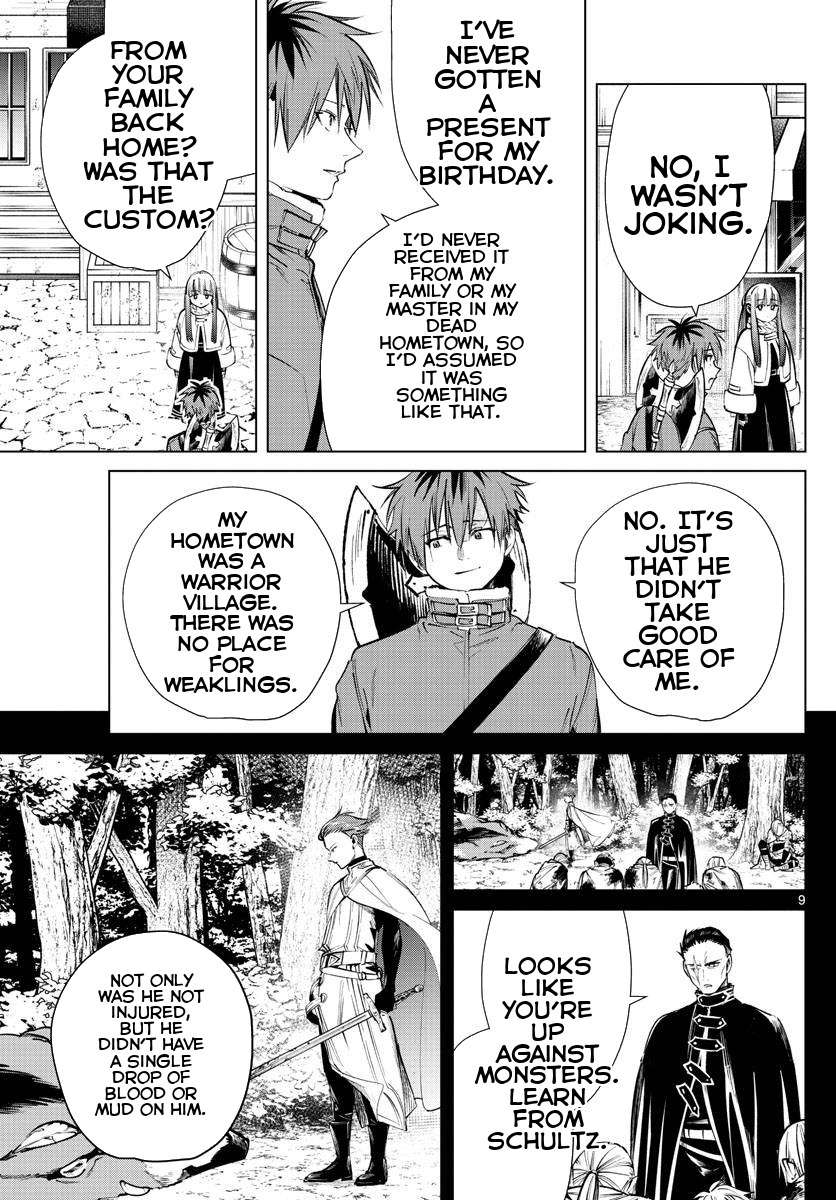

Example 2: Translation Result

| Original | Translated |
|---|---|

Source: Witch Hat Atelier

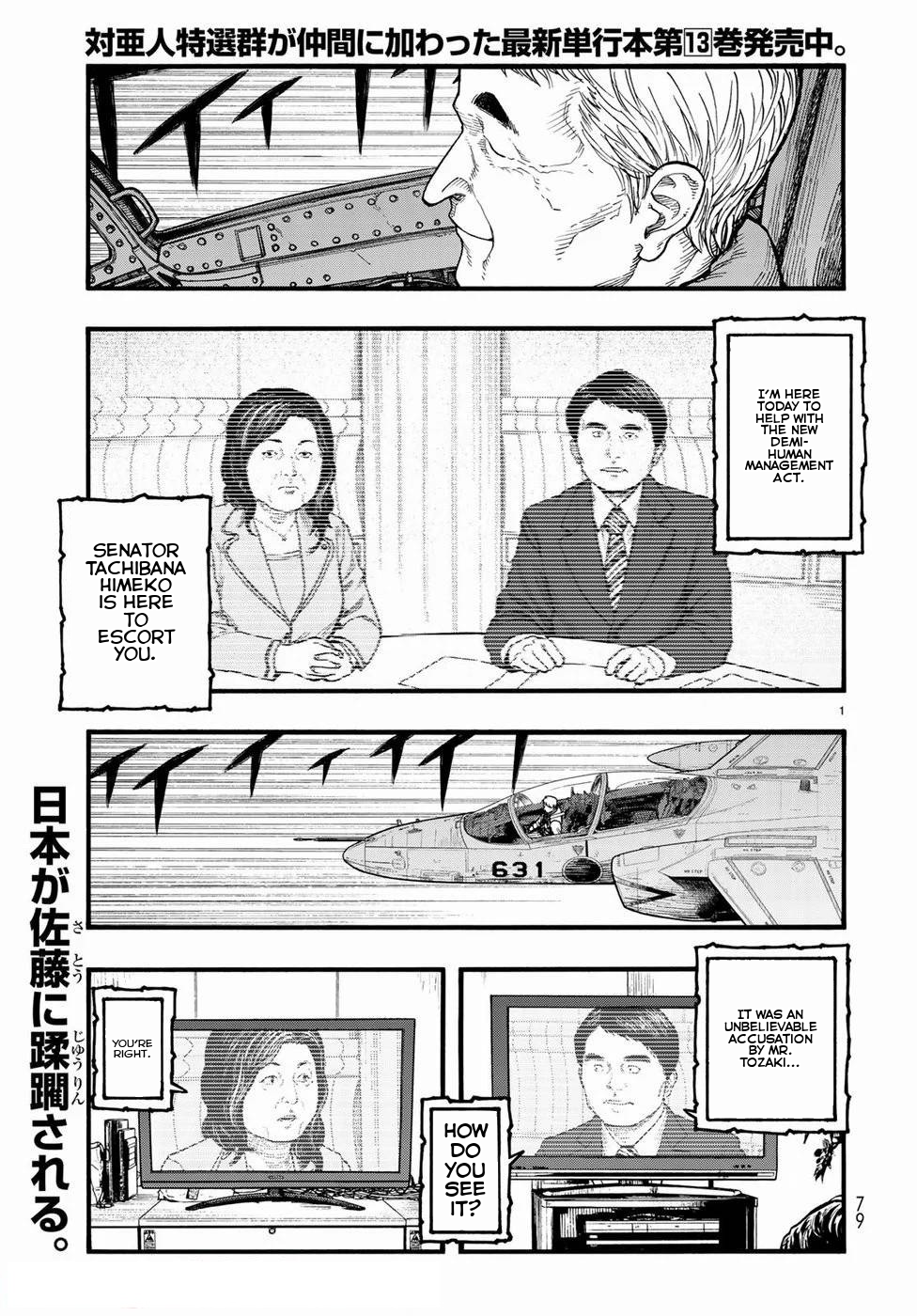

| Original | Translated |
|---|---|

Source: Ajin: Demi-Human

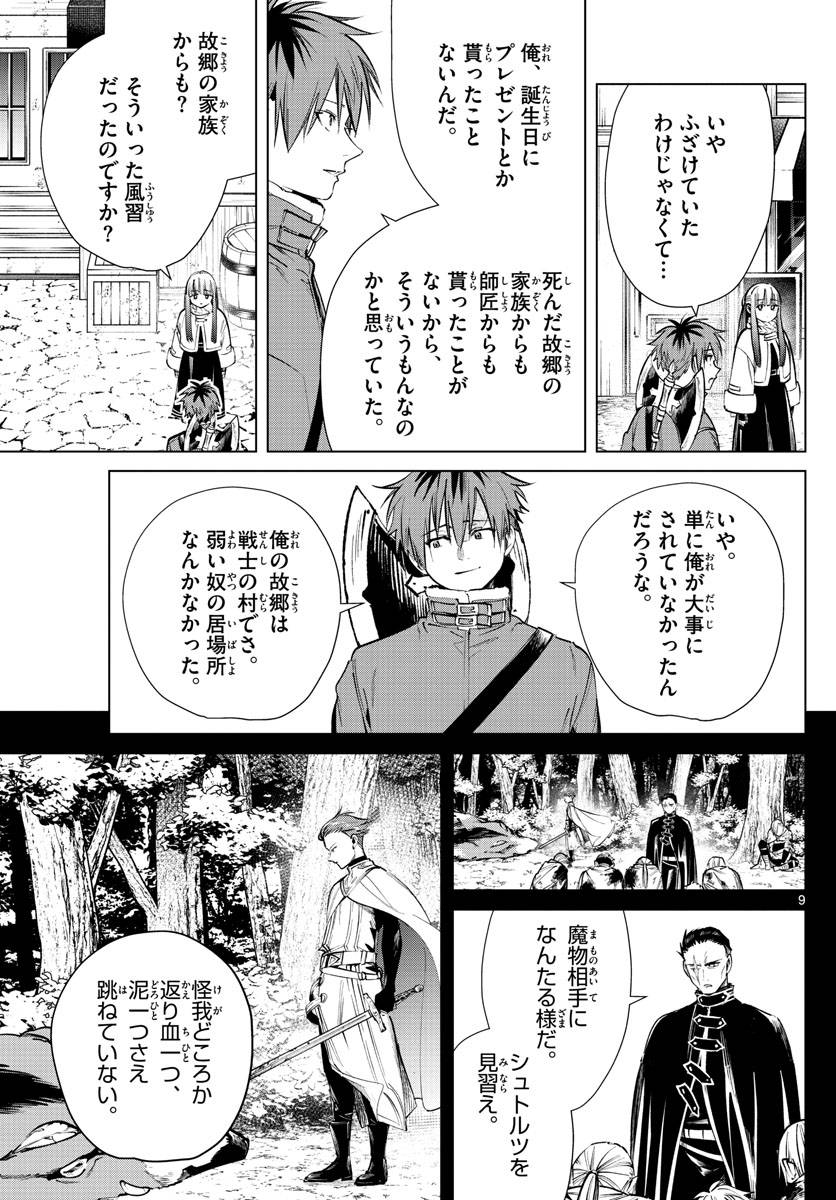

| Original | Translated |
|---|---|

Source: Frieren: Beyond Journey's End

Pipeline stages:

- Original: Input manga page with Japanese text

- Detection: YOLO model identifies speech bubble locations (green boxes)

- Cleaned: Bubble interiors filled with base color, text removed

- Translated: English text rendered within bubble shapes
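As a rough illustration of the "Cleaned" stage, the sketch below fills a masked bubble interior with a single base color. It is not the tool's actual implementation: the function name `fill_bubble_interior`, the grayscale list-of-rows image, and the choice of "most common value inside the mask" as the base color are all assumptions made for this example.

```python
from collections import Counter


def fill_bubble_interior(image, mask):
    """Return a copy of `image` with masked pixels replaced by a base color.

    image: rows of grayscale values (0-255).
    mask:  rows of booleans marking the bubble interior.
    Assumption: the base color is the most common value inside the mask,
    on the idea that background pixels outnumber ink pixels.
    """
    inside = [
        px
        for img_row, mask_row in zip(image, mask)
        for px, m in zip(img_row, mask_row)
        if m
    ]
    if not inside:
        return [row[:] for row in image]  # empty mask: nothing to clean
    base = Counter(inside).most_common(1)[0][0]
    return [
        [base if m else px for px, m in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


# Toy 5x4 page: a white bubble (255) with two dark "text" pixels inside.
page = [
    [0, 0, 0, 0, 0],
    [0, 255, 30, 255, 0],
    [0, 255, 255, 40, 0],
    [0, 0, 0, 0, 0],
]
interior = [[0 < y < 3 and 0 < x < 4 for x in range(5)] for y in range(4)]
cleaned = fill_bubble_interior(page, interior)
```

A single flat fill like this only works for uniform backgrounds, which is consistent with the limitation listed later that complex bubble backgrounds may not fill cleanly.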

Disclaimer: Example images are from published manga and used for demonstration purposes only. All rights belong to their respective copyright holders. This tool is intended for personal use with legally obtained content.

Table of Contents

- Showcase

- Features

- Installation

- Usage

- Output Structure

- Dependencies

- How It Works

- Limitations

- Known Issues

- Contributing

- Credits

- Changelog

- Notes

Features

- Automatic speech bubble detection using YOLO (YOLOv8m)

- Japanese text extraction using PaddleOCR-VL transformer model

- High-quality translation using Sugoi-v4 (specialized for Japanese→English)

- Smart text rendering with automatic font sizing and alignment within bubble shapes

- Custom font support for personalized text styling

- Batch processing for entire folders with optimized GPU utilization

- GPU acceleration with CUDA support for faster processing

- Configurable detection with adjustable confidence and IoU thresholds

- Intermediate outputs for debugging (bubble masks, cleaned images, detections)

Installation

Requirements

- Python 3.13 or higher

- UV package manager (recommended) or pip (currently untested)

For uv installation, visit: https://github.com/astral-sh/uv

Install from PyPI

GPU Installation (CUDA 12.8)

Installs with CUDA support for GPU acceleration. Requires a CUDA-compatible NVIDIA GPU.

Using uv (recommended):

uv tool install "manga-translator-cli[cuda]"

Using pip:

pip install "manga-translator-cli[cuda]" --extra-index-url https://download.pytorch.org/whl/cu128

CPU-Only Installation

For systems without a GPU or to save disk space.

Using uv (recommended):

uv tool install "manga-translator-cli[cpu]"

Using pip:

pip install "manga-translator-cli[cpu]" --extra-index-url https://download.pytorch.org/whl/cpu

Install from Source

For development or to use the latest unreleased changes.

- Clone the repository:

git clone https://github.com/zanbowie138/manga-translator-cli.git

cd manga-translator-cli

- Install with your preferred backend:

GPU (CUDA 12.8):

uv sync --extra cuda

CPU-only:

uv sync --extra cpu

Models

Models will be automatically downloaded on first use:

- YOLO model for bubble detection

- PaddleOCR-VL model for text extraction

- Sugoi-v4 model for translation

Usage

Single Image Translation

manga-translate input/page1.png

Folder Translation (batch mode recommended)

manga-translate input/ --batch

Common Options

Change output folder:

manga-translate input/page1.png --output folder

Save all intermediate outputs:

manga-translate input/page1.png --save-all

Use custom font:

manga-translate input/page1.png --font "fonts/CC Astro City Int Regular.ttf"

Adjust detection sensitivity:

manga-translate input/page1.png --conf-threshold 0.3 --iou-threshold 0.5

Force CPU mode (GPU is used by default if available):

manga-translate input/page1.png --device cpu

Quiet mode:

manga-translate input/page1.png --quiet

Available Options

- --output, -o: Output folder path (default: output)

- --folder, -f: Process entire folder instead of single file

- --conf-threshold: Confidence threshold for bubble detection (0-1, default: 0.25)

- --iou-threshold: IoU threshold for NMS (0-1, default: 0.45)

- --font: Path to font file for translated text

- --device: Device for OCR and translation (cpu or cuda, default: auto-detect, uses cuda if available). Controls which device is used for both text extraction and translation.

- --save-all: Save all intermediate outputs

- --save-speech-bubbles: Save annotated detection images

- --save-bubble-interiors: Save bubble interior visualizations

- --save-cleaned: Save cleaned images before text drawing

- --quiet, -q: Suppress progress messages

- --stop-on-error: Stop processing on first error (folder mode)
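To make the two detection thresholds more concrete, here is a minimal, generic sketch of confidence filtering followed by non-maximum suppression (NMS). In the tool itself this step is presumably handled inside the YOLO library, so the function names `iou` and `filter_detections` and the `(box, score)` tuple layout are assumptions for illustration only. The default values mirror the documented defaults of --conf-threshold and --iou-threshold.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_detections(dets, conf_threshold=0.25, iou_threshold=0.45):
    """Keep confident boxes, then greedily suppress heavy overlaps.

    dets: list of (box, score) pairs. Returns kept pairs, best score first.
    """
    candidates = sorted(
        (d for d in dets if d[1] >= conf_threshold),
        key=lambda d: d[1],
        reverse=True,
    )
    kept = []
    for box, score in candidates:
        # A box survives only if it does not overlap a better box too much.
        if all(iou(box, k[0]) <= iou_threshold for k in kept):
            kept.append((box, score))
    return kept


dets = [
    ((10, 10, 110, 110), 0.90),
    ((15, 15, 115, 115), 0.60),    # overlaps the first box heavily
    ((200, 50, 260, 120), 0.40),
    ((300, 300, 340, 340), 0.20),  # below the default confidence
]
kept = filter_detections(dets)
```

In this sketch, lowering the confidence threshold admits weaker detections, while raising the IoU threshold lets more overlapping boxes survive.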

For complete list of options:

manga-translate --help

Output Structure

When processing files, outputs are organized in subdirectories:

- translated/: Final translated images (always saved)

- speech_bubbles/: Annotated images with detected bubbles (enabled with --save-speech-bubbles)

- bubble_interiors/: Visualization of bubble interiors (enabled with --save-bubble-interiors)

- cleaned/: Images with bubbles filled before text rendering (enabled with --save-cleaned)

Use --save-all to enable all intermediate outputs at once.

Example output structure:

output/
├── translated/
│   ├── page1.png
│   └── page2.png
├── speech_bubbles/       # if --save-speech-bubbles or --save-all
│   ├── page1.png
│   └── page2.png
├── bubble_interiors/     # if --save-bubble-interiors or --save-all
│   ├── page1.png
│   └── page2.png
└── cleaned/              # if --save-cleaned or --save-all
    ├── page1.png
    └── page2.png
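For scripting around the tool, the layout above can be expressed as a small helper that predicts which files one page should produce. This is a sketch and not part of the package: `planned_outputs` and `INTERMEDIATE_DIRS` are hypothetical names, and the helper assumes each output keeps the input file name, as the example tree suggests.

```python
from pathlib import Path

# Keyword flag -> subdirectory, following the layout described above.
INTERMEDIATE_DIRS = {
    "save_speech_bubbles": "speech_bubbles",
    "save_bubble_interiors": "bubble_interiors",
    "save_cleaned": "cleaned",
}


def planned_outputs(image_path, output_dir="output", save_all=False, **flags):
    """Return the paths one page is expected to produce for the given flags."""
    name = Path(image_path).name  # assumption: output keeps the input name
    out = Path(output_dir)
    paths = [out / "translated" / name]  # translated/ is always written
    for flag, subdir in INTERMEDIATE_DIRS.items():
        if save_all or flags.get(flag, False):
            paths.append(out / subdir / name)
    return paths


for path in planned_outputs("input/page1.png", save_cleaned=True):
    print(path.as_posix())
```

A helper like this can be handy for checking after a batch run that every expected file exists.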

Dependencies

- ultralytics: YOLO model for bubble detection

- transformers: PaddleOCR-VL for text extraction

- ctranslate2: Fast translation inference

- sentencepiece: Text tokenization

- torch: Deep learning framework

- opencv-python: Image processing

- pillow: Image manipulation

How It Works

- Loads a YOLO model to detect speech bubbles in the image

- Filters out parent boxes that contain smaller child boxes

- For each bubble, extracts Japanese text using PaddleOCR-VL

- Detects if text contains Japanese characters

- Translates Japanese text to English using Sugoi-v4

- Cleans bubble interiors by filling with base color

- Renders translated text within bubble shapes using binary search for optimal font size

- Saves the final translated image
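Three of the steps above are small algorithms that can be sketched in plain Python: dropping parent boxes, checking for Japanese characters, and binary-searching a font size. The code below is an illustrative sketch under stated assumptions, not the tool's source. The function names, the `(x1, y1, x2, y2)` box layout, the Unicode ranges checked, the size bounds, and the `measure` callback are all hypothetical. In a real renderer, `measure` would be backed by actual font metrics, and text wrapping would also need handling.

```python
def drop_parent_boxes(boxes):
    """Remove every box that fully contains another, different box."""
    def contains(outer, inner):
        return (
            outer != inner
            and outer[0] <= inner[0] and outer[1] <= inner[1]
            and outer[2] >= inner[2] and outer[3] >= inner[3]
        )
    return [b for b in boxes if not any(contains(b, o) for o in boxes)]


def contains_japanese(text):
    """True if any character is hiragana, katakana, or a CJK ideograph."""
    return any(
        "\u3040" <= ch <= "\u309f"      # hiragana
        or "\u30a0" <= ch <= "\u30ff"   # katakana
        or "\u4e00" <= ch <= "\u9fff"   # CJK unified ideographs (kanji)
        for ch in text
    )


def fit_font_size(text, box_width, box_height, measure, lo=6, hi=72):
    """Binary-search the largest integer font size whose text fits the box.

    measure(text, size) must return (width, height) and grow with size.
    Returns None if even the smallest size does not fit.
    """
    best = None
    while lo <= hi:
        mid = (lo + hi) // 2
        width, height = measure(text, mid)
        if width <= box_width and height <= box_height:
            best, lo = mid, mid + 1   # fits: try a larger size
        else:
            hi = mid - 1              # too big: try a smaller size
    return best


def toy_measure(text, size):
    # Stand-in metric: one line, each glyph half as wide as the font size.
    return (size * len(text) / 2, size)


boxes = [(0, 0, 100, 100), (10, 10, 40, 40), (200, 200, 250, 250)]
print(drop_parent_boxes(boxes))
print(contains_japanese("こんにちは"), contains_japanese("Hello!"))
print(fit_font_size("Hello", 60, 40, toy_measure))
```

Binary search works here because the fit test is monotonic: once a size is too large, every larger size is too. That reduces the number of measurements from one per candidate size to roughly the logarithm of the size range.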

Limitations

- Detection is unreliable on dense panels with overlapping bubbles

- Text outside of bubbles won't be translated

- Complex bubble backgrounds may not fill cleanly

- Currently Japanese→English only; other languages not supported

Known Issues

- Bubble detection can sometimes break on compound bubbles, causing them to not be processed properly.

Please open an issue if you encounter problems.

Contributing

Contributions welcome! To contribute:

- Fork the repository

- Create a feature branch (git checkout -b feature/improvement)

- Make your changes

- Run tests if applicable

- Commit with clear messages (git commit -m "Add feature")

- Push to your fork (git push origin feature/improvement)

- Open a Pull Request

Development setup:

git clone <your-fork>

cd manga-translator-cli

uv sync --extra cuda # GPU (or use --extra cpu for CPU-only)

uv tool install .

Areas for contribution:

- Improved bubble detection algorithms

- Fix bubble detection for compound bubbles

- Improved translation accuracy

- Translation for text outside of bubbles

- Support for additional languages

- UI/web interface

- Performance optimizations

- Documentation improvements

Credits

Models:

- YOLOv8m Manga Bubbles by Oguzhan61 - Speech bubble detection

- PaddleOCR-VL For Manga by jzhang533 - Japanese text extraction

- Sugoi-v4 JA-EN by entai2965 - Japanese to English translation

Libraries:

- Ultralytics - YOLO implementation

- Transformers - HuggingFace transformers library

- CTranslate2 - Fast inference engine

- PyTorch - Deep learning framework

- UV - Fast Python package manager

Notes

- First run downloads models (can take several minutes)

- Translation quality depends on text clarity and font style

- GPU mode requires a CUDA-compatible NVIDIA GPU and drivers

- --device controls both the OCR and translation device

- Supported formats: PNG, JPG, JPEG, WEBP

- To switch the PyTorch backend, reinstall with the [cuda] or [cpu] extra as shown in the Installation section
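When preparing a folder for batch processing, it can help to check in advance which files match the supported formats listed above. The helper below is a hypothetical sketch: `find_pages` is not part of the package, and both the case-insensitive suffix match and the non-recursive scan are assumptions rather than documented behavior.

```python
from pathlib import Path

# Suffixes for the formats named in the notes above.
SUPPORTED_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


def find_pages(folder):
    """Return supported image files directly inside `folder`, sorted by path."""
    return sorted(
        p
        for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() in SUPPORTED_SUFFIXES
    )
```

Calling `find_pages("input")` before a run gives a quick count of the pages that should appear under translated/ afterwards.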

