Marine Extremes Detection and Tracking

Marine Extremes Python Package

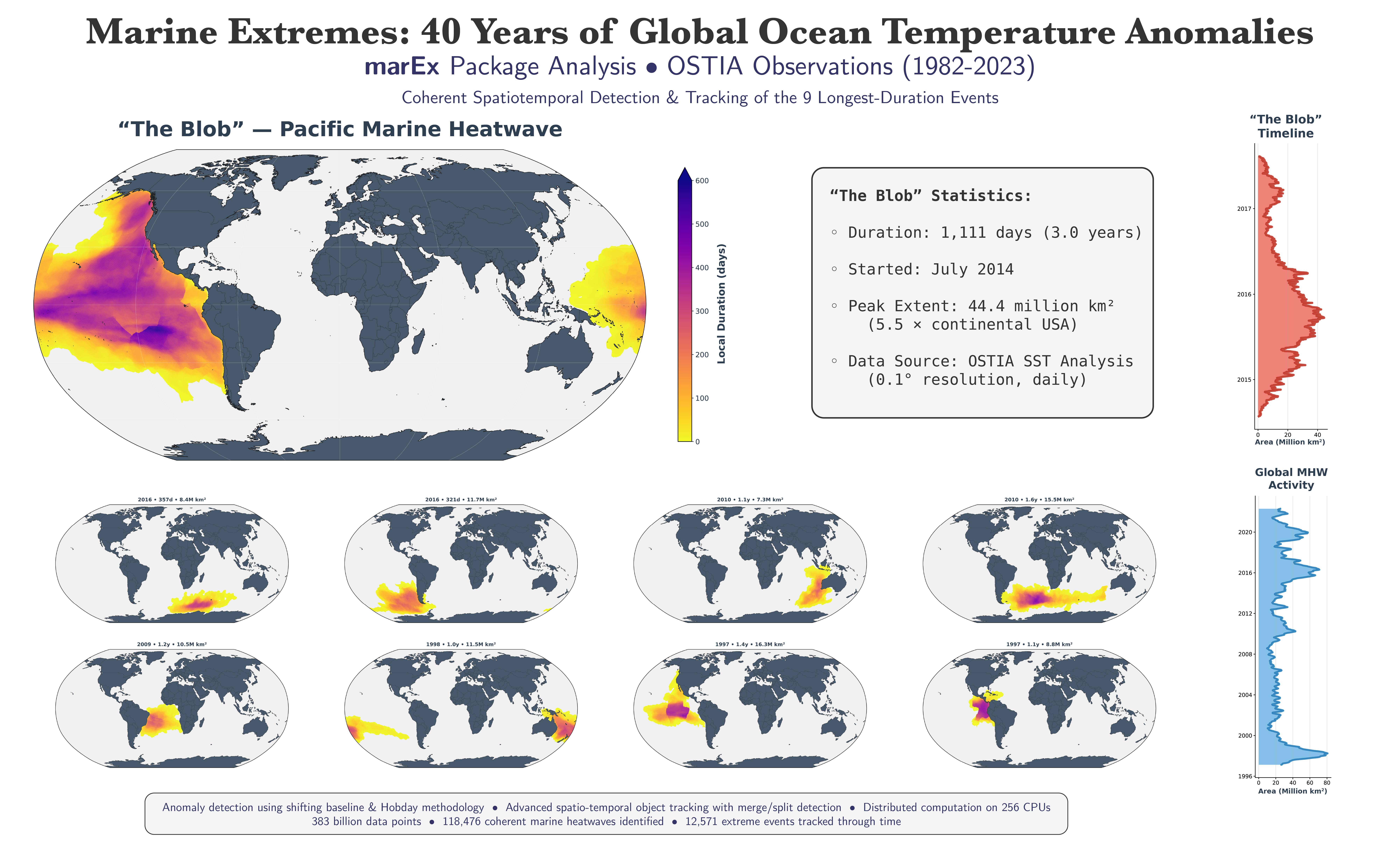

Efficient & scalable Marine Extremes detection, identification, & tracking for Exascale Climate Data.

MarEx is a high-performance Python framework for identifying and tracking extreme oceanographic events (such as Marine Heatwaves or Acidity Extremes) in massive climate datasets. Built on advanced statistical methods and distributed computing, it processes decades of daily-resolution global ocean data with unprecedented efficiency and scalability.

Key Capabilities

- ⚡ Extreme Performance: Process 100+ years of high-resolution daily global data in minutes

- 🔬 Advanced Analytics: Multiple statistical methodologies for robust extreme event detection

- 📈 Complex Event Tracking: Seamlessly handles coherent object splitting, merging, and evolution

- 🌐 Universal Grid Support: Native support for both regular (lat/lon) grids and unstructured ocean models

- ☁️ Cloud-Native Scaling: Identical codebase scales from a laptop to a supercomputer with 1024+ cores

- 🧠 Memory Efficient: Intelligent chunking and lazy evaluation for datasets larger than memory

View 20 Years of marEx Tracking:

https://github.com/user-attachments/assets/36ee3150-c869-4cba-be68-628dc37e4775

Features

Data Pre-processing Pipeline

MarEx implements a highly-optimised preprocessing pipeline powered by dask for efficient parallel computation and scaling to very large spatio-temporal datasets. Included are two complementary methods for calculating anomalies and detecting extremes:

Anomaly Calculation:

- Shifting Baseline — Scientifically-rigorous definition of anomalies relative to a backwards-looking rolling smoothed climatology.

- Detrended Baseline — Efficiently removes the trend & seasonal cycle using a 6+ coefficient model (mean, annual & semi-annual harmonics, and arbitrary polynomial trends). (Highly efficient, but this approximation may lead to biases in certain statistics.)
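To make the detrended baseline more concrete, the following is a minimal NumPy sketch of a six-coefficient least-squares fit (mean, linear trend, annual and semi-annual harmonics) at a single grid point. The helper name `detrend_harmonic` and its arguments are assumptions made for illustration; they are not marEx's API, and the package's own implementation may differ.

```python
import numpy as np


def detrend_harmonic(values, t_years, poly_order=1):
    """Remove a polynomial trend plus annual and semi-annual harmonics.

    Hypothetical helper for illustration only (not part of marEx).
    values:  1D array of daily values at one grid point
    t_years: 1D array of time in fractional years, same length
    """
    t = t_years - t_years.mean()  # centre time to keep the fit well-conditioned
    # Polynomial columns: t**0 (mean), t**1 (linear trend), ...
    columns = [t**p for p in range(poly_order + 1)]
    # Harmonic columns: annual (1 cycle/yr) and semi-annual (2 cycles/yr)
    for cycles_per_year in (1, 2):
        omega = 2 * np.pi * cycles_per_year
        columns += [np.sin(omega * t), np.cos(omega * t)]
    design = np.column_stack(columns)  # shape (time, 6) when poly_order=1
    coeffs, *_ = np.linalg.lstsq(design, values, rcond=None)
    return values - design @ coeffs, coeffs


# Synthetic series: mean + linear trend + annual cycle + noise
rng = np.random.default_rng(0)
t_years = np.arange(20 * 365) / 365.0
noise = 0.1 * rng.standard_normal(t_years.size)
sst = 15.0 + 0.03 * t_years + 2.0 * np.sin(2 * np.pi * t_years) + noise
anomalies, coeffs = detrend_harmonic(sst, t_years)
```

Raising `poly_order` adds higher-order trend terms, which is one way to read the "6+" in the coefficient count.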

Extreme Detection:

- Hobday Extreme — Implements a similar methodology to Hobday et al. (2016) with local day-of-year specific thresholds determined based on the quantile within a rolling window.

- Global Extreme — Applies a global-in-time percentile threshold at each point across the entire dataset. Optionally renormalises anomalies using a 30-day rolling standard deviation. (Highly efficient, but may misrepresent seasonal variability and differs from common definitions in literature.)
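The difference between the two detection methods can be sketched with NumPy on synthetic anomalies. This is an illustration under simplifying assumptions (a 365-day no-leap calendar, data already reshaped to `(n_years, 365)`, an 11-day window that wraps within the same year); `doy_thresholds` is a hypothetical name, not a marEx function.

```python
import numpy as np


def doy_thresholds(anom, percentile=95.0, half_window=5):
    """Day-of-year thresholds from a centred window pooled over all years.

    Hypothetical helper (not marEx's API). `anom` has shape (n_years, 365).
    """
    n_years, n_days = anom.shape
    offsets = np.arange(-half_window, half_window + 1)
    thresholds = np.empty(n_days)
    for doy in range(n_days):
        # Wrap around the year boundary so early January also sees late December
        # (taken from the same row, which is a simplification).
        window_days = (doy + offsets) % n_days
        thresholds[doy] = np.percentile(anom[:, window_days], percentile)
    return thresholds


rng = np.random.default_rng(1)
# Synthetic anomalies whose variability is larger mid-year than at the year ends.
seasonal_std = 1.0 + 0.5 * np.sin(np.pi * np.arange(365) / 365)
anom = rng.standard_normal((30, 365)) * seasonal_std

local_thr = doy_thresholds(anom)          # one threshold per day of year
global_thr = np.percentile(anom, 95.0)    # one threshold for all days
is_extreme_local = anom > local_thr       # broadcasts along the day-of-year axis
is_extreme_global = anom > global_thr
```

In this synthetic case the single global threshold flags proportionally more days in the high-variability season, which is the kind of seasonal misrepresentation the caveat above refers to.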

Object Detection & Tracking

Object Detection:

- Implements efficient algorithms for object detection in 2D geographical data.

- Fully-parallelised workflow built on `dask` for extremely fast & larger-than-memory computation.

- Uses morphological opening & closing to fill small holes and gaps in binary features.

- Filters out small objects based on area thresholds.

- Identifies and labels connected regions in binary data representing arbitrary events (e.g. SST or SSS extrema, tracer presence, eddies, etc...).

- Performance/Scaling Test: 100 years of daily 0.25° resolution binary data with 64 cores...

- Takes ~5 wall-minutes per century

- Requires only 1 GB memory per core (with `dask` chunks of 25 days)
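The detection steps listed above (morphological closing and opening, connected-component labelling, area filtering) can be sketched for a single 2D time slice with `scipy.ndimage`. This is only an illustration: the use of SciPy, the 3x3 structuring element, the absolute `min_area` in grid cells, and the function name `detect_objects` are all assumptions, and marEx's parallel implementation may work differently.

```python
import numpy as np
from scipy import ndimage


def detect_objects(binary, min_area):
    """Clean, label, and area-filter a 2D boolean field (illustrative only)."""
    structure = np.ones((3, 3), dtype=bool)  # 8-connected neighbourhood
    # Closing fills small holes and gaps; opening removes isolated specks.
    cleaned = ndimage.binary_closing(binary, structure=structure)
    cleaned = ndimage.binary_opening(cleaned, structure=structure)
    # Label connected regions: 0 is background, 1..n_objects are objects.
    labels, n_objects = ndimage.label(cleaned, structure=structure)
    areas = np.bincount(labels.ravel(), minlength=n_objects + 1)
    keep = areas >= min_area
    keep[0] = False  # never keep the background
    filtered = np.where(keep[labels], labels, 0)
    return filtered, areas[1:]


mask = np.zeros((20, 20), dtype=bool)
mask[4:12, 4:12] = True    # large object ...
mask[7, 7] = False         # ... with a one-cell hole
mask[15:18, 2:5] = True    # medium object (9 cells)
mask[16, 16] = True        # single-cell speck

labels, areas = detect_objects(mask, min_area=20)
```

Here the hole is filled by the closing, the speck is removed by the opening, and the 9-cell object is dropped by the area filter, leaving one labelled object.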

Object Tracking:

- Implements strict event tracking conditions to avoid collapsing events into only a few, very large objects.

- Permits temporal gaps (of `T_fill` days) between objects, to allow more continuous event tracking.

- Requires objects to overlap by at least `overlap_threshold` fraction of the smaller object's area to be considered the same event and continue tracking with the same ID.

- Accounts for & keeps a history of object splitting & merging events, ensuring objects are more coherent and retain their previous identities & histories.

- Improves upon the splitting & merging logic of Sun et al. (2023):

- In this New Version: Partition the child object based on the parent of the nearest-neighbour cell (not the nearest parent centroid).

- Provides much more accessible and usable tracking outputs:

- Tracked object properties (such as area, centroid, and any other user-defined properties) are mapped into `ID`-`time` space

- Details & Properties of all Merging/Splitting events are recorded.

- Provides other useful information that may be difficult to extract from the large `object ID field`, such as:

- Event presence in time

- Event start/end times and duration

- etc...

- Performance/Scaling Test: 100 years of daily 0.25° resolution binary data with 64 cores...

- Takes ~8 wall-minutes per decade (cf. the Old Method, i.e. without merge-split-tracking, time-gap filling, overlap-thresholding, etc., which, updated here to leverage `dask`, now takes 1 wall-minute per decade!)

- Requires only ~2 GB memory per core (with `dask` chunks of 25 days)
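Two of the tracking rules above lend themselves to a small NumPy sketch: the overlap test relative to the smaller object's area, and the partition of a merged child object by the parent of each cell's nearest-neighbour parent cell. The function names and the brute-force distance computation in grid-index space (ignoring spherical geometry and periodic boundaries) are assumptions for illustration, not marEx's implementation.

```python
import numpy as np


def overlap_fraction(obj_a, obj_b):
    """Overlap area as a fraction of the smaller object's area (boolean masks)."""
    smaller = min(obj_a.sum(), obj_b.sum())
    return (obj_a & obj_b).sum() / smaller if smaller else 0.0


def partition_child(child, parent_labels):
    """Assign each child cell the label of its nearest parent cell."""
    child_cells = np.argwhere(child)                # (n_child, 2) indices
    parent_cells = np.argwhere(parent_labels > 0)   # (n_parent, 2) indices
    # Squared distance between every child cell and every parent cell.
    diff = child_cells[:, None, :] - parent_cells[None, :, :]
    nearest = (diff**2).sum(axis=-1).argmin(axis=1)
    out = np.zeros_like(parent_labels)
    out[tuple(child_cells.T)] = parent_labels[tuple(parent_cells[nearest].T)]
    return out


# Two parents at the previous time step and one merged child at the current step.
parent_labels = np.zeros((3, 10), dtype=int)
parent_labels[1, 0:3] = 1
parent_labels[1, 7:10] = 2
child = np.zeros((3, 10), dtype=bool)
child[1, 1:9] = True

frac = overlap_fraction(parent_labels == 1, child)  # 2 shared cells / 3 cells
partition = partition_child(child, parent_labels)
print(partition[1])  # -> [0 1 1 1 1 2 2 2 2 0]
```

With an `overlap_threshold` of 0.5, for example, parent 1 (overlap fraction 2/3) would continue into the child, and the child's cells are split between the two parents at the point where the nearest parent cell changes.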

Visualisation

Plotting:

- Provides a few helper functions to create pretty plots, wrapped subplots, and animations (e.g. below).

cf. Old (Basic) ID Method vs. New Tracking & Merging Algorithm:

https://github.com/user-attachments/assets/5acf48eb-56bf-43e5-bfc4-4ef1a7a90eff

Technical Architecture

Distributed Computing Stack:

- Framework: `Dask` for distributed computation with asynchronous task scheduling

- Parallelism: Multi-level spatio-temporal parallelisation

- Memory Management: Lazy evaluation with automatic spilling and graph optimisation

- I/O Optimisation: Zarr-based intermediate storage with compression

Performance Optimisations:

- JIT Compilation: Numba-accelerated critical paths for numerical kernels

- GPU Acceleration: Optional JAX backend for tensor operations

- Sparse Operations: Custom sparse matrix algorithms for unstructured grids

- Cache-Aware: Memory access patterns optimised for modern CPU architectures

Computational Workflow

- Preprocess: Remove trends & seasonal cycles and identify anomalous extremes

- Detect: Filter & label connected regions using morphological operations

- Track: Follow objects through time, handling complex evolution patterns

- Analyse: Extract event statistics, duration, and spatial properties
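As an illustration of the Analyse step, the sketch below derives start time, end time, and duration for each event from a labelled ID field. It assumes an integer array of shape `(time, y, x)` with 0 as background; the name `event_durations` and this layout are assumptions for the example and do not describe the structure of marEx's actual output dataset.

```python
import numpy as np


def event_durations(id_field):
    """Return {event_id: (first_step, last_step, duration_in_steps)}.

    id_field: integer array of shape (time, y, x); 0 marks background.
    """
    summary = {}
    for event_id in np.unique(id_field):
        if event_id == 0:
            continue
        # Presence in time: is the event anywhere on the grid at each step?
        present = (id_field == event_id).any(axis=(1, 2))
        steps = np.flatnonzero(present)
        start, end = int(steps[0]), int(steps[-1])
        # Duration spans first to last appearance, including any filled gaps.
        summary[int(event_id)] = (start, end, end - start + 1)
    return summary


id_field = np.zeros((6, 4, 4), dtype=int)
id_field[1:4, 0:2, 0:2] = 1   # event 1 present at steps 1..3
id_field[3:6, 2:4, 2:4] = 2   # event 2 present at steps 3..5

summary = event_durations(id_field)
print(summary)  # -> {1: (1, 3, 3), 2: (3, 5, 3)}
```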

Quick Start Example

    import xarray as xr
    import marEx

    # Load sea surface temperature data
    sst = xr.open_dataset('sst_data.nc', chunks={}).sst

    # Pre-process SST Data to identify extremes: cf. `01_preprocess_extremes.ipynb`
    extreme_events_ds = marEx.preprocess_data(
        sst,
        threshold_percentile=95,
        method_anomaly='shifting_baseline',
        method_extreme='hobday_extreme'
    )

    # Identify & Track Marine Heatwaves through time: cf. `02_id_track_events.ipynb`
    events_ds = marEx.tracker(
        extreme_events_ds.extreme_events,
        extreme_events_ds.mask,
        R_fill=8,
        area_filter_quartile=0.5,
        allow_merging=True
    ).run()

    # Visualise results: cf. `03_visualise_events.ipynb`
    plot_config = marEx.PlotConfig(var_units="MHW Frequency", cmap="hot_r", cperc=[0, 96])
    fig, ax, im = (events_ds.ID_field > 0).mean("time").plotX.single_plot(plot_config)

Installation & Setup

Full Installation

    # Complete HPC installation with all optional dependencies
    # (quoted so that shells such as zsh do not expand the square brackets)
    pip install "marEx[full,hpc]"

Development Installation

    # Clone and install for development
    git clone https://github.com/wienkers/marEx.git
    cd marEx
    pip install -e ".[dev]"

    # Install pre-commit hooks
    pre-commit install

Getting Help

If you encounter installation issues:

- Documentation: Check the full documentation for detailed guides and API reference

- Check Dependencies: Run `marEx.print_dependency_status()` to identify missing components

- Search Issues: Check the GitHub Issues for similar problems

- System Information: Include your OS, Python version, and error messages when reporting issues

- Support: Reach out to Aaron Wienkers
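When reporting an issue, the system information mentioned above can be gathered with the Python standard library. This is a small convenience sketch; the function name `environment_report` and the default package list are assumptions chosen for the example, and it complements rather than replaces `marEx.print_dependency_status()`.

```python
import platform
import sys
from importlib import metadata


def environment_report(packages=("marEx", "xarray", "dask", "numpy")):
    """Summarise OS, Python version, and installed package versions."""
    lines = [
        f"OS: {platform.platform()}",
        f"Python: {sys.version.split()[0]}",
    ]
    for name in packages:
        try:
            version = metadata.version(name)
        except metadata.PackageNotFoundError:
            version = "not installed"
        lines.append(f"{name}: {version}")
    return "\n".join(lines)


report = environment_report()
print(report)
```

The printed text can be pasted into a bug report together with the full error message.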

Funding

This project has received funding through:

- The EERIE (European Eddy-Rich ESMs) Project

- The European Union's Horizon Europe research and innovation programme under Grant Agreement No. 101081383

- The Swiss State Secretariat for Education, Research and Innovation (SERI) under contract #22.00366

Please contact Aaron Wienkers with any questions, comments, issues, or bugs.
