
Mellea

Mellea is a library for writing generative programs. Generative programming replaces flaky agents and brittle prompts with structured, maintainable, robust, and efficient AI workflows.

Features

- A standard library of opinionated prompting patterns.

- Sampling strategies for inference-time scaling.

- Clean integration between verifiers and samplers.

- Batteries-included library of verifiers.

- Support for efficient checking of specialized requirements using activated LoRAs.

- Train your own verifiers on proprietary classifier data.

- Compatible with many inference services and model families. Control cost and quality by easily lifting and shifting workloads between:

  - inference providers

  - model families

  - model sizes

- Easily integrate the power of LLMs into legacy code-bases (mify).

- Sketch applications by writing specifications and letting `mellea` fill in the details (generative slots).

- Get started by decomposing your large unwieldy prompts into structured and maintainable mellea problems.

Getting Started

You can get started with a local install, or by using Colab notebooks.

Getting Started with Local Inference

Install with uv:

uv pip install mellea

Install with pip:

pip install mellea

[!NOTE] `mellea` comes with some additional packages as defined in our `pyproject.toml`. To install the optional dependencies you need, run the corresponding command:

uv pip install "mellea[hf]" # for Hugging Face extras and aLoRA capabilities

uv pip install "mellea[watsonx]" # for watsonx backend

uv pip install "mellea[docling]" # for docling

uv pip install "mellea[smolagents]" # for Hugging Face smolagents tools

uv pip install "mellea[all]" # for all the optional dependencies

You can also install all the optional dependencies with

uv sync --all-extras

[!NOTE] If running on an Intel Mac, you may get errors related to torch/torchvision versions. Conda maintains updated versions of these packages. You will need to create a conda environment and run `conda install 'torchvision>=0.22.0'` (this should also install pytorch and torchvision-extra). Then, you should be able to run `uv pip install mellea`. To run the examples, you will need to use `python <filename>` inside the conda environment instead of `uv run --with mellea <filename>`.

[!NOTE] If you are using Python >= 3.13, you may encounter an issue where outlines cannot be installed due to Rust compiler issues (`error: can't find Rust compiler`). You can either downgrade to Python 3.12 or install the Rust compiler to build the wheel for outlines locally.
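As a rough pre-install check for the note above, the sketch below reports whether the Python 3.13 and Rust compiler situation is likely to apply on the current machine. It uses only the Python standard library; the function name `outlines_build_risk` and its parameters are hypothetical and not part of Mellea, and the check is a heuristic (it only looks for `rustc` on the PATH).

```python
import shutil
import sys
from typing import Optional, Sequence


def outlines_build_risk(
    version_info: Sequence[int] = sys.version_info,
    has_rustc: Optional[bool] = None,
) -> str:
    """Return a short diagnostic about building outlines from source.

    Hypothetical helper: the arguments default to the running interpreter
    and to a PATH lookup for rustc, and can be overridden for testing.
    """
    if has_rustc is None:
        # Assumption: a usable Rust toolchain exposes `rustc` on the PATH.
        has_rustc = shutil.which("rustc") is not None
    if tuple(version_info[:2]) < (3, 13):
        return "ok: Python < 3.13, the Rust compiler issue should not apply"
    if has_rustc:
        return "ok: Python >= 3.13 and a Rust compiler is available"
    return "warning: Python >= 3.13 and no rustc found; install Rust or use Python 3.12"


if __name__ == "__main__":
    print(outlines_build_risk())
```

If the result starts with `warning`, either of the two remedies in the note (downgrading to Python 3.12 or installing the Rust compiler) should apply.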

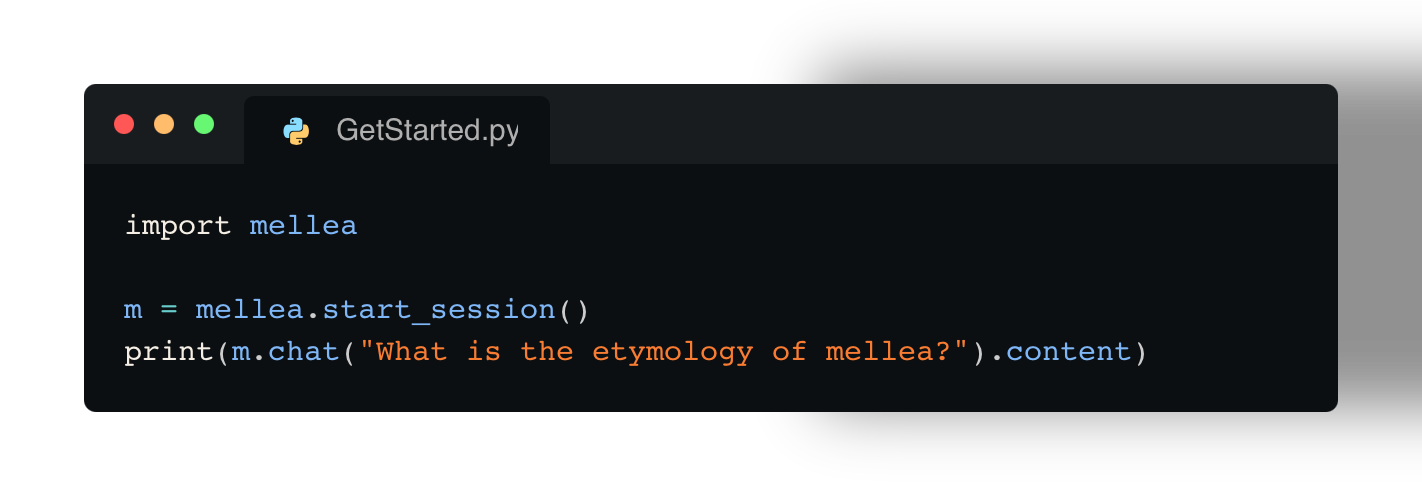

To run a simple LLM request locally (using Ollama with a Granite model), start with this code:

# file: https://github.com/generative-computing/mellea/blob/main/docs/examples/tutorial/example.py
import mellea

m = mellea.start_session()
print(m.chat("What is the etymology of mellea?").content)

[!NOTE] Before running it, you will need to download and install ollama. Mellea can work with many different types of backends, but everything in this tutorial will "just work" on a MacBook running IBM's Granite 4 Micro 3B model.

Then run it:

uv run --with mellea docs/examples/tutorial/example.py

Get Started with Colab

Installing from Source

If you want to contribute to Mellea or need the latest development version, see the Getting Started section in our Contributing Guide for detailed installation instructions.

Getting started with validation

Mellea supports validation of generation results through an instruct-validate-repair pattern. Below, the request "Write an email..." is constrained by the requirements "be formal" and "Use 'Dear interns' as greeting.". Using a simple rejection sampling strategy, the request is sent to the model up to three times (loop_budget=3), and each output is checked against the requirements using, in this case, LLM-as-a-judge.

# file: https://github.com/generative-computing/mellea/blob/main/docs/examples/instruct_validate_repair/101_email_with_validate.py
from mellea import MelleaSession
from mellea.backends import ModelOption
from mellea.backends.ollama import OllamaModelBackend
from mellea.backends import model_ids
from mellea.stdlib.sampling import RejectionSamplingStrategy

# create a session with Mistral running on Ollama
m = MelleaSession(
    backend=OllamaModelBackend(
        model_id=model_ids.MISTRALAI_MISTRAL_0_3_7B,
        model_options={ModelOption.MAX_NEW_TOKENS: 300},
    )
)

# run an instruction with requirements
email_v1 = m.instruct(
    "Write an email to invite all interns to the office party.",
    requirements=["be formal", "Use 'Dear interns' as greeting."],
    strategy=RejectionSamplingStrategy(loop_budget=3),
)

# print result
print(f"***** email ****\n{str(email_v1)}\n*******")
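To make the control flow of the pattern concrete, here is a minimal plain-Python sketch of a rejection sampling loop with a budget. It is not Mellea's implementation: the names `rejection_sample`, `make_stub_model`, and `REQUIREMENTS` are hypothetical, the "model" is a stub that replays canned drafts, and simple string predicates stand in for LLM-as-a-judge. Passing the failed requirements back to the generator is an assumption made here to illustrate the repair step.

```python
from typing import Callable, List, Sequence, Tuple

# (description, check) pairs; the checks are plain predicates standing in
# for whatever verifier is really used (for example LLM-as-a-judge).
Requirement = Tuple[str, Callable[[str], bool]]


def rejection_sample(
    generate: Callable[[List[str]], str],
    requirements: Sequence[Requirement],
    loop_budget: int = 3,
) -> Tuple[str, List[str]]:
    """Try up to loop_budget generations and return the first that passes.

    Returns (output, failed_descriptions). If every attempt fails, the last
    attempt is returned together with the requirements it still violates.
    """
    if loop_budget < 1:
        raise ValueError("loop_budget must be at least 1")
    output: str = ""
    failed: List[str] = []
    for _ in range(loop_budget):
        # Assumption: failed requirements are passed back as repair feedback.
        output = generate(failed)
        failed = [desc for desc, check in requirements if not check(output)]
        if not failed:
            break
    return output, failed


def make_stub_model(drafts: Sequence[str]) -> Callable[[List[str]], str]:
    """Return a fake model that replays canned drafts, one per call."""
    remaining = iter(drafts)

    def generate(feedback: List[str]) -> str:
        # A real model would use the feedback; the stub ignores it.
        return next(remaining)

    return generate


REQUIREMENTS: List[Requirement] = [
    ("be formal", lambda text: "hey" not in text.lower()),
    ("Use 'Dear interns' as greeting.", lambda text: text.startswith("Dear interns")),
]

if __name__ == "__main__":
    drafts = [
        "Hey all, party on Friday!",
        "Dear interns, hey, party on Friday!",
        "Dear interns, you are cordially invited to the office party.",
    ]
    email, unmet = rejection_sample(make_stub_model(drafts), REQUIREMENTS, loop_budget=3)
    print(email)
    print("unmet requirements:", unmet)
```

With a budget of three, the stub's third draft is the first to satisfy both checks, so it is returned with an empty list of unmet requirements. With a smaller budget, the caller gets the last draft plus the requirements it still fails and can decide how to handle that.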

Getting Started with Generative Slots

Generative slots allow you to define functions without implementing them.

The @generative decorator marks a function as one that should be interpreted by querying an LLM.

The example below demonstrates how an LLM's sentiment classification capability can be wrapped up as a function using Mellea's generative slots and a local LLM.

# file: https://github.com/generative-computing/mellea/blob/main/docs/examples/tutorial/sentiment_classifier.py#L1-L13

from typing import Literal

from mellea import generative, start_session


@generative
def classify_sentiment(text: str) -> Literal["positive", "negative"]:
    """Classify the sentiment of the input text as 'positive' or 'negative'."""


if __name__ == "__main__":
    m = start_session()
    sentiment = classify_sentiment(m, text="I love this!")
    print("Output sentiment is:", sentiment)
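For intuition about what a decorator of this kind could do, the sketch below builds a prompt from a function's docstring and arguments, sends it to a model callable, and checks the answer against the `Literal` return type. This is a simplified illustration under stated assumptions, not Mellea's actual `@generative` implementation: `generative_sketch` and `stub_model` are hypothetical names, and the stub replaces the LLM with a keyword rule so the example runs without any backend.

```python
import functools
import inspect
from typing import Callable, Literal, get_args, get_origin, get_type_hints


def generative_sketch(func):
    """Hypothetical decorator: answer calls by prompting a model callable."""
    return_type = get_type_hints(func).get("return", str)
    # For Literal[...] return types, only the listed values are accepted.
    allowed = get_args(return_type) if get_origin(return_type) is Literal else ()

    @functools.wraps(func)
    def wrapper(model: Callable[[str], str], **kwargs):
        # The docstring acts as the instruction; arguments follow as lines.
        lines = [inspect.getdoc(func) or func.__name__]
        lines += [f"{name}: {value}" for name, value in kwargs.items()]
        if allowed:
            lines.append("Answer with exactly one of: " + ", ".join(allowed))
        answer = model("\n".join(lines)).strip()
        if allowed and answer not in allowed:
            raise ValueError(f"model returned {answer!r}, expected one of {allowed}")
        return answer

    return wrapper


@generative_sketch
def classify_sentiment(text: str) -> Literal["positive", "negative"]:
    """Classify the sentiment of the input text as 'positive' or 'negative'."""


def stub_model(prompt: str) -> str:
    """Keyword rule standing in for a real LLM call."""
    return "positive" if "love" in prompt.lower() else "negative"


if __name__ == "__main__":
    print("Output sentiment is:", classify_sentiment(stub_model, text="I love this!"))
```

The point of the design is that the type annotation doubles as an output contract: an answer outside the declared `Literal` values is rejected instead of being passed on to the rest of the program.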

Contributing

We welcome contributions to Mellea! There are several ways to contribute:

- Contributing to this repository - Core features, bug fixes, standard library components

- Applications & Libraries - Build tools using Mellea (host in your own repo with a `mellea-` prefix)

- Community Components - Contribute to mellea-contribs

Please see our Contributing Guide for detailed information on:

- Getting started with development

- Coding standards and workflow

- Testing guidelines

- How to contribute specific types of components

Questions? Join our Discord!

IBM ❤️ Open Source AI

Mellea was started by IBM Research in Cambridge, MA.

