MemMachine Server - The complete MemMachine memory system server with episodic and profile memory

MemMachine

The open-source memory layer for AI agents.

Stop building stateless agents. Give your AI persistent memory with just a few lines of code.

What is MemMachine?

MemMachine is an open-source long-term memory layer for AI agents and LLM-powered applications. It enables your AI to learn, store, and recall information from past sessions—transforming stateless chatbots into personalized, context-aware assistants.

Key Capabilities

- Episodic Memory: Graph-based conversational context that persists across sessions

- Profile Memory: Long-term user facts and preferences stored in SQL

- Working Memory: Short-term context for the current session

- Agent Memory Persistence: Memory that survives restarts, sessions, and even model changes

Quick Start

Get up and running in under 5 minutes:

Prerequisites: This code requires a running MemMachine Server. Start a server locally or create a free account on the MemMachine Platform.

pip install memmachine-client

from memmachine_client import MemMachineClient

# Initialize the client

client = MemMachineClient(base_url="http://localhost:8080")

# Get or create a project

project = client.get_or_create_project(org_id="my_org", project_id="my_project")

# Create a memory instance for a user session

memory = project.memory(
    group_id="default",
    agent_id="travel_agent",
    user_id="alice",
    session_id="session_001",
)

# Add a memory

memory.add("I prefer aisle seats on flights", metadata={"category": "travel"})

# => [AddMemoryResult(uid='...')]

# Search memories

results = memory.search("What are my flight preferences?")

print(results.content.episodic_memory.long_term_memory.episodes[0].content)

# => "I prefer aisle seats on flights"

For full installation options (Docker, self-hosted, cloud), visit the Quick Start Guide.

Integrations

MemMachine works seamlessly with your favorite AI frameworks:

| Framework | Description |
|---|---|
| LangChain | Memory provider for LangChain agents |
| LangGraph | Stateful memory for LangGraph workflows |
| CrewAI | Persistent memory for CrewAI multi-agent systems |
| LlamaIndex | Memory integration for LlamaIndex applications |
| AWS Strands | Memory for AWS Strands Agent SDK |
| n8n | No-code workflow automation integration |
| Dify | Memory backend for Dify AI applications |
| FastGPT | Integration with FastGPT platform |

MCP Server Support

MemMachine includes a native Model Context Protocol (MCP) server for seamless integration with Claude Desktop, Cursor, and other MCP-compatible clients:

# Stdio mode (for Claude Desktop)

memmachine-mcp-stdio

# HTTP mode (for web clients)

memmachine-mcp-http

See the MCP documentation for setup instructions.
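Claude Desktop and similar MCP clients usually register a stdio server through an `mcpServers` entry in a JSON configuration file. The sketch below generates such an entry for the `memmachine-mcp-stdio` command. The server name `memmachine` is an arbitrary label chosen for illustration, and any arguments or environment variables the server may need are not shown here; treat the MCP documentation as the authority on those.

```python
import json


def claude_desktop_config(server_name: str = "memmachine",
                          command: str = "memmachine-mcp-stdio") -> dict:
    """Build an `mcpServers` entry that launches the stdio MCP server.

    `server_name` is only a label (assumption); `command` is the stdio
    entry point named in this README.
    """
    return {"mcpServers": {server_name: {"command": command, "args": []}}}


if __name__ == "__main__":
    # Print JSON that could be merged into the client's configuration file.
    print(json.dumps(claude_desktop_config(), indent=2))
```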

Who Is MemMachine For?

- Developers building AI agents, assistants, or autonomous workflows

- Researchers experimenting with agent architectures and cognitive models

- Teams who need persistent, cross-session memory for their LLM applications

Key Features

- Multiple Memory Types: Working (short-term), Episodic (long-term conversational), and Profile (user facts) memory

- Developer-Friendly APIs: Python SDK, RESTful API, TypeScript SDK, and MCP server interfaces

- Flexible Storage: Graph database (Neo4j) for episodic memory, SQL for profiles

- LLM Agnostic: Works with OpenAI, Anthropic, Bedrock, Ollama, and other LLM providers

- Self-Hosted or Cloud: Run locally, in Docker, or use our managed service

For more information, refer to the API Reference Guide.

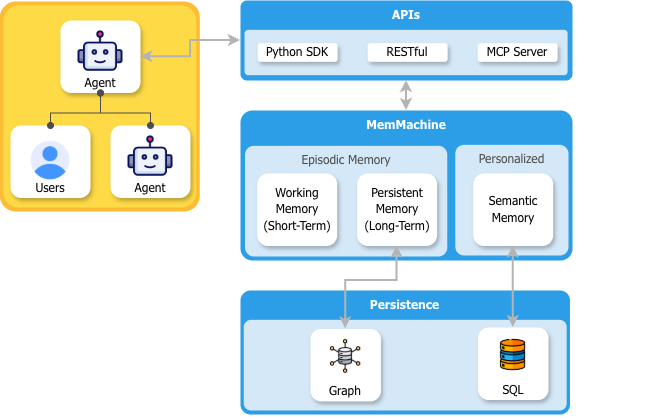

Architecture

- Agents interact via the API Layer: Users interact with an agent, which connects to MemMachine through a RESTful API, Python SDK, or MCP Server.

- MemMachine manages memory: Processes interactions and stores them as Episodic Memory (conversational context) and Profile Memory (long-term user facts).

- Data is persisted: Episodic memory is stored in a graph database; profile memory is stored in SQL.
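To make the storage split concrete, here is a toy sketch of the idea: episodes kept as nodes in a small graph, and profile facts kept in a SQL table. It is an illustration only, not MemMachine's implementation. The class `ToyMemoryStore`, its methods, and the table layout are all hypothetical, and an in-memory SQLite database and Python dictionaries stand in for the real SQL and graph databases.

```python
import sqlite3
from collections import defaultdict


class ToyMemoryStore:
    """Toy model: episodes in an in-memory graph, profile facts in SQL."""

    def __init__(self):
        # Profile memory: long-term user facts in a SQL table.
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE profile (user_id TEXT, key TEXT, value TEXT, "
            "PRIMARY KEY (user_id, key))"
        )
        # Episodic memory: nodes are episodes, edges link consecutive
        # episodes within the same session.
        self.episodes = {}
        self.next_edges = defaultdict(list)
        self._last_in_session = {}

    def add_episode(self, session_id, text):
        """Store an episode and link it to the previous one in the session."""
        node_id = len(self.episodes)
        self.episodes[node_id] = text
        prev = self._last_in_session.get(session_id)
        if prev is not None:
            self.next_edges[prev].append(node_id)
        self._last_in_session[session_id] = node_id
        return node_id

    def set_fact(self, user_id, key, value):
        """Insert or overwrite a single profile fact for a user."""
        self.db.execute(
            "INSERT OR REPLACE INTO profile VALUES (?, ?, ?)",
            (user_id, key, value),
        )

    def get_fact(self, user_id, key):
        """Return the stored value, or None if the fact is unknown."""
        row = self.db.execute(
            "SELECT value FROM profile WHERE user_id = ? AND key = ?",
            (user_id, key),
        ).fetchone()
        return row[0] if row else None


if __name__ == "__main__":
    store = ToyMemoryStore()
    store.add_episode("session_001", "I prefer aisle seats on flights")
    store.set_fact("alice", "seat_preference", "aisle")
    print(store.get_fact("alice", "seat_preference"))
```

The split mirrors the description above: conversational context benefits from relationships between episodes, while stable user facts fit a keyed table.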

Use Cases & Example Agents

MemMachine's versatile memory architecture can be applied across any domain. Explore our examples to see memory-powered agents in action:

| Agent | Description |
|---|---|
| CRM Agent | Recalls client history and deal stages to help sales teams close faster |
| Healthcare Navigator | Remembers medical history and tracks treatment progress |
| Personal Finance Advisor | Stores portfolio preferences and risk tolerance for personalized insights |
| Writing Assistant | Learns your style guide and terminology for consistent content |

Built with MemMachine

Are you using MemMachine in your project? We'd love to feature you!

- Share your project in GitHub Discussions → Showcase

- Drop a message in our Discord #showcase channel

Growing Community

MemMachine is a growing community of builders and developers. Help us grow by starring the project on GitHub!

Documentation

- Main Website – Learn about MemMachine

- Docs & API Reference – Full documentation

- Quick Start Guide – Get started in minutes

Community & Support

- Discord: Join our community for support, updates, and discussions: https://discord.gg/usydANvKqD

- Issues & Feature Requests: Use GitHub Issues

Contributing

We welcome contributions! Please see our CONTRIBUTING.md for guidelines.

License

MemMachine is released under the Apache 2.0 License.

File details

Details for the file memmachine_server-0.3.4.tar.gz.

File metadata

- Download URL: memmachine_server-0.3.4.tar.gz

- Upload date:

- Size: 432.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d024a67aba7adb24d144f3079d85d051a98c98e577665a20f80193e94b1bad59 |
| MD5 | 2d83a4b49d966f5cd432080e07f7ddf0 |
| BLAKE2b-256 | 2e6fea992c8a90f75496a4eb93ecd607af3a86fd82f41f26dd35042d1be137d9 |
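A downloaded archive can be checked against the SHA256 digest listed above. The sketch below uses only the Python standard library; the local path `memmachine_server-0.3.4.tar.gz` is an assumed download location, and `sha256_of` and `matches_expected` are illustrative helper names.

```python
import hashlib
from pathlib import Path

# SHA256 digest listed above for memmachine_server-0.3.4.tar.gz.
EXPECTED_SHA256 = "d024a67aba7adb24d144f3079d85d051a98c98e577665a20f80193e94b1bad59"


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large archives are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path: Path, expected: str = EXPECTED_SHA256) -> bool:
    """Compare the file's digest with the expected hex digest, case-insensitively."""
    return sha256_of(path) == expected.lower()


if __name__ == "__main__":
    archive = Path("memmachine_server-0.3.4.tar.gz")  # assumed download location
    if archive.exists():
        print("OK" if matches_expected(archive) else "MISMATCH")
    else:
        print(f"{archive} not found; download it first")
```

Installers such as pip can also enforce hashes from a requirements file, which avoids a manual check.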

Provenance

The following attestation bundles were made for memmachine_server-0.3.4.tar.gz:

Publisher: pypi-publish.yml on MemMachine/MemMachine

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: memmachine_server-0.3.4.tar.gz

- Subject digest: d024a67aba7adb24d144f3079d85d051a98c98e577665a20f80193e94b1bad59

- Sigstore transparency entry: 1288684903

- Sigstore integration time:

- Permalink: MemMachine/MemMachine@9741826587850fa287f3503a26a726721b10ce78

- Branch / Tag: refs/tags/v0.3.4

- Owner: https://github.com/MemMachine

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: pypi-publish.yml@9741826587850fa287f3503a26a726721b10ce78

- Trigger Event: release

File details

Details for the file memmachine_server-0.3.4-py3-none-any.whl.

File metadata

- Download URL: memmachine_server-0.3.4-py3-none-any.whl

- Upload date:

- Size: 347.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4b34a2e79035ae5325573799e79dcb9e999ae94306c3c46030b16efed5211b2e |
| MD5 | cad14a0de5bb56059bec6c98f9c2f7ce |
| BLAKE2b-256 | 0c946fe4e57234b3e2fdb8913c440683924271982b26f236e69b8324387612e0 |

Provenance

The following attestation bundles were made for memmachine_server-0.3.4-py3-none-any.whl:

Publisher: pypi-publish.yml on MemMachine/MemMachine

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: memmachine_server-0.3.4-py3-none-any.whl

- Subject digest: 4b34a2e79035ae5325573799e79dcb9e999ae94306c3c46030b16efed5211b2e

- Sigstore transparency entry: 1288685055

- Sigstore integration time:

- Permalink: MemMachine/MemMachine@9741826587850fa287f3503a26a726721b10ce78

- Branch / Tag: refs/tags/v0.3.4

- Owner: https://github.com/MemMachine

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: pypi-publish.yml@9741826587850fa287f3503a26a726721b10ce78

- Trigger Event: release