Preflight checks for LLM prototypes.

Project description

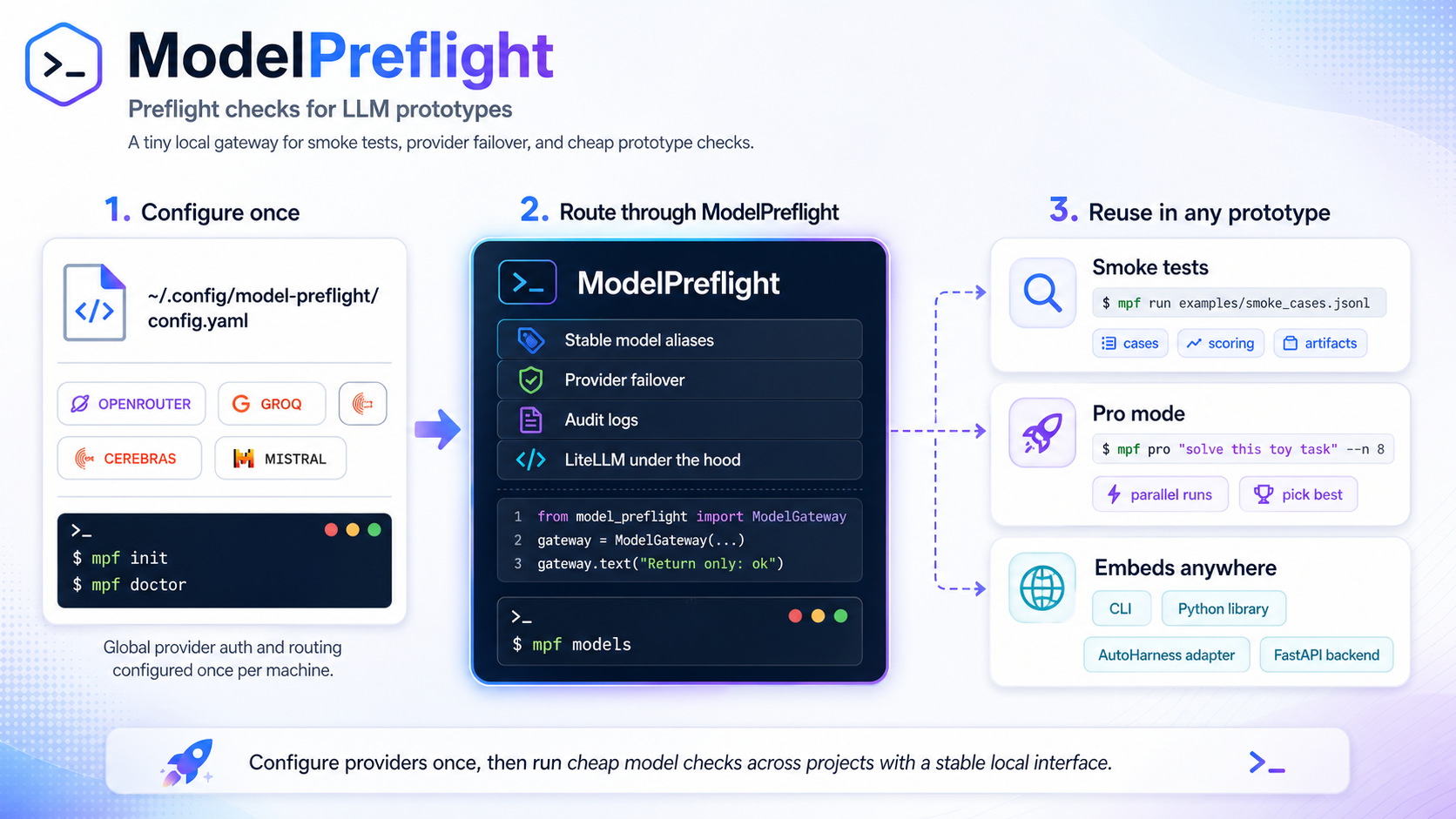

ModelPreflight

ModelPreflight

Find out which cheap or free-ish LLM endpoints can carry your prototype before you wire them into your app.

ModelPreflight turns scattered provider keys into stable local groups like free_reasoning

and free_fast, then lets you smoke-test prompts, fan out one-off questions, and fail over

between providers without hard-coding model IDs everywhere.

| If you want to... | Start here |

|---|---|

| Ask one model through a configured route | Ask your first prompt |

| See the free/dev endpoint menu | Free endpoint map |

| Compare several cheap samples before deciding | Fan out with Pro Mode |

| Test the CLI without provider keys | No-key demo path |

| Run project smoke cases | Smoke tests |

| Hand setup to a coding agent | Agent operations |

| Compare adjacent tools | ModelPreflight vs LiteLLM vs promptfoo vs Langfuse |

| Use it from code or agent hooks | Library usage and adapters |

ModelPreflight keeps provider setup machine-local and smoke cases project-local. It is not a hosted gateway, model leaderboard, or pricing oracle. It is the fast local preflight layer between "I found a promising free/dev endpoint" and "this provider is now wired into my product."

Ask your first prompt

Install the CLI and ask one routed model call:

uv tool install model-preflight

export OPENROUTER_API_KEY=...

mpf init --provider openrouter

mpf ask "In one sentence, explain why checking an LLM route before wiring it into an app saves time."

Expected shape:

Checking the route first catches missing keys, model drift, and provider failures before they break

the prototype path that depends on them.

If a key, provider, or route is missing, mpf ask prints a direct error with the missing env var or

route name. Use mpf doctor --live when you want a fuller diagnostics table:

mpf doctor --live

No provider key yet? Use the local echo preset to verify install, config loading, and project smoke test wiring without contacting an external API:

mpf init --preset minimal

mpf demo

The minimal preset is intentionally boring: one offline echo deployment that returns predictable

text. It proves the CLI and local files work; it does not prove provider auth, quota, latency, or

model quality.

Want an agent to initialize this repo? Paste this into Codex, Claude Code, Copilot, Gemini CLI, or Cursor while you keep reading:

Initialize ModelPreflight in this repository. Use the no-key minimal preset first, keep provider

secrets out of the repo, run `mpf demo`, then add project-local smoke cases if this repo has LLM

prompts or provider calls. Use this public workflow if you need details:

https://github.com/pylit-ai/model-preflight/blob/main/docs/agent-operations.md

Free endpoint map

The high-value path is simple: collect provider keys once, let ModelPreflight group them, then

ask free_reasoning or free_fast instead of memorizing every provider's model slug and quota

page.

Provider notes last reviewed: 2026-04-30. Catalogs, free tiers, quotas, and model IDs can change

without a ModelPreflight release; use each provider console plus mpf doctor --live as the current

truth for your account.

| Provider | What it gives a prototype | Default group | Key env var | Setup |

|---|---|---|---|---|

| OpenRouter | Lowest-friction first run; one API key can route to free-tagged and paid models | free_reasoning |

OPENROUTER_API_KEY |

Auth docs |

| NVIDIA Build / NIM | High-capability open/open-weight hosted endpoints while the current dev access fits | free_reasoning |

NVIDIA_NIM_API_KEY |

API keys |

| Groq | Very fast repeated calls for fanout and smoke checks when free-plan limits fit | free_fast |

GROQ_API_KEY |

Console keys |

| Cerebras | Fast inference experiments for short prototype loops | free_fast |

CEREBRAS_API_KEY |

Inference docs |

| Mistral | First-party checks against Mistral model families | free_reasoning |

MISTRAL_API_KEY |

Account setup |

The bundled presets are intentionally conservative starter data, not a claim that a provider will

remain free, available, or quota-identical for every account. Provider catalogs, free tiers, and

rate limits move; mpf doctor --live is the truth test for your machine today.

Secondary routes worth adding once the first pool works: Google Gemini/Gemma, Cloudflare Workers AI,

GitHub Models, Hugging Face Inference Providers, and SambaNova. See

docs/PROVIDER_PRESETS.md for the broader preset notes.

Fan out with Pro Mode

Use ask for one routed call and pro when a prompt is worth sampling several times before a

judge pass:

mpf ask "Write a shell-safe tagline for this LLM prototype."

Self-Consistency Improves Chain of Thought Reasoning in Language Models from Google Research / ICLR 2023 shows the broader value of sampling multiple reasoning paths instead of trusting one greedy answer; ModelPreflight applies a practical version of that idea to prototype prompts and provider routes.

PROMPT=$(cat <<'PROMPT'

Write the strongest short pitch for ModelPreflight Pro Mode: explain why fanout

across cheap or free endpoints plus a judge pass is better than trusting one

brittle LLM call for a prototype decision. Include one caveat.

PROMPT

)

mpf pro "$PROMPT" \

-n 8

-n 8 means "sample 8 candidate answers before the judge pass." Start lower when testing paid

routes; fanout multiplies provider calls.

The console prints only the final answer by default. Add --artifact when you want the prompt,

routes, candidate responses, candidate errors, group winners, and final judge output saved for

inspection:

mpf pro "Compare three JSON schema strategies for this extraction task" \

-n 4 \

--artifact .model-preflight/artifacts/schema-pro.json

For repeatable project checks, write JSONL smoke cases once and run:

mpf init-project

mpf run

mpf demo proves the configured route works. mpf ask is for a single one-off prompt. mpf pro

is for fanout plus synthesis. mpf run is for project-owned smoke files that should keep passing

as prompts, providers, and model slugs drift.

For the snappiest CLI startup, install once with uv tool install model-preflight or

pipx install model-preflight, then run mpf ... directly. uv run mpf ... may print package

sync messages before ModelPreflight starts.

Why this repo exists

Why this repo exists

Early LLM prototypes often need a quick answer to a practical question: "Can this prompt, model group, or provider route work well enough to keep building?"

ModelPreflight gives you a lightweight layer for that stage:

- one global config for provider credentials and routing

- project-local JSONL smoke cases

- stable aliases such as

free_reasoningandfree_fast - best-effort failover through LiteLLM

- audit records for live calls

When to use it

When to use it

Use ModelPreflight when:

- a prototype needs cheap LLM smoke checks before deeper eval work

- several projects should share the same local provider setup

- you want logical groups instead of hard-coding provider/model IDs everywhere

- provider quotas, model slugs, or dev-tier availability may drift

- you need enough provenance to debug "which model answered this?"

What it is not

What it is not

ModelPreflight is not:

- a model leaderboard

- a formal benchmark framework

- a hosted inference gateway

- a provider catalog authority

- proof that an endpoint is free, fast, or available today

Bundled provider presets are starter data. Check each provider's current catalog and terms before relying on a route.

60-second start

uvx model-preflight --help

# In a persistent tool or project environment:

uv tool install model-preflight

# or:

pipx install model-preflight

Set one supported provider key, initialize, and run one live check:

export OPENROUTER_API_KEY=...

# or: export NVIDIA_NIM_API_KEY=...

# or: export GROQ_API_KEY=...

# or: export CEREBRAS_API_KEY=...

# or: export MISTRAL_API_KEY=...

mpf init

mpf doctor --live

mpf demo

Expected signal:

mpf initwrites your machine-local config for the first visible provider key. If no supported key is visible, it writes the OpenRouter starter config and tells you to exportOPENROUTER_API_KEY.mpf doctor --liveprints a deployments table, thenlive check ok: group=....mpf demoprints JSON with"passed": trueand an empty"failures": []list.

Add checks to a project:

cd my-project

mpf init-project

mpf run

Expected signal:

mpf init-projectwritesevals/smoke.jsonl, writes.model-preflight/README.md, and updates.gitignore.mpf runprints JSON results for the starter cases. Every passing case has"passed": true.- A failing case exits non-zero and includes strings under

"failures"so you know what drifted.

Both mpf and model-preflight are installed as console scripts.

ModelPreflight catches missing keys, broken provider routes, prompt formatting regressions, output-shape drift, accidental model/provider changes, and "this worked yesterday" prototype failures before you wire the LLM call into something larger.

No-key demo path

Use the minimal offline preset when you want to test the CLI and project workflow without a provider account:

mpf init --preset minimal

mpf doctor --live

mpf demo

mpf init-project

mpf run

What this proves:

- Config loading works without secrets.

- The CLI can run a live-style check through the offline echo provider.

- Project bootstrap works by creating

evals/smoke.jsonl. - Smoke scoring works when

mpf runreturns JSON where every case has"passed": true.

What it does not prove: remote provider auth, quota, latency, or model quality. Use the OpenRouter path below for that.

Install options

Install options

PyPI or isolated tool install

uv tool install model-preflight

# or:

pipx install model-preflight

mpf --help

Project dependency

uv add --dev model-preflight

# or:

pip install model-preflight

Editable checkout

git clone https://github.com/pylit-ai/model-preflight.git

cd model-preflight

uv pip install -e .

# or from another repo:

uv add --dev --editable /absolute/path/to/model-preflight

ModelPreflight requires Python 3.11+.

Configuration and secrets

ModelPreflight keeps provider routing in your OS-specific user config directory and smoke cases in each project. Print the exact paths with:

mpf paths

Provider keys stay in environment variables or a private linked dotenv file:

mpf setup --env-file /path/to/private/.env

mpf doctor --group free_reasoning --json

Use docs/configuration.md for provider-selection order, custom config

paths, JSON diagnostics, the YAML shape, and preset discipline.

Smoke tests

Smoke cases are JSONL files owned by the project that is doing the prototype work.

{"id":"basic-ok","prompt":"Return only: ok","expected_substrings":["ok"]}

{"id":"avoid-word","prompt":"Answer yes without using the word nope","forbidden_substrings":["nope"]}

Run them with:

mpf run

# or:

mpf run path/to/smoke_cases.jsonl

mpf run prints JSON results and exits non-zero if any case fails.

Case fields

Each smoke case supports:

id: stable case identifierprompt: user prompt sent to the configured model groupgroup: optional model group overrideexpected_substrings: strings that must appear in the answerforbidden_substrings: strings that must not appear in the answer

These checks are intentionally simple. They are meant to catch obvious routing, prompt, and regression problems before you spend time on heavier evals.

Ask

mpf ask sends one prompt through one configured model group and prints the model text to stdout.

Plain text streams as tokens arrive. Progress and route metadata go to stderr by default, so stdout

stays clean for pipes and command substitution. In an interactive terminal, stderr status lines are

styled and separated from the answer by a blank line. Use --quiet to suppress all stderr status

lines, or --hide-route to hide provider/model route metadata while keeping progress visible. JSON

output is buffered so it remains valid JSON and includes route metadata unless --hide-route is set.

PROMPT="Write a poem about how ModelPreflight is the easiest way to use free LLM endpoints."

mpf ask "$PROMPT"

mpf ask "Write a shell-safe tagline" --quiet

mpf ask "Which model route is this using?"

mpf ask "Keep route metadata hidden, but show progress" --hide-route

mpf ask "Summarize why free endpoint preflight matters" --no-stream

mpf ask "Return JSON only: {\"ok\": true}" --group free_reasoning --json

Use ask for quick manual checks, demos, and shell snippets. Use run when the same prompt should

become a repeatable smoke case.

Pro Mode

mpf pro fans out a one-off prompt, then synthesizes a final answer through a judge group.

By default, stdout contains only the final synthesized answer. Diagnostics go to stderr. Use

--json to print the full candidate payload, or --artifact to save it while keeping the console

focused on the final answer.

PROMPT=$(cat <<'PROMPT'

Use fanout plus synthesis to choose a robust JSON schema strategy for extracting

renewal terms from messy SaaS contracts. Return the final schema, validation

rules, and the main failure mode.

PROMPT

)

mpf pro "$PROMPT" \

-n 8

Defaults:

| Option | Default | Role |

|---|---|---|

--n, -n |

8 |

number of sampled answers |

--sample-group |

configured default group | fanout group |

--judge-group |

configured default group | synthesis group |

--artifact path/to/pro.json |

unset | write prompt, routes, candidates, group winners, and final answer to a JSON artifact |

--json |

false |

print full candidate payload to stdout instead of only the final answer |

mpf pro prints route/progress diagnostics to stderr before the fanout starts:

[mpf] pro fanout n=2 sample_group=free_reasoning judge_group=free_reasoning

[mpf] sample nvidia: nvidia_nim/nvidia/nemotron-3-super-120b-a12b

[mpf] sample openrouter: openrouter/nvidia/nemotron-3-super-120b-a12b:free

[mpf] pro candidates ok=2/2

For post-run inspection:

mpf pro "Compare three prompt strategies for this extraction task" \

-n 4 \

--artifact .model-preflight/artifacts/pro-run.json

If every sample fails or returns empty text, the CLI exits non-zero and prints the first candidate

errors instead of showing a Python traceback. The full candidate list is still available through

--artifact.

Cost and quota note

Cost and quota note

Fanout multiplies live provider calls. Keep --n low while testing, use restricted provider keys where available, and review provider dashboards when running against paid endpoints.

ModelPreflight records audit rows for live calls, but it does not enforce provider billing limits beyond your configured routing and provider-side controls.

Library usage and adapters

Most users should start with the CLI. Use the Python API when a Python project wants to reuse the same machine-local config and routing directly:

from model_preflight import ModelGateway, load_config, pro_mode

config = load_config()

gateway = ModelGateway(config)

print(gateway.text("Return only: ok", group="free_reasoning"))

result = pro_mode(

gateway,

"Solve this toy puzzle",

n=8,

sample_group=config.router.default_group,

judge_group=config.router.default_group,

)

print(result["final"])

The library API is intentionally thin:

load_config()reads the same machine-local config as the CLIModelGatewaywraps LiteLLM Router with stable group aliases and audit loggingpro_mode()runs fanout plus synthesis for one-off prototype prompts

There is no TypeScript SDK yet. JavaScript and TypeScript projects can call the CLI directly or use

the bridge in examples/node_hook_example.mjs. A dedicated

TypeScript package is probably only worth adding after real users need typed in-process APIs rather

than shellable mpf commands.

Audit artifacts

By default, ModelPreflight writes audit logs under:

~/.cache/model-preflight/artifacts/audit.jsonl

Each live call should be traceable enough to debug provider drift:

- timestamp

- logical group

- resolved provider/model when returned by the provider

- prompt or case metadata

- latency

- token usage when available

- response id when available

See docs/EVAL_PROVENANCE.md for provenance expectations.

Repo adapters

| Path | Purpose |

|---|---|

examples/autoharness_provider.py |

Drop-in provider wrapper for AutoHarness-style experiments |

examples/gpt_pro_mode_refactor.py |

Example refactor from single-provider Pro Mode to shared routing |

examples/node_hook_example.mjs |

CLI bridge for JS or agent-hook projects |

skills/model-preflight/SKILL.md |

Optional coding-agent skill for consistent usage |

Command reference

mpf init --provider openrouter

mpf doctor --live

mpf demo

mpf ask "write a tiny launch blurb for ModelPreflight"

mpf init-project

mpf run

mpf providers list

mpf providers guide openrouter

mpf models

mpf pro "solve this toy task" \

-n 4 \

--artifact .model-preflight/artifacts/pro-run.json

Contributor workflow

uv sync

uv run pytest

uv run ruff check .

uv run mypy src

Package metadata lives in pyproject.toml. Tests live under tests/.

Agent operations

ModelPreflight ships agent-ready setup and operations prompts in

docs/agent-operations.md. Use them when you want Codex, Claude

Code, Copilot, Gemini CLI, Cursor, or another coding agent to add preflight checks to a target repo

without pasting the whole README into context.

Agent entrypoints:

| Artifact | Use |

|---|---|

docs/agent-operations.md |

copy-paste prompts and tool-specific placement |

docs/agent-specs/setup-model-preflight.md |

self-contained setup spec |

docs/agent-specs/provider-drift-check.md |

provider drift diagnostic spec |

skills/model-preflight/SKILL.md |

optional agent skill for runtimes that support skills |

Before changing provider routes, README examples, or release-surface docs in this repo, run:

uv sync

uv run pytest tests/test_readme_quality.py

uv run pytest

uv run ruff check .

uv run mypy src

README verification should pass before release-oriented README edits land. The focused README check asserts that the first useful prompt stays above the endpoint map, media URLs remain package-registry safe, provider claims include a review date, and agent-facing commands stay discoverable.

Stable public paths:

| Path | Use |

|---|---|

README.md |

landing page, quickstart, and navigation hub |

docs/quickstart.md |

deeper first-run walkthrough |

docs/configuration.md |

provider routing, secrets, and config shape |

docs/troubleshooting.md |

recovery steps after failed checks |

docs/PROVIDER_PRESETS.md |

provider preset notes and drift warnings |

docs/release-verification.md |

release and package-rendering checks |

Do not copy private specs, non-public provider/account names, internal URLs, or private agent protocol files into this public repository. Use environment variables or user-local config for secrets.

Design principles

- Global provider routing lives in the path printed by

mpf paths. - Project-local checks define cases, scoring, fixtures, and artifacts.

- LiteLLM handles provider-specific API quirks.

- ModelPreflight adds stable aliases, lightweight failover, and audit logs.

- Deterministic tests should run before live provider checks.

For the product scope and non-goals, see docs/NORTHSTAR.md.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file model_preflight-0.1.16.tar.gz.

File metadata

- Download URL: model_preflight-0.1.16.tar.gz

- Upload date:

- Size: 1.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: uv/0.11.8 {"installer":{"name":"uv","version":"0.11.8","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true}

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

a3916ce6fd7b5db5d27c0145207542a31aa393ad6ef60e4b4453312bb53dc5f5

|

|

| MD5 |

6ec427cbc8f1e986626cee8bb55e15bb

|

|

| BLAKE2b-256 |

efb53ab925b320e7c82b0ec625e88b5201a878e70ecedb7280199d269afcf256

|

File details

Details for the file model_preflight-0.1.16-py3-none-any.whl.

File metadata

- Download URL: model_preflight-0.1.16-py3-none-any.whl

- Upload date:

- Size: 27.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: uv/0.11.8 {"installer":{"name":"uv","version":"0.11.8","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true}

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

4a84a33e7266aef8836ee33704e6b9b766eb191716ea53724549c12d920bcfa4

|

|

| MD5 |

65c685e504e55d20d76e2d1e9533cd0b

|

|

| BLAKE2b-256 |

9f64c7b69fb6b7ec929536a213c7c226eef628673a0513083ed9740a036987b8

|