A FastAPI-based load balancer for vLLM servers with OpenAI-compatible API


vLLM Router

Intelligent load balancer for distributed vLLM server clusters

What problem does it solve?

When you have multiple GPU servers running vLLM, you face:

- Fragmented Resources: GPUs on separate servers cannot be managed as a single pool

- Unbalanced Load: Some servers are overloaded while others are idle

- Poor Availability: A single server failure degrades the whole service

vLLM Router provides a single entry point that distributes requests across servers based on their current load and health.

Key Advantages

🎯 Intelligent Load Balancing

- Real-time Monitoring: Direct metrics from vLLM /metrics endpoints

- Smart Algorithm: (running + waiting * 0.5) / capacity

- Priority Selection: Prefers servers with load < 50%

- Zero Queue: Direct forwarding without intermediate queues
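The scoring rule above can be sketched in a few lines of Python. This is a minimal illustration, not the router's actual code: the `ServerLoad` class, the `pick_server` function, and the choice to take the lowest score among the preferred servers are assumptions made for the example. Only the formula and the 50% threshold come from the list above.

```python
from dataclasses import dataclass


@dataclass
class ServerLoad:
    """Snapshot of one backend; the field names are illustrative."""
    url: str
    running: int   # requests currently being processed
    waiting: int   # requests queued on the vLLM server
    capacity: int  # max_concurrent_requests from servers.toml


def load_score(s: ServerLoad) -> float:
    """(running + waiting * 0.5) / capacity, as stated above."""
    return (s.running + s.waiting * 0.5) / s.capacity


def pick_server(servers: list[ServerLoad], threshold: float = 0.5) -> ServerLoad:
    """Prefer servers below the threshold; otherwise use the least loaded one."""
    if not servers:
        raise ValueError("no servers available")
    preferred = [s for s in servers if load_score(s) < threshold]
    # Assumption: among the candidates, the lowest score wins.
    return min(preferred or servers, key=load_score)


servers = [
    ServerLoad("http://gpu-server-1:8081", running=2, waiting=2, capacity=3),
    ServerLoad("http://gpu-server-2:8088", running=1, waiting=2, capacity=5),
]
best = pick_server(servers)
print(best.url)
```

Waiting requests count half as much as running ones, so a server with a short queue but free capacity can still score lower than a server that is fully busy.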

🔄 High Availability

- Automatic Failover: Detects and removes unhealthy servers

- Smart Retry: Automatically retries failed requests on other servers

- Hot Reload: Configuration changes without service restart
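A retry that moves on to another server might look roughly like the sketch below. The function name `send_with_failover`, the `send` callback, and the reading of `max_retries` as "retries after the first attempt" are all assumptions for illustration; the router's real retry logic may differ.

```python
from typing import Callable, Sequence


def send_with_failover(
    servers: Sequence[str],
    send: Callable[[str], str],
    max_retries: int = 3,
) -> str:
    """Try the request on successive servers until one succeeds."""
    last_error = None
    # Assumption: one initial attempt plus up to max_retries retries,
    # each on a different server from the (already ordered) list.
    for url in servers[: max_retries + 1]:
        try:
            return send(url)
        except ConnectionError as exc:
            last_error = exc  # remember the failure and try the next server
    raise RuntimeError("all attempts failed") from last_error


def fake_send(url: str) -> str:
    """Stand-in for an HTTP call: the first server is 'down'."""
    if url.endswith("8081"):
        raise ConnectionError("server unreachable")
    return f"ok from {url}"


result = send_with_failover(
    ["http://gpu-server-1:8081", "http://gpu-server-2:8088"], fake_send
)
print(result)
```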

Quick Start

Installation

git clone https://github.com/xerrors/mvllm.git

cd mvllm

pip install -e .

mvllm run

Configuration

Create server configuration file:

cp servers.example.toml servers.toml

Edit servers.toml:

[servers]

servers = [

{ url = "http://gpu-server-1:8081", max_concurrent_requests = 3 },

{ url = "http://gpu-server-2:8088", max_concurrent_requests = 5 },

{ url = "http://gpu-server-3:8089", max_concurrent_requests = 4 },

]

[config]

health_check_interval = 10

request_timeout = 120

max_retries = 3

Running

# Production mode (fullscreen monitoring)

mvllm run

# Development mode (console logging)

mvllm run --console

# Custom port

mvllm run --port 8888

Usage Examples

Chat Completions

curl -X POST http://localhost:8888/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "llama3.1:8b",
    "messages": [
      {"role": "user", "content": "Hello, please introduce yourself"}
    ]
  }'
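The same request can be made from Python with only the standard library. This is a sketch under the assumption that the router listens on localhost:8888 as in the curl example; `build_chat_request` and `send_chat` are hypothetical helper names, and the response is returned as raw JSON because its exact shape depends on the backend.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST to /v1/chat/completions."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat(request: urllib.request.Request, timeout: float = 120.0) -> dict:
    """Send the request and decode the JSON body; needs a running router."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


request = build_chat_request(
    "http://localhost:8888", "llama3.1:8b", "Hello, please introduce yourself"
)
# reply = send_chat(request)  # uncomment once the router is running
```

Because the API is OpenAI-compatible, an OpenAI client library pointed at the router's base URL should also work, though that is not shown here.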

Check Load Status

curl http://localhost:8888/health

curl http://localhost:8888/load-stats

API Endpoints

- POST /v1/chat/completions: Chat completions

- POST /v1/completions: Text completions

- GET /v1/models: Model listing

- GET /health: Health status

- GET /load-stats: Load statistics

Deployment

Docker

docker build -t mvllm .

docker run -d -p 8888:8888 -v $(pwd)/servers.toml:/app/servers.toml mvllm

Docker Compose

version: '3.8'
services:
  mvllm:
    build: .
    ports:
      - "8888:8888"
    volumes:
      - ./servers.toml:/app/servers.toml

Configuration Reference

Server Configuration

- url: vLLM server address

- max_concurrent_requests: Maximum concurrent requests

Global Configuration

- health_check_interval: Health check interval (seconds)

- request_timeout: Request timeout (seconds)

- max_retries: Maximum retry attempts

Monitoring

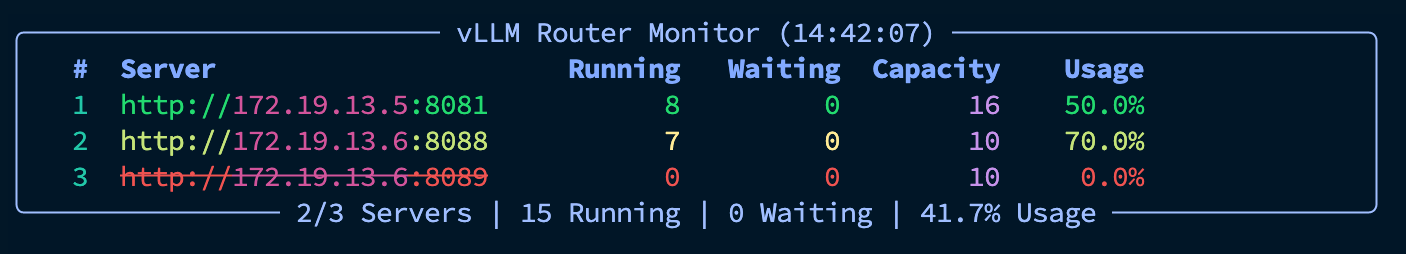

- Real-time Load Monitoring: Shows running and waiting requests per server

- Health Status: Real-time server availability monitoring

- Resource Utilization: GPU cache usage and other metrics
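The figures listed above come from each vLLM server's /metrics endpoint, which serves Prometheus text format. A minimal parser might look like the sketch below. The metric names (`vllm:num_requests_running`, `vllm:num_requests_waiting`, `vllm:gpu_cache_usage_perc`) are assumed from vLLM's Prometheus output and can differ between vLLM versions; the sample text and the `parse_metrics` helper are made up for the example.

```python
import re

# Matches "name{labels} value" or "name value" in Prometheus text format.
# Simplification: label values containing "}" are not handled.
LINE = re.compile(r"^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{[^}]*\})?\s+(\S+)")

SAMPLE = """\
# HELP vllm:num_requests_running Number of requests currently running.
# TYPE vllm:num_requests_running gauge
vllm:num_requests_running{model_name="llama3.1:8b"} 2.0
vllm:num_requests_waiting{model_name="llama3.1:8b"} 1.0
vllm:gpu_cache_usage_perc{model_name="llama3.1:8b"} 0.25
"""

WANTED = {
    "vllm:num_requests_running",
    "vllm:num_requests_waiting",
    "vllm:gpu_cache_usage_perc",
}


def parse_metrics(text: str, wanted: set[str]) -> dict[str, float]:
    """Extract the wanted metrics, summing values across label sets."""
    values: dict[str, float] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = LINE.match(line)
        if match and match.group(1) in wanted:
            name = match.group(1)
            values[name] = values.get(name, 0.0) + float(match.group(2))
    return values


metrics = parse_metrics(SAMPLE, WANTED)
print(metrics)
```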

Chinese Version

For Chinese documentation, see README.zh.md

License

MIT License


File details

Details for the file mvllm-0.1.0.tar.gz.

File metadata

- Download URL: mvllm-0.1.0.tar.gz

- Upload date:

- Size: 108.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.6.17

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | deaa66dc44b8db39e7b33d79cd106cd00af7792cc2f0491fa5acb98be7f1b96e |
| MD5 | 9b3700b40b56dbfd1de35f6f8b590b2e |
| BLAKE2b-256 | be91e8554498c021b84d453e1322b75013d6b8a616da637ae66b1cb97d589792 |

File details

Details for the file mvllm-0.1.0-py3-none-any.whl.

File metadata

- Download URL: mvllm-0.1.0-py3-none-any.whl

- Upload date:

- Size: 23.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.6.17

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a8fb247902541e2cddb191b568358996887c55a338b3d8aae6ce474ec67662fc |
| MD5 | 0225162065e3caca094d7bdbd87e28f4 |
| BLAKE2b-256 | c73da3d7ba919778089c3cca413a81041a95e09ec8cf374c10c7b9ba6fdf37a8 |