A domain-specific language and debugger for neural networks


Neural: A Neural Network Programming Language

Neural is a domain-specific language (DSL) designed for defining, training, debugging, and deploying neural networks. With declarative syntax, cross-framework support, and built-in execution tracing (NeuralDbg), it simplifies deep learning development.

Example: Auto-generated architecture diagram and shape propagation report

🚀 Features

- YAML-like Syntax: Define models intuitively without framework boilerplate.

- Shape Propagation: Catch dimension mismatches before runtime.

- Multi-Backend Export: Generate code for TensorFlow, PyTorch, or ONNX.

- Training Orchestration: Configure optimizers, schedulers, and metrics in one place.

- Visual Debugging: Render interactive 3D architecture diagrams.

- Extensible: Add custom layers/losses via Python plugins.

🛠 NeuralDbg: Built-in Neural Network Debugger

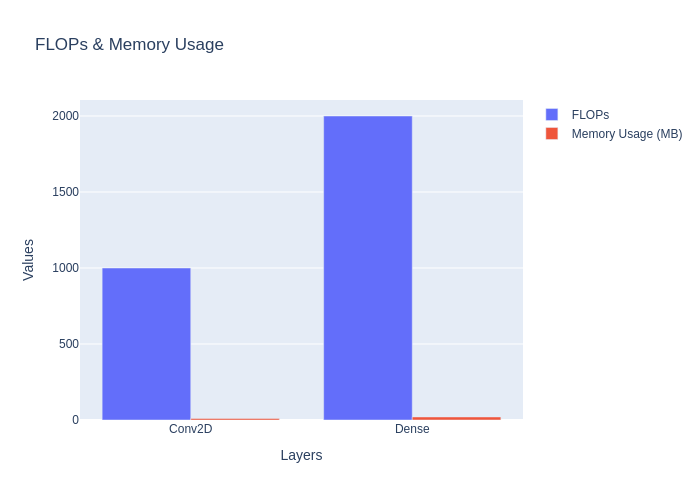

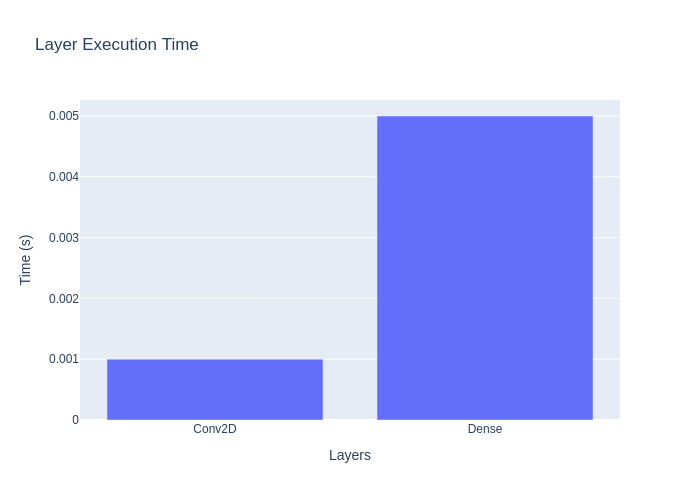

NeuralDbg provides real-time execution tracing, profiling, and debugging, allowing you to visualize and analyze deep learning models in action.

✅ Real-Time Execution Monitoring – Track activations, gradients, memory usage, and FLOPs.

✅ Shape Propagation Debugging – Visualize tensor transformations at each layer.

✅ Gradient Flow Analysis – Detect vanishing & exploding gradients.

✅ Dead Neuron Detection – Identify inactive neurons in deep networks.

✅ Anomaly Detection – Spot NaNs, extreme activations, and weight explosions.

✅ Step Debugging Mode – Pause execution and inspect tensors manually.

📦 Installation

```bash
# Clone the repository
git clone https://github.com/yourusername/neural.git
cd neural

# Create a virtual environment (recommended)
python -m venv venv

# Activate it (run the line for your platform)
source venv/bin/activate   # Linux/macOS
venv\Scripts\activate      # Windows

# Install dependencies
pip install -r requirements.txt
```

Prerequisites: Python 3.8+, pip

🛠️ Quick Start

1. Define a Model

Create mnist.neural:

```
network MNISTClassifier {
  input: (28, 28, 1)  # Channels-last format
  layers:
    Conv2D(filters=32, kernel_size=(3,3), activation="relu")
    MaxPooling2D(pool_size=(2,2))
    Flatten()
    Dense(units=128, activation="relu")
    Dropout(rate=0.5)
    Output(units=10, activation="softmax")

  loss: "sparse_categorical_crossentropy"
  optimizer: Adam(learning_rate=0.001)
  metrics: ["accuracy"]

  train {
    epochs: 15
    batch_size: 64
    validation_split: 0.2
  }
}
```
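To see what a shape propagation report contains for this model, here is a minimal hand-written sketch. It is not Neural's implementation: the `propagate` function and the `(type, params)` layer format are hypothetical, and it assumes Keras-style defaults (stride 1 and `valid` padding for Conv2D, stride equal to `pool_size` for MaxPooling2D). It prints each layer's output shape and parameter count, and raises an error when a Dense layer receives an unflattened tensor, which is the kind of mismatch shape propagation is meant to catch before training starts.

```python
import math
from typing import Any, Dict, List, Tuple

Shape = Tuple[int, ...]
Layer = Tuple[str, Dict[str, Any]]

# The layers of mnist.neural, rewritten as (type, params) pairs for this sketch.
MNIST_LAYERS: List[Layer] = [
    ("Conv2D", {"filters": 32, "kernel_size": (3, 3)}),
    ("MaxPooling2D", {"pool_size": (2, 2)}),
    ("Flatten", {}),
    ("Dense", {"units": 128}),
    ("Dropout", {"rate": 0.5}),
    ("Output", {"units": 10}),
]


def propagate(input_shape: Shape, layers: List[Layer]) -> List[Tuple[str, Shape, int]]:
    """Return (layer type, output shape, parameter count) for every layer.

    Assumes channels-last input, stride 1 and 'valid' padding for Conv2D,
    and a stride equal to pool_size for MaxPooling2D (the Keras defaults).
    """
    shape = input_shape
    report = []
    for kind, p in layers:
        params = 0
        if kind == "Conv2D":
            if len(shape) != 3:
                raise ValueError(f"Conv2D expects (H, W, C) input, got {shape}")
            (h, w, c), (kh, kw) = shape, p["kernel_size"]
            if kh > h or kw > w:
                raise ValueError(f"kernel {(kh, kw)} is larger than input {shape}")
            shape = (h - kh + 1, w - kw + 1, p["filters"])
            params = kh * kw * c * p["filters"] + p["filters"]  # weights + biases
        elif kind == "MaxPooling2D":
            (h, w, c), (ph, pw) = shape, p["pool_size"]
            shape = (h // ph, w // pw, c)
        elif kind == "Flatten":
            shape = (math.prod(shape),)
        elif kind in ("Dense", "Output"):
            if len(shape) != 1:
                raise ValueError(f"{kind} expects a flat input, got {shape}; add Flatten()")
            params = shape[0] * p["units"] + p["units"]  # weights + biases
            shape = (p["units"],)
        elif kind != "Dropout":  # Dropout leaves the shape unchanged
            raise ValueError(f"unknown layer type: {kind}")
        report.append((kind, shape, params))
    return report


if __name__ == "__main__":
    for kind, shape, params in propagate((28, 28, 1), MNIST_LAYERS):
        print(f"{kind:<14}{str(shape):<16}{params:>8,}")
```

Under these assumptions Flatten produces 13 × 13 × 32 = 5,408 features, so the first Dense layer alone holds 5,408 × 128 + 128 = 692,352 of the model's 693,962 parameters.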

2. Run or Compile the Model

```bash
neural run mnist.neural --backend tensorflow --output mnist_tf.py
# Or for PyTorch:
neural run mnist.neural --backend pytorch --output mnist_torch.py
```

To compile instead of running:

```bash
neural compile mnist.neural --backend tensorflow --output mnist_tf.py
# Or for PyTorch:
neural compile mnist.neural --backend pytorch --output mnist_torch.py
```

3. Visualize Architecture

```bash
neural visualize mnist.neural --format png
```

This creates architecture.png, shape_propagation.html, and tensor_flow.html, which let you inspect the network structure and how tensor shapes change from layer to layer.

4. Debug with NeuralDbg

```bash
neural debug mnist.neural
```

Open http://localhost:8050 in your browser to monitor execution traces, gradients, and anomalies interactively.

5. Use the No-Code Interface

```bash
neural --no_code
```

Open http://localhost:8051 in your browser to build and compile models through a graphical interface.

🛠 Debugging with NeuralDbg

🔹 1️⃣ Start Real-Time Execution Tracing

```bash
python neural.py debug mnist.neural
```

Features:

✅ Layer-wise execution trace

✅ Memory & FLOP profiling

✅ Live performance monitoring
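As a rough illustration of what FLOP profiling measures, the sketch below counts multiply-accumulate operations (MACs) for Conv2D and Dense layers from their shapes and reports FLOPs as 2 × MACs, a common convention that ignores bias additions and activations. The layer shapes are those of the MNIST example above under stride-1, `valid`-padding assumptions; the helper names are hypothetical and NeuralDbg's own counting method may differ.

```python
def conv2d_macs(out_h: int, out_w: int, out_c: int, k_h: int, k_w: int, in_c: int) -> int:
    """MACs for a Conv2D layer: one k_h * k_w * in_c dot product per output element."""
    return out_h * out_w * out_c * k_h * k_w * in_c


def dense_macs(in_features: int, out_features: int) -> int:
    """MACs for a Dense layer: one in_features dot product per output unit."""
    return in_features * out_features


def flops(macs: int) -> int:
    """Convert MACs to FLOPs, counting one multiply and one add per MAC."""
    return 2 * macs


# Per-sample costs for the MNIST example (shapes assumed, not measured).
layers = {
    "Conv2D": conv2d_macs(26, 26, 32, 3, 3, 1),
    "Dense": dense_macs(5408, 128),
    "Output": dense_macs(128, 10),
}

if __name__ == "__main__":
    total = 0
    for name, macs in layers.items():
        total += flops(macs)
        print(f"{name:<8}{flops(macs):>12,} FLOPs")
    print(f"{'Total':<8}{total:>12,} FLOPs per sample")
```

Per sample, the first Dense layer dominates (about 1.38 million of roughly 1.78 million FLOPs), even though the convolution touches every pixel.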

🔹 2️⃣ Analyze Gradient Flow

```bash
python neural.py debug --gradients mnist.neural
```

🚀 Detect vanishing/exploding gradients with interactive charts.
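Vanishing and exploding gradients are usually detected by comparing each layer's gradient norm against thresholds. The sketch below shows that idea without any framework; the thresholds (1e-6 and 1e3), the `classify_gradients` helper, and the input format (a dict of flattened per-layer gradients) are assumptions for illustration, not NeuralDbg's defaults.

```python
import math
from typing import Dict, List

# Assumed thresholds; real tools tune these per model.
VANISHING = 1e-6
EXPLODING = 1e3


def l2_norm(values: List[float]) -> float:
    """Euclidean norm of a flattened gradient tensor."""
    return math.sqrt(sum(v * v for v in values))


def classify_gradients(grads: Dict[str, List[float]]) -> Dict[str, str]:
    """Label each layer's gradient by its L2 norm."""
    labels = {}
    for layer, values in grads.items():
        norm = l2_norm(values)
        if not math.isfinite(norm):  # NaN/inf would slip past both comparisons
            labels[layer] = "non-finite"
        elif norm < VANISHING:
            labels[layer] = "vanishing"
        elif norm > EXPLODING:
            labels[layer] = "exploding"
        else:
            labels[layer] = "ok"
    return labels


if __name__ == "__main__":
    example = {
        "conv2d": [1e-9, -2e-9, 5e-10],  # tiny updates: likely vanishing
        "dense": [0.02, -0.01, 0.03],
        "output": [800.0, -900.0],       # huge updates: likely exploding
    }
    print(classify_gradients(example))
```

Checking the norm rather than individual values keeps the test cheap: one number per layer per step, which is also what a gradient-flow chart would plot.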

🔹 3️⃣ Identify Dead Neurons

```bash
python neural.py debug --dead-neurons mnist.neural
```

🛠 Find layers with inactive neurons (common in ReLU networks).
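A ReLU unit is commonly called dead when it outputs zero for every input, so no gradient flows back through it. A minimal check, assuming the layer's post-activation outputs are available as a batch × units list of lists (a format chosen for this sketch; `dead_units` and `dead_fraction` are hypothetical helpers):

```python
from typing import List


def dead_units(activations: List[List[float]], eps: float = 0.0) -> List[int]:
    """Return indices of units whose activation is <= eps for every sample in the batch."""
    if not activations:
        raise ValueError("empty batch")
    n_units = len(activations[0])
    return [j for j in range(n_units) if all(row[j] <= eps for row in activations)]


def dead_fraction(activations: List[List[float]], eps: float = 0.0) -> float:
    """Fraction of the layer's units that never activate on this batch."""
    return len(dead_units(activations, eps)) / len(activations[0])


if __name__ == "__main__":
    # 3 samples x 4 units of post-ReLU output; units 1 and 3 never fire.
    batch = [
        [0.7, 0.0, 1.2, 0.0],
        [0.0, 0.0, 0.4, 0.0],
        [2.1, 0.0, 0.0, 0.0],
    ]
    print(dead_units(batch), dead_fraction(batch))  # [1, 3] 0.5
```

A unit that is silent on one small batch may still fire on other inputs, so in practice the check is worth repeating over several batches before treating a unit as dead.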

🔹 4️⃣ Detect Training Anomalies

```bash
python neural.py debug --anomalies mnist.neural
```

🔥 Flag NaNs, weight explosions, and extreme activations.
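The checks behind this kind of anomaly detection can be illustrated in plain Python: scan a tensor's values for NaN or infinite entries and for magnitudes above a threshold. The 1e4 threshold, the `find_anomalies` helper, and the report format are assumptions for this sketch.

```python
import math
from typing import Dict, List


def find_anomalies(values: List[float], max_abs: float = 1e4) -> Dict[str, int]:
    """Count NaN, infinite, and extreme (|x| > max_abs) entries in a flattened tensor."""
    report = {"nan": 0, "inf": 0, "extreme": 0}
    for x in values:
        if math.isnan(x):
            report["nan"] += 1
        elif math.isinf(x):
            report["inf"] += 1
        elif abs(x) > max_abs:
            report["extreme"] += 1
    return report


def has_anomaly(values: List[float], max_abs: float = 1e4) -> bool:
    """True if any entry is NaN, infinite, or extreme."""
    return any(find_anomalies(values, max_abs).values())


if __name__ == "__main__":
    weights = [0.1, float("nan"), -3.0, float("inf"), 5e6]
    print(find_anomalies(weights))  # {'nan': 1, 'inf': 1, 'extreme': 1}
```

NaN is tested first because it compares false against every threshold and would otherwise go unreported.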

🔹 5️⃣ Step Debugging (Interactive Tensor Inspection)

```bash
python neural.py debug --step mnist.neural
```

🔍 Pause execution at any layer and inspect tensors manually.
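Step debugging can be modeled as running a forward pass one layer at a time and pausing after each one. In this sketch a Python generator plays the role of the debugger: each `next()` call executes a single layer and hands back its name and output for inspection. The toy layers and the `step_through` helper are illustrative stand-ins for real framework layers; none of this is NeuralDbg's API.

```python
from typing import Callable, Iterator, List, Tuple

Vector = List[float]
Layer = Tuple[str, Callable[[Vector], Vector]]


def relu(x: Vector) -> Vector:
    """Element-wise ReLU."""
    return [max(0.0, v) for v in x]


def scale(factor: float) -> Callable[[Vector], Vector]:
    """Toy 'linear' layer that multiplies every input by a constant."""
    return lambda x: [factor * v for v in x]


def step_through(layers: List[Layer], x: Vector) -> Iterator[Tuple[str, Vector]]:
    """Run the layers one by one, pausing (yielding) after each so the caller can inspect it."""
    for name, fn in layers:
        x = fn(x)
        yield name, x


if __name__ == "__main__":
    model = [("scale", scale(2.0)), ("relu", relu)]
    stepper = step_through(model, [-1.0, 0.5, 3.0])
    print(next(stepper))  # ('scale', [-2.0, 1.0, 6.0])
    print(next(stepper))  # ('relu', [0.0, 1.0, 6.0])
```

Because the generator keeps its own state between calls, execution is genuinely suspended between layers, and the caller decides when (or whether) to continue.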

🌟 Why Neural?

| Feature | Neural | Raw TensorFlow/PyTorch |
|---|---|---|
| Shape Validation | ✅ Auto | ❌ Manual |
| Framework Switching | 1-line flag | Days of rewriting |
| Architecture Diagrams | Built-in | Third-party tools |
| Training Config | Unified | Fragmented configs |

🔄 Cross-Framework Code Generation

| Neural DSL | TensorFlow Output | PyTorch Output |
|---|---|---|
| `Conv2D(filters=32, kernel_size=(3,3))` | `tf.keras.layers.Conv2D(32, (3, 3))` | `nn.Conv2d(in_channels, 32, kernel_size=(3, 3))` |
| `Dense(units=128)` | `tf.keras.layers.Dense(128)` | `nn.Linear(in_features, 128)` |
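The PyTorch column needs `in_channels` and `in_features`: Keras infers input sizes when a layer is first built, but the standard `nn.Conv2d` and `nn.Linear` constructors require them up front. A code generator can fill them in by tracking shapes while it emits code. The sketch below is a hypothetical emitter (`emit_pytorch` is not Neural's code generator); it assumes channels-last input, stride 1 and `valid` padding, covers only the layer types used in the MNIST example, and leaves out activation functions to stay short.

```python
from typing import Any, Dict, List, Tuple

Layer = Tuple[str, Dict[str, Any]]


def emit_pytorch(input_shape: Tuple[int, int, int], layers: List[Layer]) -> List[str]:
    """Emit torch.nn constructor calls, inferring input sizes by propagating shapes."""
    h, w, c = input_shape  # channels-last (H, W, C), as in mnist.neural
    features = None        # set once the tensor has been flattened
    out = []
    for kind, p in layers:
        if kind in ("Conv2D", "MaxPooling2D") and features is not None:
            raise ValueError(f"{kind} cannot follow Flatten()")
        if kind == "Conv2D":
            kh, kw = p["kernel_size"]
            out.append(f"nn.Conv2d({c}, {p['filters']}, kernel_size=({kh}, {kw}))")
            h, w, c = h - kh + 1, w - kw + 1, p["filters"]
        elif kind == "MaxPooling2D":
            ph, pw = p["pool_size"]
            out.append(f"nn.MaxPool2d(kernel_size=({ph}, {pw}))")
            h, w = h // ph, w // pw
        elif kind == "Flatten":
            out.append("nn.Flatten()")
            features = h * w * c
        elif kind in ("Dense", "Output"):
            if features is None:
                raise ValueError(f"{kind} needs a Flatten() before it")
            out.append(f"nn.Linear({features}, {p['units']})")
            features = p["units"]
        elif kind == "Dropout":
            out.append(f"nn.Dropout(p={p['rate']})")
        else:
            raise ValueError(f"unsupported layer: {kind}")
    return out


if __name__ == "__main__":
    mnist = [
        ("Conv2D", {"filters": 32, "kernel_size": (3, 3)}),
        ("MaxPooling2D", {"pool_size": (2, 2)}),
        ("Flatten", {}),
        ("Dense", {"units": 128}),
        ("Dropout", {"rate": 0.5}),
        ("Output", {"units": 10}),
    ]
    print("\n".join(emit_pytorch((28, 28, 1), mnist)))
```

For the MNIST model this yields `nn.Conv2d(1, 32, ...)` and `nn.Linear(5408, 128)`: the placeholders in the table become concrete numbers derived from the declared input shape.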

🏆 Benchmarks

| Task | Neural | Baseline (TF/PyTorch) |
|---|---|---|
| MNIST Training | 1.2x ⚡ | 1.0x |
| Debugging Setup | 5min 🕒 | 2hr+ |

📚 Documentation

Explore advanced features:

📚 Examples

Explore common use cases in examples/ with step-by-step guides in docs/examples/:

🤝 Contributing

We welcome contributions! See our:

To set up a development environment:

```bash
git clone https://github.com/yourusername/neural.git
cd neural
pip install -r requirements-dev.txt  # Includes linter, formatter, etc.
pre-commit install                   # Auto-format code on commit
```

🌐 Supported Integrations

| Service | Status | Docs |
|---|---|---|
| TensorBoard | ✅ | Link |
| Weights & Biases | Beta | Link |
| AWS SageMaker | Q3'24 | Roadmap |
| NVIDIA Triton | Q4'24 | Roadmap |

📬 Community

- Discord Server: Chat with developers

- Twitter @NLang4438: Updates & announcements



Download files




File details

Details for the file neural_dsl-0.1.0.tar.gz.

File metadata

- Download URL: neural_dsl-0.1.0.tar.gz

- Upload date:

- Size: 5.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b4e2bc47f0a6d584c6e2d6d7f09a6b372d87fff8389ac244463b036f687dff19 |
| MD5 | c8f3717789ec9303c00ac7e98f1f833d |
| BLAKE2b-256 | f38c3bd0fd7aafc48d1ff21f90a6e7eae18aab64bb94d86ba92829729f2a0456 |

File details

Details for the file neural_dsl-0.1.0-py3-none-any.whl.

File metadata

- Download URL: neural_dsl-0.1.0-py3-none-any.whl

- Upload date:

- Size: 4.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 70c0f712d507663b91d04863f1009c5be3344a9469d52034a3aba18bc3211c89 |
| MD5 | ce6308e91cdf33207f38d87dd9bbbafe |
| BLAKE2b-256 | 37d2cbbc9481dc68d0e3fc01d887eddca07d896e26c7d5dd26b747416b42bc23 |