Neurosurfer: production-ready AI agent framework with multi-LLM, RAG, tools, and FastAPI server


Neurosurfer helps you build intelligent apps that blend LLM reasoning, tools, and retrieval, with a ready-to-run FastAPI backend and a React dev UI. Start lean and add capabilities as you go, on CPU only or with GPU acceleration.

🚀 What’s in the box

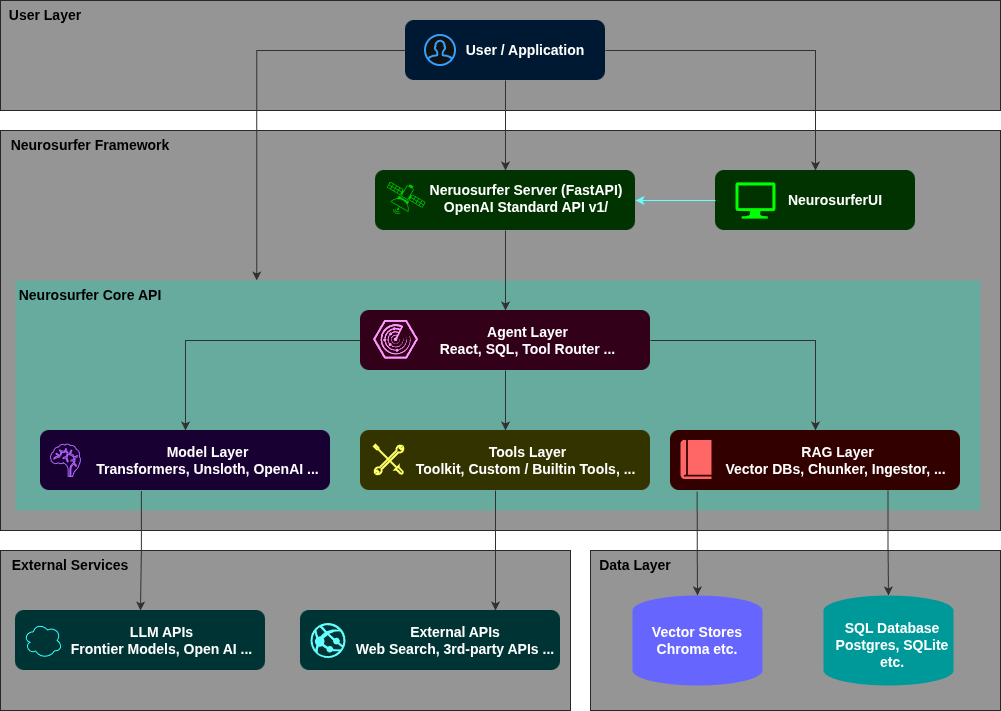

- 🤖 Agents: Production-ready patterns for ReAct, SQL, RAG, Router, and more, built around a think → act → observe → answer loop.

- 🧠 Models: A unified interface for OpenAI-style APIs and local backends such as Transformers/Unsloth, vLLM, and Llama.cpp.

- 📚 RAG: Simple, swappable retrieval core: embed → search → format → token-aware trimming

- ⚙️ FastAPI Server: OpenAI-compatible endpoints for chat and tools, plus custom endpoints, chat handlers, RAG, and more.

- 🖥️ NeurowebUI: A GPT-style React chat UI that communicates with the server out of the box.

- 🧪 CLI: `neurosurfer serve` runs the server and UI, with support for a custom backend app and UI.
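The think → act → observe → answer cycle behind the agent patterns can be illustrated without any model at all. The sketch below is a hypothetical, framework-independent loop, not Neurosurfer's agent API: `scripted_llm` stands in for a real model, and the `Action:`/`Final Answer:` text protocol, the `react_loop` function, and the `calculator` tool are assumptions made for illustration.

```python
import ast
import operator
from typing import Callable, Dict

# Hypothetical tool: evaluates + - * / arithmetic by walking the AST (no eval()).
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
        ast.Mult: operator.mul, ast.Div: operator.truediv}

def calculator(expr: str) -> str:
    def ev(node: ast.AST) -> float:
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError(f"unsupported expression: {expr!r}")
    return str(ev(ast.parse(expr, mode="eval").body))

TOOLS: Dict[str, Callable[[str], str]] = {"calculator": calculator}

def react_loop(llm: Callable[[str], str], question: str, max_steps: int = 5) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):                 # bounded: never loops forever
        step = llm(transcript)                 # think
        transcript += step + "\n"
        if step.startswith("Final Answer:"):   # answer
            return step.removeprefix("Final Answer:").strip()
        if step.startswith("Action:"):         # act: "Action: <tool> | <input>"
            name, _, arg = step.removeprefix("Action:").partition("|")
            observation = TOOLS[name.strip()](arg.strip())
            transcript += f"Observation: {observation}\n"   # observe
    return "Stopped: step limit reached."

def scripted_llm(transcript: str) -> str:
    # Stand-in for a real model: first requests a tool, then answers from the observation.
    if "Observation:" not in transcript:
        return "Action: calculator | 6 * 7"
    last = transcript.rstrip().splitlines()[-1]
    return "Final Answer: " + last.removeprefix("Observation:").strip()

print(react_loop(scripted_llm, "What is 6 times 7?"))
```

Capping the loop with `max_steps` is the simplest way to keep a misbehaving model from cycling between actions indefinitely.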

🎓 Tutorials

| # | Tutorial | Link | Description |
|---|---|---|---|
| 1 | Neurosurfer Quickstart | | Learn how to load local and OpenAI models, stream responses, and build your first RAG and tool-based agents directly in Jupyter or Colab. |

More tutorials are coming soon, covering RAG, custom tools, agents in more depth, FastAPI integration, and more.

🗞️ News

- Agents: ReAct & SQLAgent upgraded with bounded retries, spec-aware input validation, and better final-answer streaming; new ToolsRouterAgent for quick one-shot tool picks.

- Models: Cleaner OpenAI-style responses across backends; smarter token budgeting, with fallbacks when a tokenizer isn’t available.

- Server: Faster startup, better logging/health endpoints, and safer tool execution paths; OpenAI-compatible routes refined for streaming/tool-calling.

- CLI: `serve` now runs backend-only or UI-only and auto-injects `VITE_BACKEND_URL`; new subcommands for ingest/traces standardize local workflows.
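To make "spec-aware input validation" and "bounded retries" concrete, here is a rough illustration of the two ideas, not Neurosurfer's implementation: tool arguments are checked against a small declared spec before the tool runs, and a failing call is retried a fixed number of times. `ToolSpec`, `validate_inputs`, and `call_with_retries` are hypothetical names, and the spec format (parameter name to Python type) is an assumption.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Optional

@dataclass
class ToolSpec:
    name: str
    # Hypothetical spec format: parameter name -> expected Python type.
    params: Dict[str, type] = field(default_factory=dict)

def validate_inputs(spec: ToolSpec, args: Dict[str, Any]) -> None:
    """Reject missing, unknown, or wrongly typed arguments before calling a tool."""
    missing = spec.params.keys() - args.keys()
    unknown = args.keys() - spec.params.keys()
    if missing or unknown:
        raise ValueError(f"{spec.name}: missing={sorted(missing)} unknown={sorted(unknown)}")
    for key, expected in spec.params.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"{spec.name}.{key}: expected {expected.__name__}")

def call_with_retries(fn: Callable[..., Any], spec: ToolSpec,
                      args: Dict[str, Any], max_retries: int = 2) -> Any:
    """Validate once, then call fn at most max_retries + 1 times."""
    validate_inputs(spec, args)
    last_error: Optional[Exception] = None
    for _ in range(max_retries + 1):
        try:
            return fn(**args)
        except Exception as exc:  # a real agent might feed this error back to the LLM
            last_error = exc
    raise RuntimeError(f"{spec.name} failed after {max_retries + 1} attempts") from last_error

# Usage: a tool that fails once with a transient error, then succeeds.
calls = {"n": 0}

def flaky_add(a: int, b: int) -> int:
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("transient failure")
    return a + b

print(call_with_retries(flaky_add, ToolSpec("add", {"a": int, "b": int}), {"a": 2, "b": 3}))
```

Validation runs once, outside the retry loop, because a malformed argument will not fix itself on a second attempt; only runtime failures are worth retrying.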

Looking for older updates? Check the repo Releases and Changelog.

⚡ Quick Start

A 60-second path from install → dev server → your first inference.

Install (minimal core):

```bash
pip install -U neurosurfer
```

Or the full LLM stack (torch, transformers, bnb, unsloth):

```bash
pip install -U "neurosurfer[torch]"
```

Run the dev server (backend + UI):

```bash
neurosurfer serve
```

- Auto-detects the UI; pass `--ui-root` if needed. The first run may run `npm install`.
- The backend binds to config defaults; override them with flags or environment variables.

Before running the CLI, make sure your environment is ready and the dependencies are installed. For the default UI, the CLI requires `npm`, `nodejs`, and `serve` to be installed on your system.
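To check those prerequisites before running `neurosurfer serve`, a small standard-library script can look each executable up on `PATH` with `shutil.which`. This is a convenience sketch, not part of the CLI; `REQUIREMENTS` and `missing_tools` are hypothetical names, and it assumes the Node.js binary is named `node` (some Linux distributions name it `nodejs`, so both are accepted).

```python
import shutil
from typing import Dict, List, Sequence

# Each requirement maps to the executable names that would satisfy it.
REQUIREMENTS: Dict[str, Sequence[str]] = {
    "npm": ("npm",),
    "nodejs": ("node", "nodejs"),  # binary is usually `node`; some distros use `nodejs`
    "serve": ("serve",),
}

def missing_tools(requirements: Dict[str, Sequence[str]] = REQUIREMENTS) -> List[str]:
    """Return the requirement names for which no executable is found on PATH."""
    return [name for name, candidates in requirements.items()
            if not any(shutil.which(exe) for exe in candidates)]

if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Missing on PATH:", ", ".join(missing))
    else:
        print("All UI prerequisites found.")
```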

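Hello LLM example:

```python
from neurosurfer.models.chat_models.transformers import TransformersModel

# Load a small 4-bit quantized Llama 3.2 model
# (needs the full LLM stack: pip install -U "neurosurfer[torch]").
llm = TransformersModel(
    model_name="unsloth/Llama-3.2-1B-Instruct-bnb-4bit",
    load_in_4bit=True,
)

# Non-streaming call; the result follows the OpenAI chat-completion shape.
res = llm.ask(user_prompt="Say hi!", system_prompt="Be concise.", stream=False)
print(res.choices[0].message.content)
```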
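`res.choices[0].message.content` follows the OpenAI chat-completion response shape, and the server advertises OpenAI-compatible endpoints, so a plain HTTP client should be able to talk to it as well. The sketch below uses only the standard library. The base URL `http://localhost:8000`, the `/v1/chat/completions` route, the model name, and the absence of auth are all assumptions; check your server's actual host, port, routes, and authentication settings. `build_chat_request`, `extract_content`, and `chat` are hypothetical helper names.

```python
import json
import urllib.request
from typing import Any, Dict, List

BASE_URL = "http://localhost:8000"  # assumption: adjust to your server's host/port

def build_chat_request(messages: List[Dict[str, str]], model: str,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    # OpenAI-style, non-streaming chat completion payload.
    body = json.dumps({"model": model, "messages": messages, "stream": False})
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",  # assumed OpenAI-compatible route
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def extract_content(response: Dict[str, Any]) -> str:
    # Same path as res.choices[0].message.content, but on a plain JSON dict.
    return response["choices"][0]["message"]["content"]

def chat(messages: List[Dict[str, str]], model: str) -> str:
    req = build_chat_request(messages, model)
    with urllib.request.urlopen(req, timeout=60) as resp:
        return extract_content(json.load(resp))

# Usage (requires a running server):
# print(chat([{"role": "system", "content": "Be concise."},
#             {"role": "user", "content": "Say hi!"}], model="my-model"))
```

Keeping request construction and response parsing separate from the network call makes both easy to test without a running server.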

🏗️ High-Level Architecture

Neurosurfer Architecture

✨ Key Features

- Production API: FastAPI backend with auth, chat APIs, and OpenAI-compatible endpoints → Server setup
- Intelligent Agents: Build ReAct, SQL, and RAG agents with minimal code, optimized for specific tasks → Learn about agents
- Rich Tool Ecosystem: Built-in tools (calculator, web calls, files) plus easy custom tools → Explore tools
- RAG System: Ingest, chunk, and retrieve relevant context for your LLMs → RAG System
- Vector Databases: Built-in ChromaDB with an extensible interface for other stores → Vector stores
- Multi-LLM Support: OpenAI, Transformers/Unsloth, vLLM, Llama.cpp, and OpenAI-compatible APIs → Model docs
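The retrieval flow described above (ingest and chunk, then embed → search → format → token-aware trimming) can be sketched end to end in plain Python. This toy version illustrates the steps under stated assumptions and is not Neurosurfer's RAG core: a bag-of-words vector stands in for a real embedding model, an in-memory list stands in for ChromaDB, and whitespace-separated words approximate tokens, the kind of fallback one might use when no tokenizer is available. All function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple

def chunk(text: str, size: int = 40, overlap: int = 10) -> List[str]:
    """Split text into windows of `size` words overlapping by `overlap` (assumes size > overlap)."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def search(query: str, index: List[Tuple[str, Counter]], k: int = 3) -> List[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

def count_tokens(text: str) -> int:
    """Fallback token estimate when no tokenizer is available: one token per word."""
    return len(text.split())

def format_context(hits: List[str], budget: int) -> str:
    """Number the hits in rank order and stop before exceeding the token budget."""
    kept, used = [], 0
    for i, hit in enumerate(hits, start=1):
        entry = f"[{i}] {hit}"
        cost = count_tokens(entry)
        if used + cost > budget:
            break
        kept.append(entry)
        used += cost
    return "\n".join(kept)

docs = [
    "Neurosurfer agents follow a think act observe answer loop.",
    "The FastAPI server exposes OpenAI-compatible chat endpoints.",
    "ChromaDB is the built-in vector store for retrieval.",
]
index = [(c, embed(c)) for d in docs for c in chunk(d)]  # ingest + chunk + embed
hits = search("Which vector store is built in?", index, k=2)
print(format_context(hits, budget=30))
```

Trimming drops whole chunks from the lowest-ranked end rather than cutting text mid-chunk, so the most relevant context always survives a tight budget.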

📦 Install Options

pip (recommended)

```bash
pip install -U neurosurfer
```

pip + full LLM stack

```bash
pip install -U "neurosurfer[torch]"
```

From source

```bash
git clone https://github.com/NaumanHSA/neurosurfer.git
cd neurosurfer && pip install -e ".[torch]"
```

CUDA notes (Linux x86_64):

```bash
# Wheels bundle CUDA; you just need a compatible NVIDIA driver.
pip install -U torch --index-url https://download.pytorch.org/whl/cu124

# or CPU-only:
pip install -U torch --index-url https://download.pytorch.org/whl/cpu
```
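After installing a wheel, the quickest way to confirm which build you got is to ask PyTorch itself. The helper below (a hypothetical convenience function) uses standard PyTorch calls, `torch.cuda.is_available`, `torch.version.cuda`, and `torch.cuda.get_device_name`, and still returns a message if torch is not installed.

```python
def describe_torch_device() -> str:
    """Report whether PyTorch is installed and whether it can see a CUDA GPU."""
    try:
        import torch
    except ImportError:
        return "torch not installed (install neurosurfer[torch] or a torch wheel)"
    if torch.cuda.is_available():
        # torch.version.cuda is the CUDA version the wheel was built against.
        return f"CUDA {torch.version.cuda} on {torch.cuda.get_device_name(0)}"
    return f"CPU only (torch {torch.__version__})"

print(describe_torch_device())
```

If this reports "CPU only" on a machine with an NVIDIA GPU, the usual suspects are a CPU-only wheel or a driver too old for the wheel's CUDA version.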

📝 License

Licensed under Apache-2.0. See LICENSE.

🌟 Support

- ⭐ Star the project on GitHub.

- 💬 Ask questions and share ideas in Discussions.

- 🧠 Read the Docs.

- 🐛 File Issues.

- 🔒 Security: report privately to naumanhsa965@gmail.com.

📚 Citation

If you use Neurosurfer in your work, please cite:

```bibtex
@software{neurosurfer,
  author  = {Nouman Ahsan and Neurosurfer contributors},
  title   = {Neurosurfer: A Production-Ready AI Agent Framework},
  year    = {2025},
  url     = {https://github.com/NaumanHSA/neurosurfer},
  version = {0.1.0},
  license = {Apache-2.0}
}
```

Download files

File details

Details for the file neurosurfer-0.1.2.tar.gz.

File metadata

- Download URL: neurosurfer-0.1.2.tar.gz
- Upload date:
- Size: 566.0 kB
- Tags: Source
- Uploaded using Trusted Publishing? Yes
- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3006e775b469eb9e417e17b6bce0d4dd0289469d3390dbd4d6bfd67f61faf24b |
| MD5 | 32b9f5ddb5f6525ed224e5db8b74ce7d |
| BLAKE2b-256 | fb7710aa4aee4893e0c9e6f6419f48e44d74ebbed0f171dbbfdbf47602fd1265 |

Provenance

The following attestation bundles were made for neurosurfer-0.1.2.tar.gz:

- Publisher: publish.yml on NaumanHSA/neurosurfer
- Statement type: https://in-toto.io/Statement/v1
- Predicate type: https://docs.pypi.org/attestations/publish/v1
- Subject name: neurosurfer-0.1.2.tar.gz
- Subject digest: 3006e775b469eb9e417e17b6bce0d4dd0289469d3390dbd4d6bfd67f61faf24b
- Sigstore transparency entry: 676632916
- Sigstore integration time:
- Permalink: NaumanHSA/neurosurfer@70f9ffa72cc0a45b48cc7a4224667aa2b8b44c80
- Branch / Tag: refs/tags/v0.1.2
- Owner: https://github.com/NaumanHSA
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@70f9ffa72cc0a45b48cc7a4224667aa2b8b44c80
- Trigger Event: push

File details

Details for the file neurosurfer-0.1.2-py3-none-any.whl.

File metadata

- Download URL: neurosurfer-0.1.2-py3-none-any.whl
- Upload date:
- Size: 603.2 kB
- Tags: Python 3
- Uploaded using Trusted Publishing? Yes
- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d77124f0c1e451e4d41b4da88196d6f37b4294975e416ddedb0cbcc2c1114600 |
| MD5 | 2aca4de645b30ca79381af6d246115b3 |
| BLAKE2b-256 | a76e2914b678e2be1f9b91572453e0e483e0329c1110d0c12c8a63ab0a94f663 |

Provenance

The following attestation bundles were made for neurosurfer-0.1.2-py3-none-any.whl:

- Publisher: publish.yml on NaumanHSA/neurosurfer
- Statement type: https://in-toto.io/Statement/v1
- Predicate type: https://docs.pypi.org/attestations/publish/v1
- Subject name: neurosurfer-0.1.2-py3-none-any.whl
- Subject digest: d77124f0c1e451e4d41b4da88196d6f37b4294975e416ddedb0cbcc2c1114600
- Sigstore transparency entry: 676632921
- Sigstore integration time:
- Permalink: NaumanHSA/neurosurfer@70f9ffa72cc0a45b48cc7a4224667aa2b8b44c80
- Branch / Tag: refs/tags/v0.1.2
- Owner: https://github.com/NaumanHSA
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@70f9ffa72cc0a45b48cc7a4224667aa2b8b44c80
- Trigger Event: push