Model Context Protocol server for OME-Zarr image conversion

ngff-zarr MCP Server

ngff-zarr-mcp is a Model Context Protocol (MCP) server that provides AI agents with the ability to convert images to OME-Zarr format using the ngff-zarr library.

Features

Tools

- convert_images_to_ome_zarr: Convert various image formats to OME-Zarr with full control over metadata, compression, and multiscale generation

- read_ome_zarr_store: Read OME-Zarr data with support for remote storage options

- get_ome_zarr_info: Inspect existing OME-Zarr stores and get detailed information

- validate_ome_zarr_store: Validate OME-Zarr structure and metadata

- optimize_ome_zarr_store: Optimize existing stores with new compression and chunking

Resources

- supported-formats: List of supported input/output formats and backends

- downsampling-methods: Available downsampling methods for multiscale generation

- compression-codecs: Available compression codecs and their characteristics

Input Support

- Local files (all formats supported by ngff-zarr)

- Local directories (Zarr stores)

- Network URLs (HTTP/HTTPS)

- S3 URLs (with optional s3fs dependency)

- Remote storage with authentication (AWS S3, Google Cloud Storage, Azure)

Advanced Features

- RFC 4 - Anatomical Orientation: Support for medical imaging orientation systems (LPS, RAS)

- Method Metadata: Enhanced multiscale metadata with downsampling method information

- Storage Options: Cloud storage authentication and configuration support

- Multiscale Type Tracking: Automatic detection and preservation of downsampling methods

Output Optimization

- Multiple compression codecs (gzip, lz4, zstd, blosc variants)

- Configurable compression levels

- Flexible chunk sizing

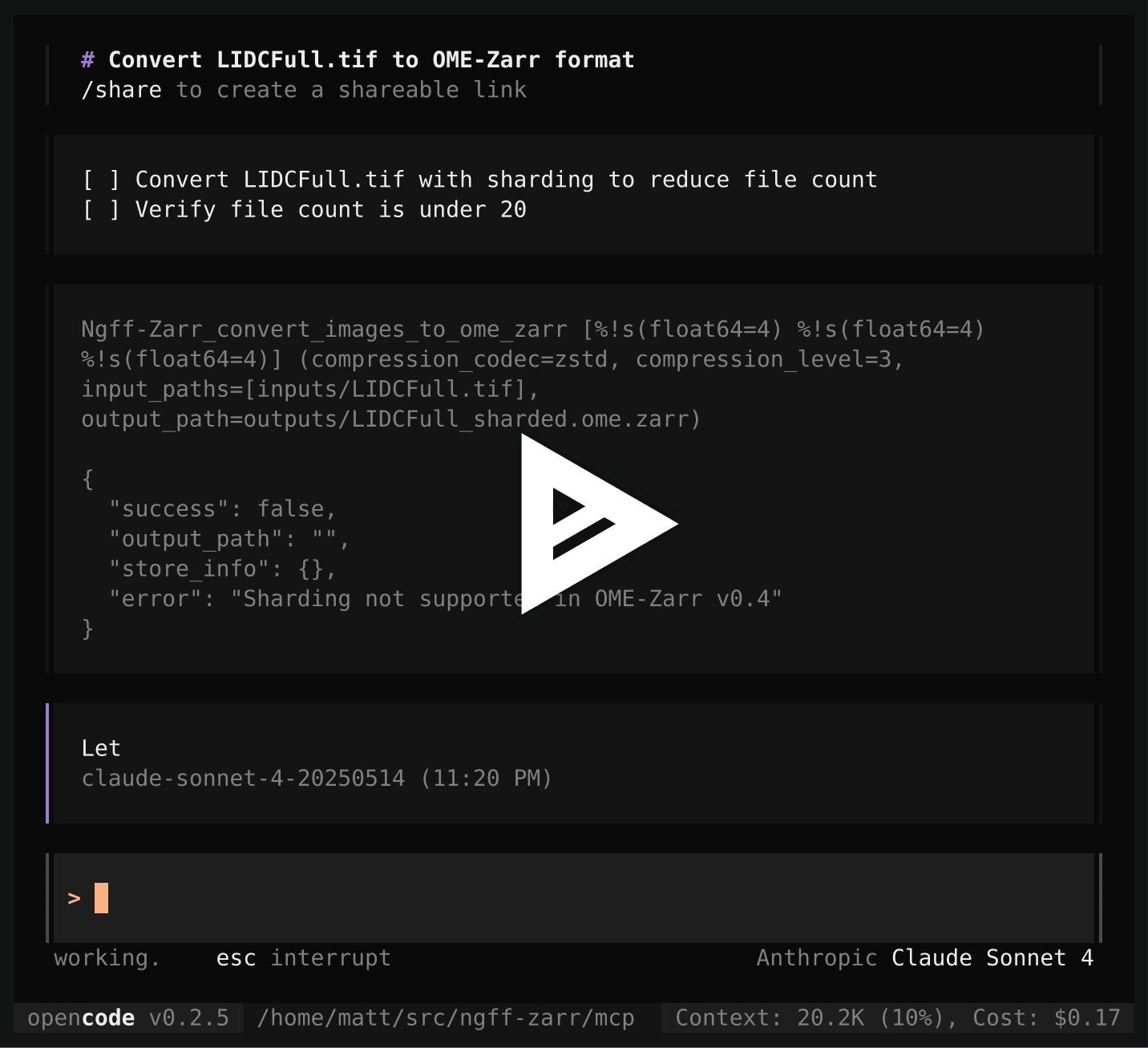

- Sharding support (Zarr v3/OME-Zarr v0.5)

- OME-Zarr version selection (0.4 or 0.5)

Installation

Requirements

- Python >= 3.10

- Cursor, Windsurf, Claude Desktop, VS Code, or another MCP Client

Quick Install

The easiest way to use ngff-zarr MCP server is with uvx:

# Install uv (which provides the uvx command) if not already installed

pip install uv

# Run the MCP server directly from PyPI

uvx ngff-zarr-mcp

Install in Cursor

Go to: Settings -> Cursor Settings -> MCP -> Add new global MCP server

Pasting the following configuration into your Cursor ~/.cursor/mcp.json file is the recommended approach. You may also install in a specific project by creating .cursor/mcp.json in your project folder. See Cursor MCP docs for more info.

Using uvx (recommended)

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

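Using direct Python

Install the package first with pip install ngff-zarr-mcp. The client launches the command directly rather than through a shell, so the install step cannot be chained with && inside args.

{

"mcpServers": {

"ngff-zarr": {

"command": "python",

"args": ["-c", "import ngff_zarr_mcp.server; ngff_zarr_mcp.server.main()"]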

}

}

}
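When a client config file already lists other servers, the ngff-zarr entry has to be merged in rather than pasted over the whole file. A minimal sketch of that merge, assuming the "mcpServers" layout shown in the Cursor examples; `add_mcp_server` is a hypothetical helper, not something the package provides.

```python
import json
from copy import deepcopy


def add_mcp_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of config with one more entry under "mcpServers"."""
    merged = deepcopy(config)
    servers = merged.setdefault("mcpServers", {})
    servers[name] = {"command": command, "args": list(args)}
    return merged


# Hypothetical existing config with one unrelated server already registered.
existing = {"mcpServers": {"other-server": {"command": "other", "args": []}}}
updated = add_mcp_server(existing, "ngff-zarr", "uvx", ["ngff-zarr-mcp"])
print(json.dumps(updated, indent=2))
```

The helper copies the input so the original dictionary is left untouched; writing the result back to ~/.cursor/mcp.json is left to the caller.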

Install in Windsurf

Add this to your Windsurf MCP config file. See Windsurf MCP docs for more info.

Using uvx (recommended)

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

SSE Transport

{

"mcpServers": {

"ngff-zarr": {

"url": "http://localhost:8000/sse",

"description": "ngff-zarr server running with SSE transport"

}

}

}

Install in VS Code

Add this to your VS Code MCP config file. See VS Code MCP docs for more info.

Using uvx (recommended)

"mcp": {

"servers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Using pip install

"mcp": {

"servers": {

"ngff-zarr": {

"command": "python",

"args": ["-c", "import subprocess; subprocess.run(['pip', 'install', 'ngff-zarr-mcp']); import ngff_zarr_mcp.server; ngff_zarr_mcp.server.main()"]

}

}

}

Install in OpenCode

OpenCode is a Go-based CLI application that provides an AI-powered coding assistant in the terminal. It supports MCP servers through JSON configuration files. See OpenCode MCP docs for more details.

Add this to your OpenCode configuration file (~/.config/opencode/config.json for global or opencode.json for project-specific):

Using uvx (recommended)

{

"$schema": "https://opencode.ai/config.json",

"mcp": {

"ngff-zarr": {

"type": "local",

"command": ["uvx", "ngff-zarr-mcp"],

"enabled": true

}

}

}

Using pip install

{

"mcp": {

"ngff-zarr": {

"type": "local",

"command": [

"python",

"-c",

"import subprocess; subprocess.run(['pip', 'install', 'ngff-zarr-mcp']); import ngff_zarr_mcp.server; ngff_zarr_mcp.server.main()"

],

"enabled": true

}

}

}

After adding the configuration, restart OpenCode. The ngff-zarr tools will be available in the terminal interface with automatic permission prompts for tool execution.

Install in Claude Desktop

Add this to your Claude Desktop claude_desktop_config.json file. See Claude Desktop MCP docs for more info.

Using uvx (recommended)

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Using direct installation

{

"mcpServers": {

"ngff-zarr": {

"command": "ngff-zarr-mcp"

}

}

}

Install in Claude Code

Run this command. See Claude Code MCP docs for more info.

Using uvx

claude mcp add ngff-zarr -- uvx ngff-zarr-mcp

Using pip

pip install ngff-zarr-mcp

claude mcp add ngff-zarr -- ngff-zarr-mcp

Install in Gemini CLI

Add this to your .gemini/settings.json Gemini CLI MCP configuration. See the Gemini CLI configuration docs for more info.

Using uvx (recommended)

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Install in Cline

- Open Cline.

- Click the hamburger menu icon (☰) to enter the MCP Servers section.

- Add a new server with the following configuration:

Using uvx (recommended)

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Install in BoltAI

Open the "Settings" page of the app, navigate to "Plugins," and enter the following JSON:

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Once saved, you can start using ngff-zarr tools in your conversations. More information is available on BoltAI's Documentation site.

Install in Zed

Add this to your Zed settings.json. See Zed Context Server docs for more info.

{

"context_servers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Install in Augment Code

A. Using the Augment Code UI

- Click the hamburger menu.

- Select Settings.

- Navigate to the Tools section.

- Click the + Add MCP button.

- Enter the following command:

uvx ngff-zarr-mcp

- Name the MCP: ngff-zarr.

- Click the Add button.

B. Manual Configuration

- Press Cmd/Ctrl+Shift+P or go to the hamburger menu in the Augment panel

- Select Edit Settings

- Under Advanced, click Edit in settings.json

- Add the server configuration:

"augment.advanced": {

"mcpServers": [

{

"name": "ngff-zarr",

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

]

}

Install in JetBrains AI Assistant

See JetBrains AI Assistant Documentation for more details.

- In JetBrains IDEs go to Settings -> Tools -> AI Assistant -> Model Context Protocol (MCP)

- Click + Add.

- Click on Command in the top-left corner of the dialog and select the As JSON option from the list

- Add this configuration and click OK

{

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

- Click Apply to save changes.

Install in Qodo Gen

See Qodo Gen docs for more details.

- Open Qodo Gen chat panel in VSCode or IntelliJ.

- Click Connect more tools.

- Click + Add new MCP.

- Add the following configuration:

{

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

Install in Roo Code

Add this to your Roo Code MCP configuration file. See Roo Code MCP docs for more info.

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Install in Amazon Q Developer CLI

Add this to your Amazon Q Developer CLI configuration file. See Amazon Q Developer CLI docs for more details.

{

"mcpServers": {

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

}

Install in Zencoder

To configure ngff-zarr MCP in Zencoder, follow these steps:

- Go to the Zencoder menu (...)

- From the dropdown menu, select Agent tools

- Click on the Add custom MCP

- Add the name and server configuration from below, and make sure to hit the Install button

{

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

Once the MCP server is added, the ngff-zarr tools are available in Zencoder.

Install in Warp

See Warp Model Context Protocol Documentation for details.

- Navigate to Settings > AI > Manage MCP servers.

- Add a new MCP server by clicking the + Add button.

- Paste the configuration given below:

{

"ngff-zarr": {

"command": "uvx",

"args": ["ngff-zarr-mcp"]

}

}

- Click Save to apply the changes.

Development Installation

For development work, use pixi (recommended) or pip:

Using pixi (Recommended)

# Install pixi if not already installed

curl -fsSL https://pixi.sh/install.sh | bash

# Clone and setup environment

git clone <repository>

cd mcp/

pixi install

# Development environment (includes all dev tools)

pixi shell -e dev

# Run development server

pixi run dev-server

# Run tests and checks

pixi run test

pixi run lint

pixi run typecheck

Using pip

# Clone and install in development mode

git clone <repository>

cd mcp/

pip install -e ".[all]"

# Run the server

ngff-zarr-mcp

Usage

As MCP Server

The server can be run in different transport modes:

# STDIO transport (default)

ngff-zarr-mcp

# Server-Sent Events transport

ngff-zarr-mcp --transport sse --host localhost --port 8000

Transport Options

- STDIO: Default transport for most MCP clients

- SSE: Server-Sent Events for web-based clients or when HTTP transport is preferred

See the installation section above for client-specific configuration examples.
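For scripts that launch the server themselves, the two transport modes above map to two argument lists. The sketch below only assembles the command line and the matching SSE endpoint; the flags and the /sse path are the ones shown in this README, while `server_command` and `sse_url` are hypothetical helper names.

```python
def server_command(transport: str = "stdio", host: str = "localhost", port: int = 8000) -> list[str]:
    """Build the argument list for starting ngff-zarr-mcp in the given transport mode."""
    if transport == "stdio":
        return ["ngff-zarr-mcp"]
    if transport == "sse":
        return ["ngff-zarr-mcp", "--transport", "sse", "--host", host, "--port", str(port)]
    raise ValueError(f"unsupported transport: {transport!r}")


def sse_url(host: str = "localhost", port: int = 8000) -> str:
    """Return the URL a client would use for a server started in SSE mode."""
    return f"http://{host}:{port}/sse"


print(server_command())
print(server_command("sse", port=8000))
print(sse_url())
```

The returned list can be passed to `subprocess.Popen` as is; no shell is needed.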

Examples

Convert a Single Image

# Through MCP client, the agent can:

result = await convert_images_to_ome_zarr(

input_paths=["image.tif"],

output_path="output.ome.zarr",

ome_zarr_version="0.4",

scale_factors=[2, 4, 8],

method="itkwasm_gaussian",

compression_codec="zstd"

)
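To get a feel for what `scale_factors=[2, 4, 8]` produces, the sketch below estimates the shape of each resolution level. It assumes each factor is relative to the full-resolution image and that sizes are rounded down; the rounding actually used by ngff-zarr may differ, and `multiscale_shapes` is a hypothetical helper.

```python
def multiscale_shapes(base_shape: tuple[int, ...], scale_factors: list[int]) -> list[tuple[int, ...]]:
    """Estimate per-level shapes, with every factor relative to the base image."""
    shapes = [tuple(base_shape)]
    for factor in scale_factors:
        # Assumption: integer division, with a minimum size of 1 per axis.
        shapes.append(tuple(max(1, size // factor) for size in base_shape))
    return shapes


for level, shape in enumerate(multiscale_shapes((1024, 1024), [2, 4, 8])):
    print(level, shape)
```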

Convert with Metadata

result = await convert_images_to_ome_zarr(

input_paths=["image.nii.gz"],

output_path="brain.ome.zarr",

dims=["z", "y", "x"],

scale={"z": 2.0, "y": 0.5, "x": 0.5},

units={"z": "micrometer", "y": "micrometer", "x": "micrometer"},

name="Brain MRI",

scale_factors=[2, 4]

)
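The `dims`, `scale`, and `units` arguments correspond to the `axes` and `coordinateTransformations` fields of OME-Zarr 0.4 multiscales metadata. The sketch below builds that structure by hand to show the relationship. It assumes every dimension is spatial and uses hypothetical dataset paths ("0", "1", ...); the metadata the server actually writes may use different paths and include additional fields.

```python
import json


def multiscales_metadata(name, dims, scale, units, scale_factors):
    """Build an OME-Zarr 0.4 style multiscales entry for purely spatial axes."""
    axes = [{"name": d, "type": "space", "unit": units[d]} for d in dims]
    datasets = []
    # Level 0 is full resolution; each later level multiplies the voxel spacing.
    for level, factor in enumerate([1, *scale_factors]):
        datasets.append(
            {
                "path": str(level),  # hypothetical path naming
                "coordinateTransformations": [
                    {"type": "scale", "scale": [scale[d] * factor for d in dims]}
                ],
            }
        )
    return {"version": "0.4", "name": name, "axes": axes, "datasets": datasets}


meta = multiscales_metadata(
    name="Brain MRI",
    dims=["z", "y", "x"],
    scale={"z": 2.0, "y": 0.5, "x": 0.5},
    units={"z": "micrometer", "y": "micrometer", "x": "micrometer"},
    scale_factors=[2, 4],
)
print(json.dumps(meta, indent=2))
```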

Optimize Existing Store

result = await optimize_ome_zarr_store(

input_path="large.ome.zarr",

output_path="optimized.ome.zarr",

compression_codec="blosc:zstd",

chunks=[64, 64, 64]

)
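Before choosing a value for `chunks`, it can help to estimate how many chunks a store will contain and how large each one is before compression. A minimal sketch, with a hypothetical image shape and element size; `chunk_stats` is not part of the server.

```python
import math


def chunk_stats(shape, chunks, bytes_per_element):
    """Return the chunk grid, total chunk count, and uncompressed bytes per full chunk."""
    grid = [math.ceil(size / chunk) for size, chunk in zip(shape, chunks)]
    return {
        "chunk_grid": grid,
        "num_chunks": math.prod(grid),
        "chunk_bytes": math.prod(chunks) * bytes_per_element,
    }


# Hypothetical 16-bit volume of 256 x 2048 x 2048 voxels.
stats = chunk_stats(shape=(256, 2048, 2048), chunks=(64, 64, 64), bytes_per_element=2)
print(stats)
```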

Get Store Information

info = await get_ome_zarr_info("data.ome.zarr")

print(f"Size: {info.size_bytes} bytes")

print(f"Scales: {info.num_scales}")

print(f"Dimensions: {info.dimensions}")

Supported Formats

Input Formats

- ITK/ITK-Wasm: .nii, .nii.gz, .mha, .mhd, .nrrd, .dcm, .jpg, .png, .bmp, etc.

- TIFF: .tif, .tiff, .svs, .ndpi, .scn, etc. via tifffile

- Video: .webm, .mp4, .avi, .mov, .gif, etc. via imageio

- Zarr: .zarr, .ome.zarr

Output Formats

- OME-Zarr (.ome.zarr, .zarr)

Performance Options

Memory Management

- Set memory targets to control RAM usage

- Use caching for large datasets

- Configure Dask LocalCluster for distributed processing

Compression

- Choose from multiple codecs: gzip, lz4, zstd, blosc variants

- Adjust compression levels for speed vs. size tradeoffs

- Use sharding to reduce file count (Zarr v3)

Chunking

- Optimize chunk sizes for your access patterns

- Configure sharding for better performance with cloud storage
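The effect of sharding on file count can be estimated with simple arithmetic. The sketch below counts stored data objects with and without sharding, assuming one object per chunk when unsharded and one object per shard otherwise, and ignoring metadata files; the shape and shard layout are hypothetical.

```python
import math


def stored_objects(shape, chunks, chunks_per_shard=None):
    """Count chunk objects, or shard objects when chunks_per_shard is given."""
    chunk_grid = [math.ceil(size / chunk) for size, chunk in zip(shape, chunks)]
    if chunks_per_shard is None:
        return math.prod(chunk_grid)
    # Each shard groups a block of chunks along every axis.
    shard_grid = [math.ceil(count / per) for count, per in zip(chunk_grid, chunks_per_shard)]
    return math.prod(shard_grid)


shape, chunks = (256, 2048, 2048), (64, 64, 64)
unsharded = stored_objects(shape, chunks)
sharded = stored_objects(shape, chunks, chunks_per_shard=(2, 4, 4))
print(unsharded, sharded)
```

Fewer, larger objects generally mean fewer requests against cloud storage, at the cost of coarser write granularity.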

Development

Using pixi (Recommended)

Pixi provides reproducible, cross-platform environment management. All Python dependencies are defined in pyproject.toml and automatically managed by pixi.

# Clone and setup environment

git clone <repository>

cd mcp/

pixi install

# Development environment (includes all dev tools)

pixi shell -e dev

# Run tests

pixi run test

pixi run test-cov

# Lint and format code

pixi run lint

pixi run format

pixi run typecheck

# Run all checks

pixi run all-checks

Pixi Environments

- default: Runtime dependencies only (from [project.dependencies])

- dev: Development tools (pytest, black, mypy, ruff)

- cloud: Cloud storage support (s3fs, gcsfs)

- all: Complete feature set (all ngff-zarr dependencies + cloud)

pixi shell -e dev # Development work

pixi shell -e cloud # Cloud storage testing

pixi shell -e all # Full feature testing

Using traditional tools

# Clone and install in development mode

git clone <repository>

cd mcp/

pip install -e ".[all]"

# Run tests

pytest

# Lint code

black .

ruff check .

Dependencies

Core

- mcp: Model Context Protocol implementation

- ngff-zarr: Core image conversion functionality

- pydantic: Data validation

- httpx: HTTP client for remote files

- aiofiles: Async file operations

Optional

- s3fs: S3 storage support

- gcsfs: Google Cloud Storage support

- dask[distributed]: Distributed processing

🚨 Troubleshooting

Python Version Issues

The ngff-zarr-mcp server requires Python 3.10 or higher. If you encounter version errors:

# Check your Python version

python --version

# Use uvx to automatically handle Python environments

uvx ngff-zarr-mcp
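The same version requirement can be checked from inside Python, for example at the top of a wrapper script. A minimal sketch; `meets_minimum` is a hypothetical helper and the 3.10 floor comes from the requirement stated in this README.

```python
import sys

MINIMUM = (3, 10)  # minimum Python version stated in this README


def meets_minimum(version=None) -> bool:
    """Return True if the given (or running) interpreter is at least MINIMUM."""
    current = sys.version_info if version is None else version
    return tuple(current[:2]) >= MINIMUM


if meets_minimum():
    print("Python version is sufficient for ngff-zarr-mcp")
else:
    print("Python 3.10 or newer is required; consider running the server through uvx")
```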

Package Not Found Errors

If you encounter package not found errors with uvx:

# Update uv (which provides uvx)

pip install --upgrade uv

# Try installing the package explicitly first

uv tool install ngff-zarr-mcp

uvx ngff-zarr-mcp

Permission Issues

If you encounter permission errors during installation:

# Use user installation

pip install --user uv

# Or create a virtual environment

python -m venv venv

source venv/bin/activate # On Windows: venv\Scripts\activate

pip install ngff-zarr-mcp

Memory Issues with Large Images

For large images, you may need to adjust memory settings:

# Start server with memory limit

ngff-zarr-mcp --memory-target 8GB

# Or use chunked processing in your conversion calls

# convert_images_to_ome_zarr(chunks=[512, 512, 64])

Network Issues with Remote Files

If you have issues accessing remote files:

# Test basic connectivity

curl -I <your-url>

# For S3 URLs, ensure s3fs is installed

pip install s3fs

# Configure AWS credentials if needed

aws configure

General MCP Client Errors

- Ensure your MCP client supports the latest MCP protocol version

- Check that the server starts correctly: uvx ngff-zarr-mcp --help

- Verify JSON configuration syntax in your client config

- Try restarting your MCP client after configuration changes

- Check client logs for specific error messages

License

MIT License - see LICENSE file for details.
