Data Model for the OCF nowcasting project


nowcasting_datamodel

Datamodel for the nowcasting project

⚠️ Note: this repo will soon be deprecated in favour of a new Data Platform.

The data model is built with SQLAlchemy, with a mirrored model in pydantic.

⚠️ Database tables are currently created automatically, but in the future there should be a migration process.

Future: the data model could be moved out into a more modular solution.
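As a rough illustration of the "mirrored model" idea (a SQL table plus a matching, validated Python model), here is a stdlib-only sketch. It uses sqlite3 and a dataclass in place of SQLAlchemy and pydantic, and the table name, field names, and validation rules are hypothetical, not the package's actual models.

```python
import sqlite3
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical table standing in for an SQLAlchemy-defined table.
SCHEMA = """
CREATE TABLE IF NOT EXISTS forecast_value (
    target_time TEXT NOT NULL,
    expected_power_generation_megawatts REAL NOT NULL
)
"""


@dataclass
class ForecastValue:
    """Hypothetical validated mirror of one forecast_value row (the pydantic side)."""

    target_time: datetime
    expected_power_generation_megawatts: float

    def __post_init__(self):
        # Checks of the kind a pydantic validator might perform.
        if self.target_time.tzinfo is None:
            raise ValueError("target_time must be timezone-aware")
        if self.expected_power_generation_megawatts < 0:
            raise ValueError("expected power must be non-negative")

    def to_row(self):
        # Validated object -> tuple for the SQL layer.
        return (self.target_time.isoformat(), self.expected_power_generation_megawatts)

    @classmethod
    def from_row(cls, row):
        # Database row -> validated object (validation runs again in __post_init__).
        return cls(datetime.fromisoformat(row[0]), float(row[1]))


# Usage: round-trip one value through an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)  # IF NOT EXISTS makes table creation safe to repeat
value = ForecastValue(datetime(2024, 1, 1, 12, tzinfo=timezone.utc), 123.4)
conn.execute("INSERT INTO forecast_value VALUES (?, ?)", value.to_row())
restored = ForecastValue.from_row(conn.execute("SELECT * FROM forecast_value").fetchone())
```

Keeping validation on the Python side means bad values are rejected before they reach the database, and rows read back are checked again.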

nowcasting_datamodel

models.py

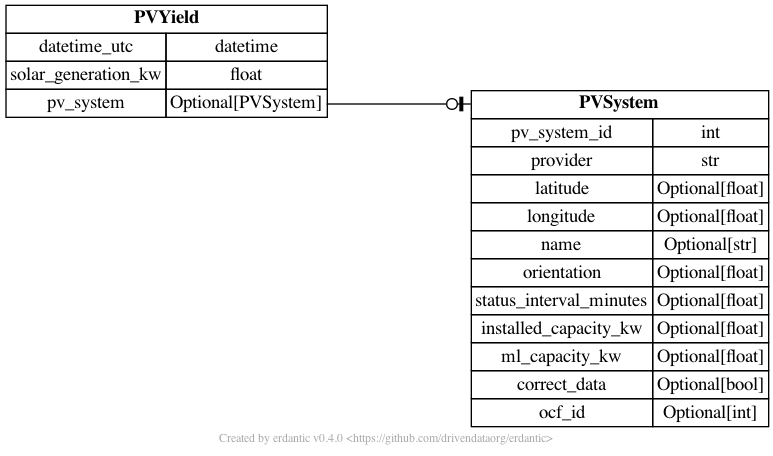

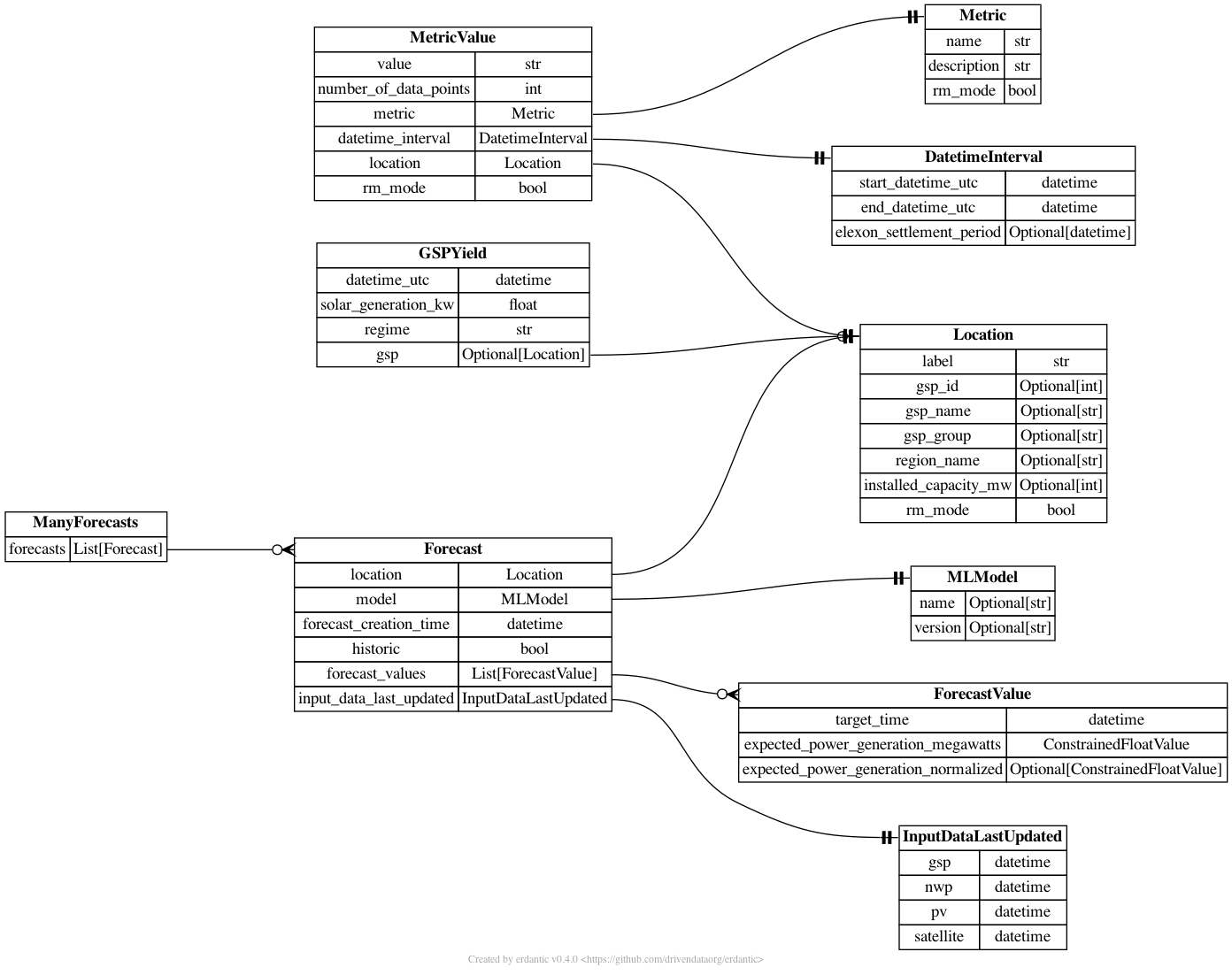

All models are in nowcasting_datamodel.models.py.

The diagram below shows how the different tables are connected.

connection.py

nowcasting_datamodel.connection.py contains a connection class, DatabaseConnection, which can be used to create SQLAlchemy sessions.

```python
from nowcasting_datamodel.connection import DatabaseConnection

# make connection object
db_connection = DatabaseConnection(url='sqlite:///test.db')

# make sessions
with db_connection.get_session() as session:
    # do something with the database
    pass
```
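The internals of DatabaseConnection are not described here. As a hedged, stdlib-only sketch of the same usage pattern (a connection object whose get_session() is a context manager), the hypothetical SketchDatabaseConnection below uses sqlite3 instead of SQLAlchemy. Committing on success and rolling back on error is an assumption of this sketch, not documented behaviour of the real class.

```python
import sqlite3
from contextlib import contextmanager


class SketchDatabaseConnection:
    """Hypothetical stand-in for DatabaseConnection, built on sqlite3 instead of SQLAlchemy."""

    PREFIX = "sqlite:///"

    def __init__(self, url: str):
        # Accept a 'sqlite:///path' style URL, as in the example above.
        if not url.startswith(self.PREFIX):
            raise ValueError("only sqlite:/// URLs are supported in this sketch")
        self.path = url[len(self.PREFIX):]

    @contextmanager
    def get_session(self):
        # Open a connection, commit if the block succeeds, roll back if it raises.
        conn = sqlite3.connect(self.path)
        try:
            yield conn
            conn.commit()
        except Exception:
            conn.rollback()
            raise
        finally:
            conn.close()
```

Each `with` block gets its own short-lived connection, so work done in one session is only visible to later sessions once it has been committed.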

👓 read.py

nowcasting_datamodel.read.py contains functions to read the database.

The idea is that these are easy-to-use functions that query the database efficiently.

- get_latest_forecast: Gets the latest Forecast for a specific GSP.

- get_all_gsp_ids_latest_forecast: Gets the latest Forecast for all GSPs.

- get_forecast_values: Gets the latest ForecastValue for a specific GSP.

- get_latest_national_forecast: Returns the latest national forecast.

- get_location: Gets a Location object.

```python
from nowcasting_datamodel.connection import DatabaseConnection
from nowcasting_datamodel.read import get_latest_forecast

# make connection object
db_connection = DatabaseConnection(url='sqlite:///test.db')

# make sessions
with db_connection.get_session() as session:
    f = get_latest_forecast(session=session, gsp_id=1)
```
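To show the kind of query that "latest forecast for a GSP" and "latest forecast for all GSPs" imply, here is a sketch in plain SQL via sqlite3. The schema and function names are hypothetical and much simpler than the real tables, and it assumes timestamps are stored as ISO-8601 UTC strings so that string order matches time order.

```python
import sqlite3

# Hypothetical, simplified schema; the real nowcasting_datamodel tables are richer.
SCHEMA = """
CREATE TABLE forecast (
    id INTEGER PRIMARY KEY,
    gsp_id INTEGER NOT NULL,
    created_utc TEXT NOT NULL
)
"""


def latest_forecast_for_gsp(conn, gsp_id):
    """Hypothetical analogue of get_latest_forecast: newest forecast row for one GSP."""
    return conn.execute(
        "SELECT id, gsp_id, created_utc FROM forecast "
        "WHERE gsp_id = ? ORDER BY created_utc DESC LIMIT 1",
        (gsp_id,),
    ).fetchone()


def latest_forecast_for_all_gsps(conn):
    """Hypothetical analogue of get_all_gsp_ids_latest_forecast: newest row per GSP."""
    # Group-wise maximum: join each GSP's newest timestamp back onto the table.
    # If two rows share a GSP's newest timestamp, both are returned.
    return conn.execute(
        "SELECT f.id, f.gsp_id, f.created_utc FROM forecast AS f "
        "JOIN (SELECT gsp_id, MAX(created_utc) AS newest FROM forecast GROUP BY gsp_id) AS l "
        "ON f.gsp_id = l.gsp_id AND f.created_utc = l.newest "
        "ORDER BY f.gsp_id"
    ).fetchall()


# Usage with a few made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO forecast (gsp_id, created_utc) VALUES (?, ?)",
    [
        (1, "2024-01-01T10:00:00+00:00"),
        (1, "2024-01-01T10:30:00+00:00"),
        (2, "2024-01-01T10:00:00+00:00"),
    ],
)
```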

💾 save.py

nowcasting_datamodel.save.py has one function to save a list of Forecast objects to the database.
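A sketch of what "save a list of forecasts" can look like: every forecast and its values are written in one transaction, so a failure part-way leaves the database unchanged. The Forecast dataclass, the tables, and save_forecasts are hypothetical, stdlib-only stand-ins, not the package's own save function.

```python
import sqlite3
from dataclasses import dataclass, field

# Hypothetical, simplified schema.
SCHEMA = """
CREATE TABLE forecast (id INTEGER PRIMARY KEY, gsp_id INTEGER NOT NULL, created_utc TEXT NOT NULL);
CREATE TABLE forecast_value (
    forecast_id INTEGER NOT NULL REFERENCES forecast(id),
    target_time TEXT NOT NULL,
    expected_power_generation_megawatts REAL NOT NULL
);
"""


@dataclass
class Forecast:
    """Hypothetical, simplified forecast: one GSP, many (target_time, MW) values."""

    gsp_id: int
    created_utc: str
    values: list = field(default_factory=list)


def save_forecasts(conn, forecasts):
    """Save all forecasts and their values in a single transaction (hypothetical sketch)."""
    with conn:  # commits on success, rolls back if any insert fails
        for forecast in forecasts:
            cur = conn.execute(
                "INSERT INTO forecast (gsp_id, created_utc) VALUES (?, ?)",
                (forecast.gsp_id, forecast.created_utc),
            )
            forecast_id = cur.lastrowid  # link the values to the row just inserted
            conn.executemany(
                "INSERT INTO forecast_value VALUES (?, ?, ?)",
                [(forecast_id, t, mw) for t, mw in forecast.values],
            )
```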

🇬🇧 national.py

nowcasting_datamodel.national.py has a useful function for adding up the forecasts for all GSPs into a national Forecast.
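The core of "adding up forecasts for all GSPs" is a sum per target time. This hypothetical sum_to_national works on plain (target_time, megawatts) pairs rather than the package's Forecast objects, and it assumes a GSP missing a time step simply contributes nothing to that step; the real function may handle gaps differently.

```python
from collections import defaultdict
from datetime import datetime, timezone


def sum_to_national(gsp_forecasts):
    """Add up per-GSP forecast values into one national series (hypothetical sketch).

    gsp_forecasts maps gsp_id -> list of (target_time, megawatts) pairs.
    Returns a list of (target_time, total_megawatts) sorted by target_time.
    """
    totals = defaultdict(float)
    for values in gsp_forecasts.values():
        for target_time, megawatts in values:
            totals[target_time] += megawatts
    return sorted(totals.items())


# Usage with two made-up GSPs.
t0 = datetime(2024, 1, 1, 10, 0, tzinfo=timezone.utc)
t1 = datetime(2024, 1, 1, 10, 30, tzinfo=timezone.utc)
national = sum_to_national({1: [(t0, 1.5), (t1, 2.0)], 2: [(t0, 0.5), (t1, 1.0)]})
```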

fake.py

nowcasting_datamodel.fake.py contains functions used to make fake model data.
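A sketch of how fake forecast data can be made reproducible for tests: a seeded random generator produces the same values on every run. The function name, the 30-minute step, and the 0 to 100 MW range are illustrative assumptions, not what fake.py actually does.

```python
import random
from datetime import datetime, timedelta, timezone


def make_fake_forecast_values(start, n_steps=4, step=timedelta(minutes=30), max_mw=100.0, seed=0):
    """Generate reproducible fake (target_time, megawatts) pairs (hypothetical sketch)."""
    rng = random.Random(seed)  # seeded so tests get the same fake data every run
    return [
        (start + i * step, round(rng.uniform(0.0, max_mw), 2))
        for i in range(n_steps)
    ]


# Usage: four half-hourly fake values starting at midnight UTC.
fake_values = make_fake_forecast_values(datetime(2024, 1, 1, tzinfo=timezone.utc))
```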

🩺 Testing

Tests are run using the following commands:

```bash
# stop running containers first (note: this stops every container on the machine)
docker stop $(docker ps -a -q)
docker-compose -f test-docker-compose.yml build
docker-compose -f test-docker-compose.yml run tests
```

This sets up PostgreSQL in one Docker container and runs the tests in another.

This slightly more complicated testing setup (compared with running pytest directly) is needed because some queries cannot be fully tested on a SQLite database.
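The README does not say which queries are affected. As one assumed illustration of the general problem, PostgreSQL's `DISTINCT ON` is a common way to pick the newest row per group, but SQLite does not support that syntax, so such a query cannot even be parsed by a SQLite test database.

```python
import sqlite3

# PostgreSQL-only syntax: keep the most recent row per gsp_id (illustrative query).
POSTGRES_ONLY_QUERY = """
SELECT DISTINCT ON (gsp_id) gsp_id, created_utc
FROM forecast
ORDER BY gsp_id, created_utc DESC
"""


def runs_on_sqlite(query):
    """Return True if SQLite accepts the query against a tiny hypothetical table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE forecast (gsp_id INTEGER, created_utc TEXT)")
    try:
        conn.execute(query)
        return True
    except sqlite3.OperationalError:  # SQLite reports syntax errors this way
        return False
    finally:
        conn.close()
```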

Mac M1 users

An upstream build issue with libpq may cause the following error:

sqlalchemy.exc.OperationalError: (psycopg2.OperationalError) SCRAM authentication requires libpq version 10 or above

As suggested in this thread, a temporary fix is to set the env variable DOCKER_DEFAULT_PLATFORM=linux/amd64 prior to building the test images - although this reportedly comes with performance penalties.

🛠️ infrastructure

.github/workflows contains a number of CI actions:

- linters.yaml: Runs linting checks on the code

- release.yaml: Makes and pushes Docker images on a new code release

- test-docker.yaml: Runs tests on every push

The Dockerfile is in the folder infrastructure/docker/.

The version is bumped automatically for any push to main.

Environmental Variables

- DB_URL: The database URL that the forecasts will be saved to
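A minimal sketch of reading DB_URL and failing early with a clear message when it is missing. The helper get_db_url is hypothetical; the optional environ argument only exists so the function is easy to test.

```python
import os


def get_db_url(environ=None):
    """Read DB_URL, the database URL forecasts are saved to (hypothetical helper)."""
    environ = os.environ if environ is None else environ
    url = environ.get("DB_URL")
    if not url:
        raise RuntimeError("DB_URL environment variable is not set")
    return url
```

The returned string could then be passed on, for example as `DatabaseConnection(url=get_db_url())`.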

Contributors ✨

Thanks goes to these wonderful people (emoji key):

- Brandon Ly 💻

- Chris Lucas 💻

- James Fulton 💻

- Rosheen Naeem 💻

- Henri Dewilde 💻

- Sahil Chhoker 💻

- Abdallah salah 💻

- tmi 💻

- Database Missing no1 💻

This project follows the all-contributors specification. Contributions of any kind welcome!


File details

Details for the file nowcasting_datamodel-1.7.8.tar.gz.

File metadata

- Download URL: nowcasting_datamodel-1.7.8.tar.gz

- Upload date:

- Size: 218.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.9.25

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b81931cf34bf22627d37a4a5bf08f57682e6b8f02c03c7963120ebf74807a508 |
| MD5 | 06538b5a9ec5cb0ef0a4e6f8143c9e27 |
| BLAKE2b-256 | c0ed6b398e322e0acc015d634572d8ae487252dbc18b50819a18e16afcbb08a0 |

File details

Details for the file nowcasting_datamodel-1.7.8-py3-none-any.whl.

File metadata

- Download URL: nowcasting_datamodel-1.7.8-py3-none-any.whl

- Upload date:

- Size: 47.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.9.25

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d4851c9f1859be0772d1ac8d1410bbdaa7aef516e31c16c7c821b409090e545e |
| MD5 | 5382b3dde6c5f6d05a5d8b5711255261 |
| BLAKE2b-256 | c10034ecd202c68f1f9d21d7bc9ee49f9031b8d36fae373d8dcb6683b69b85c3 |
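A downloaded file can be checked against the published SHA256 digests with Python's hashlib. This is a small sketch; the helper names are illustrative, and the file is read in chunks so large archives do not have to fit in memory.

```python
import hashlib


def sha256_of_file(path, chunk_size=65536):
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def matches_published_digest(path, expected_hex):
    """Compare a file's digest with a published one, ignoring case and whitespace."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

For example, `matches_published_digest("nowcasting_datamodel-1.7.8-py3-none-any.whl", "d4851c9f...")` with the full digest from the table should return True for an intact download. pip can also enforce this automatically via its hash-checking mode (`--hash=sha256:...` entries in a requirements file).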