Framework for Image Precompensation


Pyolimp

This project provides a machine learning-based framework for image precompensation targeting vision defects, specifically color vision deficiencies (CVD) and refractive visual impairments (RVI). The framework includes both neural network and non-neural network modules, so that different precompensation approaches can be applied and compared within one framework.

Project Overview

This project focuses on precompensating for visual impairments using machine learning techniques. It includes a comprehensive framework that supports both neural network (NN) and non-neural network (non-NN) methods to restore image quality for those affected by:

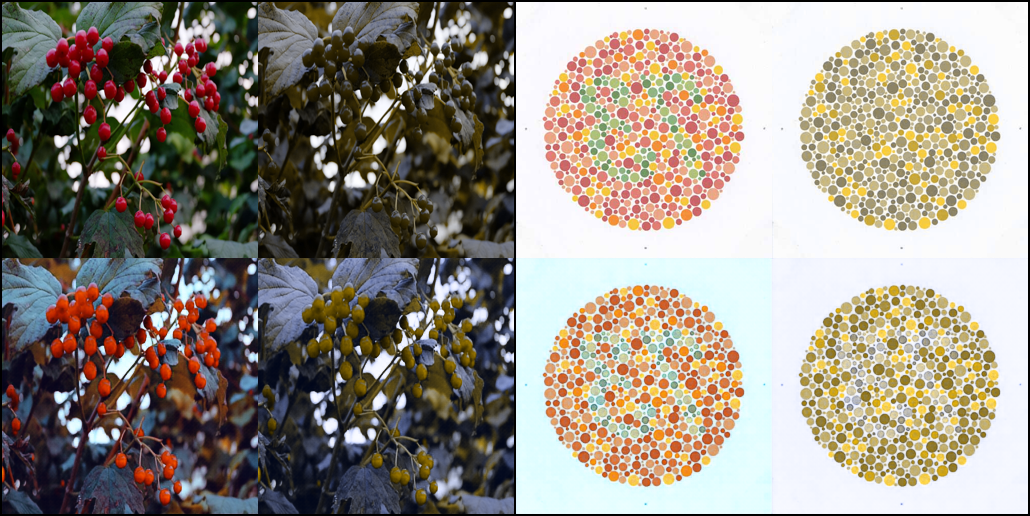

- Color Vision Deficiencies (CVD): Compensates for color blindness (e.g., protanopia, deuteranopia).

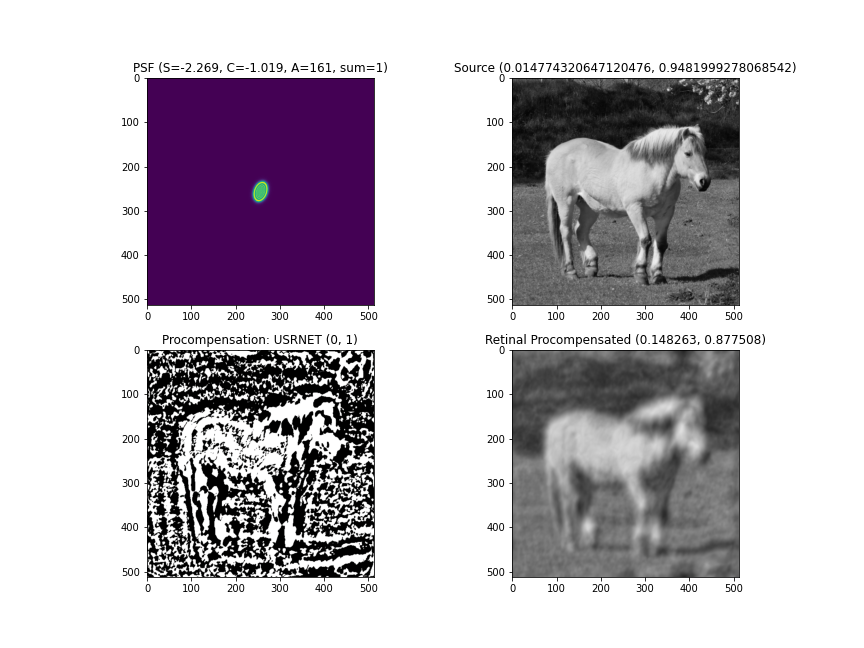

- Refractive Visual Impairments (RVI): Addresses distortions caused by refractive errors, improving clarity and sharpness.
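Precompensation for CVD needs a forward model of how a dichromat perceives colors. The sketch below is not taken from olimp. It assumes the widely used Machado, Oliveira and Fernandes (2009) simulation matrices at full severity, applied to linear RGB values in [0, 1], and shows how two clearly distinct colors (red and green) move closer together for a protanope or deuteranope. Precompensation tries to counteract exactly this loss of contrast. The names `simulate_cvd` and `sq_distance` are hypothetical helpers for illustration.

```python
# Illustrative sketch (not olimp's simulator): dichromacy simulation with the
# Machado et al. (2009) matrices at severity 1.0. Assumes linear RGB in [0, 1].

PROTANOPIA = (
    (0.152286, 1.052583, -0.204868),
    (0.114503, 0.786281, 0.099216),
    (-0.003882, -0.048116, 1.051998),
)
DEUTERANOPIA = (
    (0.367322, 0.860646, -0.227968),
    (0.280085, 0.672501, 0.047413),
    (-0.011820, 0.042940, 0.968881),
)


def simulate_cvd(rgb, matrix):
    """Apply a 3x3 CVD matrix to one linear-RGB pixel and clip to [0, 1]."""
    out = []
    for row in matrix:
        value = sum(m * c for m, c in zip(row, rgb))
        out.append(min(1.0, max(0.0, value)))
    return tuple(out)


def sq_distance(a, b):
    """Squared Euclidean distance between two RGB triples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


if __name__ == "__main__":
    red, green = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
    print("normal-vision distance:", sq_distance(red, green))
    print("protanope distance:    ",
          sq_distance(simulate_cvd(red, PROTANOPIA), simulate_cvd(green, PROTANOPIA)))
```

Each matrix row sums to 1, so neutral grays (including white) are left unchanged while red/green differences shrink.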

Requirements

- Python 3.10+

- PyTorch 2.4+

- Additional dependencies listed in pyproject.toml

Installation

pip install olimp

or

pip install git+https://github.com/pyolimp/pyolimp.git
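After installing, a quick standard-library check can confirm that the environment meets the stated requirements (Python 3.10+, PyTorch 2.4+) and that the `olimp` package is importable. This is a minimal sketch; it assumes PyTorch is installed under the distribution name `torch`, and the helpers `parse_version` and `check_environment` are hypothetical names, not part of olimp.

```python
import sys
from importlib import metadata, util


def parse_version(text):
    """Turn e.g. '2.4.1+cu121' into (2, 4); non-digit characters are ignored."""
    parts = []
    for piece in text.split("+")[0].split(".")[:2]:
        digits = "".join(ch for ch in piece if ch.isdigit())
        parts.append(int(digits) if digits else 0)
    return tuple(parts)


def check_environment():
    """Return a list of human-readable problems (empty if every check passes)."""
    problems = []
    if sys.version_info < (3, 10):
        problems.append(f"Python 3.10+ required, found {sys.version.split()[0]}")
    try:
        torch_version = metadata.version("torch")
        # Compare as integer tuples so that 2.10 correctly sorts after 2.4.
        if parse_version(torch_version) < (2, 4):
            problems.append(f"PyTorch 2.4+ required, found {torch_version}")
    except metadata.PackageNotFoundError:
        problems.append("PyTorch is not installed")
    if util.find_spec("olimp") is None:
        problems.append("olimp is not importable")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("problem:", problem)
```

Comparing integer tuples rather than raw strings avoids the classic pitfall where `"2.10" < "2.4"` is true lexicographically.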

Usage

1. Non-Neural Network Modules for CVD and RVI Precompensation

To run the RVI optimization algorithm for precompensation using the Bregman-Jumbo method, execute:

python3 -m olimp.precompensation.optimization.bregman_jumbo

To run the RVI optimization algorithm for precompensation using the Montalto method, execute:

python3 -m olimp.precompensation.optimization.montalto
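Optimization-based RVI precompensation generally searches for a displayable image x, with values in [0, 1], whose blurred version (the blur k standing in for the eye's point spread function) matches the target image y. The sketch below is a deliberately simplified, hypothetical 1-D illustration of that idea: it minimizes 0.5·‖k∗x − y‖² under the box constraint with plain projected gradient descent. It is not olimp's Bregman-Jumbo or Montalto implementation; published methods such as Montalto's add a regularizer (e.g. total variation), which is omitted here.

```python
# Hypothetical 1-D sketch of optimization-based RVI precompensation:
# minimize 0.5 * ||k * x - y||^2 subject to 0 <= x <= 1,
# using projected gradient descent. No regularizer is used.


def blur(x, kernel):
    """Zero-padded 'same' filtering with a centered, odd-length kernel
    (a correlation; identical to convolution for symmetric kernels)."""
    r = len(kernel) // 2
    n = len(x)
    out = [0.0] * n
    for i in range(n):
        for j, w in enumerate(kernel):
            idx = i + j - r
            if 0 <= idx < n:
                out[i] += w * x[idx]
    return out


def blur_adjoint(z, kernel):
    """Exact transpose of blur(): scatter each output back onto its inputs."""
    r = len(kernel) // 2
    n = len(z)
    out = [0.0] * n
    for i in range(n):
        for j, w in enumerate(kernel):
            idx = i + j - r
            if 0 <= idx < n:
                out[idx] += w * z[i]
    return out


def squared_error(a, b):
    return sum((p - q) ** 2 for p, q in zip(a, b))


def precompensate(target, kernel, steps=500, step_size=1.0):
    """Projected gradient descent. step_size=1.0 is safe for a non-negative
    kernel summing to 1, because the blur operator then has norm <= 1."""
    x = list(target)  # start from the unmodified image
    for _ in range(steps):
        residual = [b - t for b, t in zip(blur(x, kernel), target)]
        grad = blur_adjoint(residual, kernel)
        # Gradient step, then projection onto the displayable range [0, 1].
        x = [min(1.0, max(0.0, xi - step_size * g)) for xi, g in zip(x, grad)]
    return x


if __name__ == "__main__":
    kernel = [0.25, 0.5, 0.25]          # toy symmetric blur
    target = [0.2] * 10 + [0.8] * 10    # a step edge
    shown = precompensate(target, kernel)
    print("error without precompensation:", squared_error(blur(target, kernel), target))
    print("error with precompensation:   ", squared_error(blur(shown, kernel), target))
```

The box constraint is what makes the problem harder than plain deconvolution: a display cannot show values outside [0, 1], so perfect inversion of the blur is usually impossible and the optimizer settles for the best displayable compromise.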

To run the CVD optimization algorithm for precompensation using the Tennenholtz-Zachevsky method, execute:

python3 -m olimp.precompensation.optimization.tennenholtz_zachevsky

Further examples in the olimp.precompensation.basic and olimp.precompensation.analytics packages can be run in the same way.
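The demo modules can also be launched from a Python script. The sketch below is an assumption-light convenience wrapper: it builds the same `python3 -m <module>` commands listed above, but uses `sys.executable` so the demos run in the interpreter (and virtual environment) where olimp is installed. The names `module_command` and `run_demos` are hypothetical helpers, not olimp functions.

```python
import subprocess
import sys

# Module names taken from the commands above.
NON_NN_DEMOS = [
    "olimp.precompensation.optimization.bregman_jumbo",
    "olimp.precompensation.optimization.montalto",
    "olimp.precompensation.optimization.tennenholtz_zachevsky",
]


def module_command(module, *args):
    """Equivalent of `python3 -m <module> <args>` using the current interpreter."""
    return [sys.executable, "-m", module, *args]


def run_demos(modules):
    """Run each module in turn and record its exit code instead of stopping early."""
    results = {}
    for module in modules:
        completed = subprocess.run(module_command(module))
        results[module] = completed.returncode
    return results


if __name__ == "__main__":
    for module, code in run_demos(NON_NN_DEMOS).items():
        print(f"{module}: {'ok' if code == 0 else f'failed ({code})'}")
```

Collecting exit codes rather than passing `check=True` lets one failing demo be reported without skipping the rest.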

2. Neural Network Modules for CVD and RVI Precompensation

To run the RVI neural network model for precompensation using the USRNET method, execute:

python3 -m olimp.precompensation.nn.models.usrnet

To run the CVD neural network model for precompensation using the transformer-based generator from the cvd_swin package, execute:

python3 -m olimp.precompensation.nn.models.cvd_swin.Generator_transformer_pathch4_844_48_3_nouplayer_server5

3. Training models

To train neural network models for precompensation, use the following command:

python3 -m olimp.precompensation.nn.train.train --config ./olimp/precompensation/nn/pipeline/usrnet.json

Configurations for other models are available in olimp/precompensation/nn/pipeline. A JSON schema for these configuration files is also provided; to regenerate it, run:

python3 -m olimp.precompensation.nn.train.train --update-schema
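To see which models can be trained, one option is to list the configuration files in the pipeline directory and build one training command per file, using only the documented `--config` flag. This sketch assumes that every `*.json` file directly inside olimp/precompensation/nn/pipeline is a training configuration (usrnet.json is confirmed above; other files there may not be). The helper `training_commands` is hypothetical; it prints commands rather than running them, since training is expensive.

```python
import sys
from pathlib import Path

TRAIN_MODULE = "olimp.precompensation.nn.train.train"


def training_commands(pipeline_dir="olimp/precompensation/nn/pipeline"):
    """Build one training command per JSON config found in pipeline_dir.

    Assumption: each *.json file in the directory is a training config.
    """
    configs = sorted(Path(pipeline_dir).glob("*.json"))
    return [
        [sys.executable, "-m", TRAIN_MODULE, "--config", str(path)]
        for path in configs
    ]


if __name__ == "__main__":
    for command in training_commands():
        print(" ".join(command))
```

Run from the repository root so that the relative default path resolves.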

Examples

CVD demo example

RVI demo example


Download files


Source Distribution

Built Distribution


File details

Details for the file olimp-0.1.2.tar.gz.

File metadata

- Download URL: olimp-0.1.2.tar.gz

- Upload date:

- Size: 94.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.10.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 6ce401e4f1a061942c35b8d4477e0bf068dc23ba64916071330b14341f6dd5e3 |
| MD5 | 1906857026e65c806bf8f09b45672f46 |
| BLAKE2b-256 | a0b48cf429838567ee15395227a4657b3470f349cb7542f1a668144b682954b7 |

File details

Details for the file olimp-0.1.2-py3-none-any.whl.

File metadata

- Download URL: olimp-0.1.2-py3-none-any.whl

- Upload date:

- Size: 154.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.10.16

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ccf35683eb08feed5c8be72a232c5241e2a913ec9f800650dc3bfcb423798ebc |
| MD5 | c1f5a9586d9bab36b0675075488fe515 |
| BLAKE2b-256 | 0c36e8b3f125ead8b1131bdf3e8b3f751d814e66efc60b330b9a80fd69fa6344 |