Modern Data Centric AI system for Large Language Models

Project description

DataFlow

🎉 If you like our project, please give us a star ⭐ on GitHub for the latest update.

Beginner-friendly learning resources (continuously updated): 🎬 DataFlow Video Tutorials; 📚 DataFlow Written Tutorials

简体中文 | English

📰 1. News

-

[2025-11-20] Introducing New Data Agents for DataFlow! 🤖 You can try them out now and follow the tutorial on Bilibili for a quick start.

-

[2025-06-28] 🎉 We’re excited to announce that DataFlow, our Data-centric AI system, is now released! Stay tuned for future updates.

🔍 2. Overview

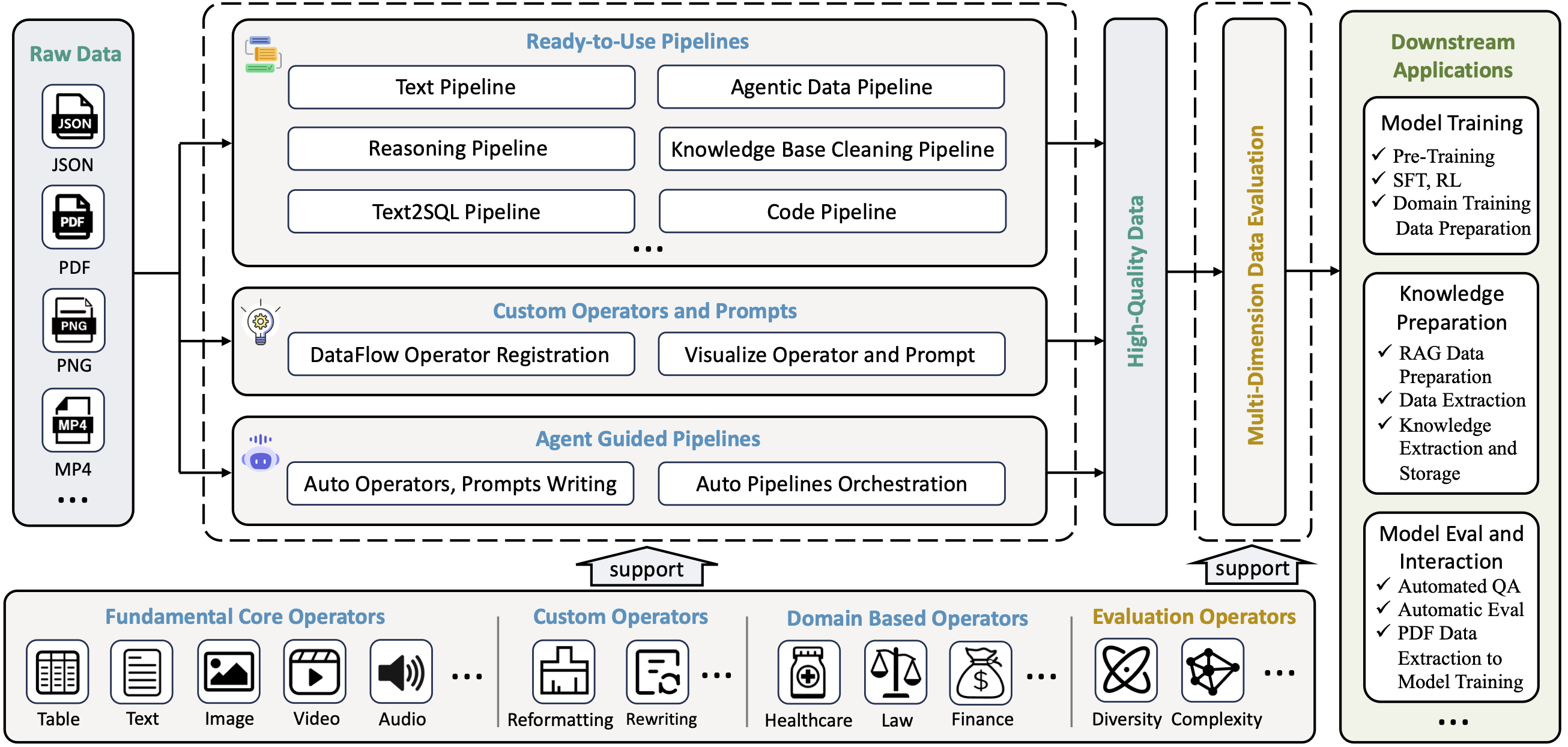

DataFlow is a data preparation and training system designed to parse, generate, process, and evaluate high-quality data from noisy sources (PDF, plain-text, low-quality QA), thereby improving the performance of large language models (LLMs) in specific domains through targeted training (Pre-training, Supervised Fine-tuning, RL training) or RAG using knowledge base cleaning. DataFlow has been empirically validated to improve domain-oriented LLMs' performance in fields such as healthcare, finance, and law.

Specifically, we are constructing diverse operators leveraging rule-based methods, deep learning models, LLMs, and LLM APIs. These operators are systematically integrated into distinct pipelines, collectively forming the comprehensive DataFlow system. Additionally, we develop an intelligent DataFlow-agent capable of dynamically assembling new pipelines by recombining existing operators on demand.

🛠️ 3. Operators Functionality

🔧 3.1 How Operators Work

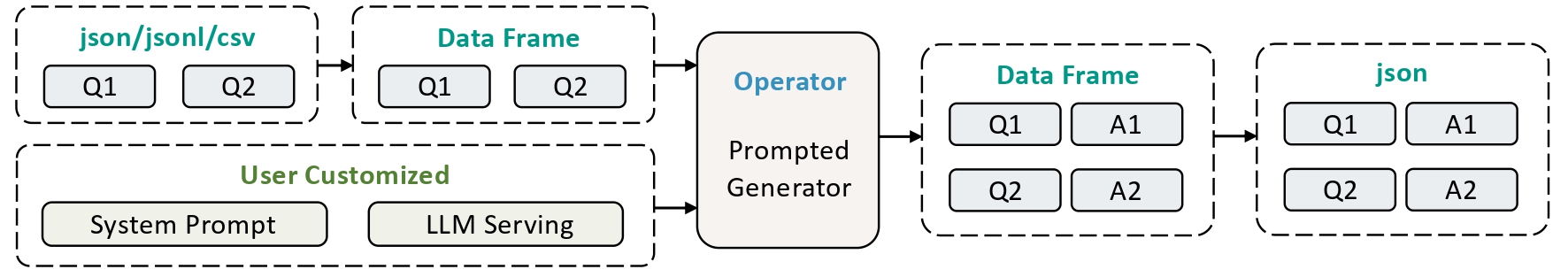

DataFlow adopts a modular operator design philosophy, building flexible data processing pipelines by combining different types of operators. As the basic unit of data processing, an operator can receive structured data input (such as in json/jsonl/csv format) and, after intelligent processing, output high-quality data results. For a detailed guide on using operators, please refer to the Operator Documentation.

📊 3.2 Operator Classification System

In the DataFlow framework, operators are divided into three core categories based on their functional characteristics:

| Operator Type | Quantity | Main Function |

|---|---|---|

| Generic Operators | 80+ | Covers general functions for text evaluation, processing, and synthesis |

| Domain-Specific Operators | 40+ | Specialized processing for specific domains (e.g., medical, financial, legal) |

| Evaluation Operators | 20+ | Comprehensively evaluates data quality from 6 dimensions |

🛠️ 4. Pipelines Functionality

🔧 4.1 Ready-to-Use PipeLines

Current Pipelines in Dataflow are as follows:

- 📝 Text Pipeline: Mine question-answer pairs from large-scale plain-text data (mostly crawed from InterNet) for use in SFT and RL training.

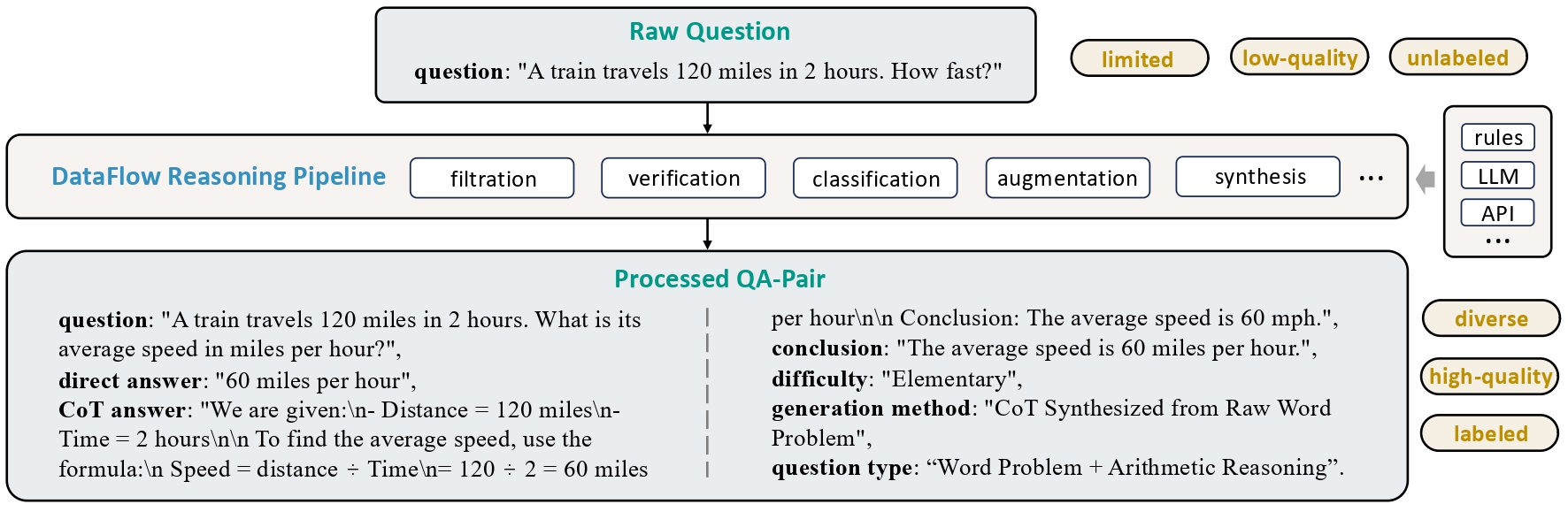

- 🧠 Reasoning Pipeline: Enhances existing question–answer pairs with (1) extended chain-of-thought, (2) category classification, and (3) difficulty estimation.

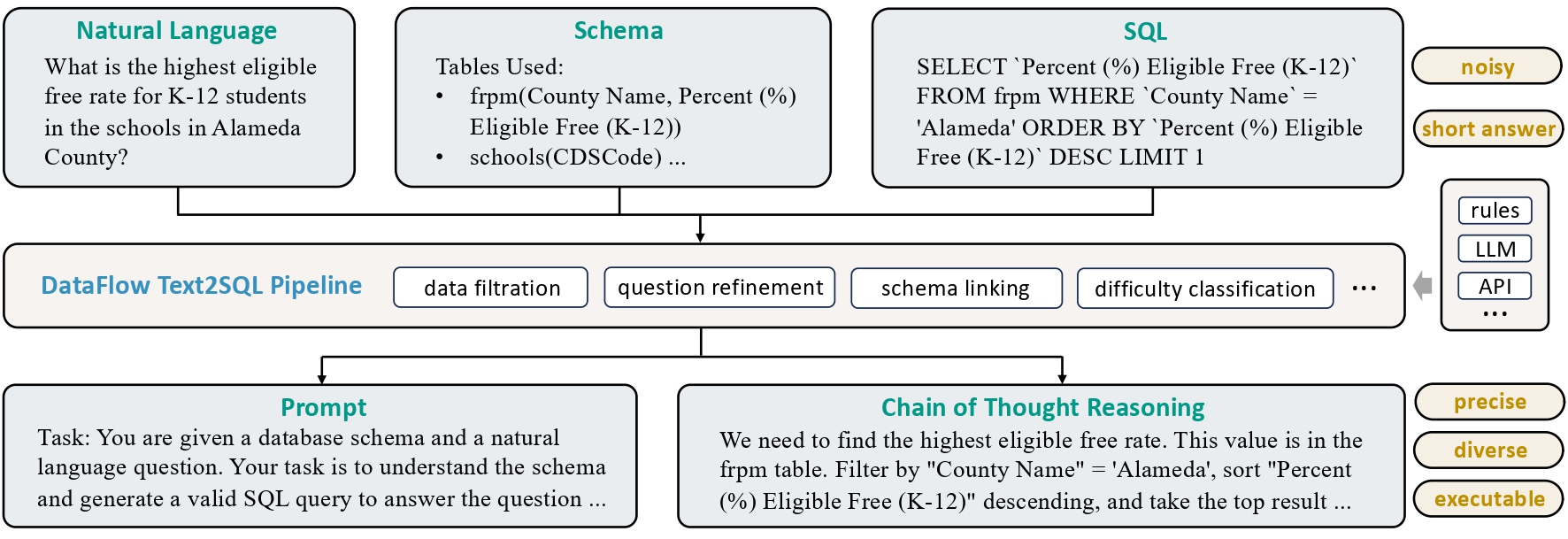

- 🗃️ Text2SQL Pipeline: Translates natural language questions into SQL queries, supplemented with explanations, chain-of-thought reasoning, and contextual schema information.

- 📚 Knowlege Base Cleaning Pipeline: Extract and structure knowledge from unorganized sources like tables, PDFs, and Word documents into usable entries for downstream RAG or QA pair generation.

- 🤖 Agentic RAG Pipeline: Identify and extract QA pairs from existing QA datasets or knowledge bases that require external knowledge to answer, for use in downstream training of Agnetic RAG tasks.

⚙️ 4.2 Flexible Operator PipeLines

In this framework, operators are categorized into Fundamental Operators, Generic Operators, Domain-Specific Operators, and Evaluation Operators, etc., supporting data processing and evaluation functionalities. Please refer to the documentation for details.

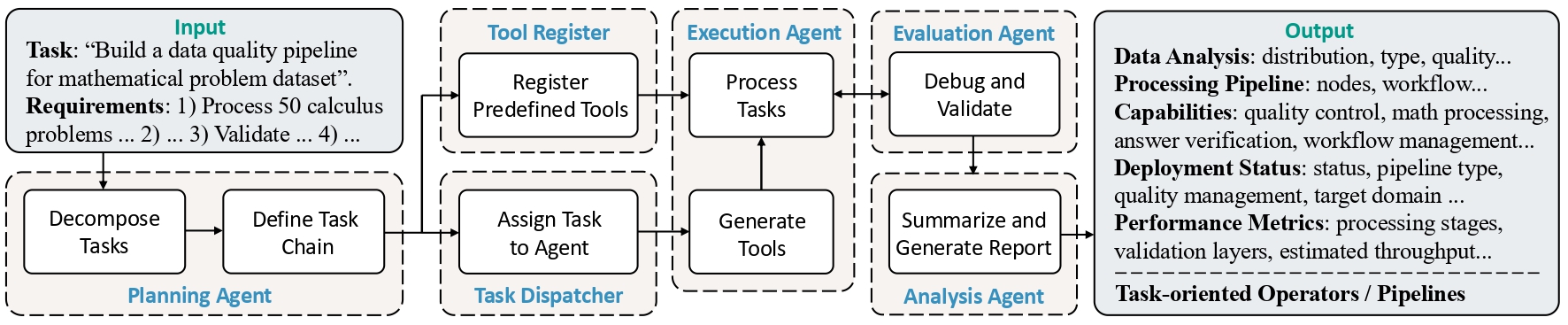

🤖 4.3 Agent Guided Pipelines

-

DataFlow Agent: An intelligent assistant that performs data analysis, writes custom

operators, and automatically orchestrates them intopipelinesbased on specific task objectives.

⚡ 5. Quick Start

🛠️ 5.1 Environment Setup and Installation

Please use the following commands for environment setup and installation👇

conda create -n dataflow python=3.10

conda activate dataflow

pip install open-dataflow

If you want to use your own GPU for local inference, please use:

pip install open-dataflow[vllm]

DataFlow supports Python>=3.10 environments

After installation, you can use the following command to check if dataflow has been installed correctly:

dataflow -v

If installed correctly, you should see:

open-dataflow codebase version: 1.0.0

Checking for updates...

Local version: 1.0.0

PyPI newest version: 1.0.0

You are using the latest version: 1.0.0.

🐳 5.1.1 Docker Installation (Alternative)

We also provide a Dockerfile for easy deployment and a pre-built Docker image for immediate use.

Option 1: Use Pre-built Docker Image

You can directly pull and use our pre-built Docker image:

# Pull the pre-built image

docker pull molyheci/dataflow:cu124

# Run the container with GPU support

docker run --gpus all -it molyheci/dataflow:cu124

# Inside the container, verify installation

dataflow -v

Option 2: Build from Dockerfile

Alternatively, you can build the Docker image from the provided Dockerfile:

# Clone the repository (HTTPS)

git clone https://github.com/OpenDCAI/DataFlow.git

# Or use SSH

# git clone git@github.com:OpenDCAI/DataFlow.git

cd DataFlow

# Build the Docker image

docker build -t dataflow:custom .

# Run the container

docker run --gpus all -it dataflow:custom

# Inside the container, verify installation

dataflow -v

Note: The Docker image includes CUDA 12.4.1 support and comes with vLLM pre-installed for GPU acceleration. Make sure you have NVIDIA Container Toolkit installed to use GPU features.

📖 5.2 Reference Project Documentation

For detailed usage instructions and getting started guide, please visit our Documentation.

🧪 6. Experimental Results

For Detailed Experiments setting, please visit our documentation.

📝 6.1 Text Pipeline

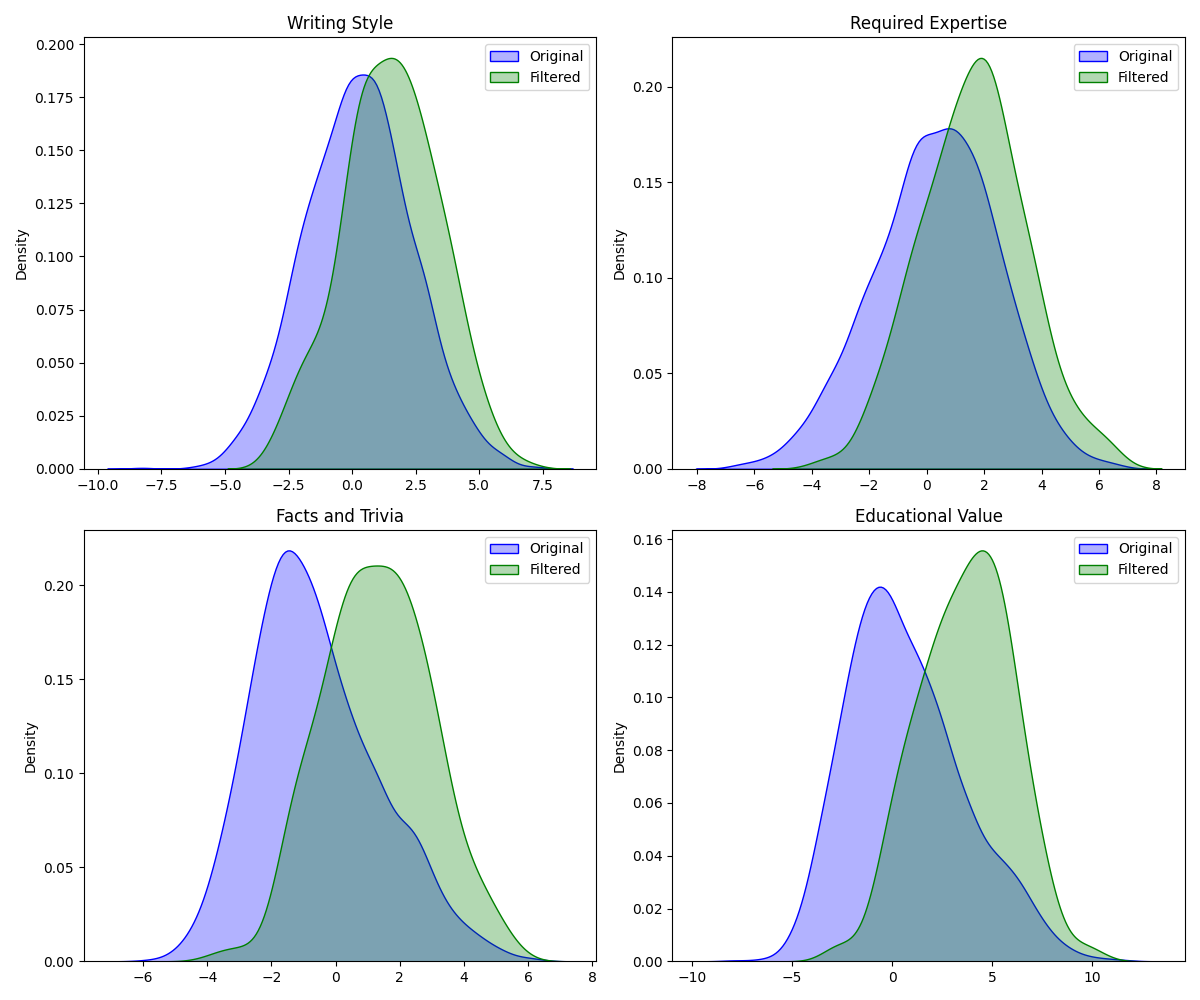

6.1.1 Pre-training data filter pipeline

The pre-training data processing pipeline was applied to randomly sampled data from the RedPajama dataset, resulting in a final data retention rate of 13.65%. The analysis results using QuratingScorer are shown in the figure. As can be seen, the filtered pretraining data significantly outperforms the original data across four scoring dimensions: writing style, requirement for expert knowledge, factual content, and educational value. This demonstrates the effectiveness of the DataFlow pretraining data processing.

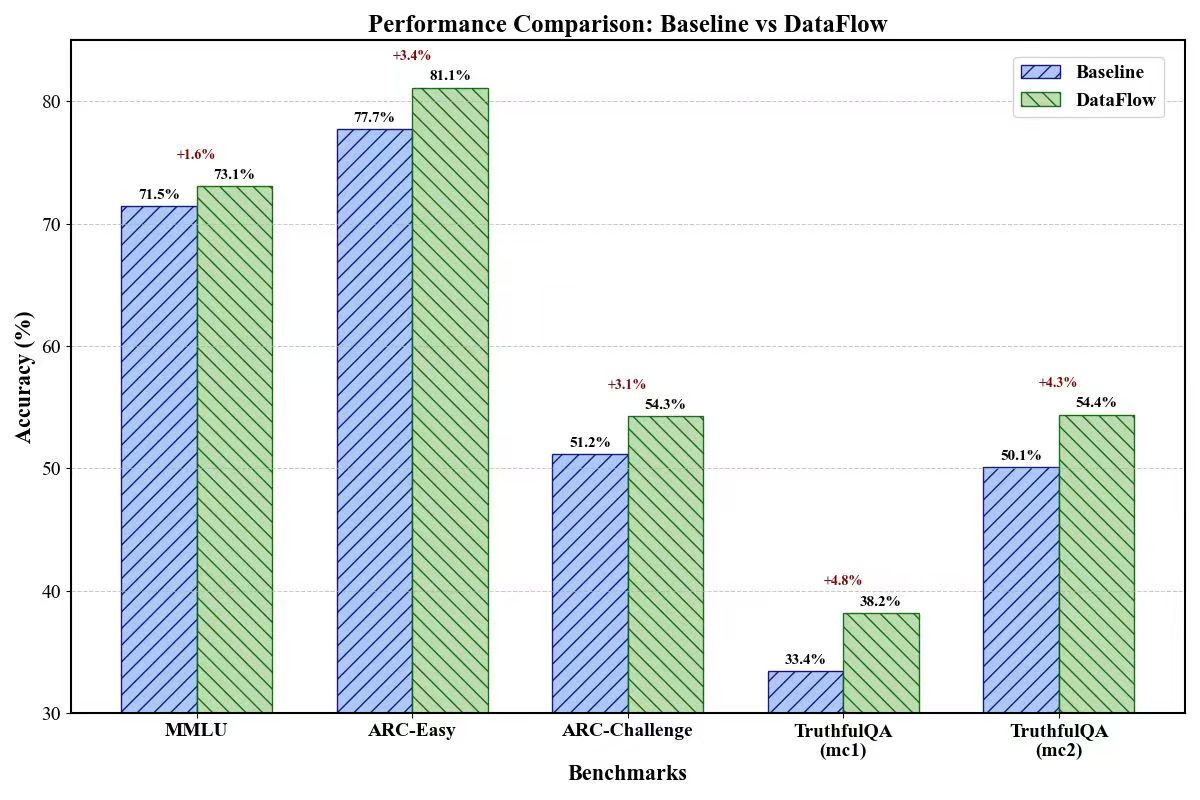

6.1.2 SFT data filter pipeline

We filtered 3k records from alpaca dataset and compared it with randomly selected 3k data from alpaca dataset by training it on Qwen2.5-7B. Results are:

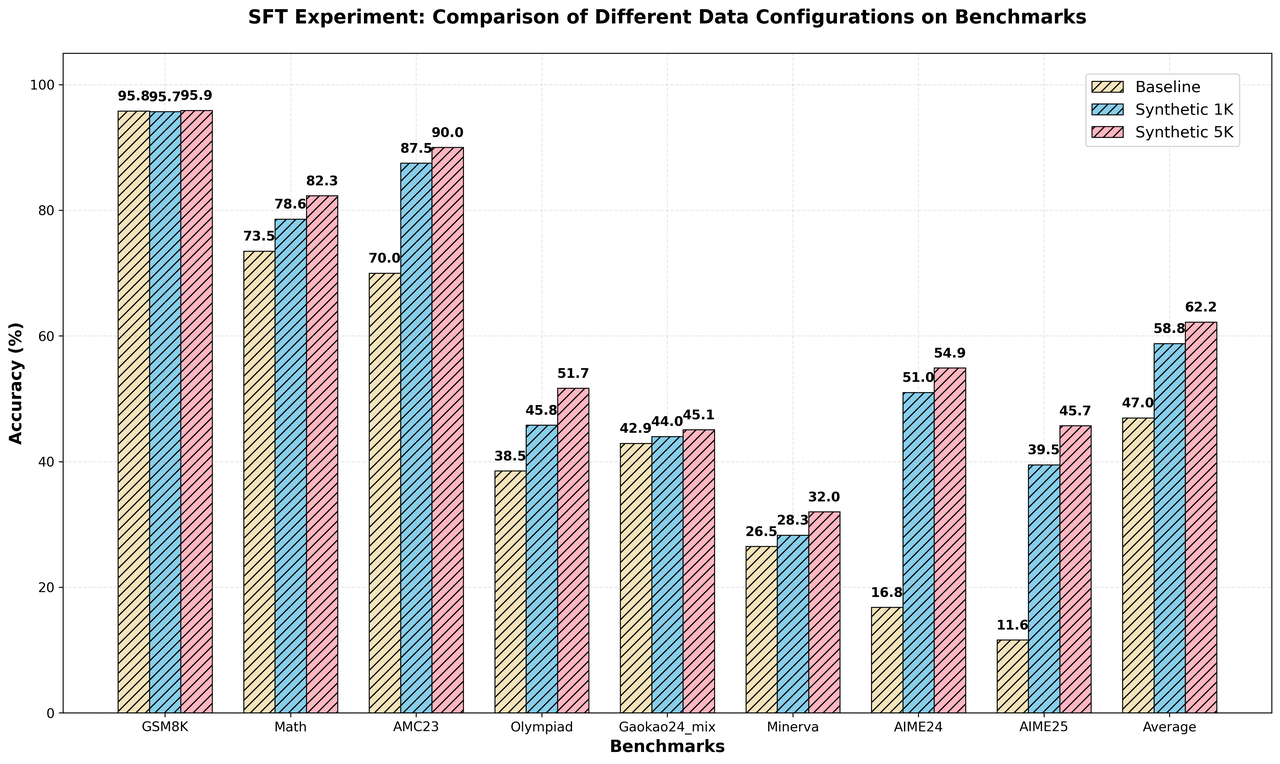

🧠 6.2 Reasoning Pipeline

We verify our reasoning pipeline by SFT on a Qwen2.5-32B-Instruct with Reasoning Pipeline synsthized data. We generated 1k and 5k SFT data pairs. Results are:

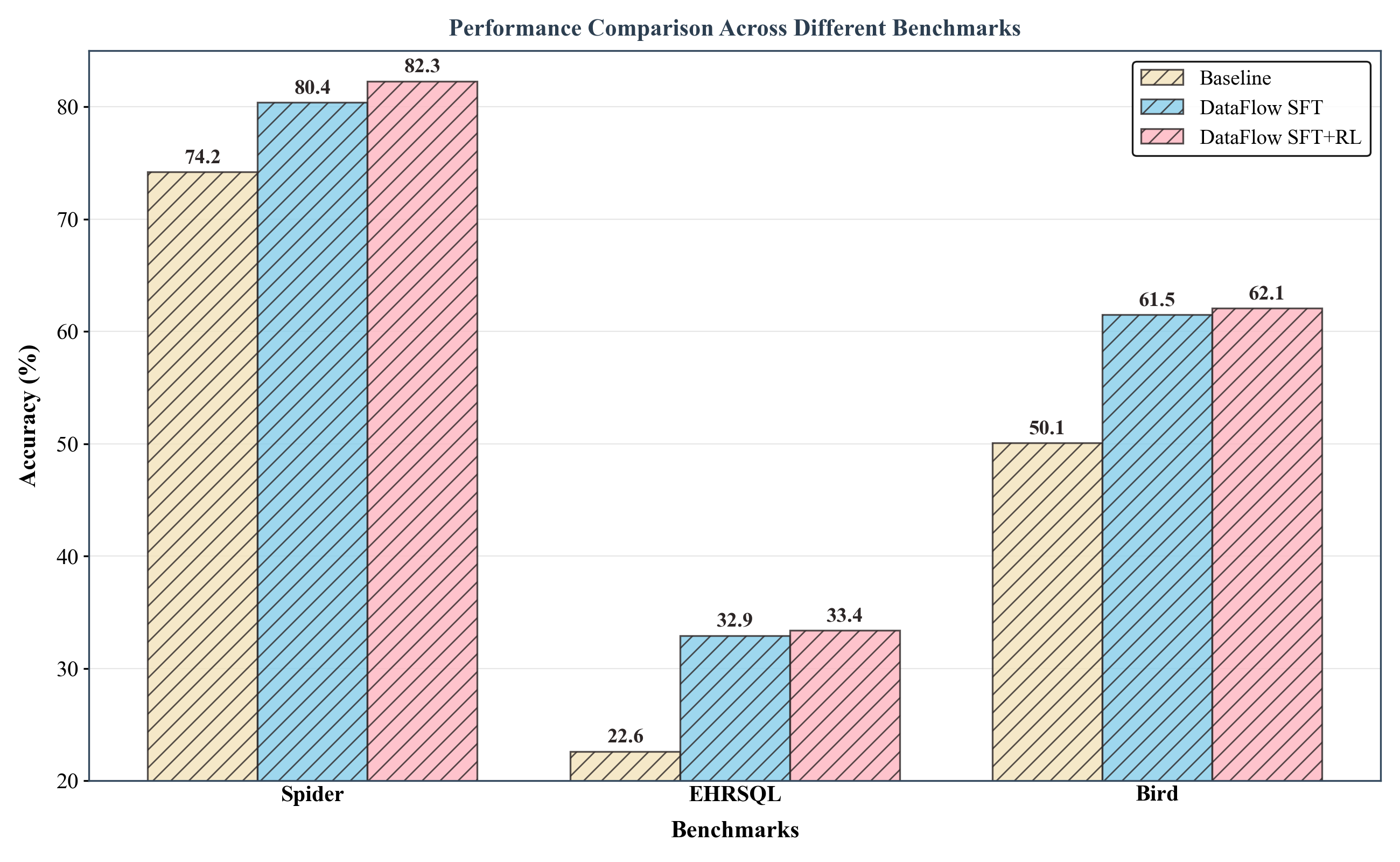

🗃️ 6.3 Text2SQL PipeLine

We fine-tuned the Qwen2.5-Coder-7B-Instruct model using both Supervised Fine-tuning (SFT) and Reinforcement Learning (RL), with data constructed via the DataFlow-Text2SQL Pipeline. Results are:

📄 7. Publications

Our team has published the following papers that form core components of the DataFlow system:

| Paper Title | DataFlow Component | Venue | Year |

|---|---|---|---|

| MM-Verify: Enhancing Multimodal Reasoning with Chain-of-Thought Verification | Multimodal reasoning verification framework for data processing and evaluation | ACL | 2025 |

| Efficient Pretraining Data Selection for Language Models via Multi-Actor Collaboration | Multi-actor collaborative data selection mechanism for enhanced data filtering and processing | ACL | 2025 |

Contributing Institutions:

🏆 8. Awards & Achievements

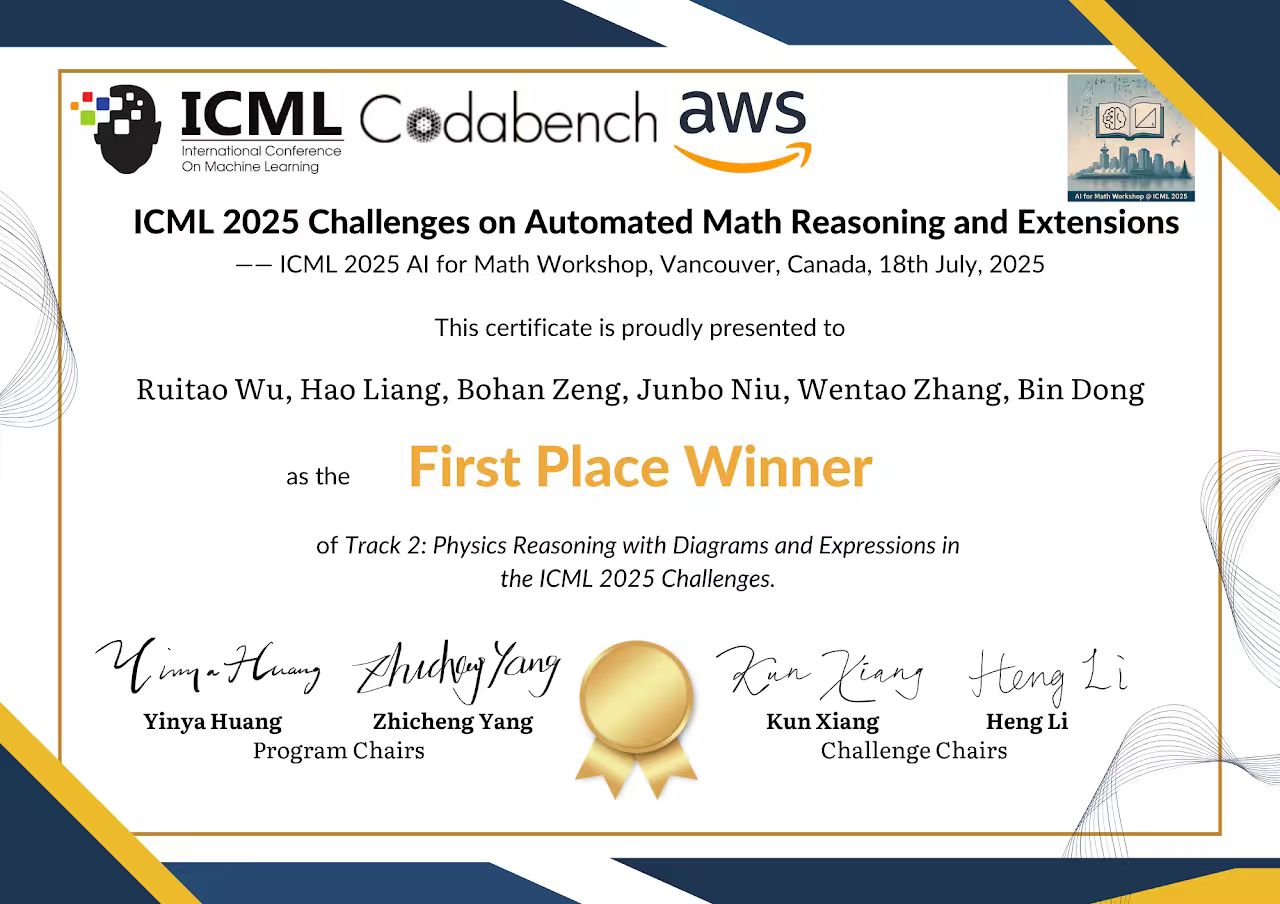

We are honored to have received first-place awards in two major international AI competitions, recognizing the excellence and robustness of DataFlow and its reasoning capabilities:

| Competition | Track | Award | Organizer | Date |

|---|---|---|---|---|

| ICML 2025 Challenges on Automated Math Reasoning and Extensions | Track 2: Physics Reasoning with Diagrams and Expressions | 🥇 First Place Winner | ICML AI for Math Workshop & AWS Codabench | July 18, 2025 |

| 2025 Language and Intelligence Challenge (LIC) | Track 2: Beijing Academy of Artificial Intelligence | 🥇 First Prize | Beijing Academy of Artificial Intelligence (BAAI) & Baidu | August 10, 2025 |

ICML 2025 Automated Math Reasoning Challenge — First Place Winner |

BAAI Language & Intelligence Challenge 2025 — First Prize |

💐 9. Acknowledgements

We sincerely appreciate MinerU's outstanding contribution, particularly its robust text extraction capabilities from PDFs and documents, which greatly facilitate data loading.

🤝 10. Community & Support

Join the DataFlow open-source community to ask questions, share ideas, and collaborate with other developers!

• 📮 GitHub Issues: Report bugs or suggest features

• 🔧 GitHub Pull Requests: Contribute code improvements

• 💬 Join our community groups to connect with us and other contributors!

📜 11. Citation

If you use DataFlow in your research, feel free to give us a cite.

@misc{dataflow2025,

author = {DataFlow Develop Team},

title = {DataFlow: A Unified Framework for Data-Centric AI},

year = {2025},

howpublished = {\url{https://github.com/OpenDCAI/DataFlow}},

note = {Accessed: 2025-07-08}

}

📊 12. Statistics

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file open_dataflow-1.0.7.tar.gz.

File metadata

- Download URL: open_dataflow-1.0.7.tar.gz

- Upload date:

- Size: 2.4 MB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

15217e0f955015445a3c4cce39f775280c27d8e199a2a489f1ac410653fe1c79

|

|

| MD5 |

58282b9f4fb2a1db1d236f01cd64a1bf

|

|

| BLAKE2b-256 |

43fc2543d4a22b77fe3c7fc36ee64745fc267a741ecc10929f1395d32ffd9126

|

Provenance

The following attestation bundles were made for open_dataflow-1.0.7.tar.gz:

Publisher:

python-publish.yml on OpenDCAI/DataFlow

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

open_dataflow-1.0.7.tar.gz -

Subject digest:

15217e0f955015445a3c4cce39f775280c27d8e199a2a489f1ac410653fe1c79 - Sigstore transparency entry: 709684168

- Sigstore integration time:

-

Permalink:

OpenDCAI/DataFlow@b716ba8523a2705284a77d915364979a2d2221a1 -

Branch / Tag:

refs/tags/v1.0.7 - Owner: https://github.com/OpenDCAI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

python-publish.yml@b716ba8523a2705284a77d915364979a2d2221a1 -

Trigger Event:

release

-

Statement type:

File details

Details for the file open_dataflow-1.0.7-py3-none-any.whl.

File metadata

- Download URL: open_dataflow-1.0.7-py3-none-any.whl

- Upload date:

- Size: 2.7 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

f500c0e6c22616df32f584c9dda47723765bbfaf698ca8d3fd2307fc43148497

|

|

| MD5 |

652551bd837e9729715fedcaa9a1d0ca

|

|

| BLAKE2b-256 |

4c4c26ddb740eac4f96e999b922b287dd6fb0768f877079978788d09a0f51714

|

Provenance

The following attestation bundles were made for open_dataflow-1.0.7-py3-none-any.whl:

Publisher:

python-publish.yml on OpenDCAI/DataFlow

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

open_dataflow-1.0.7-py3-none-any.whl -

Subject digest:

f500c0e6c22616df32f584c9dda47723765bbfaf698ca8d3fd2307fc43148497 - Sigstore transparency entry: 709684170

- Sigstore integration time:

-

Permalink:

OpenDCAI/DataFlow@b716ba8523a2705284a77d915364979a2d2221a1 -

Branch / Tag:

refs/tags/v1.0.7 - Owner: https://github.com/OpenDCAI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

python-publish.yml@b716ba8523a2705284a77d915364979a2d2221a1 -

Trigger Event:

release

-

Statement type: