An Open-source Factuality Evaluation Demo for LLMs


Overview

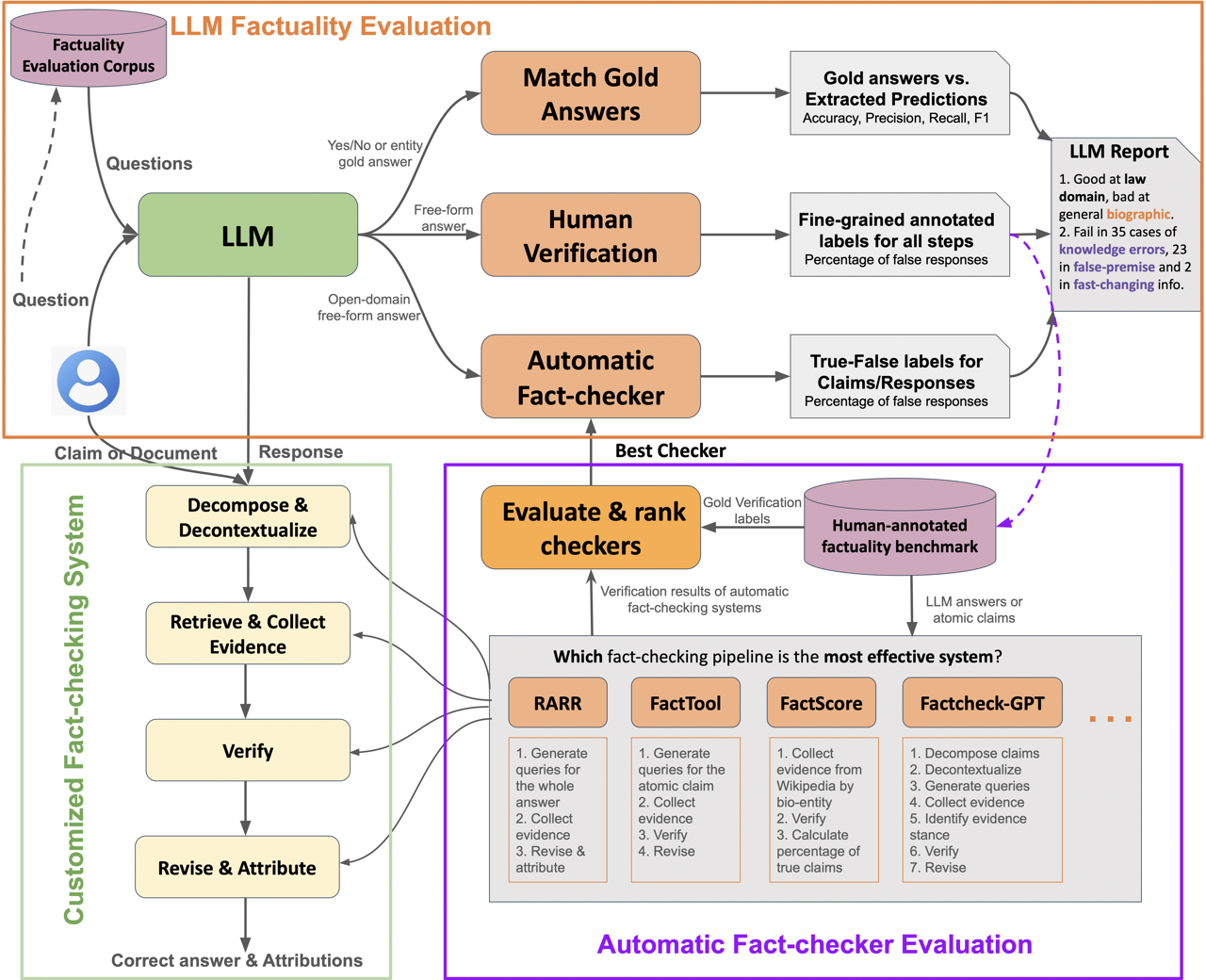

OpenFactCheck is an open-source repository designed to facilitate the evaluation and enhancement of factuality in responses generated by large language models (LLMs). This project aims to integrate various fact-checking tools into a unified framework and provide comprehensive evaluation pipelines, with built-in solvers for English and five additional languages (Arabic, Bulgarian, Chinese, Italian, and Urdu).

Supported Solvers

OpenFactCheck ships with several fact-checking pipelines you can use out of the box:

English

- factool — pipeline from FacTool

- factcheckgpt — pipeline from FactCheck-GPT

- rarr — Retrieval-Augmented Research and Revision

Multilingual (Arabic, Bulgarian, Chinese, and Italian are new in v1.1.0)

- arabicfactcheck — Arabic claim verification

- bulgarianfactcheck — Bulgarian claim verification

- chinesefactcheck — Chinese claim verification

- italianfactcheck — Italian claim verification

- urdufactcheck — Urdu claim verification

Each multilingual solver follows the same pattern, with five components arranged in three stages: cp (claim processing) → rtv / rtv_tr / rtv_thtr (retrieval variants) → vfr (verification).
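
The claim processing → retrieval → verification flow can be illustrated with a toy pipeline. This is a minimal sketch only: the functions `cp`, `rtv`, and `vfr` below borrow the stage names from the text, but their bodies, the `run_pipeline` helper, and the in-memory evidence store are hypothetical and are not OpenFactCheck's solver API.

```python
from typing import Callable, Dict, List

State = Dict[str, object]
Stage = Callable[[State], State]


def cp(state: State) -> State:
    """Claim processing (toy rule): split the response into sentence-level claims."""
    text = str(state["response"])
    claims = [s.strip() for s in text.split(".") if s.strip()]
    return {**state, "claims": claims}


def rtv(state: State) -> State:
    """Retrieval (toy rule): look up evidence in a tiny in-memory store."""
    store = {"Paris is the capital of France": ["Paris is France's capital city."]}
    evidence = {claim: store.get(claim, []) for claim in state["claims"]}
    return {**state, "evidence": evidence}


def vfr(state: State) -> State:
    """Verification (toy rule): a claim counts as supported if any evidence was found."""
    labels = {claim: bool(ev) for claim, ev in state["evidence"].items()}
    return {**state, "labels": labels}


def run_pipeline(response: str, stages: List[Stage]) -> State:
    """Pass a shared state dictionary through each stage in order."""
    state: State = {"response": response}
    for stage in stages:
        state = stage(state)
    return state


result = run_pipeline(
    "Paris is the capital of France. The moon is made of cheese.",
    [cp, rtv, vfr],
)
print(result["labels"])
```

Each stage reads from and adds to a shared state dictionary, so swapping one retrieval variant for another only changes one entry in the stage list.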

Utility

- dummy — passthrough/no-op (testing)

- tutorial — minimal example for building your own solver

- webservice — wrap any HTTP API as a solver

Installation

You can install the package from PyPI using pip:

pip install openfactcheck
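
To confirm that the install succeeded, you can query the installed version from Python. The sketch below uses only the standard library's `importlib.metadata`; the helper name `installed_version` is an illustrative choice, not part of OpenFactCheck.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    # Prints a version string if the package is installed, otherwise None.
    print(installed_version("openfactcheck"))
```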

Usage

First, create an OpenFactCheckConfig object and pass it to OpenFactCheck.

    from openfactcheck import OpenFactCheck, OpenFactCheckConfig

    # Initialize the OpenFactCheck object
    config = OpenFactCheckConfig()
    ofc = OpenFactCheck(config)

Response Evaluation

You can evaluate a response using the ResponseEvaluator class.

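    # Evaluate a response (the example text is a placeholder for your model's output)
    response = "Paris is the capital of France."
    result = ofc.ResponseEvaluator.evaluate(response=response)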

LLM Evaluation

We provide FactQA, a dataset of 6480 questions for evaluating LLMs. Once you have the responses from the LLM, you can evaluate them using the LLMEvaluator class.

    # Evaluate an LLM
    # (model_name and input_path values are placeholders; use your own)
    result = ofc.LLMEvaluator.evaluate(
        model_name="my-llm",
        input_path="responses.csv",
    )
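
This section does not specify the layout of the file passed as `input_path`. The sketch below shows one way to save collected LLM responses to disk, assuming a two-column CSV with the headers `index` and `response`. The column names, the file name, and the helper `write_responses_csv` are all assumptions made for illustration, so check the OpenFactCheck documentation for the required format before using it.

```python
import csv
import tempfile
from pathlib import Path
from typing import Iterable


def write_responses_csv(responses: Iterable[str], path: Path) -> Path:
    """Write one response per row, numbered from 0, under an assumed header."""
    with path.open("w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["index", "response"])  # assumed column names
        for i, response in enumerate(responses):
            writer.writerow([i, response])
    return path


# Demo: write two placeholder responses to a temporary directory and read them back.
demo_dir = Path(tempfile.mkdtemp())
csv_path = write_responses_csv(
    ["Paris is the capital of France.", "Water boils at 100 degrees Celsius at sea level."],
    demo_dir / "responses.csv",
)
with csv_path.open(newline="", encoding="utf-8") as f:
    rows = list(csv.DictReader(f))
print(rows[0])
```

The resulting path can then be passed as `input_path`, provided the format matches what the evaluator expects.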

Checker Evaluation

We provide FactBench, a dataset of 4507 claims for evaluating fact-checkers. Once you have the responses from the fact-checker, you can evaluate them using the CheckerEvaluator class.

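    # Evaluate a fact-checker
    # (checker_name and input_path values are placeholders; use your own)
    result = ofc.CheckerEvaluator.evaluate(
        checker_name="my-checker",
        input_path="checker_responses.csv",
    )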
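
For intuition about what scoring a fact-checker against labeled claims involves, the sketch below computes standard binary classification metrics from gold and predicted labels. It is a generic illustration, not the scoring code used by CheckerEvaluator; the function name `binary_metrics` and the choice of `True` as the positive class are assumptions.

```python
from typing import Dict, Sequence


def binary_metrics(gold: Sequence[bool], predicted: Sequence[bool]) -> Dict[str, float]:
    """Compute accuracy, precision, recall, and F1 with True as the positive class."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")

    pairs = list(zip(gold, predicted))
    tp = sum(1 for g, p in pairs if g and p)
    fp = sum(1 for g, p in pairs if not g and p)
    fn = sum(1 for g, p in pairs if g and not p)
    tn = sum(1 for g, p in pairs if not g and not p)

    # Guard every division so empty or one-sided inputs yield 0.0 instead of an error.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Four claims: the checker gets one true claim and one false claim right.
metrics = binary_metrics(
    gold=[True, True, False, False],
    predicted=[True, False, True, False],
)
print(metrics)
```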

Cite

If you use OpenFactCheck in your research, please cite the following:

    @article{wang2024openfactcheck,
      title   = {OpenFactCheck: A Unified Framework for Factuality Evaluation of LLMs},
      author  = {Wang, Yuxia and Wang, Minghan and Iqbal, Hasan and Georgiev, Georgi and Geng, Jiahui and Nakov, Preslav},
      journal = {arXiv preprint arXiv:2405.05583},
      year    = {2024}
    }

    @article{iqbal2024openfactcheck,
      title   = {OpenFactCheck: A Unified Framework for Factuality Evaluation of LLMs},
      author  = {Iqbal, Hasan and Wang, Yuxia and Wang, Minghan and Georgiev, Georgi and Geng, Jiahui and Gurevych, Iryna and Nakov, Preslav},
      journal = {arXiv preprint arXiv:2408.11832},
      year    = {2024}
    }

    @software{hasan_iqbal_2024_13358665,
      author    = {Hasan Iqbal},
      title     = {hasaniqbal777/OpenFactCheck: v1.1.0},
      month     = {aug},
      year      = {2024},
      publisher = {Zenodo},
      version   = {v1.1.0},
      doi       = {10.5281/zenodo.13358665},
      url       = {https://doi.org/10.5281/zenodo.13358665}
    }


File details

Details for the file openfactcheck-1.1.1.tar.gz.

File metadata

- Download URL: openfactcheck-1.1.1.tar.gz

- Upload date:

- Size: 6.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.15

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2928c8ee8bdc63aff709640f7f8cc3f37af2b0324668d05822f6848713a512f9 |
| MD5 | 041fe46b3a118e725c4a7a6590782c39 |
| BLAKE2b-256 | 8a72fb6269ede2a403291da74080d59b77ed710c6e925e0b1898b50f08b30aca |

File details

Details for the file openfactcheck-1.1.1-py3-none-any.whl.

File metadata

- Download URL: openfactcheck-1.1.1-py3-none-any.whl

- Upload date:

- Size: 6.2 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.15

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1dbaf53479e82f491d6d000e98a03b5abd7a5c42a30cc81a7fa115a088c2348a |
| MD5 | 341625e03afd9a41cc8c62089a10060a |
| BLAKE2b-256 | 0eb628ac357f650458d3faca86d9388b9218e1dc6935e92727b9e85008b71189 |