A Python package to benchmark query performance on PostgreSQL Database.

Project description

pgbenchmark

Python package to benchmark query performance on a PostgreSQL database. It allows you to measure the execution time of queries over multiple runs, providing detailed metrics about each run's performance.

Please consider

- Main purpose of this library is to easily microbenchmark queries without boilerplate.

- This tool is not for Database Administrators (Yet).

- There's a lot of re-thinking and work in progress ongoing on this project

- Most of the things will be backwards-compatible, but some things might deprecate/break in future releases.

- Since I'm developing this library as I go, mostly for my personal use, code here and there is sub-optimal.

Installation

pip install pgbenchmark

Example

For ParallelBenchmark, please scroll down....

import psycopg2

from pgbenchmark import Benchmark

conn = psycopg2.connect(

dbname="postgres",

user="postgres",

password=" << Your Password >> ",

host="localhost",

port="5432"

)

benchmark = Benchmark(db_connection=conn, number_of_runs=1000)

benchmark.set_sql("SELECT 1;")

for result in benchmark:

# {'run': X, 'sent_at': <DATETIME WITH MS>, 'duration': '0.000064'}

pass

""" View Summary """

print(benchmark.get_execution_results())

# {'runs': 1000,

# 'min_time': '0.000576',

# 'max_time': '0.014741',

# 'avg_time': '0.0007',

# 'median_time': '0.000642',

# 'percentiles': {'p25': '0.000612',

# 'p50': '0.000642',

# 'p75': '0.000696',

# 'p99': '0.001331'}

# }

You can also pass SQL file, instead of query string

benchmark.set_sql("./test.sql")

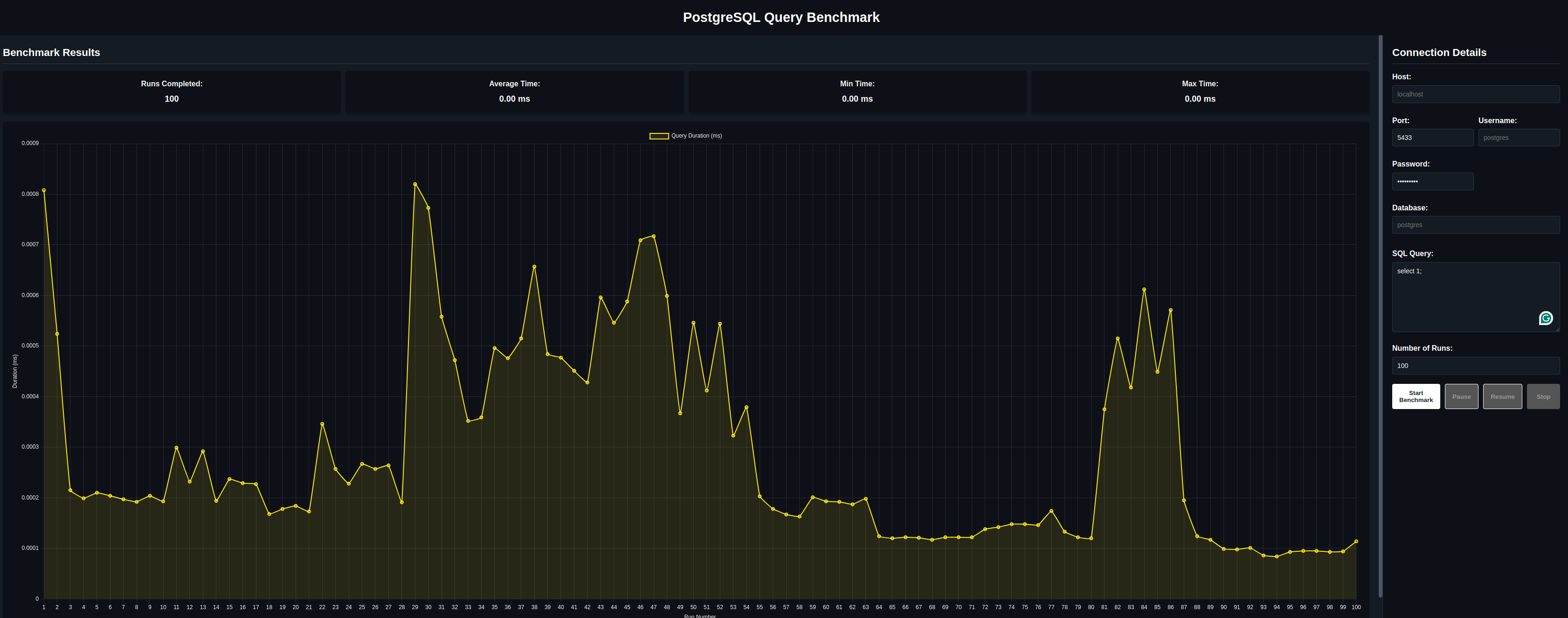

Interactive | No-Code Mode

Simply run in your terminal:

pgbenchmark

You'll see the ouput

[ http://127.0.0.1:8000 ] Click to open pgbenchmark Interface

Configuration on the right, rest is very intuitive.

Pause and Resume buttons are not working for now :(

More Examples

Standard 'Benchmark' class allow all kinds of connections

- Providing Nothing at all. Benchmark will use standard default factory values

from pgbenchmark import Benchmark

benchmark = Benchmark(number_of_runs=1000)

benchmark.set_sql("SELECT 1;")

for iteration in benchmark:

pass

- Providing Connection Details as Dict.

from pgbenchmark import Benchmark

params = {

"dbname": "postgres",

"host": "localhost",

"port": "5432",

"user": "postgres",

"password": "postgres",

}

benchmark = Benchmark(db_connection=params, number_of_runs=1000)

benchmark.set_sql("SELECT 1;")

for iteration in benchmark:

pass

- Psycopg2 connection object directly

from pgbenchmark import Benchmark

params = {

"dbname": "postgres",

"host": "localhost",

"port": "5432",

"user": "postgres",

"password": "postgres",

}

benchmark = Benchmark(db_connection=params, number_of_runs=1000)

benchmark.set_sql("SELECT 1;")

for iteration in benchmark:

pass

Example with Parallel execution

⚠️ Please be careful. If you are running on Linux, pgbenchmark will load your cores on 100% !!!⚠️

from pgbenchmark import ParallelBenchmark

pg_conn_params = {

"dbname": "postgres",

"user": "postgres",

"password": "",

"host": "localhost",

"port": "5432"

}

# --- Configuration ---

N_PROCS = 20

N_RUNS_PER_PROC = 1000

SQL_QUERY = "SELECT 1;"

parallel_bench = ParallelBenchmark(

num_processes=N_PROCS,

number_of_runs=N_RUNS_PER_PROC,

db_connection_info=pg_conn_params

)

parallel_bench.set_sql(SQL_QUERY)

if __name__ == '__main__':

print("===================== Simply `run()` and get results at the end ==============================")

parallel_bench.run()

print("===================== Or... Iterate Live and get results per-process =========================")

for result_from_process in parallel_bench.iter_successful_results():

print(result_from_process)

final_results = parallel_bench.get_execution_results()

Example with Template Engine

From version 0.1.0 pgbenchmark supports simple Template Engine for queries.

To emulate "real" scenarios, with different random or pre-defined queries, you can use set_sql_formatter method

to generate queries. Same syntax as Jinja2 using {{ [X] }} for variables.

def generate_random_value():

return round(random.randint(10, 1000), 2)

N_RUNS_PER_PROC = 1000

SQL_QUERY = "SELECT {{random_value}};"

# ....

parallel_bench.set_sql(SQL_QUERY)

"""===================== use `ANY` function to generate values for your query =============================="""

parallel_bench.set_sql_formatter(for_placeholder="random_value", generator=generate_random_value)

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file pgbenchmark-0.1.8.tar.gz.

File metadata

- Download URL: pgbenchmark-0.1.8.tar.gz

- Upload date:

- Size: 25.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

733f9e2ff6b6ec2f9ce300b0e07fc9826ad2277b4274701af19ef7599a27ccfc

|

|

| MD5 |

5e768873fc3044e1a90dcbbda4261768

|

|

| BLAKE2b-256 |

f12a0bc2726a27ee9b75d6a5cbe2d5fab0a4f32444632dc17d2c3f9db8511341

|

File details

Details for the file pgbenchmark-0.1.8-py3-none-any.whl.

File metadata

- Download URL: pgbenchmark-0.1.8-py3-none-any.whl

- Upload date:

- Size: 27.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

e9d3969ea4978a352117e9ce8f9a8fc4631cea81d6ddb4ec4dd4cc960049b55c

|

|

| MD5 |

2ad024991df4fcc03bdb59d166629ca5

|

|

| BLAKE2b-256 |

5a2896d59188c0acfe3c834ac3587733ac3fba94e07681feff51da34caa0a9c6

|