AI Toolkit for Engineers

phidata

A collection of AI Apps that you can run with 1 command 🚀

⭐️ it for when you need to spin up an AI project quickly.

⭐ Features:

- Powerful: Get a production-ready LLM App with 1 command.

- Simple: Built using a human-like Conversation interface to language models.

- Local first: Your app runs locally on docker with 1 command.

- Production Ready: Your app can be deployed to AWS with 1 command.

🚀 How it works

- Create your codebase using a template: phi ws create

- Run your app locally: phi ws up dev:docker

- Run your app on AWS: phi ws up prd:aws

💻 Quickstart: Build a RAG LLM App

Let's build a RAG LLM App with GPT-4. We'll use PgVector for Knowledge Base and Storage and serve the app using Streamlit and FastApi. Read the full tutorial here.

Install Docker Desktop to run this app locally.

Installation

Open the Terminal and create an ai directory with a python virtual environment.

mkdir ai && cd ai

python3 -m venv aienv

source aienv/bin/activate
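Before installing anything, it can help to confirm that the virtual environment is actually active. The following is a minimal sketch using only the Python standard library; the function name `in_virtualenv` is a hypothetical helper, not part of phidata.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter is running inside a venv."""
    # Inside a venv, sys.prefix points at the environment directory,
    # while sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
    print("interpreter prefix:", sys.prefix)
```

If the first line prints False, run the activate command again in the same terminal before continuing.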

Install phidata

pip install phidata
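To check that the install landed in the active environment, one option is to read the installed version from package metadata. This sketch relies only on the standard library `importlib.metadata` module; `installed_version` is a hypothetical helper name.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("phidata")
    if version is None:
        print("phidata is not installed in this environment")
    else:
        print("phidata version:", version)
```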

Create your codebase

Create your codebase using the llm-app template pre-configured with FastApi, Streamlit and PgVector. Use this codebase as a starting point for your LLM product.

phi ws create -t llm-app -n llm-app

This will create a folder named llm-app

Serve your LLM App using Streamlit

Streamlit allows us to build micro front-ends for our LLM App and is extremely useful for building basic applications in pure Python. Start the app group using:

phi ws up --group app

Press Enter to confirm and allow a few minutes for the image to download (only the first time). Verify container status and view logs on the docker dashboard.

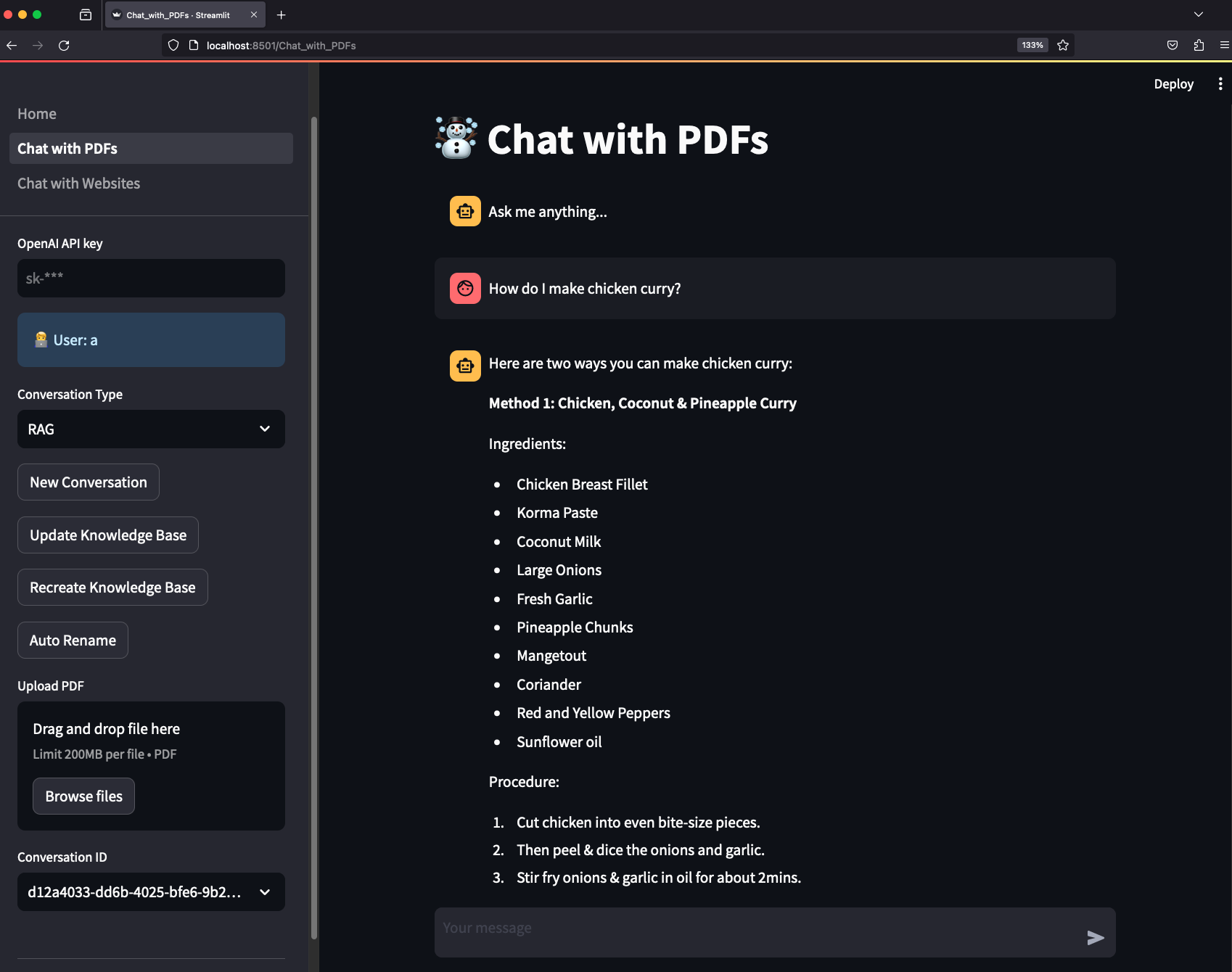
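As a complement to the docker dashboard, a quick TCP check can tell you whether the app is accepting connections yet. This is a rough sketch, assuming the Streamlit app listens on port 8501 as used in the next step; `port_is_open` is a hypothetical helper, and an open port only shows that something is listening, not that the app has finished loading.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False


if __name__ == "__main__":
    # 8501 is the Streamlit port used in this quickstart.
    print("streamlit reachable:", port_is_open("localhost", 8501))
```

The same helper could be pointed at the other ports mentioned in this guide once those groups are running.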

Example: Chat with PDFs

- Open localhost:8501 to view streamlit apps that you can customize and make your own.

- Click on Chat with PDFs in the sidebar

- Enter a username and wait for the knowledge base to load.

- Choose the RAG Conversation type.

- Ask "How do I make chicken curry?"

- Upload PDFs and ask questions

Serve your LLM App using FastApi

Streamlit is great for building micro front-ends, but most production applications are built with a front-end framework like Next.js backed by a REST API built with a framework like FastApi.

Your LLM App comes with ready-to-use FastApi endpoints. Start the api group using:

phi ws up --group api

Press Enter to confirm and allow a few minutes for the image to download.

View API Endpoints

- Open localhost:8000/docs to view the API Endpoints.

- Load the knowledge base using /v1/pdf/conversation/load-knowledge-base

- Test the /v1/pdf/conversation/chat endpoint with {"message": "How do I make chicken curry?"}

- The LLM Api comes pre-built with endpoints that you can integrate with your front-end.
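The endpoints listed above can also be called from a script instead of the docs page. The sketch below uses only the standard library and makes two assumptions that are not confirmed by this guide: that both endpoints accept POST requests with a JSON body, and that the load endpoint accepts an empty JSON object. Check localhost:8000/docs for the actual methods and schemas. `build_post` and `send` are hypothetical helper names.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"  # address of the api group from this guide


def build_post(path: str, payload: dict) -> urllib.request.Request:
    """Build a JSON POST request for an API path (not sent yet)."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        BASE_URL + path,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",  # assumption: verify the method on the /docs page
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send a request and return the response body as text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    try:
        # Assumption: the load endpoint accepts an empty JSON object.
        print(send(build_post("/v1/pdf/conversation/load-knowledge-base", {})))
        print(send(build_post(
            "/v1/pdf/conversation/chat",
            {"message": "How do I make chicken curry?"},
        )))
    except OSError as exc:
        # URLError and HTTPError are both subclasses of OSError.
        print("API call failed:", exc)
```

Separating request construction from sending keeps the payload easy to inspect before anything goes over the network.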

Optional: Run Jupyterlab

A Jupyter notebook is a must-have for AI development, and your llm-app comes with a notebook pre-installed with the required dependencies. Enable it by updating the workspace/settings.py file:

...
ws_settings = WorkspaceSettings(
    ...
    # Uncomment the following line
    dev_jupyter_enabled=True,
...

Start jupyter using:

phi ws up --group jupyter

Press Enter to confirm and allow a few minutes for the image to download (only the first time). Verify container status and view logs on the docker dashboard.

View Jupyterlab UI

- Open localhost:8888 to view the Jupyterlab UI. Password: admin

- Play around with cookbooks in the notebooks folder.

Delete local resources

When you are done experimenting, stop the workspace using:

phi ws down

Run your LLM App on AWS

Read how to run your LLM App on AWS here.

📚 More Information:

- Read the documentation

- Chat with us on Discord

- Email us at help@phidata.com


File details

Details for the file phidata-2.0.60.tar.gz.

File metadata

- Download URL: phidata-2.0.60.tar.gz

- Upload date:

- Size: 319.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.18

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2ee1436edd821c72dbbdebe3d277b4fc0b7660a9a540e66e806d02bacb48c1c1 |
| MD5 | e32ef2ee628ac649826c594ca20548fb |
| BLAKE2b-256 | 368f3b504504a61127c6bccdb644b7f86977b38568c97440613ecdfd80139244 |

File details

Details for the file phidata-2.0.60-py3-none-any.whl.

File metadata

- Download URL: phidata-2.0.60-py3-none-any.whl

- Upload date:

- Size: 475.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.18

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 30e0c7d6602731877f21625904ecd42211c92a3449510f26f6996e644879b729 |
| MD5 | a12fa95c70fad1447a294715295978a9 |
| BLAKE2b-256 | 46c1257a704901353fdebc6ab20ab0ed66cb1ef1bc511a4ed49e7debdd738e53 |
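If you download either file manually, the SHA256 digests listed above can be used to check its integrity. This is a minimal sketch using the standard library `hashlib` module; `sha256_of` and `verify` are hypothetical helper names, and the script assumes the files sit in the current directory under their original names.

```python
import hashlib
from pathlib import Path

# SHA256 digests copied from the file details above.
EXPECTED_SHA256 = {
    "phidata-2.0.60.tar.gz":
        "2ee1436edd821c72dbbdebe3d277b4fc0b7660a9a540e66e806d02bacb48c1c1",
    "phidata-2.0.60-py3-none-any.whl":
        "30e0c7d6602731877f21625904ecd42211c92a3449510f26f6996e644879b729",
}


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: Path) -> bool:
    """Return True if the file name is known and its digest matches."""
    expected = EXPECTED_SHA256.get(path.name)
    return expected is not None and sha256_of(path) == expected


if __name__ == "__main__":
    for name in EXPECTED_SHA256:
        candidate = Path(name)
        if candidate.exists():
            print(name, "OK" if verify(candidate) else "MISMATCH")
        else:
            print(name, "not found in the current directory")
```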