One-command local streaming server for PiEEG (8/16-channel EEG shield for Raspberry Pi)

Project description

PiEEG-server

Real-time EEG streaming platform for PiEEG (8/16 ch) and IronBCI (8 ch BLE)

Reads at 250 Hz · streams over WebSocket · live dashboard with spectral analysis, topographic maps, experiences gallery, VRChat OSC, Lab Streaming Layer, webhook automation — Raspberry Pi (SPI) or IronBCI (Bluetooth LE).

Try it now — no hardware needed, runs in demo mode with synthetic EEG data. Documentation — full guides, API reference, and integration docs. Chat with us — Join PiEEG Discord community

curl -sSL https://raw.githubusercontent.com/pieeg-club/PiEEG-server/main/install.sh | bash

|

Getting Started |

Features |

Integrations |

Reference |

Install

Pick one method — they all get you

pieeg-serverready to run.

| Method | Command | Notes |

|---|---|---|

| A) One-line (recommended) | curl -sSL https://raw.githubusercontent.com/pieeg-club/PiEEG-server/main/install.sh | bash |

Run sudo reboot first time to enable SPI |

| B) Clone & setup | git clone https://github.com/pieeg-club/PiEEG-server.git && cd PiEEG-server && ./setup.sh |

Same reboot note |

| C) pip | pip install pieeg-server |

Requires Python 3.10+ |

| D) pip + IronBCI | pip install pieeg-server[ironbci] |

Adds bleak for Bluetooth LE |

IronBCI / EAREEG users: install the BLE extra for Bluetooth Low Energy support:

pip install pieeg-server[ironbci]Then run with

pieeg-server --device ironbci8. See CLI Reference for BLE options.

Run

pieeg-server # stream 16 ch (PiEEG)

pieeg-server --device pieeg8 # 8-channel PiEEG shield

pieeg-server --device ironbci8 # IronBCI via Bluetooth LE

pieeg-server --device ironbci8 --ble-name MyBoard # custom BLE name

pieeg-server --filter # 1–40 Hz bandpass

pieeg-server --monitor # terminal sparklines

pieeg-server --mock # synthetic data, no hardware

pieeg-server --auth # require 6-digit access code

pieeg-server --osc # VRChat OSC bridge

pieeg-server --lsl # Lab Streaming Layer outlet

pieeg-server doctor # diagnose everything

| Port | Purpose |

|---|---|

:1616 |

WebSocket data stream |

:1617 |

Web dashboard |

Open http://raspberrypi.local:1617 in any browser on your network.

Quick Start

1. Install & launch

curl -sSL https://raw.githubusercontent.com/pieeg-club/PiEEG-server/main/install.sh | bash

sudo reboot # first time only, enables SPI

pieeg-server # or: pieeg-server --mock (no hardware)

2. Open the dashboard

Browse to http://raspberrypi.local:1617 — you'll see live waveforms immediately.

3. Connect from code

import asyncio, json, websockets

async def main():

async with websockets.connect("ws://raspberrypi.local:1616") as ws:

async for msg in ws:

frame = json.loads(msg)

print(f"#{frame['n']}: {frame['channels']}")

asyncio.run(main())

const ws = new WebSocket("ws://raspberrypi.local:1616");

ws.onmessage = (e) => {

const frame = JSON.parse(e.data);

console.log(`#${frame.n}:`, frame.channels);

};

websocat ws://raspberrypi.local:1616 # CLI one-liner

That's it. Every frame is plain JSON — no SDK, no binary protocol, works in any language that has WebSocket support.

Server Features

| Feature | Description |

|---|---|

| 250 Hz streaming | WebSocket (ws://<host>:1616), plain JSON, language-agnostic |

| 8 & 16 channel | PiEEG-8, PiEEG-16 (SPI) and IronBCI-8 (BLE) |

| IronBCI BLE | Bluetooth Low Energy support for IronBCI / EAREEG boards; auto-scan or direct MAC address |

| Bandpass filter | Butterworth IIR (SOS), per-channel state, adjustable live via WebSocket |

| CSV recording | Start/stop from dashboard or CLI; auto-timestamped; optional duration limit |

| Session annotations | Text notes on any frame; sidecar .annotations.json |

| Terminal monitor | Rich TUI with per-channel sparklines and µV readout; works over SSH |

| Mock mode | Realistic synthetic EEG (alpha rhythm, drift, noise, blink artifacts) |

| Authentication | Optional 6-digit code, rate limiting, HMAC timing-safe verify, HttpOnly cookies |

| VRChat OSC | Band powers via UDP OSC; chatbox + avatar parameters; rolling normalization |

| LSL | Push raw samples to LSL network; discoverable by OpenViBE, MNE, LabRecorder |

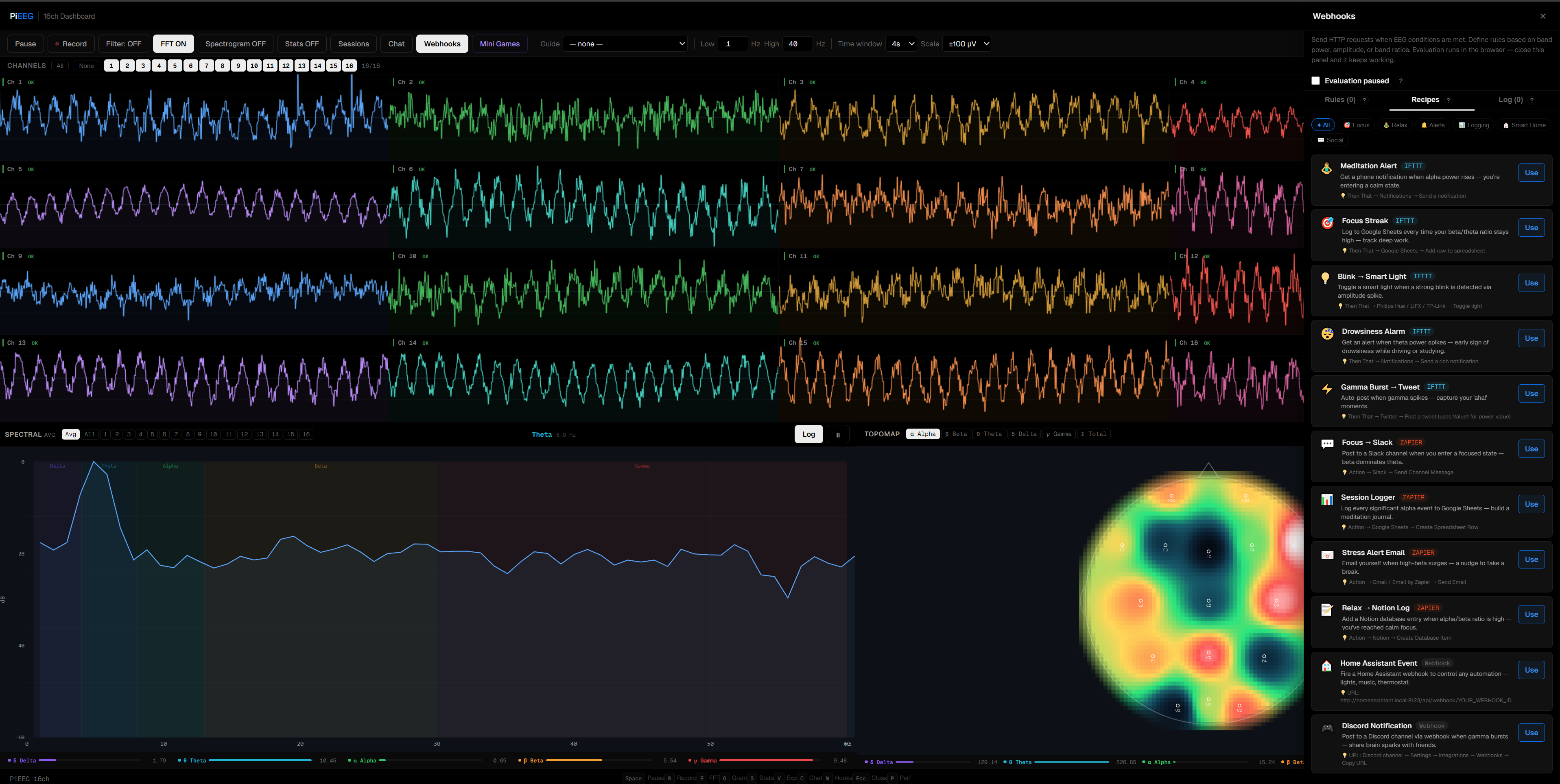

| Webhooks | HTTP callbacks on EEG events; IFTTT & Zapier presets; per-rule cooldown |

| Self-diagnostics | pieeg-server doctor checks Pi model, SPI/GPIO, ports, deps, systemd |

| Self-update | Detects pip/git install; checks PyPI or remote; one-click upgrade from dashboard |

| Systemd service | Auto-starts on boot; standard systemctl management |

| Zero-dep GPIO | Direct Linux chardev v1 ioctl; stable ABI since Linux 4.8 |

| Spike rejection | Auto-resets after sustained electrode contact changes |

| Cloud-ready | Dockerfile + Fly.io config for mock-mode demo hosting |

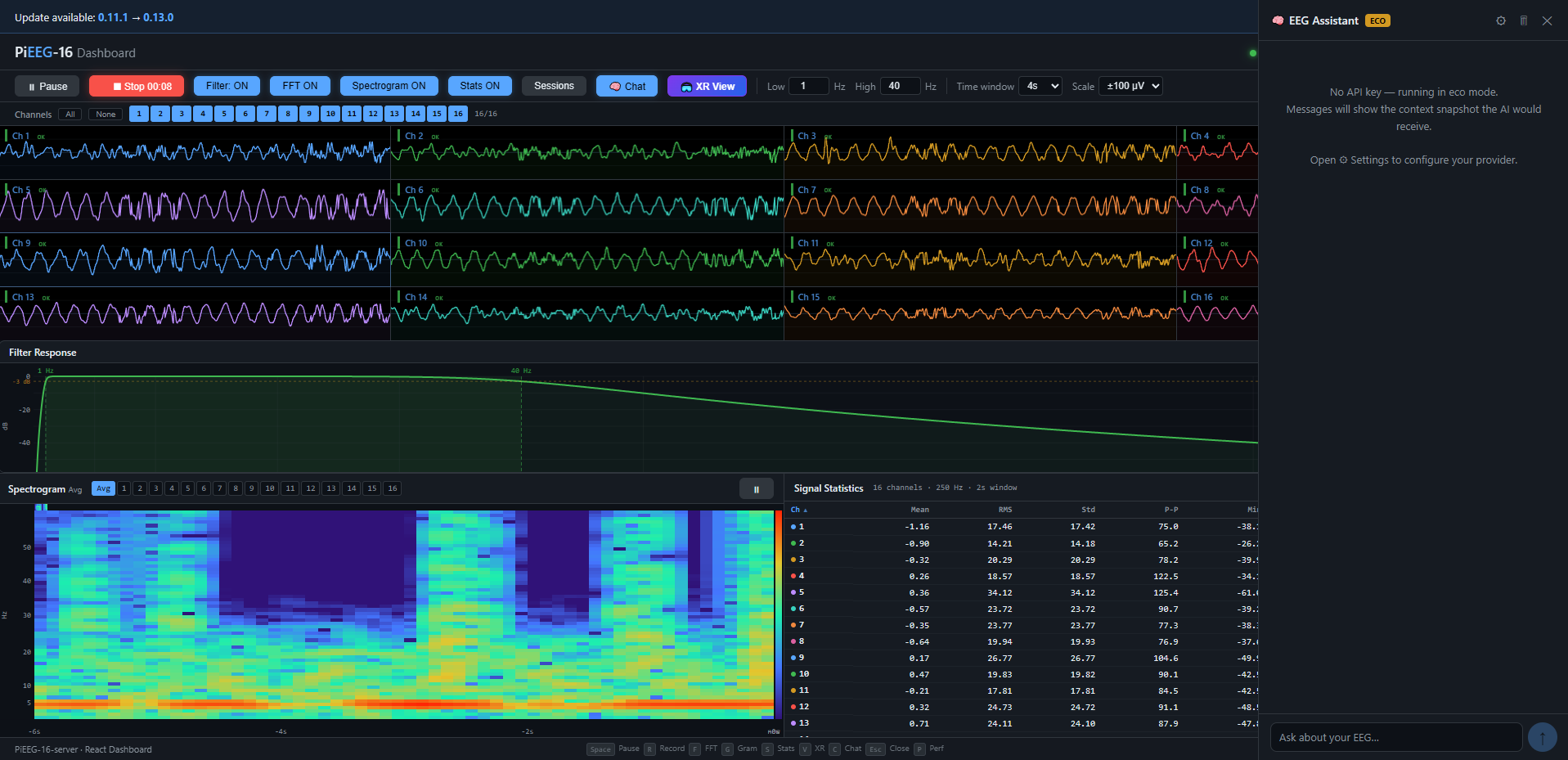

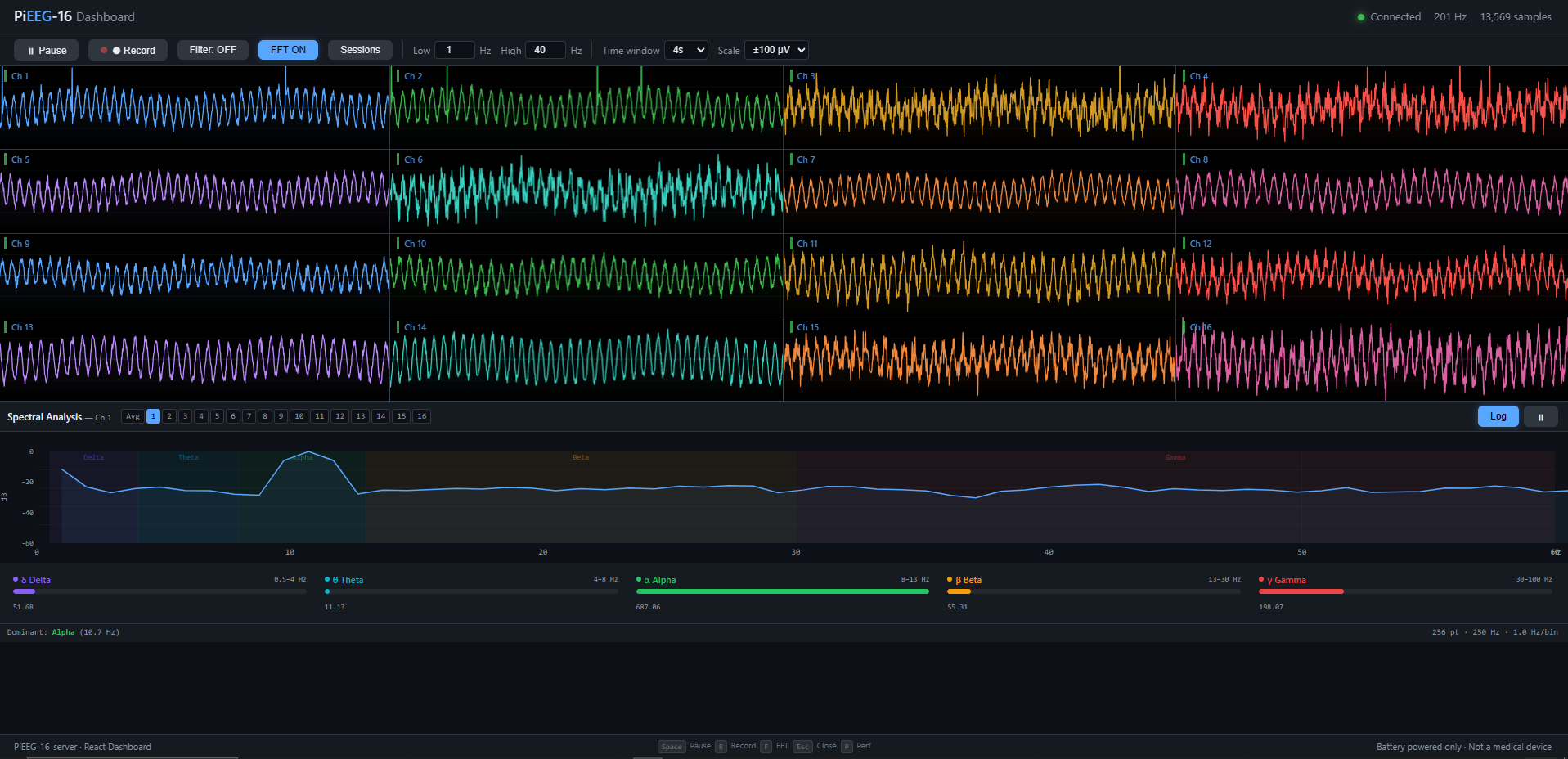

Dashboard Features

| Feature | Description |

|---|---|

| Real-time waveforms | Canvas 2D, adaptive quality; time window 2–16 s, Y-scale ±50–500 µV |

| Signal quality badges | Live per-channel RMS with color feedback (green / yellow / red / gray) |

| Channel detail panel | Click to expand: zoomed trace, FFT, band power bars, histogram, statistics |

| Spectral analysis | 256-point FFT in Web Worker; PSD (log dB / linear); band power bars δ θ α β γ |

| Spectrogram | Scrolling time-frequency heatmap (Turbo colormap, –60 → 0 dB) |

| Topographic map | IDW-interpolated scalp heatmap over 10-20 montage; selectable band metric |

| Statistics panel | 10 metrics per channel; sortable columns; CSV export |

| Filter preview | Live Butterworth magnitude response with –3 dB reference |

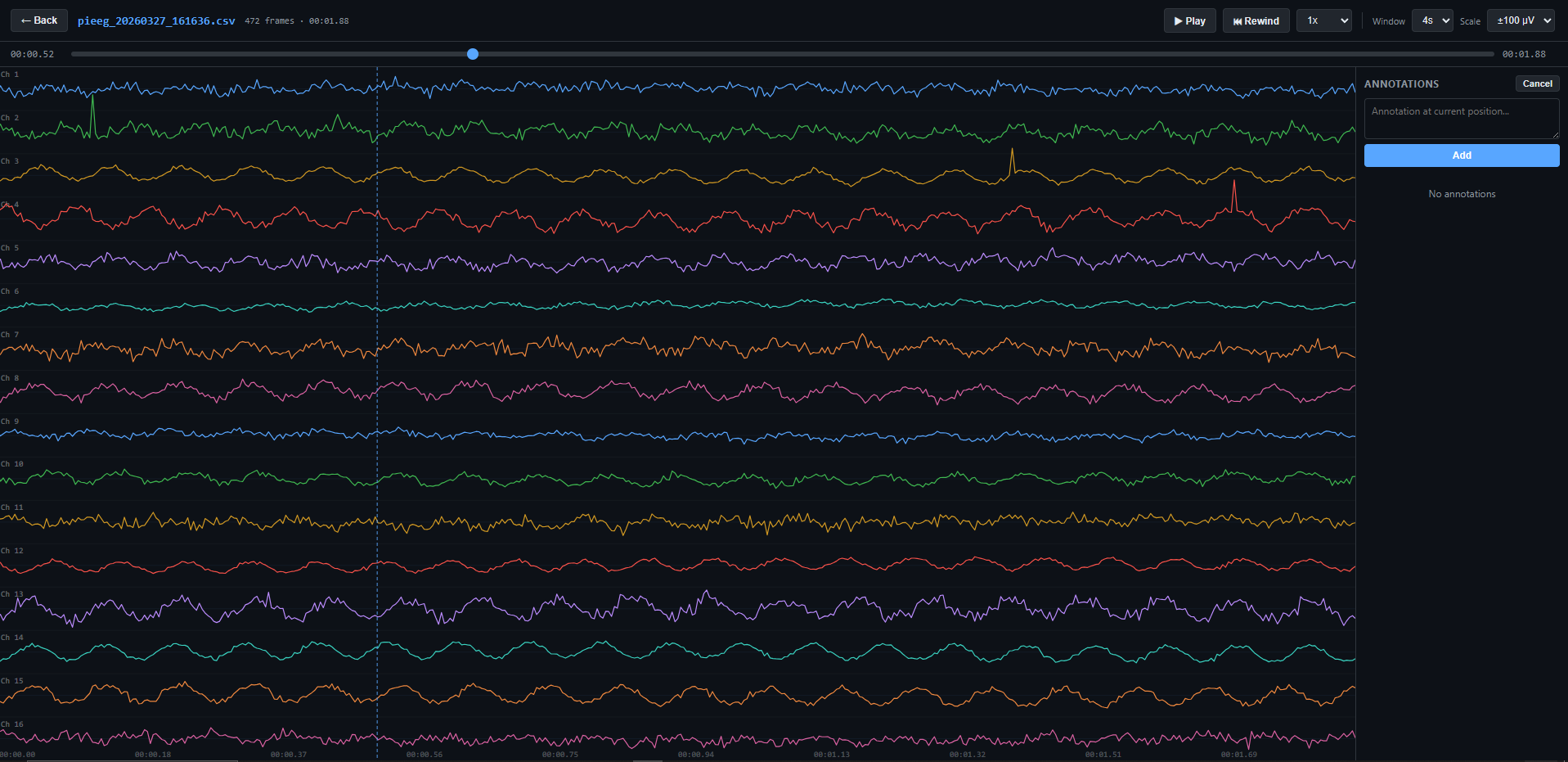

| Session library | Browse, replay recordings; play/pause, seek, speed control (0.5×–2×); annotations |

| AI chat assistant | BYO provider (OpenAI, Anthropic, Ollama, Groq, LM Studio); SSE streaming |

| Webhook panel | Visual rule builder; POST/PUT/PATCH/GET; Authorization headers; IFTTT & Zapier |

| Webcam feed | Optional video overlay |

| Performance monitor | FPS, frame time, JS heap overlay |

| Update banner | Version upgrade notification |

| Responsive | Desktop: all 16 channels. Mobile: 4-channel subset |

Keyboard Shortcuts

| Key | Action | Key | Action |

|---|---|---|---|

Space |

Toggle pause | V |

Experiences gallery |

R |

Toggle record | C |

Toggle chat |

F |

Toggle FFT | W |

Toggle webhooks |

G |

Toggle spectrogram | P |

Toggle perf monitor |

S |

Toggle stats | Esc |

Close overlays |

? |

Show shortcut help |

Experiences Gallery

Community-driven immersive EEG visualizations — lazy-loaded, card-based launcher, simple registry for contributors.

| Experience | Description |

|---|---|

| Neural Wave Space | Three.js 3D arc of 16 wave strips with amplitude-responsive color, starfield, WebXR + hand tracking |

| Blink Browser | Scroll articles via eye blinks; per-user calibration; frontal electrode monitoring |

| Neural Sonification | Brainwaves → live music; bands mapped to drone, FM pad, lead, harmonics, shimmer; DJ controls |

| VRChat OSC | Stream band powers into VRChat; chatbox + avatar parameter output; live config UI |

| Spoon Bend | Matrix-style telekinesis controlled by focus/beta/gamma; 3D spoon + digital rain |

| Webhook Wizard | Guided 60-second first-webhook setup; live EEG feedback; IFTTT/Zapier templates |

Creating an Experience

Time to first playable: ~15 minutes. One

.tsxfile + one line in the registry.

1. Create your component in dashboard/src/experiences/my-game/MyGame.tsx:

import { useRef, useEffect } from "react";

import type { ExperienceProps } from "../registry";

import { useFocus, useRelax, useBlink } from "../../hooks/detectors";

export default function MyGame({ eegData, onExit }: ExperienceProps) {

const { state: focus } = useFocus(eegData);

const { state: relax } = useRelax(eegData);

const { state: blink } = useBlink(eegData);

const canvasRef = useRef<HTMLCanvasElement>(null);

useEffect(() => {

let raf: number;

function loop() {

const f = focus.current.focus; // 0–1

const r = relax.current.relaxation; // 0–1

const b = blink.current.blinked; // true for one cycle per blink

// --- your game logic here ---

raf = requestAnimationFrame(loop);

}

raf = requestAnimationFrame(loop);

return () => cancelAnimationFrame(raf);

}, []);

return (

<div style={{ position: "fixed", inset: 0, background: "#000" }}>

<canvas ref={canvasRef} />

<button onClick={onExit} style={{ position: "absolute", top: 12, left: 12 }}>

← Exit

</button>

</div>

);

}

2. Register in dashboard/src/experiences/registry.ts:

const MyGameExperience = lazy(() => import("./my-game/MyGame"));

// Add to EXPERIENCES array:

{

id: "my-game",

name: "My Game",

description: "One-sentence summary.",

tag: "Focus",

gradient: ["#ec4899", "#8b5cf6"],

component: MyGameExperience,

author: "Your Name",

}

The gallery picks it up automatically. Each experience is code-split — no impact on initial load.

Client-side Detectors

Browser-side EEG processing hooks — React refs, zero re-renders. Read .current in your requestAnimationFrame loop.

import { useBandPowers, useBlink, useFocus, useRelax } from "../../hooks/detectors";

useBandPowers(eegData, config?)

Foundation layer. Single FFT instance, averaged spectral band powers.

| Field | Type | Description |

|---|---|---|

absolute |

BandPowers |

Absolute power per band (µV²/Hz) — Delta, Theta, Alpha, Beta, Gamma |

relative |

BandPowers |

Normalized to sum = 1 |

totalPower |

number |

Sum across all bands |

dominantFrequency |

number |

Peak PSD bin (Hz) |

Config: { updateHz?, channels?, smoothing? }

useBlink(eegData, config?)

Ocular artifact detector. Amplitude-threshold state machine on frontal channels (Fp1/Fp2).

| Field | Type | Description |

|---|---|---|

blinked |

boolean |

true for exactly one poll cycle per blink |

count |

number |

Cumulative blink count |

amplitude |

number |

Current peak-to-peak µV |

lastBlinkTime |

number |

Epoch ms of last confirmed blink |

Config: { channels?, threshold?, windowMs?, minDurationMs?, maxDurationMs?, refractoryMs?, pollHz? }

useFocus(eegData, config?)

Cortical engagement index — (Beta + Gamma) / (Alpha + Theta + Delta).

| Field | Type | Description |

|---|---|---|

focus |

number |

0 (relaxed) – 1 (highly focused), smoothed |

raw |

number |

Unsmoothed, uncalibrated ratio |

calibrated |

boolean |

Whether baseline has been captured |

Config: { channels?, updateHz?, smoothing?, scaleDivisor? } · Returns: { state, calibrate(), resetCalibration(), calibrating }

useRelax(eegData, config?)

Alpha-dominance + theta-beta ratio composite relaxation index.

| Field | Type | Description |

|---|---|---|

relaxation |

number |

0 (alert) – 1 (deeply relaxed), smoothed |

alphaRelative |

number |

Alpha / total power (0–1) |

thetaBetaRatio |

number |

θ / β raw ratio |

calibrated |

boolean |

Whether baseline has been captured |

Config: { channels?, updateHz?, smoothing?, alphaWeight?, tbrCeiling? } · Returns: { state, calibrate(), resetCalibration(), calibrating }

WebSocket API

Plain WebSocket, UTF-8 JSON frames on ws://<host>:1616. No SDK needed.

Connection

| Scenario | URL |

|---|---|

| No auth | ws://raspberrypi.local:1616 |

| With auth | ws://raspberrypi.local:1616?token=<ws-token> |

Authenticated connection flow (TypeScript)

With --auth enabled: POST /auth with the 6-digit code → GET /auth/ws-token → connect with ?token=....

const dashboardBase = "http://raspberrypi.local:1617";

const wsBase = "ws://raspberrypi.local:1616";

async function connectAuthenticated(code: string): Promise<WebSocket> {

await fetch(`${dashboardBase}/auth`, {

method: "POST",

headers: { "Content-Type": "application/json" },

credentials: "include",

body: JSON.stringify({ code }),

});

const { token } = await fetch(`${dashboardBase}/auth/ws-token`, {

credentials: "include",

}).then((r) => r.json());

const ws = new WebSocket(`${wsBase}?token=${encodeURIComponent(token)}`);

ws.addEventListener("message", (e) => {

const frame = JSON.parse(e.data as string);

console.log(`#${frame.n}:`, frame.channels);

});

return ws;

}

Commands (Client → Server)

Filter

{"cmd": "set_filter", "enabled": true, "lowcut": 1.0, "highcut": 40.0}

{"cmd": "set_filter", "enabled": false}

Recording

{"cmd": "start_record"}

{"cmd": "stop_record"}

Webhooks

{"cmd": "webhook_list"}

{"cmd": "webhook_create", "rule": {"name": "Alpha alert", "trigger_type": "band_power_above", "params": {"band": "alpha", "threshold": 20}, "url": "https://example.com/hook", "method": "POST", "headers": {"Authorization": "Bearer ..."}, "cooldown": 30}}

{"cmd": "webhook_update", "rule": {"id": "abc123", "enabled": false}}

{"cmd": "webhook_delete", "id": "abc123"}

{"cmd": "webhook_test", "id": "abc123"}

VRChat OSC

{"cmd": "osc_start"}

{"cmd": "osc_stop"}

{"cmd": "osc_configure", "host": "127.0.0.1", "port": 9000, "mode": "both", "interval": 0.25}

{"cmd": "osc_status"}

Lab Streaming Layer

{"cmd": "lsl_start"}

{"cmd": "lsl_stop"}

{"cmd": "lsl_status"}

Data Format

Every frame from the WebSocket is a JSON object:

{ "t": 1711234567.123, "n": 42, "channels": [12.34, -5.67, 8.90, ...] }

| Field | Type | Description |

|---|---|---|

t |

float | Unix timestamp (seconds, 6-decimal precision) |

n |

int | Sample number (monotonic, incremental) |

channels |

float[] | µV per channel (8 or 16 elements) |

Welcome message (on connect)

{

"status": "connected",

"sample_rate": 250,

"channels": 16,

"filter": false,

"recording": false

}

EEG Frequency Bands

| Band | Range | Color |

|---|---|---|

| Delta (δ) | 0.5–4 Hz | 🟣 #8b5cf6 |

| Theta (θ) | 4–8 Hz | 🔵 #06b6d4 |

| Alpha (α) | 8–13 Hz | 🟢 #22c55e |

| Beta (β) | 13–30 Hz | 🟡 #f59e0b |

| Gamma (γ) | 30–100 Hz | 🔴 #ef4444 |

Webhooks

HTTP callbacks fired when EEG conditions are met. Build rules in the dashboard or via WebSocket commands.

Trigger Types

| Type | Fires when… |

|---|---|

band_power_above |

Band power exceeds threshold |

band_power_below |

Band power drops below threshold |

amplitude_above |

Peak amplitude exceeds threshold |

amplitude_below |

Peak amplitude drops below threshold |

band_ratio_above |

Band ratio (e.g. β/θ) exceeds threshold |

band_ratio_below |

Band ratio drops below threshold |

Payload

{

"event": "band_power_above",

"rule": "Alpha alert",

"value": 23.45,

"threshold": 20,

"channel": 0,

"timestamp": 1711234567.12

}

Each rule has an independent cooldown (seconds) to prevent spam. Enforced server-side.

IFTTT & Zapier

Webhook rules support three service modes — Generic, IFTTT, Zapier — selectable per rule.

| Service | Setup | Payload shape |

|---|---|---|

| Get key at ifttt.com/maker_webhooks → Documentation | value1 (value), value2 (trigger type), value3 (full JSON) |

|

| Create Zap → Webhooks trigger (Catch Hook) → paste URL | Flat JSON: event, rule, value, threshold, channel, band, timestamp, source |

|

| Generic | Any POST-accepting endpoint (n8n, Home Assistant, Node-RED…) | event, rule, value, threshold, channel, timestamp |

Example payloads

// IFTTT

{"value1": "23.45", "value2": "band_power_above", "value3": "{\"event\":\"band_power_above\",\"rule\":\"Alpha alert\",\"value\":23.45,\"threshold\":20}"}

// Zapier

{"event": "band_power_above", "rule": "Alpha alert", "value": 23.45, "threshold": 20, "channel": 0, "band": "alpha", "timestamp": 1711234567.12, "source": "pieeg"}

// Generic

{"event": "band_power_above", "rule": "Alpha alert", "value": 23.45, "threshold": 20, "channel": 0, "timestamp": 1711234567.12}

VRChat OSC

Stream EEG band powers to VRChat via UDP OSC. Enable with --osc or from the dashboard.

pieeg-server --osc # defaults

pieeg-server --osc --osc-mode chatbox --osc-interval 0.5 # chatbox only, 2 Hz

| Mode | Output |

|---|---|

chatbox |

Band powers as text in VRChat chatbox |

parameters |

Avatar parameter floats (normalized 0–1) |

both |

Chatbox + avatar parameters (default) |

WebSocket commands: osc_start, osc_stop, osc_configure, osc_status — see WebSocket API.

Lab Streaming Layer

Push raw EEG samples to the LSL network. Discoverable by OpenViBE, MNE-LSL, BCI2000, NeuroPype, LabRecorder.

pieeg-server --lsl # enable on startup

pieeg-server --lsl --lsl-name MyEEG # custom stream name

Toggle at runtime via dashboard or WebSocket (lsl_start / lsl_stop).

Receive in Python:

from pylsl import StreamInlet, resolve_stream

streams = resolve_stream('name', 'PiEEG')

inlet = StreamInlet(streams[0])

sample, timestamp = inlet.pull_sample()

Record & Replay

pieeg-server record session.csv # standalone, Ctrl-C to stop

pieeg-server record session.csv --duration 300 # 5 minutes

pieeg-server --record session.csv # record while streaming

| Feature | Details |

|---|---|

| Format | CSV: timestamp, ch1, ch2, ..., chN |

| Location | recordings/pieeg_YYYYMMDD_HHMMSS.csv |

| Annotations | Frame-based text markers; sidecar .annotations.json |

| Playback | Dashboard session library: play/pause, seek, speed (0.5×–2×) |

| Export | CSV with annotation column, or JSON with metadata |

Jupyter Notebooks

| # | Notebook | Description |

|---|---|---|

| 1 | 01_load_and_plot_session |

Load CSV or stream live, plot all channels |

| 2 | 02_detect_blinks |

Eye-blink artifact detection (threshold-based) |

| 3 | 03_bandpower_and_alpha |

Band powers via Welch's method, alpha tracking |

| 4 | 04_export_features_for_ml |

Windowed features → ML-ready CSV (scikit-learn) |

cd notebooks && pip install -r requirements.txt

pieeg-server --mock &

jupyter lab

CLI Reference

pieeg-server [OPTIONS] [COMMAND]

Commands

| Command | Description |

|---|---|

doctor |

Diagnose hardware, software, and configuration |

record FILE |

Record EEG to CSV (standalone, no server) |

monitor |

Live terminal display (standalone, no server) |

Server Options

| Flag | Default | Description |

|---|---|---|

--device DEVICE |

pieeg16 |

pieeg8, pieeg16, or ironbci8 |

--ble-name NAME |

EAREEG |

BLE advertised device name (IronBCI only) |

--ble-address ADDR |

— | BLE MAC address — skip scan, connect directly |

--host HOST |

0.0.0.0 |

Bind address |

--port PORT |

1616 |

WebSocket port |

--dashboard-port PORT |

1617 |

Dashboard HTTP port |

--no-dashboard |

— | Disable web dashboard |

--auth |

— | Enable 6-digit access code |

--gpio-chip PATH |

/dev/gpiochip4 |

GPIO chip device |

--filter |

— | Enable 1–40 Hz bandpass filter |

--lowcut HZ |

1.0 |

Filter low cutoff |

--highcut HZ |

40.0 |

Filter high cutoff |

--record FILE |

— | Record to CSV while streaming |

--record-duration SEC |

— | Stop recording after N seconds |

--monitor |

— | Show terminal monitor alongside server |

--mock |

— | Synthetic EEG (no hardware needed) |

--no-webhooks |

— | Disable webhooks |

--osc |

— | Enable VRChat OSC bridge |

--osc-host HOST |

127.0.0.1 |

VRChat receiver |

--osc-port PORT |

9000 |

VRChat OSC port |

--osc-mode MODE |

both |

chatbox, parameters, or both |

--osc-interval SEC |

0.25 |

OSC update rate |

--lsl |

— | Enable Lab Streaming Layer outlet |

--lsl-name NAME |

PiEEG |

LSL stream name |

-v, --verbose |

— | Debug logging |

Architecture

┌──────────────────────────────────────────────────────────┐

│ Raspberry Pi + PiEEG Shield (8 or 16 ch) │

│ — or — IronBCI / EAREEG board (8 ch, Bluetooth LE) │

│ │

│ hardware.py → SPI/GPIO init, ADS1299 config (PiEEG) │

│ ironbci.py → BLE scan + GATT notifications (IronBCI)│

│ ↓ │

│ acquisition.py → 250 Hz read loop (SPI or BLE) │

│ ↓ pub/sub │

│ ├── server.py → WebSocket broadcast │

│ ├── recorder.py → CSV writer │

│ ├── monitor.py → Terminal sparklines │

│ ├── osc_vrchat.py → VRChat OSC bridge │

│ └── lsl.py → Lab Streaming Layer outlet │

│ │

│ webhooks.py → event rules + HTTP relay │

│ dashboard.py → HTTP server (React UI) │

│ auth.py → session + WS token management │

│ filters.py → Butterworth bandpass (SOS) │

│ updater.py → version check + upgrade │

│ doctor.py → system diagnostics │

│ ↓ │

│ ws://0.0.0.0:1616 │ http://0.0.0.0:1617 │

└──────────┬───────────────────────────────────────────────┘

│ Local network / internet

├── Browser dashboard (React + Vite)

├── Python / Jupyter notebook

├── VRChat (OSC over UDP)

├── LSL consumers (OpenViBE, MNE, LabRecorder…)

├── IFTTT / Zapier (webhooks)

└── Any WebSocket client

Dashboard Tech Stack

| Module | Responsibility |

|---|---|

hardware.py |

SPI/GPIO initialization, ADS1299 register config (PiEEG) |

ironbci.py |

BLE scan, GATT notifications, ADS1299 packet parsing (IronBCI) |

acquisition.py |

250 Hz read loop (SPI, BLE, or mock), pub/sub queues |

| Layer | Tech |

|---|---|

| Framework | React 19 |

| Bundler | Vite 6 |

| Rendering | Canvas 2D (waveforms, spectra), Three.js (WebXR) |

| FFT | Pure-JS Cooley-Tukey radix-2 (256-point, Hanning window) |

| State | React hooks + refs (no external state library) |

| Styling | Plain CSS |

Systemd Service

Setup creates a service that auto-starts on boot:

sudo systemctl status pieeg-server

sudo systemctl restart pieeg-server

journalctl -u pieeg-server -f

Authentication

Start with --auth to require a 6-digit access code (printed at startup, changes every restart). Sessions persist 24 hours via HttpOnly cookies.

╔══════════════════════════════════════╗

║ DASHBOARD ACCESS CODE ║

║ Code: 847291 ║

╚══════════════════════════════════════╝

| Layer | Protection |

|---|---|

| Access code | HMAC timing-safe comparison |

| Sessions | secrets.token_urlsafe (256-bit), 24h TTL |

| Cookies | HttpOnly, SameSite=Lax |

| WebSocket | Short-lived single-use token |

| Rate limit | 5 attempts / 60s per IP |

| Recordings | Path traversal protection |

| CORS | Origin-reflecting (not wildcard) |

Without

--auth, dashboard and WebSocket are open to your local network.

Development

git clone https://github.com/pieeg-club/PiEEG-server.git

cd PiEEG-server

python -m venv .venv && source .venv/bin/activate # Windows: .venv\Scripts\Activate.ps1

pip install -e ".[dev,ironbci]"

Dashboard (React + Vite)

# Terminal 1

pieeg-server --mock

# Terminal 2

cd dashboard && npm install && npm run dev # → http://localhost:3000

Vite proxies /auth and /ws to the Python server.

Build: cd dashboard && npm run build — output committed to pieeg_server/static/dashboard/ so pip users don't need Node.

Auto-build hook:

cp scripts/pre-commit .git/hooks/pre-commit && chmod +x .git/hooks/pre-commit

Tests

pytest # all tests

pytest -v # verbose

Release

./scripts/build_release.sh # React build → wheel → dist/

./scripts/build_release.sh --upload # + upload to PyPI

- Bump version in

pyproject.toml+pieeg_server/__init__.py git commit -am "release v0.X.0" && git tag v0.X.0./scripts/build_release.sh --upload && git push origin main --tags

Security

Designed for trusted local networks (home lab, research bench). Not hardened for the public internet.

Assumes: private network, physical access to Pi, trusted client devices.

Does NOT defend against: MITM (no TLS), public internet exposure, untrusted users on the same network.

| Not secured | Mitigation |

|---|---|

| No TLS | Dedicated network; or nginx/caddy reverse proxy with self-signed cert |

| No user accounts | Single-user device on home network |

| No CSP headers | Static React build, no user-generated content |

| In-memory sessions | By design — code changes on restart |

| 6-digit code (900k possibilities) | Rate limiting + trusted network; use firewall/VLAN if concerned |

For sensitive deployments: private network only → TLS termination (nginx/caddy) → firewall rules → VPN (WireGuard/Tailscale) for remote access.

Troubleshooting

pieeg-server doctor # checks Pi model, SPI, GPIO, ports, deps, systemd

# exit 0 = ok, 1 = warnings, 2 = errors

Windows

Windows only supports mock mode (--mock). If pip install -e . fails with WinError 2:

pip uninstall pieeg-server -y

pip install -e .

Or use the bundled wrapper: .\pieeg-server.cmd --mock

GPIO: no gpiod dependency

The server talks to GPIO directly via Linux chardev v1 ioctl — no gpiod pip package. Two GPIO pins (chip-select + data-ready) use ~20 lines of struct packing instead of an external dep that has breaking v1/v2 API changes, requires libgpiod-dev C headers, and only works on Linux. The chardev ABI has been stable since Linux 4.8 (2016).

Safety

PiEEG must operate from battery power (5V) only. Do NOT connect to mains-powered equipment via USB. PiEEG is NOT a medical device.

Acknowledgments

Built with guidance from Ildar Rakhmatulin, PhD, creator of the PiEEG platform.

License

MIT

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file pieeg_server-0.28.0.tar.gz.

File metadata

- Download URL: pieeg_server-0.28.0.tar.gz

- Upload date:

- Size: 614.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

d050a8c68a9c4d2833843d193a90826f9355cb7fb891cbbed5dc4a26142f8ed0

|

|

| MD5 |

b16a4107b422ad28a4efca22794b75a7

|

|

| BLAKE2b-256 |

6432084feab642b0e341850c9d9cd6e1354cb0d7e6422c2734561f5313d9372f

|

Provenance

The following attestation bundles were made for pieeg_server-0.28.0.tar.gz:

Publisher:

release.yml on pieeg-club/PiEEG-server

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

pieeg_server-0.28.0.tar.gz -

Subject digest:

d050a8c68a9c4d2833843d193a90826f9355cb7fb891cbbed5dc4a26142f8ed0 - Sigstore transparency entry: 1297231212

- Sigstore integration time:

-

Permalink:

pieeg-club/PiEEG-server@adabd5c7082c95a43a90a2dbbc8000113294bd03 -

Branch / Tag:

refs/tags/v0.28.0 - Owner: https://github.com/pieeg-club

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release.yml@adabd5c7082c95a43a90a2dbbc8000113294bd03 -

Trigger Event:

push

-

Statement type:

File details

Details for the file pieeg_server-0.28.0-py3-none-any.whl.

File metadata

- Download URL: pieeg_server-0.28.0-py3-none-any.whl

- Upload date:

- Size: 589.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

1c54b831baabf68001759b073e2121c46232b58d1e8c85804250251ad248220d

|

|

| MD5 |

47d57a6d6631a514760ce8fc3c54f803

|

|

| BLAKE2b-256 |

a82dcf89249ddfa0458811e64fc9c68f57b1a5ea2257a98f88d87b9c6c7d423d

|

Provenance

The following attestation bundles were made for pieeg_server-0.28.0-py3-none-any.whl:

Publisher:

release.yml on pieeg-club/PiEEG-server

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

pieeg_server-0.28.0-py3-none-any.whl -

Subject digest:

1c54b831baabf68001759b073e2121c46232b58d1e8c85804250251ad248220d - Sigstore transparency entry: 1297231385

- Sigstore integration time:

-

Permalink:

pieeg-club/PiEEG-server@adabd5c7082c95a43a90a2dbbc8000113294bd03 -

Branch / Tag:

refs/tags/v0.28.0 - Owner: https://github.com/pieeg-club

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release.yml@adabd5c7082c95a43a90a2dbbc8000113294bd03 -

Trigger Event:

push

-

Statement type: