Generate multi-table relational datasets with behavioral trajectories, correlations, and causal lags. Config-driven, deterministic, no real data required.

Project description

Generate multi-table relational datasets where every metric tells the same story. Config-driven. No real data required.

    pip install plotsim

plotsim generates multi-table relational datasets from a behavioral description. You define metrics, segments, and how entities behave over time, and the engine produces a star schema where every value traces back to one trajectory. Each entity follows a behavioral trajectory, and every metric across every table reads from the same trajectory position. When engagement rises, revenue follows. When it declines, churn fires.

Quick start

    from plotsim import create, generate_tables

    cfg = create(
        about="Subscription customers",
        unit="customer",
        window=("2024-01", "2024-12", "monthly"),
        metrics=[
            {"name": "engagement", "type": "score", "polarity": "positive"},
            {"name": "payments", "type": "count", "polarity": "positive"},
        ],
        segments=[
            {"name": "active", "count": 50, "archetype": "growth"},
            {"name": "inactive", "count": 30, "archetype": "decline"},
        ],
    )

    tables = generate_tables(cfg)

    for name, df in tables.items():
        print(f"{name}: {len(df)} rows")

    # dim_date: 12 rows
    # dim_customer: 80 rows
    # fct_customer: 960 rows
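The row counts in the sample output follow from the config: 12 monthly periods, 50 + 30 = 80 customers, and 12 × 80 = 960 fact rows. The sketch below reproduces that arithmetic. It assumes one fact row per entity per period and the `dim_<unit>` / `fct_<unit>` naming seen in the output above; `month_count` and `expected_rows` are hypothetical helpers, not part of plotsim.

```python
def month_count(start, end):
    """Number of months from start to end, inclusive ("YYYY-MM" strings)."""
    y0, m0 = map(int, start.split("-"))
    y1, m1 = map(int, end.split("-"))
    return (y1 - y0) * 12 + (m1 - m0) + 1


def expected_rows(unit, window, segments):
    """Predict table sizes, assuming one fact row per entity per period."""
    start, end, grain = window
    if grain != "monthly":
        raise ValueError("this sketch only handles monthly windows")
    periods = month_count(start, end)
    entities = sum(segment["count"] for segment in segments)
    return {
        "dim_date": periods,
        f"dim_{unit}": entities,
        f"fct_{unit}": periods * entities,
    }


rows = expected_rows(
    unit="customer",
    window=("2024-01", "2024-12", "monthly"),
    segments=[{"name": "active", "count": 50}, {"name": "inactive", "count": 30}],
)
print(rows)
```

A check like this can be useful as a smoke test after generation, on the assumption that the real tables follow the same one-row-per-period layout.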

What you get

A complete dataset of CSV (or Parquet) files, ready to load into a warehouse, notebook, or BI tool. Same config plus same seed produces byte-identical output every time — see the output guide for details.

Who is this for

Educators and students who need realistic datasets for SQL courses, data modeling workshops, analytics training, or portfolio projects — five domain templates ready to go, same seed produces the same data every time.

Data engineers who need test fixtures that behave like production data — with FK integrity, realistic distributions, and configurable corruption — without copying production or hand-rolling three-row CSVs.

Data scientists who need labeled training data with known ground truth — archetype labels, trajectory positions, and temporal holdout splits — to validate models before touching real data.

Analytics engineers who need a star schema to build dbt models, test transformations, or demonstrate a pipeline end-to-end without waiting for upstream data.

BI and analytics teams who need a populated star schema to build dashboards, test reports, or demo a new tool to stakeholders — dims, facts, events, and SCD versioning out of the box.

Demo builders who need a convincing dataset for a conference talk, a product walkthrough, or a proof of concept — correlated metrics that tell a realistic story, not random noise.

How it works

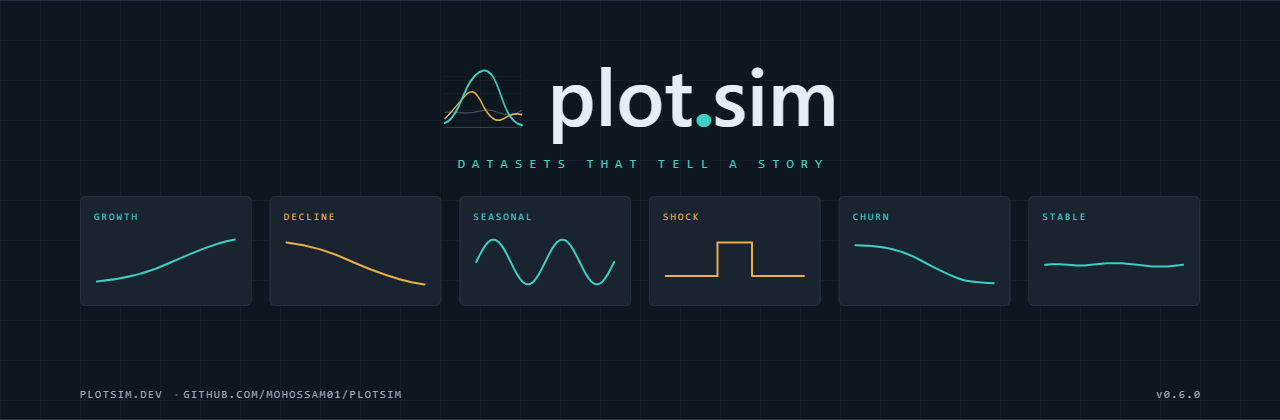

Every entity in the dataset follows a behavioral trajectory — a curve shape like growth, decline, seasonal, or spike-then-crash. At each time period, the entity's position on that curve determines every metric value across every table. Revenue, engagement, churn risk, and support tickets all read from the same position, so they move together the way real business metrics do.
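As a rough illustration of the idea, the sketch below derives three metrics from a single trajectory position. It is not plotsim's implementation: the logistic curve, the scaling factors, and the names `growth_position` and `metrics_at` are all assumptions made for the example.

```python
import numpy as np


def growth_position(n_periods):
    """Hypothetical 'growth' archetype: a logistic curve rising from ~0 to ~1."""
    t = np.linspace(-3.0, 3.0, n_periods)
    return 1.0 / (1.0 + np.exp(-t))


def metrics_at(position):
    """Read every metric from the same trajectory position (illustrative scaling)."""
    return {
        "engagement": np.round(100 * position, 1),        # score, rises with position
        "payments": np.round(10 * position).astype(int),  # count, rises with position
        "churn_risk": np.round(1.0 - position, 3),        # opposes position
    }


position = growth_position(12)
metrics = metrics_at(position)
for name, values in metrics.items():
    print(name, values[:3], "...", values[-3:])
```

Because all three series are functions of the same position, engagement and payments rise together while churn risk falls, with no per-metric tuning needed to keep them consistent.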

Metric relationships are enforced through a Gaussian copula: declare that engagement opposes churn_risk and the engine delivers the configured correlation coefficient regardless of whether one metric is beta-distributed and the other is Poisson. Causal lags compose: if A → B (lag 2) → C (lag 3), then C reflects A from 5 periods ago.
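To make the copula idea concrete, here is a minimal NumPy-only sketch, not plotsim's code. It draws correlated normals, then reorders independently drawn beta and Poisson samples so their ranks match the normals (a rank-matching form of the Gaussian copula). The distribution parameters and the name `copula_pair` are assumptions for illustration, and the achieved Pearson correlation only approximates the configured value.

```python
import numpy as np


def copula_pair(n, rho, seed=0):
    """Couple a beta metric and a Poisson metric through a Gaussian copula."""
    rng = np.random.default_rng(seed)

    # Step 1: correlated standard normals carry the dependence structure.
    cov = np.array([[1.0, rho], [rho, 1.0]])
    z = rng.multivariate_normal(np.zeros(2), cov, size=n)

    # Step 2: draw each marginal independently (parameters are illustrative).
    engagement_raw = rng.beta(2.0, 5.0, size=n)
    churn_raw = rng.poisson(4.0, size=n)

    # Step 3: give the k-th smallest normal the k-th smallest marginal value,
    # so each marginal is unchanged but the joint ranks follow the normals.
    ranks = z.argsort(axis=0).argsort(axis=0)
    engagement = np.sort(engagement_raw)[ranks[:, 0]]
    churn_risk = np.sort(churn_raw)[ranks[:, 1]]
    return engagement, churn_risk


engagement, churn_risk = copula_pair(n=2000, rho=-0.6, seed=7)
print("pearson r:", np.corrcoef(engagement, churn_risk)[0, 1])
```

The marginal distributions are untouched by the reordering, which is why the approach works for mixed continuous and discrete metrics.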

Output is deterministic. Every random draw flows through a single seeded numpy.random.Generator. Same config + same seed = byte-identical tables across machines, OS, and Python versions. The manifest records every generation decision (archetype assignments, trajectory positions, correlation adjustments, quality injections) so any cell value can be traced back to its origin.
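The single-generator pattern can be sketched as follows. This is an assumption-laden illustration rather than plotsim's pipeline: `generate` and `digest` are hypothetical names, and the sketch only demonstrates repeatability within one environment, not the cross-platform guarantee described above.

```python
import hashlib

import numpy as np


def generate(seed, n=5):
    """Produce CSV bytes using one seeded Generator for every random draw."""
    rng = np.random.default_rng(seed)  # the only source of randomness
    engagement = rng.beta(2.0, 5.0, size=n)
    payments = rng.poisson(3.0, size=n)
    lines = ["engagement,payments"]
    # Fixed-width formatting keeps the serialized bytes stable.
    lines += [f"{e:.6f},{p}" for e, p in zip(engagement, payments)]
    return "\n".join(lines).encode("utf-8")


def digest(seed):
    """SHA-256 of the generated bytes, handy for comparing two runs."""
    return hashlib.sha256(generate(seed)).hexdigest()


print(digest(42) == digest(42))  # same seed, same bytes
print(digest(42) == digest(43))  # different seed, different bytes
```

Comparing digests rather than DataFrames is a simple way to confirm that two runs are identical down to the byte.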

Config-time validation catches problems before generation starts: circular causal chains, non-positive-definite correlation matrices, broken foreign key references, duplicate metric names, and SQL-unsafe identifiers all surface as parse errors with fix suggestions.
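One of these checks, circular causal chains, can be sketched with the standard library. The example below also shows how lags compose along a valid chain. It is a hypothetical illustration, not plotsim's validator: the edge format and the name `composed_lags` are assumptions, as is taking the longest delay when a metric has several causes.

```python
from graphlib import CycleError, TopologicalSorter


def composed_lags(edges):
    """edges maps (cause, effect) -> lag in periods.

    Returns each metric's total lag from its root cause, or raises
    ValueError if the causal graph contains a cycle.
    """
    parents = {}
    for (cause, effect), lag in edges.items():
        parents.setdefault(effect, {})[cause] = lag
        parents.setdefault(cause, {})

    graph = {node: set(causes) for node, causes in parents.items()}
    try:
        # static_order yields causes before their effects.
        order = list(TopologicalSorter(graph).static_order())
    except CycleError as exc:
        raise ValueError(f"circular causal chain: {exc.args[1]}") from exc

    total = {}
    for node in order:
        # Assumption: with several causes, keep the longest accumulated delay.
        total[node] = max(
            (total[cause] + lag for cause, lag in parents[node].items()),
            default=0,
        )
    return total


print(composed_lags({("A", "B"): 2, ("B", "C"): 3}))
```

For the chain A → B (lag 2) → C (lag 3), the result assigns C a total lag of 5, matching the composition rule described earlier. Adding an edge from C back to A raises `ValueError` before any data would be generated.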

See the docs site for the full pipeline.

Docs

mohossam01.github.io/plotsim — quickstart, user guide, tutorials, API reference, cookbooks.

Contributing

See CONTRIBUTING.md for dev setup, test commands, and how to add templates.

License


Download files


File details

Details for the file plotsim-0.6.0.tar.gz.

File metadata

- Download URL: plotsim-0.6.0.tar.gz

- Upload date:

- Size: 503.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f9a8171a7e7252116f4246fddeb5b31e479e9f1cad44f26c3509e757789ff945 |
| MD5 | 79c2a7887118d3f3a40900674f2cb5bb |
| BLAKE2b-256 | 51dc675b8d19eb411b3cf6ecf401accc51e4d02f303a07a4d69721a589452819 |

File details

Details for the file plotsim-0.6.0-py3-none-any.whl.

File metadata

- Download URL: plotsim-0.6.0-py3-none-any.whl

- Upload date:

- Size: 272.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c487803c8d14c1d2970af2369d2a366e25806e575049d2f055e1c8df63b3b759 |
| MD5 | 597094906557c9ba77c5430800c7523f |
| BLAKE2b-256 | 0519813bfd94bc5a60b0ae31546af2d4af8eb04cb9eec0f58e6d3910ce60bc54 |