Tessera — Personal Knowledge Layer for AI. Own your memory across every AI tool.

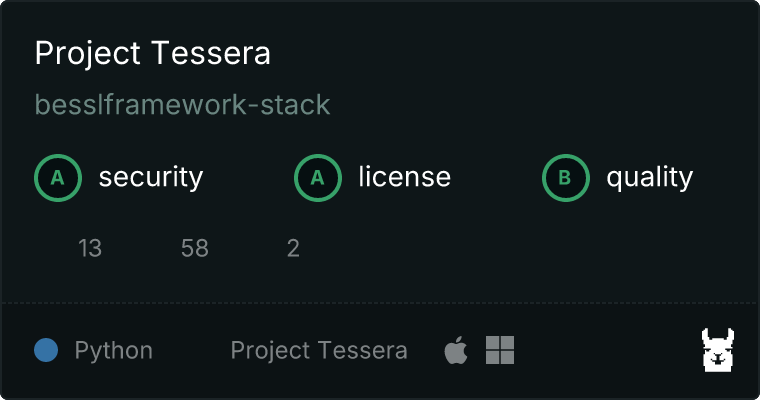


You use Claude, ChatGPT, Gemini, Copilot. Each conversation generates knowledge that disappears when the session ends. Tessera captures that knowledge, stores it locally, and serves it back to any AI. Your memory, your machine, your data.

What makes Tessera different

- Auto-learning -- Tessera records every interaction and extracts decisions, preferences, and facts automatically. No manual "remember this."

- Interface-agnostic core -- One knowledge engine, multiple interfaces. MCP today, HTTP API for ChatGPT/Gemini/extensions coming next.

- Cross-session memory -- AI remembers your decisions and context between conversations.

- 100% local -- No cloud, no API keys, no data leaving your machine. LanceDB + fastembed/ONNX.

- Hybrid search -- Semantic + keyword search with reranking. Not just vector similarity.
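To make the hybrid-search idea concrete, here is a minimal sketch of blending a semantic score with a keyword score. It is not Tessera's actual ranking code: the function names, the toy vectors, and the `semantic_weight` parameter are assumptions for illustration (the 0.7 default simply mirrors the `reranker_weight` value shown in the Configuration section).

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query, text):
    """Fraction of query terms that appear in the text (case-insensitive)."""
    terms = query.lower().split()
    words = set(text.lower().split())
    return sum(t in words for t in terms) / len(terms) if terms else 0.0


def hybrid_score(query, query_vec, text, text_vec, semantic_weight=0.7):
    """Weighted blend of semantic and keyword relevance (hypothetical formula)."""
    semantic = cosine(query_vec, text_vec)
    keyword = keyword_score(query, text)
    return semantic_weight * semantic + (1 - semantic_weight) * keyword
```

A real system would take the vectors from an embedding model and would probably use a stronger keyword signal such as BM25; the point here is only that the two signals are combined rather than relying on vector similarity alone.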

Architecture

                   +-----------------+
                   |   src/core.py   |  Business logic (35 functions)
                   |                 |  Search, memory, knowledge graph,
                   |                 |  auto-extract, interaction log
                   +-----------------+
                 /          |          \
    +-------------+ +----------------+ +----------+
    | mcp_server  | | http_server.py | | cli.py   |
    | (stdio/MCP) | | (REST API)     | | (CLI)    |
    | Claude      | | ChatGPT,       | |          |
    | Desktop     | | Gemini, etc.   | |          |
    +-------------+ +----------------+ +----------+
                        (planned)

Core engine:

    +--------------------------------------------------+
    | LanceDB (vectors) | SQLite (metadata, analytics) |
    | fastembed/ONNX (local embeddings, no API keys)   |
    | Auto-extract (pattern-based fact detection)      |
    | Interaction log (every tool call recorded)       |
    +--------------------------------------------------+

One core, multiple interfaces. The same knowledge base works regardless of which AI tool you use.
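The "one core, multiple interfaces" layout can be sketched as a single function holding the logic, with thin adapters that only translate input and output. The names `search`, `cli_adapter`, and `http_adapter` are hypothetical and are not taken from Tessera's source; the substring match stands in for the real search.

```python
import json


def search(query, documents):
    """Core logic: return documents containing the query (case-insensitive)."""
    q = query.lower()
    return [d for d in documents if q in d.lower()]


def cli_adapter(argv, documents):
    """CLI-style interface: join argv into a query, return printable text."""
    hits = search(" ".join(argv), documents)
    return "\n".join(hits)


def http_adapter(body, documents):
    """HTTP-style interface: JSON request body in, JSON response body out."""
    query = json.loads(body)["query"]
    return json.dumps({"results": search(query, documents)})
```

Because neither adapter contains search logic, adding another interface would mean writing one more translation function and leaving `search` untouched.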

Get started

1. Install

pip install project-tessera

Or with uv:

uvx --from project-tessera tessera setup

2. Setup

tessera setup

This does everything:

- Creates a workspace config

- Downloads the embedding model (~220MB, first time only)

- Configures Claude Desktop automatically

3. Restart Claude Desktop

Ask Claude about your documents. It searches automatically.

Supported file types (40+)

| Category | Extensions | Install |
|---|---|---|
| Documents | .md .txt .rst .csv | included |
| Office | .xlsx .docx .pdf | pip install project-tessera[xlsx,docx,pdf] |
| Code | .py .js .ts .tsx .jsx .java .go .rs .rb .php .c .cpp .h .swift .kt .sh .sql .cs .dart .r .lua .scala | included |
| Config | .json .yaml .yml .toml .xml .ini .cfg .env | included |
| Web | .html .htm .css .scss .less .svg | included |
| Images | .png .jpg .jpeg .webp .gif .bmp .tiff | pip install project-tessera[ocr] (for text extraction) |
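As a quick way to read the table above programmatically, the sketch below maps a file name to the optional extra it needs. The `EXTRAS` dictionary is copied from the table; the `install_hint` helper is hypothetical and is not part of Tessera. It does not check whether an extension is supported at all, only whether an extra is required.

```python
from pathlib import Path

# Extras per extension, copied from the table above; everything else is "included".
EXTRAS = {
    ".xlsx": "xlsx", ".docx": "docx", ".pdf": "pdf",
    ".png": "ocr", ".jpg": "ocr", ".jpeg": "ocr", ".webp": "ocr",
    ".gif": "ocr", ".bmp": "ocr", ".tiff": "ocr",
}


def install_hint(filename):
    """Return the pip command needed for a file type, or None if no extra is needed."""
    extra = EXTRAS.get(Path(filename).suffix.lower())
    return f"pip install project-tessera[{extra}]" if extra else None
```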

Tools (44)

Search

| Tool | What it does |
|---|---|
| search_documents | Semantic + keyword hybrid search across all docs |
| unified_search | Search documents AND memories in one call |
| view_file_full | Full file view (CSV as table, XLSX per sheet, etc.) |
| read_file | Read any file's full content |
| list_sources | See what's indexed |

Memory

| Tool | What it does |
|---|---|
| remember | Save knowledge that persists across sessions |
| recall | Search past memories with date/category filters |
| learn | Save and immediately index new knowledge |
| digest_conversation | Auto-extract decisions/facts from the current session |
| list_memories | Browse saved memories |
| forget_memory | Delete a specific memory |
| export_memories | Batch export all memories as JSON |
| import_memories | Batch import memories from JSON |
| memory_tags | List all unique tags with counts |
| search_by_tag | Filter memories by specific tag |
| memory_categories | List auto-detected categories (decision/preference/fact) |
| search_by_category | Filter memories by category |
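The export, import, and tag-filter tools suggest a record that carries content, a category, and tags. The sketch below shows one possible shape and a JSON round trip. The `Memory` dataclass and its fields are assumptions; Tessera's real schema and JSON layout may differ.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class Memory:
    """Hypothetical memory record; Tessera's real schema may differ."""
    content: str
    category: str = "fact"  # decision / preference / fact
    tags: list = field(default_factory=list)


def export_json(memories):
    """Serialize a list of Memory records to a JSON string."""
    return json.dumps([asdict(m) for m in memories], indent=2)


def import_json(payload):
    """Rebuild Memory records from a JSON string produced by export_json."""
    return [Memory(**item) for item in json.loads(payload)]


def by_tag(memories, tag):
    """Filter memories carrying a given tag."""
    return [m for m in memories if tag in m.tags]
```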

Knowledge graph

| Tool | What it does |
|---|---|
| find_similar | Find documents similar to a given file |
| knowledge_graph | Build a Mermaid diagram of document relationships |
| explore_connections | Show connections around a specific topic |
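For readers unfamiliar with Mermaid output, the sketch below turns a list of document pairs into a Mermaid flowchart definition. It illustrates the output format only: `to_mermaid` is a hypothetical helper, and how Tessera decides which documents are related is not shown here.

```python
def to_mermaid(edges):
    """Render (source, target) pairs as a Mermaid flowchart definition."""
    ids = {}
    lines = ["graph TD"]
    for src, dst in edges:
        for name in (src, dst):
            if name not in ids:
                # Give each document a short node id and a quoted label.
                ids[name] = f"n{len(ids)}"
                lines.append(f'    {ids[name]}["{name}"]')
        lines.append(f"    {ids[src]} --> {ids[dst]}")
    return "\n".join(lines)
```

Pasting the returned text into any Mermaid renderer draws one box per document and one arrow per relationship.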

Auto-learn

| Tool | What it does |
|---|---|
| digest_conversation | Extract and save knowledge from the current session |
| toggle_auto_learn | Turn auto-learning on/off or check status |
| review_learned | Review recently auto-learned memories |
| session_interactions | View tool calls from current/past sessions |
| recent_sessions | Session history with interaction counts |
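Pattern-based extraction can be as simple as a few regular expressions applied sentence by sentence. The sketch below is an assumption about how such a detector might look, not Tessera's implementation: the patterns are invented for illustration, and treating every unmatched sentence as a fact is a deliberate simplification.

```python
import re

# Hypothetical patterns; a real extractor would need many more.
PATTERNS = {
    "decision": re.compile(r"\b(we|i) (decided|chose|will use)\b", re.I),
    "preference": re.compile(r"\bi (prefer|like|always use)\b", re.I),
}


def classify(sentence):
    """Return 'decision', 'preference', or 'fact' for one sentence."""
    for category, pattern in PATTERNS.items():
        if pattern.search(sentence):
            return category
    return "fact"


def digest(text):
    """Split text into sentences and tag each one with a category."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [(classify(s), s) for s in sentences]
```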

Intelligence

| Tool | What it does |
|---|---|
| decision_timeline | Track how decisions evolved over time, grouped by topic |
| context_window | Build optimal context within a token budget for cross-AI use |
| smart_suggest | Personalized query suggestions based on past patterns |
| topic_map | Cluster memories by topic with Mermaid mindmap |
| knowledge_stats | Aggregate statistics dashboard (categories, tags, growth) |
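Fitting context into a token budget is a packing problem. A minimal sketch, assuming each snippet already has a relevance score, is to take snippets greedily from highest score down and skip any that would exceed the budget. `build_context` and the whitespace-based token count are assumptions; the actual `context_window` tool may use a different strategy and a real tokenizer.

```python
def build_context(snippets, budget, count_tokens=lambda s: len(s.split())):
    """Greedily pack the highest-scoring snippets into a token budget.

    snippets: list of (score, text) pairs; budget: max tokens allowed.
    Returns the chosen texts and the number of tokens used.
    """
    chosen, used = [], 0
    for score, text in sorted(snippets, key=lambda p: p[0], reverse=True):
        cost = count_tokens(text)
        if used + cost <= budget:
            chosen.append(text)
            used += cost
    return chosen, used
```

Greedy selection is not guaranteed to be optimal, but it is predictable and cheap, which is usually enough when the scores themselves are approximate.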

Workspace

| Tool | What it does |
|---|---|
| ingest_documents | Index documents (first-time or full rebuild) |
| sync_documents | Incremental sync (only changed files) |
| project_status | Recent changes per project |
| extract_decisions | Find past decisions from logs |
| audit_prd | Check PRD quality (13-section structure) |
| organize_files | Move, rename, archive files |
| suggest_cleanup | Detect backup files, empty dirs, misplaced files |
| tessera_status | Server health: tracked files, sync history, cache |
| health_check | Comprehensive workspace diagnostics |
| search_analytics | Search usage patterns, top queries, response times |
| check_document_freshness | Detect stale documents older than N days |
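The difference between a full ingest and an incremental sync comes down to knowing which files changed. One common approach, sketched below, is to keep a manifest of content hashes from the last run and compare it with the current state. This is an assumption about the general technique; `plan_sync` and the manifest format are hypothetical, and Tessera may detect changes differently (for example, by modification time).

```python
import hashlib


def fingerprint(data):
    """Stable content hash for a file's bytes."""
    return hashlib.sha256(data).hexdigest()


def plan_sync(previous, current_files):
    """Compare stored hashes with current contents.

    previous: {path: hash} from the last sync.
    current_files: {path: bytes} as found on disk now.
    Returns (to_index, to_remove, new_manifest).
    """
    manifest = {path: fingerprint(data) for path, data in current_files.items()}
    # New or modified files need (re)indexing.
    to_index = sorted(p for p, h in manifest.items() if previous.get(p) != h)
    # Files that vanished should be dropped from the index.
    to_remove = sorted(p for p in previous if p not in manifest)
    return to_index, to_remove, manifest
```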

CLI

    tessera setup        # One-command setup
    tessera init         # Interactive setup
    tessera ingest       # Index all sources
    tessera sync         # Re-index changed files
    tessera check        # Workspace health
    tessera status       # Project status
    tessera install-mcp  # Configure Claude Desktop
    tessera version      # Show version

How it works

    Documents (Markdown, CSV, XLSX, DOCX, PDF)
        |
        v
    Parse & chunk --> Embed locally (fastembed/ONNX) --> LanceDB (local vector DB)
        |
        v
    src/core.py (search, memory, knowledge graph, auto-extract)
        |
        v
    MCP server (Claude Desktop) / HTTP API (ChatGPT, Gemini, extensions)

Everything runs on your machine. No external API calls for search or embedding.
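The "Parse & chunk" step can be illustrated with a sliding window that overlaps consecutive chunks so that text near a boundary appears in both. This is a sketch under stated assumptions: `chunk_text` is a hypothetical helper, it measures size in characters (Tessera may count tokens instead), and the defaults simply reuse the `chunk_size` and `chunk_overlap` values from the Configuration section.

```python
def chunk_text(text, chunk_size=1024, chunk_overlap=100):
    """Split text into fixed-size character windows that overlap."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a window has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

With `chunk_size=4` and `chunk_overlap=1`, each chunk begins with the last character of the previous one, which is the overlap doing its job at small scale.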

Claude Desktop config

With uvx (recommended):

    {
      "mcpServers": {
        "tessera": {
          "command": "uvx",
          "args": ["--from", "project-tessera", "tessera-mcp"]
        }
      }
    }

With pip:

    {
      "mcpServers": {
        "tessera": {
          "command": "tessera-mcp"
        }
      }
    }

Config location:

- macOS: ~/Library/Application Support/Claude/claude_desktop_config.json

- Windows: %APPDATA%\Claude\claude_desktop_config.json
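If you edit the config by hand, the main risk is overwriting other MCP servers that are already registered. The sketch below merges the `tessera` entry into existing JSON text while leaving everything else alone. `add_tessera_server` is a hypothetical helper, not something Tessera ships; it works on strings, so reading and writing the file at the paths above is left to the caller.

```python
import json


def add_tessera_server(config_text, use_uvx=True):
    """Return config JSON with a 'tessera' entry added under mcpServers.

    Existing servers and unrelated settings are preserved.
    """
    config = json.loads(config_text) if config_text.strip() else {}
    entry = (
        {"command": "uvx", "args": ["--from", "project-tessera", "tessera-mcp"]}
        if use_uvx
        else {"command": "tessera-mcp"}
    )
    config.setdefault("mcpServers", {})["tessera"] = entry
    return json.dumps(config, indent=2)
```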

Configuration

tessera setup creates workspace.yaml. All parameters are tunable:

    workspace:
      root: /Users/you/Documents
      name: my-workspace

    sources:
      - path: .
        type: document

    search:
      reranker_weight: 0.7   # Semantic vs keyword balance
      max_top_k: 50          # Max results per search

    ingestion:
      chunk_size: 1024       # Text chunk size
      chunk_overlap: 100     # Overlap between chunks

    watcher:
      poll_interval: 30.0    # Seconds between scans
      debounce: 5.0          # Wait before syncing

Or skip config entirely -- Tessera auto-detects your workspace. Set TESSERA_WORKSPACE=/path/to/docs to specify a folder.
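The `debounce` setting is easiest to understand as a quiet period: a sync is triggered only once no new changes have been seen for that many seconds, so a burst of saves results in one sync instead of many. The class below is a sketch of that idea, not Tessera's watcher. `Debouncer` and its methods are hypothetical, and time is passed in explicitly so the behavior is easy to follow.

```python
class Debouncer:
    """Report 'ready' only after changes have been quiet for `debounce` seconds."""

    def __init__(self, debounce=5.0):
        self.debounce = debounce
        self.last_change = None  # time of the most recent pending change, if any

    def record_change(self, now):
        """Call whenever a scan sees a modified file."""
        self.last_change = now

    def should_sync(self, now):
        """True once the quiet period has elapsed; clears the pending state."""
        if self.last_change is None or now - self.last_change < self.debounce:
            return False
        self.last_change = None
        return True
```

In a polling loop, a scan would call `record_change` when it finds modified files and `should_sync` on every pass, using something like `time.monotonic()` for `now`.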

Roadmap

See ROADMAP.md for the full plan from v0.6 to v1.0.

| Phase | Version | What changes |
|---|---|---|
| Sponge | v0.7 | Manual memory becomes automatic learning |
| Radar | v0.8 | Reactive search becomes proactive intelligence |
| Gateway | v0.9 | MCP-only becomes multi-interface (HTTP API) |
| Cortex | v1.0 | Search tool becomes Claude's persistent brain |

License

AGPL-3.0 -- see LICENSE.

Commercial licensing: bessl.framework@gmail.com

