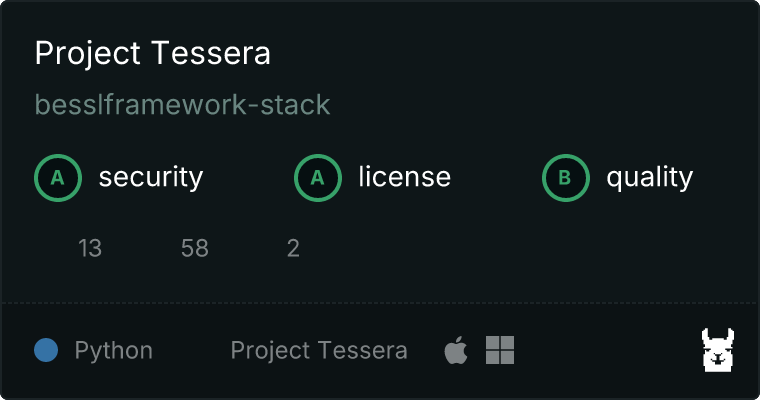

Tessera — Personal Knowledge Layer for AI. Own your memory across every AI tool. 53 MCP tools, REST API, knowledge graph, memory consolidation, ChatGPT Actions, encryption, local-first.


Tessera

Your AI conversations generate knowledge that vanishes when the session ends. Tessera keeps it.

One knowledge base across Claude, ChatGPT, Gemini, and Copilot. No API keys. No Docker. No data leaves your machine.

    pip install project-tessera
    tessera setup
    # Done. Claude Desktop now has persistent memory + document search.

Why Tessera over alternatives

| | Tessera | Mem0 | Basic Memory | mcp-memory-service |
|---|---|---|---|---|
| Works without API keys | Yes | No (needs OpenAI) | Yes | Partial |
| Works without Docker | Yes | No | Yes | No |
| Document search (40+ types) | Yes | No | Markdown only | No |
| ChatGPT live integration (Actions) | Yes | No | No | No |
| Contradiction detection | Yes | No | No | No |
| Memory confidence scoring | Yes | No | No | No |
| Encrypted vault (AES-256) | Yes | No | No | No |
| HTTP API for non-MCP tools | 41 endpoints | Yes | No | Yes |
| Auto-learning from conversations | Yes | Yes | No | No |
| MCP tools | 53 | ~10 | ~15 | 24 |

What makes Tessera different

ChatGPT can call Tessera's API directly through Custom GPT Actions -- same knowledge base, live access, no manual export. You build one knowledge base and both Claude (MCP) and ChatGPT (HTTP Actions) read and write to it.

Tessera also does things most memory tools skip: scanning for contradictions between old and new memories, scoring how confident you should be in each memory based on how often it's been reinforced, and flagging knowledge that's gone stale.

Setup is pip install and go. LanceDB and fastembed run embedded -- no Docker, no database server, no API keys, no cloud account.

If you set TESSERA_VAULT_KEY, all memories are AES-256-CBC encrypted at rest.

Architecture

How search works (query path)

    User asks: "What did we decide about the database?"
                           |
                           v
               +-----------------------+
               | Query Processing      |
               | Multi-angle decomp    |  "database decision"
               | (2-4 perspectives)    |  "database", "decision"
               +-----------------------+  "decision about database"
                           |
             +-------------+-------------+
             |                           |
             v                           v
    +------------------+        +------------------+
    | Vector Search    |        | Keyword Search   |
    | (LanceDB)        |        | (FTS index)      |
    | 384-dim MiniLM   |        | BM25 scoring     |
    +------------------+        +------------------+
             |                           |
             +-------------+-------------+
                           |
                           v
               +-----------------------+
               | Reranking             |
               | 70% semantic weight   |  LinearCombinationReranker
               | 30% keyword weight    |  + version-aware scoring
               +-----------------------+
                           |
                           v
               +-----------------------+
               | Result Assembly       |
               | Dedup (content hash)  |  2-pass deduplication
               | Verdict labels        |  found / weak / none
               | Cache (60s TTL)       |
               +-----------------------+
                           |
                           v
                  Top-K results with
                   confidence scores
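The result-assembly stage in the diagram (content-hash dedup and a 60-second cache) can be illustrated with a small sketch. This is not Tessera's code: the function and class names, the whitespace normalization, and the choice of SHA-256 are assumptions made for illustration, and the sketch shows a single hash-based pass rather than the 2-pass deduplication the diagram mentions.

```python
import hashlib
import time


def dedup_by_content_hash(results):
    """Keep the first result for each distinct content hash, preserving order.

    `results` is a list of dicts with a "text" key (a hypothetical shape).
    """
    seen = set()
    unique = []
    for result in results:
        # Collapse whitespace so trivially different copies hash the same.
        normalized = " ".join(result["text"].split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(result)
    return unique


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds=60.0, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries = {}

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if self._clock() - stored_at >= self.ttl_seconds:
            del self._entries[key]  # entry has expired
            return None
        return value

    def put(self, key, value):
        self._entries[key] = (self._clock(), value)
```

Keying the cache on the query string means a repeated question within the TTL skips retrieval entirely, at the cost of serving results that may be up to 60 seconds old.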

How ingestion works (ingest path)

    Documents: .md .pdf .docx .xlsx .py .ts .go ... (40+ types)
                           |
                           v
               +-----------------------+
               | File Type Router      |
               | Markdown, CSV, XLSX   |  Type-specific parsers
               | Code, PDF, Images     |  with metadata extraction
               +-----------------------+
                           |
                           v
               +-----------------------+
               | Chunking Engine       |
               | 1024 tokens/chunk     |  Sentence-boundary aware
               | 100 token overlap     |  Heading-preserving
               +-----------------------+
                           |
                           v
               +-----------------------+
               | Local Embedding       |
               | fastembed/ONNX        |  paraphrase-multilingual
               | 384 dimensions        |  MiniLM-L12-v2
               | No API calls          |  101 languages
               +-----------------------+
                           |
             +-------------+-------------+
             |                           |
             v                           v
    +------------------+        +------------------+
    | LanceDB          |        | SQLite           |
    | Vector storage   |        | File metadata    |
    | Columnar format  |        | Search analytics |
    | Zero-config      |        | Interaction log  |
    +------------------+        +------------------+
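The chunking stage (1024 tokens per chunk, 100 tokens of overlap) can be sketched as a sliding window. This is a simplified illustration, not Tessera's chunker: `chunk_tokens` is a hypothetical name, tokens are treated as an already-split list, and the sentence-boundary and heading-preserving behavior shown in the diagram is left out.

```python
def chunk_tokens(tokens, chunk_size=1024, overlap=100):
    """Split a token list into windows of chunk_size that share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    start = 0
    while start < len(tokens):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the input
        start += step
    return chunks
```

With the default settings each chunk repeats the last 100 tokens of the previous one, so a sentence that straddles a boundary still appears whole in at least one chunk when it is shorter than the overlap.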

System overview

            +--------------------------------------------+
            |                src/core.py                 |
            |         58 orchestration functions         |
            |     55 specialized modules, 10.5k LOC      |
            +--------------------------------------------+
                    /              |              \
       +---------------+ +-------------------+ +--------------+
       | MCP Server    | | HTTP API Server   | | CLI          |
       | Claude Desktop| | FastAPI + Swagger | | 11 commands  |
       | 53 tools      | | 41 endpoints      | | setup, sync  |
       | stdio         | | port 8394         | | ingest, api  |
       +---------------+ +-------------------+ +--------------+
               |                   |                  |
               v                   v                  v
    +------------------------------------------------------------+
    |                       Storage Layer                        |
    |   LanceDB        SQLite            Filesystem              |
    |   (vectors)      (metadata,        (memories as .md,       |
    |                  analytics,        encrypted with          |
    |                  interactions)     AES-256-CBC)            |
    |                                                            |
    |  fastembed/ONNX: local embedding, no API keys              |
    |  101 languages, 384-dim vectors, ~220MB model              |
    +------------------------------------------------------------+

Get started

1. Install

pip install project-tessera

Or with uv:

uvx --from project-tessera tessera setup

2. Setup

tessera setup

Creates workspace config, downloads embedding model (~220MB, first time only), configures Claude Desktop.

3. Restart Claude Desktop

Ask Claude about your documents. It searches automatically.

Use with ChatGPT (Custom GPT Actions)

    tessera api        # Start REST API on localhost:8394
    ngrok http 8394    # Expose to the internet
    # Then create a Custom GPT with the Actions spec from /chatgpt-actions/openapi.json

Full setup guide at http://127.0.0.1:8394/chatgpt-actions/setup. Swagger docs at http://127.0.0.1:8394/docs.

How it works

Hybrid search with reranking

Search queries go through a 4-stage retrieval pipeline:

- Query decomposition -- splits complex queries into 2-4 search angles (core keywords, individual terms, reversed emphasis)

- Hybrid retrieval -- vector similarity (LanceDB) + keyword matching (FTS/BM25) in parallel

- Reranking -- LinearCombinationReranker merges results (70% semantic, 30% keyword weight)

- Verdict scoring -- each result labeled as confident match (>= 45%), possible match (25-45%), or low relevance (< 25%)

Version-aware: when multiple versions of the same document exist, Tessera automatically prefers the latest.
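The reranking and verdict steps can be sketched in plain Python. This is an illustration of a 70/30 linear combination and the thresholds listed above, not Tessera's implementation and not a call into LanceDB's reranker: the function names are hypothetical, and it assumes both score sets are already normalized to the 0-1 range.

```python
def combine_scores(vector_hits, keyword_hits, semantic_weight=0.7):
    """Merge two {doc_id: score} maps into a ranked list of (doc_id, score).

    A document missing from one map contributes 0.0 for that side.
    """
    keyword_weight = 1.0 - semantic_weight
    combined = {}
    for doc_id in set(vector_hits) | set(keyword_hits):
        combined[doc_id] = (
            semantic_weight * vector_hits.get(doc_id, 0.0)
            + keyword_weight * keyword_hits.get(doc_id, 0.0)
        )
    # Highest combined score first.
    return sorted(combined.items(), key=lambda item: item[1], reverse=True)


def verdict(score):
    """Map a 0-1 score to the three verdict labels (45% and 25% cutoffs)."""
    if score >= 0.45:
        return "confident match"
    if score >= 0.25:
        return "possible match"
    return "low relevance"


ranked = combine_scores(
    vector_hits={"a.md": 0.9, "b.md": 0.2},
    keyword_hits={"b.md": 1.0, "c.md": 0.5},
)
labels = [(doc_id, verdict(score)) for doc_id, score in ranked]
```

The weight corresponds to the reranker_weight setting in workspace.yaml: 1.0 ignores keyword scores and 0.0 ignores vector scores.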

Cross-session memory

    # Via MCP (Claude)
    "Remember that we chose PostgreSQL for the production database"

    # Via HTTP API (ChatGPT, Gemini, scripts)
    curl -X POST http://127.0.0.1:8394/remember \
      -H "Content-Type: application/json" \
      -d '{"content": "Use PostgreSQL for production", "tags": ["db", "architecture"]}'

Memories are auto-categorized (decision, preference, or fact), deduplicated via cosine similarity (0.92 threshold), and scored for confidence based on repetition (35%), recency (25%), source diversity (20%), and category weight (20%). If TESSERA_VAULT_KEY is set, they're AES-256-CBC encrypted at rest.
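The deduplication threshold and the confidence weights described above can be sketched as follows. This is a minimal illustration under assumptions, not Tessera's code: the function names are hypothetical, each confidence signal is assumed to be pre-normalized to 0-1, and treating a similarity exactly equal to 0.92 as a duplicate is a guess.

```python
import math

# Weights as listed in the paragraph above; they sum to 1.0.
CONFIDENCE_WEIGHTS = {
    "repetition": 0.35,
    "recency": 0.25,
    "source_diversity": 0.20,
    "category": 0.20,
}


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector is treated as similar to nothing
    return dot / (norm_a * norm_b)


def is_duplicate(new_vector, existing_vectors, threshold=0.92):
    """True when the new embedding is at least `threshold` similar to any stored one."""
    return any(cosine_similarity(new_vector, v) >= threshold for v in existing_vectors)


def confidence(signals):
    """Weighted sum of the four signals; `signals` maps each name to a 0-1 value."""
    return sum(weight * signals[name] for name, weight in CONFIDENCE_WEIGHTS.items())
```

Because repetition carries the largest weight, a memory that is restated across several sessions gains confidence faster than one that is merely recent.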

Auto-learning

Extracts decisions, preferences, and facts from conversations. Turn it on or off with toggle_auto_learn, and review what it picked up with review_learned.

Contradiction detection

Scans your memories for conflicting statements:

    CONTRADICTION (HIGH severity):
      "We decided to use PostgreSQL" (2026-03-01)
      vs
      "Switched to MongoDB for the main database" (2026-03-10)
      The newer memory (2026-03-10) likely reflects the current state.

Supports both English and Korean negation patterns.

Cross-AI: ChatGPT Custom GPT Actions

ChatGPT can call Tessera's HTTP API directly through Custom GPT Actions. No export/import -- it reads and writes your knowledge base in real time, same as Claude does through MCP.

    # 1. Start Tessera API + tunnel
    tessera api
    ngrok http 8394

    # 2. Get the OpenAPI spec for your Custom GPT (URL quoted so the shell does not interpret "?")
    curl "https://your-tunnel.ngrok-free.app/chatgpt-actions/openapi.json?server_url=https://your-tunnel.ngrok-free.app"

    # 3. Get the GPT instruction template
    curl https://your-tunnel.ngrok-free.app/chatgpt-actions/instructions

    # 4. Full setup guide
    curl https://your-tunnel.ngrok-free.app/chatgpt-actions/setup

Create a Custom GPT, paste the instructions, import the OpenAPI spec as an Action, and ChatGPT can search your documents, save memories, recall past decisions -- all hitting the same knowledge base Claude uses.

You can also import past ChatGPT conversations to extract knowledge from them:

    curl -X POST http://127.0.0.1:8394/import-conversations \
      -H "Content-Type: application/json" \
      -d '{"data": "<ChatGPT export JSON>", "source": "chatgpt"}'

Export as Obsidian vault (wikilinks), Markdown, CSV, or JSON:

    curl "http://127.0.0.1:8394/export?format=obsidian"

Memory health analytics

Classifies memories as healthy, stale (90+ days without reinforcement), or orphaned (minimal metadata, no category). Suggests what to clean up and shows growth over time.
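The three health classes can be sketched as a simple rule set. This is an assumption-laden illustration, not Tessera's logic: the `classify_memory` name, the memory dict shape, the reading of "minimal metadata" as "no category and no tags", and checking orphaned before stale are all choices made here.

```python
from datetime import date, timedelta

STALE_AFTER_DAYS = 90  # "90+ days without reinforcement"


def classify_memory(memory, today):
    """Return "orphaned", "stale", or "healthy" for one memory record.

    `memory` is a dict with "last_reinforced" (a date) and optional
    "category" and "tags" entries (hypothetical field names).
    """
    if not memory.get("category") and not memory.get("tags"):
        return "orphaned"
    age_days = (today - memory["last_reinforced"]).days
    if age_days >= STALE_AFTER_DAYS:
        return "stale"
    return "healthy"


today = date(2026, 3, 10)
example = {
    "last_reinforced": today - timedelta(days=129),
    "category": "decision",
    "tags": ["db"],
}
status = classify_memory(example, today)  # 129 days without reinforcement
```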

Plugin hooks

Extend Tessera with custom scripts triggered on events:

    # workspace.yaml
    hooks:
      on_memory_created:
        - script: ./notify-slack.sh
      on_contradiction_found:
        - script: ./alert.py

7 event types: on_memory_created, on_memory_deleted, on_search, on_session_start, on_session_end, on_ingest_complete, on_contradiction_found.

Supported file types (40+)

| Category | Extensions | Install |
|---|---|---|
| Documents | .md .txt .rst .csv | included |
| Office | .xlsx .docx .pdf | pip install project-tessera[xlsx,docx,pdf] |
| Code | .py .js .ts .tsx .jsx .java .go .rs .rb .php .c .cpp .h .swift .kt .sh .sql .cs .dart .r .lua .scala | included |
| Config | .json .yaml .yml .toml .xml .ini .cfg .env | included |
| Web | .html .htm .css .scss .less .svg | included |
| Images | .png .jpg .jpeg .webp .gif .bmp .tiff | pip install project-tessera[ocr] |

MCP tools (53)

Search (5)

| Tool | What it does |
|---|---|
| search_documents | Semantic + keyword hybrid search across all docs |
| unified_search | Search documents AND memories in one call |
| view_file_full | Full file view (CSV as table, XLSX per sheet) |
| read_file | Read any file's full content |
| list_sources | See what's indexed |

Memory (13)

| Tool | What it does |
|---|---|
| remember | Save knowledge that persists across sessions |
| recall | Search past memories with date/category filters |
| learn | Save and immediately index new knowledge |
| list_memories | Browse saved memories |
| forget_memory | Delete a specific memory |
| export_memories | Batch export all memories as JSON |
| import_memories | Batch import memories from JSON |
| memory_tags | List all unique tags with counts |
| search_by_tag | Filter memories by specific tag |
| memory_categories | List auto-detected categories (decision/preference/fact) |
| search_by_category | Filter memories by category |
| find_similar | Find documents similar to a given file |
| knowledge_graph | Build a Mermaid diagram of document relationships |

Auto-learn (5)

| Tool | What it does |
|---|---|
| digest_conversation | Extract and save knowledge from the current session |
| toggle_auto_learn | Turn auto-learning on/off or check status |
| review_learned | Review recently auto-learned memories |
| session_interactions | View tool calls from current/past sessions |
| recent_sessions | Session history with interaction counts |

Intelligence (7)

| Tool | What it does |
|---|---|
| decision_timeline | Track how decisions evolved over time, grouped by topic |
| context_window | Build optimal context within a token budget |
| smart_suggest | Personalized query suggestions based on past patterns |
| topic_map | Cluster memories by topic with Mermaid mindmap |
| knowledge_stats | Aggregate statistics (categories, tags, growth) |
| user_profile | Auto-built profile (language, preferences, expertise) |
| explore_connections | Show connections around a specific topic |

Insight (6)

| Tool | What it does |
|---|---|
| deep_search | Multi-angle search: decomposes query into 2-4 perspectives, merges best results |
| deep_recall | Multi-angle memory recall with verdict labels |
| detect_contradictions | Scan memories for conflicting statements with severity rating |
| memory_confidence | Rate each memory's reliability (repetition, recency, source diversity) |
| memory_health | Classify memories as healthy/stale/orphaned with cleanup recommendations |
| list_plugin_hooks | View registered event hooks and extensibility points |

Cross-AI (4)

| Tool | What it does |
|---|---|
| export_for_ai | Export memories in ChatGPT/Gemini-readable format |
| import_from_ai | Import memories from external AI tools |
| import_conversations | Extract knowledge from ChatGPT/Claude conversation exports |
| export_knowledge | Export as Obsidian (wikilinks), Markdown, CSV, or JSON |

ChatGPT connects via Custom GPT Actions (HTTP API). See /chatgpt-actions/setup for the full guide.

Security and data (2)

| Tool | What it does |
|---|---|
| vault_status | Check AES-256 encryption status |
| migrate_data | Upgrade data from older schema versions |

Workspace (11)

| Tool | What it does |
|---|---|
| ingest_documents | Index documents (first-time or full rebuild) |
| sync_documents | Incremental sync (only changed files) |
| project_status | Recent changes per project |
| extract_decisions | Find past decisions from logs |
| audit_prd | Check PRD quality (13-section structure) |
| organize_files | Move, rename, archive files |
| suggest_cleanup | Detect backup files, empty dirs, misplaced files |
| tessera_status | Server health: tracked files, sync history, cache |
| health_check | Comprehensive workspace diagnostics |
| search_analytics | Search usage patterns, top queries, response times |
| check_document_freshness | Detect stale documents older than N days |

HTTP API (41 endpoints)

    pip install project-tessera[api]
    tessera api    # http://127.0.0.1:8394

Swagger UI at http://127.0.0.1:8394/docs. Optional auth via TESSERA_API_KEY env var.

All endpoints

| Method | Path | What it does |
|---|---|---|
| GET | /health | Health check |
| GET | /version | Version info |
| POST | /search | Semantic + keyword search |
| POST | /unified-search | Search docs + memories |
| POST | /remember | Save a memory |
| POST | /recall | Search memories with filters |
| POST | /learn | Save and index knowledge |
| GET | /memories | List memories |
| DELETE | /memories/{id} | Delete a memory |
| GET | /memories/categories | List categories |
| GET | /memories/search-by-category | Filter by category |
| GET | /memories/tags | List tags |
| GET | /memories/search-by-tag | Filter by tag |
| POST | /context-window | Build token-budgeted context |
| GET | /decision-timeline | Decision evolution |
| GET | /smart-suggest | Query suggestions |
| GET | /topic-map | Topic clusters |
| GET | /knowledge-stats | Stats dashboard |
| POST | /batch | Multiple operations in one call |
| GET | /export | Export as Obsidian/MD/CSV/JSON |
| GET | /export-for-ai | Export for ChatGPT/Gemini |
| POST | /import-from-ai | Import from ChatGPT/Gemini |
| POST | /import-conversations | Import past conversations |
| POST | /migrate | Run data migration |
| GET | /vault-status | Encryption status |
| GET | /user-profile | User profile |
| GET | /status | Server status |
| GET | /health-check | Workspace diagnostics |
| POST | /deep-search | Multi-angle document search |
| POST | /deep-recall | Multi-angle memory recall |
| GET | /contradictions | Detect conflicting memories |
| GET | /memory-confidence | Memory reliability scores |
| GET | /memory-health | Memory health analytics |
| GET | /hooks | List plugin hooks |
| GET | /entity-search | Search entity knowledge graph |
| POST | /entity-graph | Mermaid diagram from entities |
| GET | /consolidation-candidates | Find similar memory clusters |
| POST | /consolidate | Merge similar memories |
| GET | /chatgpt-actions/openapi.json | OpenAPI spec for ChatGPT Custom GPT Actions |
| GET | /chatgpt-actions/instructions | GPT instruction template |
| GET | /chatgpt-actions/setup | Full ChatGPT integration setup guide |

Quick examples

    # Search documents
    curl -X POST http://127.0.0.1:8394/search \
      -H "Content-Type: application/json" \
      -d '{"query": "database architecture", "top_k": 5}'

    # Save a memory
    curl -X POST http://127.0.0.1:8394/remember \
      -H "Content-Type: application/json" \
      -d '{"content": "Use PostgreSQL for production", "tags": ["db"]}'

    # Export for ChatGPT (URL quoted so the shell does not interpret "?")
    curl "http://127.0.0.1:8394/export-for-ai?target=chatgpt"

    # Batch (multiple operations, single request)
    curl -X POST http://127.0.0.1:8394/batch \
      -H "Content-Type: application/json" \
      -d '{"operations": [{"method": "search", "params": {"query": "test"}}, {"method": "knowledge_stats"}]}'
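The same requests can be made from a script using only the Python standard library. The sketch below mirrors the curl examples: the paths and payloads come from them, while the helper names `build_post` and `send` are hypothetical, and the assumption that every response body is JSON has not been checked against the server.

```python
import json
import urllib.request

BASE_URL = "http://127.0.0.1:8394"  # default address used by `tessera api`


def build_post(path, payload, base_url=BASE_URL):
    """Build (but do not send) a JSON POST request for a Tessera endpoint."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        base_url + path,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=10.0):
    """Send a request and decode the response, assuming the body is JSON."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


search_request = build_post("/search", {"query": "database architecture", "top_k": 5})
remember_request = build_post(
    "/remember", {"content": "Use PostgreSQL for production", "tags": ["db"]}
)
batch_request = build_post(
    "/batch",
    {
        "operations": [
            {"method": "search", "params": {"query": "test"}},
            {"method": "knowledge_stats"},
        ]
    },
)
```

With the API server running, `send(search_request)` performs the call. Building and sending are kept separate so the request can be inspected or logged first.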

CLI (11 commands)

    tessera setup        # One-command setup (config + model download + Claude Desktop)
    tessera init         # Interactive setup
    tessera ingest       # Index all document sources
    tessera sync         # Re-index changed files only
    tessera serve        # Start MCP server (stdio)
    tessera api          # Start HTTP API server (port 8394)
    tessera migrate      # Upgrade data schema
    tessera check        # Workspace health diagnostics
    tessera status       # Project status summary
    tessera install-mcp  # Configure Claude Desktop
    tessera version      # Show version

Claude Desktop config

With uvx (recommended):

    {
      "mcpServers": {
        "tessera": {
          "command": "uvx",
          "args": ["--from", "project-tessera", "tessera-mcp"]
        }
      }
    }

With pip:

    {
      "mcpServers": {
        "tessera": {
          "command": "tessera-mcp"
        }
      }
    }

Config location:

- macOS: ~/Library/Application Support/Claude/claude_desktop_config.json

- Windows: %APPDATA%\Claude\claude_desktop_config.json
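If the config file already lists other MCP servers, the tessera entry has to be merged in rather than pasted over them. `tessera setup` and `tessera install-mcp` are described as handling this. The sketch below only illustrates a manual merge on the parsed JSON, and `add_tessera_server` is a hypothetical helper, not part of the package.

```python
import json


def add_tessera_server(config, use_uvx=True):
    """Return a copy of a Claude Desktop config dict with the tessera entry added.

    Existing entries under "mcpServers" are preserved, and the input is not mutated.
    """
    updated = dict(config)
    servers = dict(updated.get("mcpServers", {}))
    if use_uvx:
        servers["tessera"] = {
            "command": "uvx",
            "args": ["--from", "project-tessera", "tessera-mcp"],
        }
    else:
        servers["tessera"] = {"command": "tessera-mcp"}
    updated["mcpServers"] = servers
    return updated


# "other" stands in for whatever servers the file already contains.
existing = {"mcpServers": {"other": {"command": "other-mcp"}}}
merged = add_tessera_server(existing)
merged_json = json.dumps(merged, indent=2)  # text to write back to the config file
```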

Configuration

tessera setup creates workspace.yaml:

    workspace:
      root: /Users/you/Documents
      name: my-workspace

    sources:
      - path: .
        type: document

    search:
      reranker_weight: 0.7   # Semantic vs keyword balance (0.0 = keyword only, 1.0 = vector only)
      max_top_k: 50          # Max results per search

    ingestion:
      chunk_size: 1024       # Tokens per chunk
      chunk_overlap: 100     # Overlap between chunks

    hooks:                   # Optional plugin hooks
      on_memory_created:
        - script: ./my-hook.sh

Or set TESSERA_WORKSPACE=/path/to/docs to skip the config file entirely.

Environment variables:

- TESSERA_API_KEY -- enable API authentication

- TESSERA_VAULT_KEY -- enable AES-256 encryption for memories

Technical details

| Component | Technology | Why |
|---|---|---|
| Vector store | LanceDB | Embedded columnar store. No server process, handles vector + metadata queries natively |
| Embeddings | fastembed/ONNX | Local inference, no API keys. paraphrase-multilingual-MiniLM-L12-v2 (384-dim, 101 languages) |
| Metadata | SQLite | File tracking, search analytics, interaction logging. Thread-safe with reentrant locks |
| Memory storage | Filesystem (.md) | Human-readable, git-friendly, encryptable. YAML frontmatter for metadata |
| Encryption | Pure Python AES-256-CBC | No OpenSSL dependency. PKCS7 padding, random IV per memory |
| HTTP API | FastAPI | Swagger docs, Pydantic validation, async-capable |
| MCP | FastMCP (stdio) | Standard MCP protocol for Claude Desktop |
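The Encryption row mentions PKCS7 padding and a random IV per memory. The sketch below illustrates only those two pieces, since CBC mode needs input padded to the 16-byte AES block size and a fresh 16-byte IV for each message. It does not perform any encryption and is not Tessera's code. The function names are hypothetical.

```python
import os

BLOCK_SIZE = 16  # AES block size in bytes


def pkcs7_pad(data, block_size=BLOCK_SIZE):
    """Append N bytes of value N so the length becomes a multiple of block_size.

    Input that is already aligned still gets one full block of padding.
    """
    pad_len = block_size - (len(data) % block_size)
    return data + bytes([pad_len]) * pad_len


def pkcs7_unpad(padded, block_size=BLOCK_SIZE):
    """Strip PKCS7 padding, raising ValueError if it is malformed."""
    if not padded or len(padded) % block_size != 0:
        raise ValueError("invalid padded length")
    pad_len = padded[-1]
    if pad_len < 1 or pad_len > block_size:
        raise ValueError("invalid padding byte")
    if padded[-pad_len:] != bytes([pad_len]) * pad_len:
        raise ValueError("inconsistent padding")
    return padded[:-pad_len]


def new_iv():
    """Generate a fresh random 16-byte IV from the OS random source."""
    return os.urandom(BLOCK_SIZE)
```

A unique IV per memory means that two memories with identical text still produce different ciphertext under the same key.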

Numbers

| Metric | Count |
|---|---|
| MCP tools | 53 |
| HTTP endpoints | 41 |
| CLI commands | 11 |
| Core modules | 61 |
| Lines of code | 16,000+ |
| Tests | 976 |
| File types | 40+ |

License

AGPL-3.0 -- see LICENSE.

Commercial licensing: bessl.framework@gmail.com

