Python benchmark functions for Optimization with NumPy, TensorFlow and PyTorch support.

Reason this release was yanked:

Package only included top-level imports.

Project description

Python Benchmark Functions for Optimization


py-benchmark-functions is a simple library that provides benchmark functions for global optimization. It exposes implementations in major computing frameworks such as NumPy, TensorFlow and PyTorch. All implementations support batch evaluation of coordinates, allowing efficient evaluation of many candidate solutions in the search space. The main goal of this library is to provide up-to-date implementations of common benchmark functions from the scientific literature.
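To illustrate what batch evaluation buys in practice, here is a minimal NumPy-only sketch of a random search over the Zakharov function. It does not use this library: the zakharov helper, the sample size and the seed are illustrative assumptions, and the formula is the textbook definition rather than the library's implementation.

```python
import numpy as np


def zakharov(x):
    """Textbook Zakharov function; x has shape (batch_size, dims)."""
    i = np.arange(1, x.shape[-1] + 1)       # 1-based coordinate indices
    s1 = np.sum(x * x, axis=-1)             # sum of squares
    s2 = np.sum(0.5 * i * x, axis=-1)       # weighted linear term
    return s1 + s2**2 + s2**4               # shape (batch_size,)


rng = np.random.default_rng(seed=0)
low, high = -5.0, 10.0                      # commonly used Zakharov search space
candidates = rng.uniform(low, high, size=(1000, 2))

# One vectorized call evaluates all 1000 candidates at once.
values = zakharov(candidates)
best = candidates[np.argmin(values)]

# Equivalent, but slower, one-at-a-time evaluation for comparison.
looped = np.array([zakharov(c[None, :])[0] for c in candidates])

print(best, values.min())
```

The vectorized call and the Python loop produce the same values; the difference is that the former pushes the loop into NumPy.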

Quick Start

Start by installing the library using your preferred package manager:

python -m pip install --upgrade pip uv

python -m uv pip install py_benchmark_functions

The TensorFlow and PyTorch backends are optional and can be enabled with:

python -m uv pip install "py_benchmark_functions[tensorflow]"

python -m uv pip install "py_benchmark_functions[torch]"

You can check if the library was correctly installed by running the following:

import py_benchmark_functions as bf

print(bf.available_backends())

# Output: {'numpy', 'tensorflow', 'torch'}

print(bf.available_functions())

# Output: ['Ackley', ..., 'Zakharov']
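If fewer backends are reported than expected, one thing worth checking is whether the corresponding framework is importable in the current environment. The following is a stdlib-only sketch of such a check; the helper name importable_frameworks is hypothetical and is not part of py_benchmark_functions, and how the library itself detects backends is not shown here.

```python
from importlib.util import find_spec


def importable_frameworks():
    """Return the subset of frameworks that can be imported here."""
    found = set()
    for name in ("numpy", "tensorflow", "torch"):
        # find_spec returns None when a top-level module cannot be located.
        if find_spec(name) is not None:
            found.add(name)
    return found


print(importable_frameworks())
```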

Instantiating and using Functions

The library is designed with the following entities:

- core.Function: class that represents a benchmark function. An instance of this class represents an instance of the benchmark function for a given domain (core.Domain) and number of dimensions/coordinates.

- core.Transformation: class that represents a transformed (i.e., shifted, scaled, etc.) function. It allows for programmatically building new functions from existing ones.

- core.Metadata: class that represents metadata about a given function (e.g., known global optima, default search space, default parameters, etc.). A transformation inherits such metadata from the base function.
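The relationship between these entities can be sketched with plain dataclasses. Everything below is hypothetical and only mirrors the description above: the Sketch* classes, their fields, the sphere example and its search space are not the library's actual classes, and the assumption that vshift adds a constant to the output is a guess at its meaning.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class SketchMetadata:
    """Hypothetical stand-in for core.Metadata."""
    global_optimum: float
    default_search_space: Tuple[float, float]


@dataclass(frozen=True)
class SketchFunction:
    """Hypothetical stand-in for core.Function."""
    name: str
    dims: int
    evaluate: Callable[[Sequence[float]], float]
    metadata: SketchMetadata

    def __call__(self, x: Sequence[float]) -> float:
        return self.evaluate(x)


@dataclass(frozen=True)
class SketchTransformation:
    """Hypothetical stand-in for core.Transformation (vertical shift only)."""
    base: SketchFunction
    vshift: float = 0.0

    @property
    def metadata(self) -> SketchMetadata:
        # Metadata is inherited from the base function.
        return self.base.metadata

    def __call__(self, x: Sequence[float]) -> float:
        # Assumption: vshift adds a constant to the base function's output.
        return self.base(x) + self.vshift


sphere = SketchFunction(
    name="Sphere",
    dims=2,
    evaluate=lambda x: sum(v * v for v in x),
    metadata=SketchMetadata(global_optimum=0.0, default_search_space=(-5.0, 5.0)),
)
shifted = SketchTransformation(sphere, vshift=1.0)

print(sphere([0.0, 0.0]), shifted([0.0, 0.0]))
# Output: 0.0 1.0
```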

The benchmark functions can be instantiated in 3 ways:

- Directly importing from py_benchmark_functions.imp.{numpy,tensorflow,torch} (e.g., from py_benchmark_functions.imp.numpy import AckleyNumpy);

from py_benchmark_functions.imp.numpy import AckleyNumpy

fn = AckleyNumpy(dims=2)

print(fn.name, fn.domain)

# Output: Ackley Domain(min=[-35.0, -35.0], max=[35.0, 35.0])

print(fn.metadata)

# Output: Metadata(default_search_space=(-35.0, 35.0), references=['https://arxiv.org/abs/1308.4008', 'https://www.sfu.ca/~ssurjano/optimization.html'], comments='', default_parameters={'a': 20.0, 'b': 0.2, 'c': 6.283185307179586}, global_optimum=0.0, global_optimum_coordinates=<...>)

- Using the global get_fn, get_np_function, get_tf_function or get_torch_function from py_benchmark_functions;

import py_benchmark_functions as bf

fn = bf.get_fn("Zakharov", 2)

print(fn, type(fn))

# Output: Zakharov(domain=Domain(min=[-5.0, -5.0], max=[10.0, 10.0])) <class 'py_benchmark_functions.imp.numpy.ZakharovNumpy'>

fn1 = bf.get_np_function("Zakharov", 2)

print(fn1, type(fn1))

# Output: Zakharov(domain=Domain(min=[-5.0, -5.0], max=[10.0, 10.0])) <class 'py_benchmark_functions.imp.numpy.ZakharovNumpy'>

fn2 = bf.get_tf_function("Zakharov", 2)

print(fn2, type(fn2))

# Output: Zakharov(domain=Domain(min=[-5.0, -5.0], max=[10.0, 10.0])) <class 'py_benchmark_functions.imp.tensorflow.ZakharovTensorflow'>

fn3 = bf.get_torch_function("Zakharov", 2)

print(fn3, type(fn3))

# Output: Zakharov(domain=Domain(min=[-5.0, -5.0], max=[10.0, 10.0])) <class 'py_benchmark_functions.imp.torch.ZakharovTorch'>

- Using the Builder class;

from py_benchmark_functions import Builder

fn = Builder().function("Alpine2").dims(4).transform(vshift=1.0).tensorflow().build()

print(fn, type(fn))

# Output: Transformed(Alpine2) <class 'py_benchmark_functions.imp.tensorflow.TensorflowTransformation'>

Regardless of how you obtain a function instance, every instance defines the __call__ method, so it can be called directly. __call__ takes a single argument x: an np.ndarray for NumPy, a tf.Tensor for TensorFlow, and a torch.Tensor for PyTorch. The shape of x can be either (batch_size, dims) or (dims,); the output then has shape (batch_size,) or () (a scalar), respectively. These properties are illustrated below:

import py_benchmark_functions as bf

import numpy as np

fn = bf.get_fn("Ackley", 2)

x = np.array([0.0, 0.0], dtype=np.float32)

print(fn(x))

# Output: -9.536743e-07

x = np.expand_dims(x, axis=0)

print(x, fn(x))

# Output: [[0. 0.]] [-9.536743e-07]

x = np.repeat(x, 3, axis=0)

print(x, fn(x))

# Output:

# [[0. 0.]

# [0. 0.]

# [0. 0.]] [-9.536743e-07 -9.536743e-07 -9.536743e-07]
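The shape convention can be reproduced with a short NumPy-only sketch of the Ackley function. This is the textbook formula with the default parameters shown in the metadata earlier (a=20, b=0.2, c=2π), written for illustration; it is not the library's implementation. Reducing over the last axis is what makes both (dims,) and (batch_size, dims) inputs work.

```python
import numpy as np


def ackley(x, a=20.0, b=0.2, c=2.0 * np.pi):
    """Textbook Ackley function for x of shape (dims,) or (batch_size, dims)."""
    x = np.asarray(x)
    d = x.shape[-1]
    # Reducing over the last axis handles both input shapes uniformly.
    mean_sq = np.sum(x * x, axis=-1) / d
    mean_cos = np.sum(np.cos(c * x), axis=-1) / d
    return -a * np.exp(-b * np.sqrt(mean_sq)) - np.exp(mean_cos) + a + np.e


single = ackley(np.zeros(2))        # input shape (dims,)
batch = ackley(np.zeros((3, 2)))    # input shape (batch_size, dims)

print(np.shape(single), batch.shape)
# Output: () (3,)
```

In float64 this sketch evaluates to approximately zero at the origin, which suggests that the small negative value printed in the float32 example above is rounding noise around the global optimum of 0.0.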

[!NOTE] Additionally, for the torch and tensorflow backends, it is possible to use their autograd to differentiate any of the functions. Specifically, they expose the methods .grads(x) -> Tensor and .grads_at(x) -> Tuple[Tensor, Tensor], which return the gradients for the input x and, for grads_at, also the value of the function at x (in this order).

[!WARNING] Beware that some functions are not continuously differentiable, so their gradients might contain NaN values! For the specifics of how those backends handle such cases, refer to the respective official documentation (see A Gentle Introduction to torch.autograd and Introduction to gradients and automatic differentiation).
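One way such NaN values can arise is a square root evaluated at zero, as in the Euclidean norm that appears in functions like Ackley. The sketch below applies the chain rule by hand in NumPy to show the mechanism; it is an illustration only, does not use either framework, and makes no claim about how their autograd engines treat this case internally. The helper name norm_grad_chain_rule is hypothetical.

```python
import numpy as np


def norm_grad_chain_rule(x):
    """Gradient of sqrt(sum(x**2)) computed literally via the chain rule."""
    s = np.sum(x * x)                        # inner function s = sum(x_i^2)
    with np.errstate(divide="ignore", invalid="ignore"):
        d_sqrt = 0.5 / np.sqrt(s)            # d/ds sqrt(s); infinite at s = 0
        return d_sqrt * 2.0 * x              # times ds/dx = 2x; inf * 0 = NaN


print(norm_grad_chain_rule(np.array([3.0, 4.0])))   # well defined away from 0
print(norm_grad_chain_rule(np.zeros(2)))            # NaN in every coordinate
```

Away from the origin the result is the unit vector x / ||x||; at the origin the product of an infinite outer derivative and a zero inner derivative is undefined, which floating-point arithmetic reports as NaN.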

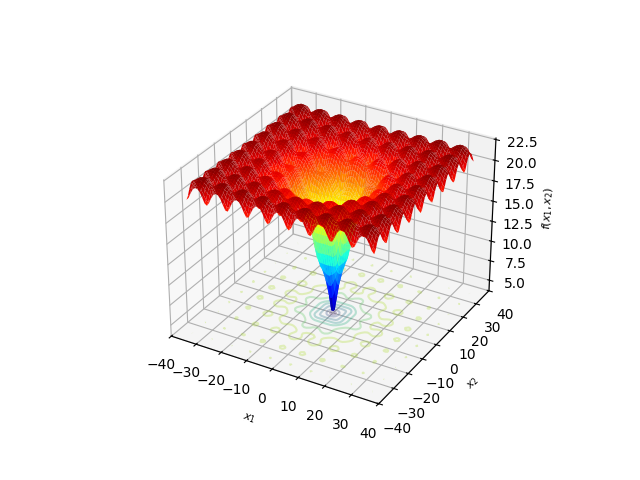

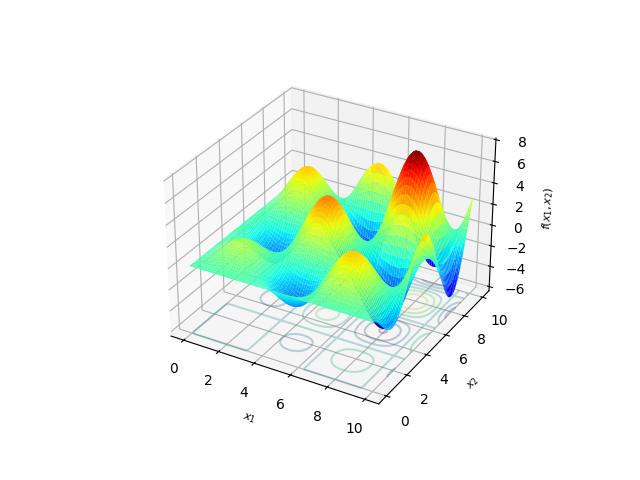

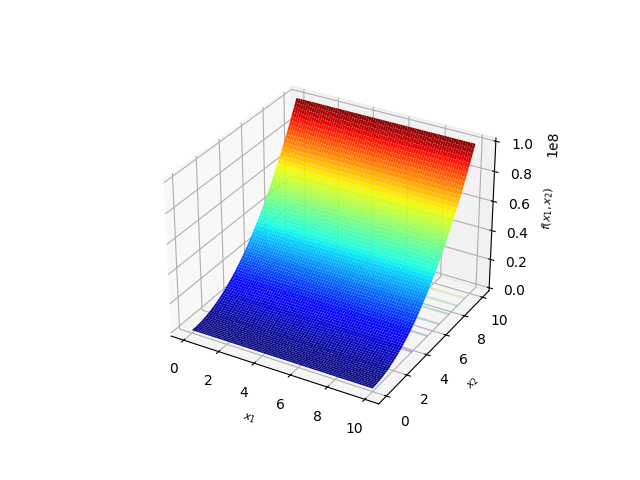

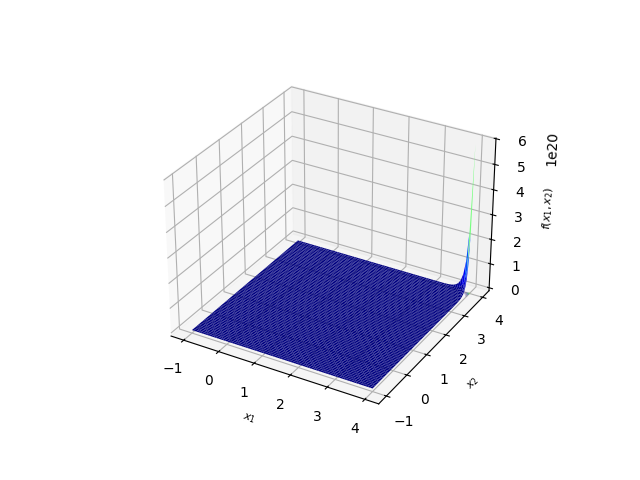

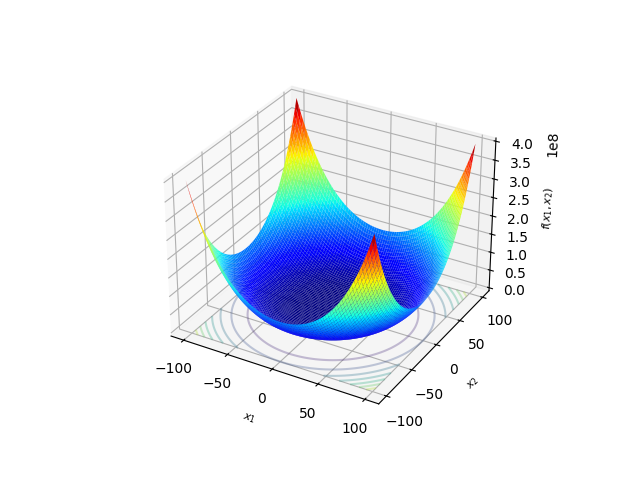

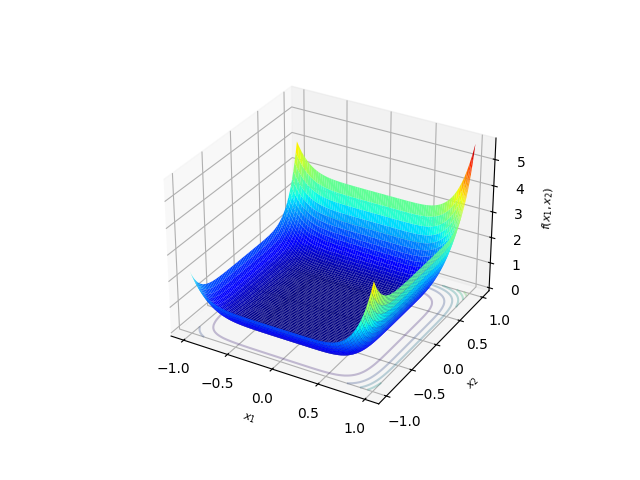

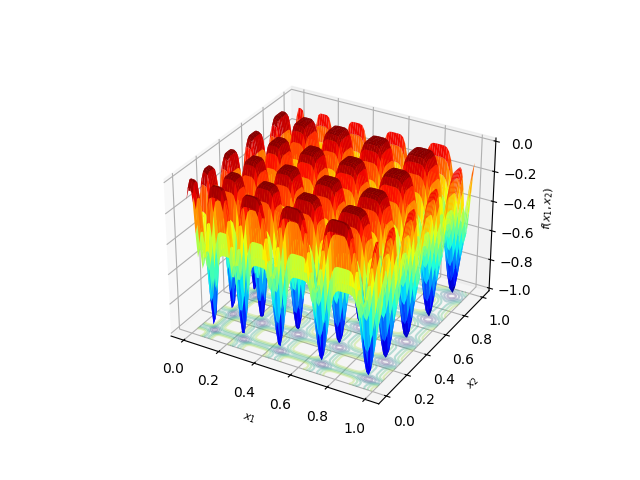

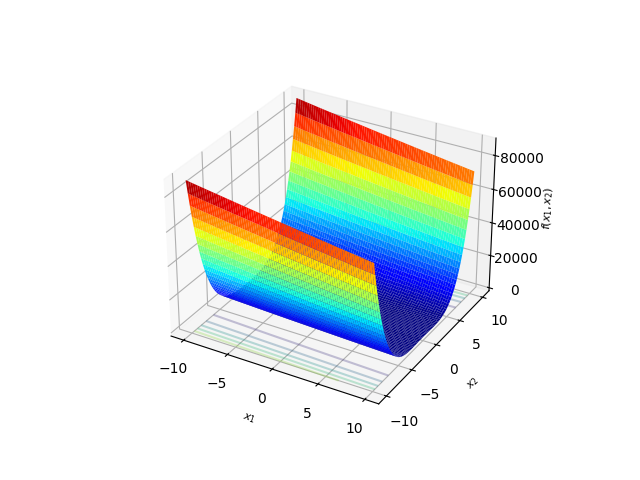

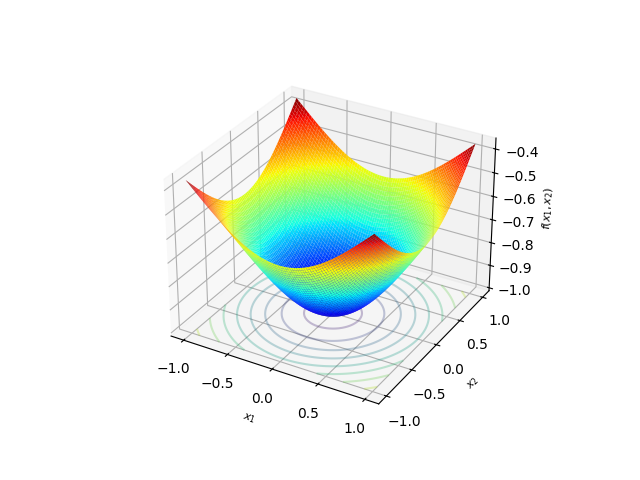

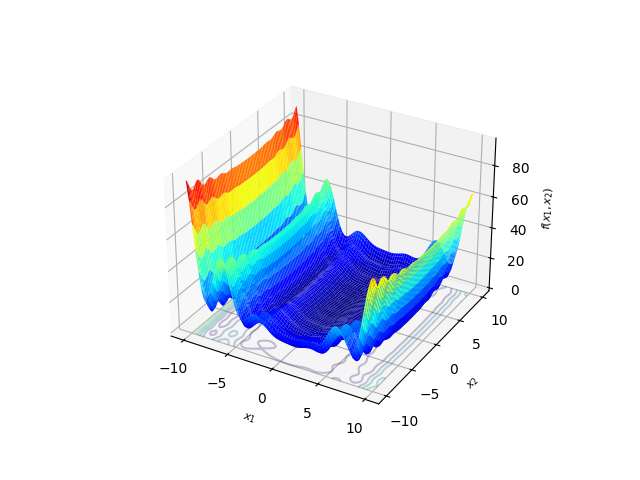

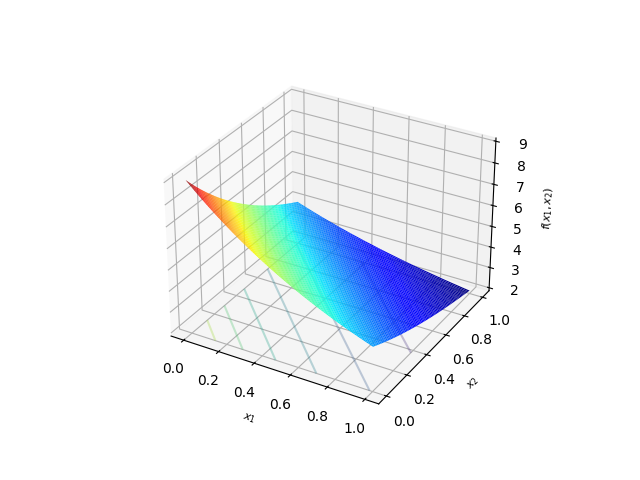

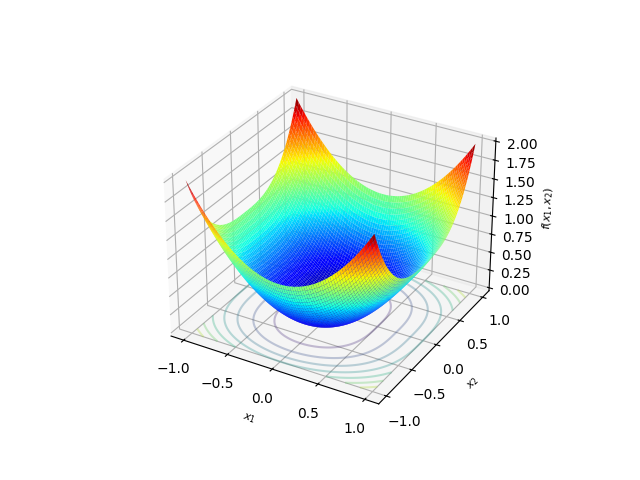

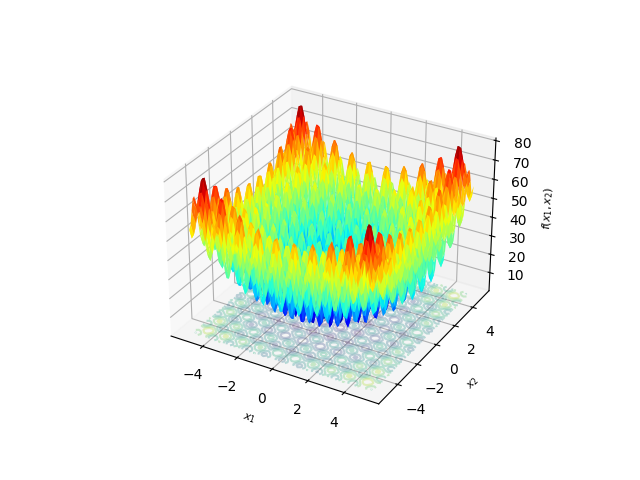

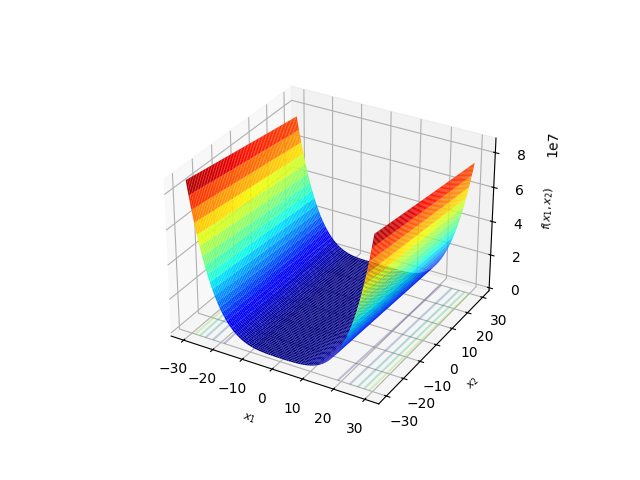

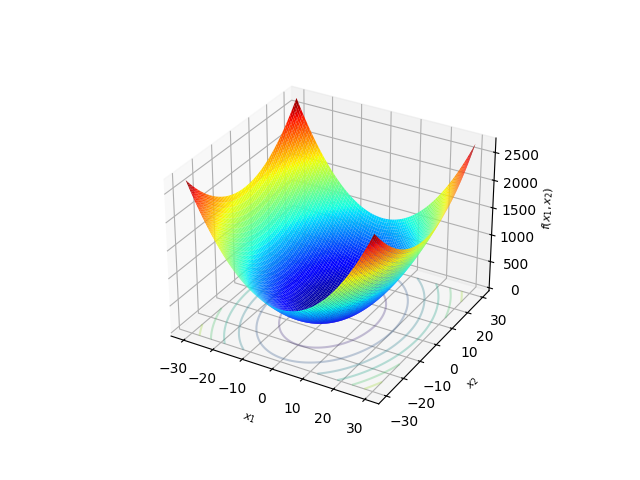

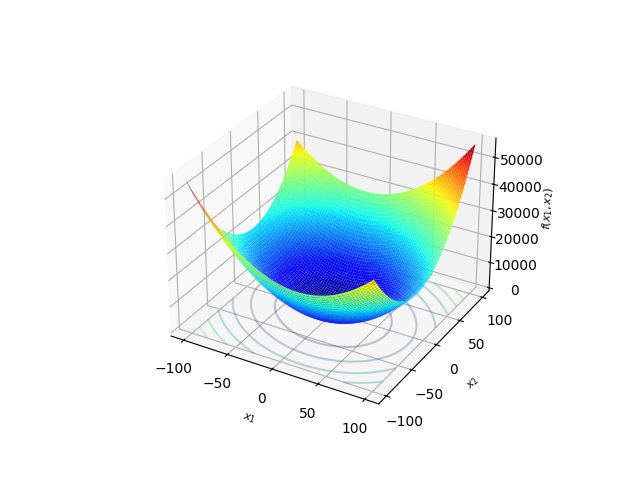

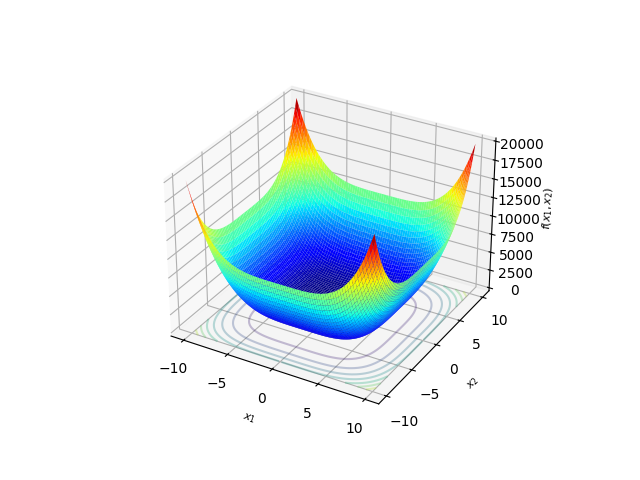

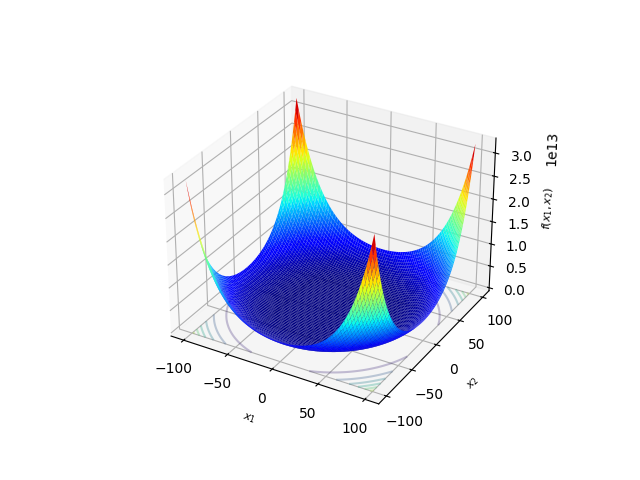

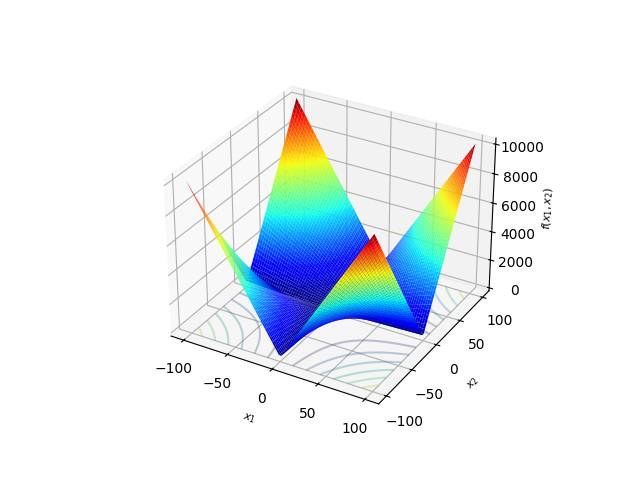

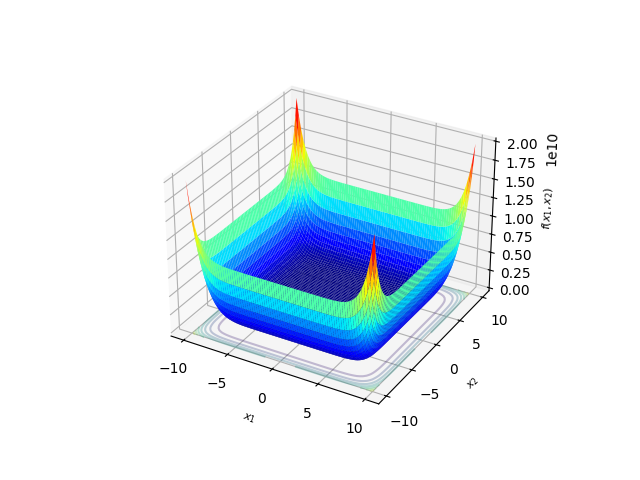

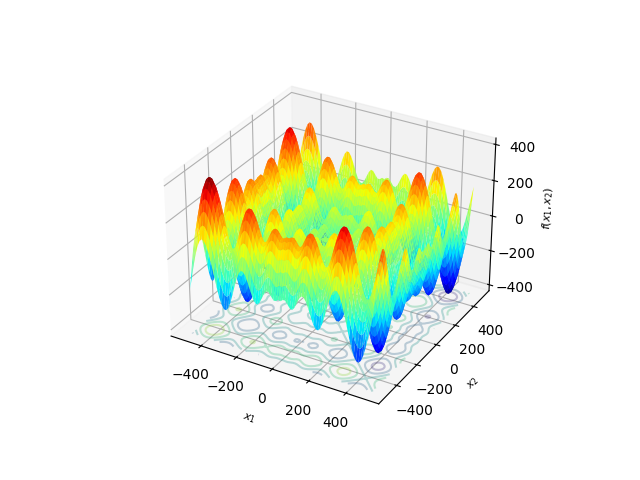

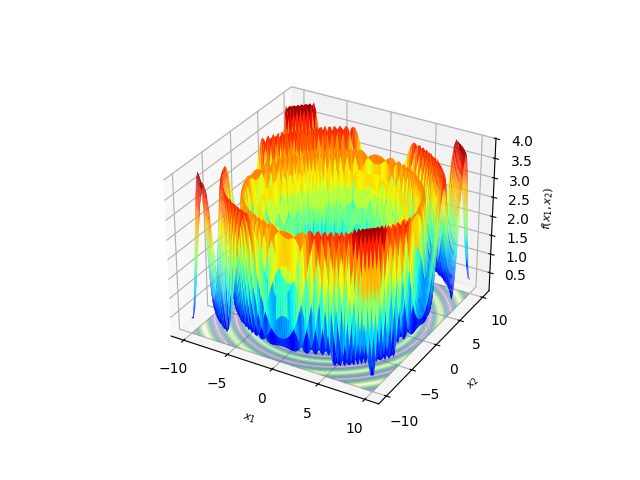

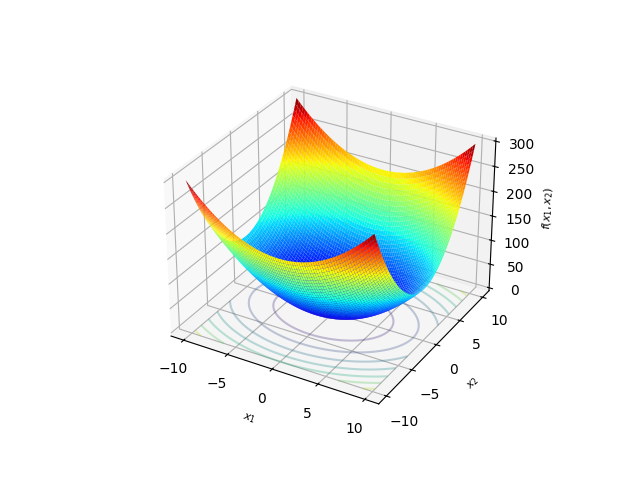

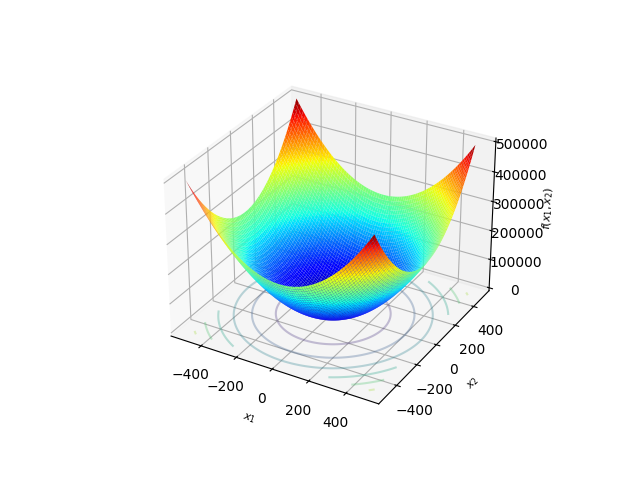

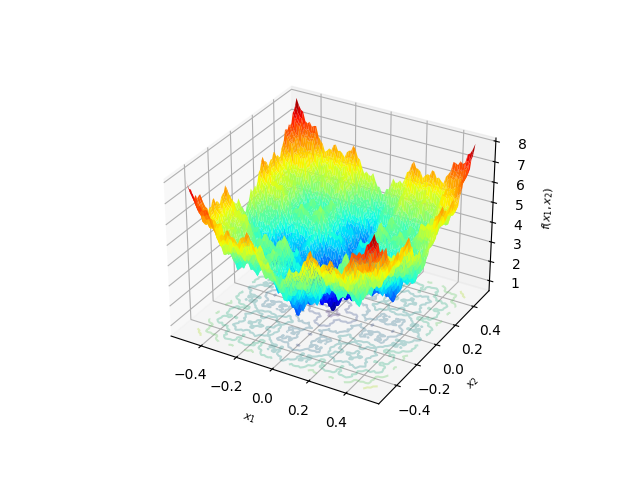

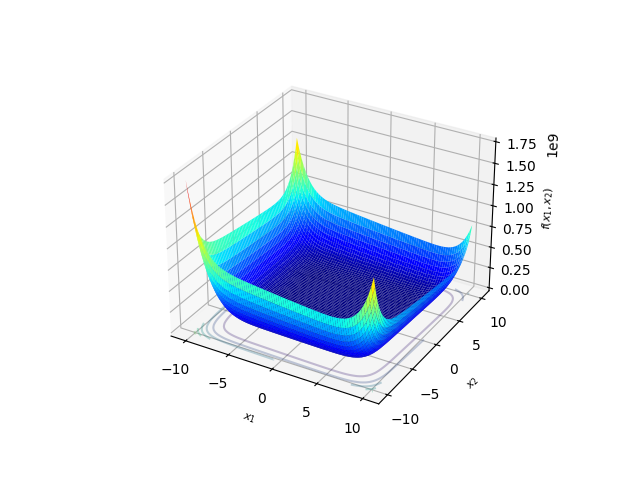

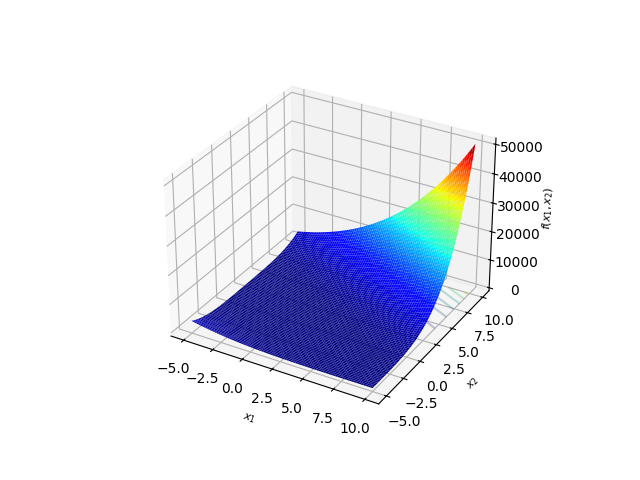

Benchmark Functions

The following table lists the functions officially supported by the library. If you have any suggestion for new functions to add, we encourage you to open an issue or pull request.

| Function | References |
|---|---|
| Ackley | [1], [2] |
| Alpine2 | [1] |
| BentCigar | [3] |
| Brown | [1] |
| Chung Reynolds | [1] |
| Csendes | [1] |
| Deb 3 | [1] |
| Dixon & Price | [1], [2] |
| Exponential | [1] |
| Levy | [1] |
| Mishra 2 | [1], [5] |
| Powell Sum | [1] |
| Rastrigin | [3] |
| Rosenbrock | [1] |
| Rotated Hyper-Ellipsoid | [2] |
| Sargan | [1] |
| Schumer Steiglitz | [1] |
| Schwefel | [1] |
| Schwefel 2.22 | [1] |
| Schwefel 2.23 | [1] |
| Schwefel 2.26 | [1] |
| Stretched V Sine Wave | [1] |
| Sum Squares | [1] |
| Trigonometric 2 | [1] |
| Weierstrass | [1], [5] |
| Whitley | [1] |
| Zakharov | [1] |

References:

[1]: Jamil, M., & Yang, X.-S. (2013). A Literature Survey of Benchmark Functions For Global Optimization Problems. arXiv. https://doi.org/10.48550/ARXIV.1308.4008

[2]: https://www.sfu.ca/~ssurjano/optimization.html

[3]: Special Session & Competition on Single Objective Bound Constrained Optimization (CEC2021)

[4]: https://al-roomi.org/benchmarks/unconstrained/n-dimensions/231-deb-s-function-no-01

[5]: https://infinity77.net/global_optimization/test_functions_nd_M.html

All the images can be generated using the Drawer utility.


File details

Details for the file py_benchmark_functions-0.2.2.tar.gz.

File metadata

- Download URL: py_benchmark_functions-0.2.2.tar.gz

- Upload date:

- Size: 8.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.7.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cd1db85120b6395dcf7520c81a44b4b1b87c8aa5906610a7319e5f95cdcae0c2 |
| MD5 | 64973643be2a7cbe23704ce221de3d97 |
| BLAKE2b-256 | bacab38a7d08b38c37cb33d6cfa85e393557896ca6bbb27534fba349e2f0483b |

File details

Details for the file py_benchmark_functions-0.2.2-py3-none-any.whl.

File metadata

- Download URL: py_benchmark_functions-0.2.2-py3-none-any.whl

- Upload date:

- Size: 5.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.7.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 28a5efb3bc66af3e8d3c41217406b7c4dcf47d59cd52afcc008c40b61748bba2 |
| MD5 | 9d83c6e5a9c81cad6f1cf3dbf2444822 |
| BLAKE2b-256 | e4473a6be48983cd5274f5c3a3f20eb793ca469e39fc4314a750c2a4cc3fce57 |