Compare RAG patterns with real benchmarks. Run one command, see which pattern wins.

Project description

rag-playbook

Stop guessing which RAG pattern to use. Compare them with real numbers.

Quick Start · Patterns · Decision Guide · Architecture · CLI Reference

Every RAG tutorial teaches you how to build patterns. None of them tell you which one to actually use.

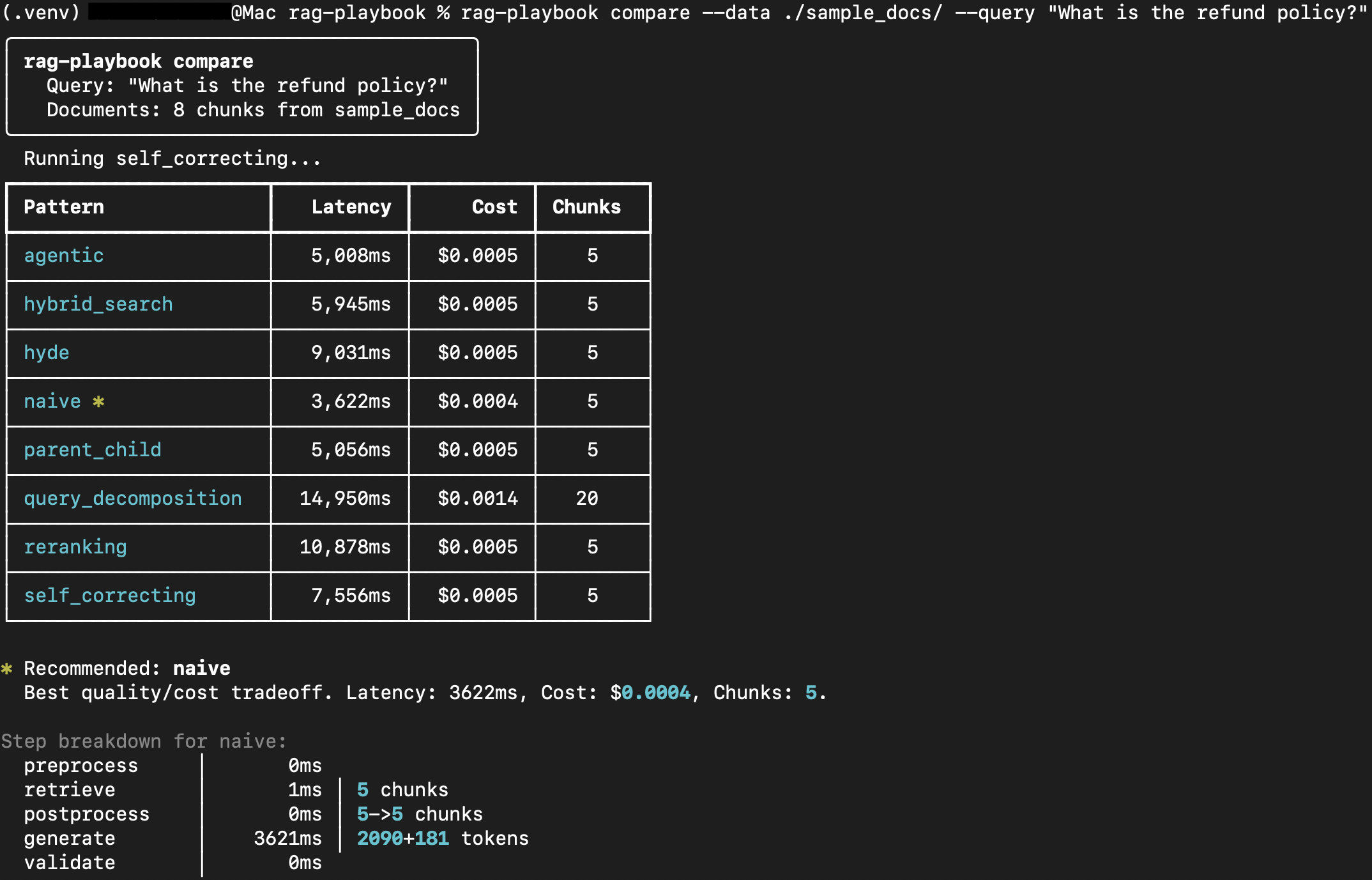

rag-playbook runs the same query against 8 production-tested RAG patterns and shows you which one wins — with real numbers for quality, latency, and cost.

Quick Start

pip install rag-playbook[openai]

export OPENAI_API_KEY=sk-...

# Compare all patterns on your documents

rag-playbook compare --data ./my_docs/ --query "What is the refund policy?"

Patterns

| # | Pattern | Best For | Latency | Cost |

|---|---|---|---|---|

| 01 | Naive | Simple factual queries | ~1s | $ |

| 02 | Hybrid Search | Queries with codes, IDs, exact terms | ~1.1s | $ |

| 03 | Re-ranking | When top-K retrieval isn't precise enough | ~1.4s | $$ |

| 04 | Parent-Child | Long documents with clear sections | ~1s | $ |

| 05 | Query Decomposition | Complex multi-part questions | ~2.1s | $$$ |

| 06 | HyDE | Short or ambiguous queries | ~1.5s | $$ |

| 07 | Self-Correcting | When hallucination risk is high | ~2.8s | $$$ |

| 08 | Agentic | When query intent is unclear | ~3.2s | $$$$ |

Which pattern should I use? (decision guide)

How is this different from [X]?

| NirDiamant/RAG_Techniques | FlashRAG | Ragas | rag-playbook | |

|---|---|---|---|---|

| Format | Jupyter notebooks | Academic library | Eval metrics | Library + CLI |

pip install |

No | Complex | Yes | Yes (simple) |

| Benchmarks | None | Academic | N/A | Practical comparison |

| "Which to use?" | No | No | No | YES |

| License | Non-commercial | MIT | Apache-2.0 | MIT |

Use as a Library

import asyncio

from rag_playbook import create_pattern, Settings

from rag_playbook.core.embedder import create_embedder

from rag_playbook.core.llm import create_llm

from rag_playbook.core.vector_store import create_vector_store

async def main():

settings = Settings() # Reads from .env / environment

llm = create_llm(settings)

embedder = create_embedder(settings)

store = create_vector_store("memory")

pattern = create_pattern("reranking", llm=llm, embedder=embedder, store=store)

# Index your documents first (or use the CLI: rag-playbook ingest)

result = await pattern.query("What is the refund policy?")

print(result.answer)

print(f"Cost: ${result.metadata.cost_usd:.4f}")

print(f"Latency: {result.metadata.latency_ms:.0f}ms")

asyncio.run(main())

See examples/ for more usage patterns.

Installation

# Minimal (in-memory store, OpenAI)

pip install rag-playbook[openai]

# Everything (includes planned vector store backends)

pip install rag-playbook[all]

From Source

git clone https://github.com/Aamirofficiall/rag-playbook.git

cd rag-playbook

uv venv --python 3.11 .venv

source .venv/bin/activate

uv pip install -e ".[dev,chromadb,openai]"

CLI Commands

| Command | Description |

|---|---|

rag-playbook compare |

Compare patterns side-by-side on your documents |

rag-playbook run |

Run a single pattern |

rag-playbook recommend |

Get an LLM-powered pattern recommendation |

rag-playbook ingest |

Load, chunk, embed, and index documents |

rag-playbook bench |

Run full benchmark suite |

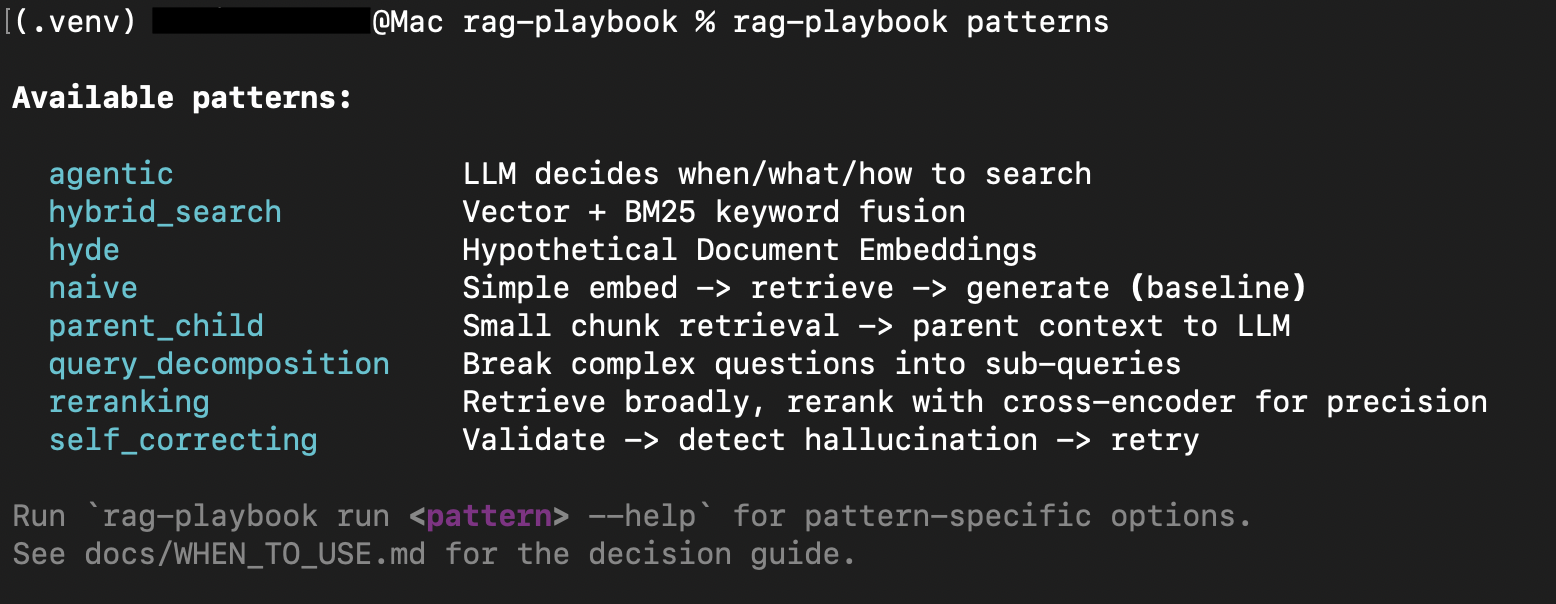

rag-playbook patterns |

List all available patterns |

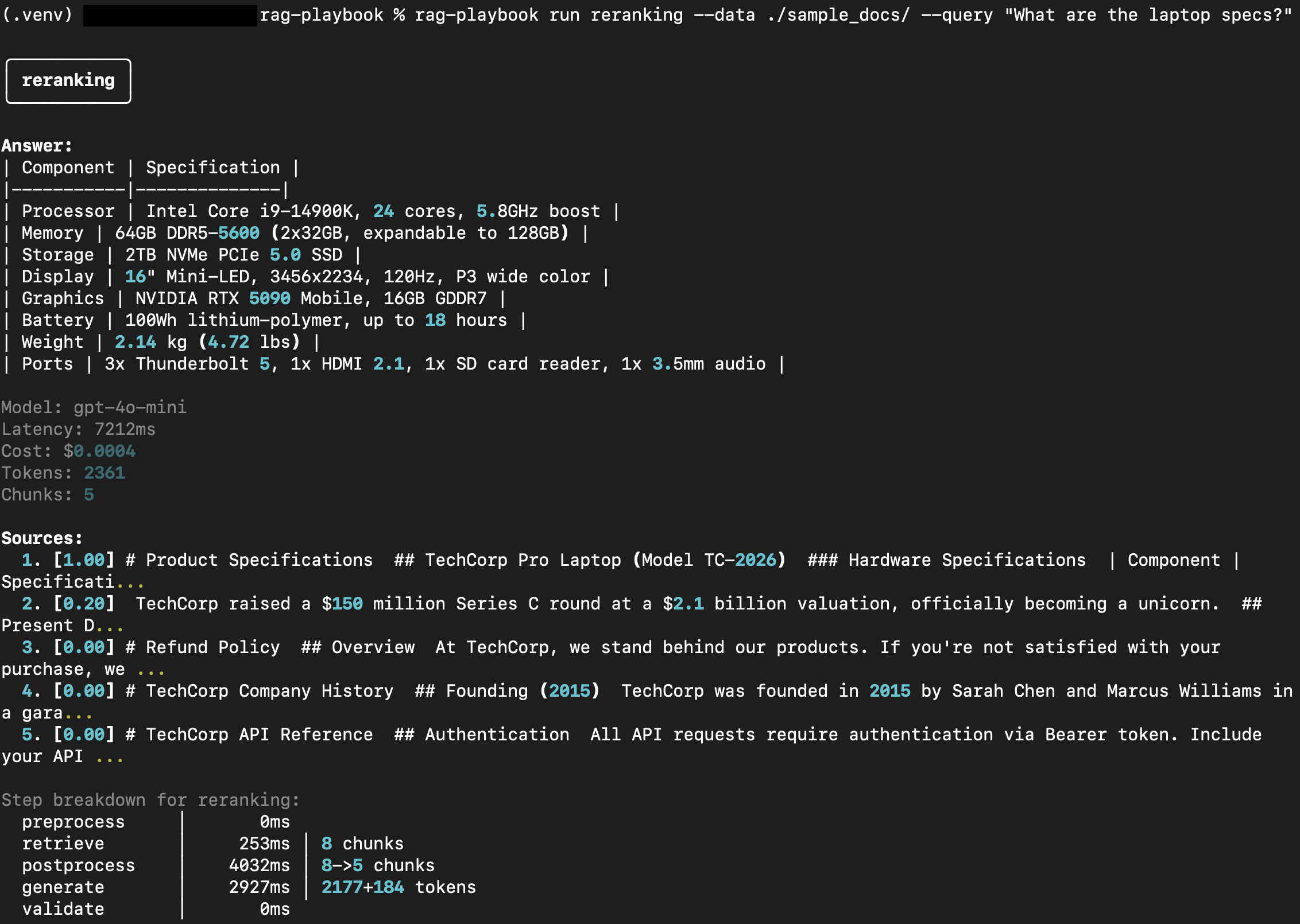

Run a single pattern

rag-playbook run reranking --data ./docs/ --query "What are the laptop specs?"

List available patterns

rag-playbook patterns

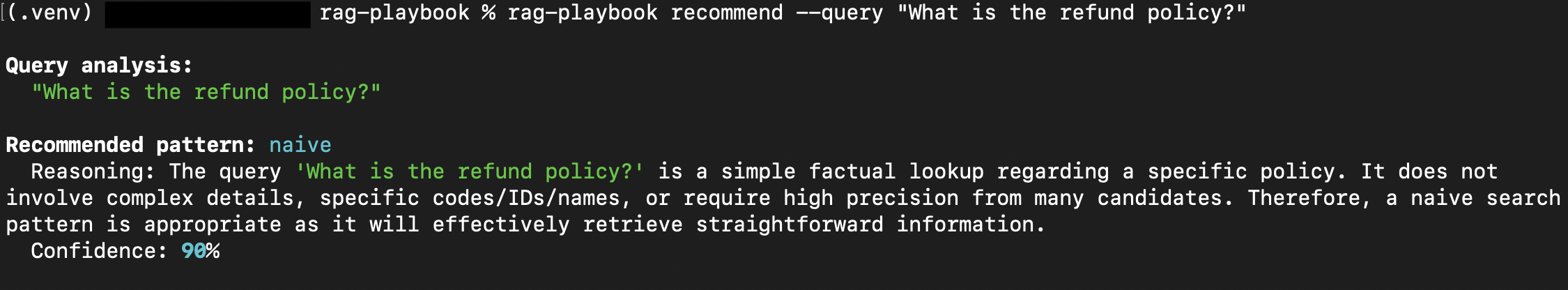

Get a pattern recommendation

rag-playbook recommend --query "What is the refund policy?"

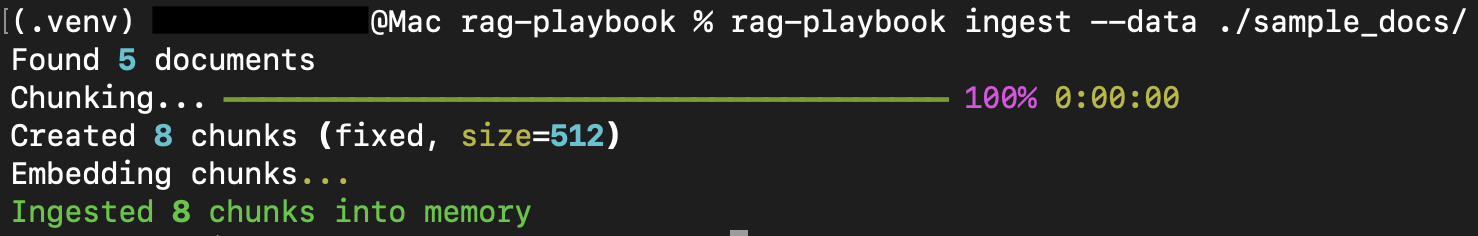

Ingest documents

rag-playbook ingest --data ./sample_docs/

See CLI Reference for full usage.

Configuration

Environment variables map directly to settings — no prefix needed:

# .env — Core settings

OPENAI_API_KEY=sk-... # Required for OpenAI provider

DEFAULT_LLM_PROVIDER=openai # openai | anthropic

DEFAULT_LLM_MODEL=gpt-4o-mini # Any model your provider supports

EMBEDDING_MODEL=text-embedding-3-small

EMBEDDING_DIMENSION=1536

DEFAULT_TOP_K=5

DEFAULT_CHUNK_SIZE=512

DEFAULT_CHUNK_OVERLAP=50

Using OpenRouter (or any OpenAI-compatible API)

Works with any OpenAI-compatible endpoint — OpenRouter, Azure OpenAI, Ollama, vLLM, etc:

OPENAI_API_KEY=sk-or-v1-... # Your OpenRouter key

OPENAI_BASE_URL=https://openrouter.ai/api/v1 # Custom base URL

DEFAULT_LLM_MODEL=openai/gpt-4o-mini # OpenRouter model format

Vector store backends

The default in-memory store works out of the box but re-embeds every session. ChromaDB, pgvector, and Qdrant are planned as persistent backends — the optional dependencies are installable but the store implementations are not yet wired up. Contributions welcome.

Or pass a Settings object directly in code. See .env.example for all options.

Architecture

Document → Chunk → EmbeddedChunk → RetrievedChunk → RAGResult

│ │ │ │

Chunker Embedder VectorStore LLM

LLM providers, embedders, and vector stores are all swappable. Each RAG pattern extends a base class and overrides only the steps it needs. Embeddings are cached automatically so running compare across all 8 patterns doesn't re-embed your docs 8 times.

See Architecture Guide for details.

Development

make install # Install with dev dependencies

make test # Run unit tests

make lint # Lint with ruff

make format # Auto-format with ruff

make type-check # Type check with mypy

make check # Run all checks

See CONTRIBUTING.md for the full guide.

Author

Built by Aamir Shahzad — backend engineer building data systems and AI infrastructure.

License

Project details

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file rag_playbook-0.2.2.tar.gz.

File metadata

- Download URL: rag_playbook-0.2.2.tar.gz

- Upload date:

- Size: 2.7 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

c69de1ca44279a2a7dd5c2a1a27d929456d0906f6274bd75602951ca62bd75de

|

|

| MD5 |

35c345f9d74e9ecf483e168b3eed7732

|

|

| BLAKE2b-256 |

4b6ad1ed61fdeb2f466247dbcd90eff9feedd68bde073d352c7357959819257d

|

File details

Details for the file rag_playbook-0.2.2-py3-none-any.whl.

File metadata

- Download URL: rag_playbook-0.2.2-py3-none-any.whl

- Upload date:

- Size: 47.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

9110922ed05253a3f5a68f7ccf44c9aa6278874c5a67ede09508097f4a72cdba

|

|

| MD5 |

65150dc3e42517b0239094929e7f4840

|

|

| BLAKE2b-256 |

5cd9ed65eaf6a60730c55eb5843e49d4d7f1522f509bad8432511d7455f3c9f9

|