Toolkit for transforming molecular dynamics (MD) trajectories into rich graph representations

Project description

SAWNERGY

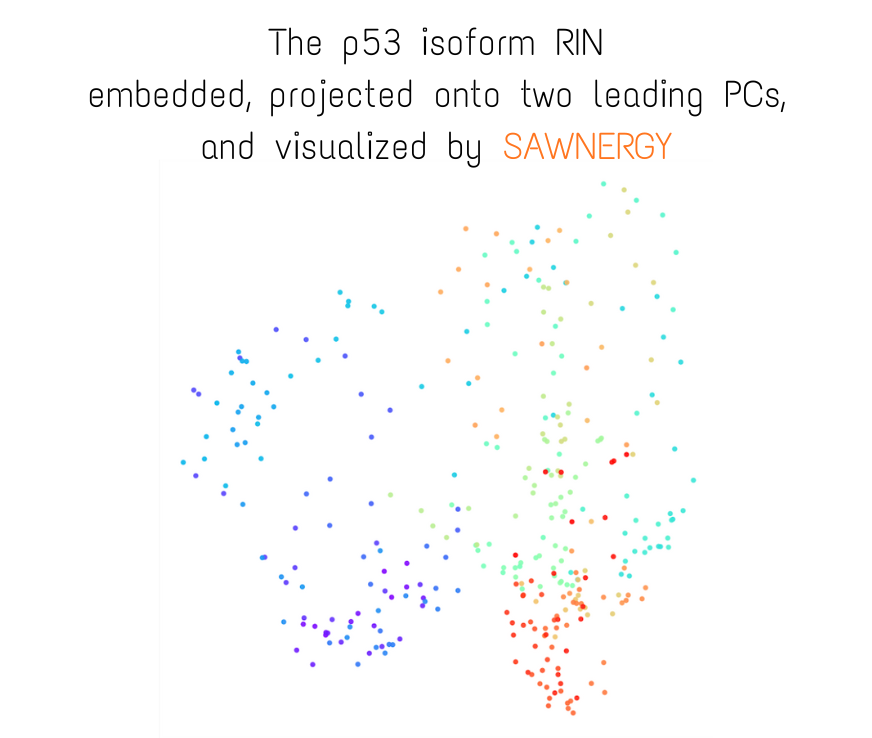

A toolkit for transforming molecular dynamics (MD) trajectories into rich graph representations, sampling

random and self-avoiding walks, learning node embeddings, and visualising residue interaction networks (RINs). SAWNERGY

keeps the full workflow — from cpptraj output to skip-gram embeddings (node2vec approach) — inside Python, backed by efficient Zarr-based archives and optional GPU acceleration.

Why SAWNERGY?

- Bridge simulations and graph ML: Convert raw MD trajectories into residue interaction networks ready for graph algorithms and downstream machine learning tasks.

- Deterministic, shareable artefacts: Every stage produces compressed Zarr archives that contain both data and metadata so runs can be reproduced, shared, or inspected later.

- High-performance data handling: Heavy arrays live in shared memory during walk sampling to allow parallel processing without serealization overhead; archives are written in chunked, compressed form for fast read/write.

- Flexible embedding backends: Train skip-gram with negative sampling (SGNS) models using either PureML or PyTorch.

- Visualization out of the box: Plot and animate residue networks without leaving Python, using the data produced by RINBuilder

Pipeline at a Glance

MD Trajectory + Topology

│

▼

RINBuilder

│ → RIN archive (.zip/.zarr) → Visualizer (display/animate RINs)

▼

Walker

│ → Walks archive (RW/SAW per frame)

▼

Embedder

│ → Embedding archive (frame × vocab × dim)

▼

Downstream ML

Each stage consumes the archive produced by the previous one. Metadata embedded in the archives ensures frame order, node indexing, and RNG seeds stay consistent across the toolchain.

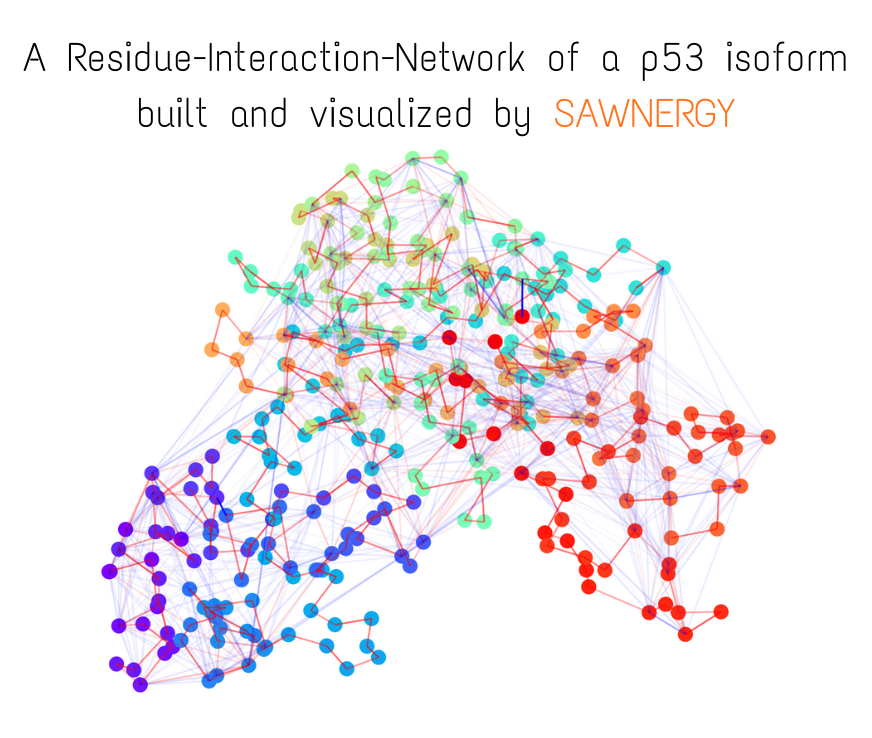

Small visual example (constructed fully from trajectory and topology files)

Core Components

sawnergy.rin.RINBuilder

- Wraps the AmberTools

cpptrajexecutable to:- compute per-frame electrostatic (EMAP) and van der Waals (VMAP) energy matrices at the atomic level,

- project atom–atom interactions to residue–residue interactions using compositional masks,

- prune, symmetrise, remove self-interactions, and L1-normalise the matrices,

- compute per-residue centres of mass (COM) over the same frames.

- Outputs a compressed Zarr archive with transition matrices, optional prenormalised energies, COM snapshots, and rich metadata (frame range, pruning quantile, molecule ID, etc.).

- Supports parallel

cpptrajexecution, batch processing, and keeps temporary stores tidy viaArrayStorage.compress_and_cleanup.

sawnergy.visual.Visualizer

- Opens RIN archives, resolves dataset names from attributes, and renders nodes plus attractive/repulsive edge bundles in 3D using Matplotlib.

- Allows both static frame visualization and trajectory animation.

- Handles backend selection (

Aggfallback in headless environments) and offers convenient colour palettes viavisualizer_util.

sawnergy.walks.Walker

- Attaches to the RIN archive and loads attractive/repulsive transition matrices into shared memory using

walker_util.SharedNDArrayso multiple processes can sample without copying. - Samples random walks (RW) and self-avoiding walks (SAW), optionally time-aware, that is, walks move through transition matrices with transition probabilities proportional to cosine similarity between the current and next frame. Randomness is controlled by the seed passed to the class constructor.

- Persists walks as

(time, walk_id, length+1)tensors (1-based node indices) alongside metadata such aswalk_length,walks_per_node, and RNG scheme.

sawnergy.embedding.Embedder

- Consumes walk archives, generates skip-gram pairs, and normalises them to 0-based indices.

- Provides a unified interface to SGNS implementations:

- PureML backend (

SGNS_PureML): works with thepuremlecosystem, optimistic for CPU training. - PyTorch backend (

SGNS_Torch): usestorch.nn.Embeddingplays nicely with GPUs.

- PureML backend (

- Both

SGNS_PureMLandSGNS_Torchaccept training hyperparameters such as batch_size, LR, optimizer and LR_scheduler, etc. - Exposes

embed_frame(single frame) andembed_all(all frames, deterministic seeding per frame) which return the learned input embedding matrices and write them to disk when requested.

Supporting Utilities

sawnergy.sawnergy_utilArrayStorage: thin wrapper over Zarr v3 with helpers for chunk management, attribute coercion to JSON, and transparent compression to.ziparchives.- Parallel helpers (

elementwise_processor,compose_steps, etc.), temporary file management, logging, and runtime inspection utilities.

sawnergy.logging_util.configure_logging: configure rotating file/console logging consistently across scripts.

Archive Layouts

| Archive | Key datasets (name → shape, dtype) | Important attributes (root attrs) |

|---|---|---|

| RIN | ATTRACTIVE_transitions → (T, N, N), float32 • REPULSIVE_transitions → (T, N, N), float32 (optional) • ATTRACTIVE_energies → (T, N, N), float32 (optional) • REPULSIVE_energies → (T, N, N), float32 (optional) • COM → (T, N, 3), float32 |

time_created (ISO) • com_name = "COM" • molecule_of_interest (int) • frame_range = (start, end) inclusive • frame_batch_size (int) • prune_low_energies_frac (float in [0,1]) • attractive_transitions_name / repulsive_transitions_name (dataset names or None) • attractive_energies_name / repulsive_energies_name (dataset names or None) |

| Walks | ATTRACTIVE_RWs → (T, N·num_RWs, L+1), int32 (optional) • REPULSIVE_RWs → (T, N·num_RWs, L+1), int32 (optional) • ATTRACTIVE_SAWs → (T, N·num_SAWs, L+1), int32 (optional) • REPULSIVE_SAWs → (T, N·num_SAWs, L+1), int32 (optional) Note: node IDs are 1-based. |

time_created (ISO) • seed (int) • rng_scheme = "SeedSequence.spawn_per_batch_v1" • num_workers (int) • in_parallel (bool) • batch_size_nodes (int) • num_RWs / num_SAWs (ints) • node_count (N) • time_stamp_count (T) • walk_length (L) • walks_per_node (int) • attractive_RWs_name / repulsive_RWs_name / attractive_SAWs_name / repulsive_SAWs_name (dataset names or None) • walks_layout = "time_leading_3d" |

| Embeddings | FRAME_EMBEDDINGS → (frames_written, vocab_size, D), typically float32 |

time_created (ISO) • seed (int) • rng_scheme = "SeedSequence.spawn_per_frame_v1" • source_walks_path (str) • model_base = "torch" or "pureml" • rin_type = "attr" or "repuls" • using_mode = `"RW" |

Notes

- In RIN,

Tequals the number of frame batches written (i.e.,frame_rangeswept in steps offrame_batch_size).ATTRACTIVE/REPULSIVE_energiesare pre-normalised absolute energies (written only whenkeep_prenormalized_energies=True), whereasATTRACTIVE/REPULSIVE_transitionsare the row-wise L1-normalised versions used for sampling. - All archives are Zarr v3 groups. ArrayStorage also maintains per-block metadata in root attrs:

array_chunk_size_in_block,array_shape_in_block, andarray_dtype_in_block(dicts keyed by dataset name). You’ll see these in every archive.

Installation

pip install sawnergy

Note: RIN building requires

cpptraj(AmberTools). Ensure it is discoverable via$PATHor theCPPTRAJenvironment variable.

Quick Start

from pathlib import Path

from sawnergy.logging_util import configure_logging

from sawnergy.rin import RINBuilder

from sawnergy.walks import Walker

from sawnergy.embedding import Embedder

import logging

configure_logging("./logs", file_level=logging.WARNING, console_level=logging.INFO)

# 1. Build a Residue Interaction Network archive

rin_path = Path("./RIN_demo.zip")

rin_builder = RINBuilder()

rin_builder.build_rin(

topology_file="system.prmtop",

trajectory_file="trajectory.nc",

molecule_of_interest=1,

frame_range=(1, 100),

frame_batch_size=10,

prune_low_energies_frac=0.3,

output_path=rin_path,

include_attractive=True,

include_repulsive=False,

)

# 2. Sample walks from the RIN

walker = Walker(rin_path, seed=123)

walks_path = Path("./WALKS_demo.zip")

walker.sample_walks(

walk_length=16,

walks_per_node=32,

saw_frac=0.25,

include_attractive=True,

include_repulsive=False,

time_aware=False,

output_path=walks_path,

in_parallel=False,

)

walker.close()

# 3. Train embeddings per frame (PyTorch backend)

import torch

embedder = Embedder(walks_path, base="torch", seed=999)

embeddings_path = embedder.embed_all(

RIN_type="attr",

using="merged",

window_size=4,

num_negative_samples=5,

num_epochs=5,

batch_size=1024,

dimensionality=128,

shuffle_data=True,

output_path="./EMBEDDINGS_demo.zip",

sgns_kwargs={

"optim": torch.optim.Adam,

"optim_kwargs": {"lr": 1e-3},

"lr_sched": torch.optim.lr_scheduler.LambdaLR,

"lr_sched_kwargs": {"lr_lambda": lambda _: 1.0},

"device": "cuda" if torch.cuda.is_available() else "cpu",

},

)

print("Embeddings written to", embeddings_path)

For the PureML backend, supply the relevant optimiser and scheduler via

sgns_kwargs(for exampleoptim=pureml.optimizers.Adam,lr_sched=pureml.optimizers.CosineAnnealingLR).

Visualisation

from sawnergy.visual import Visualizer

v = sawnergy.visual.Visualizer("./RIN_demo.zip")

v.build_frame(1,

node_colors="rainbow",

displayed_nodes="ALL",

displayed_pairwise_attraction_for_nodes="DISPLAYED_NODES",

displayed_pairwise_repulsion_for_nodes="DISPLAYED_NODES",

show_node_labels=True,

show=True

)

Visualizer lazily loads datasets and works even in headless environments (falls back to the Agg backend).

Advanced Notes

- Time-aware walks: Set

time_aware=True, providestickinessandon_no_optionswhen callingWalker.sample_walks. - Shared memory lifecycle: Call

Walker.close()(or use a context manager) to release shared-memory segments. - PureML vs PyTorch: Choose the backend via

Embedder(..., base="pureml"|"torch")and provide backend-specific constructor kwargs throughsgns_kwargs(optimizer, scheduler, device). - ArrayStorage utilities: Use

ArrayStoragedirectly to peek into archives, append arrays, or manage metadata.

Project Structure

├── sawnergy/

│ ├── rin/ # RINBuilder and cpptraj integration helpers

│ ├── walks/ # Walker class and shared-memory utilities

│ ├── embedding/ # Embedder + SGNS backends (PureML / PyTorch)

│ ├── visual/ # Visualizer and palette utilities

│ ├── logging_util.py

│ └── sawnergy_util.py

│

└── README.md

Acknowledgements

SAWNERGY builds on the AmberTools cpptraj ecosystem, NumPy, Matplotlib, Zarr, and PyTorch (for GPU acceleration if necessary; PureML is available by default).

Big thanks to the upstream communities whose work makes this toolkit possible.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file sawnergy-1.0.4.tar.gz.

File metadata

- Download URL: sawnergy-1.0.4.tar.gz

- Upload date:

- Size: 82.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.1

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

b861caa4293417c31ffc528d7466c6c18eec687f19de1a003cd5212877bd5ec9

|

|

| MD5 |

ffbbc326c5d895319b728deb703778ec

|

|

| BLAKE2b-256 |

79cdc8118589463a0e795514ca1cbf7d2c1c9819d8ba9e734432ee417c0c960e

|

File details

Details for the file sawnergy-1.0.4-py3-none-any.whl.

File metadata

- Download URL: sawnergy-1.0.4-py3-none-any.whl

- Upload date:

- Size: 81.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.1

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

82fdba5ec1da44daf80817ed3cf205b507029d7b131455f54b4a7dd7d11eb775

|

|

| MD5 |

7f58074cbe3133c8143d4228436bb943

|

|

| BLAKE2b-256 |

1bdd081125c24ed846e2af8a1b8263a68eee589967563dcdc70196c75458e9e2

|