Specular differentiation in normed vector spaces and its applications

Specular Differentiation

The Python package specular implements specular differentiation, which generalizes classical differentiation.

This implementation strictly follows the definitions, notations, and results in [1] and [2].

A specular derivative (the red line) can be understood as the slope of the line whose inclination angle is the average of the inclination angles of the right and left derivatives. In contrast, a symmetric derivative (the purple line) is the average of the right and left derivatives themselves. The following figure illustrates their difference.
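The distinction between averaging angles and averaging slopes can be sketched in plain Python. This is a minimal illustration that does not use the specular package: the helper names below are hypothetical, and the one-sided derivatives are approximated by simple finite differences with an arbitrarily chosen step h.

```python
import math


def one_sided_slopes(f, x, h=1e-6):
    """Approximate the left and right derivatives of f at x (finite differences)."""
    right = (f(x + h) - f(x)) / h
    left = (f(x) - f(x - h)) / h
    return left, right


def specular_from_slopes(left, right):
    """Slope of the line whose inclination angle is the average of the two angles."""
    return math.tan((math.atan(left) + math.atan(right)) / 2.0)


def symmetric_from_slopes(left, right):
    """Plain average of the two one-sided derivatives."""
    return (left + right) / 2.0


# A kink at the origin with left slope 1 and right slope 3.
kink = lambda x: max(x, 3 * x)

left, right = one_sided_slopes(kink, 0.0)
print(specular_from_slopes(left, right))   # about 1.618
print(symmetric_from_slopes(left, right))  # about 2.0
```

When the two one-sided slopes agree, both quantities reduce to the ordinary derivative; they differ only at a kink such as the one above.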

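specular also includes two applications, described under Applications below: numerical schemes for ordinary differential equations and optimization methods for nonsmooth convex functions.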

Installation

Requirements

specular-differentiation requires:

- Python >= 3.11

- ipython >= 8.12.3

- matplotlib >= 3.10.8

- numpy >= 2.4.0

- pandas >= 2.3.3

- tqdm >= 4.67.1
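Before installing, it may help to check an existing environment against the requirements above. The following is a rough sketch using only the standard library; the helper names are hypothetical, and the version comparison is deliberately crude (it looks only at the first three numeric components), so treat its verdict as a hint rather than a guarantee.

```python
import re
import sys
from importlib.metadata import PackageNotFoundError, version

# Minimum versions copied from the requirements list above.
REQUIRED = {
    "ipython": "8.12.3",
    "matplotlib": "3.10.8",
    "numpy": "2.4.0",
    "pandas": "2.3.3",
    "tqdm": "4.67.1",
}


def version_tuple(text):
    """Turn '2.4.0' into (2, 4, 0), keeping at most three numeric parts."""
    return tuple(int(part) for part in re.findall(r"\d+", text)[:3])


def check_requirements(required=REQUIRED):
    """Map each package name to (installed version or None, meets minimum?)."""
    report = {}
    for name, minimum in required.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            report[name] = (None, False)
            continue
        report[name] = (installed, version_tuple(installed) >= version_tuple(minimum))
    return report


print("Python >= 3.11:", sys.version_info >= (3, 11))
for name, (installed, ok) in check_requirements().items():
    print(f"{name}: installed={installed}, ok={ok}")
```

pip resolves these dependencies automatically during installation, so this check is only useful for diagnosing an environment that already exists.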

User installation

Standard Installation (NumPy backend)

The package is available on PyPI:

pip install specular-differentiation

Advanced Installation (JAX backend)

By default, the package uses the NumPy backend (CPU). To enable hardware acceleration, you can install the package with the JAX backend (GPU/TPU). This adds the following dependencies:

- JAX (jax, jaxlib >= 0.4):

pip install "specular-differentiation[jax]"

Developer installation

To install all dependencies, including those for tests, docs, and examples, run an editable install from a local copy of the source:

pip install -e ".[dev]"

Quick start

The following simple example calculates the specular derivative of the ReLU function $f(x) = \max(0, x)$ at the origin.

>>> import specular

>>>

>>> ReLU = lambda x: max(x, 0)

>>> specular.derivative(ReLU, x=0)

0.41421356237309515
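The printed value can be checked by hand from the angle-averaging description given earlier. The derivation below is a sketch: the notation $f'_{+}$ and $f'_{-}$ for the right and left derivatives and the plain $\wedge$ superscript are assumptions made here for readability, not necessarily the notation of [1] or [2]. For the ReLU function at the origin, the right derivative is 1 and the left derivative is 0, so

```latex
\[
  f^{\wedge}(0)
  = \tan\!\left( \frac{\arctan f'_{+}(0) + \arctan f'_{-}(0)}{2} \right)
  = \tan\!\left( \frac{\pi/4 + 0}{2} \right)
  = \tan\frac{\pi}{8}
  = \sqrt{2} - 1
  \approx 0.41421356 .
\]
```

This agrees with the output above up to floating-point rounding, whereas the symmetric derivative at the same point would be $(1 + 0)/2 = 0.5$.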

Tutorial

Detailed usage examples can be found in the documentation.

Applications

Specular differentiation is defined in normed vector spaces, allowing for applications in higher-dimensional Euclidean spaces. Two applications are provided in this repository.

Ordinary differential equation

In [1], seven schemes are proposed for solving ODEs numerically:

- the specular Euler schemes of Types 1 to 6

- the specular trigonometric scheme

The following example shows that the specular Euler schemes of Types 5 and 6 yield more accurate numerical solutions than the classical schemes: the explicit Euler, implicit Euler, and Crank-Nicolson schemes.
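For readers unfamiliar with the classical baselines named above, here is a minimal sketch of the explicit Euler, implicit Euler, and Crank-Nicolson schemes. It does not implement the specular schemes of [1] and does not reproduce the comparison itself. The linear test problem $u' = \lambda u$ is an assumption chosen here because the implicit updates can then be solved in closed form; all function names are hypothetical.

```python
import math


def explicit_euler(lam, u0, h, n):
    """u_{k+1} = u_k + h * lam * u_k"""
    u = u0
    for _ in range(n):
        u = u + h * lam * u
    return u


def implicit_euler(lam, u0, h, n):
    """u_{k+1} = u_k + h * lam * u_{k+1}, solved for u_{k+1}"""
    u = u0
    for _ in range(n):
        u = u / (1.0 - h * lam)
    return u


def crank_nicolson(lam, u0, h, n):
    """u_{k+1} = u_k + (h / 2) * lam * (u_k + u_{k+1}), solved for u_{k+1}"""
    u = u0
    for _ in range(n):
        u = u * (1.0 + 0.5 * h * lam) / (1.0 - 0.5 * h * lam)
    return u


# Test problem (an assumption): u' = -u, u(0) = 1, integrated up to t = 1.
lam, u0, h, n = -1.0, 1.0, 0.01, 100
exact = u0 * math.exp(lam * h * n)

for scheme in (explicit_euler, implicit_euler, crank_nicolson):
    error = abs(scheme(lam, u0, h, n) - exact)
    print(f"{scheme.__name__}: error = {error:.2e}")
```

On this smooth problem the two Euler schemes are first-order accurate and Crank-Nicolson is second-order, so the Crank-Nicolson error is noticeably smaller at the same step size.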

Optimization

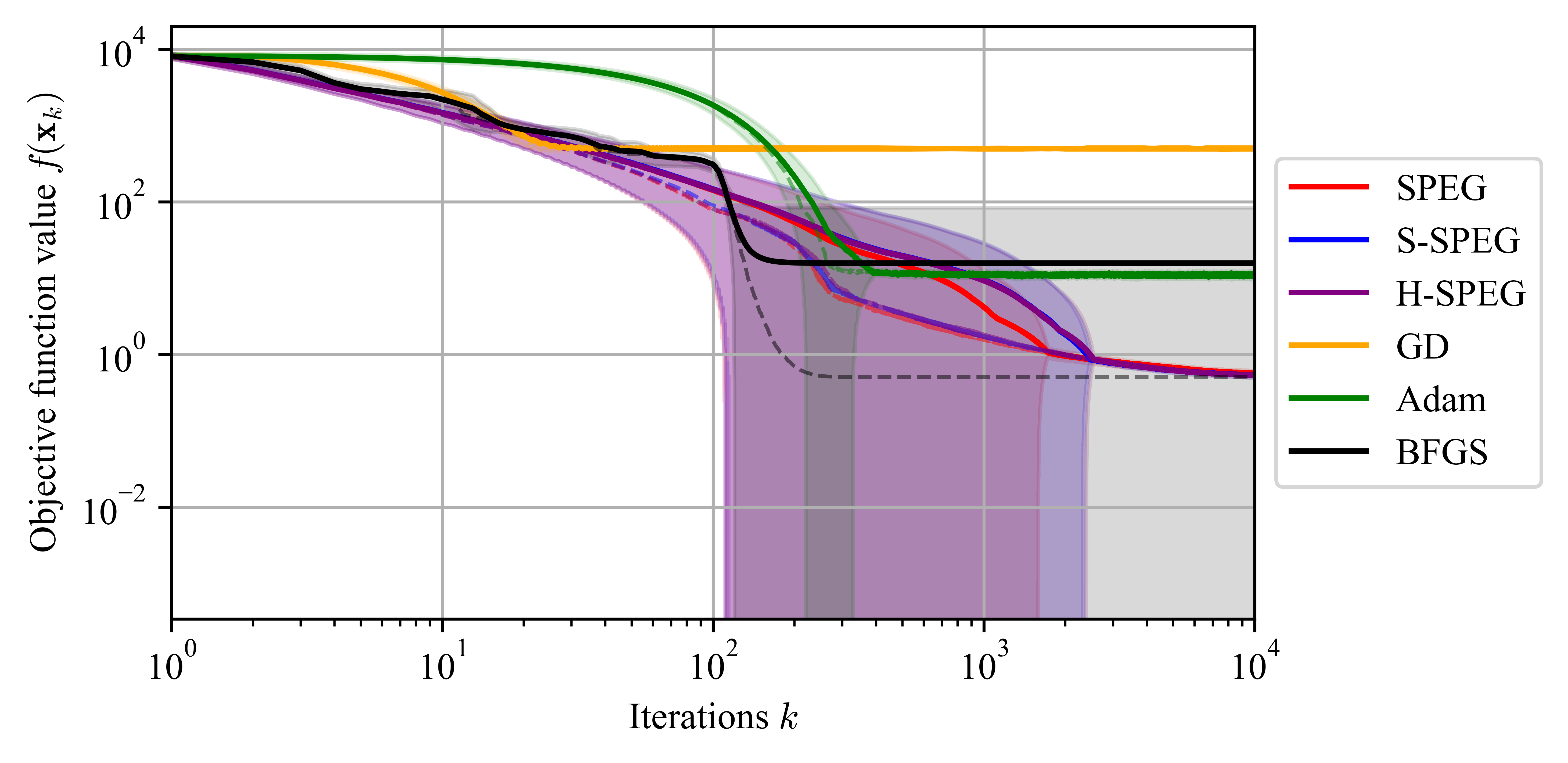

In [2], three methods are proposed for optimizing nonsmooth convex objective functions:

- the specular gradient (SPEG) method

- the stochastic specular gradient (S-SPEG) method

- the hybrid specular gradient (H-SPEG) method

The following example compares the three proposed methods with the classical methods: gradient descent (GD), Adaptive Moment Estimation (Adam), and Broyden-Fletcher-Goldfarb-Shanno (BFGS).

LaTeX symbol

To use the specular differentiation symbol in your LaTeX document, add the following code to your preamble (before \begin{document}):

% Required packages

\usepackage{graphicx}

\usepackage{bm}

% Definition of Specular Differentiation symbol

\newcommand\sd[1][.5]{\mathbin{\vcenter{\hbox{\scalebox{#1}{\,$\bm{\wedge}$}}}}}

Usage examples

Use the symbol in your document (after \begin{document}):

% A specular derivative in the one-dimensional Euclidean space

$f^{\sd}(x)$

% A specular directional derivative in normed vector spaces

$\partial^{\sd}_v f(x)$

References

[1] K. Jung. Nonlinear numerical schemes using specular differentiation for initial value problems of first-order ordinary differential equations. arXiv preprint arXiv:??, 2025.

[2] K. Jung. Specular differentiation in normed vector spaces and its applications to nonsmooth convex optimization. arXiv preprint arXiv:??, 2025.

[3] K. Jung and J. Oh. The specular derivative. arXiv preprint arXiv:2210.06062, 2022.

[4] K. Jung and J. Oh. The wave equation with specular derivatives. arXiv preprint arXiv:2210.06933, 2022.

[5] K. Jung and J. Oh. Nonsmooth convex optimization using the specular gradient method with root-linear convergence. arXiv preprint arXiv:2210.06933, 2024.

