TokLens: Looking Beyond Fertility in Tokenizer Evaluation


TokLens: A Multilingual Lens on Tokenizer Quality for LLMs

Accepted to ACL 2026 SRW. 🎉

Open-source toolkit for evaluating tokenizer quality across languages with six intrinsic metrics. We evaluate 24 tokenizers from major LLM families on 15 typologically diverse languages and correlate the metrics with downstream benchmark performance.

Key Findings

- Stark cross-lingual disparities persist. GPT-2 produces 56x more tokens per word in Japanese than in English, while Qwen2.5 and Gemma-2 reduce this gap to under 4x.

- No metric significantly predicts English benchmark performance once model size is controlled for (Bonferroni-corrected). Tokenizer quality does not drive English leaderboard scores.

- STRR significantly predicts multilingual performance. On MMLU-ProX, linear mixed-effects models show STRR has a large positive effect (β = +5.7, z = 18.5, p < 0.001).

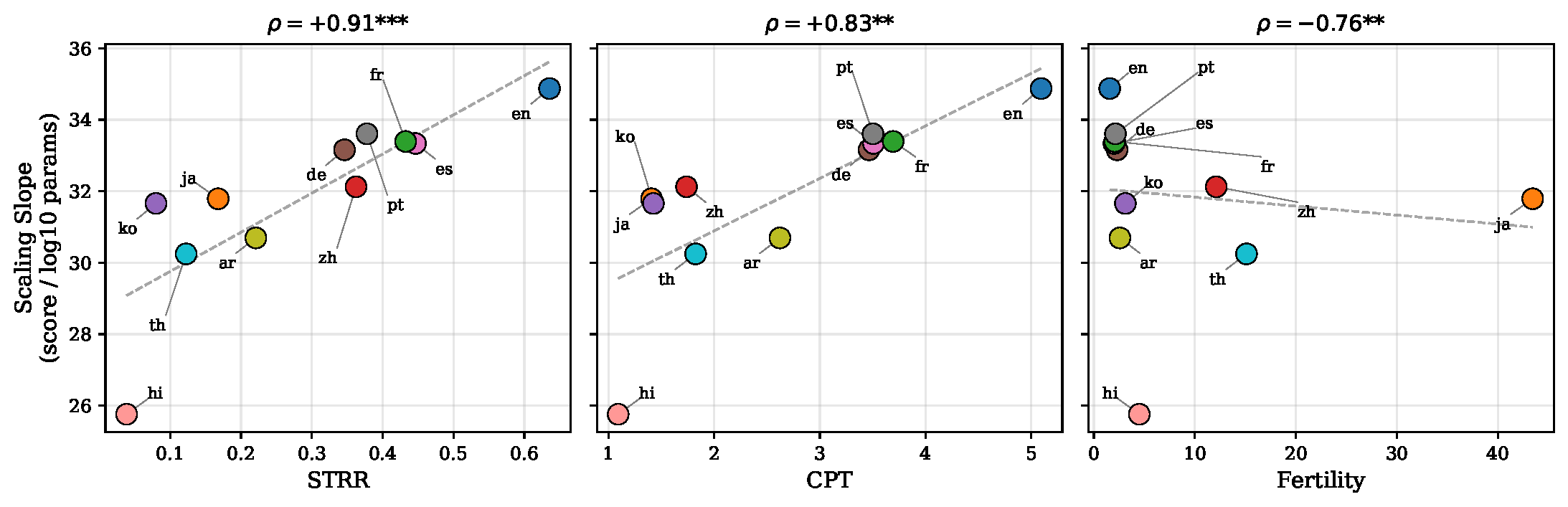

- Higher STRR correlates with steeper scaling. A controlled experiment on the Qwen2.5 family (fixed tokenizer, varying model size) shows languages with higher STRR scale more steeply (ρ = 0.91, p < 0.001).

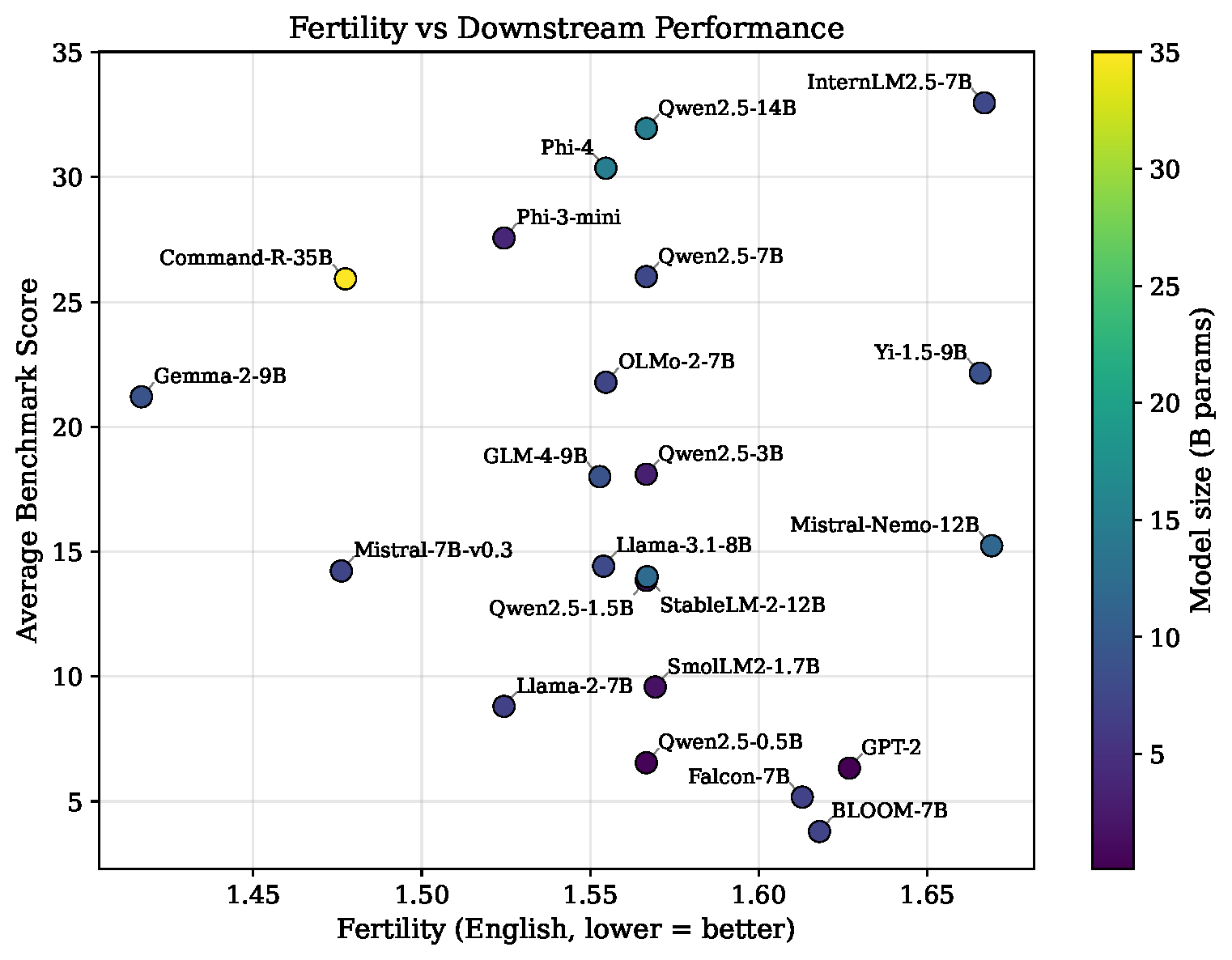

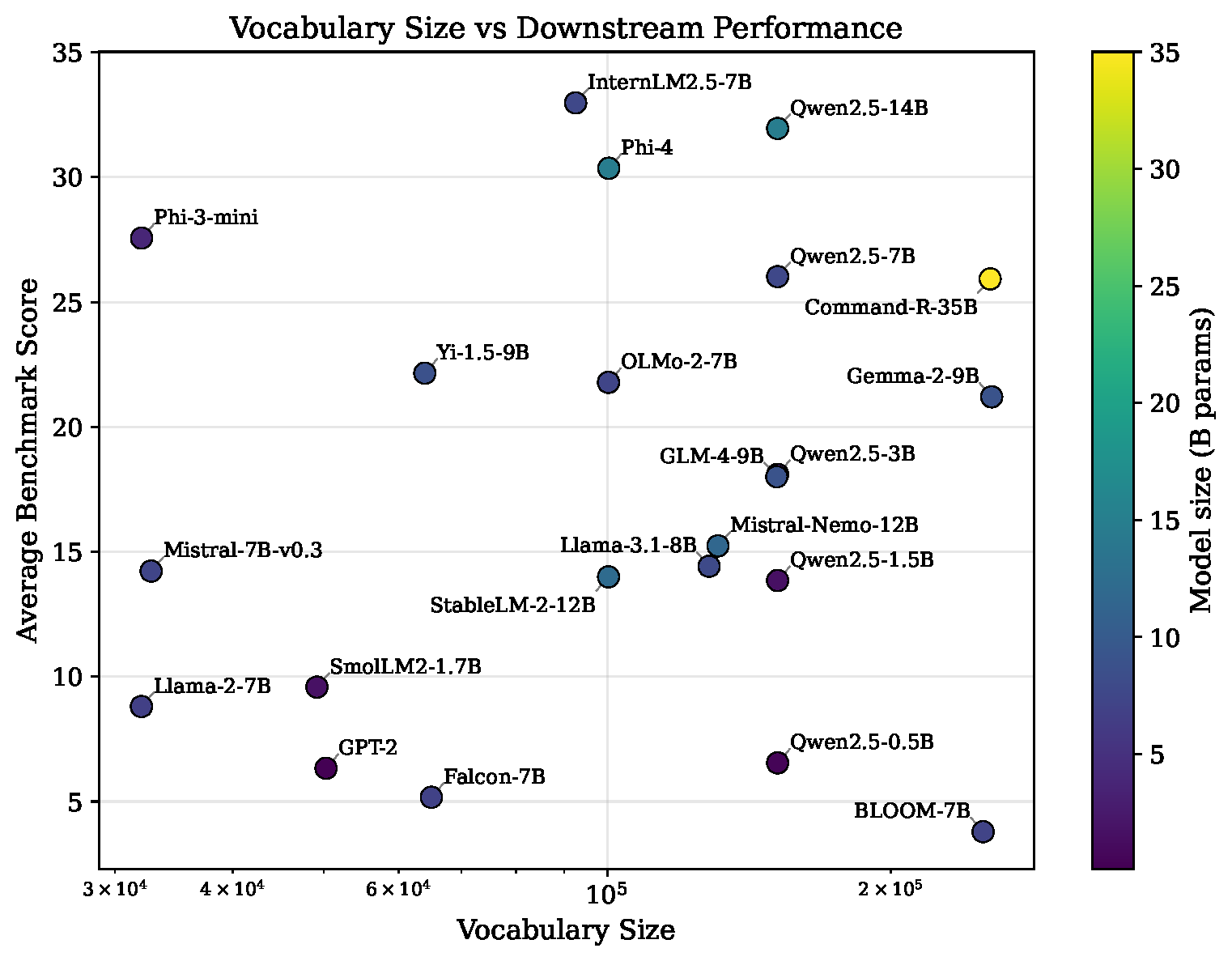

Benchmark correlations

|

|

Per-language scaling slope vs. tokenizer metrics (Qwen2.5 family)
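The controlled scaling analysis in the last finding can be sketched in two steps: for each language, fit a least-squares slope of benchmark score against log model size (tokenizer held fixed), then compute Spearman's ρ between those slopes and each language's STRR. This is a minimal pure-Python sketch under stated assumptions, not the TokLens implementation: the log10 parameter axis and the helper names are our own choices, every score and STRR value is a hypothetical placeholder, and the p-value is omitted.

```python
"""Sketch: correlate per-language scaling slopes with STRR.

All data below are HYPOTHETICAL placeholders, not TokLens results.
"""
import math
from typing import Dict, List


def ols_slope(xs: List[float], ys: List[float]) -> float:
    """Least-squares slope of ys regressed on xs (with intercept)."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    return cov / var


def ranks(values: List[float]) -> List[float]:
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def spearman(xs: List[float], ys: List[float]) -> float:
    """Spearman's rho: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))


# HYPOTHETICAL inputs: one fixed tokenizer, several model sizes (billions of params).
sizes_b = [0.5, 1.5, 3.0, 7.0, 14.0]
log_sizes = [math.log10(s) for s in sizes_b]
toy_scores: Dict[str, List[float]] = {  # made-up accuracy (%) per model size
    "en": [40.0, 48.0, 53.0, 60.0, 65.0],
    "de": [35.0, 42.0, 46.0, 52.0, 56.0],
    "ja": [25.0, 29.0, 32.0, 35.0, 38.0],
    "th": [20.0, 23.0, 25.0, 27.0, 29.0],
}
toy_strr = {"en": 0.80, "de": 0.60, "ja": 0.30, "th": 0.20}  # made-up STRR

langs = sorted(toy_scores)
slopes = {lang: ols_slope(log_sizes, toy_scores[lang]) for lang in langs}
rho = spearman([toy_strr[lang] for lang in langs], [slopes[lang] for lang in langs])
print(f"Spearman rho(STRR, slope) = {rho:.2f}")
```

With these made-up inputs the fitted slopes are ordered exactly like STRR, so the script prints ρ = 1.00; real per-language scores would be noisier.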

Metrics

| Metric | Description |
|---|---|
| Fertility | Tokens per whitespace-delimited word. Lower = better compression. |
| CPT | Characters per token. Higher = better compression. |
| Compression ratio | Bytes per token. Higher = better compression. |
| NSL | Normalized sequence length: token count relative to a reference tokenizer on the same text. |
| STRR | Single-token retention rate: fraction of words encoded as a single token. |
| Parity | Cross-lingual fairness: ratio of a sentence's token count to that of its parallel English sentence. |
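The definitions in the metrics table can be made concrete with a small sketch written against any callable that maps a string to a list of tokens. `toy_tokenize` (whitespace split, then fixed-size chunks) and the character-level reference `char_tokenize` are hypothetical stand-ins, not TokLens APIs. Two details are assumptions rather than the toolkit's documented behavior: CPT counts whitespace characters, and STRR tokenizes each word in isolation (real subword tokenizers often fold a leading space into the token, which this ignores). NSL and parity are computed as corpus-level ratios.

```python
"""Sketch of the six intrinsic metrics over a toy tokenizer.

`toy_tokenize` and `char_tokenize` are HYPOTHETICAL stand-ins; swap in any
callable that maps a string to a list of tokens.
"""
from typing import Callable, List

Tokenize = Callable[[str], List[str]]


def toy_tokenize(text: str, max_len: int = 4) -> List[str]:
    """Hypothetical tokenizer: split on whitespace, then cut words into <= max_len chars."""
    tokens: List[str] = []
    for word in text.split():
        tokens.extend(word[i:i + max_len] for i in range(0, len(word), max_len))
    return tokens


def char_tokenize(text: str) -> List[str]:
    """Hypothetical reference tokenizer: one token per non-space character."""
    return [c for c in text if not c.isspace()]


def _n_tokens(tok: Tokenize, texts: List[str]) -> int:
    return sum(len(tok(t)) for t in texts)


def fertility(tok: Tokenize, texts: List[str]) -> float:
    """Tokens per whitespace-delimited word (lower = better compression)."""
    return _n_tokens(tok, texts) / sum(len(t.split()) for t in texts)


def chars_per_token(tok: Tokenize, texts: List[str]) -> float:
    """CPT; assumption: whitespace characters are counted."""
    return sum(len(t) for t in texts) / _n_tokens(tok, texts)


def bytes_per_token(tok: Tokenize, texts: List[str]) -> float:
    """Compression ratio as UTF-8 bytes per token."""
    return sum(len(t.encode("utf-8")) for t in texts) / _n_tokens(tok, texts)


def strr(tok: Tokenize, texts: List[str]) -> float:
    """Share of whitespace words kept as a single token (each word tokenized alone)."""
    words = [w for t in texts for w in t.split()]
    return sum(len(tok(w)) == 1 for w in words) / len(words)


def nsl(tok: Tokenize, ref: Tokenize, texts: List[str]) -> float:
    """Token count relative to a reference tokenizer on the same texts."""
    return _n_tokens(tok, texts) / _n_tokens(ref, texts)


def parity(tok: Tokenize, text: str, english_text: str) -> float:
    """Token count of a sentence divided by that of its parallel English sentence."""
    return len(tok(text)) / len(tok(english_text))


en = ["the tokenizer works"]
de = ["der Tokenizer funktioniert"]  # parallel German sentence
print(fertility(toy_tokenize, en))           # 2.0
print(strr(toy_tokenize, en))                # 1/3: only "the" stays whole
print(nsl(toy_tokenize, char_tokenize, en))  # 6 / 17, about 0.353
print(parity(toy_tokenize, de[0], en[0]))    # 7 / 6, about 1.167
```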

Models and Languages

22 models with Open LLM Leaderboard v2 scores, plus 2 additional tokenizers (Qwen3, DeepSeek-V3) used only in the metric analysis.

15 languages across 6 scripts: English, Chinese, Japanese, Arabic, Hindi, German, Turkish, Korean, Thai, Russian, French, Spanish, Portuguese, Vietnamese, Indonesian.

Quickstart

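```bash
pip install toklens
```

```python
from toklens import Analyzer

# Load the tokenizer for a Hugging Face Hub model ID
analyzer = Analyzer.from_pretrained("meta-llama/Llama-3.1-8B")

# Compute the metrics for English, Chinese, Japanese, and Arabic
report = analyzer.evaluate(langs=["en", "zh", "ja", "ar"])

# Print the per-language results as a table
report.print_table()
```

From the command line:

```bash
# Evaluate one tokenizer on a set of languages
toklens eval meta-llama/Llama-3.1-8B --langs en zh ja ar

# Compare two tokenizers
toklens compare meta-llama/Llama-2-7b-hf meta-llama/Llama-3.1-8B
```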

Experiments

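To reproduce the full evaluation, run the pipeline steps in order:

```bash
# 1. Collect benchmark scores
uv run python -m experiments.pipeline.01_collect_benchmarks
# 2. Compute tokenizer metrics
uv run python -m experiments.pipeline.02_compute_metrics
# 3. Correlate metrics with benchmark scores
uv run python -m experiments.pipeline.03_correlation
# 4. Generate figures
uv run python -m experiments.pipeline.04_figures
```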

Supplementary analyses (linear mixed-effects models, Qtok comparison, BPB, Qwen2.5 scaling) are in `experiments/analyses/`. See `experiments/README.md` for details.
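The English-benchmark finding rests on correlations computed after controlling for model size, with a Bonferroni correction across the metrics tested. One common way to do this is a partial Spearman correlation: rank every variable, regress the metric ranks and the score ranks on the model-size ranks, and correlate the residuals. The sketch below assumes that approach; it is not necessarily what `03_correlation` runs, the helper names are hypothetical, and the toy inputs and p-values are made up. Computing the p-values themselves (for example with a t-test on the partial correlation) is left out.

```python
"""Sketch: partial Spearman correlation controlling for model size, plus a
Bonferroni correction. An assumed approach, not the TokLens pipeline code.
"""
import math
from typing import List


def ranks(values: List[float]) -> List[float]:
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def residualize(ys: List[float], xs: List[float]) -> List[float]:
    """Residuals of an OLS fit of ys on xs (with intercept)."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    return [y - (my + slope * (x - mx)) for x, y in zip(xs, ys)]


def partial_spearman(metric: List[float], score: List[float], size: List[float]) -> float:
    """Correlate metric and score ranks after removing the linear effect of size ranks."""
    r_size = ranks(size)
    res_metric = residualize(ranks(metric), r_size)
    res_score = residualize(ranks(score), r_size)
    return pearson(res_metric, res_score)


def bonferroni(pvals: List[float]) -> List[float]:
    """Multiply each p-value by the number of tests, capped at 1."""
    m = len(pvals)
    return [min(1.0, p * m) for p in pvals]


# HYPOTHETICAL toy inputs: one tokenizer metric, one benchmark, four models.
metric_values = [0.2, 0.4, 0.1, 0.3]
bench_scores = [30.0, 10.0, 40.0, 20.0]
model_sizes_b = [0.5, 1.5, 3.0, 7.0]
rho_partial = partial_spearman(metric_values, bench_scores, model_sizes_b)
print(f"partial rho = {rho_partial:.2f}")

# HYPOTHETICAL raw p-values, one per metric (six metrics).
raw_p = [0.004, 0.03, 0.2, 0.5, 0.01, 0.8]
adjusted = bonferroni(raw_p)
print([p < 0.05 for p in adjusted])
```

Because ranks are invariant to monotone transforms, residualizing on raw or log model size gives the same result here. With six tests, only the raw p-value of 0.004 survives the correction at α = 0.05.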

License

MIT


File details

Details for the file toklens-0.1.2.tar.gz.

File metadata

- Download URL: toklens-0.1.2.tar.gz

- Upload date:

- Size: 29.8 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.9.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 84fe9c29fe9d28add14510235c20034fb20c0efebd85ded57e57db8e23212dba |
| MD5 | 0d5fec95e6f60d6dbdbf30965eef5757 |
| BLAKE2b-256 | 2ad5fac1ef1200e4ca425aeb584085b326d4f5a6868d339f6cef9818c6c60df6 |

File details

Details for the file toklens-0.1.2-py3-none-any.whl.

File metadata

- Download URL: toklens-0.1.2-py3-none-any.whl

- Upload date:

- Size: 12.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.9.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 593af36edd754ef8494a1d992c4805e271bd98e196d793de4a3e82a72ce476e7 |
| MD5 | a9bc630dfb24cec31409124f208c6cd5 |
| BLAKE2b-256 | 48a8bdfa247dfcbf4a75b1850d188be09fbf6a1c5adf7c5cebb5d8757b8e2341 |