GPU-accelerated differentiable finite elements for solid mechanics with PyTorch.

torch-fem

Simple GPU-accelerated differentiable finite elements for solid mechanics with PyTorch. PyTorch enables efficient computation of sensitivities via automatic differentiation, which can then be used in optimization tasks.

Installation

You may install torch-fem via pip with

pip install torch-fem

Optional: For GPU support, install CUDA, PyTorch for CUDA, and the corresponding CuPy version.

For CUDA 11.8:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

pip install cupy-cuda11x # v11.2 - 11.8

For CUDA 12.9:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu129

pip install cupy-cuda12x # v12.x
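After installing, it can help to confirm which optional packages are actually importable in the active environment. The following is a minimal sketch using only the standard library; the helper name `available_backends` is hypothetical and not part of torch-fem. If PyTorch is present, `torch.cuda.is_available()` additionally reports whether it can see a CUDA device.

```python
import importlib.util


def available_backends(modules=("torch", "cupy")):
    """Report which of the given top-level modules can be imported.

    Hypothetical helper for illustration; it only checks that a module
    can be located, not that a GPU is present or usable.
    """
    return {name: importlib.util.find_spec(name) is not None for name in modules}


if __name__ == "__main__":
    for name, found in available_backends().items():
        print(f"{name}: {'found' if found else 'missing'}")
```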

Features

Elements

- 1D: Bar1, Bar2

- 2D: Quad1, Quad2, Tria1, Tria2

- 3D: Hexa1, Hexa2, Tetra1, Tetra2

- Shell: Flat-facet triangle (linear only)

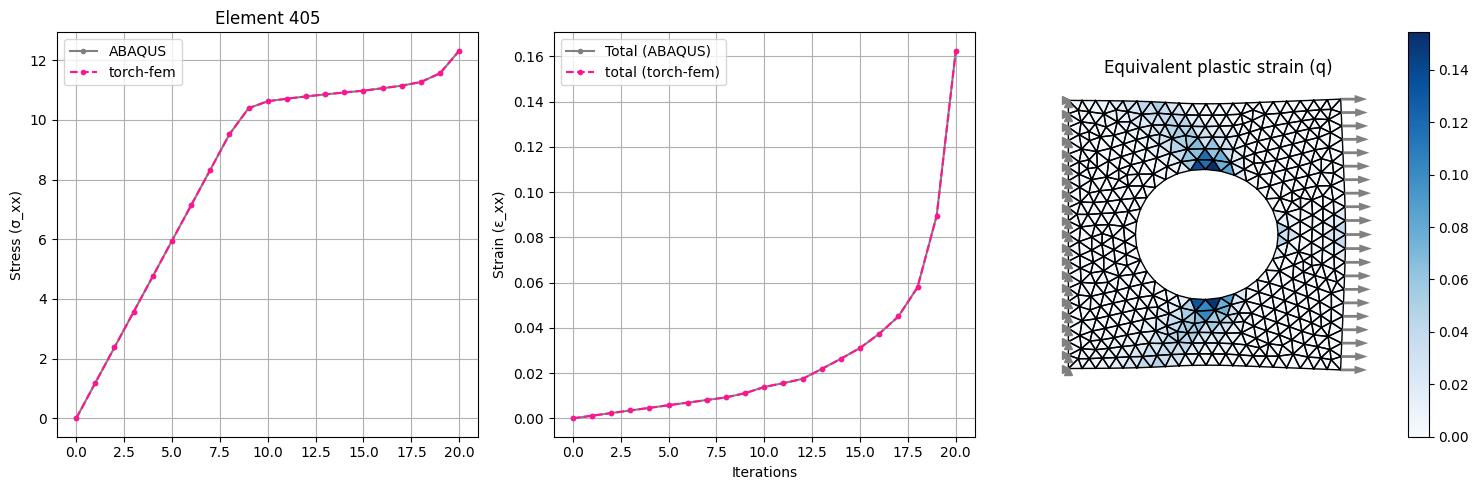

Material models

- Isotropic linear elasticity

- Orthotropic linear elasticity

- Isotropic small strain plasticity

- Isotropic small strain damage

- Hyperelasticity (via automatic differentiation of their energy function)

- Isotropic thermal conductivity

- Orthotropic thermal conductivity

- Custom user material interface

Utilities

- Homogenization of orthotropic elasticity for composites

- Simple structured meshing

- I/O to and from other mesh formats via meshio

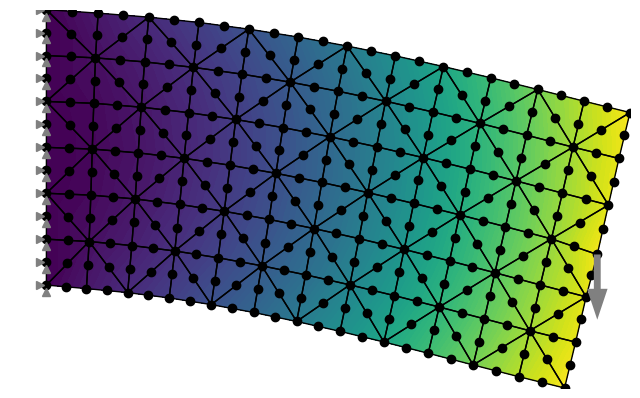

Basic examples

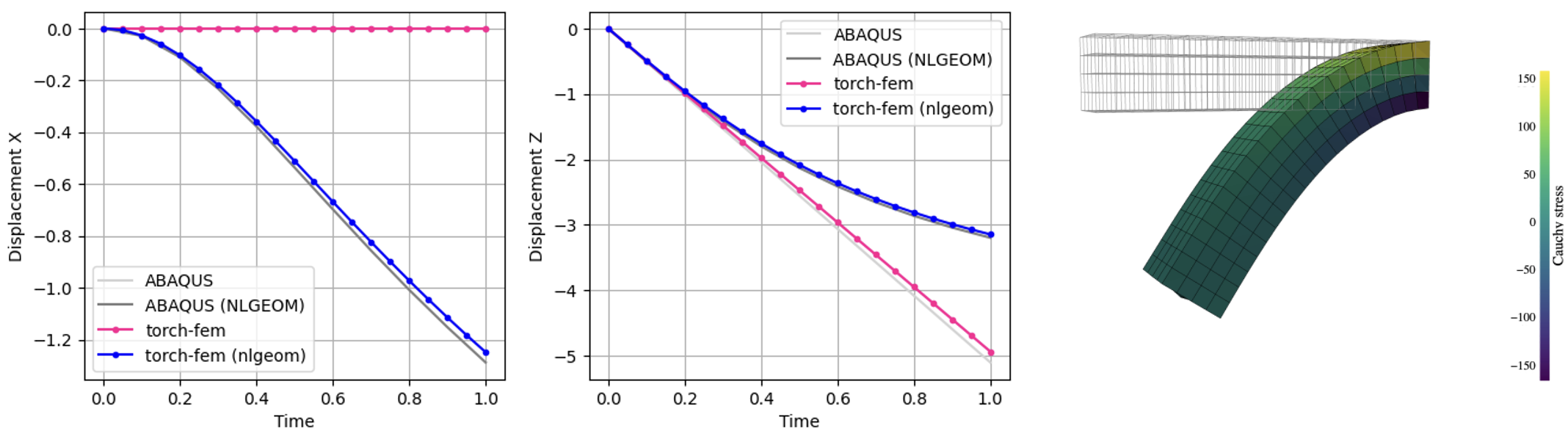

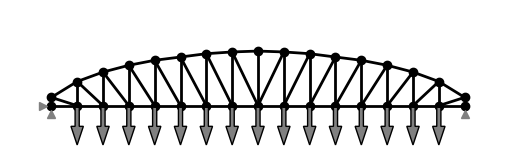

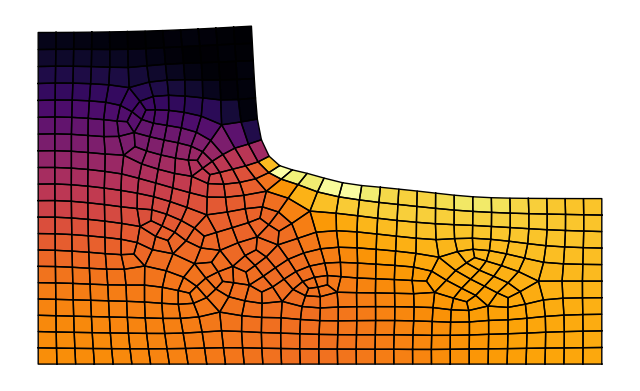

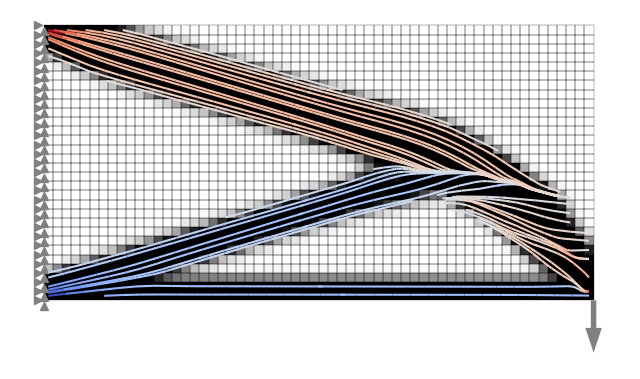

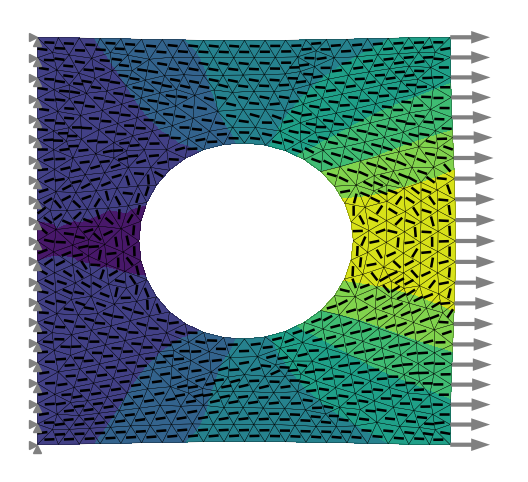

The subdirectory examples/basic contains several Jupyter notebooks demonstrating the use of torch-fem for trusses, planar problems, shells, and solids. You may click on the examples to check out the notebooks online.

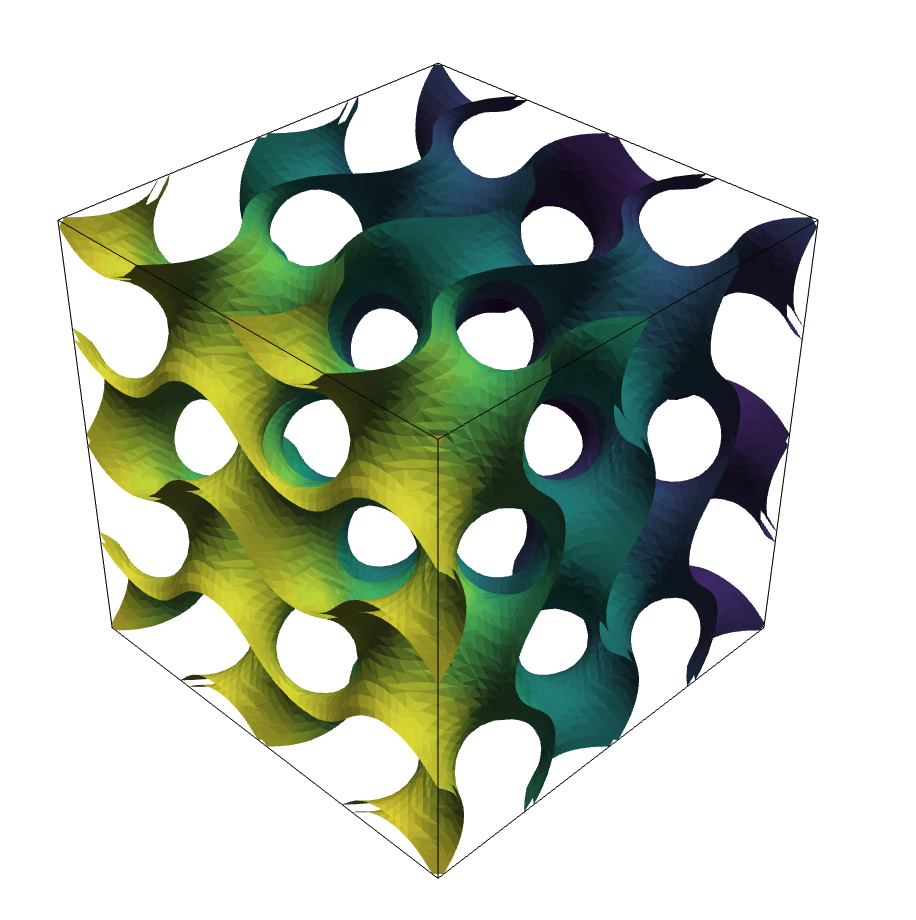

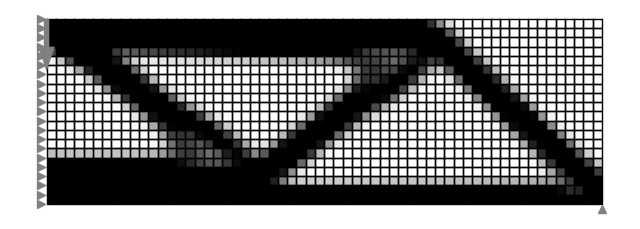

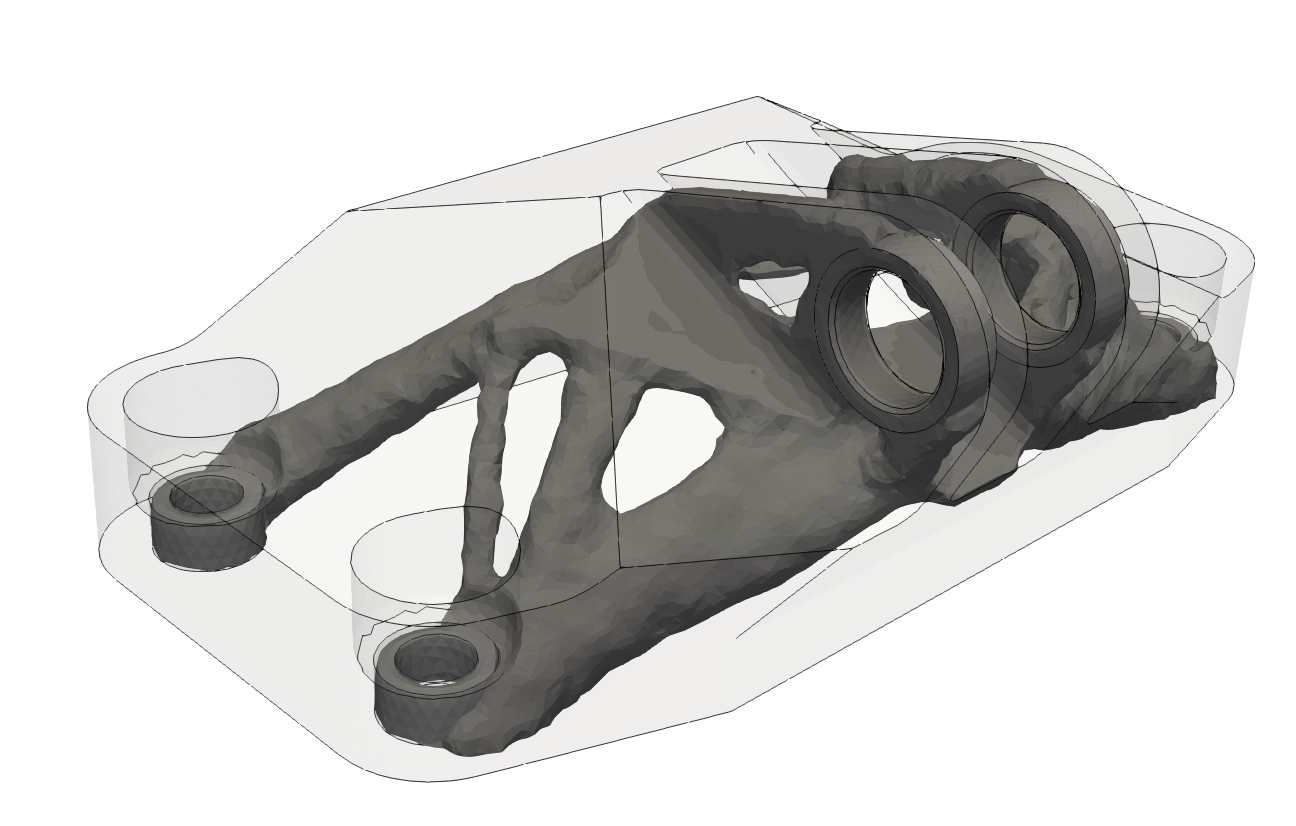

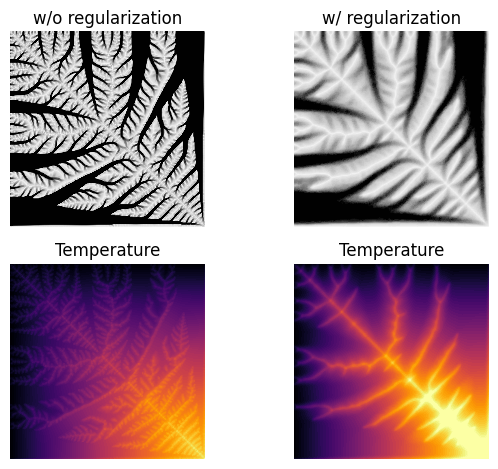

Optimization examples

The subdirectory examples/optimization demonstrates the use of torch-fem for the optimization of structures (e.g. topology optimization, composite orientation optimization). You may click on the examples to check out the notebooks online.

Minimal example

This is a minimal example of how to use torch-fem to solve a very simple planar cantilever problem.

import torch

from torchfem import Planar

from torchfem.materials import IsotropicElasticityPlaneStress

torch.set_default_dtype(torch.float64)

# Material

material = IsotropicElasticityPlaneStress(E=1000.0, nu=0.3)

# Nodes and elements

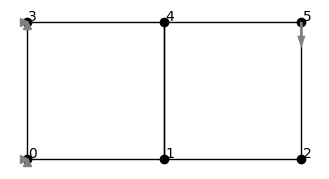

nodes = torch.tensor([[0., 0.], [1., 0.], [2., 0.], [0., 1.], [1., 1.], [2., 1.]])

elements = torch.tensor([[0, 1, 4, 3], [1, 2, 5, 4]])

# Create model

cantilever = Planar(nodes, elements, material)

# Load at tip [Node_ID, DOF]

cantilever.forces[5, 1] = -1.0

# Constrained displacement at left end [Node_IDs, DOFs]

cantilever.constraints[[0, 3], :] = True

# Show model

cantilever.plot(node_markers="o", node_labels=True)

This creates a minimal planar FEM model:
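The node and element arrays in this example are small enough to type by hand: nodes are numbered row by row, and each quad lists its four nodes counter-clockwise starting at the lower-left corner. For larger grids, arrays with the same layout could be generated programmatically. The sketch below is plain Python and only illustrates the numbering; `structured_quad_mesh` is a hypothetical helper, not the meshing utility that ships with torch-fem.

```python
def structured_quad_mesh(nx, ny, lx=1.0, ly=1.0):
    """Build nodes and 4-node quad connectivity for an nx-by-ny element grid.

    Nodes are numbered row by row (x index runs fastest). Each element lists
    its nodes counter-clockwise, starting at the lower-left corner.
    """
    dx, dy = lx / nx, ly / ny
    nodes = [[i * dx, j * dy] for j in range(ny + 1) for i in range(nx + 1)]
    elements = []
    for j in range(ny):
        for i in range(nx):
            n0 = j * (nx + 1) + i  # lower-left node of this element
            elements.append([n0, n0 + 1, n0 + nx + 2, n0 + nx + 1])
    return nodes, elements


# Reproduces the two-element cantilever mesh of the minimal example
nodes, elements = structured_quad_mesh(2, 1, lx=2.0, ly=1.0)
print(nodes)
print(elements)
```

The returned nested lists can be passed to `torch.tensor(...)` to obtain tensors of the same shape as those in the minimal example.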

# Solve

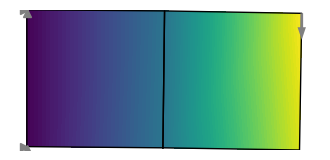

u, f, σ, F, α = cantilever.solve()

# Plot displacement magnitude on deformed state

cantilever.plot(u, node_property=torch.norm(u, dim=1))

This solves the model and plots the result:

To compute gradients through the FEM model, we simply need to define the variables that require gradients. Automatic differentiation is then performed through the entire FE solver. The backward pass does not differentiate through individual linear-solver or Newton iterations, because this would blow up memory use and the size of the autograd graph. Instead, the implicit function theorem is used to formulate an adjoint backward pass for solve().
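For reference, the general idea behind such an adjoint formulation can be written down in a few lines. The notation here is introduced for illustration only and is not taken from torch-fem: R is the discrete residual, u the converged solution, p the differentiable parameters, J a scalar objective, and λ the adjoint variable. How solve() implements this internally is not shown.

```latex
\begin{aligned}
R(u, p) &= 0
  && \text{(discrete equilibrium, e.g. } R = K(p)\,u - f \text{ for a linear problem)} \\
\frac{\partial R}{\partial u}\,\frac{\mathrm{d}u}{\mathrm{d}p} &= -\frac{\partial R}{\partial p}
  && \text{(implicit function theorem)} \\
\left(\frac{\partial R}{\partial u}\right)^{\!\top} \lambda &= \left(\frac{\partial J}{\partial u}\right)^{\!\top}
  && \text{(one linear adjoint solve)} \\
\frac{\mathrm{d}J}{\mathrm{d}p} &= \frac{\partial J}{\partial p} - \lambda^{\top}\,\frac{\partial R}{\partial p}
  && \text{(sensitivity)}
\end{aligned}
```

The cost of the backward pass is therefore one additional linear solve with the transposed tangent matrix, independent of how many iterations the forward solve needed.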

# Enable automatic differentiation

cantilever.thickness.requires_grad = True

u, f, _, _, _ = cantilever.solve(differentiable_parameters=cantilever.thickness)

# Compute sensitivity of compliance w.r.t. element thicknesses

compliance = torch.inner(f.ravel(), u.ravel())

torch.autograd.grad(compliance, cantilever.thickness)[0]
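To make the adjoint idea concrete without any dependencies, the sketch below computes a compliance sensitivity for a toy problem and checks it against central finite differences. It is not torch-fem code: the model is an assumed chain of two springs (fixed at one end, two free DOFs) whose stiffnesses scale linearly with a "thickness" parameter, and all names and the constant `C` are hypothetical.

```python
C = 1000.0  # assumed stiffness per unit thickness


def solve_2x2(K, b):
    """Solve K x = b for a 2x2 matrix K using Cramer's rule."""
    det = K[0][0] * K[1][1] - K[0][1] * K[1][0]
    x0 = (b[0] * K[1][1] - K[0][1] * b[1]) / det
    x1 = (K[0][0] * b[1] - K[1][0] * b[0]) / det
    return [x0, x1]


def stiffness(t):
    """Stiffness matrix of two springs in series with k_e = C * t_e."""
    k1, k2 = C * t[0], C * t[1]
    return [[k1 + k2, -k2], [-k2, k2]]


# dK/dt_e is constant because K is linear in the thicknesses
DK_DT = [
    [[C, 0.0], [0.0, 0.0]],
    [[C, -C], [-C, C]],
]


def compliance(t, f):
    u = solve_2x2(stiffness(t), f)
    return f[0] * u[0] + f[1] * u[1]


def compliance_gradient_adjoint(t, f):
    """dJ/dt via one forward solve and one adjoint solve (J = f . u)."""
    K = stiffness(t)
    u = solve_2x2(K, f)
    K_T = [[K[0][0], K[1][0]], [K[0][1], K[1][1]]]
    lam = solve_2x2(K_T, f)  # adjoint system K^T lam = dJ/du = f
    grad = []
    for dK in DK_DT:
        dK_u = [dK[0][0] * u[0] + dK[0][1] * u[1],
                dK[1][0] * u[0] + dK[1][1] * u[1]]
        grad.append(-(lam[0] * dK_u[0] + lam[1] * dK_u[1]))
    return grad


def compliance_gradient_fd(t, f, h=1e-6):
    """Central finite differences, used only as a reference."""
    grad = []
    for e in range(len(t)):
        tp, tm = list(t), list(t)
        tp[e] += h
        tm[e] -= h
        grad.append((compliance(tp, f) - compliance(tm, f)) / (2 * h))
    return grad


t = [1.0, 2.0]
f = [0.0, -1.0]
print("adjoint:", compliance_gradient_adjoint(t, f))
print("finite differences:", compliance_gradient_fd(t, f))
```

Both gradients are negative, which matches the expectation that increasing the thickness of either spring stiffens the structure and reduces compliance. The adjoint version needs two linear solves in total, whereas finite differences need two solves per parameter.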

Benchmarks

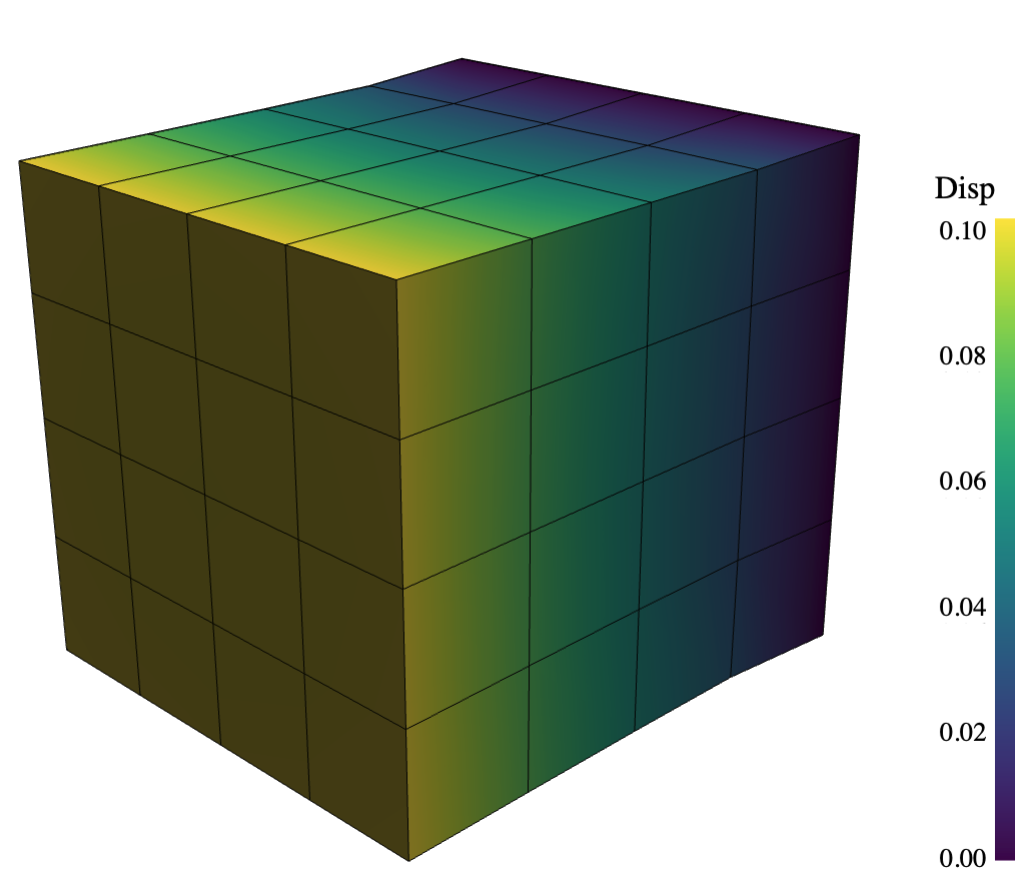

The following benchmarks were performed on a cube subjected to a one-dimensional extension. The cube is discretized with N x N x N linear hexahedral elements, has a side length of 1.0 and is made of a material with Young's modulus of 1000.0 and Poisson's ratio of 0.3. The cube is fixed at one end and a displacement of 0.1 is applied at the other end. The benchmark measures the forward time to assemble the stiffness matrix and the time to solve the linear system. In addition, it measures the backward time to compute the sensitivities of the sum of displacements with respect to forces.

Apple M1 Pro (10 cores, 16 GB RAM)

Python 3.10, SciPy 1.15.3, Apple Accelerate, float64

| N | DOFs | Setup | FWD Solve | BWD Solve | Peak RAM |
|---|---|---|---|---|---|
| 10 | 3000 | 0.02s | 0.16s | 0.37s | 490.4MB |
| 20 | 24000 | 0.14s | 0.74s | 0.35s | 895.6MB |
| 30 | 81000 | 0.52s | 2.73s | 0.84s | 1947.5MB |
| 40 | 192000 | 1.23s | 6.53s | 1.57s | 3060.2MB |
| 50 | 375000 | 2.63s | 13.02s | 3.22s | 4398.7MB |
| 60 | 648000 | 4.71s | 26.17s | 5.48s | 5789.2MB |
| 70 | 1029000 | 9.18s | 46.37s | 9.43s | 7893.5MB |
| 80 | 1536000 | 13.90s | 73.41s | 17.95s | 9739.0MB |

NVIDIA GeForce RTX 5090 (21,760 CUDA cores, 32 GB VRAM)

Python 3.13, CuPy 14.0.1, CUDA 12.9, float64

| N | DOFs | Setup | FWD Solve | BWD Solve | Peak RAM |
|---|---|---|---|---|---|
| 10 | 3000 | 0.27s | 0.45s | 0.46s | 1682.9MB |
| 20 | 24000 | 0.24s | 0.49s | 0.49s | 1703.6MB |
| 30 | 81000 | 0.25s | 0.60s | 0.59s | 1707.4MB |
| 40 | 192000 | 0.25s | 0.78s | 0.73s | 1738.4MB |
| 50 | 375000 | 0.28s | 1.08s | 0.94s | 1710.4MB |
| 60 | 648000 | 0.32s | 1.54s | 1.27s | 1710.8MB |
| 70 | 1029000 | 0.39s | 2.13s | 1.73s | 1728.7MB |
| 80 | 1536000 | 0.49s | 3.76s | 3.22s | 2220.5MB |
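The two tables can be compared directly with a little arithmetic. The sketch below copies the forward-solve times from both tables and prints the ratio of CPU time to GPU time for each problem size. It is only a rough comparison, since the two runs also differ in Python version and solver backend. For the smallest problem (N = 10) the GPU run is slower than the CPU run, while for N = 80 the forward solve is roughly 19.5 times faster. The DOFs column in both tables equals 3·N³, which the script also reproduces.

```python
# Forward-solve times in seconds, copied from the two benchmark tables
n_values = [10, 20, 30, 40, 50, 60, 70, 80]
cpu_fwd = [0.16, 0.74, 2.73, 6.53, 13.02, 26.17, 46.37, 73.41]  # Apple M1 Pro
gpu_fwd = [0.45, 0.49, 0.60, 0.78, 1.08, 1.54, 2.13, 3.76]      # RTX 5090


def speedups(cpu_times, gpu_times):
    """Ratio of CPU time to GPU time; values above 1 mean the GPU run is faster."""
    return [c / g for c, g in zip(cpu_times, gpu_times)]


for n, s in zip(n_values, speedups(cpu_fwd, gpu_fwd)):
    dofs = 3 * n**3  # matches the DOFs column of the tables
    print(f"N={n:2d}  DOFs={dofs:8d}  FWD speedup={s:6.2f}x")
```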

Alternatives

There are many alternative FEM solvers in Python that you may also consider:

- Non-differentiable

- Differentiable

Download files

File details

Details for the file torch_fem-0.6.1.tar.gz.

File metadata

- Download URL: torch_fem-0.6.1.tar.gz

- Upload date:

- Size: 2.9 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | bd66290056b54b03d663d41de48a470fd6fdae0beb8734cbb25fc32bb18b51fe |
| MD5 | 1f9829e3394df9962ad88d2dbbc94296 |
| BLAKE2b-256 | 6ecb35504833a5092559427d312a85b014234c3c568d48f14185f6d88e731515 |

File details

Details for the file torch_fem-0.6.1-py3-none-any.whl.

File metadata

- Download URL: torch_fem-0.6.1-py3-none-any.whl

- Upload date:

- Size: 2.9 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e498fb7383d2b57cc05d147c33b3d1e3c2b4d381233cd59567d6697b80a51987 |
| MD5 | 9bd94a4d04fe09c48aea78008280bfa2 |
| BLAKE2b-256 | 3996b926c1c46ef6baddfe037679c588bc63814ca1817876a831226deda15480 |