A type-safe wrapper around BeautifulSoup and related HTML parsing utilities


typed-soup

A type-safe wrapper around BeautifulSoup and utilities for parsing HTML/XML with robust return types and error handling. Extracted from Open-Gov Crawlers.

Motivation

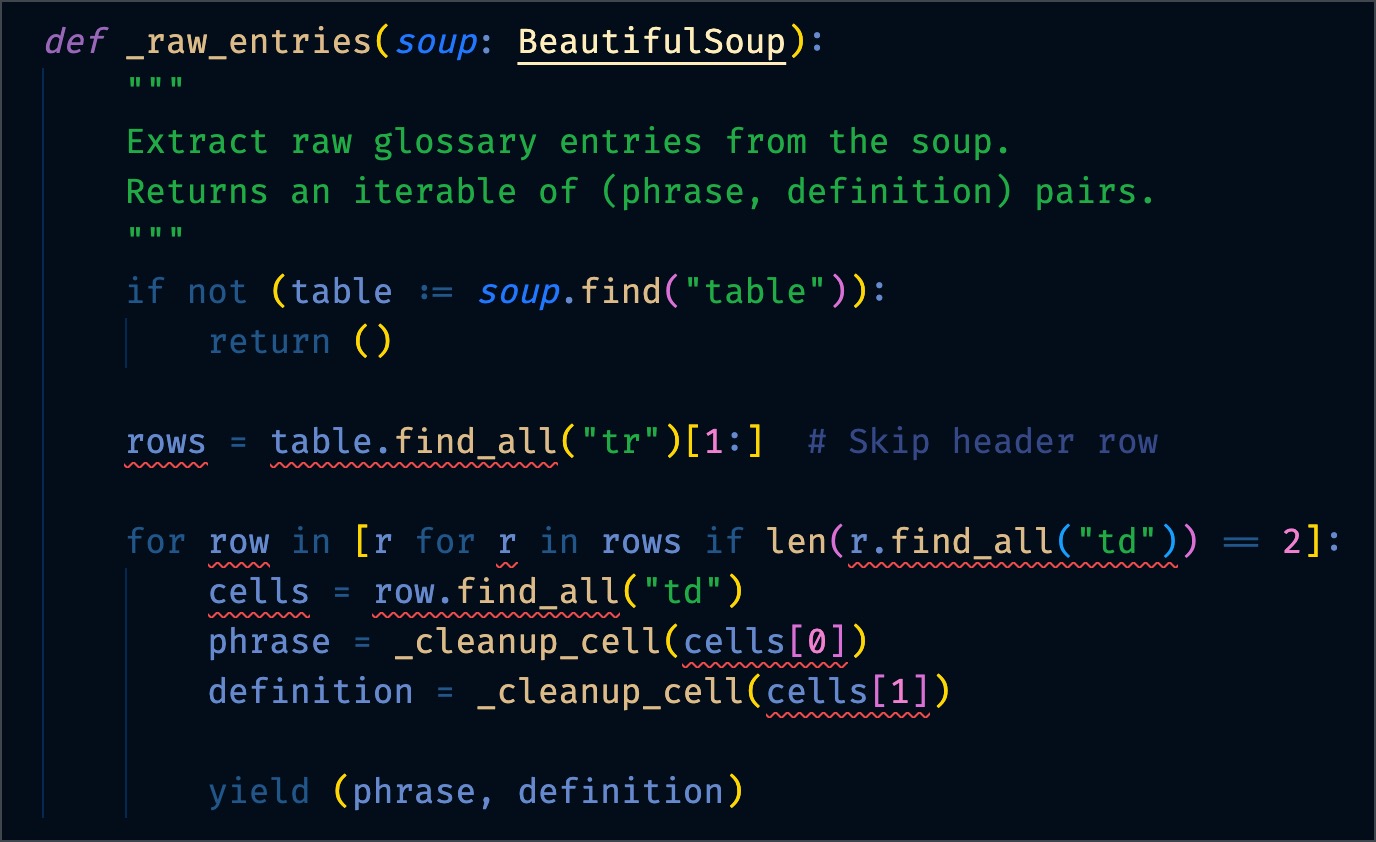

This is an example from production code.

Before

Here are the first five errors. There are 16 in total.

error: Type of "rows" is partially unknown

Type of "rows" is "list[PageElement | Tag | NavigableString] | Unknown" (reportUnknownVariableType)

error: Type of "find_all" is partially unknown

Type of "find_all" is "Unknown | ((name: str | bytes | Pattern[str] | bool | ((Tag) -> bool) | Iterable[str | bytes | Pattern[str] | bool | ((Tag) -> bool)] | ElementFilter | None = None, attrs: Dict[str, str | bytes | Pattern[str] | bool | ((str) -> bool) | Iterable[str | bytes | Pattern[str] | bool | ((str) -> bool)]] = {}, recursive: bool = True, string: str | bytes | Pattern[str] | bool | ((str) -> bool) | Iterable[str | bytes | Pattern[str] | bool | ((str) -> bool)] | None = None, limit: int | None = None, _stacklevel: int = 2, **kwargs: str | bytes | Pattern[str] | bool | ((str) -> bool) | Iterable[str | bytes | Pattern[str] | bool | ((str) -> bool)]) -> ResultSet[PageElement | Tag | NavigableString])" (reportUnknownMemberType)

error: Cannot access attribute "find_all" for class "PageElement"

Attribute "find_all" is unknown (reportAttributeAccessIssue)

error: Cannot access attribute "find_all" for class "NavigableString"

Attribute "find_all" is unknown (reportAttributeAccessIssue)

error: Type of "row" is partially unknown

Type of "row" is "PageElement | Tag | NavigableString | Unknown" (reportUnknownVariableType)

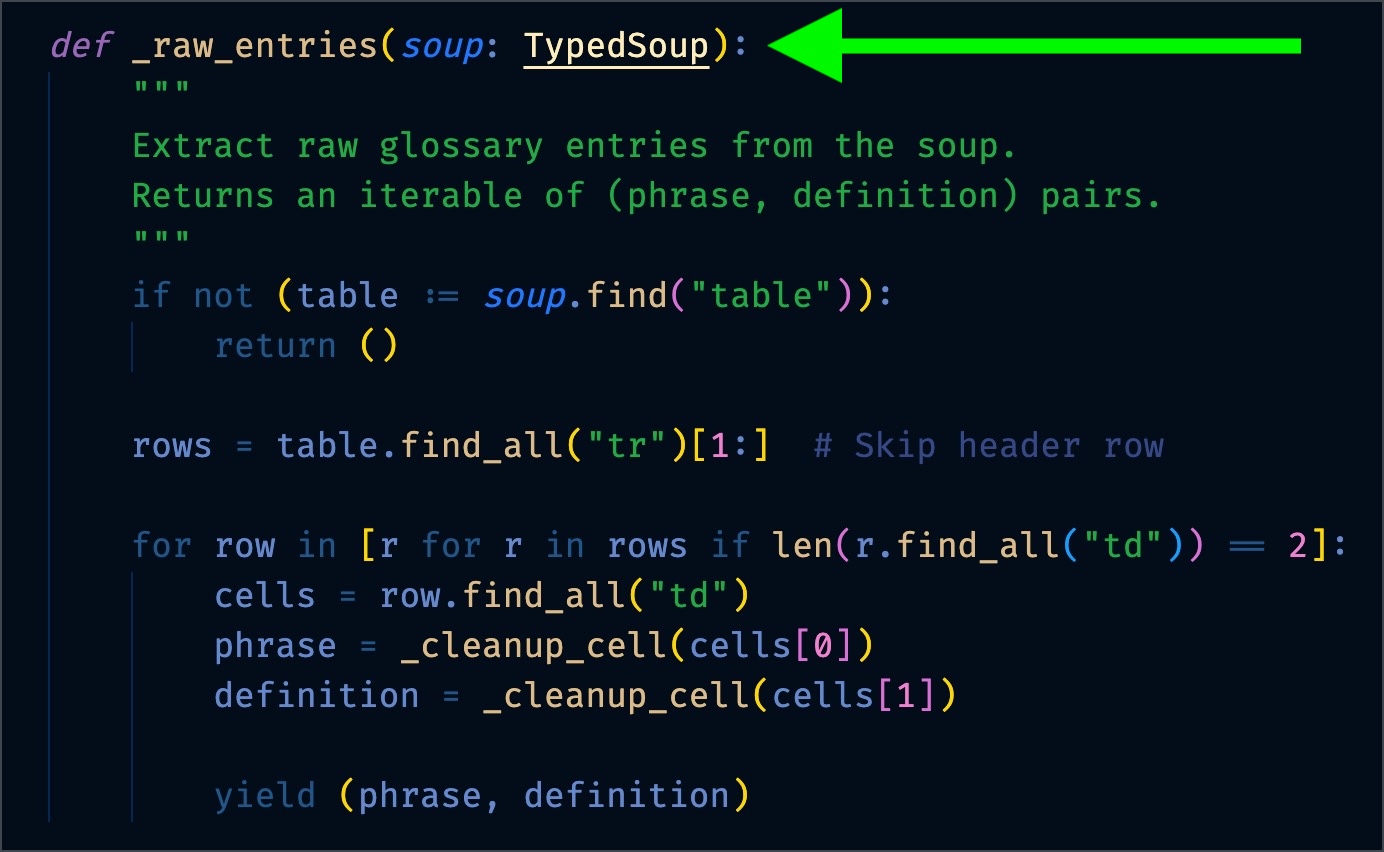

After

Swapping BeautifulSoup for TypedSoup gives the type checker and the IDE the type information they were missing.
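To illustrate why a wrapper makes errors like the ones under "Before" go away, here is a minimal, dependency-free sketch of the general technique. It is not typed-soup's actual implementation: `Element`, `Text`, and `TypedElement` are hypothetical stand-ins for BeautifulSoup's node types and for the wrapper, chosen so the example runs with the standard library alone. The loosely typed `find_all` returns a union, and the wrapper narrows that union once with `isinstance` so calling code never has to.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Union


# Hypothetical stand-ins for BeautifulSoup's node types (stdlib only).
@dataclass
class Text:
    value: str


@dataclass
class Element:
    name: str
    children: list[Union["Element", Text]] = field(default_factory=list)

    def find_all(self, name: str) -> list[Union["Element", Text]]:
        """Loosely typed on purpose: mimics a union-typed result list."""
        found: list[Union[Element, Text]] = []
        for child in self.children:
            if isinstance(child, Element):
                if child.name == name:
                    found.append(child)
                found.extend(child.find_all(name))
        return found


class TypedElement:
    """Wrapper whose methods only return TypedElement or str."""

    def __init__(self, element: Element) -> None:
        self._element = element

    def find_all(self, name: str) -> list[TypedElement]:
        # Narrow the union once, here, so callers never have to.
        return [
            TypedElement(node)
            for node in self._element.find_all(name)
            if isinstance(node, Element)
        ]

    def get_text(self) -> str:
        # Concatenate text from this element and all of its descendants.
        parts: list[str] = []
        for child in self._element.children:
            if isinstance(child, Text):
                parts.append(child.value)
            else:
                parts.append(TypedElement(child).get_text())
        return "".join(parts)


table = Element("table", [
    Element("tr", [Element("td", [Text("a")]), Element("td", [Text("b")])]),
    Element("tr", [Element("td", [Text("c")])]),
])

# Nested find_all calls type-check because each row is a TypedElement.
cells = [
    [cell.get_text() for cell in row.find_all("td")]
    for row in TypedElement(table).find_all("tr")
]
```

Because `TypedElement.find_all` is annotated as returning `list[TypedElement]`, a checker can resolve `row.find_all` and `cell.get_text` without reporting unknown or missing attributes.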

Installation

pip install typed-soup

Quick Start

from typed_soup import TypedSoup

from bs4 import BeautifulSoup

# Create a type-safe soup object

soup = TypedSoup(BeautifulSoup("<div>Hello <span>World</span></div>", "html.parser"))

# Find elements with type safety

element = soup.find("span")

if element:

    print(element.get_text())  # Type-safe: IDE knows this returns str

Usage

If you're using Scrapy, you can use the from_response function to create a TypedSoup object from a Scrapy response:

from typed_soup import from_response

from scrapy.http.response.html import HtmlResponse

# Assume 'response' is an HtmlResponse object

soup = from_response(response)

# Find an element

element = soup.find("div", class_="example")

if element:

    print(element.get_text())

# Find all elements

elements = soup("p")

for elem in elements:

    print(elem.get_text())

Or, without Scrapy, you can explicitly wrap a BeautifulSoup object in TypedSoup:

from typed_soup import TypedSoup

from bs4 import BeautifulSoup

soup = TypedSoup(BeautifulSoup(html_content, "html.parser"))

Supported Functions

I'm adding functions as I need them. If you have a request, please open an issue. These are the ones that I needed for a dozen spiders:

- find

- find_all

- __call__ (implicit find_all, e.g. soup("p"), which is standard BeautifulSoup API)

- get_text

- children

- tag_name

- parent

- next_sibling

- get_content_after_element

- string

And then these help create a TypedSoup object:

- from_response

- TypedSoup

Type Safety Benefits

- All methods return properly typed results

- No more None surprises: optional values are properly typed and described in the function signatures

- IDE autocomplete support for all methods

- Static type checking support with mypy/pyright

- Runtime type validation for BeautifulSoup results
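The points about optional values and runtime validation can be sketched as follows. This is an illustration of the pattern only, under stated assumptions: `Node`, `untyped_find`, and `typed_find` are hypothetical names, not part of typed-soup or BeautifulSoup. The wrapper declares `Optional[Node]` in its signature, so a checker forces callers to handle `None`, and it raises `TypeError` if the underlying lookup hands back an unexpected type.

```python
from __future__ import annotations

from typing import Any, Optional


class Node:
    """Hypothetical stand-in for a parsed HTML element."""

    def __init__(self, name: str, text: str = "") -> None:
        self.name = name
        self.text = text


def untyped_find(nodes: list[Any], name: str) -> Any:
    """Mimics a loosely typed lookup: may return a Node, a str, or None."""
    for node in nodes:
        if getattr(node, "name", None) == name or node == name:
            return node
    return None


def typed_find(nodes: list[Any], name: str) -> Optional[Node]:
    """Return a Node or None; reject anything else at runtime."""
    result = untyped_find(nodes, name)
    if result is None:
        return None
    if not isinstance(result, Node):
        raise TypeError(f"expected Node, got {type(result).__name__}")
    return result


nodes: list[Any] = [Node("span", "World"), "stray text"]

found = typed_find(nodes, "span")
# The signature says Optional[Node], so a checker requires this guard.
text = found.text if found is not None else ""

# A missing element is reported as None rather than raising.
missing = typed_find(nodes, "div")

# An unexpected result type is caught at the wrapper boundary.
try:
    typed_find(nodes, "stray text")
    rejected = False
except TypeError:
    rejected = True
```

Validating at the wrapper boundary keeps the `isinstance` checks in one place, which is the design choice the list above describes; how typed-soup itself reports a mismatch is not shown here.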

License

This project is licensed under the MIT License.


File details

Details for the file typed_soup-0.1.5.tar.gz.

File metadata

- Download URL: typed_soup-0.1.5.tar.gz

- Upload date:

- Size: 3.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.1.3 CPython/3.13.3 Darwin/24.4.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b4cd4ec0d2938b0e3dc9da21e54f7f659f3adef513192ed481754705bb953994 |
| MD5 | dbb2d9dcd28b454b148319fa91c912be |
| BLAKE2b-256 | aac48a31476c9ba1aeea052ad71f8f09eb2cedc51784b8601d33f0983df25129 |

File details

Details for the file typed_soup-0.1.5-py3-none-any.whl.

File metadata

- Download URL: typed_soup-0.1.5-py3-none-any.whl

- Upload date:

- Size: 4.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.1.3 CPython/3.13.3 Darwin/24.4.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9e6cf898de4d3bc06ccfe573c71b0d986ea6229df6dd1439f6825760cfc1223d |
| MD5 | e696f5762d781bf319d0f4ba7722f37c |
| BLAKE2b-256 | d906d30afe7fa6afb47a1bce01f4cd859a8f7c13027b1503f2f1b1f839853de8 |