LLM structured output with automatic retry on validation failure

validation_loop

validation_loop sends a prompt to any LLM, forces the response into a Pydantic model, runs your custom validation logic, and retries automatically if validation fails -- feeding the error back to the LLM so it can self-correct.

It uses Instructor for structured output extraction, LiteLLM for provider routing, and Tenacity for retry orchestration.

Installation

    # Core (you still need a provider SDK installed for LiteLLM to route to)
    pip install validation-loop

    # With a specific provider
    pip install validation-loop[openai]
    pip install validation-loop[anthropic]
    pip install validation-loop[mistral]
    pip install validation-loop[google]
    pip install validation-loop[cohere]

    # All providers
    pip install validation-loop[all]

Quick start

Function form

    from pydantic import BaseModel, ValidationError
    from validation_loop import validation_loop


    class MovieReview(BaseModel):
        title: str
        rating: float
        summary: str


    def validate_review(review: MovieReview) -> dict:
        """Business logic that runs after Pydantic validation.

        Raise an exception listed in retry_exceptions to trigger a retry."""
        if len(review.summary) < 20:
            raise ValueError("Summary is too short to be useful")
        return {
            "title": review.title.upper(),
            "rating": review.rating,
            "summary": review.summary,
        }


    result = validation_loop(
        schema=MovieReview,
        prompt="Review the movie Inception in one short sentence.",
        validation_callable=validate_review,
        model="openai/gpt-4.1-mini",  # any LiteLLM model string
        max_attempts=3,
        retry_exceptions=(ValidationError, ValueError),
    )

Decorator form

The val_loop decorator turns a validation function into a ready-to-call LLM pipeline. The Pydantic schema is extracted from the first parameter's type annotation:

    from pydantic import BaseModel, ValidationError
    from validation_loop import val_loop


    class MovieReview(BaseModel):
        title: str
        rating: float
        summary: str


    @val_loop(model="openai/gpt-4.1-mini", max_attempts=3, retry_exceptions=(ValidationError, ValueError))
    def review_movie(review: MovieReview) -> dict:
        if len(review.summary) < 20:
            raise ValueError("Summary too short")
        return {"title": review.title.upper(), "rating": review.rating}


    # Call it with a prompt:
    result = review_movie(prompt="Review the movie Inception.")

    # Override settings at call time:
    result = review_movie(
        prompt="Review The Matrix.",
        model="anthropic/claude-sonnet-4-20250514",
        max_attempts=5,
    )

The decorator also works without arguments, using defaults:

    @val_loop
    def review_movie(review: MovieReview) -> dict:
        return {"title": review.title}
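To illustrate how a schema can be read from the first parameter's type annotation, here is a minimal standard-library sketch. It is not the package's actual implementation: `schema_from_first_param` is a hypothetical helper, and a dataclass stands in for the Pydantic model so the snippet runs without extra dependencies.

```python
import inspect
from dataclasses import dataclass
from typing import get_type_hints


@dataclass
class MovieReview:
    """Stand-in for a Pydantic model (assumption: keeps the sketch dependency-free)."""
    title: str
    rating: float
    summary: str


def schema_from_first_param(func):
    """Hypothetical helper: return the class annotated on func's first parameter."""
    params = list(inspect.signature(func).parameters.values())
    if not params:
        raise TypeError(f"{func.__name__} must accept at least one parameter")
    # get_type_hints also resolves string annotations into real classes.
    hints = get_type_hints(func)
    first = params[0].name
    if first not in hints:
        raise TypeError(f"first parameter {first!r} needs a type annotation")
    return hints[first]


def review_movie(review: MovieReview) -> dict:
    return {"title": review.title}


schema = schema_from_first_param(review_movie)
print(schema.__name__)  # MovieReview
```

A decorator built this way has to fail early when the annotation is missing, since there would be no schema to hand to the LLM call.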

Prompt formats

The prompt parameter accepts a plain string (shown above) or a list for more advanced use cases -- multi-turn conversations, images from local files, and image URLs. See PROMPT_FORMATS.md for details and examples.

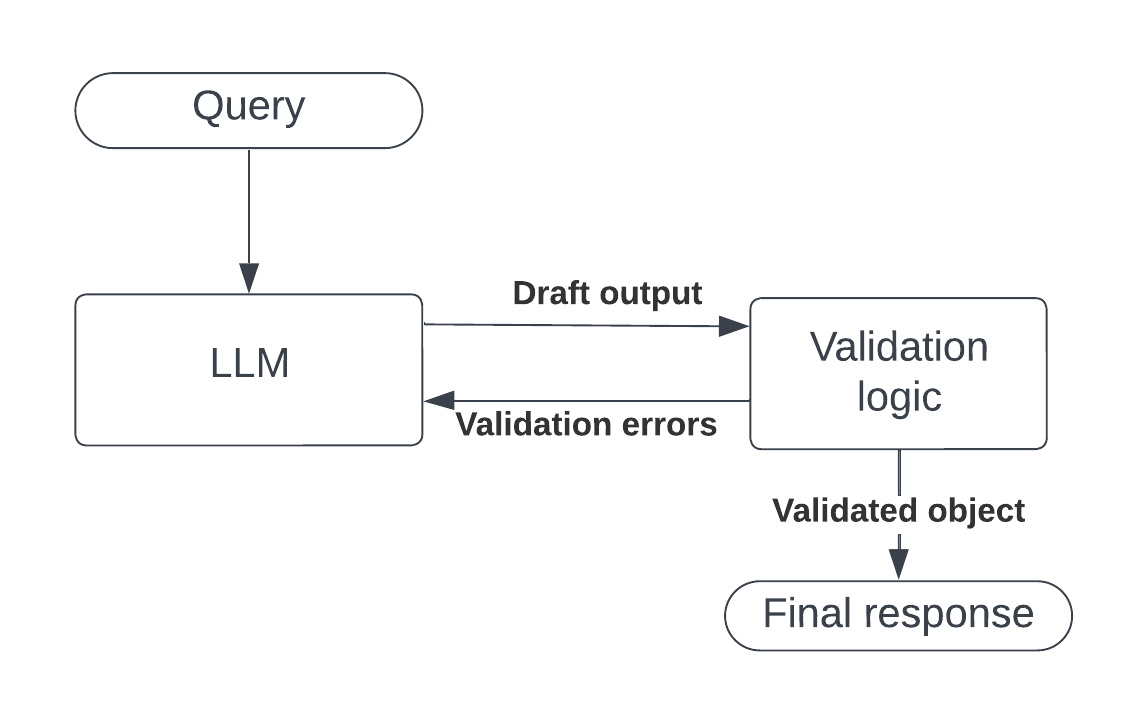

How it works

- Your Pydantic schema is wrapped in a subclass that runs validation_callable inside model_post_init, so both Pydantic validation errors and your custom errors are visible to Instructor's retry loop.

- Instructor calls the LLM via LiteLLM and parses the response into the schema.

- If validation fails, the error text is appended to the conversation and the LLM is called again.

- After max_attempts failures, a RuntimeError is raised with the last validation error as the cause.
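The retry-and-feedback cycle described above can be sketched in plain Python. This is a simplified stand-in, not the package's code: the real library delegates the loop to Instructor and Tenacity, while `retry_with_feedback`, `fake_llm`, and the message wording below are assumptions made for illustration.

```python
from typing import Callable


def retry_with_feedback(
    call_llm: Callable[[list], str],
    validate: Callable[[str], object],
    prompt: str,
    max_attempts: int = 3,
    retry_exceptions: tuple = (ValueError,),
):
    """Call call_llm until validate accepts the reply or attempts run out."""
    messages = [{"role": "user", "content": prompt}]
    last_error = None
    for _ in range(max_attempts):
        reply = call_llm(messages)
        try:
            return validate(reply)
        except retry_exceptions as exc:
            last_error = exc
            # Feed the failed reply and the error text back so the next
            # call can see what went wrong.
            messages.append({"role": "assistant", "content": reply})
            messages.append(
                {"role": "user", "content": f"Validation failed: {exc}. Please correct your answer."}
            )
    raise RuntimeError(f"validation failed after {max_attempts} attempts") from last_error


# A scripted stand-in for the LLM: the first reply is too short, the second passes.
replies = iter(["Great.", "A mind-bending heist film set inside dreams."])


def fake_llm(messages):
    return next(replies)


def validate_summary(text):
    if len(text) < 20:
        raise ValueError("Summary is too short to be useful")
    return text.upper()


result = retry_with_feedback(fake_llm, validate_summary, "Review Inception.")
print(result)
```

Exceptions outside `retry_exceptions` propagate immediately, which mirrors why the quick start lists `ValueError` explicitly alongside `ValidationError`.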

API reference

validation_loop(schema, prompt, validation_callable, ...)

| Parameter | Type | Default | Description |
|---|---|---|---|
| schema | Type[BaseModel] | required | Pydantic model defining the expected output |
| prompt | str \| list | required | See Prompt formats |
| validation_callable | Callable | required | Called with the model instance; return value is returned on success |
| model | str | "openai/gpt-4.1-mini" | Any LiteLLM model string |
| max_attempts | int | 3 | Max LLM calls before giving up |
| retry_exceptions | tuple[Type[Exception], ...] | (ValidationError,) | Exception types that trigger a retry |

@val_loop / @val_loop(...)

Decorator arguments: model, max_attempts, retry_exceptions (same defaults as above).

The decorated function accepts: prompt (required), plus optional keyword overrides for model, max_attempts, retry_exceptions.
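One way a decorator can support both the bare and the parenthesized form, and let call-time keywords override decorator arguments, is sketched below. `sketch_val_loop` is a hypothetical name, the wrapper only reports the settings it resolved instead of calling an LLM, and the precedence order shown is an assumption consistent with the override example in the quick start.

```python
import functools

# Defaults taken from the parameter table above.
DEFAULTS = {"model": "openai/gpt-4.1-mini", "max_attempts": 3}


def sketch_val_loop(func=None, **decorator_settings):
    """Hypothetical decorator usable as @sketch_val_loop or @sketch_val_loop(...)."""

    def decorate(validator):
        @functools.wraps(validator)
        def wrapper(prompt, **overrides):
            # Assumed precedence: defaults < decorator arguments < call-time overrides.
            settings = {**DEFAULTS, **decorator_settings, **overrides}
            # A real implementation would call the LLM here; this sketch
            # only returns the settings it resolved.
            return {"prompt": prompt, **settings}

        return wrapper

    if callable(func):
        # Bare form: Python passes the decorated function directly.
        return decorate(func)
    # Parenthesized form: return the actual decorator.
    return decorate


@sketch_val_loop
def plain(review):
    return review


@sketch_val_loop(max_attempts=5)
def tuned(review):
    return review


print(plain(prompt="Review Inception.")["max_attempts"])  # 3
print(tuned(prompt="Review The Matrix.", max_attempts=7)["max_attempts"])  # 7
```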

ImageURL

from validation_loop import ImageURL

A simple wrapper marking a URL string as an image for use in prompt lists. See PROMPT_FORMATS.md.

License

MIT
